
7

Toward the Development of Disease Early Warning Systems

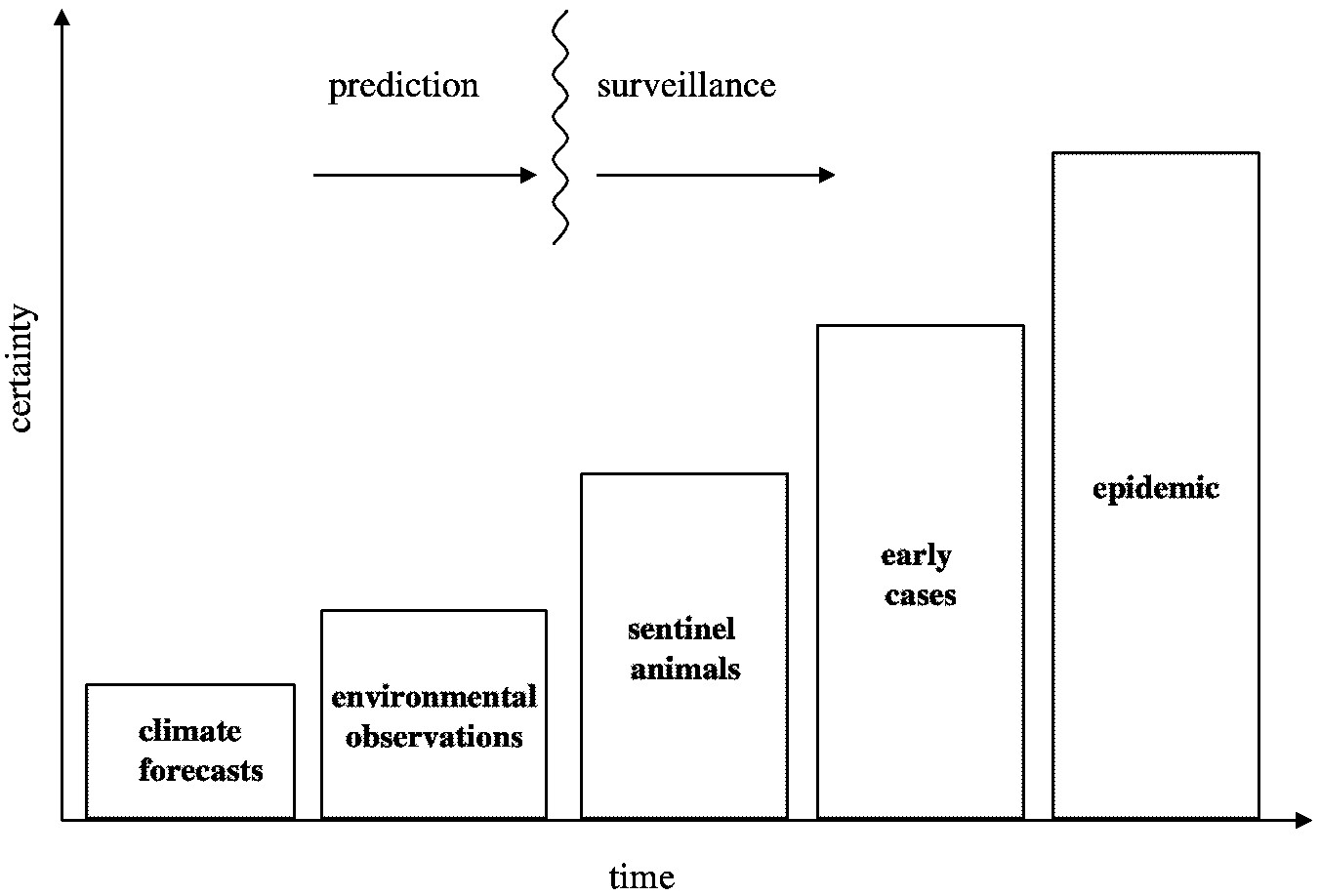

An early warning system is an instrument for communicating information about impending risks to vulnerable people before a hazard event occurs, thereby enabling actions to be taken to mitigate potential harm and, sometimes, providing an opportunity to prevent the hazardous event from occurring. Early warning systems are routinely used for hazardous natural events such as hurricanes and volcanic eruptions. In contrast, to date very little attention has been paid to the development of such systems for infectious disease epidemics. The goal of a disease early warning system would be to provide public health officials and the general public with as much advance notice as possible about the likelihood of a disease outbreak in a particular location, thus widening the range of feasible response options. The inherent dilemma of an early warning system, however, is that more lead time usually means less predictive certainty, as illustrated in Figure 7-1.

The most commonly used bellwether of an impending epidemic is the appearance of early cases of the disease in a population. In some instances, “sentinel” animals are placed in high-risk locations and monitored for evidence of infection, since infections among these animals will typically presage human cases. These “surveillance and response” approaches provide fairly high predictive certainty of an impending disease outbreak but often leave public health authorities with little advance notice for mobilizing actions to prevent further spread of the disease agent.

FIGURE 7-1 Progression of the types of information that can be used to indicate an impending disease outbreak.

In contrast, ecological observations and climate forecasts can potentially be used in efforts to predict the appearance of a pathogen and thus allow opportunities to minimize its transmission. This approach is likely to have a much lower predictive value, however, given the uncertainties associated with most climate/disease relationships and the confounding influences of other factors. It is highly unlikely that precise predictions of an epidemic could be made solely on the basis of climate forecasts and environmental observations. Yet this information can feasibly be used as the basis for issuing an alert (or a “watch”) that environmental conditions are conducive to disease outbreak, which in turn can trigger intensive surveillance efforts for the area in question. If surveillance data then confirm the presence of the pathogen or an increase in its abundance, subsequent warnings could be issued as needed. This watch/warning approach is analogous to the system used by the U.S. National Weather Service to alert communities to impending severe weather. A benefit of this multi-staged early warning approach is that response plans can be gradually ramped up as forecast certainty increases. This would give public health officials several opportunities to weigh the costs of response actions against the risk posed to the public.
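The staged watch/warning logic described above can be sketched in a few lines of Python. This is only an illustrative sketch: the names `AlertLevel` and `alert_level` and the two boolean inputs are assumptions made for the example, not part of any operational system, and a real system would work with graded evidence rather than yes/no flags.

```python
from enum import Enum


class AlertLevel(Enum):
    """Hypothetical alert stages, ordered by increasing certainty."""
    NONE = 0
    WATCH = 1
    WARNING = 2


def alert_level(conditions_conducive: bool, pathogen_confirmed: bool) -> AlertLevel:
    """Return the alert stage implied by the available evidence.

    conditions_conducive: climate forecasts or environmental observations
        suggest conditions favorable to an outbreak (low certainty, long lead time).
    pathogen_confirmed: surveillance has confirmed the pathogen or an increase
        in its abundance (high certainty, short lead time).
    """
    if pathogen_confirmed:
        # Surveillance evidence is the strongest signal, so it dominates.
        return AlertLevel.WARNING
    if conditions_conducive:
        # Environmental evidence alone justifies only a watch, which would
        # in turn trigger intensified surveillance.
        return AlertLevel.WATCH
    return AlertLevel.NONE


if __name__ == "__main__":
    print(alert_level(conditions_conducive=True, pathogen_confirmed=False))
    print(alert_level(conditions_conducive=True, pathogen_confirmed=True))
```

The point of the two-stage structure is that low-cost actions (more surveillance) attach to the uncertain early signal, while costlier interventions wait for confirmation.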

DEVELOPING EFFECTIVE EARLY WARNING SYSTEMS

Early warning systems are often defined narrowly as instruments for detecting and forecasting an impending hazard event, but this definition does not clar-

Page 88

Box 7-1

|

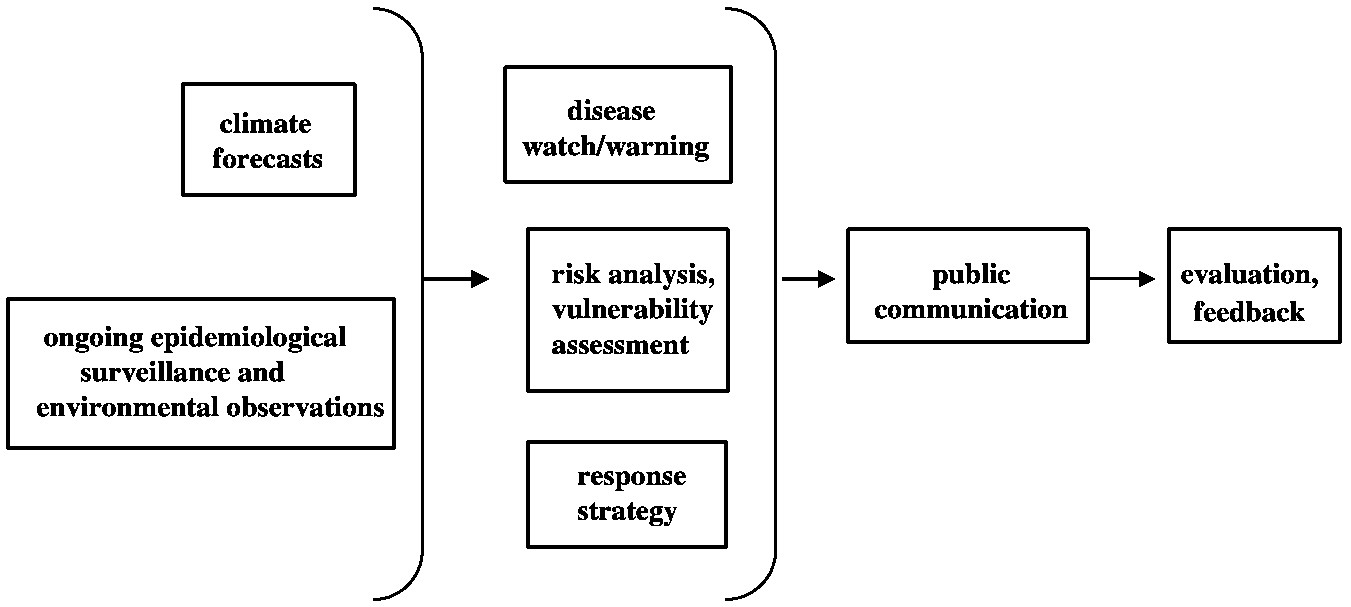

ify whether the warning information is actually used to reduce risks. To fulfill an effective risk reduction function, an early warning system should be understood as an information system designed to facilitate decision making of the relevant national and local-level institutions and to enable vulnerable individuals and social groups to take actions to mitigate the impacts of an impending hazard. The focus must be not only on improving hazard monitoring and prediction but also on improving coordination among relevant parties, such as the scientific organizations that forecast hazard events, the national and local management agencies that assess risk and develop response strategies, and the public communication channels used to disseminate warning information.


FIGURE 7-2 Diagram of the components of an effective disease early warning system.

Figure 7-2 illustrates the different components necessary for an operational early warning system. Climate forecasts and information from ongoing epidemiological surveillance and environmental observations may be used as input for predictive models that generate watches or warnings about an impending disease risk. This information is then coupled with vulnerability assessments to determine which segments of a population are most likely to face harm from an impending hazard and risk analysis to determine the likely impact of the impending hazard on these groups. The actions required to reduce the impacts of an impending hazard are determined through development of a response strategy. Finally, a public communication system facilitates the timely dissemination of information on impending hazards, risk scenarios, and preparedness strategies to vulnerable groups. Issues related to climate forecasting and predictive disease models are discussed in earlier chapters. The other central components of an early warning system are discussed in more detail below.

Epidemiological surveillance. Epidemiological surveillance systems that are ongoing and systematic, that use standardized routines for quality assurance, and that provide for analysis and timely dissemination of information are critical to early warning systems. The characteristics and limitations of current surveillance systems are discussed in Chapter 5. Historically, surveillance has focused specifically on monitoring the incidence of infection or disease. In the context of an early warning system, however, surveillance also needs to include monitoring of changes in vector population abundance, and may entail the use of “sentinel” animal networks (such as chicken flocks) to provide an early indication of the pathogen's presence in a particular area. These types of ecologically based surveillance systems are already commonly used in some contexts, for example, in surveillance for St. Louis encephalitis and Western Equine encephalomyelitis in California (Reisen et al., 1992; Reisen et al., 2000) and West Nile virus in the eastern U.S. (MMWR, 2000).

Environmental observations. Systematic climate observations are an important component of an early warning system, in part because the impacts of weather or climate events often depend on antecedent conditions. For instance, whether a heavy rainfall event will lead to flooding may depend on the recent history of precipitation in the area. New remote-sensing technologies for monitoring ecological parameters such as soil moisture, vegetative cover, and sea surface temperature on a global scale (discussed in Chapter 5) will inevitably play an important role in disease early warning systems.

Vulnerability assessment. Vulnerability refers to a population's sensitivity to a hazard, as well as its ability to cope with the hazard. Vulnerability assessment provides a context for interpreting surveillance data and for understanding the impacts of disruption among any of the links that connect nutrition, shelter, economic systems, and human health. At the household level, factors such as diet, shelter, sanitation, and water supply affect vulnerability to infectious disease. Common risk factors in developing countries (and in parts of developed countries) include lack of access to clean water, poor sanitation, inadequate shelter, and low immunization coverage. A community's vulnerability is also affected by such demographic factors as age structure and population density, and by pre-existing health threats such as HIV. Vulnerability assessment should be closely coupled to public health surveillance; both are crucial for evaluating the potential for outbreaks and for developing disease control strategies.

Risk analysis. Risk analysis is carried out to assign specific probabilities to the likely impacts of an impending hazard. Risk is a complex variable related to hazard types and patterns of vulnerability, possible impacts of the hazard events, and the capacity of communities to absorb and recover from these impacts. In many countries, risk patterns are rapidly changing as a result of urbanization, economic change, population growth, migration, environmental degradation, and armed conflict. Yet risk analysis is still fundamentally static in character in many places, often because cartographic and census information is so out of date that it bears little relationship to real risk levels. A priority must be to build the capacity to monitor dynamic changes in risk patterns at a high level of spatial and temporal resolution, in order to provide accurate information on the likely impact of a specific hazard event in a given area.

Preparedness/response. Warning systems must be developed in concert with developments in local-, national-, or regional-level response capabilities, particularly in highly vulnerable areas. Often, improvements in scientific predictive capabilities are not accompanied by commensurate improvements in the ability to actually use this warning information. Experience suggests the importance of developing response strategies based on community needs and priorities. Response plans that contradict the accepted coping strategies of a vulnerable group are not likely to be implemented effectively. The actions taken to prevent or mitigate disease outbreaks can often carry significant costs and, in particular, can pose a major burden on resources in poor countries. If the costs of carrying out a recommended response strategy are seen to be greater than the public health benefits gained, this can undermine the credibility of the early warning system as a whole. In addition, implementation of control measures (such as widespread spraying of insecticides) can sometimes face active public resistance. Such potential pitfalls and cost-benefit considerations need to be carefully assessed so that response plans can be adapted to best suit the priorities, needs, and capacity of the local community.

Box 7-2

Examples of Response Strategies

Specific strategies for responding to a disease warning depend on the perceived magnitude of the threat and on the socioeconomic conditions and institutional resources of the region in question. As an example, the following is a list of actions that were recommended or supported by the Pan American Health Organization to help countries in South and Central America prepare for the onset of the 1997-1998 El Niño (adapted from PAHO, 1998):

- Training workshops for public health professionals in high-risk areas for strengthening entomological surveillance, vector control, and prevention activities such as the distribution of mosquito netting.
- Provision of basic supplies for water storage and treatment.
- Local workshops to find solutions to environmental sanitation problems.
- Identification of shelter sites and requirements for their installation and control of food distribution.
- Characterization of rodents and vectors of public health significance in disaster areas.
- Strengthening of laboratory diagnosis for leptospirosis and hantavirus.
- Vaccination of the affected population against whooping cough, tetanus, and diphtheria to guard against potential outbreaks.

Public communication. To ensure that warning information and recommended response strategies are heeded by the populations at risk, effective public communication strategies must be developed. As discussed in Freimuth et al. (2000), effective health communication programs identify and prioritize audience segments; deliver accurate, science-based messages from credible sources; and reach audiences through familiar channels. Attention must be paid to issues of credibility and trust. If the warning authority lacks status in the area concerned or has a history of conflicting relationships with local communities, warnings may not be well received. Likewise, if too many warnings have been given previously, or no warning was given when previous disease outbreaks did occur, the credibility of future warnings will be jeopardized. Finally, to prevent overreaction and even panic by the general public, disease early warnings must include clear explanations of the actual level of risk involved and of the particular groups that are most vulnerable.

Implementation Issues

Early warning systems are only as good as their weakest link, and they can fail for a number of reasons: the hazard forecast or risk scenarios generated may be inaccurate; there may be a failure to communicate warning information in sufficient time or in a way that can be usefully interpreted by people exposed to a hazard; appropriate response decisions may not be made due to a lack of information, opaque decision-making procedures, or perceived political risks; or intervention actions may incur unacceptable costs for the community.

One potentially key barrier in effectively implementing an early warning system is lack of clear decision-making protocols among the relevant institutions. In any community there needs to be a designated focal point for coordinating the actions of key institutions such as water treatment plants, hospitals, public health agencies, and transportation providers. In this respect, public health organizations could build on lessons that have been learned by organizations that coordinate responses to natural disasters, such as the U.S. Federal Emergency Management Agency. Clear performance standards and regular performance evaluations can help build public awareness and confidence in the system. To help ensure that lessons from one event are incorporated into planning for future events, management agencies need to evaluate the extent to which warnings are heeded and preparedness strategies are implemented.

A critical lesson that has been learned from existing early warning systems is the importance of involving the system's end users (public health officials, mosquito control officers, water quality monitors, policymakers, etc.). “Stakeholder” participation will enable those who generate forecasts to understand what is needed in terms of lead time, spatial/temporal resolution and accuracy of forecasts, and the specific parameters that should be reported in the forecast. Stakeholders should also be involved in activities such as vulnerability and risk analyses, since people at risk are more likely to take action on the basis of information they were involved in producing.

International-, national-, and local-level institutions all have important roles to play in fostering the development and implementation of a disease early warning system. For instance:

- International/regional level: Seasonal climate forecasts can be developed and/or disseminated by organizations such as the International Research Institute for Climate Prediction, NOAA's Climate Prediction Center, the European Centre for Medium-Range Weather Forecasts, and the World Meteorological Organization. Institutions such as the World Health Organization and the Pan American Health Organization can provide technical assistance and training to help develop national capabilities for surveillance and early warning systems.
- National level: Scientific teams working within national public health agencies or other organizations can use climate forecasts together with information from vulnerability assessments and surveillance networks to assess the disease risks posed to specific communities. National governments and scientific agencies can also help strengthen the basic infrastructure for epidemiological and environmental surveillance systems.
- State/local level: For areas determined to be at risk, disease watches/warnings can be disseminated to public health officials and other local authorities, who can then make decisions about intensifying surveillance or mobilizing intervention efforts. Local-level organizations, including community leaders and neighborhood representatives, can aid in activities such as monitoring vulnerability patterns, assessing intervention strategies and local response capacity, and strengthening networks for decision making and public communication.

Current Feasibility of Early Warning Systems

The previous sections describe the components of an effective climate-based disease early warning system. The feasibility of actually implementing such a system depends on numerous factors. For instance:

- It must be possible to provide sufficiently reliable climate forecasts for the region in question.
- There must be a strong understanding of the fundamental climate/disease linkages, so that the methods used to generate warnings offer a reasonable level of predictive value.
- There must be effective response measures available to implement within the window of lead time provided by the early warning.
- The community in question must be able to support the needed infrastructure such as surveillance systems and networks for disseminating information between organizations and to the general public.

Based on these criteria, there are at present very few contexts in which establishment of an effective, operational early warning system is entirely feasible. Exceptions may include cases such as the one described in Box 7-2, where a qualitative understanding of climate/disease associations is sufficient to warrant low-risk or “no-regrets” intervention actions. If progress is made in meeting the challenges listed above, however, there is potential for eventually developing effective disease early warning systems in many contexts. In the meantime, valuable information can be gained from further development of models to create “experimental” disease forecasts and from pilot projects that foster new scientific and institutional collaborations and provide real-world experience in using seasonal forecasts to meet the needs of specific communities. There have been several examples of such pilot programs in recent years, supported by institutions such as NOAA's Office of Global Programs, the International Research Institute for Climate Prediction, and the Inter-American Institute.

Case Study: West Nile Virus—Risk and Response

The emergence of West Nile encephalitis during the summer of 1999 in New York City, the first report of the disease in the United States, provides a useful example of several concepts discussed in this report. For instance, it illustrates the importance of interdisciplinary scientific approaches (in this case, the need for cooperation between scientists who deal with animal health and those who deal with human health). It also demonstrates the challenges of coordinating response actions among various government agencies and public health officials, and of effectively communicating about a new disease risk to the public.

On August 23 an outbreak of human encephalitis was reported to the New York City Department of Health. On September 3 the CDC announced that the disease was the mosquito-borne St. Louis encephalitis (SLE). In response, New York City immediately initiated a campaign of aerial and ground spraying of pesticides to reduce the population of mosquitos. At this time the same area had reports of dead crows and viral encephalitis of unknown origin in several exotic bird species, including a Chilean flamingo at the Bronx Zoo. The SLE virus does not normally cause disease in birds; hence, at first the CDC assumed that the human and avian outbreaks were unrelated. On September 24, however, results presented from further immunological and molecular tests of samples taken from humans and birds revealed that both epidemics were caused by West Nile, a virus related to the SLE virus but unknown in the Western Hemisphere until that time (Briese et al., 1999; Jia et al., 1999; Lanciotti et al., 1999).

Risk and vulnerability. Mosquito-borne illness was extremely rare in New York City before the 1999 outbreak of West Nile virus. This contributed to the vulnerability of the population prior to the outbreak and the high perception of risk after the disease was publicized. Vulnerability came from the low emphasis on mosquito control, low behavioral avoidance of mosquitos (standing water, unscreened windows, outdoor evening activities), and the lack of immunity in the population. The perception of risk was high because of the relative novelty of a mosquito-borne disease, the lack of an effective treatment, and the potential seriousness of the symptoms, including death. In 1999 there were 61 confirmed cases of West Nile virus in the New York City area, of which seven were fatal. Additionally, thousands of crows and other birds, nine horses, and one cat were reported to have died from the disease (Asnis et al., 2000).

West Nile virus was isolated in 1999 from Culex pipiens mosquitos, a species that is abundant in New York City. This mosquito feeds on both birds and people, usually in the evening. West Nile virus-infected adult Cu. pipiens were found during the winter, hidden in relatively warm, secluded shelters, which may explain why transmission of the virus continued during 2000. At least three species of Aedes mosquitos, which may bite during the daytime, also were found to be infected in and around New York City.

Preparedness and response. Health officials identified and responded to the outbreak of encephalitis relatively quickly. The disease was first misidentified because the symptoms and pathology of West Nile virus and SLE are similar and because the fluid and tissue samples from patients cross-reacted with SLE in the initial antibody screen. In this instance, however, the consequences of misidentification were minimal because the response measures for SLE and West Nile were the same. The New York City Department of Health was notified on August 23, 1999, of suspected cases of encephalitis, and mosquito control measures were activated after the cases were identified as SLE two weeks later. Although the response was rapid, it was still late relative to the peak of the epidemic.

To manage the potential spread of West Nile virus, the New Jersey Department of Health and Senior Services developed a preparedness plan that included the following components:

- Human, animal, and mosquito surveillance to detect whether and where the virus is present, including sentinel chicken flocks.
- Continued comprehensive mosquito control activities to suppress the emergence of mosquito populations.
- A coordinated system of reporting and laboratory testing of samples.
- A wide-reaching communication and education plan for the public and professionals (educational materials distributed through local health departments), including media releases, a Web site, and a strengthened alert network of physicians and hospital personnel.

In the summer of 2000, surveillance programs detected West Nile virus-infected birds in five northeastern states—New York, New Jersey, Massachusetts, Connecticut, and Maryland—indicating epizootic transmission of the disease through a much wider geographic area than in 1999.

Public communication. Why was the response to West Nile virus so extensive and crisis oriented? In the month after the first reported cases of encephalitis, the New York Times published 47 articles on the epidemic. In the following year, 30 articles were published during the month of July alone, indicating that public interest had not diminished. The actual risk of contracting encephalitis was not communicated effectively relative to the intensity of the emergency response. In some cases people contacted the health department after finding a mosquito in their home or called a hospital emergency room after being bitten by one (New York Times, June 4, 2000). The pesticide-spraying campaign by itself caused alarm in the community, both from the implication that there was a health crisis and concern over side effects of heavy pesticide use. Another factor that elevated public concern was the novelty of the disease. Even prior to identification of the disease as West Nile, mosquito-borne viral encephalitis was an exotic disease for most New Yorkers, eclipsing more familiar diseases such as influenza that pose a much greater health threat.

Box 7-3

Potential climate linkages. West Nile virus has been found in a broad range of climates, reflecting a broad mosquito host range. It has been previously described in Central Europe, the Middle East, and sub-Saharan Africa, with some evidence that it may be spread by bird migration between Europe and Africa (Watson et al., 1972; Miller et al., 2000). West Nile virus is endemic in temperate areas and was probably brought to New York through international transport of an infected bird, mosquito, or human. There has, however, been speculation that climatic factors in 1999, particularly the mild winter followed by an especially hot, dry summer, contributed to the outbreak (Epstein, 2000). Others have made the argument that West Nile virus represents the type of health threat that could increase with global warming, stating for instance that “this harrowing event in New York only foreshadows what more temperate-climate countries can expect unless we all work to reverse global warming” (Musil, 1999). It is certainly worthwhile to consider possible climatic influences on the emergence of West Nile encephalitis, but any claims about potential linkages should receive rigorous scientific review before they are used to make projections about future disease trends.

EXAMPLES OF THE USE OF CLIMATE FORECASTS

There are currently no examples of truly operational climate-based disease early warning systems. In some instances, investigators have begun developing models that use environmental observations to predict disease outbreaks (such as Rift Valley fever and hantavirus, discussed earlier), but thus far these models have only been used to make such “predictions” retroactively. Other realms of societal activity, however, do routinely use environmental observations and climate forecasts to issue early warnings to protect public welfare and safety and for resource and economic planning. We review here several of these examples—in the areas of agriculture, famine warning, forest fire control, and hurricane preparedness—for the purpose of highlighting useful analogies and lessons for the development of disease early warning systems.

ENSO Forecasts and Agricultural Planning

A number of studies have documented the value of ENSO forecasts for agriculture in the United States (e.g., Adams et al., 1995; Mjelde et al., 1997; Solow et al., 1998; Weiher, 1999), and these forecasts are used for making cropping decisions in Australian wheat production. Given Australia's location close to the “center of action” of ENSO events (i.e., the tropical Pacific) and the high climatic variability it experiences, its use of seasonal forecasting for agricultural production has been relatively well developed compared to other countries. Several decision-making tools have been developed, such as the RAINMAN and WHEATMAN software programs (Woodruff, 1992), which can assist farmers in making management decisions based on rainfall forecasts. The fundamental assumption in this research is that forecasts have value only if they can change decisions in a way that improves outcomes; in this context the question becomes: Can existing skill in seasonal rainfall prediction improve profitability by tactical modifications of management decisions?

Hammer et al. (1996) investigated the value of seasonal rainfall forecasts for making improved decisions regarding planting dates, varietal characteristics, and application of nitrogen fertilizer. The assumed strategy is that the forecast must generate a preferred combination of profit and risk compared to the fixed strategy (based on no forecast). They performed a cost-benefit analysis, determining profit as a function of wheat production, price, and variable and fixed costs. They also determined the average profit for each nitrogen application rate over all years and the average profit based on each phase of the ENSO cycle (Stone et al., 1996). Comparing results of the fixed strategy and the tactical strategy (considering the forecast ENSO phase), it was found that up to a 20 percent increase in profit and a 35 percent reduction in risk could result from using forecast information.
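The comparison between a fixed strategy and a tactical, forecast-dependent strategy can be illustrated with a small calculation. The sketch below is not the analysis of Hammer et al. (1996): the profit table, the three-phase simplification of the ENSO cycle, and the phase probabilities are all hypothetical numbers chosen only to show the structure of the comparison, and the risk side of the analysis is omitted.

```python
# Hypothetical average profit (currency units per hectare) for each
# ENSO phase (rows) and nitrogen application rate in kg/ha (columns).
PROFIT = {
    "el_nino": {0: 40, 50: 30, 100: 10},
    "neutral": {0: 50, 50: 80, 100: 90},
    "la_nina": {0: 60, 50: 110, 100: 140},
}

# Hypothetical long-run frequency of each phase.
PHASE_PROB = {"el_nino": 0.25, "neutral": 0.5, "la_nina": 0.25}

RATES = [0, 50, 100]


def expected_profit(rate_for_phase):
    """Average profit over all years, given the rate applied in each phase."""
    return sum(
        PHASE_PROB[phase] * PROFIT[phase][rate_for_phase[phase]]
        for phase in PROFIT
    )


# Fixed strategy: one rate every year, chosen without any forecast.
fixed_rate = max(RATES, key=lambda r: expected_profit({p: r for p in PROFIT}))
fixed_profit = expected_profit({p: fixed_rate for p in PROFIT})

# Tactical strategy: the best rate for each forecast phase.
tactical_rates = {p: max(RATES, key=lambda r: PROFIT[p][r]) for p in PROFIT}
tactical_profit = expected_profit(tactical_rates)

if __name__ == "__main__":
    gain = 100 * (tactical_profit - fixed_profit) / fixed_profit
    print(f"fixed: rate {fixed_rate}, profit {fixed_profit}")
    print(f"tactical: rates {tactical_rates}, profit {tactical_profit}")
    print(f"gain from using the forecast: {gain:.1f}%")
```

With these made-up numbers the forecast only matters in El Niño years, when the tactical strategy withholds fertilizer; the forecast has value precisely because it changes that one decision.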

While it is useful to determine the theoretical value of forecasts, the more important question is whether the forecasts are actually being used. In the case of the wheat farmers, the answer appears to be yes, as evidenced by the fact that the Queensland seasonal forecast Web site receives 38,000 hits per annum, and the Queensland bureau receives 1,200 fax requests for the forecast per annum, many of which are from farmers.

An important concept that this work has highlighted is that a forecast outcome in any given year is not guaranteed; rather, forecasting essentially just shifts the probabilities of outcomes. The value of forecasts to farmers thus requires an appreciation of the concept of probability. In the case of the 1998 wheat crop, expected low yields failed to materialize in many cases despite strong El Niño conditions. Essentially, in those regions of northeast Australia that had high soil moisture at the time of planting, yields close to normal were obtained, whereas in the areas where initial soil moisture was low, lower-than-normal yields were obtained. Also, contrary to typical conditions for an El Niño year, rains did occur during the flowering phase (Meinke and Hochman, 2000). This reflects the complexity of the wheat cropping conditions and how these can interact with seasonal rainfall. Dealing with the problem of false positives for decision making is one of the central challenges in the use of seasonal forecasts in agriculture.
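One common textbook way to reason about probabilistic forecasts and false positives is a simple cost-loss rule: take protective action when the forecast probability of the adverse event, multiplied by the loss it would cause, exceeds the cost of acting. The sketch below uses that rule purely as an illustration; it is not drawn from the studies cited here, and the function names and all numbers are hypothetical.

```python
def should_act(p_event: float, cost: float, loss: float) -> bool:
    """Act when the expected avoidable loss exceeds the cost of protection."""
    return p_event * loss > cost


def expected_expense(p_event: float, cost: float, loss: float, act: bool) -> float:
    """Expected expense: the sure cost if acting, the expected loss if not."""
    return cost if act else p_event * loss


if __name__ == "__main__":
    cost, loss = 20.0, 50.0          # hypothetical units
    p_climatology = 0.3              # long-run chance of a poor season
    p_forecast = 0.6                 # chance given an El Nino forecast

    for label, p in [("climatology", p_climatology), ("forecast", p_forecast)]:
        act = should_act(p, cost, loss)
        print(label, "act" if act else "wait", expected_expense(p, cost, loss, act))
```

In this example the forecast raises the probability enough to justify acting, yet the poor season still fails to occur 40 percent of the time. Those years are false alarms, and the rule remains sound only if users judge it over many seasons rather than by a single outcome.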

In a comprehensive review of the use of seasonal forecasts in agriculture in Australia (Hammer, 2000), five significant lessons emerged:

- Understanding and predicting responses of the agricultural system are critical.
- Application of seasonal forecasts concerns managing risks.
- Forecasting information must be directly relevant to the decision makers.
- Considerable effort must be expended to appropriately communicate probabilistic information.
- There must be further consideration of connecting agricultural and climate models.

Forest Fire Control

Short-term weather forecasts have been used for fire prediction systems in the United States since the early 1950s. The system primarily used today, the National Fire Danger Rating System, issues fire danger warnings based on once-daily weather observations and indices such as dead fuel moisture, live fuel moisture (greenness maps based on NDVI), drought maps, lower-atmospheric stability index maps, and lightning ignition efficiency values. These warnings are used to make decisions about prohibiting the building of open fires, shutting down logging activities, and allocating resources such as manpower and machinery.

Short-term weather forecasts are used in the course of a forest fire, and resource dispatch centers do sometimes use medium-term forecasts (10-30 days) to determine what kinds of resources to have on standby. Given the short-term nature of the decision-making structure for controlling forest fires, there currently is little operational use for seasonal climate forecasts, but there may be some potential for this if the U.S. Forest Service were convinced of the forecasts' predictive power, especially given the fact that there is a well-defined forest fire “season” in most regions. There is also some evidence tying the ENSO cycle with the frequency and intensity of forest fires worldwide (WMO, 1999; National Research Council, 1998). The U.S. Department of Agriculture's Forest Service/Forest Fire Laboratory prepares monthly fire weather forecasts (McCutchan et al., 1991) and a monthly “fire potential” calculated from temperature and relative humidity forecasts. These measures, however, are considered to be too spatially coarse, and their use is hampered by the generally poor accuracy of forecasts made at the monthly time scale. There has been some research on producing longer-term fire severity forecasts, but this work is still very preliminary (Roads et al., 2000).

Some of the lessons that fire managers have learned about how people actually use forecasts, which are likely applicable to a disease early warning system, include the following:

- Even with sophisticated models and other forecasting tools at their disposal, resource managers often rely primarily on expert judgment to make decisions. Likewise, personal judgment is still required to make decisions about what constitutes an acceptable risk.

- Systems that do rely primarily on expert judgment can be left in a vulnerable state if there is a shortage of people with sufficient experience (e.g., when experienced managers retire).

- In systems where several different groups are drawing on a common limited pool of resources for mobilizing preparedness and response actions, a forecast of impending risk can lead to considerable organizational tensions resulting from competition for resources.

Page 100

Hurricane Forecasting

Hurricanes are among the weather events most destructive to human society. They can cause enormous economic losses (e.g., Hurricane Andrew in 1992), and in countries with highly vulnerable populations the effects can be far more devastating, with enormous loss of life (such as occurred with Hurricane Mitch in 1998).

There is a relatively well-developed prediction system for hurricanes operated by the U.S. National Hurricane Prediction Center in Miami, Florida. The two main aspects of a hurricane that are forecast are its track and intensity, which are forecast for 24-, 48-, and 72-hour periods using suites of dynamical and empirical models. While dynamical models and statistical techniques have improved considerably over the past 20 years, significant forecasting errors still occur. As of 1997, average landfall errors were about 115 miles (for 24-hour forecasts), an improvement of only about 20 miles from 20 years earlier (Pielke and Pielke, 1997).
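To put the landfall figures in proportion, the short calculation below works out what they imply. It uses only the two approximate numbers quoted above (about 115 miles in 1997, about 20 miles better than 20 years earlier); the variable names are illustrative, and the results inherit the rounding of the source figures.

```python
# Approximate figures quoted in the text (Pielke and Pielke, 1997).
error_1997_miles = 115.0    # average 24-hour landfall error as of 1997
improvement_miles = 20.0    # reduction relative to about 20 years earlier

# Implied average error two decades earlier.
error_earlier_miles = error_1997_miles + improvement_miles

# Improvement expressed as a fraction of the earlier error.
relative_improvement = improvement_miles / error_earlier_miles

print(f"Implied earlier error: about {error_earlier_miles:.0f} miles")
print(f"Relative improvement: about {relative_improvement:.0%}")
```

In other words, two decades of model development reduced the average 24-hour landfall error by roughly one-seventh.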

It is these relatively short-term forecasts that are most critical for decision making regarding protection of life and property; however, longer-range hurricane forecasts are also produced (e.g., Gray et al., 1999). These forecasts are made in early August for the tropical cyclone season of that year (August through October). Forecasts are also made based on the state of the ENSO cycle, but these are very general forecasts that are not yet detailed enough to be of much use in decision making.

The main effects of hurricanes that reach land result from some combination of storm surges, winds, and rainfall. One of the societal problems associated with these events is that a high percentage of residents of coastal areas vulnerable to hurricanes have no personal understanding of the possible magnitudes of damage (Pulwarty and Riebsame, 1997), which affects the way they respond to hurricane warnings.

The main decisions related to hurricane prediction include issuing watches and warnings, issuing evacuation plans (and safety advice for those who do not leave), and protecting property through specific building codes and land use decisions. The evacuation orders issued for the coast of South Carolina during Hurricane Floyd in 1999 illustrate both the problem of false positives, since the hurricane did not make landfall where forecast, and the consequences of inadequate evacuation planning, which led to massive traffic congestion.

Some of the lessons learned from forecasting and responding to hurricanes that might be applicable to a disease early warning system include:

- the need to prepare the population for the possibility of false-positive forecasts;

- the challenge of educating populations that have not previously been exposed to the hazard in question; and

- the need to assess the vulnerability of different populations within one region.
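The false-positive concern in the first lesson can be quantified with two standard forecast verification measures: the probability of detection (the share of observed events that were preceded by a warning) and the false alarm ratio (the share of warnings that were not followed by an event). The sketch below computes both from a simple tally. The function name and the counts are hypothetical and are not drawn from any hurricane or disease record.

```python
def verification_scores(hits, misses, false_alarms):
    """Return (probability of detection, false alarm ratio).

    hits:         warnings issued and the event occurred
    misses:       no warning issued but the event occurred
    false_alarms: warnings issued but the event did not occur
    A score is None when its denominator is zero.
    """
    events = hits + misses
    warnings = hits + false_alarms
    pod = hits / events if events else None
    far = false_alarms / warnings if warnings else None
    return pod, far


# Hypothetical tally of past warnings; illustrative only.
pod, far = verification_scores(hits=12, misses=3, false_alarms=8)
print(f"Probability of detection: {pod:.2f}")
print(f"False alarm ratio: {far:.2f}")
```

Lowering the threshold for issuing a warning generally raises both measures at once, which is the same trade-off between lead time and predictive certainty that a disease early warning system would face.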

Page 101

Famine Early Warning System

The Famine Early Warning System (FEWS), operated by the U.S. Agency for International Development, is an information system designed to help decision makers prevent famine in sub-Saharan Africa by allowing them to better understand the causes of famine, detect changes that create famine risks, and determine appropriate famine mitigation and prevention strategies. FEWS focuses on monitoring high-risk countries where populations are particularly vulnerable to episodic food shortages. A wide variety of information is used to develop forecasts of the “food security” of these populations, including remote sensing (e.g., normalized difference vegetation index), rainfall, crop growth, crop production, and demographic, socioeconomic, and health information. FEWS provides regional and country-specific analyses, monthly bulletins integrating forecast information, and vulnerability assessments. These assessments are typically carried out in conjunction with local institutions, and the analyses and conclusions are shared with the host governments. The main steps of this system are summarized in Box 7-4.

Box 7-4

Page 102

Some of the difficulties that the operators of the system have encountered include a lack of clarity about the main uses and users of the data, a lack of coordination and structure in data collection systems, and an unclear relationship between short-term crisis intervention and activities designed to address longer-term underlying problems. Some of the critical lessons learned include the following:

- Involvement of the host country in developing the system and disseminating information is critical. Otherwise, the local population will not trust the information and it will not be used.

- The methods used must be made clear to the local population, since lack of transparency in the system also leads to distrust and lack of use.

- Even when sophisticated forecasts and modeling tools are available, people will often rely on simple intuitive information to make decisions (e.g., Is it raining?).

- Perhaps most importantly, an early warning system is of little value if it is not connected to a system for making decisions about possible responses.

FEWS offers perhaps the most relevant example of an early warning system for the public health community to consider. In fact, since malnutrition and susceptibility to disease are closely linked, it could be highly advantageous for a disease early warning system to build on the infrastructure and personal networks that FEWS has already established in many regions.