6

Research Opportunities and Requirements

Much remains to be learned about the physics and geology of earthquakes. Few problems are more scientifically challenging or more strategically relevant to the nation, and few have a greater potential for mobilizing efforts to elucidate the fundamental geological processes that shape our planet. Over the 25 years of the National Earthquake Hazard Reduction Program (NEHRP), scientists in the United States and elsewhere have made substantial strides in understanding seismic phenomena, but many key questions remain to be answered. It is fair to say that a comprehensive theory of earthquakes (one that adequately describes the dynamical interactions among faults, as well as the basic features of rupture nucleation, propagation, and arrest) does not yet exist. The quest for such a theory is the principal driver for basic research in the field and the primary motivation for the geosystems approach.

Basic research on this problem connects closely with the practical issues in earthquake risk reduction through the common aim of forecasting earthquakes and anticipating their effects. Economic losses from earthquakes are escalating owing to rapid urbanization in tectonically active areas (Figure 1.2). As a practical matter, the nation must counter the threat by redoubling its efforts to prepare for and respond to earthquakes. The Committee on the Science of Earthquakes posed three questions regarding the Earth science component of this national effort: (1) What are the primary goals for the next decade of earthquake research? (2) What resources will be needed to achieve these goals? (3) In what areas can technological investments accelerate progress? This chapter presents the committee consensus on these issues in nine key areas where better knowledge is needed. The order of topics reflects the organization of the earthquake research effort laid out in Chapters 3 through 5 and is not intended to imply a prioritization.

6.1 FAULT CHARACTERIZATION

Almost all destructive earthquakes are generated by the sudden slippage of faults near the Earth’s surface. Most of the dangerous faults in the continental crust (i.e., those that slip at average rates greater than a few millimeters per year) can be identified through a combination of geologic, geodetic, and seismologic measurements. New technologies in all three fields have enhanced the ability to locate active faults and assess their seismogenic potential (Chapter 4). These advances now warrant a substantial expansion in regional data gathering, including the densification of seismic and geodetic monitoring systems and more intense efforts to mine the geological record of fault activity. The goal should be a comprehensive catalog of fault information.

Goal: Document the location, slip rates, and earthquake history of dangerous faults throughout the United States.

Fault characterization at the detail required for comprehensive seismic hazard analysis will require nationwide efforts to improve capabilities in the three main observational areas of seismology, geodesy, and geology:

- a national seismic network capable of recording all earthquakes down to moment magnitude (M) 3 with fidelity across the entire seismic bandwidth and with sufficient density to determine the source parameters, including focal mechanisms, of these events; the location threshold for regional networks should reach M 1.5 in areas of high seismic risk;
- geodetic instrumentation for observing crustal deformation within active fault systems with enough spatial and temporal resolution to measure all significant motions, including aseismic events and the transients before, during, and after large earthquakes; and
- programs of geologic field study to quantify fault slip rates and determine the history of fault rupture over many earthquake cycles.

Major programs for augmenting seismic and geodetic instrumentation have been proposed by the NEHRP science agencies. The Advanced National Seismic System (ANSS), to be deployed by the U.S. Geological Survey (USGS), will upgrade the U.S. National Seismographic Network from 56 to 100 stations and modernize regional seismic networks (Box 6.1). These components of the ANSS plan, if brought into full operation, would furnish the instrumental system needed to satisfy the seismological objective stated above.

BOX 6.1 Advanced National Seismic System

A major initiative is under way to increase the number of seismographs deployed in the United States, with an emphasis on urban areas with significant seismic risk.1 Plans for the ANSS call for doubling the size of the U.S. National Seismographic Network, upgrading regional earthquake monitoring with 1000 new stations, and installing 6000 strong-motion instruments in cities with moderate and high seismic risk. Half of the urban instruments would be ground based (free-field), and the other half would be located in buildings and other structures of engineering interest. Additional components include network operation and data distribution centers and an array of portable seismographs for targeted (e.g., postearthquake) studies. The ANSS is designed to bridge the separation between strong-motion seismology and regional network seismology by recording ground motions over the broad range of frequencies and amplitudes required by both seismologists and engineers. The data collected in major earthquakes will allow engineers to study the strong-motion response of a diverse collection of structures, and they will permit seismologists to invert for the detailed slip history on a fault and thus constrain the fundamental processes involved in rupture dynamics. The high density of stations in urban areas, most of which are located on sedimentary basins, will enable seismologists to map site response at the fine scales needed to understand basin and near-surface effects. Moreover, the high density of stations proposed within certain buildings would help calibrate and improve predictive engineering models of near-failure and nonlinear behaviors.

The ANSS will be a real-time network that will broadcast information about the location and magnitude of each earthquake. It will also provide near-real-time maps of ground shaking and spectral response parameters using the ShakeMap procedures,2 which are proving valuable for emergency response and rapid assessment of damage and losses. The regional and national committees implementing ANSS include representatives of regional networks, engineering groups, and other users. The ANSS modernization effort will cost approximately $170 million, and its annual operational costs are estimated to be about $47 million. Congress appropriated $1.6 million in FY 2000 to improve real-time monitoring and reporting of earthquakes, $4 million in FY 2001 to install real-time instruments in several key cities, and $3.9 million in FY 2002. An additional $3.9 million has been requested in the President’s FY 2003 budget.

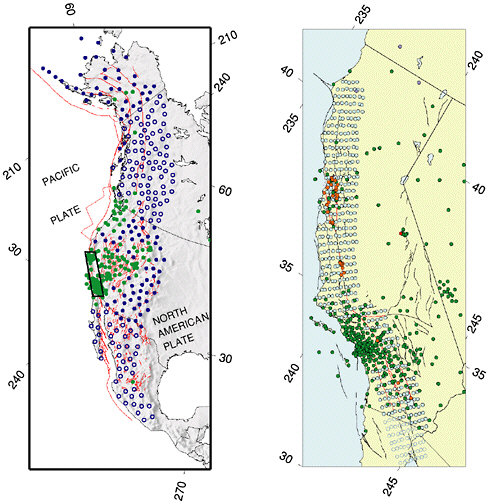

Particular opportunities exist for high-resolution geodetic measurements near active faults and other regions of concentrated deformation. The multiagency EarthScope initiative (Box 6.2) will provide denser and more complete geodetic coverage of North America. The Plate Boundary Observatory (PBO) will expand existing geodetic networks with additional permanent Global Positioning System (GPS) stations and campaign-style observations, allowing secular deformations to be separated from the transient signals associated with individual earthquakes and filling major gaps in measurements of the western United States plate boundary deformation zone. GPS receivers located with millimeter precision over baselines of thousands of kilometers will be deployed to map long-term strain rates across the width of the Pacific-North American plate boundary, while arrays of GPS stations will be used to measure the short-term deformations associated with volcanoes and earthquakes (Figure 6.1). PBO will also augment the presently sparse array of borehole strainmeters and seismometers along the main active faults from Alaska to Mexico to better characterize transient tectonic strain signals. Another geodetic component of EarthScope is a satellite-based system for interferometric synthetic aperture radar (InSAR) imaging, proposed as a joint initiative between the National Aeronautics and Space Administration (NASA), National Science Foundation (NSF), and the USGS. InSAR will be used to map decimeter-level deformations of fault ruptures continuously over areas tens to hundreds of kilometers wide, as well as a range of other phenomena such as strain accumulation between earthquakes, magma inflation of volcanoes, and ground subsidence. InSAR data are currently available to U.S. researchers only from foreign satellite systems, and the amount of data available is limited. A dedicated U.S. mission with free access to InSAR data is needed to complement PBO and exploit the exceptional scientific promise of spatially contiguous deformation mapping.
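The separation of secular motion from earthquake-related signals described above can be illustrated with a minimal least-squares sketch. It assumes a single GPS position component modeled as an intercept, a constant secular velocity, and a step at a known earthquake time; the function name `fit_trend_and_step` and the synthetic numbers are hypothetical, and real analyses would add seasonal terms, postseismic transients, and more realistic noise models.

```python
import numpy as np


def fit_trend_and_step(t, x, t_eq):
    """Least-squares fit of x(t) = a + v*t + s*H(t - t_eq).

    t    : observation times (years)
    x    : one position component (mm)
    t_eq : time of the earthquake (years), assumed known
    Returns (a, v, s): intercept (mm), secular velocity (mm/yr),
    and coseismic offset (mm).
    """
    t = np.asarray(t, dtype=float)
    x = np.asarray(x, dtype=float)
    step = (t >= t_eq).astype(float)                  # Heaviside step at t_eq
    G = np.column_stack([np.ones_like(t), t, step])   # design matrix
    coeffs, *_ = np.linalg.lstsq(G, x, rcond=None)
    a, v, s = (float(c) for c in coeffs)
    return a, v, s


# Hypothetical daily series: 30 mm/yr of secular motion, a 45 mm coseismic
# jump at t = 3 yr, and 1.5 mm of white noise.
t = np.arange(0.0, 6.0, 1.0 / 365.25)
rng = np.random.default_rng(0)
x = 2.0 + 30.0 * t + 45.0 * (t >= 3.0) + rng.normal(0.0, 1.5, t.size)

a, v, s = fit_trend_and_step(t, x, t_eq=3.0)
print(f"secular velocity = {v:.1f} mm/yr, coseismic offset = {s:.1f} mm")
```

Because the step is estimated jointly with the trend, a long record on both sides of the earthquake is what keeps the secular velocity from absorbing part of the coseismic offset.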

No initiative comparable to ANSS and EarthScope exists to organize the gathering of much-needed geologic data. Current plans call for the sponsorship of geologic field work in conjunction with the deployment of all four instrumental components of the EarthScope system. It is crucial that this planning provide mechanisms for the compilation and synthesis of fault-related data using geographic information systems and other information technologies. Geologic data tend to be more diverse and require more complex semantics and descriptive metadata to place them in the appropriate scientific context than the instrumental time series of seismology and geodesy. Perhaps owing to this complexity, few resources have thus far been allocated to consolidate geological information into community data bases. There is now a critical need for a substantial data-basing effort, ideally as a component of larger efforts now being discussed in the new field of “geoinformatics.”

BOX 6.2 EarthScope Initiative

EarthScope is an initiative to build a network of multipurpose instruments and observatories that will significantly expand capabilities to observe the structure and active tectonics of the North American continent.1 The initiative, which was proposed by NSF with participation from NASA, USGS, and the Department of Energy, will deploy four new observational facilities: USArray, the San Andreas Fault Observatory at Depth (SAFOD), the Plate Boundary Observatory (PBO), and a satellite-based InSAR imaging system.

A recent National Research Council report endorsed all four components of EarthScope and recommended that they be implemented as quickly as possible.2 Deployment costs for the EarthScope instrumental systems are estimated to be $64 million for USArray, $17.4 million for SAFOD, $91.3 million for PBO, and $245 million for InSAR. Data analysis and management expenditures are expected to be $15 million to $20 million per year. SOURCE: National Research Council, Basic Research Opportunities in Earth Science, National Academy Press, Washington, D.C., 154 pp., 2001.

FIGURE 6.1 Map showing approximate configuration of the Plate Boundary Observatory. Left panel: Backbone array of continuous GPS receivers will capture the long-wavelength decadal field; instrument spacing varies between 100 and 200 kilometers. Filled blue circles give presently planned locations of stations in the United States; open blue circles, in Canada and Mexico. Existing sites are shown in green. Right panel: Strawman distribution of continuous GPS and strainmeters for an instrument cluster to cover the San Andreas fault system, comprising 400 new GPS receivers and 175 new strainmeters. Filled blue circles give presently planned locations of GPS stations in United States; open blue circles, in Mexico. Existing sites are shown in green. Existing strainmeters are shown in red. New strainmeters would be deployed along the most seismogenic portions of San Andreas fault system, highlighted in red. SOURCE: PBO Steering Committee, The Plate Boundary Observatory: Creating a four-dimensional image of the deformation of western North America, White paper providing the scientific rationale and deployment strategy for a Plate Boundary Observatory based on a workshop held October 3-5, 1999. Available at <http://www.earthscope.org>.

The long-term slip rates of most major faults in North America are either unknown or, at best, constrained by geologic measurements at only one or two sites. Geologic field work, combined with precise accelerator mass spectrometry dating using ¹⁴C and cosmogenic isotopes (³⁶Cl, ¹⁰Be, ²⁶Al), is necessary to quantify late-Pleistocene and Holocene slip rates on major faults. Similarly, deformation rates at longer (Quaternary to Tertiary) time scales, as delineated by ⁴⁰Ar/³⁹Ar, fission-track, and uranium-thorium-helium dating, are required to resolve the evolution of slip and rock-uplift rates. The geologic mapping of faults and the measurement of fault slip rates and prehistoric events are coordinated by the USGS, state geological surveys, and multi-institutional research organizations, such as the Southern California Earthquake Center (SCEC). However, additional programmatic resources will be needed to characterize active faulting at the comprehensive level envisaged in this report.
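The arithmetic behind a geologic slip rate is simple, but its uncertainty matters for hazard analysis. The sketch below assumes a single offset landform whose offset and age carry independent Gaussian uncertainties, and propagates them to first order; the function name and the 120 ± 10 m offset and 15 ± 1.5 kyr age are hypothetical values chosen only for illustration. An offset in meters divided by an age in thousands of years gives a rate directly in millimeters per year.

```python
import math


def slip_rate(offset_m, offset_err_m, age_ka, age_err_ka):
    """Slip rate and 1-sigma uncertainty from one dated offset feature.

    Assumes independent Gaussian errors (first-order propagation).
    Units: offset in m, age in kyr, so the rate comes out in mm/yr
    (1 m/kyr = 1 mm/yr).
    """
    rate = offset_m / age_ka
    rel_err = math.hypot(offset_err_m / offset_m, age_err_ka / age_ka)
    return rate, rate * rel_err


# Hypothetical terrace riser offset 120 +/- 10 m, dated at 15 +/- 1.5 ka
rate, err = slip_rate(120.0, 10.0, 15.0, 1.5)
print(f"{rate:.1f} +/- {err:.1f} mm/yr")  # 8.0 +/- 1.0 mm/yr
```

With a 10 percent age error and an 8 percent offset error, the rate uncertainty is about 13 percent, which is why precise dating and multiple sites per fault matter.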

Slip-rate data are especially lacking in contractional provinces, where many questions remain about how strain is partitioned among the major faults and between seismogenic faults and aseismic folding. New techniques in tectonic geomorphology could play a major role in addressing these issues. The evolving geomorphic character of former depositional surfaces, inferred by combining detailed field mapping and geochronology with precise digital elevation models, is particularly powerful in assessing the patterns of deformation associated with blind thrust faults, where the absence of surface ruptures confounds traditional paleoseismic approaches. Airborne laser altimetry using light detection and ranging (LIDAR) systems can be used to investigate faulting and the surface deformation caused by buried faults. Shaded-relief maps generated from the Shuttle Radar Topography Mission’s 30-meter data could be the basis for mapping Earth’s active faults, folds, and seismically induced landforms between 60 degrees north and 60 degrees south. These data could also provide the topographic component of global seismic slope-stability maps, created at scales useful for long-term regional land-use planning. Significant impediments to the use of these new technologies for earthquake science include the cost of data, national security restrictions on availability, inadequate training, and the lack of cooperative programs.

Topographic data and analyses are necessary but not sufficient to understand the actively deforming lithosphere. In many cases, seismic reflection, deep and shallow boring, and other technologies are required to investigate the subsurface. Remote-sensing geophysical techniques, such as active-source seismic reflection and refraction and gravity maps, are valuable for understanding how surface maps of the strain field from GPS, geologic mapping, and geomorphology continue into the subsurface. Heretofore, subsurface data for regional neotectonic studies have been scavenged principally from collections made by the resource extraction industry. Programs designed specifically to collect high-resolution subsurface images using the three-dimensional techniques developed in the search for petroleum could advance earthquake science significantly.

Earthquakes occurring beneath the oceans pose significant hazards to the world population, which is becoming increasingly concentrated along continental coastlines. The main risk in the coastal zones of Cascadia, Alaska, Japan, and Indonesia, for example, arises from thrust faults that intersect the surface many kilometers offshore but are fully capable of generating destructive ground motions and tsunamis. To date, most earthquake research has relied on data from arrays of seismometers, geodetic positioning of benchmarks, and geologic mapping confined to land areas. A major objective of earthquake science should therefore be to extend observational systems and data bases into the offshore environments (Box 6.3). For example, bathymetric maps made with various new technologies have revealed ancient slumps and the traces of active faults on the seafloor. Systematic bathymetric mapping in active regions could reveal structures with significant seismogenic or tsunamigenic potential.

BOX 6.3 Marine-Based Earthquake Studies

The oceans offer natural earthquake laboratories and research opportunities not available on land. The geologic structure and history of the oceanic lithosphere, which are considerably different from and usually much simpler than those of the continents, provide an excellent starting place for understanding the fundamentals of fault-zone processes. The seismogenic zone of major thrust faults can be sampled directly only by drilling in the offshore region. The international Ocean Drilling Program has already drilled a number of accretionary prisms to provide ground truth for three-dimensional structural models and to sample the active décollement. Ship-based acoustic mapping and seismic reflection and refraction surveys can characterize the three-dimensional structure of marine fault systems at a fraction of the cost of terrestrial surveys. The NSF-sponsored Margins Program has placed a high priority on imaging plate boundaries in the offshore region, and it would be extremely advantageous to follow up such studies with earthquake observatories.

Although offshore earthquake observatories are considerably more expensive than land-based stations, there are no longer major technological impediments to installing them as an integral part of plate boundary networks. Such installations address all of the major aspects of the earthquake problem, but they are especially useful for determining how lithospheric deformation is controlled by lithospheric architecture in relatively simple and well-imaged geologic environments, understanding the details of rupture nucleation and propagation in major thrust systems, and characterizing the strain cycle in regions with the highest rates of relative plate motion. Project NEPTUNE, a proposed fiber-optic observatory off the Pacific Northwest,1 provides an ideal opportunity to establish a dense network of submarine seismic and geodetic stations in a region of high seismic risk. To be most effective, NEPTUNE data should be integrated with observations from the proposed Plate Boundary Observatory.2 Deployment of state-of-the-art broadband stations on the ocean floor would also provide better coverage and resolution of seismic sources worldwide, as well as more complete tomographic coverage of the Earth’s interior structure, which is a high priority of the International Ocean Network program. Much like the Program for Array Seismic Studies of the Continental Lithosphere (PASSCAL) deployments on the continents, the semipermanent or temporary deployment of dense arrays of broadband ocean bottom seismometers can complement the sparse permanent stations for the investigation of regional seismicity on oceanic plate boundaries and in intraplate settings. Plans for the development of a PASSCAL-type facility for broadband deployments on the ocean floor are under way.

Seafloor geodesy is an especially challenging but potentially rewarding area for new research. Absolute gravimeters promise precise vertical positioning on the seafloor.3 Horizontal positioning relies on acoustic ranging to surface floats precisely positioned by GPS.4 The acoustic link is the largest source of error, owing to uncertainties in sound velocity and currents, but despite its lower overall accuracy, such data would still be quite useful for submarine thrust faults with high rates of convergence.

6.2 GLOBAL EARTHQUAKE FORECASTING

By combining studies of the geological record with seismic and geodetic monitoring, it is possible to forecast which fault systems will produce large earthquakes over long periods of time (decades to centuries). This type of long-range forecasting is essential for seismic hazard analysis, and further work on the problem should receive a high priority. Such research should address the dynamics of rupture propagation over multiple fault segments, the irregularity of the earthquake cycle, and the tendency of earthquakes to cluster in space and time. The diversity of earth-

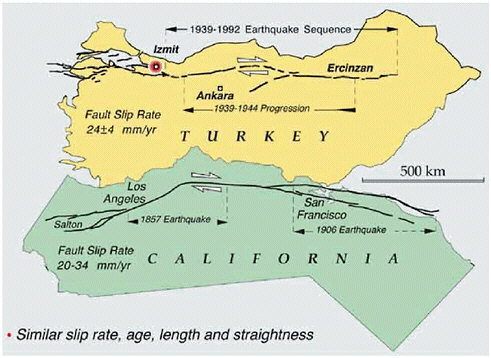

quakes and their geological environments necessitates a global approach. Great earthquakes (M = 8) are infrequent in the United States, but events of this magnitude occur about once per year on a worldwide basis. The experience collected through global studies of seismicity is therefore crucial to earthquake forecasting at the upper limits of the magnitude range. For example, although the Cascadia subduction zone has not produced a great earthquake in historical times, much can be inferred about the prospects of future events from the study of subduction-zone seismicity in other regions. Likewise, an improved understanding of the San Andreas fault will likely come from new knowledge about analogous strike-slip faulting in New Zealand, Turkey, and China, or perhaps from the study of the anomalous, slow earthquakes that characterize oceanic transform faults (Figure 6.2).

Goal: Forecast earthquakes on a global basis by specifying accurately the probability of earthquake occurrence as a function of location, time, and magnitude, as well as the magnitude limits and other characteristics of likely earthquakes in a given place.

Current forecasting schemes rely on controversial assumptions about stress, deformation, and earthquakes (e.g., characteristic earthquake behavior), so the immediate objective should be to formulate rigorous statistical tests of such forecasts. The evaluation of various forecasting methods can then proceed as new earthquakes occur and past ones are discovered, and the results can be used to improve the ideas and assumptions behind the forecasts. Global scope is important for capturing enough earthquakes to test hypotheses rapidly and to sample different tectonic and stress conditions.
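One widely used way to make such tests rigorous is to express a forecast as expected earthquake counts in space-magnitude bins and to score the observed catalog by its Poisson likelihood, then compare that score with the scores of catalogs simulated from the forecast itself. The sketch below assumes independent Poisson bins and strictly positive forecast rates; the function names and example rates are hypothetical, and it illustrates the idea rather than any specific testing protocol.

```python
import numpy as np


def poisson_log_likelihood(rates, counts):
    """Joint log-likelihood of bin counts under independent Poisson rates.

    rates  : expected number of events per bin (must be > 0)
    counts : observed counts, shape (n_bins,) or (n_catalogs, n_bins)
    """
    rates = np.asarray(rates, dtype=float)
    counts = np.asarray(counts, dtype=int)
    # Lookup table of ln(n!) for n = 0..max(counts), from cumulative sums of ln k
    max_n = int(counts.max())
    log_fact = np.concatenate(([0.0], np.cumsum(np.log(np.arange(1, max_n + 1)))))
    return np.sum(-rates + counts * np.log(rates) - log_fact[counts], axis=-1)


def likelihood_quantile(rates, counts, n_sim=10_000, seed=0):
    """Return (observed log-likelihood, fraction of simulated catalogs whose
    log-likelihood is <= the observed one). A very small fraction means the
    observed catalog is unusually unlikely under the forecast."""
    rng = np.random.default_rng(seed)
    observed = float(poisson_log_likelihood(rates, counts))
    simulated = rng.poisson(rates, size=(n_sim, len(rates)))
    sim_ll = poisson_log_likelihood(rates, simulated)
    return observed, float(np.mean(sim_ll <= observed))


# Hypothetical forecast for four bins and an observed catalog
rates = [0.5, 1.0, 2.0, 0.1]
counts = [1, 1, 2, 0]
ll, gamma = likelihood_quantile(rates, counts)
print(f"log-likelihood = {ll:.2f}, quantile = {gamma:.2f}")
```

Scoring many forecasts against the same catalog in this way lets competing assumptions (for example, characteristic versus Gutenberg-Richter magnitude distributions) be compared as new earthquakes accumulate, which is where global scope pays off.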

Earthquake forecasting requires the basic information on fault structure and kinematics described in the previous section. The Global Seismic Network (GSN) deployed by the Incorporated Research Institutions for Seismology and the USGS just recently reached its design goal of 125 stations; in conjunction with other networks, it is furnishing unprecedented data on the source processes during major earthquakes in remote areas (see Section 4.1). Another monitoring network of obvious importance to global forecasting is the International GPS Service, which furnishes a well-defined global reference frame for regional studies of tectonic deformation (1). These facilities deserve long-term support and, in the case of the GSN, extension to include seafloor observatories (Box 6.3).

Fault and deformation data are not yet adequate in many seismically active parts of the world. However, new and recently expanded programs are beginning to provide these data on a global scale, including global and regional earthquake monitoring, regional strain-rate measurements on many active plate boundaries, paleoseismic investigations of earthquake series on major faults throughout the world, neotectonic studies and satellite topography to map and characterize faults on land and near shore, and marine geophysical surveys to study faults on continental margins and in ocean basins.

FIGURE 6.2 Comparison between major, plate-bounding active strike-slip faults in California (San Andreas fault) and Turkey (North Anatolian fault) showing similarities in length, slip rate, and general geometry of the fault zones. Both faults have a history of frequent earthquakes of M 7 and higher, with irregular time intervals of decades to centuries between major events. The westward progression of earthquake epicenters in northern Turkey, beginning in 1939 and culminating in the Izmit earthquake of 1999, is reason for concern because the city of Istanbul (population 15 million) lies only 100 kilometers to the west of the Izmit epicenter. Major devastation and loss of life are to be expected if earthquake activity migrates westward into this urban area. It is not known if similar patterns in earthquake migration might occur along the San Andreas fault, but this question could be addressed through paleoseismological studies of San Andreas earthquakes over the last 10,000 years. SOURCE: R. Stein, U.S. Geological Survey.

Given the scope of the problem, broad-based international collaboration is essential. Pioneering efforts such as the Global Seismic Hazard Assessment Program (Section 3.3), the Risk Assessment Tools for Diagnosis of Urban Areas Against Seismic Disasters, the Global Strain Rate Map Project of the International Lithosphere Project (ILP), the Global Fault Mapping Project, and the ILP Working Group on Earthquake Recurrence Through Time are providing uniform standards and data access, and they should be expanded with aggressive data-gathering efforts that exploit the new technologies described in this report.

6.3 FAULT-SYSTEM DYNAMICS

Much less is known about the feasibility of earthquake prediction over intervals of years to decades than about long-term forecasting. No algorithm for intermediate-term prediction has unequivocally demonstrated predictive skill at a statistically reliable level. However, there are both observational and theoretical reasons to believe that large-scale failures within some fault systems may be predictable on intermediate time scales, provided that adequate knowledge of the history and present state of the system can be obtained. It is not yet clear whether probabilistic forecasting methods can be devised that take advantage of this potential predictability, but such methods could contribute significantly to the reduction of earthquake losses. Therefore, basic research on the issue should be vigorously pursued, including a broad spectrum of research directed toward gaining a better fundamental understanding of fault-system dynamics.

Goal: Understand the kinematics and dynamics of active fault systems on interseismic time scales, and apply this understanding in constructing probabilities of earthquake occurrence, including time-dependent (non-Poissonian) earthquake forecasting.
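The difference between time-independent (Poisson) and time-dependent (renewal) probabilities can be made concrete with a short calculation. The sketch below assumes a lognormal distribution of recurrence intervals, parameterized by a mean recurrence time and a coefficient of variation; other renewal distributions, such as the Brownian passage-time model, are also used in practice, and the lognormal is chosen here only because its CDF can be written with `math.erf`. All function names and parameter values are hypothetical.

```python
import math


def poisson_prob(window, mean_recurrence):
    """Time-independent probability of at least one event in `window` years."""
    return 1.0 - math.exp(-window / mean_recurrence)


def lognormal_cdf(t, mean_recurrence, cv):
    """CDF of a lognormal recurrence-interval distribution with the given
    mean and coefficient of variation (cv = standard deviation / mean)."""
    if t <= 0.0:
        return 0.0
    sigma = math.sqrt(math.log(1.0 + cv**2))
    mu = math.log(mean_recurrence) - 0.5 * sigma**2   # makes the mean equal mean_recurrence
    return 0.5 * (1.0 + math.erf((math.log(t) - mu) / (sigma * math.sqrt(2.0))))


def renewal_prob(elapsed, window, mean_recurrence, cv):
    """Conditional probability of an event in (elapsed, elapsed + window],
    given that none has occurred in the `elapsed` years since the last one."""
    f0 = lognormal_cdf(elapsed, mean_recurrence, cv)
    f1 = lognormal_cdf(elapsed + window, mean_recurrence, cv)
    return (f1 - f0) / (1.0 - f0)


# Hypothetical fault: mean recurrence 150 yr, CV 0.5, 120 yr since the last event
p_poisson = poisson_prob(30.0, 150.0)
p_renewal = renewal_prob(120.0, 30.0, 150.0, 0.5)
print(f"30-yr probability: Poisson {p_poisson:.2f}, lognormal renewal {p_renewal:.2f}")
```

With these assumed values the renewal model gives a higher 30-year probability than the Poisson model because the elapsed time is approaching the mean recurrence interval; shortly after an earthquake the ordering reverses.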

The study of fault systems relies heavily on the information supplied by earthquake geology, particularly paleoseismology and tectonic geomorphology. A good example is the long-standing issue of regional earthquake clustering, in which periods of high earthquake activity (“seismic storms”) are separated by periods of relative quiescence. Clustering is clearly crucial to earthquake forecasting, yet it is poorly constrained by the short catalogs of instrumental seismology. Paleoseismic techniques can be used to identify sequences of slip events at particular points on a fault, but these events must be precisely correlated in time and space to investigate clustering. The dense sampling and precise dating needed for this task are still lacking even along well-studied faults such as the San Andreas.
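A common first-order measure of clustering at a single paleoseismic site is the coefficient of variation (CV) of the intervals between dated events: values near 0 indicate quasi-periodic recurrence, values near 1 are consistent with a Poisson process, and values well above 1 indicate clustering. The sketch below assumes event ages are known exactly; in practice, dating uncertainties and the small number of events make such estimates highly uncertain. The function name and event dates are hypothetical.

```python
import statistics


def recurrence_cv(event_years):
    """Coefficient of variation (sample std / mean) of inter-event intervals."""
    years = sorted(event_years)
    intervals = [b - a for a, b in zip(years, years[1:])]
    if len(intervals) < 2:
        raise ValueError("need at least three dated events")
    return statistics.stdev(intervals) / statistics.mean(intervals)


periodic = [0, 100, 200, 300, 400]
clustered = [0, 10, 20, 300, 310, 320]   # two bursts separated by a long lull
print(f"periodic CV = {recurrence_cv(periodic):.2f}")    # periodic CV = 0.00
print(f"clustered CV = {recurrence_cv(clustered):.2f}")  # clustered CV = 1.89
```

A single-site CV cannot distinguish regional clustering from local irregularity, which is why the text stresses correlating events across sites and faults.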

Seismologic data are also essential for testing hypotheses regarding earthquake clustering, including foreshocks and aftershocks. Modeling seismicity on a fault network relies on accurate and complete seismic catalogs for the recognition of regional patterns in seismicity and for detailed studies of specific earthquake sequences. Upgrading the regional networks proposed as part of the ANSS will greatly facilitate seismological studies of fault systems in the United States. These instrumental improvements will enhance earthquake information and encourage the development of new seismological products, such as the cataloging of fault planes, rupture lengths, and slip propagation directions for moderate-size earthquakes.

Better structural data are needed on fault segmentation, along with an improved mechanical understanding of the role of segmentation in fault rupture. Work on fault-zone complexity suggests fundamental differences in behavior between mature and immature faults. If segment boundaries play a key role in the termination of ruptures, then highly segmented faults may tend to be more characteristic in their behavior or at least more predictable in the lateral extent of future ruptures. A long, smooth fault such as the San Andreas may not have any “hard” segment boundaries, making the size of ruptures more sensitive to time-dependent stress heterogeneities.

Changes in Coulomb stress parameters resulting from large earthquakes have been invoked as a quasi-static mechanism for modifying seismicity rates. If such stress interactions significantly affect the timing of earthquakes on nearby faults, they must be accounted for in any model of earthquake recurrence. An important issue is the role of time-dependent phenomena, such as transient fault creep, fault healing, poroelasticity, and viscoelasticity, in stress transfer. The best constraints on these processes come from near-fault deformations following large earthquakes, which can be measured using GPS and InSAR geodesy.
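The Coulomb stress change on a receiver fault is commonly written as ΔCFF = Δτ + μ′Δσn, where Δτ is the change in shear stress resolved in the slip direction, Δσn is the change in normal stress (positive for unclamping), and μ′ is an effective friction coefficient. The sketch below resolves an assumed stress-change tensor onto a receiver fault; the tension-positive sign convention, the value μ′ = 0.4, the function name, and the example tensor are assumptions for illustration, not values from this report.

```python
import numpy as np


def coulomb_stress_change(d_sigma, normal, slip, mu_eff=0.4):
    """Coulomb failure stress change on a receiver fault.

    d_sigma : 3x3 symmetric stress-change tensor (MPa), tension positive
    normal  : normal vector of the receiver fault plane
    slip    : slip direction within that plane (assumed perpendicular to normal)
    mu_eff  : effective friction coefficient (assumed)
    Returns (d_cff, d_tau, d_sigma_n) in MPa; positive d_cff moves the
    fault toward failure.
    """
    n = np.array(normal, dtype=float)      # copies, so inputs are not modified
    s = np.array(slip, dtype=float)
    n /= np.linalg.norm(n)
    s /= np.linalg.norm(s)
    traction = np.asarray(d_sigma, dtype=float) @ n   # traction change on the plane
    d_sigma_n = float(traction @ n)                    # normal part (unclamping > 0)
    d_tau = float(traction @ s)                        # shear part in the slip direction
    return d_tau + mu_eff * d_sigma_n, d_tau, d_sigma_n


# Hypothetical stress change: 0.1 MPa of shear and 0.2 MPa of added compression
d_sigma = [[0.0, 0.1, 0.0],
           [0.1, -0.2, 0.0],
           [0.0, 0.0, 0.0]]
d_cff, d_tau, d_sn = coulomb_stress_change(d_sigma, normal=[0, 1, 0], slip=[1, 0, 0])
print(f"dCFF = {d_cff:.3f} MPa")  # dCFF = 0.020 MPa
```

Here the added compression partly offsets the shear loading; time-dependent processes such as those listed above would modify this static estimate over the months to years after a large earthquake.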

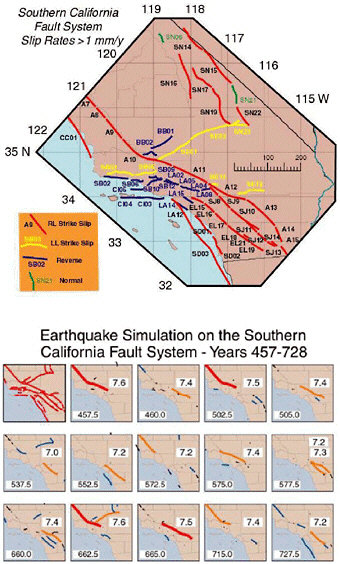

FIGURE 6.3 Prototype “earthquake simulator” for southern California, in which fault driving stress is balanced against fault frictional resistance using a two-dimensional, quasi-static approximation. Top panel shows faults included in the simulation; colors indicate faulting style. Bottom panel plots large (M > 6) earthquakes calculated for a 270-year interval. Line color corresponds to different magnitudes. The numbers at the lower left of each panel indicate time in years, and the frames update on the occurrence of an M > 7 event. Physically based simulations such as these serve as useful platforms for hazard analysis and data assimilation. SOURCE: S.N. Ward, A synthetic seismicity model for southern California: Cycles, probabilities, and hazard, J. Geophys. Res., 101, 22,393-22,418, 1996. Copyright 1996 American Geophysical Union. Reproduced by permission of American Geophysical Union.

A true understanding of fault-system dynamics will be reached only by integrating the disparate observations of stress, strain, and rheology into self-consistent models that can be tested against observations not yet collected. Simulations of earthquake occurrence on fault networks are required to understand the behavior of natural fault systems and to address fundamental questions relating to earthquake occurrence, such as the effects of stress interactions and prior earthquakes in determining earthquake probability (Figure 6.3). The practical objective of this research is to develop procedures that can assimilate all information on fault-system behaviors into probabilistic forecasts and update these forecasts consistently based on seismic activity and other new information. A proper interpretation of fault systems must begin with a detailed representation of the active structural elements; in particular, it will be necessary to quantify the representation of major active faults in all three spatial dimensions. Further objectives should include the following:

- the integration of other information, such as three-dimensional seismic velocities and attenuation parameters, surface topography, surface geology, and subsurface geologic horizons, into unified structural representations of active fault systems;
- the use of these three-dimensional representations as the basis for combining all available data on geodetic velocities and fault slip rates into kinematically consistent models of deformation zones; and
- the extension of these kinematical representations to fully dynamical models that incorporate realistic rheologies, boundary tractions, and body forces. The latter can be inferred by combining surface topography and gravity with density variations measured at the surface and inferred from seismic tomography.
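The second objective above, combining geodetic velocities and fault slip rates in a kinematically consistent way, can be illustrated in its simplest form with the classic two-dimensional screw-dislocation model of interseismic deformation (Savage and Burford, 1973). A long strike-slip fault is locked from the surface to a depth D and slips freely below at a rate s, so the fault-parallel surface velocity at signed distance x is v(x) = (s/π) arctan(x/D). The sketch below fits s and D to a velocity profile by a grid search over D with a linear least-squares solve for s; the function names and synthetic values are hypothetical, and real fault-system models involve many interacting faults in three dimensions.

```python
import numpy as np


def screw_dislocation_velocity(x_km, slip_rate, locking_depth_km):
    """Fault-parallel interseismic surface velocity (same units as slip_rate)
    at signed distance x from an infinitely long strike-slip fault in an
    elastic half-space, locked above locking_depth_km and slipping below."""
    x = np.asarray(x_km, dtype=float)
    return slip_rate / np.pi * np.arctan(x / locking_depth_km)


def fit_profile(x_km, v_obs, depths_km):
    """Grid search over locking depth. For each trial depth the velocity is
    linear in slip rate, so the slip rate has a closed-form least-squares
    solution. Returns (slip_rate, locking_depth_km, rms_misfit)."""
    x = np.asarray(x_km, dtype=float)
    v = np.asarray(v_obs, dtype=float)
    best = None
    for d in depths_km:
        g = np.arctan(x / d) / np.pi              # velocity per unit slip rate
        s = float(g @ v / (g @ g))                # least-squares slip rate
        rms = float(np.sqrt(np.mean((v - s * g) ** 2)))
        if best is None or rms < best[2]:
            best = (s, float(d), rms)
    return best


# Hypothetical noise-free profile: 35 mm/yr deep slip, 15 km locking depth
x = np.linspace(-100.0, 100.0, 41)
v = screw_dislocation_velocity(x, 35.0, 15.0)
s, d, rms = fit_profile(x, v, np.arange(5.0, 30.5, 0.5))
print(f"slip rate = {s:.1f} mm/yr, locking depth = {d:.1f} km")
```

Because slip rate and locking depth trade off against each other when data are noisy or sparse near the fault, geologic slip rates are a natural independent constraint in such fits.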

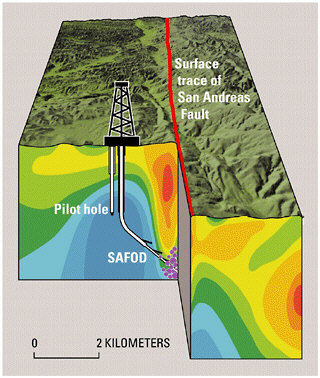

A dynamical description of fault system behavior must include the state of stress and how it changes with time. Stress can be measured directly in the near-surface environment accessible by mining and drilling, but the principal way to infer the stress field at depth is through modeling. The four observational facilities of the EarthScope program will provide critical data for this purpose, including precise measurements of surface deformation gradients and their temporal evolution (PBO and InSAR), detailed images of subsurface structures that control stress and strain heterogeneity (USArray), and better knowledge of deformation processes at depth (the San Andreas Fault Observatory at Depth [SAFOD]).
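The idea of tracking how stress accumulates and is released on a fault can be illustrated with a toy cellular-automaton fault in the style of the Olami-Feder-Christensen model. This is an assumed stand-in, far simpler than the quasi-static simulator of Figure 6.3: stresses are dimensionless, tectonic loading is uniform, and a failing cell passes a fixed fraction of its stress to its neighbors. The function and parameter names are hypothetical.

```python
import numpy as np

def simulate_fault(n_cells=100, n_events=2000, alpha=0.2, seed=1):
    """Toy 1-D cellular-automaton fault (OFC-style; not the simulator of Figure 6.3).

    Stresses are dimensionless with failure threshold 1.0. Uniform loading raises every
    cell until the most stressed one fails. A failing cell drops to zero and passes
    alpha times its stress to each neighbor, possibly triggering a cascade. With
    alpha < 0.5 every failure dissipates stress, so cascades always terminate.
    Returns the number of cell failures in each event, a proxy for event size.
    """
    rng = np.random.default_rng(seed)
    stress = rng.uniform(0.0, 1.0, n_cells)
    sizes = np.empty(n_events, dtype=int)
    for e in range(n_events):
        k = int(np.argmax(stress))
        stress += 1.0 - stress[k]      # tectonic loading between events
        stress[k] = 1.0                # guard against round-off
        unstable = [int(i) for i in np.flatnonzero(stress >= 1.0)]
        failures = 0
        while unstable:
            i = unstable.pop()
            if stress[i] < 1.0:
                continue               # already relaxed earlier in this cascade
            released = stress[i]
            stress[i] = 0.0
            failures += 1
            for j in (i - 1, i + 1):
                if 0 <= j < n_cells:   # open ends: stress passed off the edge is lost
                    stress[j] += alpha * released
                    if stress[j] >= 1.0:
                        unstable.append(j)
        sizes[e] = failures
    return sizes

sizes = simulate_fault()
print("largest event:", sizes.max(), "cells; mean size:", round(float(sizes.mean()), 2))
```

Even this toy model shows how each event depends on the stress left behind by earlier ones, which is the kind of interaction that physically based simulators are meant to capture.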

The research objectives outlined above will require (1) the ability to manipulate large data sets from geology, geodesy, and seismology; (2) the development of novel techniques for analyzing and interpreting these data sets; and (3) substantial information technology (IT) resources for developing, verifying, and maintaining community models and making them available to a heterogeneous, widely distributed group of users. The considerable experience accumulated by the petroleum industry over the last decade has shown that the construction of faithful and flexible structural representations is a difficult task, requiring significant resources and substantial reliance on advanced IT tools.

6.4 FAULT-ZONE PROCESSES

The considerations detailed in Section 5.3 highlight the importance of determining the composition, structure, and physical state of fault-zone materials and damaged border zones. At larger scales there is a need for better characterization of fault junctions and the structure and mechanical properties of fault-jog materials over which, or through which, rupture jumps in transferring slip from one fault segment to another.

Goal: Characterize the three-dimensional material properties of fault systems and their response to deformation through a combination of laboratory measurement, high-resolution structural studies, and in situ sampling and experimentation.

More information on microscale processes is needed to formulate realistic macroscopic representations of the strength variations and the dynamic response of fault materials. Laboratory research has already provided promising representations of fault friction for understanding the nucleation of slip instability and other processes taking place at low strain rates (Section 4.4). Major advances in experimental techniques and facilities will be needed to elucidate the dynamic phase of fault response, in which large slip rates and large slips may cause extreme temperature excursions and weakening by pressurization of pore fluids, melt generation, or other processes that have yet to be well documented. These phenomena can be partly addressed by high-speed rotary shear experiments, but challenges remain to study sliding under realistic triaxial stressing, to determine the effects of abrupt changes in sliding rate, and to confine rapidly heated fluids, including melt. Advanced experimental techniques (e.g., shock and impact loading, stored-energy bars, optical sensors of motion and thermal radiation, high-speed photography) in current use by scientists in other fields who study the dynamic response of materials have already contributed to understanding certain issues in dynamic friction and faulting (see Chapter 5) and should be employed more widely in laboratory earthquake studies.
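For reference, one widely used laboratory-derived representation of low-speed fault friction is Dieterich-Ruina rate-and-state friction with the aging law for the state variable. The sketch below evaluates the steady-state friction and the response to an imposed jump in slip speed. All parameter values are illustrative assumptions, and this law does not capture the high-speed weakening mechanisms (thermal pressurization of pore fluids, melting) discussed above.

```python
import numpy as np

def steady_state_friction(v, mu0=0.6, a=0.010, b=0.015, v0=1e-6):
    """Steady-state rate-and-state friction: mu_ss = mu0 + (a - b) ln(v / v0).
    With a < b (assumed here) friction drops as slip speed rises: velocity weakening."""
    return mu0 + (a - b) * np.log(v / v0)

def velocity_step(v_before, v_after, mu0=0.6, a=0.010, b=0.015, dc=1e-5, v0=1e-6,
                  total_slip=2e-4, n_steps=20000):
    """Friction after an imposed jump in slip speed (no elastic interaction).

    The state variable starts at steady state for v_before and evolves by the aging
    law dtheta/dt = 1 - V theta / dc, integrated with explicit Euler steps.
    """
    theta = dc / v_before
    dt = (total_slip / v_after) / n_steps
    mu = np.empty(n_steps)
    for k in range(n_steps):
        mu[k] = mu0 + a * np.log(v_after / v0) + b * np.log(v0 * theta / dc)
        theta += dt * (1.0 - v_after * theta / dc)
    return mu

mu = velocity_step(1e-6, 1e-5)
print(f"direct jump {mu[0] - steady_state_friction(1e-6):+.4f}, "
      f"final change {mu[-1] - steady_state_friction(1e-6):+.4f}")
```

The immediate increase (the "direct effect", of size a times the log of the speed ratio) is followed by decay over the slip distance dc to a lower steady-state value when a < b, which is the ingredient that allows slip instability to nucleate.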

Detailed investigations of fault properties and processes, in particular fluid-dominated processes, are needed on spatial dimensions from centimeters to kilometers to fill the scale gap between laboratory studies of rock mechanics and regional geophysical studies of active faulting. Such localized studies might best be done by careful investments in a set of “natural laboratories,” where high-resolution methods focused on imaging fault structure and deformation processes could be combined with systematic programs for in situ sampling and experimentation. Examination of exhumed faults can reveal the mechanical importance of shear localization structures, as well as evidence of fluids and local melting. Sample extraction by ultradeep drilling is feasible, at least down to shallow seismogenic depths (2 to 6 kilometers), for many surface-breaking faults. Borehole data are needed to extrapolate results from laboratory-scale samples to natural faults, to elucidate the generation of fault-zone structure (ultracataclastic core, cracked and damaged border zone), and to clarify why some faults creep whereas others slip in large earthquakes. Deep drilling of the Nojima fault in Japan has delivered samples from a depth of 2 kilometers. The EarthScope initiative proposes to construct SAFOD, which will sample the fault, monitor its seismicity and strain, and perform in situ experiments to 4-kilometer depth at Parkfield, California (Figure 6.4), where the USGS maintains a long-term, multidisciplinary program for the study of earthquake processes.

FIGURE 6.4 Schematic cross section of the San Andreas fault zone at Parkfield, showing the SAFOD drill hole proposed as part of the EarthScope project, and the pilot hole being drilled in 2002. Violet dots represent areas of persistent minor seismicity at depths of 3 to 4 kilometers. The colors in the subsurface show electrical resistivity of the rocks as determined from surface surveys; the lowest-resistivity rocks (red) above the area of minor earthquakes may represent a fluid-rich zone. SOURCE: EarthScope Working Group.

Focusing observations on carefully chosen, well-instrumented areas facilitates the coordination of activities across multiple groups of investigators, encouraging the types of multidisciplinary studies that are essential to understanding earthquake processes and fault-system behaviors. It also provides a long-term basis for capitalizing on field-based research. If the investigations are well directed, and the data properly analyzed and archived, then the returns on previous research investments can be compounded as more data are collected. Each observational study within the natural laboratory adds to the database, improving the context for future work. Natural laboratories are well suited to field operations that are logistically complicated and expensive, as in the collection of spatially dense data sets and the monitoring of phenomena over extended time intervals. The committee endorses a recommendation in the recent National Research Council report Basic Research Opportunities in Earth Science to establish an Earth Science National Laboratory Program within the NSF (2). Many types of earthquake natural laboratories would be able to deliver new data on fault-zone processes. Pore-fluid pressurization in deep boreholes could be used to induce seismic events, which could be recorded by borehole seismometers to characterize nucleation and the early stages of rupture dynamics (a modern-day update of the Rangely oil field experiments conducted in the 1960s; see Chapter 2). Data could be collected from seismic networks in deep mines where small earthquakes are rapidly, and to some extent controllably, generated by the advance of mine faces and other underground developments.
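The principle behind fluid-injection experiments such as those at Rangely can be sketched with the Coulomb failure criterion and Terzaghi effective stress: slip is expected when the shear stress on a fault reaches its cohesion plus the friction coefficient times the normal stress minus the pore pressure. The two-dimensional sketch below computes the pore pressure at which a fault of given orientation would reach failure. Stresses are compressive-positive in MPa, and the stress values, friction coefficient, and function names are assumptions for illustration, not Rangely data.

```python
import math

def resolved_stresses(sigma1, sigma3, beta_deg):
    """Normal and shear stress on a plane whose normal makes angle beta with sigma1
    (2-D, compression positive)."""
    two_beta = math.radians(2.0 * beta_deg)
    center = 0.5 * (sigma1 + sigma3)
    radius = 0.5 * (sigma1 - sigma3)
    return center + radius * math.cos(two_beta), radius * math.sin(two_beta)

def critical_pore_pressure(sigma1, sigma3, beta_deg, mu=0.6, cohesion=0.0):
    """Pore pressure p at which tau = cohesion + mu * (sigma_n - p), the Coulomb criterion."""
    sigma_n, tau = resolved_stresses(sigma1, sigma3, beta_deg)
    return sigma_n - (tau - cohesion) / mu

# Hypothetical stresses in MPa; fault plane at 30 degrees to sigma1 (normal at 60 degrees)
pc = critical_pore_pressure(100.0, 50.0, 60.0)
print(f"failure expected once pore pressure reaches about {pc:.1f} MPa")
```

Raising pore pressure lowers the effective normal stress without changing the shear stress, which is why injection can bring a well-oriented, already stressed fault to failure.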

Synoptic studies in natural laboratories could furnish an important observational base for developing theoretical and numerical models of fault systems, and they could yield the essential data by which these models are ultimately validated. Fault zones are complex structures at all scales, and numerical simulations are required to integrate laboratory observations, field data on fault-zone structure and composition, and pore-fluid interactions to obtain constitutive representations of macroscale faulting. Simulations of fault-zone properties must account for many nonlinear phenomena, such as thermal transients during large earthquakes, that may directly alter fault strength (or even result in melting) and may couple with pore-pressure effects. Rigorous physical modeling begins with testable microscale processes and carries out the appropriate analyses that scale up through the geometric complexities of fault networks to understand the implications for natural events. Dynamical simulations at various scales will be needed to assess the discrepancies among laboratory-based friction laws, observed fault-system behaviors (e.g., earthquake productivity, postseismic response), and seismological data on large earthquakes (e.g., fracture energies, particle velocities and accelerations).

6.5 EARTHQUAKE SOURCE PHYSICS

Better knowledge of earthquake source physics on the short time scales of fault rupture will improve the understanding of how strong ground motions are generated, as well as the processes that lead up to the fault ruptures that cause earthquakes. Earthquake scientists are not optimistic about the prospects for short-term earthquake prediction schemes that are based on observations of precursory behavior. Owing to the chaotic behavior of fault nucleation and rupture, accurate prediction of the time, place, and size of specific large earthquakes may not be possible. Nevertheless, near-field observations before and during large earthquakes are too few and too limited to rule out categorically the feasibility of short-term earthquake prediction (3), and further work on the problem is warranted. The key scientific issues involve the relationships among the dynamical processes that govern the nucleation, propagation, and arrest of rupture. With greater certainty, improvements in long-term forecasting and the still-bright prospects for intermediate-term prediction will depend on understanding the dynamical connection between the evolution of the stress field on interseismic time scales of decades to centuries and the stress heterogeneities created and destroyed during the few seconds of an earthquake.

Goal: Understand the physics of earthquake nucleation, propagation, and arrest in realistic fault systems and the generation of strong ground motions by fault rupture.

The most important data for constraining rupture processes are close-in seismographic and strainmeter recordings during earthquake ruptures, combined with geologic measurements, GPS measurements, and InSAR images of the near-fault deformation field. Effective use of this information in the study of rupture physics will depend on the following:

- detailed three-dimensional models of seismic wave-speed structure needed to account for propagation effects in the waveform data;
- fine structure of fault zones from geologic mapping and remote sensing, seismicity information, and trapped-wave studies of low-velocity zones and anisotropy;
- development of new techniques to obtain experimental data on rock friction at high sliding velocities and large slips;
- better understanding of fault-zone fluid processes from geologic observations on exhumed faults and in situ observations from SAFOD and other drilling experiments; and
- constraints on the state and evolution of stress, including a better characterization of past earthquake history and stress-transfer mechanisms.

Numerical simulations are central to research in rupture dynamics, because they provide the physical basis for linking laboratory experiments and field data on small-scale fault-zone processes with large-scale observations of seismic waveforms and geodetic deformation fields. Full three-dimensional dynamical simulations are needed to address issues regarding fault-zone complexities (e.g., nonplanarity of fault surfaces, stepovers, and branches in fault networks) and the selection among competing rupture paths. Because of the wide separation between the inner scale of faulting (e.g., frictional breakdown at the rupture front, as small as 10 meters) and its outer scale (e.g., total rupture length up to hundreds of kilometers), the computational difficulties are truly enormous, requiring terascale resources from the national computational grid.
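A back-of-the-envelope count shows why this scale separation is so demanding. The sketch assumes a uniform grid with about three points across a 10-meter breakdown zone, a rupture surface 300 kilometers long and 15 kilometers deep, and a surrounding volume of 300 by 100 by 30 kilometers. These dimensions and the points-per-zone figure are illustrative assumptions; practical codes reduce the count with nonuniform or adaptive meshes.

```python
def cells_needed(extent_m, breakdown_zone_m, points_per_zone=3.0):
    """Grid spacing and uniform-grid cell count needed to resolve the breakdown zone."""
    spacing = breakdown_zone_m / points_per_zone
    count = 1.0
    for side in extent_m:
        count *= side / spacing
    return spacing, count

spacing, fault_cells = cells_needed((300e3, 15e3), 10.0)        # fault surface only
_, volume_cells = cells_needed((300e3, 100e3, 30e3), 10.0)      # surrounding crustal volume
print(f"spacing {spacing:.2f} m: {fault_cells:.2e} fault cells, {volume_cells:.2e} volume cells")
```

Under these assumptions the fault surface alone needs about 4 x 10^8 cells and a uniformly gridded volume about 2 x 10^13, before counting the many thousands of time steps.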

Rupture modeling involves nonlinear processes and geometrical complexities on various length scales, and there is no consensus methodology optimal for all aspects of the problem. A desirable simulation framework, therefore, would allow user-supplied rupture modules to be embedded in, and coupled to, a fast, simple, three-dimensional wave propagation model (e.g., a finite-difference code). With appropriate links to community structural models, this approach would furnish a framework for comparing waveform computations with recorded seismic data from past events and, thus, for improving the models, as well as the predictive simulations, for future earthquake scenarios. An important research objective is the validation of rupture-dynamics simulations using a set of “reference earthquakes” as a basis for comparison. Establishing these reference events will require (1) improved analysis of the geologic, geodetic, and seismologic observations, especially strong-motion data, from these events; and (2) the collection of additional data on the three-dimensional structure and properties of the faults that ruptured.
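The sketch below shows, in one dimension, the kind of structure such a framework might have: a staggered-grid velocity-stress finite-difference solver for SH waves that accepts a user-supplied source function. It is an assumed simplification; a real rupture module would be coupled through fault boundary conditions that depend on the evolving stress, not simply injected as a prescribed force. All names are hypothetical.

```python
import numpy as np
from typing import Callable

def propagate_sh_1d(vs, rho, dx, dt, n_steps, src_index, rec_index,
                    source: Callable[[float], float]):
    """Staggered-grid velocity-stress finite differences for 1-D SH waves.

    vs, rho: shear-wave speed (m/s) and density (kg/m^3) at velocity nodes.
    source: user-supplied function of time giving a force density added at src_index,
    a stand-in for a pluggable source module. Both ends are held fixed (v = 0).
    Returns the particle-velocity history at rec_index.
    """
    if vs.max() * dt / dx > 1.0:
        raise ValueError("time step violates the 1-D CFL stability condition")
    mu = rho * vs**2
    mu_half = 2.0 / (1.0 / mu[:-1] + 1.0 / mu[1:])   # harmonic-mean rigidity at stress nodes
    v = np.zeros(vs.size)
    sigma = np.zeros(vs.size - 1)
    trace = np.empty(n_steps)
    for n in range(n_steps):
        # momentum balance: rho dv/dt = d(sigma)/dx + force density
        v[1:-1] += dt / rho[1:-1] * (sigma[1:] - sigma[:-1]) / dx
        v[src_index] += dt / rho[src_index] * source(n * dt)
        # Hooke's law: d(sigma)/dt = mu dv/dx
        sigma += dt * mu_half * (v[1:] - v[:-1]) / dx
        trace[n] = v[rec_index]
    return trace

def ricker(f_peak, t0):
    """Ricker wavelet with peak frequency f_peak (Hz), centered at t0 (s)."""
    def wavelet(t):
        arg = (np.pi * f_peak * (t - t0)) ** 2
        return (1.0 - 2.0 * arg) * np.exp(-arg)
    return wavelet

# Uniform medium, receiver 2 km from the source: direct arrival expected near 0.5 + 2.0 s
n_nodes, dt = 1001, 0.005
trace = propagate_sh_1d(np.full(n_nodes, 1000.0), np.full(n_nodes, 2000.0), 10.0, dt,
                        700, 100, 300, ricker(2.0, 0.5))
print(f"peak arrival at {np.argmax(trace) * dt:.2f} s")
```

Because the source enters only through the `source` callable, a different source description can be swapped in without touching the propagation kernel, which is the separation of concerns the framework described above would need.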

Although many of the basic scaling properties of shallow earthquakes are shared by intermediate- and deep-focus earthquakes, it appears unlikely that the same microscopic physics pertains to fault ruptures at all depths. A truly comprehensive theory of earthquakes must be able to explain the similarities and differences of earthquakes at different depths. Deep-focus events are difficult to study because the closest observations are necessarily hundreds of kilometers above the hypocenters. Global seismic networks and temporary deployments of portable seismometer arrays above active subduction zones are yielding the most direct data on natural events, while laboratory studies of deformation are furnishing the information on the microscale physics of earthquake instabilities at the high pressures and temperatures of descending slabs.

6.6 GROUND-MOTION PREDICTION

Seismic shaking is influenced heavily by the details of how seismic waves propagate through complex geological structures. Strong ground motions can be enhanced by resonances in sedimentary basins and wave multipathing along sharp geologic boundaries at basin edges, as well as by amplification due to near-site properties. Although near-site effects such as liquefaction can be strongly nonlinear, most aspects of seismic-wave propagation are linear phenomena described by well-understood physics. Therefore, if the seismic source could be specified precisely and the wave velocities, density, and intrinsic attenuation were sufficiently well known, it would be possible to predict the time history of strong motions by a numerical calculation. The research goal is to use this physics-based approach to go beyond empirical attenuation relationships in characterizing strong ground motions and their secondary effects.
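Because propagation is linear, the motion at a site can be written as a sum over parts of the fault of each part's slip history convolved with the corresponding Green's function (the site response to a unit impulse of slip rate on that subfault). The discrete sketch below assumes those Green's functions have already been computed, for example by a three-dimensional wave-propagation code; the array layout, names, and example waveforms are hypothetical. Nonlinear site effects such as liquefaction fall outside this superposition and must be handled separately.

```python
import numpy as np

def synthetic_seismogram(greens, slip_rates, dt):
    """Superpose subfault contributions: u(t) = sum_k (G_k * s_k)(t), discretized.

    greens[k]: site response to a unit impulse of slip rate on subfault k (assumed precomputed).
    slip_rates[k]: slip-rate history of subfault k, sampled at interval dt.
    """
    n_out = max(len(g) + len(s) - 1 for g, s in zip(greens, slip_rates))
    out = np.zeros(n_out)
    for g, s in zip(greens, slip_rates):
        contribution = np.convolve(g, s) * dt   # discrete approximation of the convolution integral
        out[: contribution.size] += contribution
    return out

t = np.arange(0.0, 2.0, 0.01)
g_near = np.sin(2 * np.pi * 2.0 * t) * np.exp(-2.0 * t)        # hypothetical Green's function
g_far = np.concatenate([np.zeros(50), 0.5 * g_near[:-50]])     # delayed, weaker copy
slip_rate = np.where(t < 0.5, 2.0, 0.0)                         # 1 m of slip over 0.5 s
u = synthetic_seismogram([g_near, g_far], [slip_rate, slip_rate], 0.01)
print(u.size, "samples; peak", round(float(np.abs(u).max()), 3))
```

Doubling every slip history doubles the output, and contributions from different subfaults simply add; both properties follow directly from linearity.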

Goal: Predict the strong ground motions caused by earthquakes and the nonlinear responses of surface layers to these motions—including fault rupture, landsliding, and liquefaction—with enough spatial and temporal detail to assess seismic risk accurately.

Research in this field should focus on urban areas where the consequences of large earthquakes are most severe (Box 6.4). In particular, past earthquakes have demonstrated that areas of damage are often localized in highly populated sedimentary basins near active faults. Site-specific information about the time histories of shaking will be needed for performance-based design of structures in such settings. The challenge of urban hazard mapping is to predict ground-motion effects over an extended region with an acceptable level of reliability. Ground-motion maps for the 1989 Loma Prieta and 1994 Northridge events demonstrated that while the major urban regions of California were sufficiently instrumented to determine a first-order distribution of ground motions, the networks were not dense enough to provide a direct correlation of local damage patterns with ground-motion levels. Ground-motion simulations can potentially be used to interpolate the recorded data for more detailed seismic zonation, provided that the subsurface structure is adequately characterized.

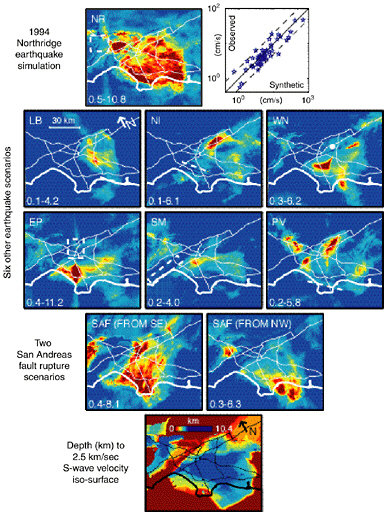

BOX 6.4 Urban Hazard Characterization

An important objective of earthquake research is to develop the capability to produce detailed maps of earthquake shaking and other seismic hazards for urban areas with high seismic risk, as well as site-specific time histories of ground shaking in these urban areas expected during large earthquakes. These hazard maps and time histories should incorporate local site response and basin effects, rupture directivity and dynamics, and time-dependent probabilities of large earthquakes on all relevant faults. The steps necessary for improving the prediction of ground motions in urban areas include the following:

Plausible objectives for the next 10 years are (1) to determine the structure of high-risk areas well enough to model the surface motions from a specified seismic source at all frequencies up to at least 1 hertz and (2) to formulate useful, consistent, stochastic representations of surface motions up to at least 10 hertz. At present, computer simulations in areas where the three-dimensional structure of the crust is best known, such as the Los Angeles region, can model the peak amplitudes only below about 0.3 hertz (Figure 6.5). To extend these calculations to the higher frequencies needed for engineering applications, a much greater volume of seismological data will be needed to map the three-dimensional structure of the crust. Future densification of urban seismic networks should strive for station spacing as short as 1 kilometer, or even less in some areas. These dense arrays should have sufficient dynamic range to record the strong shaking from large events as well as the weaker ground motions from small and moderate earthquakes. Small earthquakes recorded on such networks will furnish the dense data coverage needed for constructing three-dimensional models of sedimentary basins, while the strong-motion data will be essential for validating the ability of numerical models to predict ground shaking in future large earthquakes.
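The difficulty of pushing deterministic simulations from about 0.3 hertz toward 1 hertz and beyond can be estimated with a common rule of thumb: the grid spacing must resolve the shortest wavelength with several points, and the stable time step shrinks in proportion to the spacing, so computational cost grows roughly as the fourth power of the maximum frequency. The minimum and maximum wave speeds, domain size, duration, points per wavelength, and Courant number below are assumptions chosen for illustration.

```python
def fd_cost(f_max_hz, v_min=500.0, v_max=8000.0, domain_m=(100e3, 100e3, 30e3),
            duration_s=120.0, points_per_wavelength=6.0, courant=0.5):
    """Rough uniform-grid finite-difference cost for resolving frequencies up to f_max_hz.

    Spacing resolves the shortest wavelength v_min / f_max; the stable time step
    scales with spacing / v_max (CFL). Returns (spacing_m, cells, cell_updates).
    """
    dx = v_min / (f_max_hz * points_per_wavelength)
    cells = 1.0
    for side in domain_m:
        cells *= side / dx
    dt = courant * dx / v_max
    n_steps = duration_s / dt
    return dx, cells, cells * n_steps

for f in (0.3, 1.0, 10.0):
    dx, cells, updates = fd_cost(f)
    print(f"{f:4.1f} Hz: spacing {dx:7.1f} m, {cells:.1e} cells, {updates:.1e} cell-updates")
```

Under this scaling, going from 0.3 to 1 hertz multiplies the work by roughly (1/0.3)^4, or about 120, and going from 1 to 10 hertz by about 10^4, which is one reason stochastic representations are proposed for the highest frequencies.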

FIGURE 6.5 Peak-velocity amplification in the Los Angeles region from three-dimensional wavefield simulations. Amplifications are computed relative to a one-dimensional reference model in the frequency range from 0.1 to 0.5 hertz. Top panels show the amplification pattern computed for the 1994 Northridge earthquake (left), and the fit of the computed values to the observations (right). Middle panels show amplification patterns for eight scenario earthquakes. These simulations demonstrate that the peak velocity amplification pattern in the basin depends on the specifics of the faulting. For example, two earthquakes on the San Andreas fault, the same in every respect except for the direction of propagation, can produce very different patterns of amplification depending on how seismic waves interact with basin structure. Bottom panel shows the depth to the 2.5-kilometer-per-second isosurface in the three-dimensional structural model used to produce the simulations. SOURCE: K. Olsen, Site amplification in the Los Angeles Basin from three-dimensional modeling of ground motions, Bull. Seis. Soc. Am., 90, S77-S94, 2000. Copyright Seismological Society of America.

Instrumental improvements and densification of the regional seismic networks, as proposed in the ANSS initiative, will contribute substantially to these objectives. As currently planned, the ANSS will comprise about 6000 seismic stations in urban areas with significant seismic risk (Box 6.1). About half of these instruments will be free-field and half will be installed in buildings and other structures. This network will improve seismic hazard maps and will also enable engineers to correlate ground motions with building performance. The deployment of seismic instrumentation that records both strong and weak motions will unite the efforts of strong-motion and network seismologists, whose often-separate studies of site response, scattering, attenuation, and high-frequency radiation would benefit from enhanced collaboration.

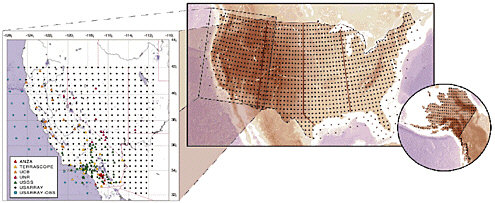

A major new source of structural data will come from the USArray component of the EarthScope project. The “Big Foot” array will provide nearly uniform structural control nationwide on lateral scales of tens of kilometers (Figure 6.6), while the high-density “flexible” array will allow the imaging of specific features at higher resolution using active-source techniques. These portable arrays can also be used to record aftershocks from larger earthquakes. In addition to providing information about rupture processes and driving stresses, such studies will yield valuable information on fault waveguides for constraining crack density, continuity of fault planes, and evolution of fault strength through the seismic cycle. Portable arrays recording background seismicity will also shed light on the effect of basins and basin edges on ground motion. Probing the detailed structure of sedimentary basins in high-seismic-risk areas will require extensive use of reflection seismology techniques using Vibroseis trucks or other high-energy sources and, at a few selected locations, measurements from deep boreholes.

In regions of high seismicity, crustal and uppermost mantle structure can be resolved using waveform data from local and regional earthquakes recorded on permanent and temporary stations. High-priority targets include the investigation of fault-related offsets of major structural features, such as the Moho discontinuity, which shed light on the rheology of the lower crust and connect observed surface motions with underlying mantle flow. A better knowledge of Moho topography would also improve the prediction of strong motions from SmS reflections at ranges of 50 to 150 kilometers. Similar data are needed on intracrustal reflectors.

FIGURE 6.6 Configuration of the transportable Big Foot seismic array proposed as a part of the USArray component of the EarthScope initiative. The underlying grid shows a regular spacing of 70 kilometers, resulting in approximately 1600 sites in the lower 48 states (actual deployment patterns will depend on site availability and therefore be less regular). USArray will begin operations in California, encompassing the SAFOD site at Parkfield. The western United States detail (left panel) shows the nominal coverage of the first full deployment of the 400 portable stations in the Big Foot array. Existing broadband stations are shown as colored symbols. SOURCE: EarthScope Working Group.

As richer seismic data sets are collected, a primary challenge will be to set up a computational framework for the systematic refinement of three-dimensional wave-speed and attenuation models and the use of these models in the calculation of synthetic seismograms. This framework should be based on a unified structural representation that includes not only seismic propagation parameters but also geologic, gravimetric, and other information on major features, particularly faults. Numerical modeling methods for three-dimensional elastic wave propagation are now fairly mature, with several efficient, capable codes in regular use; a framework thus exists for the construction of community wave propagation models that would comprise many of the functional features needed for regional-scale ground-motion simulation. However, the ground-motion problem is further complicated by the fact that source excitation and wave propagation are intimately coupled, both as forward problems (inertial effects are important in rupture dynamics) and as inverse problems (excitation and propagation must be untangled to interpret seismograms). The coupling becomes stronger as the ground-motion frequencies become higher. A better understanding of rupture dynamics, the subject of the previous section, is therefore crucial to ground-motion modeling.

Developing flexible and feasible methods for assimilating wavefield observations (e.g., from USArray and ANSS) into updated three-dimensional structural models—the structural inverse problem—is both a conceptual and a computational challenge. In principle, the inversion can be set up as a linearized perturbation to an initial three-dimensional structure, and the sensitivity of the model parameters to various data values can be computed numerically. In practice, the huge computations will require substantial theoretical work and an enhanced information infrastructure.
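A single step of such a linearized inversion can be sketched as damped (Tikhonov-regularized) least squares: given a sensitivity matrix G that relates model perturbations to data residuals, solve for the perturbation that fits the residuals while penalizing its size. Damping is one common regularization choice, assumed here rather than taken from the text; at regional scale G is far too large to form explicitly, which is part of why substantial theoretical and computational work is anticipated. The names and the random test problem are hypothetical.

```python
import numpy as np

def damped_least_squares(G, residual, damping):
    """Model update dm minimizing ||G dm - residual||^2 + damping^2 ||dm||^2."""
    n_params = G.shape[1]
    A = np.vstack([G, damping * np.eye(n_params)])
    rhs = np.concatenate([residual, np.zeros(n_params)])
    dm, *_ = np.linalg.lstsq(A, rhs, rcond=None)
    return dm

# Hypothetical problem: 40 data, 10 model parameters, random sensitivities
rng = np.random.default_rng(2)
G = rng.normal(size=(40, 10))
true_dm = rng.normal(size=10)
residual = G @ true_dm + rng.normal(scale=0.05, size=40)
for damping in (0.0, 1.0, 10.0):
    dm = damped_least_squares(G, residual, damping)
    print(f"damping {damping:5.1f}: |dm| = {np.linalg.norm(dm):.3f}")
```

Stacking the damping rows under G is equivalent to solving the regularized normal equations (G^T G + damping^2 I) dm = G^T residual, but avoids forming G^T G explicitly. Larger damping yields smaller, smoother updates at the cost of fitting the data less closely.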

Improved regional models will allow a better separation of source and propagation effects, facilitating more accurate hypocenter locations, focal mechanisms, and higher-order source parameters, including better imaging of rupture kinematics. The latter will be significant for initializing the stress field in fully dynamic rupture calculations. As the three-dimensional structures used in waveform simulations improve, the need for “site effects” to correct for incomplete descriptions of wave propagation should be reduced, which will help to isolate the true near-surface site response. Numerical codes that can account for soil nonlinearity are numerous, but computational approaches continue to evolve. The modeling framework must therefore accommodate a range of soil-modeling methodologies by developing a consistent interface for coupling ground-motion calculations to nonlinear codes.

Ground-motion prediction has the potential to greatly enhance local seismic zonation by including effects of rupture directivity, the orientation of the fault (e.g., the hanging-wall effect), and structures such as sedimentary basins, basin edges, and buried folds and faults. The development of procedures that include these effects ultimately will make earthquake scenario maps and seismic zonation more tractable, although it will greatly increase the complexity of their production. Owing to these complexities, nonscientists who could employ this information in performance-based design, risk evaluation, and emergency management need to be educated about the capabilities and implementations of research results. In particular, the procedures described above can be applied in near real time immediately following an earthquake to improve the ground-motion maps now available (Figure 1.5) for guiding emergency response.

6.7 SEISMIC HAZARD ANALYSIS

Seismic hazard analysis provides the methodology for combining all information on seismic hazards into probabilistic and scenario-based predictions of the ground motions. This input is critical to earthquake engineering and design, as well as to the predictions of human casualties, damage to the built environment, and economic losses that can be expected from future earthquakes. Much applied research is still needed to improve the earthquake forecasts, attenuation relations, and site-response factors needed to apply seismic hazard analysis techniques. The methodology of seismic hazard analysis is now fairly mature, but it is highly empirical, based on many simplifications and approximations. Current attenuation relationships fail to capture much of the variance observed in the ground motions from actual earthquakes (Section 5.6). A recent study by the Southern California Earthquake Center concluded that “any model that attempts to predict ground motion with only a few parameters will have substantial intrinsic variability. Our best hope for reducing such uncertainties is via waveform modeling based on the first principles of physics” (4). The time is right to integrate physics-based models of earthquake processes into an improved scientific framework for seismic hazard analysis and risk management that explicitly considers the time dependence of seismic phenomena.
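In its simplest form, the empirical probabilistic calculation sums, over earthquake scenarios, the annual rate of each scenario times the probability that its ground motion exceeds a given level, with lognormal scatter about an attenuation relation. The sketch below uses a made-up attenuation relation and made-up scenario rates, and it converts annual rates to exceedance probabilities with the time-independent Poisson assumption that the goal below seeks to move beyond.

```python
import math

def prob_exceed(y, ln_median, sigma_ln):
    """P(Y > y) when ln Y is normal with mean ln_median and standard deviation sigma_ln."""
    z = (math.log(y) - ln_median) / sigma_ln
    return 0.5 * math.erfc(z / math.sqrt(2.0))

def toy_gmpe(m, r_km):
    """HYPOTHETICAL attenuation relation: (ln median PGA in g, log standard deviation)."""
    return 0.9 * (m - 6.0) - 1.1 * math.log(r_km + 10.0) + 1.0, 0.6

def hazard_curve(levels_g, scenarios):
    """Annual exceedance rate at each level: sum of rate * P(PGA > level | m, r)."""
    return [sum(rate * prob_exceed(y, *toy_gmpe(m, r)) for m, r, rate in scenarios)
            for y in levels_g]

# (magnitude, distance in km, annual rate): hypothetical scenarios for one site
scenarios = [(6.0, 10.0, 0.02), (7.0, 25.0, 0.005), (7.8, 40.0, 0.002)]
levels = [0.05, 0.1, 0.2, 0.4, 0.8]
for y, lam in zip(levels, hazard_curve(levels, scenarios)):
    p50 = 1.0 - math.exp(-lam * 50.0)  # Poisson (time-independent) assumption
    print(f"PGA > {y:.2f} g: {lam:.2e} per year, {p50:.1%} in 50 years")
```

The single lognormal standard deviation carries all of the variability that a few-parameter attenuation relation cannot explain, which is the limitation the quoted SCEC study points to.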

Goal: Incorporate time dependence into the framework of seismic hazard analysis in two ways: (1) by using rupture dynamics and wave propagation in realistic geological structures to predict strong-motion seismograms (time histories) for anticipated earthquakes, and (2) by using fault-system dynamics to forecast the time-dependent perturbations to average earthquake probabilities.

Seismic hazard analysis employs many of the data sets described under the other major science issues. These databases should be available as part of the community modeling framework, since alternative earthquake source models cannot be compared rigorously unless they are based on common sets of data such as earthquake catalogs, GPS velocity vectors, and fault geometries and slip rates. Similar requirements exist for the strong-motion data sets and seismic velocity models that are used in the development and validation of ground-motion prediction models. These community data sets and models should be readily accessible on-line and have audit trails that allow them to be traced back to the original sources. A computational infrastructure is needed to allow real-time, dynamic access to current data that reside at community data centers. The challenge will be to construct databases and models that can represent uncertainties and accommodate a wide variety of potential uses, while still encouraging and incorporating creative science.

Few recordings of strong ground motions from earthquakes greater than M 7 are available for earthquake engineering research (5). Consequently, ground-motion models may not adequately represent the damage potential of large earthquakes. For example, the response spectrum, which is the most common representation of ground motion for engineering design, is not very sensitive to the duration of strong motion, which is significant for large earthquakes. Also, the response spectrum may not provide an adequate representation of the damage potential of long-period ground motions from large earthquakes. Near-fault displacement pulses can place very large deformation demands on flexible frame buildings. These buildings are sometimes designed to withstand deformation that extends as much as 10 times beyond the elastic limit. The design of these buildings for nonlinear response is critically dependent on the reliability of the ground-motion level used for design, especially at the longer periods that scale strongly with earthquake magnitude. In particular, there is an urgent need for an improved representation of the near-fault rupture directivity pulse for use in earthquake engineering, because the response spectrum does not provide an adequate representation of pulse-type motions.
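To make the point about duration concrete, the sketch below computes a pseudo-acceleration response spectrum by integrating a damped single-degree-of-freedom oscillator with Newmark's average-acceleration method. Each period contributes only its peak response, so the number of strong cycles in a record is not retained explicitly. The 5 percent damping, the example records, and the function names are assumptions.

```python
import numpy as np

def sdof_peak_displacement(accel, dt, period, damping=0.05, beta=0.25, gamma=0.5):
    """Peak relative displacement of a linear unit-mass oscillator under base acceleration.

    Newmark integration; beta=1/4, gamma=1/2 is the average-acceleration scheme,
    unconditionally stable for linear systems.
    """
    omega = 2.0 * np.pi / period
    k, c = omega**2, 2.0 * damping * omega
    p = -np.asarray(accel, dtype=float)          # effective load per unit mass
    u, v = 0.0, 0.0
    a = p[0] - c * v - k * u                     # initial acceleration from equilibrium
    k_hat = k + gamma * c / (beta * dt) + 1.0 / (beta * dt**2)
    peak = 0.0
    for p_next in p[1:]:
        rhs = (p_next
               + u / (beta * dt**2) + v / (beta * dt) + (0.5 / beta - 1.0) * a
               + c * (gamma * u / (beta * dt) + (gamma / beta - 1.0) * v
                      + dt * (0.5 * gamma / beta - 1.0) * a))
        u_next = rhs / k_hat
        a_next = (u_next - u) / (beta * dt**2) - v / (beta * dt) - (0.5 / beta - 1.0) * a
        v += dt * ((1.0 - gamma) * a + gamma * a_next)
        u, a = u_next, a_next
        peak = max(peak, abs(u))
    return peak

def pseudo_acceleration_spectrum(accel, dt, periods, damping=0.05):
    """Sa(T) = (2 pi / T)^2 * peak displacement: one number per period."""
    return np.array([(2.0 * np.pi / T) ** 2 * sdof_peak_displacement(accel, dt, T, damping)
                     for T in periods])

# Hypothetical records: a 0.3 g, 1 Hz sine lasting 2 s and one lasting 20 s
dt = 0.005
t = np.arange(0.0, 30.0, dt)
short = np.where(t < 2.0, 0.3 * np.sin(2 * np.pi * t), 0.0)
long_ = np.where(t < 20.0, 0.3 * np.sin(2 * np.pi * t), 0.0)
periods = [0.2, 0.5, 2.0]
print(pseudo_acceleration_spectrum(short, dt, periods))
print(pseudo_acceleration_spectrum(long_, dt, periods))
```

Comparing the two printed rows period by period shows how much, or how little, the spectral value changes when the number of cycles increases tenfold.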

Probabilistic seismic hazard analysis (PSHA) and waveform modeling are complementary approaches to seismic hazard analysis. For example, the composite PSHA hazard estimate can be disaggregated to find the most menacing scenarios for a given site (e.g., Figure 3.10), and ground-motion simulations can be performed to generate time histories for those events. The notion of full waveform modeling of scenario earthquakes is not new to seismic hazard analysis, of course, but opportunities exist for a greater level of interdisciplinary research, including collaboration with earthquake engineers, to develop a methodology appropriate for design and risk management applications. Over the long term, the most effective strategy for reducing the economic losses in earthquakes will be through the design of structures to withstand seismic shaking at specified levels of performance (see Section 3.5). Performance-based design is based on prediction of the deformations and failures of structural systems and their likelihood. For severe earthquake ground motions, such prediction requires representative time histories for dynamic analysis of these complex, highly nonlinear systems. Reliable procedures for simulating ground-motion time histories have to be developed, tested rigorously against the available strong-motion data, and then applied in earthquake engineering research and practice. Significant research is needed on how to use waveform modeling to characterize the probability distributions of ground-motion time histories (or parameters derived from those time histories) in a way that properly accounts for both aleatory and epistemic uncertainties.
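Disaggregation can be sketched as ranking each scenario's share of the total exceedance rate at a chosen ground-motion level; the top-ranked scenarios are then natural candidates for waveform simulation. The scenario labels, annual rates, and conditional exceedance probabilities below are hypothetical inputs, assumed to come from a prior hazard calculation.

```python
def disaggregate(scenarios):
    """Share of the total exceedance rate from each (name, annual_rate, p_exceed) scenario."""
    contributions = {name: rate * p for name, rate, p in scenarios}
    total = sum(contributions.values())
    return total, {name: c / total for name, c in contributions.items()}

# Hypothetical inputs at one ground-motion level for one site
example = [("M6.0 at 10 km", 0.02, 0.03), ("M7.0 at 25 km", 0.005, 0.2),
           ("M7.8 at 40 km", 0.002, 0.35)]
total, shares = disaggregate(example)
for name, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{name}: {share:.0%} of {total:.2e} exceedances per year")
```

A frequent moderate event can contribute less than a rarer large one because the large event is far more likely to exceed the chosen level; production tools bin contributions by magnitude, distance, and ground-motion deviation rather than by named scenario.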

Constructing the appropriate probability distributions by brute-force Monte Carlo techniques requires multiple runs for many potential earthquake sources and geologic structures, which increases the demands on resources for numerical simulation and for the analysis of simulation output. Efforts to analyze the problem this way have required more than 1000 accelerograms and nonlinear structural analyses, a computational burden that is unacceptable in standard practice. It will be more efficient and effective to combine seismic hazard analysis for a structure-specific ground-motion intensity measure (or a vector of such measures) with a limited number (on the order of 10) of nonlinear analyses to produce the desired product, which might be, for example, the annual probability that a building’s maximum interstory drift (relative displacement between adjacent floors) exceeds a specified level. Similar approaches should be feasible for other engineered facilities, such as earth dams, transportation networks, and urban infrastructure. This engineering interface can be implemented through multidisciplinary research centers such as the SCEC, the Pacific Earthquake Engineering Research Center, the Mid-America Earthquake Center, and the Multidisciplinary Center for Earthquake Engineering Research.
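The combination of a site hazard curve with about 10 nonlinear analyses can be sketched as follows, under several stated assumptions. A lognormal demand model, ln(drift) = ln(a) + b ln(Sa) with dispersion beta, is fitted to ten HYPOTHETICAL analysis results; the HYPOTHETICAL hazard curve for spectral acceleration Sa is approximated locally by a power law, lambda(Sa > x) = k0 x^(-k). The annual rate of exceeding a drift limit is then the integral of P(drift > d | Sa = x) against the hazard curve. For this particular combination of power-law hazard and lognormal demand the integral also has a commonly used closed form, which serves as a check. This is one illustrative formulation, not the specific methodology the text calls for, and all numbers are invented.

```python
import math

import numpy as np

# HYPOTHETICAL results of ten nonlinear response-history analyses:
# spectral acceleration at the building's fundamental period (g) and the
# resulting peak interstory drift ratio. Illustrative numbers only.
sa = np.array([0.15, 0.25, 0.35, 0.45, 0.55, 0.70, 0.85, 1.00, 1.20, 1.50])
drift = np.array([0.0031, 0.0048, 0.0072, 0.0085, 0.0110,
                  0.0128, 0.0165, 0.0180, 0.0230, 0.0270])

# Demand model: ln(drift) = ln(a) + b ln(Sa), lognormal scatter with dispersion beta
b, ln_a = np.polyfit(np.log(sa), np.log(drift), 1)
residuals = np.log(drift) - (ln_a + b * np.log(sa))
beta = float(np.std(residuals, ddof=2))  # two fitted parameters

# HYPOTHETICAL site hazard curve from PSHA, fitted locally as k0 * x**(-k)
k0, k = 4.0e-4, 3.0


def normal_sf(z):
    """Standard normal survival function, P(Z > z)."""
    return 0.5 * math.erfc(z / math.sqrt(2.0))


def drift_exceedance_rate(d, n=20001):
    """Annual rate of drift > d: integrate P(D > d | Sa = x) against |d lambda|."""
    ln_x = np.linspace(-6.0, 4.0, n)
    p_exceed = np.array([normal_sf((math.log(d) - ln_a - b * lx) / beta) for lx in ln_x])
    hazard_density = k * k0 * np.exp(-k * ln_x)  # |d lambda / d ln x|
    integrand = p_exceed * hazard_density
    # Trapezoidal rule over ln x
    return float(np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(ln_x)))


def drift_exceedance_closed_form(d):
    """Closed form, valid only for a power-law hazard curve and lognormal demand."""
    sa_d = (d / math.exp(ln_a)) ** (1.0 / b)  # Sa whose median drift equals d
    return k0 * sa_d ** (-k) * math.exp(0.5 * (k * beta / b) ** 2)


d_limit = 0.02
print(f"b = {b:.2f}, beta = {beta:.3f}")
print(f"numerical  : {drift_exceedance_rate(d_limit):.3e} per year")
print(f"closed form: {drift_exceedance_closed_form(d_limit):.3e} per year")
```

The numerical integral works with any tabulated hazard curve, whereas the closed form is convenient only when the power-law approximation holds over the range of Sa that controls the result.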

Substantial opportunities for collaboration with the earthquake engineering community are presented by the NSF Network for Earthquake Engineering Simulation (NEES), a nationwide, distributed collaboratory comprising a variety of engineering testing facilities and simulation capabilities, currently under construction. Ground-motion data and simulations are critical inputs to the NEES project, and an active collaboration between NEES and earthquake science will be required.

6.8 SEISMIC INFORMATION SYSTEMS

A major advance in seismic monitoring and ground-motion recording is the integration of high-gain regional seismic networks with strong-motion recording networks to form comprehensive seismic information systems. On regional scales, such information systems provide essential information for guiding the emergency response to earthquakes, especially in urban settings. Seismic data from a regional network can be processed immediately following an event and broadcast to users, which include emergency response agencies and responsible government officials, utility and transportation companies, and other commercial interests. The broadcast information includes traditional estimates of origin time, hypocenter location, and magnitude, as well as maps of ground motions pertinent to damage assessments. The need for such systems became clear after the 1994 Northridge, California, and 1995 Kobe, Japan, earthquakes. The lack of accurate information on ground shaking in Kobe, for example, delayed the emergency response and resulted in needless damage and casualties (Box 2.4).

Goal: Develop reliable seismic information systems capable of providing (1) time-critical information about earthquakes needed for rapid alert and assessment of impact, including strong-motion maps and damage estimates, and (2) early warning of impending strong ground motions and tsunamis outside the epicentral zones of major earthquakes.

The ANSS, with its planned nationwide network of on-line broadband seismographs and strong-motion sensors, can fulfill some of these objectives. It can provide time-critical information for immediate public safety and emergency response when the dangers of an earthquake, tsunami, or volcanic eruption arise. The delivery of this information will require a coordinated information infrastructure that feeds directly into emergency management systems through robust, secure connections and can take advantage of new communication pathways, including the Internet and the World Wide Web.

A fully implemented ANSS will provide the framework for upgrading regional seismic information systems to real-time warning systems that, in favorable situations, can automatically notify critical facilities of impending shaking tens of seconds before the seismic waves arrive at the site. The implementation of seismic warning systems will depend critically on automated broadcasting and decision making, and the construction and testing of such systems will require extensive collaborations between seismologists and end users. Although the operational responsibilities for seismic information and warning systems correctly reside with the USGS, the participation of regional network operators, whose missions now include public information and education, is essential.
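The "tens of seconds" of warning can be checked with a back-of-the-envelope calculation: an alert can be issued once the P wave reaches a nearby station and the data are processed, while the damaging S waves travel more slowly to distant sites. The Python sketch below assumes a uniform crust (P-wave speed 6.0 km/s, S-wave speed 3.5 km/s), a 10 km focal depth, a single station 10 km from the epicenter, and a fixed 5 s processing and communication latency; all of these values are illustrative assumptions. Operational systems instead combine data from several stations and must also estimate magnitude before issuing an alert.

```python
import math

V_P = 6.0  # km/s, assumed uniform crustal P-wave speed
V_S = 3.5  # km/s, assumed uniform crustal S-wave speed


def warning_time(site_dist_km, station_dist_km, depth_km=10.0, latency_s=5.0):
    """Seconds between alert issuance and S-wave arrival at a site.

    Distances are epicentral; a negative result means the S wave arrives
    before the alert (no warning). All parameters are illustrative.
    """
    hypo_site = math.hypot(site_dist_km, depth_km)
    hypo_station = math.hypot(station_dist_km, depth_km)
    # Alert time: P wave reaches the station, then a fixed processing latency
    alert_time = hypo_station / V_P + latency_s
    s_arrival = hypo_site / V_S
    return s_arrival - alert_time


for d in (20, 50, 100, 150, 200):
    t = warning_time(d, station_dist_km=10.0)
    print(f"site at {d:3d} km epicentral distance: {t:5.1f} s of warning")
```

Sites close to the epicenter receive little or no warning (a negative value), which is why the goal stated above targets early warning outside the epicentral zones of major earthquakes.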

6.9 PARTNERSHIPS FOR PUBLIC EDUCATION AND OUTREACH

In a rapidly expanding society, it is difficult to balance earthquake risk against the economic expenses and social strictures (regulations and enforcement) required to reduce risk. It is also difficult to prescribe what roles scientists should play in this intrinsically political process. Most observers support the proposition that scientists should increase their involvement in two critical areas: (1) implementing new knowledge gained from research to improve mitigation technologies and strategies, and (2) educating people about the nature of earthquake hazards and how to use earthquake information in seismic preparation and response. Experience from the NEHRP makes it clear that the efforts of individual scientists and research organizations can be amplified through partnerships with other technical groups, such as engineers and social scientists, as well as with the end users of earthquake science—emergency responders, disaster managers, insurance agencies, and public officials (see Section 3.6).

Goal: Establish effective partnerships between earthquake scientists and other communities to reduce earthquake risk through research implementation and public education.

Interdisciplinary collaborations are critical in establishing a firm technical basis for civic action and strengthening the resolve of public officials to improve mitigation strategies. Adapting probabilistic seismic hazard analysis to the needs of performance-based design is an example of where more cooperation between scientists and engineers could pay off in a big way. However, government agencies have not been particularly effective in sponsoring these types of interactions. For example, NSF manages research in earthquake science and earthquake engineering out of separate directorates. Moreover, while the USGS holds federal responsibility for the operational aspects of earthquake monitoring and hazard assessments, there is no federal equivalent for earthquake engineering. Owing to such organizational deficiencies, the most successful interactions have tended to involve the professional engineering societies and regional research centers.

Successful partnerships between those who conduct research and those who use research results usually require active and continuous collaborations, sustained through two-way communication. They profit from the involvement of people who have knowledge of implementation processes, have a tolerance for ambiguity, accept the high transaction costs associated with interdisciplinary activities, and are willing to overcome communication problems by developing a common language. An iterative process, with repeated opportunities for researchers and end users to educate each other, advances the concept of joint ownership. This in turn can lead to consensus and implementation of mutually identified priorities for earthquake hazard awareness, mitigation product development, and information dissemination. Workshops, short courses, and field excursions that bring scientists together with end users can be effective mechanisms for promoting two-way communication and stimulating new approaches to solving practical problems. These activities can be enhanced by using technical briefs on research results in a form ready for application by professionals.

When it comes to the general public, the interpretation of scientific research—reducing results to understandable, usable products that improve hazard awareness, public safety, and mitigation efforts—is an essential part of the educational process. User-friendly information, distributed widely in print and over the Internet, can advance public understanding of the severity of an earthquake threat and motivate vulnerable populations to take protective measures. People are obviously most attuned to the issues of earthquake risk just after widely felt earthquakes, and scientists must be ready to engage the public through the news and other media during these teachable moments. The ShakeMap and Did You Feel It? web sites (6) set up by the USGS have received a large number of hits immediately following recent tremors, indicating that people are willing to invest considerable time and effort to understand what is happening in the ground beneath their feet.

The primary educational role for many earthquake scientists is at the university level, where teaching and research go hand-in-hand in developing the careers of young scientists. A healthy national program in research-based graduate education is essential to attract bright students and overcome the manpower limitations that now constrain some fields in earthquake science. Two trends in undergraduate education that deserve more support are the incorporation of information technology and computer science in geophysics training and an emphasis on environmental processes and histories in geologic training. Other educational objectives include

- studies of earthquakes in the laboratory and field to enrich the educational experiences of students from all backgrounds and help them appreciate the excitement of basic and applied science;
- scientist-mentored summer internships for undergraduates, such as those funded through the NSF Research Experiences for Undergraduates program;
- work with museums to create novel and interactive learning environments;
- development of K-12 earthquake curricula in accordance with the National Science Education Standards and state-sponsored efforts, such as California’s Earthquake Loss Reduction Plan; these efforts should be guided by specific objectives to (1) structure these Earth science curricula in ways that appeal to students from underrepresented groups, and (2) achieve better meshing between K-12 and college-level Earth science education; and
- workshops for K-12 and college-level educators to demonstrate and encourage the use of educational resources, curricula, and field-based experiences, in accordance with established career development standards.

6.10 RESOURCE REQUIREMENTS

The programmatic support required for earthquake research during the next 10 years will outstrip the resources currently available through NEHRP and other federal programs. The ANSS and EarthScope initiatives, for example, would greatly improve the observational capabilities for earthquake science in the United States and would contribute substantially to the objectives outlined in this report. To bring ANSS into full operation will require capital investments of approximately $170 million, and its annual operational costs are estimated to be about $47 million (Box 6.1). In comparison, the congressional appropriation for the entire USGS component of the NEHRP budget was only $50 million in FY 2001. Deployment costs for the EarthScope instrumental systems are estimated to be $91.3 million for PBO, $245 million for InSAR, $64 million for USArray, and $17.4 million for SAFOD (Box 6.2). Data analysis and management activities will require an additional $15 million to $20 million per year during the first decade of EarthScope operations. The total FY 2001 geoscience expenditures by the NSF in support of NEHRP were only about $12 million. The research opportunities for characterizing the structure and history of active fault systems warrant a severalfold increase in the neotectonic and paleoseismic studies currently supported by the USGS and the NSF. Work in this area is limited by the small number of earthquake geologists engaged in this type of research, underlining the need for increasing efforts in geoscience education at both the undergraduate and the graduate levels.
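The budget comparisons in this paragraph reduce to simple arithmetic, reproduced below in Python using only the figures quoted in the text (millions of dollars; capital and deployment costs are one-time, whereas operations and data-analysis costs are annual).

```python
# Cost figures quoted in the text, in millions of US dollars
earthscope_deployment = {"PBO": 91.3, "InSAR": 245.0, "USArray": 64.0, "SAFOD": 17.4}
earthscope_data_per_year = (15.0, 20.0)  # low and high annual estimates
anss_capital = 170.0
anss_annual_operations = 47.0
usgs_nehrp_fy2001 = 50.0
nsf_geoscience_nehrp_fy2001 = 12.0

total_earthscope = sum(earthscope_deployment.values())
print(f"EarthScope deployment total: ${total_earthscope:.1f} million")
print(f"ANSS operations / FY 2001 USGS NEHRP appropriation: "
      f"{anss_annual_operations / usgs_nehrp_fy2001:.0%}")
print(f"ANSS capital cost in years of FY 2001 USGS NEHRP appropriation: "
      f"{anss_capital / usgs_nehrp_fy2001:.1f}")
low, high = (x / nsf_geoscience_nehrp_fy2001 for x in earthscope_data_per_year)
print(f"EarthScope data costs / FY 2001 NSF NEHRP geoscience: {low:.2f} to {high:.2f}")
```

The EarthScope deployment estimates sum to about $418 million, and ANSS operations alone would require roughly 94 percent of the FY 2001 USGS NEHRP appropriation.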

Research to understand earthquakes and their effects is central to continuing efforts to decrease earthquake risk. The technological and conceptual developments documented in this report have positioned the field of earthquake science for major advances. Investments made now will eventually pay off in terms of saved lives and reduced damage. These returns can be realized sooner by encouraging unconventional lines of research; coordinating scientific activities across disciplines and organizations, especially between scientists and engineers; and supporting international programs to investigate the global diversity of earthquake behavior. The transition to a systems-oriented science has important ramifications for the types of cooperative research activities and organizational structures that are most effective in addressing the basic and applied problems of earthquake research. In particular, there is a critical need to maintain and expand scientific centers where the disciplinary activities of many research organizations can be coordinated, evaluated, and synthesized into system-level models of regionalized earthquake behavior. In addition to their key role in basic earthquake science, such multidisciplinary centers have proven to be effective organizations for the dissemination of earthquake information and research results, the formulation of science-based strategies for loss reduction, and the education of the general public and nonscientist professionals concerned with disaster mitigation and loss reduction.

NOTES

1. The International GPS Service (IGS) is an International Service under the Federation of Astronomical and Geophysical Data Analysis Services. IGS has developed a worldwide system to put high-quality GPS data on-line within one day and data products within two weeks. The system consists of about 125 satellite tracking stations, 3 data centers, and 7 analysis centers. GPS data are used to generate GPS satellite ephemerides, Earth rotation parameters, IGS tracking station coordinates and velocities, and GPS satellite and IGS tracking station clock information. See <http://igscb.jpl.nasa.gov/>.

2. National Research Council, Basic Research Opportunities in Earth Science, National Academy Press, Washington, D.C., pp. 101-105, 2001.

3. For example, aseismic “silent earthquakes” have recently been observed on the thrust interface of subduction zones by geodetic networks in Japan and Cascadia, but the interplay between these events and major earthquakes in subduction zones is not understood; see Section 4.2.

4.