3

Integrating Observations, Models, and Users

As presented in Chapter 2, rapidly increasing sensing capacity is greatly expanding our ability to measure and monitor the conditions and processes that are critical to understanding and managing hydrologic systems. These technical advances are coming at a time when the demand for new and more accurate hydrologic information is rapidly increasing.

Models are typically used to extend the utility of individual measurements. They can be used to interpolate point data, integrate point and remotely sensed data, and estimate unmeasured quantities. Models can also be used to forecast future conditions, hindcast past conditions, and simulate hypothetical conditions, such as hydrologic states and fluxes under alternative future conditions. Models are also useful for designing measurement and monitoring systems, as they provide information on the value of specific measurements and can be used to determine the types, amount, and geospatial distribution of data that need to be collected. Real-time modeling of hydrologic systems offers an exciting opportunity for integrating data and models through “data assimilation,” an approach that is being used successfully in weather forecasting.

There is a wide variety of models used in the hydrologic community, for example, models for predicting water quality, flood forecasting, water supply, and so forth. They span a wide range of temporal and spatial scales (e.g., spatially distributed sub-diurnal hydrologic models, basin-averaged hydrologic models, and regional total maximum daily load (TMDL) based non-point pollution models). Thus, how observations are merged with model predictions, and the usefulness of using models to evaluate observational networks, will be model- and application-oriented, and is a focus of research. Overall, model development has far exceeded our ability to provide these models with field data. Rather than supplying a comprehensive review of the range of model applications that would find the integrated observations useful, this report presents a cross-section of models through the specific case studies in Chapter 4. The committee expects that integrated programs like the National Ecological Observatory Network (NEON) and the Water and Environmental Research Systems (WATERS) Network will offer additional research results showing the benefits of integrating observations and models.

Neither data nor models have value unless they are used, and they can be used only if they can be easily discovered, acquired, and understood in a timely and convenient manner by those who wish to apply them to practical issues such as flood forecasting, water availability modeling, and ecological flows, as inputs to decisionmaking. The communication and delivery of data and information (including their interpretation, quality, and uncertainties) to such end users is the backbone of a beneficial integrated system.

Therefore, efficient use by society of these new sources of data from land, air, and space requires concomitant improvements in the capture and archiving of these data, in the modeling of hydrologic systems, and in the communication of hydrologic data and information to researchers, water managers, and other users. However, achieving these goals requires a level of cyberinfrastructure not currently available or even designed. There are critical and extensive cyberinfrastructure needs if models are to routinely and efficiently take advantage of advances in measurements. It is critical that cyberinfrastructure evolve in concert with new developments in sensing capacity and hydrologic modeling.

Thus, this chapter first presents new opportunities in the merging of observations with models, followed by a discussion of the cyberinfrastructure needed to support these models and their application to societal needs.

APPROACHES FOR INTEGRATING OBSERVATIONS AND MODELS

Real-Time Environmental Observation and Forecasting Systems

The availability of tremendous computational power coupled with widespread communication connectivity has fueled the development of real-time environmental observation and forecasting systems. These systems offer the opportunity to couple real-time in-situ monitoring of physical processes with distribution networks that carry data to central processing sites. The processing sites run models of the physical processes, possibly in real time, to predict trends or outcomes using on-line data for model tuning and verification. The forecasts can then be passed back into the physical monitoring network to adapt the monitoring with respect to expected conditions (Steere et al., 2000). Both wireless networks of sensors and sensor webs will enhance this development.

This approach is being successfully used in weather forecasting. Using protocols developed around the Global Telecommunication System (GTS), the National Weather Service has created an integrated network that interconnects meteorological telecommunication centers with point-to-point and multi-point circuits. This allows national weather centers to obtain global data from in-situ networks and environmental satellites that are “assimilated” into their forecast models.

A similar vision can be advanced for prediction in hydrologic systems, from daily water-quality forecasts in major estuaries and near-shore regions to timing of snowmelt runoff for hydropower scheduling to flood forecasting to operation of irrigation drainage facilities to protect stream water quality. While selected federal and state agencies are starting to move in this direction (e.g., the National Oceanic and Atmospheric Administration’s [NOAA] Drought Information Center, and its products), most are not. Opportunities for advances across the water sector are enormous. While the models can be used to help “design” data collection systems through so-called observing system simulation experiments (OSSEs), the more fundamental challenge is developing and testing methods for merging the diverse data from a variety of sensors into existing models.

Data Assimilation

As an example of the potential for integrating real-time measurements into predictive tools and thence into decision-support systems, we review the historical context and some recent challenges in hydrologic data assimilation. The merging of multiscale observations and models when both observations and model predictions are uncertain is referred to as data assimilation. Data assimilation has been widely used in atmospheric and ocean sciences (e.g., Bennett, 1993; Evensen, 1994), and is central to operational weather forecasting, in which a variety of observed weather states from disparate sensors are used to update the model states, and subsequently forecasts. In hydrology, research on data assimilation procedures has a relatively long but sparse history, going back to the 1970s. That earlier work generally focused on simple linear or linearized models within a Kalman filtering framework (see Wood and Szollosi-Nagy, 1980) and was applied to problems such as flood forecasting.
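To make the idea of merging an uncertain prediction with an uncertain observation concrete, the following is a minimal sketch of a single scalar Kalman analysis step, the kind of linear update on which the early hydrologic work was built. It assumes the observation measures the model state directly; the function name, argument names, and soil moisture numbers are illustrative assumptions and are not drawn from any of the cited studies.

```python
def kalman_update(forecast, forecast_var, obs, obs_var):
    """Merge an uncertain model forecast with an uncertain observation.

    Scalar case, with the observation measuring the state directly.
    Returns the updated (analysis) state and its error variance.
    """
    if forecast_var < 0 or obs_var < 0 or forecast_var + obs_var == 0:
        raise ValueError("variances must be non-negative and not both zero")
    gain = forecast_var / (forecast_var + obs_var)  # Kalman gain, between 0 and 1
    analysis = forecast + gain * (obs - forecast)   # pull forecast toward the observation
    analysis_var = (1.0 - gain) * forecast_var      # updated uncertainty never grows
    return analysis, analysis_var


# Hypothetical volumetric soil moisture (m3/m3): the model predicts 0.30,
# a sensor reports 0.20, and the sensor is assumed to be the more certain source.
state, var = kalman_update(forecast=0.30, forecast_var=0.004,
                           obs=0.20, obs_var=0.001)
```

Because the observation variance in this example is smaller than the forecast variance, the updated state lands closer to the observation than to the forecast, and the updated variance is smaller than either input variance.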

Major limitations of the early work included difficulties in handling the highly nonlinear nature of surface hydrologic systems, especially during intense storms; the general absence of observations of key states, like soil moisture; and computational limitations. The application of more advanced techniques is a relatively new phenomenon in hydrology. The renewed interest in hydrologic data assimilation has been spurred in part by the increased availability of remote sensing and ground-based observations of hydrologic variables and/or variables (like soil moisture and surface temperature) that can be related to surface hydrologic processes, along with improved computational power (Houser et al., 1998; McLaughlin, 1995, 2002; Reichle et al., 2001a,b; Crow and Wood, 2003).

The basic objective of data assimilation is to better characterize the state of the hydrologic or environmental system, where information sources include process models, remote sensing data, and in-situ measurements. Until recently, research on data assimilation in land-surface hydrology was limited to a few one-dimensional, largely theoretical studies (e.g., Entekhabi et al., 1994; Milly, 1986), primarily due to the lack of sufficient spatially distributed hydrologic observations (McLaughlin, 1995). However, the feasibility of synthesizing distributed fields of surface states (e.g., soil moisture) by the novel application of four-dimensional data assimilation (4DDA) within the construct of a dynamic hydrologic model was only quite recently demonstrated (Houser et al., 1998). More recently, researchers in hydrology (e.g., Margulis et al., 2002; Crow and Wood, 2003; Wilker et al., 2006) have exploited both aircraft remote sensing observations from intensive field campaigns like SGP97 and SGP99 over the Southern Great Plains (SGP) domain and spaceborne observations.

Encouraging though these short-term demonstrations may be, the area they cover is still quite limited relative to the continental and global domains for which remote sensing data sets from the suite of National Aeronautics and Space Administration (NASA) Earth Observing System (EOS) satellites are available. At these scales, computational and algorithmic approaches need to be better developed to integrate multiscale observations and models (Zhou et al., 2006; McLaughlin et al., 2006). Therefore, applying data assimilation to better integrate observations (from sensor pods in embedded networks to operational sensor webs) and models (from local high-resolution process models to continental-scale, macroscale “earth-system” models) represents a significant scientific challenge, one that necessitates investment in research and demonstration projects by the National Science Foundation (NSF), NASA, and operational agencies to fully utilize the potential of the observational and modeling capabilities that currently exist.

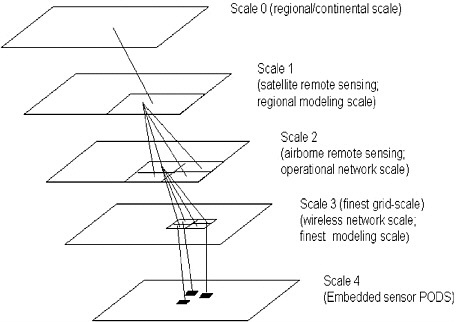

A way forward for research in merging observations and modeling, both of which exist at a variety of scales, is to apply a scale-recursive assimilation/smoothing procedure (Basseville, 1992; Daniel and Willsky, 1997; Luettgen and Willsky, 1995; Gorenburg et al., 2001). Figure 3-1 shows the multiscale problem schematically, where a given measurement (or model output, or sensor) may provide information at other scales. An in-situ sensor within an embedded network at scales smaller than the finest grid may provide spatial information for the larger scale as well as information regarding sub-grid variability.

Multiscale approaches can be used with any of the covariance-based data-assimilation algorithms, such as optimal interpolation, three-dimensional variational (3-D Var) approaches, and Kalman filtering and its derivatives such as Extended Kalman filtering or Ensemble Kalman filtering. Additionally, multiscale methods offer a feasible computational approach to one of the largest potential challenges of widespread embedded sensor networks: the production of large amounts of data that could be overwhelming if not properly managed. Hence, the embedded computational ability of embedded sensor networks, combined with multiscale merging with coarser-scale observations and data, can allow predictions across scales.

FIGURE 3-1 Portion of an inverted tree showing potential scales and measurements. Alternatively the scale 1 node has a parent (scale 0) and four children at scale 2.
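The tree structure in Figure 3-1, in which each node has one parent and four children, can be illustrated with a small sketch. The code below shows only the upward aggregation step, in which four fine-scale values yield a coarser-scale mean and a measure of sub-grid variability; it is not the full scale-recursive estimator of the cited works, which also propagates error covariances and includes a downward smoothing sweep. The function name and grid values are hypothetical.

```python
from statistics import mean, pvariance


def coarsen(grid):
    """Aggregate a square grid with even side length to the next coarser scale.

    Each parent cell receives the mean of its four children and the
    variance among them, a simple measure of sub-grid variability.
    """
    n = len(grid)
    if n < 2 or n % 2 or any(len(row) != n for row in grid):
        raise ValueError("grid must be square with an even side length")
    means, variances = [], []
    for i in range(0, n, 2):
        mean_row, var_row = [], []
        for j in range(0, n, 2):
            children = [grid[i][j], grid[i][j + 1],
                        grid[i + 1][j], grid[i + 1][j + 1]]
            mean_row.append(mean(children))
            var_row.append(pvariance(children))
        means.append(mean_row)
        variances.append(var_row)
    return means, variances


# Hypothetical scale 2 field (4 x 4), e.g., soil moisture from an embedded network.
fine = [[0.10, 0.20, 0.30, 0.30],
        [0.30, 0.40, 0.30, 0.30],
        [0.25, 0.25, 0.10, 0.10],
        [0.25, 0.25, 0.50, 0.50]]
scale1_mean, scale1_var = coarsen(fine)   # 2 x 2 grid at scale 1
scale0_mean, _ = coarsen(scale1_mean)     # single node at scale 0
```

Keeping the variance alongside the mean at each level preserves some of the fine-scale information that a coarse grid would otherwise discard, while reducing the volume of data passed up the tree.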

CYBERINFRASTRUCTURE: MANAGING THE DATA AND DELIVERING THE PRODUCTS

Cyberinfrastructure was defined broadly by an NSF Blue Ribbon Panel (2003) as:

The base technologies underlying cyberinfrastructure are the integrated electro-optical components of computation, storage, and communication that continue to advance in raw capacity at exponential rates. Above the cyberinfrastructure layer are software programs, services, instruments, data, information, knowledge, and social practices applicable to specific projects, disciplines, and communities of practice. Between these two layers is the cyberinfrastructure layer of enabling hardware, algorithms, software, communications, institutions, and personnel.

Cyberinfrastructure encompasses the coordination and deployment of information technologies that integrate observations and measurements, high-performance computing, management services, visualization services, and other advanced communication and collaboration services in a networked environment. Moreover, cyberinfrastructure necessarily includes the human resources necessary to support research and applications. In the remainder of this section, we discuss some of the current cyberinfrastructure challenges, present case studies illustrating promising developments in cyberinfrastructure, and conclude with a comprehensive vision for cyberinfrastructure that enables real-time environmental observation and forecasting.

Current Challenges

There are many cyberinfrastructure challenges, especially as they relate to communication technologies, associated with developing wireless networks and the integrated, seamless, and transparent information management systems that can deliver seismic, oceanographic, hydrological, ecological, and physical data from sensors to a variety of end users in real time. One example of a research project that has advanced the communication and real-time management of distributed, heterogeneous data streams from sensor networks in the San Diego region through the confluence of several cyberinfrastructure technologies is ROADNet (Real-time Observatories, Applications and Data management Networks; https://roadnet.ucsd.edu; Woodhouse and Hansen, 2003; Vernon et al., 2003). In particular, ROADNet employs (1) commercial software for data flow, buffering and distribution, data acquisition, and real-time data processing; (2) open-source solutions for data storage (i.e., Storage Resource Broker), integration, and analysis (i.e., Kepler Workflow System); and (3) web-services tools for rapid and transparent dissemination of results. ROADNet is used as the middleware and real-time data distribution system for the wireless, real-time sensor network at Santa Margarita Ecological Reserve in southern California (http://fs.sdsu.edu/kf/reserves/smer/).

Developing environmental observatories will increasingly need to focus on developing the technologies that can better enable users to use and understand data from sensor webs. It will be especially important to provide Internet access to integrated data collections along with visualization, data mining, analysis, and modeling capabilities. Heterogeneities in platforms, physical location and naming of resources, data formats and data models, supported programming interfaces, and query languages should be transparent to the user, and cyberinfrastructure will need to be able to adapt to new and changing user requirements for data and data products. Cyberinfrastructure tools to achieve this are the major challenge in the integration of hydrologic observations, and the use of these observations by both the research and applications communities.

Large-scale environmental observatories like EarthScope, the WATERS Network, NEON, and others will consist of hundreds to thousands of distributed sensors and instruments that must be managed in a scalable fashion. Cyberinfrastructure must enable scientists to remotely query and manage sensors and instruments, automatically analyze and visualize data streams, rapidly assimilate data and information, and integrate these results with other ground-based and space-based observations. With the advent of highly distributed embedded networked sensors, along with potential and real advances in biogeochemical sensors, the cyberinfrastructure will become even more important. Development of automated quality assurance and quality control procedures, adoption of comprehensive metadata standards, and the creation of data centers for curation and preservation are critical for the success of environmental observatories and the longevity and usability of their data holdings. Data are not useful unless they are high quality, well organized, well documented, and securely preserved yet readily accessible.

Achieving this will require a major paradigm shift from the way most hydrologic data are now handled by both the research and applications communities. Data must go directly from sensors to a data and information system, with quality assurance and quality control done within the system. Automatic quality control algorithms need to be built in, but the system must also allow efficient data processing by scientists responsible for the data. The paradigm shift is that data will not go to an investigator’s computer to be processed and later submitted to a data system. Rather, data will go directly into a data system, with the responsible investigator involved in immediate, timely data processing facilitated by the data system.
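As an illustration of what built-in automatic quality control might look like, the following is a minimal sketch that flags each incoming reading as it enters the data system rather than altering or discarding it, leaving the final judgment to the responsible scientist. The two checks (a plausible-range check and a step check against the last accepted reading), the flag names, and the thresholds are all assumptions chosen for illustration; an operational system would need more tests and would have to handle genuine abrupt changes, which this simple step check would flag repeatedly.

```python
def qc_flags(values, lower, upper, max_step):
    """Return one flag per reading: 'ok', 'range', 'spike', or 'missing'.

    Readings are flagged, never modified, so the raw record is preserved.
    """
    flags = []
    previous = None  # last reading that passed every check
    for v in values:
        if v is None:
            flags.append("missing")
        elif not lower <= v <= upper:
            flags.append("range")       # physically implausible value
        elif previous is not None and abs(v - previous) > max_step:
            flags.append("spike")       # jump too large since last good reading
        else:
            flags.append("ok")
            previous = v
    return flags


# Hypothetical river stage readings in meters, with a dropout, a sensor
# fault value, and a suspicious jump.
stage_m = [1.02, 1.03, None, 9.99, 1.04, 1.60, 1.05]
flags = qc_flags(stage_m, lower=0.0, upper=5.0, max_step=0.25)
```

Storing the flags next to the raw values, instead of filtering the values, keeps the data system's record complete and lets an investigator override an automatic decision later.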

Emerging environmental observatories will clearly depend upon cyberinfrastructure to reduce the significant costs in money and people required to manage distributed sensing resources. Importantly, incipient environmental observing systems may represent a significant market presence that can encourage creation and acceptance of industry standards for sensor compatibility, communication, and sensor metadata. For instance, standardized approaches that automate capture and encoding of sensor metadata can facilitate the process whereby sensor data are ingested, quality assured, transformed, analyzed, and converted into publishable information products (Michener, 2006). Automatic metadata encoding should also enable scientists to track data provenance throughout data processing, analysis, and subsequent integration with other data products.

Environmental observing systems require data center and web services approaches that enable different users to specify data and information that can be automatically streamed to their computer. Furthermore, it should be possible to specify alternative views to data from raw through processed (quality assured) through integrated and synthesized information (e.g., graphic summaries of model outputs) depending on scientific need and level of user experience.

A final challenge may be the most difficult of all. This report notes in various places that integrating measurements in multiple sciences (e.g., biology, hydrology, and meteorology) is challenging, and overcoming this challenge is nontrivial. For example, simply gathering the data in a web-based portal (see the applications examples below) does not solve the problem. Research is needed in how cross-disciplinary information is communicated and exchanged. For example, the discipline of spatial ontology attempts to find a rigorous set of terminology for the same phenomena and geographic features in different disciplines. A serious attempt will have to be made to build cross-disciplinary databases if truly integrated information systems are to be achieved. The suggestion made in NRC (2006c) was to place the various NSF environmental observatory programs under a parent entity, which would be responsible for cyberinfrastructure development, among other shared activities. This would be a key forum for such discussions.

Examples of Applications

A detailed development of cyberinfrastructure needs is beyond the scope of this report. Here, to illustrate some of the above-mentioned issues in context, we highlight three recent and relevant advances in environmental infrastructure: (1) a “cyberdashboard” for the current EarthScope USArray real-time infrastructure, (2) a community observations data model and corresponding suite of web services for hydrologic applications, and (3) a specific application to flash flood emergency management.

A “Cyberdashboard” for the EarthScope USArray Project

The EarthScope USArray project seeks to study the seismic tomography of the continental United States. As with other networks, significant human effort is required to configure, deploy, and monitor the thousands of sensors that are being constantly deployed and redeployed and to manage their real-time data streams. Previously, integrating new equipment into the existing sensor network required administrators to log in to multiple computers, edit configuration files, and run executables.

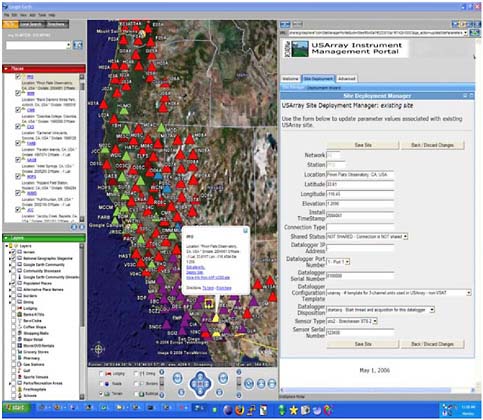

Cotofana and others (2006) recently applied a services-oriented architecture (SOA) approach to create a “cyberdashboard” for the current USArray real-time infrastructure (Figure 3-2). The architecture included (1) a layer of command-and-control web services, capturing and exposing resource management behaviors of the existing sensor network middleware used by USArray; (2) web-based management applications that orchestrate these services to automate common management tasks; and (3) geographical information system (GIS) capabilities provided by Google Earth™ to display the sites being configured and their surrounding environments.

FIGURE 3-2 USArray instrument management cyberdashboard, which provides an intuitive and comprehensive view into system status and operations, as well as control functions over various system resources such as data streams, instruments, data collections, and analysis and visualization tools. SOURCE: Reprinted, with permission, from T. Fountain, San Diego Supercomputer Center.

The cyberdashboard centralizes management activities into one consistent interface, decoupled from the actual systems hosting the underlying sensor network middleware. The management tools also provide a means of keeping track of multiple instrument sites, as they go through the various deployment steps, and automatically check the constraints, reducing input errors. Furthermore, these tools guide the administrators through the configuration of new sites, ensuring that all steps are properly completed in the right order. GIS tools for sensor networks facilitate a number of administration tasks, ranging from visual verification of site coordinates to the planning of new deployments given natural environmental conditions. The cyberdashboard combines all of these elements into an integrated user interface of monitors and controls for observing system management.

A Community Observations Data Model and Web Services for Hydrologic Applications

Efforts undertaken as part of the Consortium of Universities for the Advancement of Hydrologic Science, Inc.’s (CUAHSI) Hydrologic Information System are streamlining access to information from diverse data archives. The Hydrologic Information System (HIS; http://www.cuahsi.org/his) is one component of CUAHSI’s mission and comprises a geographically distributed network of hydrologic data sources and functions that are integrated using web services so that they function as a connected whole. The goal of HIS is to improve access to the Nation’s water information by integrating data sources, tools, and models that enable the synthesis, visualization, and evaluation of the behavior of hydrologic systems. This will be achieved through a distributed service-oriented system. Significant parts of HIS have been prototyped, and others are under development. Two contributions from HIS are highlighted here: (1) the community observations data model and (2) a suite of web services called WaterOneFlow.

The CUAHSI community observations data model is a standard relational database schema for the storage and sharing of point observations from a variety of sources both within a single study area or hydrologic observatory and across hydrologic observatories and regions. The observations data model is designed to store hydrologic observations and sufficient ancillary information about the data values to provide traceable heritage from raw measurements to usable information, allowing data values to be unambiguously interpreted and used. The observations data model is an atomic data model that represents each individual observation at a point as a single record in the values table. This structure provides maximum flexibility by exploiting the ability of relational database systems to query, select, and retrieve individual observations in support of diverse analyses.

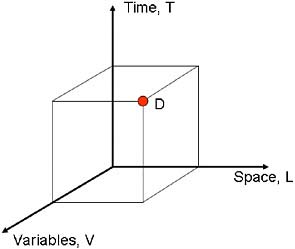

Although designed specifically with hydrologic observations in mind, this data model has a simple and general structure that can accommodate a wide range of other data from environmental observatories and observing networks, or even model output values. The fundamental basis for the data model is the data cube (Figure 3-3) where a particular observed data value (D) is located as a function of where in space it was observed (L), its time of observation (T), and what kind of variable it is (V). Other distinguishing attributes that describe observations serve to precisely quantify D, L, T, and V. The general structure of the data model, with comprehensive observation metadata, provides the foundation upon which HIS web services are built.
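The data cube idea can be sketched with a small relational example in which each row holds one observed value D indexed by location L, time T, and variable V. The table name, column names, site codes, and values below are hypothetical and are not the actual CUAHSI observations data model schema, which carries considerably more metadata.

```python
import sqlite3

# In-memory database holding one "atomic" record per observation.
conn = sqlite3.connect(":memory:")
conn.execute("""
    CREATE TABLE data_values (
        site_code     TEXT NOT NULL,   -- L: where the value was observed
        obs_time_utc  TEXT NOT NULL,   -- T: when it was observed (ISO 8601)
        variable_code TEXT NOT NULL,   -- V: what kind of variable it is
        data_value    REAL NOT NULL,   -- D: the observed value
        units         TEXT NOT NULL,
        PRIMARY KEY (site_code, obs_time_utc, variable_code)
    )
""")

# Hypothetical observations from two sites and two variables.
rows = [
    ("SITE_A", "2006-05-01T01:00:00Z", "streamflow", 13.1, "m3/s"),
    ("SITE_A", "2006-05-01T00:00:00Z", "streamflow", 12.5, "m3/s"),
    ("SITE_A", "2006-05-01T00:00:00Z", "water_temp", 9.8, "degC"),
    ("SITE_B", "2006-05-01T00:00:00Z", "streamflow", 3.2, "m3/s"),
]
conn.executemany("INSERT INTO data_values VALUES (?, ?, ?, ?, ?)", rows)


def time_series(connection, site_code, variable_code):
    """Slice the cube along T for a fixed location L and variable V."""
    cursor = connection.execute(
        "SELECT obs_time_utc, data_value FROM data_values "
        "WHERE site_code = ? AND variable_code = ? "
        "ORDER BY obs_time_utc",
        (site_code, variable_code),
    )
    return cursor.fetchall()


series = time_series(conn, "SITE_A", "streamflow")
```

Because every observation is its own record, the same table can be sliced in other ways (all variables at one site and time, or one variable across all sites) simply by changing the query.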

A critical step not yet designed is how to populate the data model with data at different levels of processing, from raw sensor data to higher-level geophysical products, and how to efficiently build quality assurance and quality control into the system. A review of on-line data systems in related fields will show many times over that when research data pass to an individual investigator’s desktop for processing en route to an archive, only selected data actually end up in that archive. Cyberinfrastructure needs to capture data automatically, and make it painless for investigators to do quality assurance and quality control.

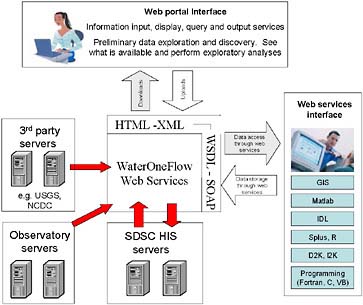

The second contribution from HIS is a suite of web services, called WaterOneFlow, that enable HIS users to access data, tools, and models residing on different servers. These web services can be invoked from a web browser interface or from various applications or programming environments commonly used by hydrologic scientists. An important function of web services is to facilitate analysis of national hydrologic data in the applications and analysis environment of a user’s choice, thereby minimizing the requirement for additional learning by users and providing greatest flexibility for the use of hydrologic data. This is achieved through the reliance on World Wide Web Consortium web services standards. WaterOneFlow presently accesses servers maintained by national water data centers (e.g., U.S. Geological Survey (USGS), Ameriflux, DayMet), and is supported by HIS servers at the San Diego Supercomputer Center (SDSC).

Figure 3-4 depicts the architecture of the WaterOneFlow system. This shows how web services serve to integrate and standardize the access to data from multiple sources such as USGS, National Climatic Data Center, observatory servers, and HIS servers that provide data in the format of the observations data model. The primary function of the web portal interface depicted at the top is data discovery and preliminary data exploration. However, once a user has found what data are available and wants to do analysis, it is generally more efficient to discontinue using the browser and access the data directly from the working environment of the user’s choice. This is depicted in the box on the right in Figure 3-4.

FIGURE 3-3 A measured value (D) is indexed by its spatial location (L), its time of measurement (T), and what kind of variable it is (V). SOURCE: Maidment (2002). © 2002 by ESRI Publishers.

FIGURE 3-4 WaterOneFlow web services architecture. SOURCE: Reprinted, with permission, D. Tarboton, Utah State University. Available on-line http://www.cuahsi.org/his/webservices.html.

Flash Flood Emergency Management through Web Servers

Flash floods have the dubious distinction of resulting in the highest average mortality (deaths/people affected) per event among natural disasters (e.g., Jonkman, 2005). Early warning systems are the means for producing localized and timely warnings in flash flood prone areas, which in many cases are remote, necessitating the use of remotely sensed data. An approach to flash flood warning that has gained acceptance and is used in operations is to produce estimates of the amount of precipitation of a given duration that is just enough to cause minor flooding in small streams over a large area. These flash flood guidance estimates are then compared to corresponding nowcasts or short-term forecasts of spatially distributed precipitation derived from remote and on-site sensor data and numerical weather prediction models to delineate areas with an imminent threat of flash flooding and to issue warnings and mobilize emergency management services (e.g., Sweeney, 1992). Flash flood guidance estimates are derived using GIS information and distributed hydrologic modeling.
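The comparison at the core of this approach can be sketched in a few lines: for each small basin, the nowcast or forecast precipitation is compared with the flash flood guidance value for the same duration, and basins where precipitation exceeds guidance are delineated as threatened. The function name, basin identifiers, and rainfall amounts below are hypothetical, and the sketch ignores the uncertainty in both quantities that an operational system would need to carry.

```python
def flash_flood_threat(guidance_mm, forecast_precip_mm):
    """Return {basin: excess_mm} for basins where precipitation exceeds guidance.

    Both inputs map a basin identifier to a depth in millimeters and are
    assumed to refer to the same accumulation duration.
    """
    threats = {}
    for basin, guidance in guidance_mm.items():
        precip = forecast_precip_mm.get(basin)
        if precip is None:
            continue  # no precipitation estimate available for this basin
        excess = precip - guidance
        if excess > 0:
            threats[basin] = excess
    return threats


# Hypothetical 3-hour flash flood guidance and forecast rainfall (mm).
guidance = {"basin_1": 40.0, "basin_2": 25.0, "basin_3": 60.0}
forecast = {"basin_1": 30.0, "basin_2": 45.0, "basin_3": 60.0}
threats = flash_flood_threat(guidance, forecast)
```

In this example only the second basin is flagged, because its forecast rainfall exceeds the amount estimated to be just enough to cause minor flooding; a basin where the two are equal is not flagged.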

Flash flood guidance is not a forecast quantity; rather, it is a diagnostic quantity (e.g., Carpenter et al., 1999; Georgakakos, 2006; Ntelekos et al., 2006). As such, its use for the development of watches and warnings requires assessment of a present or imminent flash flood threat or a possible flash flood threat in the near future (up to six hours of lead time). As flash floods are local phenomena developing rapidly, it is best to have these assessments made by local agencies that are familiar with the response of the local streams and have access to last-minute local information (be it a phone call from local residents or information from a local automated sensor). Thus, even though a regional center may be producing flash flood guidance estimates over the region with high resolution, effectively assimilating all available real-time data, appropriate means for communication and interaction with such estimates are necessary to allow local agency assessments pertaining to issuing flash flood watches and warnings (a flood is occurring or will occur imminently) and/or taking steps for emergency management. The World Wide Web offers a very effective primary means for communicating and interacting with spatially distributed flash flood guidance estimates and associated precipitation data through client-server arrangements between the regional center and the local forecast and emergency management agencies. The integration of observations, high-performance computing, data management and visualization services, and user collaboration services promises to contribute significantly toward the reduction of loss of life from flash floods.

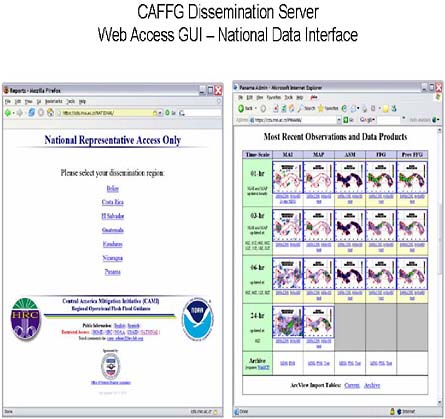

One of the first examples of the use of the web to enhance the effectiveness of producing warnings and emergency management for flash floods is the Central America Flash Flood Guidance System (CAFFG) that has served all seven countries in Central America since the summer of 2004. The system was developed by the Hydrologic Research Center in collaboration with NOAA and country meteorological and hydrologic services with funding from the U.S. Agency for International Development. A regional center in San José, Costa Rica, receives and ingests in real time all the remotely sensed (satellite rainfall) and onsite operational data for the region. The system currently relies on NOAA estimates of satellite rainfall based on geostationary satellites with real-time assimilation of surface rainfall observations from a variety of conventional, operational-automated sensors in various countries of Central America. Current data-delivery latencies of rainfall estimates from satellite microwave data prevent the use of such information for the development of timely warnings. Other real-time data from World Meteorological Organization-network reporting surface stations are also ingested and used by the models. Uncertainty in observations and models is transformed into uncertainty measures characterizing the nowcasts of flash flood occurrence.

The regional center in Costa Rica hosts the system computational servers used for the production of flash flood guidance with spatial resolution of about 200 km² on the basis of hydrologic and geomorphologic principles. A secure web site at the regional center produces a series of guidance products in real time (hourly) for in-country hydrologic and meteorological services (Figure 3-5). These in-country services can download GIS products locally for further processing, possibly update the downloaded information with local data, and generate flash flood warnings and watches. Emergency management agencies and other organizations (e.g., the United Nations World Food Programme) have access to the web products for planning their deployment activities in areas where high flash flood threat is estimated. Real-time products with coarser spatial resolution that have not undergone in-country meteorological and hydrologic services quality control are disseminated through the World Wide Web for public information (http://www.hrc-lab.org/right_nav_widgets/realtime_caffg/index.php). A multi-month training program was established for regional center staff and in-country users for effective use of the operational system. In addition to system operations and guidance, product interpretation, and use, training also covered methodologies for the recording of flash flood occurrence in small streams to enhance regional observational databases and for the validation of the warnings issued on the basis of CAFFG.

While initial validation results for the system are promising, it is but a first step toward the solution of the flash flood warning problem in ungauged areas. Fruitful areas for improvement are the reduction in the latency of microwave satellite rainfall data for use in the production of flash flood warnings; the deployment of low-cost, low-maintenance sensors for precipitation, temperature, and flow stage and discharge in remote areas, and the enhancement of the existing cyberinfrastructure to accommodate these; and the improvement and in some cases the development of regional communication networks relying on the World Wide Web for more effective cooperation and data management of the national meteorological and hydrological forecast and management agencies of the region.

FIGURE 3-5 The secure web site interface of CAFFG. Information and products, such as mean areal precipitation and flash flood guidance, are provided on a country basis for the seven countries of Central America. CAFFG system information may be found in Sperfslage et al. (2004). Reprinted, with permission, from Sperfslage et al. (2004). © 2004 by Hydrologic Research Center.

A Vision for Cyberinfrastructure

A future vision for cyberinfrastructure that addresses many of the challenges associated with developing real-time environmental observation and forecasting systems includes automated capture of well-documented data of known and consistent quality from both embedded network systems and air- and space-based platforms; active curation and secure storage of raw and derived data products; provision of algorithms, models, and forecasts that facilitate the integration of data across scales of space and time, as well as the generation of predictions and forecasts; and the timely dissemination of data and information in forms that can be readily discovered and used by scientists, educators, and the public. Realizing this vision will require significant advances in research and development. In particular, it will require new approaches for data quality assurance and quality control, automated encoding of metadata with data, and algorithms that enable integration of data across broad scales of space (from single-point sensors to regional-scale hyperspectral imagery) and time (from fractions of seconds, in the case of some sensors, to days or weeks, in the case of some remotely sensed data products).
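As a concrete illustration of what automated quality control with metadata carried alongside the data might look like, the following minimal sketch attaches descriptive fields and quality flags to each observation. It is a hypothetical example, not a community standard: the `Observation` fields, the flag names, and the two checks (a valid-range test and a step test against the last unflagged value) are assumptions chosen for brevity.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass
class Observation:
    """One sensor reading bundled with its metadata (hypothetical schema)."""
    sensor_id: str
    variable: str
    units: str
    time: datetime
    value: float
    qc_flags: list = field(default_factory=list)


def apply_qc(series, valid_min, valid_max, max_step):
    """Append QC flags to each observation in place and return the series.

    Limitation of this sketch: a genuine abrupt change larger than
    max_step would be flagged as a spike until values return to within
    max_step of the last accepted reading.
    """
    last_good = None
    for obs in series:
        flagged = False
        if not (valid_min <= obs.value <= valid_max):
            obs.qc_flags.append("out_of_range")
            flagged = True
        elif last_good is not None and abs(obs.value - last_good.value) > max_step:
            obs.qc_flags.append("spike")
            flagged = True
        if not flagged:
            last_good = obs  # only unflagged readings serve as the reference
    return series


# Hypothetical air-temperature record at one-minute spacing.
start = datetime(2007, 1, 1, tzinfo=timezone.utc)
readings = [5.0, 5.2, 50.0, 5.1, -3.0]
series = [
    Observation("T-001", "air_temperature", "degC",
                start + timedelta(minutes=i), v)
    for i, v in enumerate(readings)
]
apply_qc(series, valid_min=0.0, valid_max=100.0, max_step=10.0)
flags = [obs.qc_flags for obs in series]
```

Keeping the flags on the record itself, rather than in a separate log, means that any downstream model or portal receiving the observation also receives its quality history.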

We propose several guiding principles that would facilitate the creation of the cyberinfrastructure needed to support environmental observation and forecasting. First, open architecture solutions are central to enabling the rapid adoption of new hardware and software technologies. Second, nonproprietary and, ideally, open-source software solutions (e.g., middleware, metadata management protocols) promote the modularity, extensibility, scalability, and security that are needed for observation and forecasting. Third, development and adoption of community standards (e.g., data transport, quality assurance/quality control, metadata specifications, interface operations) will more easily support system interoperability. Fourth, open access to data and information and the provision of customizable portals are key to meeting the needs of scientists, educators, and the public for timely access to data, information, and forecasts.

In this chapter we have discussed new opportunities for incorporating modeling and data communication into integrated hydrologic measurement systems, and summarized the elements that might go into such a system. In the next chapter we present summaries of case studies developed by the committee based on existing and proposed measurement systems. The purpose of these examples is to provide context for the ideas discussed above and to provide insight into the requirements and challenges associated with the development of integrated hydrologic measurement systems.