5

Coherent Assessment Systems

The workshop presentations and discussions highlighted the disadvantages of many current approaches to assessment and the desirability of a coherent system in which multiple approaches are used to collect formative and summative information about student learning to meet the needs of students, teachers, administrators, policy makers, and the public. As Diana Pullin noted, the approach mandated by the No Child Left Behind (NCLB) Act has demonstrated that assessments can generate data that people will attend to, but the result is not necessarily any marked improvement in teaching or learning. Federal and state officials have placed tremendous demands on assessments, during a period when funds to support their development have been shrinking. Tests have been stretched to cover too many purposes, with results that are widely viewed as unsatisfactory.

The question to ask now, Pullin observed, is how states might move from present practices to innovative learning-based assessments embedded in coherent systems that foster improved learning and more appropriate accountability. With that goal in mind, Joan Herman provided an examination of coherent assessment systems and the key features they should have to serve the dual purposes of supporting student learning and providing accountability. The second part of this chapter summarizes subsequent discussions in which policy makers, researchers, and practitioners shared their perspectives on the challenges of establishing a coherent assessment system.

CHARACTERISTICS OF A COHERENT SYSTEM

It is a propitious time for a move toward coherent assessment systems, Herman observed. The Race to the Top funding, the opportunity for states to sign on to the common core learning standards, and converging confidence in the potential of new kinds of assessments—particularly formative assessments—combine to produce an important window of opportunity. Fortunately, Herman said, there is a strong body of research on which to base new approaches.1

Herman delineated key elements of a coherent assessment system: that it is a system of assessments, not a single assessment; that it is coherent with specified learning goals; and that its components collectively support multiple uses in a valid manner.

On the value of a system, as opposed to a single assessment, Herman noted that most tests used for accountability purposes today target only a limited subset of the learning goals that school systems set for their students. “If we want to know whether kids can write,” Herman observed, “we need something more than an editing test with multiple-choice questions. If we want to know whether kids can innovate, engage in inquiry, or collaborate with others, again, multiple-choice or short-answer tests are not giving the depth of information that we really need.”

This is critically important not only because tests communicate what it is important for students to learn, as was emphasized throughout both workshops, but also because their results will only support sound decision making if they provide a rich picture of what students know and are able to do. Thus, by moving from an exclusive reliance on multiple-choice and short-answer items to systems that also include performance and other kinds of assessments, states can better serve accountability purposes: they will be able to answer questions about important capacities not well addressed by current tests, such as depth of thinking and reasoning, the ability to apply knowledge and solve problems, the ability to communicate and collaborate, and the ability to master new technology. At the same time, the kinds of measures that teachers need on a daily basis in their classrooms are quite different from the annual or through-course kinds of measures that policy makers use to monitor progress on a more macro level.

Assessment systems are able to provide that rich picture if they are coherent with established goals for learning. This is not a controversial idea, Herman observed, but she distinguished four types of coherence. The most fundamental type of coherence is that among models of how students learn, the design of assessments, and the interpretation of their results, as illustrated in Figure 5-1. In Herman’s view, the fundamental coherence most states have now is orga-

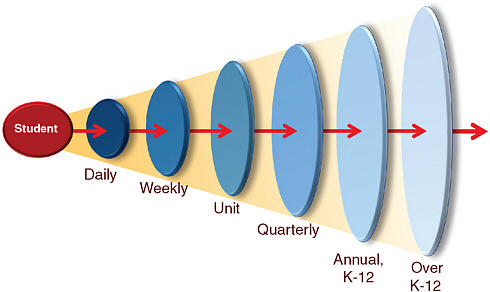

FIGURE 5-1 Developmental coherence learning goals.

SOURCE: From Joan Herman, Next Generation Assessment Systems: Toward Coherence and Utility. Used with permission from The National Center for Research on Evaluation, Standards, and Student Testing (CRESST) and by The Regents of the University of California supported under the Institute of Education Science (IES) U.S. Department of Education. Copyright © 2010.

nized around the material covered in annual statewide tests—not necessarily key learning goals.

The second kind of coherence, horizontal coherence, expands the alignment to assessment and instruction. This is generally assumed to be part of state accountability systems, Herman noted, but she questioned whether states really pose the question of whether students who have been taught the material identified in learning goals actually do better on annual statewide tests than students who have not. Third, developmental coherence describes instruction that builds student learning over time, progressing through daily, weekly, quarterly, and annual goals to deeper understanding. As Figure 5-1 illustrates, this sort of coherence extends across grades K-12, and tests ought to assess, in developmentally appropriate ways, the key competencies that students need to build along the way to college readiness.

The fourth kind of coherence is vertical coherence. The users of assessments at each level—the classroom, school, district, and state—have different information needs, but the means of producing that information can and should be aligned with learning goals. Thus, the design of very different types of assessments—from activities embedded in classroom instruction for the purpose of providing immediate information about short-term learning goals to annual instruments designed to provide broad information about the effectiveness of a curriculum or the status of student subgroups—needs to reflect fundamental coherence.

Herman provided a matrix of assessment purposes not unlike the one presented earlier in the workshop series (see Chapter 1), but designed to illustrate the ways in which varying purposes can be met by a system that incorporates a range of assessment instruments linked by shared learning goals: see Table 5-1.

In developing a complex system to meet these multiple purposes, Herman explained, it is important to stay focused on fundamental validity—to ask to what extent the system, both in its individual measures and collectively, serves its intended purposes. When a single assessment is used to serve all purposes, it ends up serving none very well. Thus, it is important to identify all of the consequences of an assessment. Validity is not simply a matter of psychometric quality, Herman pointed out, and she identified some of the most important criteria for different sorts of assessments that should be incorporated into analysis of the validity of systems: see Table 5-2.

Assessments can help support improvements in many aspects of education, but they are not sufficient in themselves to change practice: Herman echoed others’ comments about the importance of coherence with other aspects of the system (teacher preparation and professional development, curriculum, etc.). She concluded with the acknowledgment that designing such a coherent system is an extremely complex challenge: “this is not just a matter of going into a room and figuring out what to do.” Existing technology and methodologies will need to be expanded. “That will take time,” she observed, “and there is no one right answer.” In her view, the best path forward is to explore multiple alternatives.

PERSPECTIVES ON IMPLEMENTATION

Several discussants were asked to comment on what they viewed as the most important considerations for implementing a new approach to assessment and accountability.

The Policy Process

TABLE 5-1 Assessment Purposes*

| Assessment | Assessment Type | Primary Users | Use—Based on Race to the Top |
|---|---|---|---|
| Annual | On-demand annual | | |
| Through Course Exams | End-of-unit Mid-term Semester End-of-course | | |
| School/District | Benchmark | | |
| Classroom | Formative Curriculum-embedded Student work Discourse Discussion | | |

*Created based on a review of the expectations in the Race to the Top Assessment Program (2010). See Comprehensive Assessment System grant, http://www2.ed.gov/programs/racetothetopassessment/index.html [accessed September 2010].

SOURCE: From Joan Herman, Next Generation Assessment Systems: Toward Coherence and Utility. Used with permission from the National Center for Research on Evaluation, Standards, and Student Testing (CRESST) and by the Regents of the University of California supported under the Institute of Education Science, U.S. Department of Education. Copyright © 2010.

Roy Romer, a former school superintendent and governor of Colorado, focused on the process that states are going through to forge new approaches to assessment and on the challenge of communicating new goals and strategies to the people who will need to accept them if they are to be successful. First, he pointed out that the process that is unfolding—in which states are grouping themselves into consortia to apply for federal grants that will support the development of new kinds of assessment systems—is one that, by design, has no leader.

This approach has an advantage, in his view, because a key strategy for opposing a political change is to identify a figure to represent the change and then to associate that figure with negative images in order to marshal opposition to the change. With multiple states participating in three different consortia, this sort of opposition will be difficult.2 Yet this approach also means that the result will be whatever some group of entities can agree to.

TABLE 5-2 Validity Criteria for Assessment Systems

| Purpose | Criteria |
|---|---|
| Accountability Assessments | |
| Monitoring/Supervision Assessments | |
| Formative Assessments | |

*The phrase “instructionally tractable” is used to mean results that provide information that teachers can use in planning next steps in instruction, teaching and learning.

SOURCE: From Joan Herman, Next Generation Assessment Systems: Toward Coherence and Utility. Used with permission from The National Center for Research on Evaluation, Standards, and Student Testing (CRESST) and by The Regents of the University of California supported under the Institute of Education Science (IES) U.S. Department of Education. Copyright © 2010.

Romer suggested that at some point that process might need some structure. For him, some issues to consider might include agreement on what will be shared among the states and what will not. In order for assessment to be comparable, there must be a significant common component, but states will need the flexibility to vary some portion for their own purposes. Should the ratio be 70 percent comparable and 30 percent flexible or something else? How ought it to be fixed? Will multiple contractors be involved in developing and administering components of a consortium’s assessment system, and, if so, how will their work be coordinated? Will there be a single, shared digital platform for groups of states—or even for the nation?

Romer also outlined the characteristics he views as most important for new assessment systems. They need to be affordable over the long term. They need to be internationally benchmarked and also benchmarked within the states, which means that state tests must be substantially comparable to one another. Perhaps more challenging will be to convey to parents and the public why the tests that are given to students at each grade are critical—that at each level they set the standards for what students need to know and be able to do to stay on track for success in college and the workplace. Considering the fast pace at which technology and the global economy are changing, this is a critical responsibility for public education, but one that may not yet be fully appreciated. A key question to ask, then, is “is it too expensive to ask the right questions—the ones that really help make sure the student is prepared for the next step?” Romer used the example of flight training to illustrate why the critical job of testing is to show whether a student has really learned what he or she needs to know and be able to do: tests used to certify that pilots are ready to fly have to be able to identify those who are not ready, regardless of the cost, because if they do not, they are useless.

Romer also noted that it is both a creative and a challenging time for public education. Politics have “allowed us to talk nationally about standards and assessments, but not about curriculum,” he pointed out. The risk in that is that states will focus so much on tests and standards that they will overlook curriculum, teacher training, and other key elements of an aligned system. Communicating effectively about this will be key to success, he stressed. In the current political climate, “people are deeply worried about the financial future of their families.” They understand that education will be key to their children’s futures. It is very important “to tell them the truth about the nation’s educational health—and to identify a path” for progress.

Curriculum-Embedded Assessments

Assessment “of, as, and for learning” was the theme of Linda Darling-Hammond’s presentation. She agreed with Romer that curriculum and assessment are intertwined, and that students “are not entering a multiple-choice world.” Like many others at the two workshops, she emphasized that readiness for college and 21st century careers requires not just basic skills and factual knowledge of the sort most frequently covered on standardized tests, but also the ability to find, evaluate, synthesize, and use knowledge. In order to be able to learn in changing contexts and to frame and solve nonroutine problems, students and workers will need knowledge and skills that are transferable, as well as skills in thinking, problem solving, design, teamwork, and communication.

This conception of learning supported a significant change in the approach to assessment in Hong Kong, Darling-Hammond explained, and it was the context in which the phrase “assessment of, as, and for learning” was coined. She noted that education reform initiatives in many high-achieving countries have focused on higher-order thinking skills. More specifically, they have focused on assessing the kinds of performance they want students to develop. Many countries, she observed, do not use multiple-choice assessments at all, and those that do balance them with other measures that capture complex knowledge and skills. When assessment is developed to be “of and for learning,” the tasks themselves both convey what students should be learning and provide an opportunity to examine students’ understanding. In most high-achieving nations, she added, teachers are integrally involved in developing and scoring on-demand and curriculum-embedded performance measures, which are combined to yield a total score on the examination. These experiences give them the opportunity to engage closely with the standards and the assessments and to consider carefully what good quality work looks like.

She summarized the key elements of this approach to assessment:

- an integrated system of curriculum and assessment that provides tests that are worth “teaching to” because they focus on the content and skills addressed by high-quality instruction;
- teachers’ involvement in developing, scoring, and using the results of assessments, which improves their understanding of the curriculum and the standards and thus helps them improve their instruction; and
- assessments that evaluate the most valuable kinds of student work and reasoning skills and thus provide valuable information to both teacher and students.

Changes in Hong Kong demonstrate the effectiveness of this approach, she explained. Its education ministry has begun to replace traditional examinations with school-based tasks delivered in a variety of ways. These include oral presentations, portfolios or samples of work, often done to particular specifications, field work investigations, lab work, design projects, and the like—many are both delivered and scored by computer. She argued that these tasks are more valid assessments because they include outcomes that cannot be readily assessed using a one-time examination format. Other countries have similar systems, though some stress the standardization of the task more than others. One example is the General Certificate of Secondary Education offered to students ages 14-16 in England, Wales, and Northern Ireland, which has a range of assessments embedded in the curriculum as well as an end-of-course examination component.3 As part of the literacy assessment, for example, students are asked to produce responses to different kinds of texts, to do certain kinds of imaginative writing, speaking, and listening activities, and to do information writing. Another example is Singapore’s A-level examinations, which also include a combination of externally set examinations, long-term projects conducted to particular specifications, and school-based practical assessments.4 Among the tasks required for the science examination is to design and conduct a scientific investigation and prepare a lengthy research paper documenting the work.

Curriculum-embedded tasks, Darling-Hammond explained, can more easily address central concepts and modes of inquiry than stand-alone assessments can. They can also more easily provide both summative and formative information, and they can allow for more detailed investigation of skills and knowledge also assessed in other components of an assessment system, for other purposes. She suggested that the United States is well behind other countries in this regard, though she noted that Connecticut has included in its assessment a science task for 9th- and 10th-grade students. Although it is not used for high-stakes purposes, it is designed to measure inquiry skills using an extended task that is structured and standardized and conducted in the classroom over an extended period of time.5 The end-of-course exam then revisits some of the concepts addressed in the curriculum-based task.

Darling-Hammond closed with her recommendations to states considering new assessment approaches that include curriculum-based components. They should

- develop systems for auditing and guiding teacher-scored work;
- provide time and training for teachers and school leaders;
- use technology to support teachers’ participation in scoring and their training, as well as assessment delivery; and
- evaluate costs and manage development to ensure that the assessment system is feasible and sustainable.

Computer-Based Testing

Tony Alpert described the Oregon Assessment of Knowledge and Skills (OAKS), which is a program that has stressed the involvement of teachers: they write all of the state’s assessment items and also score all of the assessments of writing. The system is now delivered almost exclusively online, he explained, though it took the state about 5 years to reach that level. Students have up to three opportunities during the school year to take the assessments. They receive their results immediately, and within 15 minutes of testing, teachers have access to those results, as well as aggregate results at the classroom, school, district, and state level. Education service districts, entities that support school districts with many logistical challenges, provide technical support for online testing.

3 See http://www.direct.gov.uk/en/EducationAndLearning/QualificationsExplained/DG_10039024 [accessed June 2010].

4 See http://www.seab.gov.sg/ [accessed June 2010].

5 The state also uses curriculum-embedded tasks at other levels; see http://www.sde.ct.gov/sde/cwp/view.asp?a=2618&q=320890&sdenav_gid=1757 [accessed June 2010].

Oregon had several reasons for moving to computer-based testing, Alpert explained. First, education officials concluded that investing in computer infrastructure rather than disposable paper-based assessments and shipping would be a better use of resources. Districts were expected to save money, and even if there were no savings, investment would be focused on infrastructure with lasting value. Computer-based testing would also allow the state to provide adaptive testing and instant results and to provide certain kinds of accommodations (for special needs students) not possible with paper-based testing. It has been difficult to make comparisons between the online system and its paper-based predecessor, in part because current costs for the former system are not available. Nevertheless, human scoring has been reduced by 45 percent, and many of the costs are not related to quantity so they do not rise with the number of students or administrations.

Online delivery also allowed the state to implement adaptive testing that could provide summative information as well as formative information. Specifically, Alpert noted, such testing allows them to better support both high- and low-performing students and to better track all students’ incremental progress. He suggested that students may be more highly motivated to perform well when the bulk of the items they see are at a difficulty level that matches their ability and when opportunities for cheating—as well as motivation for teachers to focus on unconstructive test preparation—are reduced.
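The idea of matching item difficulty to student ability can be made concrete with a small sketch. The workshop did not describe Oregon's actual item-selection algorithm, so the code below is only an illustration under stated assumptions: it assumes a Rasch (one-parameter logistic) model, and the item bank, item names, and difficulty values are hypothetical. It picks the unused item that is most informative at the current ability estimate and shows how the standard error of that estimate could be computed.

```python
import math


def prob_correct(theta, difficulty):
    """Rasch-model probability of a correct response (assumed model)."""
    return 1.0 / (1.0 + math.exp(-(theta - difficulty)))


def item_information(theta, difficulty):
    """Fisher information an item contributes at ability theta."""
    p = prob_correct(theta, difficulty)
    return p * (1.0 - p)


def pick_next_item(theta, item_bank, administered):
    """Return the id of the unused item that is most informative at theta."""
    candidates = {k: b for k, b in item_bank.items() if k not in administered}
    return max(candidates, key=lambda k: item_information(theta, candidates[k]))


def standard_error(theta, item_bank, administered):
    """Standard error of the ability estimate given the items seen so far."""
    total = sum(item_information(theta, item_bank[k]) for k in administered)
    return 1.0 / math.sqrt(total)


# Hypothetical item bank: item id -> difficulty on the same scale as ability.
item_bank = {"easy": -2.0, "medium": 0.0, "hard": 2.0}

# A student currently estimated at high ability is routed to the hard item.
next_item = pick_next_item(theta=1.8, item_bank=item_bank, administered=set())
```

Under this model an item is most informative when its difficulty is close to the student's estimated ability, which is one way to read the point that students mostly see items at their own level. Each additional administered item adds information, so the standard error shrinks as testing proceeds.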

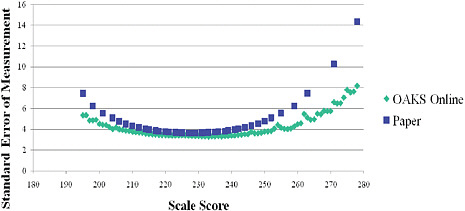

Alpert noted that adaptive testing also improves test validity by making it possible to measure the entire breadth of content standards every year, since testing is not limited to what can be contained in a finite number of booklets. Field testing can be done more efficiently because students, rather than schools, are the unit of analysis. This feature reduces potential errors in linking, Alpert explained, and also makes it easier to detect problematic items. Tests could be adapted on a variety of dimensions, he added, not just student ability and content standards. Oregon plans to explore additional possibilities, such as adapting based on the standard error of the measure or on student motivation. Alpert noted that the standard error of measurement has been lower for the computer-based testing than for the state’s paper-based testing: see Figure 5-2.

Oregon officials also saw important advantages to allowing students multiple opportunities to take the tests, Alpert explained. When students can demonstrate their progress over time and have multiple opportunities to learn from and act

FIGURE 5-2 Standard error of measurement by scale score and assessment mode, grade 8 mathematics.

NOTE: OAKS = Oregon Assessment of Knowledge and Skills.

SOURCE: Alpert and Slater (2010, slide #7). Reprinted with permission from Dr. Tony Alpert and Dr. Stephen Slater of Oregon Department of Education.

on information about their progress, the officials reasoned, both they and their teachers would be more likely to use test results to improve achievement. The state officials also expected that the test results would support more valid interpretations because there would be less variance unrelated to the constructs being measured. Because the span of time within which testing can occur each year is long, the tests can more easily meet a variety of purposes and provide results when they are needed. Districts and schools can tailor testing schedules according to the availability of resources (e.g., computers, bandwidth) and can also integrate timelines for accountability reporting with the pace of instruction.

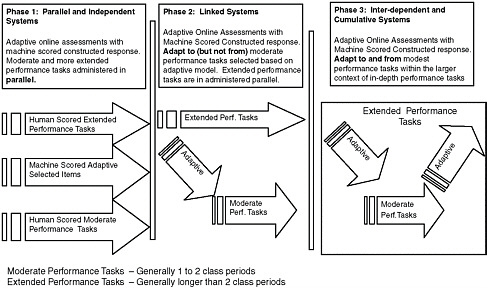

In Alpert’s view, Oregon’s assessment is well aligned to the breadth of the state’s content standards (and thus horizontally coherent), but its heavy reliance on multiple-choice items means that there are limits to its capacity to measure them in depth. The system currently includes some performance assessment, but it does not count those tasks for federal accountability purposes. Teachers’ involvement in writing and scoring assessment items, as well as professional development activities focused on the use of data to support student achievement, contribute to vertical coherence. Opportunities for multiple assessments of incremental progress contribute to developmental coherence. Still, the system does not yet fit the model of coherence described by Herman and others; he offered a roadmap for how it could move closer to that model: see Figure 5-3.

Fully implementing this model in Oregon would be a challenge, Alpert acknowledged, and would require

FIGURE 5-3 Roadmap for horizontal coherence of assessments with adaptive item selection.

SOURCE: Reprinted with permission from Dr. Tony Alpert and Dr. Stephen Slater of Oregon Department of Education.

-

flexibility,

-

professional development to support the use and interpretation of student data,

-

item banks large enough to support assessment of a range of student abilities across the breadth of the content and cognitive complexity of a subject,

-

robust software that can support efficient computer-based performance assessments,

-

robust approach to measuring student performance, and

-

adequate computers and supporting infrastructure.

BOARD EXAMINATION SYSTEMS

The goals and strategies characteristic of coherent systems are in many ways not new, Marc Tucker observed. Several centuries ago, Oxford and Cambridge Universities replaced their system of interviewing candidates for admission with a system in which they provided the schools from which most candidates came with clear descriptions of the preparation they would need and used an exam to confirm that the candidates were well prepared. Their goal, Tucker explained, was to develop exams that came as close as possible to eliciting the sorts of thinking and work production they would ask of matriculating students. When new universities were later founded, beginning in the 1830s, the standards became more formally established to ensure that all were holding students to the same standards.

These exams, in Tucker’s view, were measuring the same sorts of higher-order thinking skills that are described today as 21st century skills. The schools that were preparing British students for university-level study were asked to teach them to think critically, to analyze and synthesize information from a wide variety of sources, and to produce useful products from their analysis, as well as to be able both to lead and to cooperate in collaborative work. “It is the whole list except learning how to twitter,” he joked, and the only difference is that today the goal almost everywhere is to provide all students with these skills, not just those who are being groomed as future leaders.

In the United States, however, a very different testing tradition emerged from the work of psychiatrists and other pioneers of scientific psychological and educational measurement. U.S. public schools had extremely diverse curricula, and thus as the new principles of measurement began to be applied to education, the natural course was to develop tests that were curriculum neutral—the exact opposite of the approach that had developed in England. The new standardized tests that began to emerge in the 1940s and 1950s were inexpensive and efficient, Tucker explained, but they did not benefit from the British perspective. “You will never find out whether students can write a 10-page history research paper … by administering a computer-scored multiple-choice test … [nor from such a test could you determine] whether they can read two newspaper articles on the same subject; compare them and figure out what is fact, what is fiction; analyze the differences; and come up with a considered view of their own,” he said.

This is critical, in Tucker’s view, because the United States is behind other countries: it is “burdened with an approach to testing which is very well suited to testing basic skills and very ill adapted to testing the skills that are most important.” Tweaks to the existing approach will not be sufficient, he argued, despite the fact that much in it is very valuable. He advocates that the United States look closely at the board examination systems used in many of the highest-performing countries in the world, and he cited studies from the Programme for International Student Assessment (PISA) that indicate that those systems are among the most important factors that explain those countries’ success (Bishop, 1997; Fuchs and Woessman, 2007). Among the countries that use this approach are Australia, Belgium, Canada, England, Hong Kong,6 the Netherlands, New Zealand, and Singapore.

In general, board examinations are used at the secondary level and are based on a core curriculum that is set for students through the age of 16 or so.

A syllabus for each course and instructional materials are provided to guide teachers, and the exam, usually a set of essays, is closely based on the syllabus. The syllabus specifies the level of skill expected, as well as the material to be read and covered. The composite exam score generally also includes scores for work done in class during the year and scored by the teacher. Teacher training directly linked to the curriculum and course syllabi is a critical element.

Tucker stressed that all of these elements together would not be as effective as they are without the requirement that students pass a set of examinations in order to qualify for the next stage of study or work. Because students are focused not on logging 4 years in secondary school, but rather on what they need to accomplish to reach particular goals, he argued, they are highly motivated and clear about why they are studying particular material.

A number of these kinds of programs are available to U.S. students, including:

- ACT QualityCore,
- Cambridge International General Certificate of Secondary Education,
- Edexcel International General Certificate of Secondary Education,
- College Board AP courses used as diploma programs,
- University of Cambridge Advanced International Certificate of Education, and
- International Baccalaureate Diploma Program.

Tucker highlighted some of the differences between board examination systems and typical accountability tests used in the United States. Board examinations are based in curricula as well as standards, designed specifically to capture higher-order thinking skills, and often include information on work done outside of the timed test. Students are expected to study for them, and performance expectations are very clear. In contrast, state accountability tests are generally not curriculum based, and students are not expected to study for them. In his view, students do not always have equal opportunity to study the material on which they will be tested, because the tests tend to cover such broadly defined domains. They do not generally include data on work done outside of the timed test, and they are more effective at capturing basic skills than higher-order thinking skills.