13. Toward a Biomedical Research Commons: A View from the National Library of Medicine at the National Institutes of Health

– Jerry Sheehan37

National Library of Medicine

I was asked to represent the perspective of the federal information policy community. There are numerous agencies across the federal government, each with its own practices and policies, so I am pleased to see that one of tomorrow’s presentations will provide a broad cross-agency view. I am going to focus my remarks on my own small part of the world and give you a view of some of these issues from the perspective of the National Library of Medicine (NLM) of the National Institutes of Health (NIH). It will be a combined NLM-NIH perspective because the NLM is often the organization that sets up the repositories that respond to NIH policies.

We at the NLM have a mission to collect, organize, make available, and disseminate biomedical knowledge in order to improve health, medicine, and well-being. As such, the NLM is a variety of things to a variety of people. We are a library, with more than 8 million artifacts of different types. We are also a research and development organization, with intramural research labs that do work on data mining, data search, retrieval, presentation, image archiving, and so on. We are home to the National Center for Biotechnology Information, which not only provides data and information services, but also conducts a great deal of research on bioinformatics, improving the ways that we link, find, and do research with biomedical information. Our Specialized Information Services provide information resources related to environmental health, toxicology, and disaster information management. NLM also funds extramural research and training in biomedical informatics.

The NLM has a number of different kinds of databases, data sources, and information sources that it makes available to the community as a whole. They are, for the most part, publicly available databases, and they encompass a broad range of types of information and data sources. MEDLINE and PubMed Central, for example, are literature databases that provide access to journal citations and to full-text journal articles, respectively. MedlinePlus offers consumer-oriented health information. Two other NLM databases are GenBank, which is a relatively well-known archive of discovered human genes, and dbGaP, which is the Database of Genotypes and Phenotypes. It serves as a repository for data produced by NIH-funded genome-wide association studies, which link genotypic data to phenotypic data. It aids in answering questions such as the extent to which variations in genes are associated with variations in the expression of a particular disease or condition, such as diabetes or obesity. We also have a small molecules database (PubChem), a hazardous substances database, and ClinicalTrials.gov, which is a registry for ongoing clinical trials and, as of about a year ago, became a repository for summary results of some of those clinical trials.

These databases are not static, but rather continue to grow. As of October 2009, MEDLINE had 16 million citations from more than 5,000 different biomedical journals, and we add about 700,000 new citations a year, representing new peer-reviewed literature from those journals. PubMed Central, which is a bit younger than MEDLINE, had about 1.8 million full-text peer-reviewed journal articles, and it gets about 300,000 users each day who are either accessing or downloading copies of those articles. There has been phenomenal growth in GenBank, which had on the order of 100 billion base pairs and about 100 million full sequences. Its rapid expansion reflects the deluge of information that must be captured, collected, curated, and maintained over time. As of October 2009, the clinical trials database had descriptive information on about 80,000 registered trials with information on 340 trials being added each week. We now have details on the results of these trials coming in at the rate of about 200 results records a month, so over time this will grow to be a fairly substantial resource for different kinds of comparative effectiveness research and for other kinds of evidence-based medicine research. With all of these databases, we notice that as we add content, the amount of use goes up.

_____________

37 Presentation slides available at: http://sites.nationalacademies.org/xpedio/idcplg?IdcService=GET_FILE&dDocName=PGA_053665&RevisionSelectionMethod=Latest.

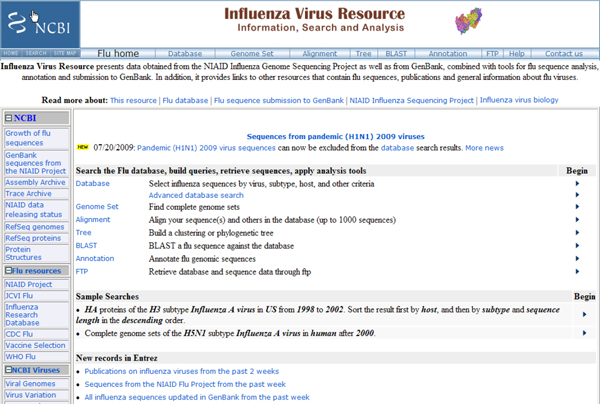

Most of the databases that I have mentioned so far contain information that spans the spectrum of biomedical research and is accessed by a broad range of users: researchers, care providers, and the general public. We also have databases with information that is tailored for particular types of research and/or specific audiences. For example, our Influenza Virus Resource database (Figure 13–1) pulls literature from PubMed and PubMed Central as well as a variety of genome sets, some of which are generated by researchers associated with the National Institute of Allergy and Infectious Diseases. Thanks to their influenza genome sequencing project, we now have about 90,000 influenza genes and 2,000 full influenza sequences in the database.

FIGURE 13–1 Screen shot of the Influenza Virus Resource database. SOURCE: National Center for Biotechnology Information, National Institutes of Health

NLM has also been working to develop channels for getting out information about H1N1 influenza faster than typically occurs through traditional publication channels.

NLM worked with the Public Library of Science (PLoS), which developed a new type of publication, called PLoS Currents, to speed scientific communication. The first phase of the program focuses on influenza. The information in PLoS Currents: Influenza differs from that in traditional journals in that it is not fully peer-reviewed; instead, a governing board composed of experts in various aspects of influenza examines incoming contributions to make sure they are relevant and based on sound analysis. Articles are posted in a matter of weeks, rather than months or years, with the expectation that the reported research may eventually be published as standard, peer-reviewed publications.

NLM initially developed a new service, Rapid Research Notes, to serve as an archive for PLoS Currents: Influenza and other fast turn-around research communication mechanisms that may be developed. Over time, it was recognized that much of the content of Currents took the form of short journal-like articles that could be archived in PubMed Central and benefit from the enhanced search capabilities built into that platform and the integration of PubMed Central with other NLM resources. Hence, PLoS Currents is now a full contributor to PubMed Central, depositing its full content into the archive, where it is assigned a unique identifier and can be easily accessed by researchers, clinicians, and the public.

All of the services I have described are essentially databases that collect, organize, and make accessible particular types of information, often for a particular community of users. While they have considerable value as stand-alone resources, their real value—to NLM and the user community as a whole—comes from linking them together into what could be considered an integrated, online biomedical knowledge resource.

To illustrate what we have in mind, imagine doing a MEDLINE search for cancer treatments. You find the abstract of an article that looks valuable. By analyzing the text of the abstract you find valuable and your original search string, we can generate a list of related articles that you might also find to be relevant. If any of them are available as full-text articles in PubMed Central, you can click on the link and retrieve it. If the retrieved article discusses a drug being studied in a clinical trial, you can scroll down to the bottom of the abstract and find an identifier called an NCT number. The NCT number is a unique clinical trial identifier that the NLM assigns to trials registered at ClinicalTrials.gov. By clicking on the NCT number, you are brought directly to the clinical trial registration record in ClinicalTrials.gov, which may also contain summary results information from the trial, including adverse events. If you look at the bottom of that ClinicalTrials.gov record—because we have standard formats and a process for putting these identifiers on citations and journal articles—you can link back to the original citation, which would take you back to that first article you found.
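The two-way linking described above can be sketched in a few lines. This is only an illustration, not a description of how NLM's systems are built: it assumes NCT numbers appear in abstract text as "NCT" followed by eight digits, and the citation identifiers, function names, and sample text are hypothetical.

```python
import re
from collections import defaultdict

# Assumption: a ClinicalTrials.gov identifier is "NCT" followed by eight digits.
NCT_PATTERN = re.compile(r"\bNCT\d{8}\b")


def extract_nct_numbers(text: str) -> list[str]:
    """Return the distinct NCT numbers in text, in order of first appearance."""
    found: list[str] = []
    for match in NCT_PATTERN.findall(text):
        if match not in found:
            found.append(match)
    return found


def build_links(abstracts: dict[str, str]) -> tuple[dict, dict]:
    """Build citation -> trials and trial -> citations lookup tables.

    `abstracts` maps a (hypothetical) citation identifier to abstract text.
    """
    citation_to_trials: dict[str, list[str]] = {}
    trial_to_citations: dict[str, list[str]] = defaultdict(list)
    for citation_id, text in abstracts.items():
        trials = extract_nct_numbers(text)
        citation_to_trials[citation_id] = trials
        for nct in trials:
            trial_to_citations[nct].append(citation_id)
    return citation_to_trials, dict(trial_to_citations)


# Hypothetical sample data for illustration only.
sample = {
    "citation-A": "A randomized trial of drug X (ClinicalTrials.gov NCT00000102).",
    "citation-B": "Follow-up analysis of NCT00000102 and NCT12345678.",
}
forward, backward = build_links(sample)
```

Here `forward` answers "which trials does this article mention?" and `backward` answers "which articles mention this trial?", which is the round trip the example walks through.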

Where this gets more interesting is where this sort of linking can work across all NLM resources. Imagine that after searching PubMed for articles on treatments for influenza, you found an article in PubMed Central that discusses the potential role of different drugs in treating the disease, e.g., oseltamivir and zanamivir. You could then follow a link to the PubChem database of small molecules to see the structures of these drugs and find out what is known about their chemical properties, be presented with a list of PubMed links to other articles with more information about the role of those chemicals in blocking the production of certain proteins, then link to three-dimensional views of the protein structures that show how the chemicals bind to them and even manipulate the images in various ways, and so on. This is the vision for the infrastructure we would like to create by integrating and linking among the multiple databases and information resources we have at NLM.

Bringing that vision to reality requires advances on multiple fronts. It requires the creation of unique identifiers for all of the elements involved and widespread use of those identifiers across the relevant communities, including among publishers. It also requires good vocabularies and terminologies to enable intelligent linking of related materials from across databases. At its simplest, such vocabularies can ensure that when a user performs a search on a key word, the system will not only know its various synonyms but will also know of various relationships involving that word, such as the relationship between a disease and agents used to treat it. These capabilities are among those in which NLM has strengths.
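As a rough illustration of the simplest case mentioned above, a vocabulary can be modeled as a set of concepts, each with synonyms and typed relationships. The sketch below uses a toy, hand-written vocabulary; the `Concept` class, the `expand_query` function, and the single entry are assumptions made for the example and do not represent any actual NLM terminology or its data format.

```python
from dataclasses import dataclass, field


@dataclass
class Concept:
    preferred: str
    synonyms: set[str] = field(default_factory=set)
    # One example relationship type: agents used to treat the disease.
    treated_by: set[str] = field(default_factory=set)


# Toy vocabulary for illustration only (not drawn from a real terminology).
TOY_VOCABULARY = [
    Concept("influenza", {"flu", "grippe"}, {"oseltamivir", "zanamivir"}),
]


def expand_query(keyword: str, vocabulary: list[Concept] = TOY_VOCABULARY) -> dict:
    """Expand a keyword into all known names for its concept plus related agents."""
    key = keyword.strip().lower()
    for concept in vocabulary:
        names = {concept.preferred} | concept.synonyms
        if key in names:
            return {"terms": sorted(names), "treated_by": sorted(concept.treated_by)}
    # Unknown keyword: search on the word as given, with no related agents.
    return {"terms": [key], "treated_by": []}
```

A search for "Flu" would then be run against every term in `expand_query("Flu")["terms"]`, and the `treated_by` list could drive links to related drug records.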

Data and information sharing remain a priority for NIH. Our efforts to promote data access and linking were boosted by the recent appointment of Dr. Francis Collins as the new NIH director. When Dr. Collins assembled the NIH staff on his first day on the job, he listed a set of areas where he thought there were significant opportunities for NIH. He identified an opportunity in applying high-throughput technologies to help understand fundamental biology and uncover the causes of specific disease states. He sees such technologies as offering a way to ask questions that, as he put it, have the word “all” in them: What are all the transcripts in a cell? What are all the protein interactions? We should do it all, he said, because we have the ability to do that.38

Those of us who work on data access were quite happy to hear how Dr. Collins followed up that opportunity with this quote: “Those kinds of questions are now approachable, especially if we do the right job of making really powerful databases publicly accessible to all those who need them and empower investigators in small labs as well as big labs to plunge into that kind of mindset.” In short, I think you can expect to see a lot more development of these kinds of resources from NIH and development of a lot more of the data that will populate these kinds of databases.

NIH already has in place a number of agency-wide policies to promote data and information sharing. These include the NIH Data Sharing Policy, the NIH Public Access Policy, the NIH Genome-Wide Association Study Policy, and emerging policies (and regulations) governing clinical trials registration and results submissions. According to surveys, researchers support the idea of sharing data with others in the research community. In practice, we find that supporting data sharing does not always translate into active data sharing. We can build databases to house the data, but it is not enough to simply encourage voluntary contributions of data, for many of the reasons that have been discussed today. Thus, in a number of cases, the NIH has stepped in and put in place policies that either require the submission of information and data or else come as close as we can to requiring that without actually using that word. All of this is done with a great deal of consultation, public notices, and public comment in order to come up with a consensus or, at least, well-informed policy options.

Two policies in particular are standard for NIH-funded research. First, there is the NIH Data Sharing Policy, which imposes a requirement that any researcher who receives more than $500,000 in one year must provide with the grant application a plan for data sharing. We expect that the data will be made available in a timely manner, and the guidelines indicate this should happen no later than when the manuscript is accepted for publication. There are certain exceptions to this requirement, such as if the data can be identified as coming from particular individuals or if there are national security concerns.

_____________

38 http://www.usmedicine.com/articles/new-director-at-national-institutes-of-health-outlines-goals-forfy2011-funding-.html.

Another requirement, expressed in the NIH Public Access Policy, is for NIH grantees to submit to PubMed Central any peer-reviewed publications resulting from NIH-funded research. The publications must be submitted upon their acceptance by a scientific journal, but public release can be embargoed for up to 12 months. This embargo period addresses concerns that making the publications publicly available might affect the subscription-based publication models of a number of the journals used by NIH researchers. We have no evidence to date to indicate that availability of articles in PubMed Central up to 12 months after their publication date has resulted in cancelled subscriptions to journals.
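The embargo arithmetic can be made concrete with a small sketch. It assumes, following the last sentence above, that the 12-month clock starts at the publication date; the function names and the end-of-month handling are choices made for this example, not part of the policy text.

```python
import calendar
from datetime import date

MAX_EMBARGO_MONTHS = 12  # upper bound stated in the policy description above


def add_months(start: date, months: int) -> date:
    """Add calendar months, clamping to the last day of a shorter month."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    day = min(start.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def latest_release_date(publication_date: date,
                        embargo_months: int = MAX_EMBARGO_MONTHS) -> date:
    """Return the latest date by which the article should be publicly released."""
    if not 0 <= embargo_months <= MAX_EMBARGO_MONTHS:
        raise ValueError("embargo must be between 0 and 12 months")
    return add_months(publication_date, embargo_months)
```

A journal that chooses a shorter embargo would simply pass a smaller `embargo_months` value; zero corresponds to immediate release.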

The NIH Public Access Policy applies to about 80,000 to 85,000 papers a year, but that is only a fraction of the papers that are deposited into PubMed Central every year. We work very closely with a number of publishers to collect other published papers, beyond those funded by NIH. We have developed mechanisms whereby several hundred journals provide us with their full journal content, sometimes with an embargo period, but often without. In other cases a journal may submit the final printed version of only those articles that were funded by the NIH, again with up to a 12-month delay in the release.

Certain types of studies have their own data sharing requirements. The NIH genome-wide association study (GWAS) policy, for example, requires that researchers funded by the NIH for a GWAS must put the resulting data in a publicly accessible database where it is available to other researchers for subsequent years. We have built a database into which they can provide that information, dbGaP. As with depositing articles into PubMed Central, there is a delay period: A researcher can have 12 months of exclusivity to generate the first publication based on that data, even if other researchers are granted access to the data before that embargo period has expired.

The GWAS research generates both genotype and phenotype data, and the existence of the genotype data in particular leads to concerns about the subjects in the studies being identified. Thus we have a process to minimize the chances of the subjects being identified. The data are not publicly available, other than some metadata that cannot be used to identify individuals. There is, however, a procedure by which a researcher can request access to these datasets for secondary research use.

The clinical trial datasets have their own requirements for contributions. Results information must be submitted for certain phase 2 through phase 4 trials of FDA-regulated drugs and biologics and for non-feasibility studies of FDA-regulated devices. Results are required to be submitted within 12 months of the completion of the study if the drug, biological product, or device has been approved, cleared, or licensed for use. There are penalties for noncompliance with these requirements that are specified in the law. Congress also instructed the NIH to consider whether to require the submission of data for trials of unapproved products and the timeline for submitting such data, if required. As part of our efforts to determine whether to propose such a requirement, we recently held a public meeting to solicit input on that topic and others.

These policies demonstrate that there are a number of issues to consider about how to populate a commons or a publicly available database or an information-sharing repository. The first issue is how to get people to participate and submit data.

One way to do it would be to create an expectation within the scientific community that such data are shared as a normal part of the scientific enterprise. In the biomedical sphere, the publishers have sometimes been helpful in creating such an expectation. For example, publishers will generally ask for a GenBank accession number when manuscripts are submitted that deal with genomic information. Something similar is true for articles reporting clinical trials: The International Committee of Medical Journal Editors announced that articles submitted for publication should have the data registered at inception in a publicly accessible database. Our database was the only one that met their criteria at the time, and publishers look for our NCT number in submitted articles as verification that the trial has been registered. The lesson is that there are groups other than funding agencies that can put pressure on the community to submit data.

Another issue to consider is how to monitor compliance. How do you make sure that people fulfill their requirements? When the NIH Public Access Policy was voluntary, compliance rates were quite low, less than 5 percent by one measure. When the policy became mandatory, there was a large increase in the number of manuscripts that were submitted to the database each week and in the compliance rate. Then, the first time that progress reports were due to the NIH for the projects subject to the policy, our project officers had a chance to look through the lists of referenced publications and ask for the PubMed Central ID numbers for those subject to the policy. More manuscripts were deposited into PubMed Central and the compliance rate jumped again. The lesson is that closing the loop on compliance—by identifying lack of compliance and informing those responsible for submission—is important if you want to ensure equitable submission of information and data into these repositories.
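The "closing the loop" step can be pictured as a simple check over the publications listed in a progress report. The sketch below is hypothetical: the `ReportedPaper` record, its field names, and the sample identifiers are invented for illustration and do not describe NIH's actual reporting systems.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ReportedPaper:
    title: str
    subject_to_policy: bool       # does the Public Access Policy apply?
    pmcid: Optional[str] = None   # PubMed Central ID, if one was supplied


def compliance_report(papers: list[ReportedPaper]) -> tuple[Optional[float], list[str]]:
    """Return (compliance rate, titles still missing a PubMed Central ID).

    The rate is None when no listed paper is subject to the policy.
    """
    covered = [p for p in papers if p.subject_to_policy]
    missing = [p.title for p in covered if not p.pmcid]
    if not covered:
        return None, missing
    rate = (len(covered) - len(missing)) / len(covered)
    return rate, missing


# Hypothetical progress-report entries.
report = [
    ReportedPaper("Paper A", subject_to_policy=True, pmcid="PMC0000001"),
    ReportedPaper("Paper B", subject_to_policy=True),
    ReportedPaper("Paper C", subject_to_policy=False),
]
rate, follow_up = compliance_report(report)
```

The `follow_up` list is the actionable part: it names the papers for which a project officer would go back to the investigator and ask for an identifier.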

Simplifying the process is another way to encourage—or not discourage—submissions. We have done a great deal of work to try to simplify our systems for depositing, both for PubMed manuscripts and for other data.

We also have thought about ways to develop incentives to reward and recognize those who contribute their data and their publications. We do not have the answer there, but one approach would be to develop better ways of tracking citations or other types of metrics so as to be better able to give people credit for what they have done. As noted, we assign identifiers to publications or data sets submitted to NLM. What is needed are standard practices for citing data sets and for recognizing the collection and sharing of data sets as a valuable scientific activity that is rewarded by the community and taken into account in hiring and promotion decisions.

There is a lot to think about in the design of policies governing these databases. Different kinds of data might warrant different kinds of approaches, even if the objective in the end is to get as much data as possible into a repository as quickly as possible. It is important to take into account the concerns in the research community about wanting to hold onto data, at least until a first publication. For certain types of data, such as clinical trial data, there may be concerns about releasing the data before a product or device is approved.

The lesson may be that policies need to be flexible. It is not necessarily the case that “one size fits all” when you are talking about different kinds of data. I am not familiar enough with the microbial datasets to understand the different ways that you might need to treat them, but Paul Uhlir talked about how different thematic communities might develop somewhat different rules for data submission.

Finally, it is important to facilitate interoperability. Putting data into a repository or archive is only the first step. The second step is making the data useful, which means making it possible for users to find what they are looking for, to understand what it is (i.e., appropriate use of metadata), and, where possible, to be able to find other data that will add value to that original dataset. To that end, the NLM does a lot of work with a larger community of people on terminologies and vocabularies. There is an international group meeting in Bethesda today that is working on vocabularies for clinical medicine. Persistent digital identifiers can play a major role simply by helping to connect various information and records. The NLM has also worked on the metadata standards and data descriptions that are going to be used. We have been trying to provide ways to help people understand which kinds of standards exist for describing data and in which formats data should be provided so that others can easily make use of them.

We would also like to facilitate having data in a good form, archivable, and well described. One approach would be to use data scientists to prepare the data, but there may also be ways to embed good data sharing and data curation practices into research training or education processes so that people know how to prepare data well and can do it more quickly and more efficiently.

Ending on a positive note, I do think we are making progress in improving data and information sharing in the biomedical community. There are a number of successful efforts, some of them represented in the room here today. The number of conferences and meetings and activities indicates that there is a growing interest in making information more easily available within the biomedical research community in order to advance the science and make better use of the research dollars that are provided by the NIH and other funding organizations. I also believe there is an increasing recognition of the need for various types of infrastructure and resources.

How do we actually make this happen? We at the NIH build or fund the development of many places to store data. As I mentioned, we put a great deal of effort into standards and reference vocabularies to make the data more easily shareable. It might take a while to realize the vision that is being articulated at today’s symposium, but we are taking some good steps.