CLOCKS IN THE EARTH?

The Science of Earthquake Prediction

by Addison Greenwood

Earthquake country. In America this epithet usually evokes images of western California, where the San Andreas fault—perhaps our most notorious geological feature and probably the most intensely studied by scientists—appears for most of its length as the thinnest of lines in the dirt. The fault was indubitably the cause, however, of the earthquake and consequent raging fire in 1906 that devastated San Francisco (see Figure 6.1). After this natural disaster, at least 700 lay dead (the San Francisco city archivist recently reexamined many of the city's records and concluded that the number of deaths may well have been many hundred more) and many of the city's structures were toppled.

More than a mere memorial to past events, the San Andreas fault is a harbinger. For the present population of San Francisco, for millions of other Californians who also live along the fault zone, and for hundreds of millions more throughout the world living near other plate boundaries, the past is prologue. The strongest natural forces on our planet, earthquakes are not accidental but incidental to the seething, ceaseless dynamics of the great heat engine that is the earth's interior. The next major earthquake in California, "The Big One" natives call it cavalierly, is as inevitable a natural phenomenon as the sunrise, though unfortunately not as predictable. When it arrives, the dead could well number in the tens of thousands, a scenario that charges modern earthquake scientists, called seismologists, with a mission and a sense of purpose far beyond the pursuit of pure science.

THE RIDDLES AND RHYTHMS OF 1992: EARTHQUAKE SCIENCE IN THE MIRROR

In 1992 the American seismological community was galvanized and challenged by several dramatic events. On the morning of June 28, a meeting attended by many of America's most eminent seismologists was about to get under way at the University of California-Santa Cruz. Their purpose was to review and begin to evaluate a U.S. Geological Survey (USGS)-run project nearly a decade in the running: The Parkfield Earthquake Prediction Experiment. For reasons that will be explained shortly, some seismologists were virtually "certain" (in statistical terms they expressed a 95 percent confidence) that a major quake was due to occur near a small town in Central California before too many years. Writing in Science in 1985, Bill Bakun and Al Lindh of the USGS predicted that an earthquake would occur before 1993 near Parkfield. As of June 1992 the predicted quake had not arrived. But the project had focused on that particular section of the San Andreas fault a level of scientific attention and study that was unprecedented in America; many valuable insights had been collected, and the scientists were meeting to consider these and other implications of the Parkfield experience.

Then on the morning the Parkfield meeting was to convene, the earth began to shake. The quake was not the magnitude (M) 6 earthquake predicted for Parkfield, however, but one many times larger (see the Box on p. 158), an M 7.3 quake emanating from a small desert community called Landers, dozens of miles to the south. The Landers quake relegated to a distant second place (in terms of the magnitude of energy released) the state's most famous recent quake—the Loma Prieta M 7.1 quake in 1989, which, as the World Series telecast had just begun, millions experienced vividly via television. As California's largest quake in 40 years, Landers was big news to scientists not because of its devastation—fortunately, its effects remained largely in the desert—but because it occurred in the south. The Loma Prieta quake had given seismologists important information about the San Andreas fault around San Francisco, but Landers provided a message to Los Angelenos, whose city's fate rests upon a network of underground faults that share with Northern California only the fundamental fact that all are part of the major fault system defining the boundary between the Pacific and North American plates.

FIGURE 6.1 San Francisco, on the morning of April 18, 1906. This famous photograph by Arnold Genthe shows Sacramento Street and the approaching fire in the distance. (Photograph courtesy of the Fine Arts Museums of San Francisco, Achenbach Foundation for Graphic Arts.)

Why does the occurrence of a quake many miles from Los Angeles carry a prophetic message for the nation's second-largest city? Will "The Big One" strike there or at San Francisco, or somewhere else along the hundreds of miles of faults throughout California? Will the Parkfield prediction pan out, and when the quake does arrive, will the unprecedented experimental effort devoted to that region pay off? What is happening underground during an earthquake, and why, and, most importantly, where and when? These questions all point to a bottom line that few would dispute, for from a successful methodology of earthquake prediction could come the conservation of billions of dollars and the survival of many people who otherwise might perish in these unavoidable natural disasters. And why unavoidable? The question belongs in the same category as, "Why does the sun rise in the east?" The exigencies of a lawful universe, as elucidated by Newton and many more since, provide the scientific context for a sunrise. So too are earthquakes, on Earth at least, inevitable. And many find it ironic that though so much closer and theoretically more accessible to empirical examination than the sun, the internal machinations of the earth remain shrouded in mystery.

"The Big One" obviously concerns scientists in a vital way, since predicting it and its effects could save many lives and billions of dollars. But the mythology of "The Big One" (a boon to newspaper sales if ever there was one) has obscured from the public some of the scientific clarity developed in the last decade about earthquakes. Nonetheless, while major strides have been taken to understand the genesis of earthquakes, nature may not be so willing to deliver up her secrets. It is by no means certain that scientists will ever be able to accurately forecast an earthquake, says geophysicist Bill Ellsworth (USGS-Menlo Park), though he and colleague Duncan Agnew (UC-San Diego) recently compiled a state-of-the-art survey of the current methods and models used to predict earthquakes. Even so, each new quake pulls back a veil, and, as was the case when news of Landers arrived at the Parkfield meeting, the excitement among seismologists is palpable that behind this next one may be some scientific signal of a breakthrough in prediction, or at least confirmation for their models about the mechanics of earthquakes.

THE EARTH ITSELF

Geology is the study of how the earth has changed through time. Evidence of such changes during the earth's 4.6-billion-year history is preserved and embedded in the rocks that, like an old rusting car bumping its way through city life, contain clues of their journey through the earth's interior and down through aeons of time. Geological time is a euphemism for millions and billions of years. The journeys of the smallest of rocks and the largest of continents both ride the same juggernaut: internal currents of scorching, coursing heat that actually move masses of earth like great underground river currents, though at a pace of only inches a year.

A more accurate image than a river, suggests Ellsworth, is a boiling pot of water, where the sea of bursting bubbles on the surface indicates that convection cells have developed in the pot. For the same reasons of basic physics—convection—hotter rock in the earth's deeper mantle

Earthquake Measurement Scales

Besides where and when, a legitimate prediction must say how big—a parameter known as an earthquake's magnitude. Earthquakes can devastate human society, and the earliest efforts to catalog their size reflect this anthropocentric emphasis, focusing on, according to seismologist Bruce Bolt, damage to structures of human origin, the amount of disturbance to the surface of the ground, and the extent of animal reaction to the shaking. The size of nineteenth-century (and earlier) earthquakes is more than an arcane bit of data, however, even today. Recurrence models developed by seismologists to predict future earthquakes based on the pattern of past ones employ not only the date but also the magnitude of a preinstrumental earthquake.

To make use of an intensity scale, first locate all written reports and eyewitness observations whose times and dates are known sufficiently to cluster them into widely placed reactions to the same event. Next, decipher each separate report according to the scale, assign the individual data points an intensity value from I to XII, and plot them on a geographical map of the region. Connect the dots corresponding to each value, and you've constructed an isoseismal map.

With the twentieth century came a more scientific measure of magnitude. Seismographs (or seismometers) originated as elaborate and sensitive pendulum-like devices with a "pen" mounted over a continuous roll of paper, producing a jagged line drawing that reflects the most delicate shaking of the earth's surface due to seismic waves. More modern seismographs use film and digital technology, and their readings are plugged into equations to quantify the energy released in an earthquake. The most famous of these equations was developed by American seismologist Charles F. Richter, who, writes Bolt, defined the magnitude (ML) of a local earthquake as "the logarithm to base ten of the maximum seismic-wave amplitude (in thousandths of a millimeter) recorded on a standard seismograph at a distance of 100 kilometers from the earthquake epicenter" (Bolt, 1988, p. 112). The enormous range of energies involved requires a logarithmic measure, where a rise of one unit corresponds to a tenfold increase in recorded wave amplitude. Earthquakes have been recorded as low as -2 and as high as 9 on the scale, encompassing 11 orders of magnitude in amplitude. Compare, for example, the 1966 Parkfield quake (M 6) to the 1989 Loma Prieta (M 7.1) event that hit the San Francisco area. Loma Prieta released several tens of times as much energy as Parkfield (a full unit of magnitude corresponds to roughly a 30-fold increase in energy).
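The arithmetic behind the logarithmic scale can be made concrete with a short sketch. The first function follows Richter's definition as Bolt quotes it. The energy scaling is an assumption that the box does not state: it uses the widely cited rule that the logarithm of radiated energy grows by about 1.5 for each unit of magnitude. The function names are illustrative only.

```python
import math


def local_magnitude(amplitude_mm: float) -> float:
    """Richter's definition as quoted from Bolt: log10 of the peak trace
    amplitude, expressed in thousandths of a millimeter, on a standard
    seismograph 100 km from the epicenter."""
    if amplitude_mm <= 0:
        raise ValueError("amplitude must be positive")
    return math.log10(amplitude_mm * 1000.0)


def amplitude_ratio(m_small: float, m_large: float) -> float:
    """Each whole unit of magnitude is a tenfold step in amplitude."""
    return 10.0 ** (m_large - m_small)


def energy_ratio(m_small: float, m_large: float) -> float:
    """ASSUMPTION (not stated in the box): log10(energy) rises by
    about 1.5 per unit of magnitude."""
    return 10.0 ** (1.5 * (m_large - m_small))


# Parkfield 1966 (M 6) compared with Loma Prieta 1989 (M 7.1)
print(f"amplitude ratio: {amplitude_ratio(6.0, 7.1):.1f}")
print(f"energy ratio:    {energy_ratio(6.0, 7.1):.1f}")
print(f"energy ratio for one full unit: {energy_ratio(6.0, 7.0):.1f}")
```

Under that assumed scaling, the box's round figure of 30 is close to the energy step for one full unit of magnitude, and the 1.1-unit gap between Parkfield and Loma Prieta comes out somewhat larger.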

FIGURE 6.2 Modified Mercalli Intensity Scale (1956 version from Richter, 1958, pp. 137–138). (From Earthquakes by Bruce A. Bolt. Copyright © 1988 by W. H. Freeman and Company. Reprinted with permission.)

| Intensity value | Description |
|---|---|
| I. | Not felt. Marginal and long-period effects of large earthquakes. |
| II. | Felt by persons at rest, on upper floors, or favorably placed. |
| III. | Felt indoors. Hanging objects swing. Vibration like passing of light trucks. Duration estimated. May not be recognized as an earthquake. |
| IV. | Hanging objects swing. Vibration like passing of heavy trucks; or sensation of a jolt like a heavy ball striking the walls. Standing cars rock. Windows, dishes, doors rattle. Glasses clink. Crockery clashes. In the upper range of IV, wooden walls and frame creak. |
| V. | Felt outdoors; direction estimated. Sleepers awakened. Liquids disturbed, some spilled. Small unstable objects displaced or upset. Doors swing, close, open. Shutters, pictures move. Pendulum clocks stop, start, change rate. |
| VI. | Felt by all. Many frightened and run outdoors. Persons walk unsteadily. Windows, dishes, glassware broken. Knickknacks, books, etc., off shelves. Pictures off walls. Furniture moved or overturned. Weak plaster and masonry D cracked. Small bells ring (church, school). Trees, bushes shaken visibly, or heard to rustle. |
| VII. | Difficult to stand. Noticed by drivers. Hanging objects quiver. Furniture broken. Damage to masonry D, including cracks. Weak chimneys broken at roof line. Fall of plaster, loose bricks, stones, tiles, cornices, also unbraced parapets and architectural ornaments. Some cracks in masonry C. Waves on ponds, water turbid with mud. Small slides and caving in along sand or gravel banks. Large bells ring. Concrete irrigation ditches damaged. |
| VIII. | Steering of cars affected. Damage to masonry C; partial collapse. Some damage to masonry B; none to masonry A. Fall of stucco and some masonry walls. Twisting, fall of chimneys, factory stacks, monuments, towers, elevated tanks. Frame houses moved on foundations if not bolted down; loose panel walls thrown out. Decayed piling broken off. Branches broken from trees. Changes in flow or temperature of springs and wells. Cracks in wet ground and on steep slopes. |
| IX. | General panic. Masonry D destroyed; masonry C heavily damaged, sometimes with complete collapse; masonry B seriously damaged. General damage to foundations. Frame structures, if not bolted, shifted off foundations. Frames racked. Serious damage to reservoirs. Underground pipes broken. Conspicuous cracks in ground. In alluviated areas, sand and mud ejected, earthquake fountains, sand craters. |
| X. | Most masonry and frame structures destroyed with their foundations. Some well-built wooden structures and bridges destroyed. Serious damage to dams, dikes, embankments. Large landslides. Water thrown on banks of canals, rivers, lakes, etc. Sand and mud shifted horizontally on beaches and flat land. Rails bent slightly. |
| XI. | Rails bent greatly. Underground pipelines completely out of service. |
| XII. | Damage nearly total. Large rock masses displaced. Lines of sight and level distorted. Objects thrown into the air. |

* To avoid ambiguity of language, the quality of masonry, brick or otherwise, is specified by the following lettering: Masonry A—Good workmanship, mortar, and design; reinforced, especially laterally, and bound together by using steel, concrete, etc.; designed to resist lateral forces. Masonry B—Good workmanship and mortar; reinforced, but not designed in detail to resist lateral forces. Masonry C—Ordinary workmanship and mortar; no extreme weaknesses like failing to tie in at corners, but neither reinforced nor designed against horizontal forces. Masonry D—Weak materials, such as adobe; poor mortar; low standards of workmanship; weak horizontally.

region (just above the white hot liquid outer core) moves upward, displacing some of the cooler upper-mantle material (which dives back down to deeper regions), creating a kind of great circular movement. Corresponding to the surface of the pot in this model is the interface where the earth's crustal plates (generally 40 kilometers or so thick, though nearly twice that under some mountains) can actually separate from the earth just below. The chemistry and rheology of this upper-mantle region, called the asthenosphere, permit the convection of heat in the earth to be transmitted to the rigid plates above in the form of movement.

The crustal plates that form the earth's surface can be said to slide around on the viscous surface, reacting to these internal heat currents in the earth somewhat as small plastic poker chips might to the convection cells in the boiling pot of water. Instead of water bubbles bursting in the pot and releasing vapor into the air, the rising mantle material cools and forms new crust, as the plates slide around and jam into each other at their edges. The energy from this motion is temporarily stored elastically in the rock sections that press and lock together under friction, though "straining" to continue the motion powered by the heat currents. Eventually, the strain energy stored in this system overcomes the frictional resistance of the rock being crammed together, and the system "breaks," releasing the energy in the form of an earthquake.

This model emerges from the reigning paradigm of plate tectonics that has revolutionized modern geology. About a dozen major plates constitute the surface of the earth. The visible continents we inhabit are themselves merely passengers frozen in place atop these slowly shifting rocky rafts of earth. As an entire plate moves only a few centimeters in a year, it is not surprising that people hundreds or thousands of kilometers from a plate's edge, say in Dubuque, Iowa, can remain largely oblivious to the implications of the land beneath their feet not—relative to the center of the earth—being definitively anchored. But those on the very edge of a plate, including millions of Californians, experience plate tectonics viscerally, as patches of rock at the western edge of the North American plate undergo stress, accumulate elastic strain, and eventually and inevitably break loose from rock of the adjacent Pacific plate that is being propelled in a different direction. All such underground shifts are earthquakes, though most occur at levels undetectable except by instruments. Understanding exactly how this occurs, where and when it is most likely, and how much energy will manifest at the surface is the focus of Earth scientists from a number of specialties who come together in search of the keys to earthquake prediction.

Elementary school children now hear this fairly straightforward description of why earthquakes are inevitable, and yet 30 years ago geophysicists were just beginning to piece it together, marking a new era in seismology, a field born at the end of the nineteenth century with the development of recording instruments designed to measure the shaking of the earth (seismometers or seismographs), and the "pictures" they produce (see Box on p. 162). But even the seismograms don't reveal the picture of plate tectonics described above. They simply don't contain that information. "Very clever people," says Ellsworth, "working with very sparse information, cracked the plate tectonics puzzle in the early 1960s, and the dramatic advances in seismology since then rest on better data gathering through advances in tools and technology, the enhanced power to compute complex numerical solutions, and advances in the theory."

What Agnew and Ellsworth call the first "modern" theory of earthquake prediction, by G. K. Gilbert in 1883, was conceived without benefit of the plate tectonics model. Gilbert's notion, as idealized in the block-and-spring model, was developed by another American, Harry Fielding Reid, into what has come to be known as the elastic rebound theory. Reid's study of the fault region that broke during San Francisco's notorious 1906 earthquake provided the first solid evidence for the elastic rebound of the crust after an earthquake. After Reid, scientists began to look at individual earthquakes as passing through a seismic cycle that they could examine and analyze quantitatively, searching for patterns that could be used to develop a prediction of the next rupture. As a generalization, stress accumulates slowly over time on a given fault, it reaches a critical point of failure, the fault slips, and energy is released as an earthquake. Go into the lab—or, more often these days, boot up your computer for a simulation—attach a spring to a block, and exert a pull, and you can watch the basic idea on which Gilbert and Reid built the earthquake cycle hypothesis in action. You will find that the amount of force required before the block slips and the distance the block moves are predictable.
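The computer version of that tabletop experiment can be sketched in a few lines. What follows is a minimal idealization, not a model taken from Gilbert, Reid, or the laboratories mentioned here: the free end of the spring is pulled at a constant rate, the block stays stuck until the spring force reaches an assumed static-friction limit, and it then jumps forward until the force falls to an assumed lower sliding-friction level. All parameter values are arbitrary illustrative choices.

```python
def simulate_stick_slip(stiffness=1.0, pull_rate=1.0,
                        static_limit=10.0, sliding_level=4.0,
                        dt=0.01, t_end=50.0):
    """Return a list of (time, slip) pairs for an idealized block and spring.

    Hypothetical parameters: the spring's free end moves at pull_rate,
    the block sticks until the spring force reaches static_limit, then
    slips instantly until the force relaxes to sliding_level.
    """
    block_position = 0.0
    events = []
    steps = int(round(t_end / dt))
    for i in range(1, steps + 1):
        t = i * dt
        driver_position = pull_rate * t
        force = stiffness * (driver_position - block_position)
        if force >= static_limit:
            # Slip far enough to drop the spring force to the sliding level.
            slip = (force - sliding_level) / stiffness
            block_position += slip
            events.append((t, slip))
    return events


for time, slip in simulate_stick_slip():
    print(f"slip of {slip:.2f} at t = {time:.2f}")
```

With fixed friction levels every slip has the same size and arrives after the same wait, which is the regularity the seismic cycle idea hopes to find in real faults.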

But the crucial question must be probed: Predictable in what sense? Ellsworth says that in the late 1970s, as elaborate experiments with block and spring models were being conducted in laboratories around the world (this construction of analog earthquake models is still going strong in such places as the Institute for Theoretical Physics in Santa Barbara), an important conceptualization came from Japanese seismologists Shimazaki and Nakata. They realized that, assuming the spring is being pulled at a constant rate, if the block always moves when a given force is

|

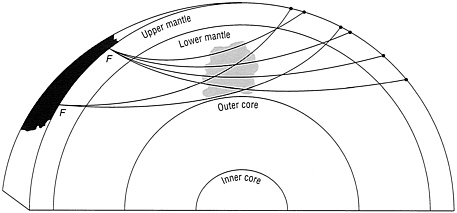

Seismic Waves Seismologist Thorne Lay from UC-Santa Cruz defines seismology as "the study of elastic waves and the various sources that excite such waves in the earth and other celestial bodies" (Lay, 1992, p. 153). Tectonic forces develop from convective recycling of the earth's interior, heat conducted to the crust that is converted to energy stored in rocks, under strain, which eventually fracture: An earthquake. Seismographs reveal that earthquakes are constantly occurring, several hundreds of thousands each year. Most rumble at levels undetectable by our unaided senses. Seismographs also show that the energy waves emitted from these earthquakes take several distinguishable forms, each traveling at characteristic speeds. One type, the body waves, travel through the earth, with the primary or P-wave able to traverse both the liquid and solid parts of the interior. The other type of body wave is slower. Called secondary waves (S-wave), these waves shear material sideways as they travel and therefore cannot propagate through the liquid zones of the earth's depths. The other major type of wave moves across the surface of the planet and also comes in two types, the Love wave and the Rayleigh wave. Basic models and equations were developed to recognize and analyze the travel times each of these waves took—beginning at the source where rocks fracture in the earth's crust—to arrive at various recording seismographs spread over the surface of the planet. By triangulation of the times that a specific wave arrived at several stations, a given event could be located within the earth. By comparing discrepancies between the times the various types of characteristic waves were predicted to—and then actually did—arrive at a given station, scientists gleaned a three-dimensional model of the earth's interior (Figure 6.3), and computer power has further enhanced the process, refining the approach now called seismic tomography. 
For instance, shear waves will not propagate through liquid, but primary body waves will; waves of any type pass more quickly through a colder region than a hotter one; and bulk mineral material adopts patterns of crystal alignment in its atoms that can enhance or retard the flow of waves. "These are just a few examples," said Lay (1992, p. 172), "of the ways in which seismological models have provided boundary conditions or direct constraints on models of planetary composition and physical state.'' |
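Because P-waves outrun S-waves, the lag between their arrivals at a station grows with distance from the source, and distances from three or more stations pin down a location. The sketch below illustrates that idea in a deliberately simplified form: a flat map, a source at the surface, and uniform wave speeds of 6 and 3.5 kilometers per second, all of which are assumptions made for illustration rather than values from the text. The brute-force grid search stands in for the more refined travel-time methods seismologists actually use.

```python
import math

VP_KM_S = 6.0   # assumed uniform P-wave speed (illustrative)
VS_KM_S = 3.5   # assumed uniform S-wave speed (illustrative)


def distance_from_sp_lag(sp_lag_s, vp=VP_KM_S, vs=VS_KM_S):
    """Distance at which the S-wave trails the P-wave by sp_lag_s seconds.

    From d/vs - d/vp = lag it follows that d = lag * vp * vs / (vp - vs).
    """
    return sp_lag_s * vp * vs / (vp - vs)


def locate_epicenter(stations, distances_km, step_km=1.0, extent_km=100.0):
    """Grid search for the map point whose distances to the stations best
    match the estimated distances (least squares)."""
    best_point, best_misfit = None, math.inf
    n = int(extent_km / step_km)
    for ix in range(-n, n + 1):
        for iy in range(-n, n + 1):
            x, y = ix * step_km, iy * step_km
            misfit = sum(
                (math.hypot(x - sx, y - sy) - d) ** 2
                for (sx, sy), d in zip(stations, distances_km)
            )
            if misfit < best_misfit:
                best_point, best_misfit = (x, y), misfit
    return best_point


# Three hypothetical stations (km coordinates) and a made-up source.
stations = [(0.0, 0.0), (100.0, 0.0), (0.0, 100.0)]
source = (30.0, -20.0)
lags = [
    math.hypot(source[0] - sx, source[1] - sy) * (1 / VS_KM_S - 1 / VP_KM_S)
    for sx, sy in stations
]
distances = [distance_from_sp_lag(lag) for lag in lags]
print(locate_epicenter(stations, distances))
```

A real location also solves for depth and origin time and uses layered velocity models; the mismatches left over after such fits are the raw material of the tomography described in the box.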

FIGURE 6.3 Paths of earthquake waves through the earth's mantle from earthquake sources at F to seismographs at the surface. All the seismic paths pass through the shaded region and provide a tomographic scan of it. (From Earthquakes by Bruce A. Bolt. Copyright © 1988 by W.H. Freeman and Company. Reprinted with permission.)

reached, one can predict when this will occur—what they called time-predictable behavior. Conversely, if the block always springs back to the same point, the result is slip predictable, though it can't be said when (over the course of exerting the force by pulling the spring) this will happen. In terms of prediction, says Ellsworth dryly, the slip-predictable model is very pessimistic. On the other hand, as geophysicists continue to improve their ability to actually measure the tectonic stresses that build up in fault zones, the time-predictable model could one day lead to a more precise means of forecasting.
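The difference between the two behaviors is easier to see with numbers. The following sketch is a toy rendering of the distinction, not the published formulation: stress builds at a constant rate, and a random number stands in for whatever varies from one event to the next. In the time-predictable case the failure level is fixed and the size of the stress drop varies; in the slip-predictable case the stress always falls back to the same floor and the failure level varies. The ranges and rates are arbitrary.

```python
import random


def time_predictable(n_events, load_rate=1.0, failure_level=10.0, seed=0):
    """Failure always occurs at the same stress; the drop varies.
    The wait until the next event equals the last drop / load_rate."""
    rng = random.Random(seed)
    t, stress, history = 0.0, 0.0, []
    for _ in range(n_events):
        t += (failure_level - stress) / load_rate   # reload to the fixed level
        drop = rng.uniform(2.0, 8.0)                # unpredictable size
        stress = failure_level - drop
        history.append((t, drop))
    return history


def slip_predictable(n_events, load_rate=1.0, floor=0.0, seed=0):
    """Stress always drops back to the same floor; the failure level varies.
    The drop equals load_rate * time since the last event, but the timing
    itself cannot be foreseen."""
    rng = random.Random(seed)
    t, history = 0.0, []
    for _ in range(n_events):
        failure_level = floor + rng.uniform(2.0, 8.0)  # unpredictable strength
        t += (failure_level - floor) / load_rate
        history.append((t, failure_level - floor))
    return history


tp = time_predictable(5)
for (t0, drop0), (t1, _) in zip(tp, tp[1:]):
    print(f"drop {drop0:.2f} at t={t0:.2f} -> next event after {t1 - t0:.2f}")
```

In the first function the size of the last event fixes the date of the next one; in the second, only the size of an event can be stated once its date is known, which is why Ellsworth calls that model pessimistic for forecasting.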

THE LANGUAGE OF EARTHQUAKE PREDICTION

What is an earthquake prediction? "Any serious prediction," say Agnew and Ellsworth, "must include not only some statement about time of occurrence but also delineate the expected magnitude range [see Box on p. 158] and location." Without these elements, a prediction could not with certainty distinguish between what is being foreseen and what might actually occur, given how much seismicity occurs near plate boundaries. Seismologist and writer Bruce Bolt of the University of California-Berkeley therefore adds a fourth element: "A statement of the odds that an earthquake of the predicted kind would occur by chance alone and without reference to any special evidence" (Bolt, 1988, p. 160). Seismicity means the distribution of earthquakes in space and over time. "We currently record an average of 40 earthquakes per day in Southern California," said Tom Heaton (USGS-Pasadena), "and there is every reason to believe that there are many more that are too small for us to record."
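Bolt's fourth element, the odds of a hit by chance alone, can be illustrated with a simple baseline. The sketch assumes that earthquakes of a given kind arrive at random at a constant average rate (a Poisson process), which is a common null model but is an assumption introduced here, not a method attributed to Bolt. The rates plugged in are for illustration: Heaton's 40 recorded events per day, and a hypothetical rate of one event every 22 years.

```python
import math


def chance_probability(rate_per_unit_time, window):
    """Probability of at least one event in the window if events occur
    at random with a constant average rate (Poisson assumption)."""
    return 1.0 - math.exp(-rate_per_unit_time * window)


# With about 40 recorded events a day, "a quake tomorrow in Southern
# California" is a near certainty and therefore says nothing.
print(f"{chance_probability(40.0, 1.0):.6f}")

# A hypothetical event that recurs on average every 22 years, with a
# prediction window of 10 years.
print(f"{chance_probability(1.0 / 22.0, 10.0):.3f}")
```

A prediction earns credit only to the extent that its stated window, place, and magnitude range make a chance success unlikely under some such baseline.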

The current definitive overview of seismicity in California, edited by geologist Robert Wallace, was published recently by the USGS as Professional Paper 1515. The San Andreas Fault System, California, describes the geological (rocks and their formation), geomorphic (surface features), geophysical (the behavior of matter and forces), geodetic (surveys of the surface), and seismological (waves emanating from earthquakes and explosions in the earth) aspects of this network of faults, which extends along much of the length of California, about 1300 kilometers, and is perhaps 150 to 200 kilometers wide. There is actually an even larger fault system of which the San Andreas is but a part, running up the edge of the Pacific plate from the Gulf of California all the way north to Alaska.

The San Andreas fault was discovered in the 1890s by Andrew Lawson of Berkeley. The northern end of the fault and the zone around it begin about 280 kilometers beyond San Francisco in the Pacific Ocean. It runs south-southeasterly just off the California coast, reaching landfall north of the city, and continues under metropolitan San Francisco, not far from the heart of the city. Continuing to the south it runs parallel to the coast about 60 kilometers inland until reaching the San Gabriel Mountains east of Los Angeles and then curls inland a bit more to the south, perhaps 150 kilometers east of San Diego. From an aerial or satellite photo, Wallace has said, the San Andreas looks like "a linear scar across the landscape," anywhere from several hundred meters to a kilometer in width, an effect of the erosion of rock that has been broken underground and then settled, over the aeons. The San Andreas fault proper is the principal plane where the local horizontal displacements in the earth's crust have occurred. In the zone surrounding this shear plane, geophysicists use such terms as fault branches, splays, strands, and segments to describe details in the fault geometry. Whatever shows at the surface is called the fault trace.

With the fault terrain thus delineated, a legitimate earthquake prediction must locate where on a given fault an event is expected. Most large earthquakes that occur at plate boundaries are categorized as shallow-focus earthquakes, occurring less than 70 kilometers deep. The word focus is usually replaced by the seismologist's term hypocenter, meaning the point where the rupture begins. Projecting the hypocenter directly above to the earth's surface locates an earthquake's epicenter, which is usually what appears on most seismic maps. The much less frequent intermediate-focus earthquakes occur from 70 to 300 kilometers deep, and the fewer still deep-focus earthquakes below that, though none are known to have originated deeper than about 680 kilometers.
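The depth categories and the relation between hypocenter and epicenter translate directly into a small helper. The boundaries are the ones given in the paragraph above; the function names and the sample coordinates are hypothetical.

```python
def classify_focus(depth_km):
    """Label an earthquake by focal depth using the ranges given in the text:
    shallow (< 70 km), intermediate (70 to 300 km), deep (below 300 km)."""
    if depth_km < 0:
        raise ValueError("depth must be non-negative")
    if depth_km < 70:
        return "shallow-focus"
    if depth_km <= 300:
        return "intermediate-focus"
    return "deep-focus"


def epicenter(hypocenter):
    """Project a hypocenter (latitude, longitude, depth_km) straight up
    to the surface by dropping the depth."""
    latitude, longitude, _depth_km = hypocenter
    return (latitude, longitude)


sample = (35.9, -120.4, 10.0)  # made-up coordinates for illustration
print(classify_focus(sample[2]), epicenter(sample))
```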

The deeper earthquakes can be large but are rarely destructive to humans, said Marcia McNutt, a geophysicist at the Massachusetts Institute of Technology who is exploring how the mantle forces act to deform the earth's crustal plates.

The third and most problematic element of an earthquake prediction is time: When will the event of a predicted magnitude occur at a particular fault segment? Though the Japanese have a much larger billion-dollar experiment in place southwest of Tokyo, the most dedicated American effort ever marshaled to answer this question is currently under way at a small town in central California.

STALKING ELUSIVE PATTERNS

The Characteristic Earthquake Hypothesis

If the time-predictable model is to prevail and provide planners with information upon which useful precautions can be based, a discernible pattern should emerge from the sequence of ruptures at a given point on a particular fault. It was their attraction to this assumption that drew three young seismologists to Parkfield, California, in the late 1960s and early 1970s. Al Lindh was an unabashed child of the sixties, a carpenter, garbage route impresario, and itinerant student for years up and down the West Coast, a cultural explorer who eventually got his B.S. in geophysics at UC-Santa Cruz and joined the USGS in 1973. He then began work on his doctorate at Stanford, near his job at the USGS in Menlo Park. His dissertation took him to the small town about midway between L.A. and San Francisco that was the site of a half dozen historical earthquakes of about M 6. More importantly, it was where the San Andreas, running south, appeared to "lock up" again, just south of what is called the creeping section. This is where, simply put, the earth on either side of the fault in this short region seems to be moving freely without accumulating any strain. Lindh showed that the fault took a five-degree turn, which seemed to isolate it from the creeping section and put it back in the line of fire of traditional tectonic accumulating stress.

Lindh then graduated from working essentially alone and planted the seeds for a much grander notion: To establish at Parkfield a focused experiment that would monitor the area for as many signs of impending earthquakes as geophysicists could develop instruments to measure. "A lot of my energy went into talking to people for three or four years. It was plain old science as usual. You write papers, you give seminars, you talk to friends. Some of them believe you, some don't; but they keep hearing the same old story. After a while some of them even decide they want to get involved" (Heppenheimer, 1990, p. 175). One who did was Bill Bakun, another geophysicist whose graduate work focused on Parkfield. In 1979, together with Tom McEvilly (a professor of geophysics at UC-Berkeley), based on the Parkfield sequence, Bakun had sketched out what was to become the cornerstone of the characteristic earthquake hypothesis.

At Parkfield they perceived similarities between the 1934 and 1966 quakes that led them to a more detailed analysis of the four previous quakes on the fault in 1922, 1901, 1881, and 1857. Analysis of historical, geodetic, and seismographic data showed a remarkable similarity in the rupture pattern. They found, said Ellsworth, that it seemed to start at the same place, and seemed to produce the same amount of slip regardless of when it occurred. But when it occurred also interested Bakun and McEvilly, who saw a tantalizing suggestion of pattern to the time sequence as well. They finally selected a statistical model they believed could characterize the sequence of dates, and it produced a result of 22 ± 5 years. If the fault were to rupture again during that period, they were fairly certain it would be a repeat performance of, at least, the six previous ruptures they had studied. If the physical characteristics were close enough to be called "the same," or characteristic, and the time interval also fit the pattern they had discerned from their statistical modeling, strong claims could be made that this—and perhaps many other—earthquakes were characteristic in all of their major parameters—in sum, a tightly controlled block and spring experiment conducted by nature.
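The six dates quoted above are enough to see where a figure near 22 years comes from. The sketch below computes only the plain average of the intervals between events and their scatter; it does not reproduce the statistical model Bakun and McEvilly selected, and the ± 5 years in their result comes from that model, not from this arithmetic.

```python
from statistics import mean, stdev

# Parkfield rupture years as listed in the text.
PARKFIELD_YEARS = [1857, 1881, 1901, 1922, 1934, 1966]


def intervals(years):
    """Gaps in years between successive events."""
    return [later - earlier for earlier, later in zip(years, years[1:])]


gaps = intervals(PARKFIELD_YEARS)
average_gap = mean(gaps)
scatter = stdev(gaps)  # sample standard deviation of only five intervals
naive_next = PARKFIELD_YEARS[-1] + average_gap

print("intervals:", gaps)
print(f"mean interval: {average_gap:.1f} years, scatter: {scatter:.1f} years")
print(f"naive next event: {naive_next:.1f}")
```

The average of the five intervals is just under 22 years, which puts a naive expectation for the next event close to 1988. With so few intervals, and with gaps as short as 12 and as long as 32 years among them, any such estimate is sensitive to the statistical model chosen.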

Bakun and McEvilly's statistical analysis of the Parkfield events led them to develop a recurrence model with a 95 percent reliability that predicted the quake "should" occur within 5 years (plus or minus) of 1988. That this window has now closed and the Parkfield segment has not yet ruptured casts doubt on Bakun and McEvilly's model, and seismologists have joined a debate about the characteristic earthquake hypothesis. "In fact," said McNutt, "Paul Segall at Stanford has argued from his geodetic research work that the so-called repeated Parkfield earthquakes broke different sections of the fault." Ellsworth points out that his USGS colleagues developed their prediction for the time interval to the next quake with a particular statistical model, a choice influenced by the time series they had to work with. But with only six data points, this model could be "the wrong one," as compared to a different choice that might have been based on a richer sequence of not only those six data points but hundreds of others as well. Looking (and guessing) in the 1980s and not in the year 4980, however, they didn't have hundreds of others but only six. Thus, the Parkfield controversy is somewhat off the point, Ellsworth believes, and the failure of the next Parkfield event to arrive on Bakun and McEvilly's schedule has little, if anything, to do with the characteristic earthquake hypothesis.
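The arithmetic behind the roughly 22-year figure can be sketched from the six Parkfield rupture years named above. This is only a plain average of the interevent times, an assumption made for illustration; it is not the statistical model Bakun and McEvilly actually selected, and the variable names are hypothetical.

```python
from statistics import mean

# Parkfield rupture years as listed in the text.
parkfield_years = [1857, 1881, 1901, 1922, 1934, 1966]

# Interevent times between consecutive ruptures.
intervals = [later - earlier
             for earlier, later in zip(parkfield_years, parkfield_years[1:])]

# Plain average (an illustrative stand-in, NOT the authors' recurrence model).
mean_interval = mean(intervals)

# Project forward from the last rupture, using the +/- 5 years quoted in the text.
expected_year = parkfield_years[-1] + round(mean_interval)
half_width = 5
window = (expected_year - half_width, expected_year + half_width)

print("intervals:", intervals)
print("mean interval:", mean_interval)
print("expected year and window:", expected_year, window)
```

With only five intervals, a single unusual gap (such as the 12 years between 1922 and 1934) shifts the average noticeably, which is the small-sample concern Ellsworth raises.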

Ellsworth emphasizes that the interevent interval assumes its significance only in the "surprising" context "that the Parkfield segment appears to have produced nearly the same earthquake each time it has broken. This was a surprising result, as one might expect that one earthquake so changes the stresses that the next will bear no relation to the former, and certainly won't be identical." What drives geophysicists is their deep interest "in what happens when the fault breaks in a characteristic event, whether or not the stress levels reach the same level each time, what initiates the rupture, and just how the rupture proceeds along the fault." There has been sufficient data gathered about such events, he believes, to state that the characteristic earthquake model "is quite strong for some parts of the San Andreas fault," notwithstanding dissenting opinions about Parkfield. As evidence, he relies less on the Parkfield sequence and the timing of the next rupture there than on the extant record of smaller earthquakes (M 1–5) throughout California, many of which do conform to the model. He believes the question is best attacked by concentrating on those earthquakes that rupture—as can be discerned in a study of their seismograms—in an almost identical pattern underground, even if they are much smaller than Parkfield or other larger magnitude quakes that draw our attention because of the threat they pose.

Though stronger evidence continues to accumulate, it is still possible that these attempts at forecasting and subsequent work may eventually reveal the absence of pattern: Perhaps regularities in earthquakes are not generic but rather indigenous to a particular type of plate boundary, even further to more specific local conditions that have yet to be defined. It may even turn out that there is no such animal as a characteristic earthquake, but rather—to mix scientific metaphors—only an order, with species as rich and diversified as those on the Galapagos. At present, however, the characteristic earthquake idea frames much of the thinking about long-term prediction.

The Parkfield drama thus provides a concentrated lens to view seismology, and several interesting issues come into focus. First, how can scientists expand the empirical data base available for their probability models? While monitoring nature's own ongoing laboratory experiments by attending closely to the details of quakes as they occur, the only other place to look is the past, to recorded history and, with the intriguing new science of paleoseismology, into prehistory as well. Second, as people living in fault zones—and the politicians who represent them—go about their business under the shadow of "The Big One" and other less mythic earthquakes, they want to know what to do. To scientists and the USGS falls the role of providing the best information possible, both to forecast quakes and to try to mitigate their effects. Third, geophysicists are developing other analytical and theoretical ideas (besides the block and spring and characteristic models), laboratory techniques, and monitoring strategies to try to better understand the genesis and mechanics of earthquakes. Finally, though the Parkfield quake didn't erupt in October 1992, another furor of sorts did, shining a spotlight on both the science and sociology of earthquake prediction.

Hitting the Trail into Past Time

As any scientist will readily concede, your conclusions are only as good as your data. And for models as heavily reliant on the particularities of data as are the seismic cycle, elastic rebound, and characteristic earthquake theories, interevent times for a particular fault shine like diamonds in a coal mine. The more precisely known the year of past events, the closer can be the statistical analysis of the spectrum of data. The greater the number of events or data points, the more likely that subpatterns will be expressed and the greater confidence scientists can take in their conclusions. In recurrence models, scientists reflect their subjective judgments about the quality of the data used to produce a particular prediction with a reliability measure (such as A through E for strongly to weakly confident).

A major hurdle faced by those trying to unravel the mystery of earthquakes is the recency of recording devices. Since John Milne began to install his worldwide network of seismographs in 1896, there is less than a century of seismograms to pore over for evidence of repeating earthquakes. The elastic rebound theory predicts that the larger the quake, the longer the time necessary for the fault to reaccumulate that amount of stress; thus, the interevent times for quakes above M 6 are measured in centuries, and not even one full cycle has run since seismology ushered in the era of instrumentation. Ellsworth, Agnew, and many others have extended their research farther back into the preinstrumental historical record to see what settlers had to say about nineteenth-century earthquakes.

By adding history to seismology, the potential time series for the San Andreas system was about doubled but still covered only just a bit more than one cycle for any given fault. McNutt wonders if better archeology of American Indian culture in this region might not provide evidence even farther back. But for now, the written record from the American West provides scant material for historians before the early nineteenth century, and so other scientists returned to the basic science of geology, examining the rocks and strata near the earth's surface for evidence of the history of past earthquakes.

Paleoseismology

The Cenozoic (or present) geological era coincides approximately with the appearance and rise of mammals some 65 million years ago, but geologists searching the record for evidence of prehistoric earthquakes are content for now to rummage around in the Holocene epoch, approximately the last 10,000 years. One of the lead scouts on this expedition into the past is Kerry Sieh, who modestly explains away his first National Science Foundation grant (awarded before he had even begun graduate school at Stanford): "Really, I was just in the right place at the right time." Ellsworth notes that while geologists had been gathering fault slip data since G. K. Gilbert's day, "Kerry really saw what to do with these clues in the geologic record" and made an enormous investment in time and energy on what to many others at the time was little more than a scientific hunch. Now, 20 years later, a significant body of crucial prehistoric data has been developed by Sieh (supported early on by his mentor Richard Jahns, the late dean of the School of Earth Sciences at Stanford) and others following his lead into the new subfield of paleoseismology, which he defines as "the recognition and characterization of past earthquakes from evidence in the geological record."

A paleoseismic exploration begins by excavating the earth at the fault trace to create a large pit and reveal the fault broadside, thereby unveiling a mural deep enough to look back centuries in time. Ancient organic remains exposed in the mural could be dated to establish when the layer in which they were embedded was deposited. What Sieh typically saw in such cutaway views was remarkably similar to another earthquake signature seen at the surface of the earth—offsets. Imagine a creek or river running across a fault: When the fault moves suddenly and produces an earthquake, the opposite shore banks are displaced up to several meters, moving the creek bed too, and producing a zigzag pattern through time of the course of the water. Seen from the air, even to the untrained eye, the San Andreas reveals hundreds of such offsets of streams and other obvious surface features. Similar offsets in Sieh's rocky mural provided analogous intimations of plate movement, and read like chapters in the history of a particular fault, a story written by the earth and translated by geologists. Ellsworth provides a kind of synopsis of this story: "Some earthquakes and some faults are not totally random in their behavior, but rather have a history, which we are learning to read."

Sieh's first major discoveries were at an area known as Pallett Creek, a tiny stream flowing down from the mountains into the southern Mojave Desert, east of Palmdale, near the town of Pearblossom. When he saw from aerial photos that the mountain stream seemed to jog in an offset of about 130 meters at the fault, he believed he had found a stream older than the Holocene and that he might unveil 10 millennia or more worth of earthquake data. But the radiocarbon analysis (see Box on p. 171) of peat just below the streambed indicated it was only 2000 years old. Sieh was incredulous that the large offset could have developed in that short a time. He eventually discovered that the stream had been redirected not by a fault but by a natural rise in the land. When he finally revealed the trace and completed a painstaking examination of hundreds of square meters of stratigraphic layers of soil and peat, he began to refine a methodology using radiocarbon dating and other lines of analysis that could date indicative offsets and formations known as sandblows to within a couple of decades.
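The radiocarbon age of a sample such as the Pallett Creek peat follows from the exponential decay law. The sketch below is a minimal illustration using the 5730-year half-life of carbon-14; it ignores the calibration corrections that laboratory dating applies, and the function names are hypothetical.

```python
import math

C14_HALF_LIFE_YEARS = 5730.0  # half-life of carbon-14


def fraction_remaining(age_years, half_life=C14_HALF_LIFE_YEARS):
    """Fraction of the original C14 still present after age_years."""
    return 0.5 ** (age_years / half_life)


def age_from_fraction(fraction, half_life=C14_HALF_LIFE_YEARS):
    """Uncalibrated age implied by the surviving fraction of C14."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    return -half_life * math.log2(fraction)


# Peat about 2000 years old should retain most of its original C14.
peat_fraction = fraction_remaining(2000)
print(f"fraction of C14 left after 2000 years: {peat_fraction:.3f}")
print(f"age recovered from that fraction: {age_from_fraction(peat_fraction):.0f} years")
```

Because so much of the isotope survives after two millennia, small measurement errors in the surviving fraction translate into decades of uncertainty in the age, which is why improvements in laboratory precision matter for dating individual earthquakes.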

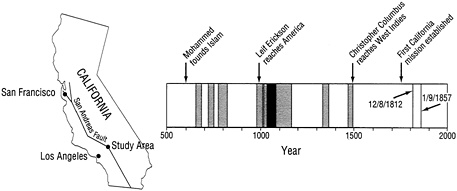

To Sieh's ever-developing eye, the mural at Pallett Creek began to reveal a sequence of a dozen earthquake events repeating here at the edge of a continent, half a world away from anyone who could provide historical verification. As he put it, "Geology was running concurrently with European history." Sieh has a keen sense of the paradox of specifying events in these relatively piddling pieces of historical time, while working in the field of geology where eras are routinely scored in hundreds of millions of years. "This fascinated me; I love working with geology that I can put in a human context. It's tough having a sense of time when you're talking billions of years" (Heppenheimer, 1990, p. 87). In Sieh's catalog the recent geological history of the region near Pallett Creek came alive, as earthquakes were indicated in the years 260, 350, 590, 735, 845, 935, 1015, 1080, 1350, 1550, 1720, 1812, and 1857. (See Figure 6.4 for a history of earthquakes on the San Andreas fault.) There had been thirteen significant earthquakes on a fault that, though it had not broken for well over a century, now lay within 55 kilometers of Los Angeles, the second-largest city in the country. Add the 145-year average interval to the most recent occurrence, and the significance of Sieh's work to millions of Southern Californians is clear. Such a dramatic impact happens infrequently in the steady incremental progress of daily science, and Pallett Creek has come to be known as "the Rosetta stone of paleoseismology."

BOX: Radiometric Dating in Paleoseismology

Much of the earth's thermal energy comes from radioactive isotopes created during its formation some 4.5 billion years ago. Potassium, thorium, and two species of uranium are the primary elements, heavily concentrated in the earth's crust, where 40 percent of the heat flow experienced at the surface comes from. Radioactive is synonymous with unstable. As these elements lose electrons, they decay into what are called daughter products, stable elements such as argon, strontium, and lead but versions of these that can be identified as descendants of each radioisotope. Radiometric dating relies on the discovery that each of these elements takes a specific and known time for half of the atoms in any particular collection to decay, a period known as the half-life. Because the half-lives of these elements are in the millions and billions of years, they provide a time scale congruent with the history of the planet.

Carbon-14 (C14), on the other hand, has a half-life of only 5730 years, and thus radiocarbon dating is better suited (and limited) to about 80,000 years. This radioactive isotope (created by cosmic-ray collisions with carbon dioxide molecules in the upper atmosphere) comprises a known portion of the carbon that is continually being incorporated into organic matter on Earth. C14 begins to decay when its host dies, and thus the same calculation (of the present volume of atoms versus the original) allows the fossil to be dated.

Kerry Sieh of Caltech, following the lead of Stanford's late Richard Jahns, was a trailblazer with his work at Pallett Creek (known as the Rosetta Stone of paleoseismology) and other California fault sites. By cutting away a trench and revealing a fault broadside, he was able to construct what is called a stratigraphic map of the history of that fault's ruptures. By concentrating on the peat buried at various strata, he was able to develop dates for 12 Pallett Creek earthquakes going back to the third century. His original study is reported in the text. In 1988, with colleagues Minze Stuiver from the Quaternary Research Center at the University of Washington, and David Brillinger from UC-Berkeley, he reexamined the original samples (Sieh et al., 1989). Advances in the technique at Stuiver's lab reduced the error range of 50 to 100 years down to 12 to 20. Redating the most recent 10 quakes recalculated the average interval to be 132 years, which was precisely the amount of time that had elapsed since the last quake in 1857.
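The spread of the Pallett Creek interevent times can be examined directly from the dates listed in the text. The sketch below is illustrative only: it takes those dates at face value, with hypothetical variable names, and its simple mean need not reproduce the 145-year or 132-year averages quoted, which depend on which events are counted and how they were dated.

```python
from statistics import mean

# Earthquake years at Pallett Creek as listed in the text.
pallett_creek_years = [260, 350, 590, 735, 845, 935, 1015, 1080,
                       1350, 1550, 1720, 1812, 1857]

# Interevent times between consecutive listed events.
intervals = [later - earlier
             for earlier, later in zip(pallett_creek_years, pallett_creek_years[1:])]

shortest, longest = min(intervals), max(intervals)
listed_mean = mean(intervals)  # mean of the dates as listed, not the published figure

# Project the two published average intervals forward from the last event.
last_event = pallett_creek_years[-1]
projected = {avg: last_event + avg for avg in (145, 132)}

print("intervals:", intervals)
print("shortest and longest:", shortest, longest)
print("mean of listed intervals:", round(listed_mean, 1))
print("projected years:", projected)
```

The wide gap between the shortest and longest intervals is a reminder that an average interval summarizes an irregular sequence, so a date projected from it marks a rough window rather than a deadline.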

Slipping and a "Slidin"

FIGURE 6.4 History of earthquake occurrence on the San Andreas fault extended 1200 years beyond written records by geological investigations. Detailed geological studies augment the relatively short historical and instrumental records of U.S. earthquake activity and yield critical information on the timing and character of prehistoric earthquakes. At a study site on the San Andreas fault northeast of Los Angeles, earthquakes have occurred in clusters of two or three events spanning several decades separated by dormant periods of two or three centuries. Precision radiocarbon dating of buried peat horizons in a faulted sequence of marsh and stream deposits has yielded age ranges (shaded bars) for eight earthquakes prior to the 1812 and 1857 shocks, which are documented by historical accounts. The observed pattern of earthquake clustering was not apparent in earlier studies, which used less precise, conventional radiocarbon dating techniques. Knowledge of long-term patterns of earthquake occurrence is needed for probabilistic forecasting of future earthquake activity. (Reprinted from USGS, 1992, p. 26.)

When Sieh first began these excavations in the late 1970s, plate tectonics had become a useful and accepted set of ideas, and the basic rate at which the Pacific and North American plates were sliding past each other had been established at about 5.6 centimeters per year for at least the last 3 million years. Given this underlying relative movement of the entire plate system, Sieh could use the elastic rebound model to provide an earthquake catalog extending deep into the prehistoric past. The calculation begins with the assumption that, if the crust were totally rigid and moving at the plate rate (which has since been revised downward to 4.8 centimeters per year), the Pacific plate would move northwestward at a steady rate, relative to the southeasterly sliding North American plate (so that in less than 40 million years San Diego would become a suburb of Seattle).
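The San Diego to Seattle aside is easy to check with the plate rates quoted above. In this sketch the 1,700-kilometer separation is an assumed round figure for the straight-line distance between the two cities, not a value from the text, and the function name is hypothetical.

```python
CM_PER_KM = 100_000


def years_to_travel(distance_km, rate_cm_per_year):
    """Years needed to cover distance_km at a steady rate in centimeters per year."""
    return distance_km * CM_PER_KM / rate_cm_per_year


# ASSUMPTION: approximate straight-line distance between San Diego and Seattle.
ASSUMED_DISTANCE_KM = 1_700

# The earlier 5.6 cm/yr estimate and the revised 4.8 cm/yr plate rate from the text.
for rate in (5.6, 4.8):
    millions = years_to_travel(ASSUMED_DISTANCE_KM, rate) / 1e6
    print(f"{rate} cm/yr -> about {millions:.1f} million years")
```

Under that assumed distance, both rates give a travel time comfortably under the 40 million years mentioned in the text.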

Because the fault is a frictional surface, however, Ellsworth points out that sections of the fault, identified as asperities, "lock up" and the elastic crust accumulates and stores this movement in the form of strain and potential energy, breaking only when the fault's frictional resistance is overcome. Though geophysicists with increasingly powerful laboratory instruments and theoretical models have for decades been trying to specify exactly what these conditions might be, long-term forecasting still rests largely on the premise that—whatever they are, at least according to the characteristic earthquake hypothesis—they are consistent from one occurrence to the next. Thus, the plate rate provides a way to quantify the strain accumulated over time. Over vast time periods the crust must catch up with the lithospheric "raft" below (of which it is a constituent part; not to be confused with the asthenospheric boundary where the entire lithospheric plate can slide more or less freely) and thus, at any particular period, it is more or less behind. When it gets so far behind that fracture finally occurs, an earthquake is the likely result, and—at least in transform fault zones like the one where the Pacific and North American plates meet in California—measurable offsets will be carved into the earth.

Take one specific fault strand, such as Pallett Creek, and a stratigraphic map will reveal a history of such offsets for many centuries. One first measures the offset distances and (knowing the age of each offset) can then correlate them with the time it took for the plates to separate sufficiently to cause the earthquake that produced that offset. The method works best with major earthquakes, where it is presumed that all of the accumulated strain was released (following Reid's concept of elastic rebound) and the equation reverts back to zero. In fact, Reid recommended 80 years ago that more systematic measurements be taken near the San Francisco rupture, but it would be decades before this was done. Seismologists use this slip rate feature of plates as a baseline for their time-predictable models.
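The time-predictable idea described above reduces to dividing the slip released in one earthquake by the long-term loading rate. The sketch below assumes complete strain release and a constant rate; the slip values are hypothetical, chosen only for illustration, and the rate is the 4.8 centimeters per year plate rate mentioned earlier, which an individual fault need not share.

```python
def recurrence_years(slip_m, rate_cm_per_year):
    """Years needed to reaccumulate slip_m of offset at a steady loading rate."""
    if rate_cm_per_year <= 0:
        raise ValueError("rate must be positive")
    return slip_m * 100.0 / rate_cm_per_year


PLATE_RATE_CM_PER_YEAR = 4.8  # revised plate rate quoted in the text

# HYPOTHETICAL slip amounts, in meters, for illustration only.
for slip_m in (2.0, 4.0, 6.0):
    years = recurrence_years(slip_m, PLATE_RATE_CM_PER_YEAR)
    print(f"{slip_m} m of slip -> roughly {years:.0f} years to reload")
```

The larger the slip in one event, the longer the expected wait for the next, which is the relationship the elastic rebound theory predicts.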

Another project of Sieh's illustrates the significance of this basic plate rate to forecasting earthquakes for individual faults. Using Wallace's aerial photos of a different unnamed stream that flowed out of the Temblor Range and onto the Carrizo Plain, Sieh began by examining the offsets where the San Andreas ran directly across what he soon christened as Wallace Creek. Rather than a series of offsets, Wallace Creek at the surface showed one major zigzag of 128 meters and another larger one of 475 meters that C14 dated at 13,250 years. After excavating many trenches over a number of years, Sieh finally proved that the smaller offset was a measurement of the slip along the fault that had accumulated since a major mudslide about 3700 years ago. To within the limits of error, the slip rate for this and the much larger offset, after Sieh had sifted through many confounding factors, concurred at about 3.4 centimeters a year, which also matched the rate determined at Pallett Creek and elsewhere.
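The Wallace Creek slip rate follows from dividing each offset by its age. The sketch below uses only the offsets and ages given in the text and compares the result with the 4.8 centimeters per year plate rate; it applies none of the corrections for confounding factors that Sieh worked through, and the names are hypothetical.

```python
def slip_rate_cm_per_year(offset_m, age_years):
    """Average slip rate implied by an offset accumulated over age_years."""
    return offset_m * 100.0 / age_years


PLATE_RATE_CM_PER_YEAR = 4.8  # revised plate rate quoted in the text

# (offset in meters, age in years) for the two Wallace Creek offsets in the text.
wallace_creek_offsets = {"smaller": (128, 3700), "larger": (475, 13250)}

rates = {name: slip_rate_cm_per_year(offset, age)
         for name, (offset, age) in wallace_creek_offsets.items()}

for name, rate in rates.items():
    share = rate / PLATE_RATE_CM_PER_YEAR
    print(f"{name} offset: {rate:.2f} cm/yr, {share:.0%} of the plate rate")
```

Both raw quotients land near 3.5 centimeters per year, close to the 3.4 figure reported in the text, and both fall well short of the full plate rate.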

When the slip rates for other faults were determined, few matched the underlying annual slip rate of 4.8 centimeters per year for the Pacific plate itself, and thus other major fault zones are known to be accumulating some of the stress. This piece of data permitted slip rate calculations, based on offset distance and interevent time, to be compared throughout the San Andreas fault zone. As already discussed, one particular region of the fault in Central California, just north of Parkfield, does actually appear to be slipping steadily—a phenomenon known as creep—at the full rate for the San Andreas fault. This creeping section would seem to militate against anything like a superquake that could tear through all of California.

Sieh's paleoseismic studies and a study of the history of California seismicity by Ellsworth both indicated that a major event was due in Southern California, but in June 1992, said Heaton, the rupture at Landers "was a complete surprise, which cut across all known fault lines." With a seismic force of M 7.5, it was the largest California quake in 40 years and—after the 1906 San Francisco quake—the third-largest in this century. A primary question immediately arose: Did Landers make Los Angeles and other Southern California cities a safer place to live? The persistence of "The Big One" mythology was prominent in the California media in the wake of Landers, as scientists were asked repeatedly not only whether this was it, but also whether the Mojave shear zone, which contained the source of the Landers quake, was now accommodating more of the movement between the North American and Pacific plates than previously believed. The simple answer to both questions was no, but simple answers do not really convey the complexity and the extent of seismological wisdom. A working group of seismologists was convened to develop and publish information about Southern California, post-Landers. Sieh and Agnew were two of the dozen members, which included as cochair Tom Heaton.

What the group identified as the Landers sequence illustrates the puzzle mosaic nature of earthquake science, for each major event holds the promise not only of pushing probability data farther toward certainty but also of revealing subterranean connections not readily apparent. In retrospect, it is clear that Landers culminated a sequence of events in the Mojave zone, which includes the 1975 Galway (M 5.2), 1979 Homestead Valley (M 5.6), 1986 North Palm Springs (M 6.0), and 1992 Joshua Tree (M 6.1) quakes. It can be seen as the main shock of that sequence, because of its size (M 7.5), and it is believed that stress on this fault has been significantly diminished. Moreover, not 3 1/2 hours after Landers, a major quake occurred nearby at Big Bear, measuring M 6.5. The group decided that the Big Bear quake was an aftershock of the Landers earthquake because it was within one rupture length of the Landers main shock and had a magnitude consistent with the normal distribution of aftershock sizes for an M 7.5 main shock.

In sum, the Landers and Big Bear quakes provided an opportunity to update the overall picture of seismicity in Southern California as of June 1992 and also for the working group to reinforce the bottom line of all of the comparative statistics, of which seismologists have been aware for over a decade: "Portions of the southern San Andreas fault appear ready for failure; where data are available [such as Sieh's Pallett Creek studies], the time elapsed since the last large earthquake exceeds the long-term recurrence interval" (Ad Hoc Working Group, 1992, p. 1). Long-term forecasting revolves around the seismic cycle, and "since 1985, earthquakes have occurred at a higher rate than for the preceding four decades," the group emphasized, citing a rate 1.7 times greater for M 5 and larger quakes and 3.6 times greater for M 6 and larger earthquakes.

While quakes occur and energy is released on distinct fault segments, it is believed that stresses become redistributed throughout the fault zone. On the affected segment, strain will probably be diminished, but on nearby segments and faults it might well be increased. The group decided that the Landers earthquake increased the stress toward the failure limit on parts of the southern San Andreas fault, in particular on the San Bernardino Mountains segment. Contrary to some popular reports, Landers and Big Bear do not likely represent a major underground shift of stress accumulation and release to the Mojave zone. Thus, the group confirmed that over 70 percent of the effects of plate motion due to the 4.8 centimeters per year of slip between the plates is straining the southern San Andreas, an area where millions of people could be affected by a major quake.

Reading the Historical Record

Distributed stress from the Landers sequence aside, it is still crucial to look at the long-term history of large quakes in Southern California. Sieh has recently taken another route into the prehistoric record, which, though not quite as far reaching as trenching studies, seems to provide much more accurate dates: Tree rings. As with the development of stratigraphic mapping, the procedure didn't just fall out of the laboratory fully ready to be used, though botanists have known about tree rings as a measure of age for centuries. With each new spring a tree begins a new ring and thus develops a putative birth certificate. When Sieh realized that many trees shaken by prehistoric earthquakes had been damaged but not destroyed, he began to look to their tree rings to determine the precise year of the earthquakes. Whether it be damage to the tree's root system by fault rupture underfoot or to its overall health by a lightning strike, flood, or some other environmental catastrophe, exactly when a particular growth ring was affected could pinpoint an event to within several months. By looking at patterns of nearby and somewhat farther removed trees, he was able to distinguish between these various types of ancient environmental phenomena, largely based on where they stood relative to the fault and to one another. This culminated in yet another method for dating earthquakes, which could take advantage of California trees up to 500 years old.

Working with tree ring expert Gordon Jacoby from Columbia University, Sieh realized that the meager information about the December 8, 1812 earthquake came from the Spanish missions that were near the Southern California coast, and a doubt dogged him because he realized that the intensities out toward the San Andreas could have been much higher than those recorded at the missions. One night over dinner he and Jacoby were discussing the tree ring record in the context of their search for corroborating evidence of a quake on the San Andreas in the early 1700s. With his misgivings about the interpretation of the mission evidence in mind, he asked Jacoby about tree ring data after about 1810 and was told that no count had been made beyond 1812 because the rings were too close together to distinguish. "Aha," the two said in metaphoric unison, which Ellsworth thinks is "a rather good example of how science is done." Many trees, once the scientists knew where and what to look for, indicated a quake at the end of the 1812 growth season.

It could have been the earthquake known to have been centered near Santa Barbara on December 21 of that year, but in an impressive bit of deduction (given the time frame of just a couple of weeks nearly two centuries in the past) Sieh rejected that possibility, accounted for all the known data, and concluded that "there's no reason why it's not perfectly consistent with a great quake on the San Andreas" on December 8. The significance of this possibility, like the work at Wallace and Pallett creeks, is what it says about historical seismicity in Southern California and the fate of Los Angeles and other major urban centers.

With his work on the 1812 quake, Sieh had moved into a more recent area of inquiry, using nineteenth-century historical records. Working in this area of preinstrumental seismicity, scientists are limited to intricate schemes of inference and induction in order to quantify an earthquake (see Box on p. 158). Sieh and Agnew developed an isoseismal map in their exhaustive reexamination of the next major historical event in Southern California, the famous Fort Tejon earthquake of January 9, 1857, the importance of which, they point out, has long been recognized.

Their analysis suggested to them that the intensity along the fault in 1857 "must have been IX or more [corresponding on the Modified Mercalli Intensity Scale to "general panic, most masonry heavily damaged or destroyed"—see p. 159] since trees 20 kilometers west of Fort Tejon were overthrown and buildings were destroyed between Fort Tejon and Elizabeth Lake." The then-small Pueblo village that occupied the present site of downtown Los Angeles fared much better, experiencing an intensity of VI or so ["felt by all, weak plaster cracked, dishes broken"]. Thus, they observed, "perhaps the most interesting conclusion that can be drawn from this study is that … were the 1857 earthquake to be repeated today, there would not be extensive damage to low-rise construction in the metropolitan Los Angeles area, [though] the evidence does suggest that there would be substantial damage to structures along the fault."

Add Sieh's interpretation of the 1812 event to this clarification of 1857, and a number of questions must be faced by the city fathers of Los Angeles. Depending on which fault ruptured in 1812, did the two major quakes in less than 45 years relieve so much strain on one fault that it may yet be many more decades before it will reaccumulate? Or were those quakes on different but nearby faults, forming a kind of "pair" sequence that could arguably be seen in San Francisco, where a major quake preceded the 1906 event by only 68 years? If so, "The Big One" could actually become "The Big Two" and strike twice during the lifetimes of many who will be fairly young for the first. Or does the Pallett Creek sequence, based as it is on an impressive time series of events, suggest that the city is right now in the bull's-eye of the probability window? Many such questions can be better posed as a result of interpreting the historical earthquake catalog in the context of the elastic rebound theory, especially when slip measurements of the entire San Andreas system are added to the mix.

The most exhaustive catalog of California earthquakes to date—incorporating the earlier compilations and analyses of many others—was provided by Ellsworth and appears in Wallace's San Andreas overview. Ellsworth regards Sieh's work at Pallett Creek as seminal and the contribution of other paleoseismologists and historians as invaluable. Because their studies are primarily limited to earthquakes of large magnitudes, which have interevent times of many decades, even centuries, scientists have no choice but to invent complementary ways, like paleoseismology, to look back in time, the farther and more accurately the better. But Ellsworth's conviction about the importance of testing the characteristic earthquake hypothesis has led him to focus on the more numerous smaller-magnitude earthquakes that can be studied in the seismographic record. If you limit yourself to the bigger earthquakes, you're going to be data limited. Go in the other direction and look at smaller quakes, he suggests, and you will find yourself on a firm observational base with data on many earthquakes as small as M 1 from which to draw conclusions. "It's really almost an experimental machine," he marvels, "because the earth is actually turning the experiments out for you. The natural laboratory allows you to look at the cycle for small earthquakes many times within your record."

Predictability and Politics

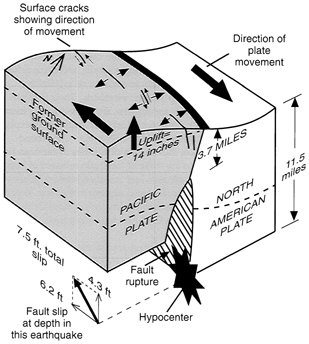

"In the late afternoon of 17 October 1989, as the eyes of America turned toward Game 3 of the World Series at Candlestick Park, the largest earthquake in northern California since the great earthquake of 1906 struck the San Francisco Bay Area," wrote the USGS in Science. The television picture suddenly began to tremble and the people on camera demonstrated that unholy moment of fear [though sports commentator and San Franciscan Al Michaels was later commended for an uncommon sense of control and awareness] when an earthquake begins to rumble beneath your feet. The nearby southern segment of a fault running just east of Santa Cruz under the Santa Cruz Mountains had broken on a steeply dipping fault plane at a point 17 kilometers beneath the surface, a rupture that lasted for 7 to 10 seconds, and slipped for a couple of meters deep underground (see Figure 6.5). The fault slip itself did not reach the surface to produce a trace, but surface waves achieved a magnitude of 7.1. As reported by the USGS, the ground shaking collapsed sections of the Bay Bridge and Interstate 880 (in Oakland); began fires in San Francisco's Marina district; ultimately caused 62 deaths and 3757 injuries; destroyed 963 homes; damaged another 18,000, leaving 12,000 temporarily homeless; and ultimately will have cost some $10 billion. These statistics rate it as one of America's most serious natural disasters. As such, it raises questions about how long-term forecasting fits into the American political infrastructure, since in the context of work done by Ellsworth, Agnew, Sieh, and many others, the USGS in the Science article classified Loma Prieta as "an anticipated event."

FIGURE 6.5 Schematic diagram showing inferred motion on the San Andreas fault during the Loma Prieta earthquake. Along the southern Santa Cruz Mountains segment of the fault, the Pacific and North American plates meet along an inclined plane that dips approximately 70 degrees southwest. Plate motion is mostly accommodated by about 6.2 feet of slip along a strike of this plane and by 4.3 feet of reverse slip, in which the Pacific plate moves up the fault and overrides the North American plate. The amounts of fault slip and vertical surface deformation were determined from geodetic data. (Modified from a figure by M. J. Rymer. Reprinted from USGS, 1989, p. 6.)

Californians have felt thousands of earthquakes over the decades,

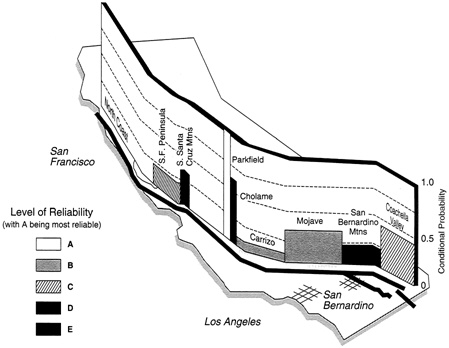

and so it was natural that the politics as well as the science of prediction should center there. The latest intersection is the Working Group on California Earthquake Probabilities, organized by the National Earthquake Prediction Evaluation Council (NEPEC) under the auspices of the USGS. The group includes representatives from the USGS, academia, and private industry. Ellsworth, his colleague Lindh, and Chris Scholz (geophysicist from Columbia University and author of a definitive current text) are three of the group's dozen members. Their first two reports bracketed the Loma Prieta earthquake, and, based on the former report (Working Group, 1988), the latter (Working Group, 1990) explains why the USGS was able to "anticipate" the 1989 event. The second report establishes and discusses the future probabilities of earthquakes in the San Francisco Bay region in the wake of the strain released at Loma Prieta. In the first report, seismologists had calculated the probability that each of California's major faults would rupture in the next 30 years, expressed as a fraction. The highest probability in Northern California was assigned to the southern Santa Cruz Mountains segment, .3 (see Figure 6.6).

To the scientist familiar with statistical procedures and the Poissonian model (which was the reference case), such numbers are very compelling. To those without this perspective, however, it may seem that a .3 probability of an event occurring can provide a false sense of security based on the 7 in 10 chance the event will not occur in the next 30 years. As Ellsworth says, your chances of experiencing a quake on a given day are microscopically small, though it will be a day, if you survive it, you will never forget; what he calls a low-probability / high-impact event. To illustrate, he suggests you think about the odds of being injured driving your car, compared to your chances of being hurt in the quake, even assuming it will arrive. If you stay put and ride out the quake, your chances of injury are less than if you took your family for an all-day drive in the car. Thus, he concludes, "the appropriate response to such a low-probability / high-risk situation is not always obvious."
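The arithmetic behind Ellsworth's point can be sketched under the memoryless Poisson reference case mentioned above. This is only an illustration: the Working Group's own figures are conditional probabilities, and the function names below are hypothetical. The sketch converts a 30-year probability of .3 into an equivalent constant yearly rate, then asks how likely a rupture is on any single day or within any 2-year span.

```python
import math

def poisson_rate_from_probability(prob, window_years):
    """Constant yearly rate for which P(at least one event in the window) = prob."""
    return -math.log(1.0 - prob) / window_years

def probability_in_window(rate_per_year, window_years):
    """P(at least one event) in a window of the given length, Poisson model."""
    return 1.0 - math.exp(-rate_per_year * window_years)

# 30-year probability quoted in the text for the southern Santa Cruz Mountains segment
rate = poisson_rate_from_probability(0.3, 30.0)

p_day = probability_in_window(rate, 1.0 / 365.25)   # any single day
p_two_years = probability_in_window(rate, 2.0)      # any 2-year span

print(f"equivalent rate: {rate:.4f} events per year")
print(f"single-day probability: {p_day:.2e}")
print(f"2-year probability: {p_two_years:.3f}")
```

Under these assumptions the single-day chance is on the order of a few in 100,000 and the 2-year chance is a little over 2 percent, which is one way to see why a .3 figure can feel reassuring day to day while still describing a serious long-term hazard.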

While scientists are able to vest increasing confidence in their long-term forecasts, the very nature of probability estimates will probably remain somewhat inscrutable to the general public. Those accustomed to taking their science in predigested and denatured journalistic teaspoons are not likely to develop a sudden appreciation for the subtleties of uncertainty inherent in this sort of scientific exercise, especially when their lives and possessions seem to be threatened. Thus, emphasized Ellsworth, coming to a consensus for such reports is critical "when carrying uncertain knowledge into the public arena."

FIGURE 6.6 Conditional probabilities of the occurrence of major earthquakes on the San Andreas, Imperial, San Jacinto, and Hayward fault systems for the 30-year period from 1988 to 2018. (Reprinted from USGS, 1988, p. 9.)

Rather than try to unveil what may be seen as the esoteric mysteries of such statistical phenomena, recent experience suggests it is better to look broadly at the results. Using the current models, the consensus 30-year probability of a rupture for the Santa Cruz segment was .3. (Lindh in particular had focused on this segment and put the probability much higher, between .47 and .83 for an M 6.5 event.) Yet the segment ruptured in less than 2 years, many people were killed, and few precautionary steps had been undertaken. Thus, when a 30-year probability estimate for a given segment is above .2, the question probably should not be "How soon will the quake actually arrive?" (with its accompanying acrimonious debate among scientists and consequent misinformation disseminated in the press) but rather, since it is highly likely that it will arrive sometime in the humanly foreseeable future, "What are we going to do about it?"

This dual perspective poses a dilemma that weighs heavily on most of the scientists involved in earthquake prediction. As Ellsworth put it: "In this field we find ourselves trying to maintain a delicate equilibrium between research at the frontiers of our understanding of the phenomenon and its application to public policy." The USGS, after completing the Working Group report for 1990, decided to confront the threat of misinformation head on. Rather than leaving the scientific report (properly and documentarily dense, though well structured and clearly written) for the press and public to interpret and summarize for themselves, the USGS produced a full-color, 24-page pamphlet in English, Spanish, Chinese, and braille. When the scientific report was released, 41 newspapers in the region provided the more accessible pamphlet as an insert in their Sunday editions, leading to the distribution of 2.5 million copies. As is only reasonable given the intended readership, the pamphlet emphasized preparedness, but it also included a lucid section titled "Why a Major Earthquake is Highly Likely."

Using clear language and illustrative figures—and absent the tendency of scientific publications to overly qualify and understate important conclusions—the pamphlet would seem to be a major step in dealing with the dilemma seismologists face. Since so much of the important seismological research in America moves through a federal agency, the USGS, the sense of responsibility for the public interest is inherent, especially since refereed journals and the other mechanisms of peer review and scientific method remain in place to monitor validity. Notwithstanding the determination to transmit the most constructive message to the public, however, the dilemma remains. In the real world—until science can predict earthquakes within a much shorter time frame and with much more certainty—politicians and people will continue to act out these uncertainties in socially predictable ways.

GEOPHYSICS IN LABORATORY EARTH

Solar and lunar cycles are predictable. Ever since Copernicus and especially Newton, scientists, by constructing descriptions from observed phenomena and deriving from them the laws of nature and the laws of physics, may be said to have explained these cycles with their theories. As Bolt puts it, "A strong theoretical basis is usually needed to make reliable predictions such as in the prediction of the phases of the moon or the results of a chemical reaction" (Bolt, 1988, p. 159). By contrast, the seismic cycle does not represent a coherent explanation of known phenomena with fully identifiable causes; rather it seems to describe what appear to be categorical time periods. Look at seismicity in a region, on a fault, on a plate boundary, or in historic earthquake catalogs, and a general pattern seems to emerge. But the general pattern that arises from the conjunction of the earthquake cycle (generally accepted) and the elastic rebound theory does not mean that all earthquakes share enough underlying aspects to be encompassed by a descriptive model like the characteristic earthquake hypothesis.

The USGS does not evoke the specter of a monolithic bureaucracy. Ideas and theories bounce around and collide in hallways and through modems like molecules in a confined gas. As an example, Heaton is unpersuaded by the premise that many earthquakes repeat regularly: "It has been extremely difficult to test the characteristic earthquake model." He doesn't challenge the actual preinstrumental data in the nineteenth-century catalogs or the geological evidence Sieh and others have unearthed. But he does believe that, "Unfortunately, many questionable assumptions must be made when interpreting either of these types of data." Citing one example, he points out that, if the Loma Prieta earthquake had been prehistoric, searches such as Sieh's would probably not have found evidence for it since there was no trace close enough to the surface to dig up, the fault having been confined to between 5 and 18 kilometers underground. And while he concedes that the first Working Group report in 1988 "predicted" Loma Prieta in the sense that the Santa Cruz segment was assigned the highest probability of rupture, he says most of his colleagues found the character of the earthquake quite surprising. The fault rupture in 1989 did not duplicate the 1906 event and thus "raised many questions about the validity of the characteristic earthquake model for this stretch of the fault."

Heaton is challenging the very notion of a characteristic earthquake, in part because of the paucity of direct data and the many assumptions and inferences involved. He believes that "it will be many generations before we have collected enough reliable data to observe the repetition of rupture along given fault strands." Even before the October 1992 Parkfield revelations (to be discussed in the final section), Heaton noted that this fault also may have fractured differently at different times in the past. Such dilemmas have "caused much debate within the seismological community. … The Parkfield earthquake is now almost 4 years beyond its mean recurrence interval, giving plenty of time for scientists to raise serious questions about the validity of the Parkfield Prediction Experiment and the overall validity of the characteristic earthquake model." These and other problems have impelled him to take a different tack toward prediction, focusing on the dynamics of the faulting process.

It would be misleading, however, to suggest that the seismological community looking at recurrence models is taking different approaches altogether, since as Ellsworth points out, he and others are actively trying to establish or disprove the characteristic earthquake hypothesis with hard evidence. Ellsworth emphasizes that, "Our goal, whether we study the small earthquakes recorded by modern seismographs, historical earthquakes, or paleo earthquakes, is the same: To understand the underlying physics of the earthquake source."

The Stress Dilemma

A first step in this direction is to confront more directly what Heaton calls "the stress dilemma." Geologists are obviously concerned with the pressure and forces that might prevail at various depths beyond direct measurement. Bars (and their aggregation into thousands, kilobars) provide a convenient scale of measure, since a bar is equivalent to 98.692 percent of the atmospheric pressure at the earth's surface. (More precisely, a bar is a pressure of about 14.5 pounds per square inch, or 10⁶ dynes per square centimeter, where a dyne is the force needed to accelerate 1 gram by 1 centimeter per second each second.) Calculating the apparent force exerted by the overlying rock (whose mass is generally known), scientists tell us that pressure obviously increases with depth. In the 5- to 15-kilometers-deep, shallow-focus zone where most earthquakes commence, a straightforward calculation of pressure yields about 1.5 to 5 kilobars. This is what is known as confining pressure, says Heaton, and it sets a general limit to the earthquake forces seismologists are curious about: "Almost any type of rock at [those] pressures can withstand shear [or tearing] stresses of at least one half of the confining pressure, before it yields. … Faults obviously yield during earthquakes," he reasons, and thus "we expect the shear stress to be on the order of 1 to 2 kilobars," that is, 1000 to 2000 times the atmospheric pressure at the surface. Whence, then, the dilemma?
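The "straightforward calculation" can be sketched as the weight of a rock column per unit area: density times gravitational acceleration times depth. The crustal density of 2800 kilograms per cubic meter is an assumed round value, not one given in the text, and the function name is hypothetical. The only unit fact needed is that 1 bar equals 100,000 pascals.

```python
GRAVITY_M_PER_S2 = 9.81          # standard surface gravity
ROCK_DENSITY_KG_PER_M3 = 2800.0  # assumed average crustal density (not from the text)
PA_PER_BAR = 1.0e5               # 1 bar = 100,000 pascals

def confining_pressure_kbar(depth_km, density=ROCK_DENSITY_KG_PER_M3):
    """Pressure from the weight of overlying rock at a given depth, in kilobars."""
    pressure_pa = density * GRAVITY_M_PER_S2 * depth_km * 1000.0
    return pressure_pa / PA_PER_BAR / 1000.0

for depth_km in (5.0, 15.0):
    confining = confining_pressure_kbar(depth_km)
    # Heaton's rule of thumb: rock withstands shear of at least half the confining pressure
    shear_limit = 0.5 * confining
    print(f"{depth_km:4.0f} km: confining {confining:.2f} kbar, "
          f"shear strength at least {shear_limit:.2f} kbar")
```

With this assumed density the result is roughly 1.4 kilobars at 5 kilometers and 4.1 kilobars at 15 kilometers, in line with the range quoted above, and half of those values brackets Heaton's 1 to 2 kilobars of expected shear stress. A denser assumed rock column would push the numbers somewhat higher.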

The stress released during an earthquake is somewhat hard to actually measure, but a fairly close approximation is possible, and Heaton points out that these stress changes are two full orders of magnitude smaller, typically about 15 bars. "And it's a good thing," he continues, because if you had a full kilobar of stress drop on a fault rupture that was 15 kilometers in diameter, the activity at the surface would be devastating. While seismologists cannot say beyond a first order precisely what an earthquake is, Heaton knows what it is not: "An earthquake is not taking a piece of rock up to its ultimate strength and letting it totally fail." Such a scenario provides an unlikely stage on which the drama of evolution could have been played. The fact that earthquake stress drops appear to be much smaller "is why there is life on Earth. Otherwise, everything would be dead."

Heaton illustrates this prophecy with what physics tells us about a world where the smaller forces (that seem to be observed) were not the operative ones. The fault would slip 100 meters (compared to around 2 meters, for example, at Loma Prieta). The ground itself during this tear would move about 10 meters per second. Heaton hypothesizes: "If your building was strong enough to survive this motion, say a bomb shelter, then certainly you would be killed by a wall running into you at 25 miles an hour. … Nothing would survive the experience. The point is, that although earthquakes seem violent to humans they actually involve stress changes that are small (typically about 15 bars) compared with the confining stress at the depth of earthquakes." The measurements of accumulating strain in the crust over several decades also support this premise, says Heaton: "The rate at which shear strain builds on the San Andreas fault is about 2 × 10⁻⁷ per year, or less than 1 bar of shear stress per decade."
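Heaton's numbers can be checked with a rough sketch under two stated assumptions, neither from the text: a crustal shear modulus of about 3 × 10¹⁰ pascals, and fault slip that scales in proportion to stress drop for a rupture of fixed size. The function names are hypothetical, and pairing the 2-meter Loma Prieta slip with the typical 15-bar stress drop is itself a simplification.

```python
SHEAR_MODULUS_PA = 3.0e10        # assumed crustal rigidity (not from the text)
PA_PER_BAR = 1.0e5               # 1 bar = 100,000 pascals
STRAIN_RATE_PER_YEAR = 2.0e-7    # shear strain rate quoted by Heaton
TYPICAL_STRESS_DROP_BAR = 15.0   # typical earthquake stress drop quoted by Heaton

def stress_rate_bar_per_year(strain_rate, shear_modulus_pa=SHEAR_MODULUS_PA):
    """Elastic shear stress build-up: rigidity times strain rate, in bars per year."""
    return shear_modulus_pa * strain_rate / PA_PER_BAR

def scaled_slip_m(observed_slip_m, observed_drop_bar, hypothetical_drop_bar):
    """Slip for a hypothetical stress drop, assuming slip is proportional to stress drop."""
    return observed_slip_m * hypothetical_drop_bar / observed_drop_bar

rate_bar_per_year = stress_rate_bar_per_year(STRAIN_RATE_PER_YEAR)
bar_per_decade = 10.0 * rate_bar_per_year
years_to_reload = TYPICAL_STRESS_DROP_BAR / rate_bar_per_year
kilobar_slip_m = scaled_slip_m(2.0, TYPICAL_STRESS_DROP_BAR, 1000.0)

print(f"stress build-up: {bar_per_decade:.2f} bar per decade")
print(f"time to accumulate 15 bars: {years_to_reload:.0f} years")
print(f"slip for a 1-kilobar stress drop: {kilobar_slip_m:.0f} m")
```

Under these assumptions the stress builds at about 0.6 bar per decade, consistent with "less than 1 bar," a 15-bar stress drop takes on the order of 250 years to reload, and a full kilobar of stress drop implies slip of roughly 130 meters, the same order as the 100 meters Heaton describes.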