3

Public Disclosure of Data on Health Care Providers and Practitioners

Previous chapters have discussed a wide array of users, uses, and expected benefits of information held by health database organizations (HDOs). Such organizations are presumed to have two major capabilities. One is the ability to amass credible descriptive information and evaluative data on costs, quality, and cost-effectiveness for hospitals, physicians, and other health care facilities, agencies, and providers. The other is the capacity to analyze data to generate knowledge and then to make that knowledge available for purposes of controlling the costs and improving the quality of health care—that is, of obtaining value for health care dollars spent. Another benefit derived from HDOs is the generation of new knowledge by others.

In principle, the goals implied by these capabilities are universally accepted and applauded. In practice, HDOs will face a considerable number of philosophical issues and practical challenges in attempting to realize such goals. The IOM committee characterizes the activities that HDOs might pursue to accomplish these goals as public disclosure.

By public disclosure, this committee means the timely communication, or publication and dissemination, of certain kinds of information to the public at large. Such communication may be through traditional print and broadcast media, or it may be through more specialized outlets such as newsletters or computer bulletin boards. The information to be communicated is of two varieties: (1) descriptive facts and (2) results of evaluative studies on topics such as charges or costs and patient outcomes or other quality-of-care measures. The fundamental aims of such public disclosure, in the context of this study, are to improve the public's understanding about health care issues generally and to help consumers select providers of health care.1

These elements imply that HDOs should be required to gather, analyze, generate, and publicly release such data and information:

- in forms and with explanations that can be understood by the public;

- in such a manner that the public can distinguish actual events (i.e., primary data) from derived, computed, or interpretive information;

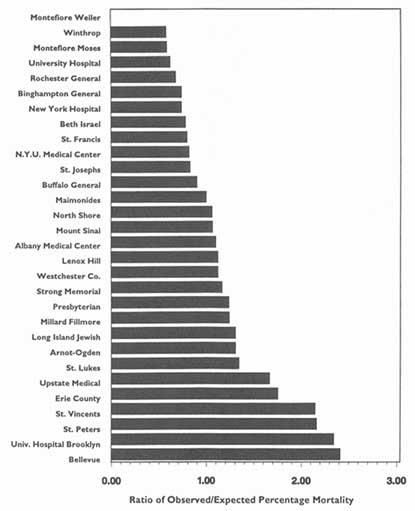

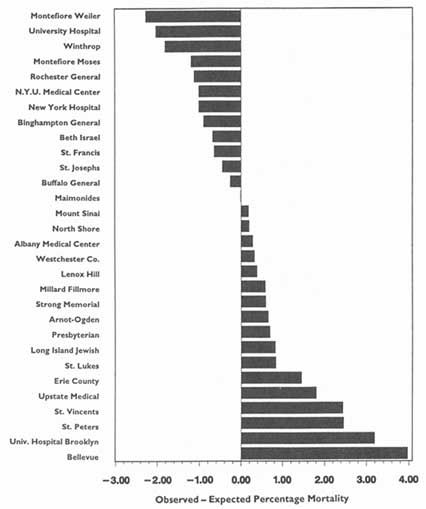

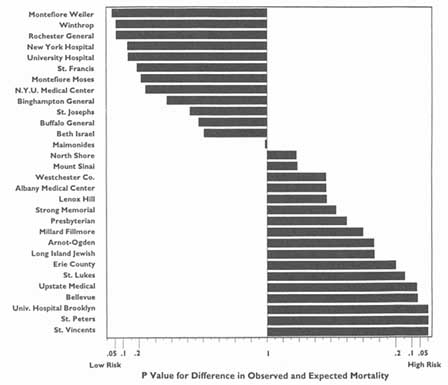

- in ways that reveal the magnitude of any differences among providers as well as the likelihood that differences could be the result of chance alone;

- in sufficient detail that all providers can be easily described and compared, not just those at the extremes;

- with descriptions and illustrations of the steps necessary to predict outcomes in the present or future from information relating only to past experience; and

- with statements and illustrations about the need to particularize information for an individual in the final stages of decision making.
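One item in the list above asks that disclosures reveal both the magnitude of differences among providers and the likelihood that those differences reflect chance alone. A minimal sketch of one way to do this follows: each provider's rate is reported together with a Wilson score interval, so that readers see the estimate and its uncertainty side by side. The provider names and counts are invented for illustration, and the choice of the Wilson interval is an assumption of this sketch, not a method the committee prescribes.

```python
import math

def wilson_interval(events: int, cases: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a proportion (z = 1.96 gives roughly 95 percent)."""
    if cases <= 0:
        raise ValueError("cases must be positive")
    p = events / cases
    denom = 1 + z * z / cases
    centre = (p + z * z / (2 * cases)) / denom
    half = z * math.sqrt(p * (1 - p) / cases + z * z / (4 * cases * cases)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

# Hypothetical (events, cases) counts, invented for illustration only.
providers = {
    "Hospital A": (12, 400),
    "Hospital B": (30, 420),
    "Hospital C": (3, 45),
}

def describe(counts: dict[str, tuple[int, int]]) -> list[tuple[str, float, float, float, int]]:
    """Return (name, rate, lower, upper, cases) for every provider, alphabetically."""
    rows = []
    for name, (events, cases) in sorted(counts.items()):
        low, high = wilson_interval(events, cases)
        rows.append((name, events / cases, low, high, cases))
    return rows

for name, rate, low, high, cases in describe(providers):
    print(f"{name}: {rate:.1%} (interval {low:.1%} to {high:.1%}, {cases} cases)")
```

Every provider is listed, not only those at the extremes, and a provider with few cases shows a visibly wider interval. Risk adjustment, which any real comparison would need, is outside the scope of this sketch.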

Acceptance of HDO activities and products relating to public disclosure will depend in part on the balance struck for fairness to patients, the public in general, payers, and health care providers. Fairness to patients involves protecting their privacy and the confidentiality of information about them, as examined in Chapter 4. Fairness to the public involves distributing accurate, reliable information that is needed to make informed decisions about providers and health care interventions; the broader aims are to promote universal access to affordable and competent health care, enhance consumer choice, improve value for health care dollars expended, and increase the accountability to the public of health care institutions. Fairness to payers may be a subset of this category. They should receive the information that is available to the public at large, but perhaps in more detail or in a more timely manner. Finally, fairness to providers entails ensuring that data and analyses are reliable, valid, and impartial; it also means that providers are given some opportunity to confirm data and methods before information is released to the public, and offered some means of publishing their perspectives when the information is released.

This chapter deals chiefly with issues relating to trade-offs between fairness to providers and fairness to the public at large (including patients) insofar as public disclosure of information is concerned. The considerations just noted appear simple and noncontroversial on the surface; in the context of real patients, providers, and data, they become technical, complex, and occasionally in conflict. The appendix to this chapter offers a brief illustration of the difficulties that HDOs might face in discharging their duties of fairness to all groups.

PREVIOUS STUDIES

This report is not the first treatment of issues related to providing health-related information to the public. Marquis et al. (1985) reviewed what was known about informing consumers about health care costs (considered then and now a less difficult challenge than informing them about quality of care) as a means of encouraging them to make more cost-conscious choices. In an extensive literature review, the authors documented the wide gaps in cost (or price) information available to consumers, especially for hospital care.2 They reported evidence that some programs to help certain consumer groups, such as assisting the elderly in purchasing supplemental Medicare coverage, have had salutary effects on the choices people make. Despite new efforts at that time by employers, insurers, business coalitions, and states to collect and disseminate such information, the authors concluded that "it remains uncertain whether disclosure of information about health care costs will do much to modify consumers' choices of health plans, hospitals, or other health care providers" (p. xii). The authors emphasized that understanding how consumers use information in making health care choices is critical to the design of effective data collection and disclosure interventions but that such basic knowledge was lacking. It is not clear that the knowledge gap has been closed.

More recently, the congressional Office of Technology Assessment (OTA, 1988) produced a signal report on disseminating quality-of-care information to consumers. It examined the rationales that lie behind the call for more public information; evaluated the reliability, validity, and feasibility of several types of quality indicators;3 and advanced some policy options that Congress could use to overcome problems with the indicators. Also presented was a strategy for disseminating information on the quality of physicians and hospitals using the following components: stimulate consumer awareness of quality of care; provide easily understood information on the quality of providers' care; present information via many media repeatedly and over long periods of time; present messages to attract attention; present information in more than one format; use reputable organizations to interpret quality-of-care information; consider providing price information along with information on the quality of care; make information accessible; and provide consumers the skills to use and physicians the skills to provide information on quality of care (OTA, 1988, pp. 40-47).

The OTA study did not wholly endorse any one quality measure or approach, and specifically noted that "existing data sets do not allow routine evaluation of physicians' performance outside hospitals" (p. 30). The report also concluded that "informing consumers and relying on their subsequent actions should not be viewed as the only method to encourage hospitals and physicians to maintain and improve the quality of their care. Even well-informed lay people ... must continue to rely on experts to ensure the quality of providers. Some experts come from within the medical community and engage in self regulation, while others operate as external reviewers through private and governmental regulatory bodies" (p. 30). It may be said that many, if not most, of the issues raised by the OTA report are germane to today's quite different health care environment, including the development of regional HDOs.

IMPORTANT PRINCIPLES OF PUBLIC DISCLOSURE

A significant committee stance should be made plain at the outset: the public interest is materially served when society is given as much information on costs, quality, and value for health care dollars expended as can be given accurately, and when that information is accompanied by educational materials that aid its interpretation. Indeed, public disclosure and public education go hand in hand. Much of the later part of this chapter, therefore, advances a series of recommendations intended to foster active, but responsible, public disclosure of information by HDOs.

One critical element in this position must be underscored, however, because it is a major caveat: public disclosure is acceptable only when it (1) involves information and analytic results that come from studies that have been well conducted, (2) is based on data that can be shown to be reliable and valid for the purposes intended, and (3) is accompanied by appropriate educational material. As discussed in Chapter 2, data cannot be assumed to be reliable and valid; hence, study results and interpretations, and resulting inferences, cannot be assumed always to be sound and credible. Thus, a position supporting public disclosure of cost, quality, or other information about health care providers must be tempered by an appreciation of the limitations and problems of such activities. In Chapter 2 the committee advanced a recommendation about HDOs ensuring the quality of their data so as to minimize the difficulties that might arise from incomplete or inaccurate data.

Apart from these caveats, the committee's posture in this area leads to three critical propositions. First, it will be crucial for HDOs or those who use their data to avoid the harms that might come from inadequate, incorrect, or inappropriately "conclusive" analyses and communications. That is, HDOs have a minimum obligation of ensuring that the analyses they publish are statistically rigorous and clearly described.

Second, HDOs will need to establish clear policies and guidelines on their standards for data, analyses, and disclosure, and this is an especially significant responsibility when the uses in question are related to quality assurance and quality improvement (QA/QI). The committee believes that HDOs can produce significant and reliable information and that the presumption should be in favor of data release. Such guidelines can help make this case to those who would otherwise oppose public disclosure efforts with the argument that reasonable and credible studies cannot be conducted.

Third, in line with these principles, the committee advises that HDOs establish a responsible administrative unit or board to promulgate, oversee, and enforce information policies. The specifics of this recommendation are discussed in Chapter 4, chiefly in relationship to privacy protections. The committee wishes here simply to underscore its view that HDOs cannot responsibly or practically carry out the activities discussed in the remainder of this chapter without formulating and overseeing such policies at the highest levels.

IMPORTANT ELEMENTS OF PUBLIC DISCLOSURE

Several elements are important to the successful public disclosure of health-related information. Among them are the topics and types of information involved, who is identified in such releases, differing levels of vulnerability to harm, and how information might be disclosed. How these factors might be handled by HDOs is briefly discussed below.

Topics for HDO Analysis and Disclosure

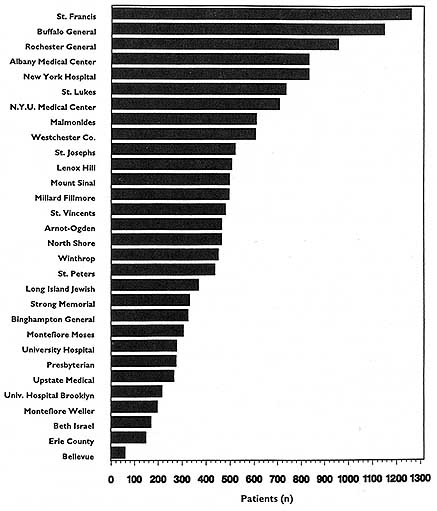

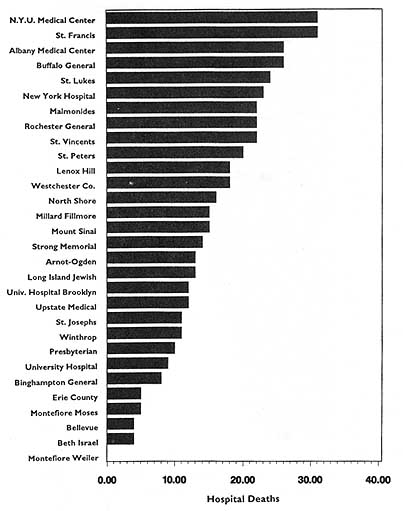

In theory, virtually any topic may be subject to the HDO analyses and public disclosure activities under consideration in this chapter. In practice, the topics that figure most prominently in public disclosure of provider-identified health care data thus far have been extremely limited. Perhaps the best-known instance of release of provider-specific information is the Health Care Financing Administration's (HCFA) annual publication (since 1986) of hospital-specific death rates; these have been based on Medicare Part A files for the entire nation (see, e.g., HCFA, 1987; OTA, 1988, Chapter 4; HCFA, 1991; and the discussion in Chapter 2 of this report).4

This activity has had three spin-offs (not necessarily pertaining just to hospital death rates). The first is repackaging and publishing the HCFA data in local newspapers, consumer guides, and other media. The second is similar analyses, perhaps more detailed, more timely, or more locally pertinent, carried out by state-based data commissions. Examples of statewide work include the published data on cardiac surgery outcomes in New York (cited in Chapter 2), the work of the Pennsylvania Health Care Cost Containment Council on hospital efficiency (PHCCCC, 1989) and on coronary artery bypass graft (PHCCCC, 1992), and the publication of a wide array of information on hospitals, long-term care facilities, home health agencies, and licensed clinics by the California Health Policy and Data Advisory Commission (California Office of Statewide Health Planning and Development, 1991). The files of the Massachusetts Health Data Consortium have been a rich source of information for various health services research projects (Densen et al., 1980; Gallagher et al., 1984; Barnes et al., 1985; Wenneker and Epstein, 1989; Wenneker et al., 1990; Ayanian and Epstein, 1991; Weissman et al., 1992). The third spin-off is exemplified by the special issues of U.S. News & World Report (1991, 1992, 1993) that have reported on top hospitals around the country by condition or specialty. The underpinnings of these rankings, however, are not HCFA mortality data but, rather, personal ratings by physicians and nurses.

Longo et al. (1990) provide an inventory of data demands directed at hospitals, some of which originate with entities like the regional HDOs envisioned in this study (e.g., tumor and trauma registries and state data commissions). Those requesting data would like, for example, to compare hospitals or hospital subgroups during a specific calendar period, to control or regulate new technologies or facilities, and to help providers identify and use scarce resources such as human organs.

Local activities, such as those for metropolitan areas or counties, are exemplified by the release of the Cleveland-Area Hospital Quality Outcome Measurements and Patient Satisfaction Report (CHQC, 1993), as described in Chapter 2. (Nearly a decade ago, the Orange County, California, Health Planning Council developed a set of quality indicators for local hospitals, which was considered at the time to be a pioneering effort; see Lohr, 1985-86.) In 1992 (Volume 8, Number 3), Washington Checkbook presented information on pharmacy prices for prescription drugs and for national and store-brand health and beauty care products; it also reported on hospital inpatient care quality (judged in terms of death rates) and pleasantness (evaluated in terms of staff friendliness, respect, and concern) (Hospital Inpatient Care, 1992). In October 1993 The Washingtonian offered a review of top hospitals and physicians serving the Washington, D.C., metropolitan area (Stevens, 1993). Another local publication, Health Pages (1993, 1994), covers selected cities or areas of the country. It tries to help readers choose doctors, pick hospitals, and decide on other services such as home nursing care. Its Spring 1993 issue provides a consumer's guide to several metropolitan areas of Wisconsin; included are practitioners; hospital services, procedure rates, and prices; and an array of other kinds of health care.5 A similar issue released in Winter 1994 focused on metropolitan St. Louis. Sources of the information in these publications include surveys, price checks, and HCFA mortality rate studies; only the last approximates the uses that might be made of the data held by regional HDOs today, but clearly more comprehensive HDOs in the future may have price information, survey data, and the like.

The brief examples above illustrate areas in which analyses that identify providers have been publicly released. Other calls for public disclosure, however, may actually be intended for more private use by consulting firms; health care plans such as health maintenance organizations (HMOs), independent practice associations (IPAs), and preferred provider organizations (PPOs); and other health care delivery institutions such as academic medical centers or specialized treatment centers. Requests may include analyses of the fees charged by physicians for office visits, consultations, surgical procedures, and the like, and the requests may be for very specific ICD-9-CM (International Classification of Diseases, ninth revision, clinical modification) and CPT-4 (Current Procedural Terminology, fourth revision) codes. Yet other inquiries come from clients concerned with the market share of given institutions or health plans in a region as part of a more detailed market assessment. Questions may also be focused on patterns of resource utilization by certain kinds of patients, for instance, those with advanced or rare neoplastic disease. In general, because these applications are unlikely to lead to studies with published results, they are not discussed here in any detail.

Some internal studies are intended for public release, however, for use by regulators, consumers, employers, and other purchasers. These include the so-called quality report cards being developed by the National Committee for Quality Assurance, Kaiser Permanente, the state of Missouri, and others. The Northern California Region of Kaiser Permanente, for instance, has released a "benchmarked" report on more than 100 quality indicators such as member satisfaction, childhood health, maternal care, cardiovascular diseases, cancer, common surgical procedures, mental health, and substance abuse (Kaiser Permanente, 1993a, 1993b).

Who Is Identified

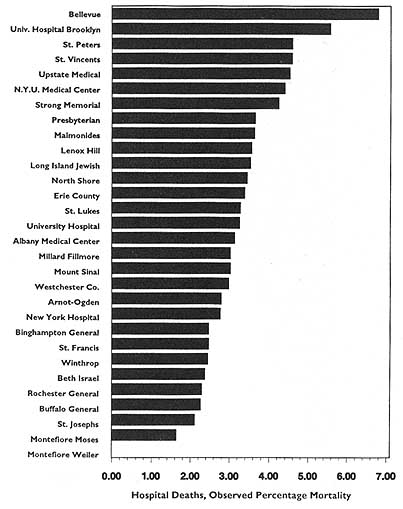

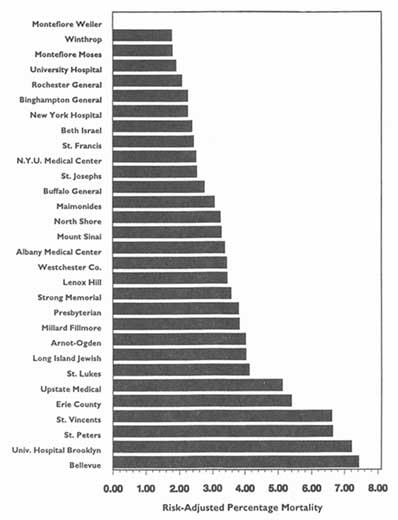

The main objects of such requests and the ensuing analyses range from large health plans to hospitals, physician groups, individual physicians, and nursing homes. Most of the debate in the past few years has centered on hospitals, especially in the context of the validity and meaningfulness of hospital-specific death rates (Baker, 1992). Generally, arguments in favor of the principle of release of such information on hospitals have carried the day; controversy persists about the reliability, validity, and utility of such information when the underlying data or the sophistication of the analyses can be called into question.

More recently, the debate has turned to release of information on the hospital-based activities of particular physicians—for example, death rates associated with specific surgical procedures. Here the principle of public disclosure also seems to have gained acceptance, again with caveats about the soundness of the analyses and results. Nevertheless, because of the much greater difficulty of ensuring the reliability and validity of such analyses, especially on the level of individual physicians, many observers remain concerned about the possible downside of releasing information on specific clinicians. This criticism is especially pertinent to the extent that this information is a relatively crude indicator of the quality of care in hospitals or of that rendered by individual physicians, especially surgeons.

In the future, attention can be expected to shift to outpatient care and involve the ambulatory, office-based services of health plans and physician groups in primary or specialty care and of individual physicians. In these cases the stance in favor of public disclosure may become more difficult to adopt fully, for three reasons: the problems alluded to above for hospital-based physicians become exponential for office-based physicians; the clear, easy-to-count outcomes, such as deaths, tend to be inappropriate for office-based care because they are so rare; and quality-of-life measures, such as those relating to functional outcomes and physical, social, and emotional well-being, are more significant but also more difficult to assess, aggregate, and report.

Other types of providers and clinicians also must be considered in this framework. These include pharmacies and individual pharmacists; home health agencies and the registered nurses and therapists they employ; and durable medical device companies, such as those that supply oxygen to oxygen-dependent patients and the respiratory therapists they employ. Stretching the public-disclosure debate to these and other parts of the health care delivery environment may seem farfetched; to the extent that their data will appear eventually in databases maintained by HDOs, however, the prospect that someone will want to obtain, analyze, and publicize such data is real. This may illustrate the point raised in Chapter 2 that simple creation of databases may lead to applications quite unanticipated by the original creators.

Finally, some experts foresee the day when HDOs might do analyses by employer or by commercial industry or sector with the aim of clarifying the causes and epidemiology of health-related problems. Cases in point might be the incidence of carpal tunnel syndrome in banks, accidents in the meatpacking or lumber industry, or various types of disorders in the chemical industry. Here the issue is one of informing the public or specific employers in an economic sector about possible threats to the health and well-being of residents of an area or employees in a particular commercial enterprise.

Vulnerability to Harm

The examples above can be characterized by level of aggregation: large aggregations of health care personnel in, for instance, hospitals or HMOs, as contrasted with individual clinicians. The committee believes that, in general, public disclosure can be defended more easily when data involve aggregations or institutions than when they involve individuals. Vulnerability to harm is the complicating factor in this controversy, and some committee members affirm that it should be carefully and thoughtfully taken into account before data on individuals are published.

To an individual, the direct harms are those of loss of reputation, patients, income, employment, and possibly even career.6 Hospitals and other large facilities, health plans, and even large groups are less vulnerable to such losses than are individuals. Higher-than-expected death rates for acute myocardial infarction or higher-than-expected caesarean section rates are not likely to drive a hospital out of business unless the public becomes convinced that these rates are representative of care generally and are not being addressed. By contrast, reports of higher-than-expected death rates for pneumonia or higher-than-expected complication rates for cataract replacement surgery could disqualify an individual from participating in managed care contracts and eventually spell ruin for the particular physician.

How one regards harms and gains may depend in part on whether one views public disclosure of evaluative information about costs or quality as a zero-sum game. In a highly competitive market, which may have the characteristics of a zero-sum game, clear winners and losers may emerge in the provider and practitioner communities. Furthermore, in theory this is what one would both expect and desire. Nevertheless, when markets are not highly competitive—for instance, when all hospital occupancy rates are high or when the number of physicians in a locality is small—the information may less directly affect consumer choice, although it may well influence provider behavior by changing consumer perceptions. In this situation, clear winners and losers are neither expected nor likely, but establishing benchmarks that all can strive to attain should, in principle, contribute to better performance across all institutions and practitioners.

Methodological and Technical Issues

Several factors influence the degree of confidence one can have in the precision of publicly disclosed analyses, and this dictates how securely one can interpret and rely on published levels of statistical significance and confidence intervals and generalize from published information. Two factors involve the quality of the underlying data and the analytic effort, as introduced in Chapter 2. Others, discussed below, involve the level of aggregation in published analyses, the appropriateness of generalizing from published results to aspects of care not directly studied, and the difficulty of creating global indexes of quality of care.

In the committee's view, proponents of public disclosure have an obligation to insist that the information to be published meet all customary requirements of reliability, validity, and understandability for the intended use. Such requirements vary, to some degree, according to the numbers of cases or individuals included in the report (that is, according to the level of aggregation, from a single case or physician to dozens or hundreds of cases from multiple hospitals). When HDOs cannot satisfy these technical requirements, they should not publish data in either scientific journals or the public media. The committee was not comfortable with the idea that publication might go forward with explanatory footnotes or caveats, on the grounds that most consumers or users of such information are unlikely to accord the cautions as much importance as they give to the data themselves and may thus be unwittingly led to make erroneous or perhaps even harmful decisions.

This position may not be sustainable in all cases, however. The New York Supreme Court rejected the argument "that the State must protect its citizens from their intellectual shortcomings by keeping from them information beyond their ability to comprehend" (Newsday, Inc. and David Zinman v. New York State Department of Health, et al.) and ruled that physician-specific mortality rate information be made public pursuant to a Freedom of Information Law request. In this particular case it could be argued that the data and analyses met all reasonable expectations of scientific rigor. In the future, however, one cannot assume this will be the case. One solution in problematic circumstances may be for HDOs to disclose information only at a much higher level of aggregation than that at which the original analyses may have been done.7

Generalizability is a related methodologic matter with ramifications for the harms and gains noted above. It refers here to the proposition that information on one dimension of health care delivery and performance will in some fashion predict or otherwise relate to other dimensions of performance. For hospitals, for instance, the thought might be that information on adult intensive care can be generalized to adult cardiac care, pediatric intensive care, neonatal intensive care, or even to orthopedics, obstetrics, or ophthalmology. A similar proposition might hold that information on the management of patients with acute upper respiratory infections in the office setting can in some manner predict care of patients with long-standing conditions such as chronic obstructive lung disease, congestive heart failure, or even diabetes mellitus.

Those involved in public disclosure of evaluative information must take care to reflect expert opinion on this matter: inferences about one aspect of care cannot always successfully be drawn from information, whether positive or negative, about another aspect of care. The point is complex because some extrapolation or generalization may be supportable. For example, good or bad ratings for a hospital on death rates for congestive heart failure or acute myocardial infarction might well be generalizable to that hospital's performance on pneumonia or chronic obstructive pulmonary disease (Keeler et al., 1992b), but they might well be completely irrelevant to ratings for asthma in children, hip replacement, or management of high-risk pregnancies. The committee thus believes that HDOs will have a duty to make clear the limits of one's ability to draw conclusions about quality of care beyond the precise conditions and circumstances reported for analyses.

A related problem involves the understandable desire to reduce several separate measures of quality of care into a single, global index intended to represent the performance of an entire hospital, plan, or individual provider. The presumption is that an index measure will be easier for HDOs to report and for the public to understand. Developing index measures is extremely difficult for conceptual reasons, which mainly relate to the difficulty of aggregating measures that come from a variety of sources or represent disparate variables (essentially an "apples and oranges" or even "apples and giraffes" problem); quantitative and statistical problems are also significant. In practice, developing index measures for quality-of-care analyses rarely if ever has been successful,8 although at least one committee member believes that the general level of intrahospital correlation is probably underestimated.

... practitioners, or indeed even specific plans, hospitals, and so forth, might be suppressed according to a "concentration rule" (an N, K rule); in this, if "n" number of providers (e.g., a small number, such as two) dominate a given cell (e.g., account for "k" percentage of a given cell, where "k" is a large figure such as 80 percent), then information on those particular providers would not be made public. This is a form of protection against the "statistical identification problem" as well.
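The "concentration rule" (N, K rule) mentioned in the footnote text above can be sketched briefly. The function below flags a cell for suppression when its n largest contributors account for at least a share k of the cell total. The cell labels and counts are hypothetical, and treating the threshold as "at least k" (as opposed to "more than k") is an assumption of this sketch; the source gives only the example values of two providers and 80 percent.

```python
def is_dominated(contributions: list[float], n: int = 2, k: float = 0.80) -> bool:
    """True if the n largest contributors hold at least share k of the cell total."""
    total = sum(contributions)
    if total <= 0:
        return False  # an empty cell has nothing to dominate
    top_n = sum(sorted(contributions, reverse=True)[:n])
    return top_n / total >= k

# Hypothetical cells: each list holds per-provider case counts within one cell.
cells = {
    "procedure X, county 1": [120, 95, 10, 5],      # two providers hold 215 of 230
    "procedure Y, county 1": [40, 38, 35, 30, 27],  # two providers hold 78 of 170
}

# Cells that would be withheld from publication under the rule.
suppressed = sorted(name for name, counts in cells.items() if is_dominated(counts))
print(suppressed)
```

In this illustration only the first cell is withheld, because two providers account for roughly 93 percent of its cases. An HDO applying such a rule would still need to set n and k as a matter of policy.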

How Information Is Publicly Disclosed

Assuming that some of the above issues have been adequately addressed, one arrives at the question of the content and appearance of publicly disclosed information. The structure, level of detail, and other properties of such information will differ by the disclosure media used, by the nature of the information, by the type of provider or practitioner under consideration, and by the level of confidence that can be placed in the numbers, statistics, and inferences to be presented. Some of the more problematic factors in presenting data are noted here. The committee does not take a formal stand on how these matters might be resolved, however, because it believes that those decisions need to be governed by local considerations.

One difficulty in presenting data involves how and in what order HDOs elect to identify or list institutions, clinicians, or other providers. The most obvious choice is to do so alphabetically. This option has the advantage of making it easy to find a given provider and would probably be the likely approach when publicly disclosed information is purely descriptive. It has the related disadvantage, however, of complicating the task of comparison when the issues of interest involve evaluative information.

Other approaches are nonalphabetic. HDOs might, for instance, order providers of interest on a noncontroversial or descriptive variable; for a given region, these might be the number of beds for institutions, the number of free-standing clinics for HMOs, or the number of primary care physicians for PPOs. This method, however, does not have the advantages of alphabetic ordering and still has the disadvantages noted above. A variant is to sort providers on the basis of an essentially descriptive variable, such as charges for a particular service, that has the potential of some evaluative content.

Yet another option is to list providers in order from high to low (or vice versa) on a particularly sensitive evaluative variable, such as death or complication rates. This option may be the least desirable or the most open to misinterpretation, as exemplified by PrimeTime Live's reference to such an arrangement as a "surgical scorecard" (PrimeTime Live, ABC, June 4, 1992).

Some choices in the category of other-than-alphabetic ordering present special problems or considerations. For one, the distinction implied above between descriptive and evaluative information may be incorrect or not always applicable. What for some consumers may be purely informational, noncontroversial, or irrelevant—for instance, numbers of specialists or fees charged for a procedure—may for others be a significant or decisive matter in choosing or leaving providers. Opting to list providers or practitioners by these variables may thus reflect the biases or predispositions of those publicizing the data and may not serve all consumers equally well.

Rankings—for instance, from highest to lowest on some variable—may imply greater differences than are truly warranted, and indeed may be positively misleading. This is especially the case when adjacent ranks differ numerically but the differences have no clinical meaning or statistical significance (stated degree of certainty). The committee believes that those disclosing such information must indicate where no statistical differences exist between ranks.
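
As a purely illustrative sketch of how a published ranking might indicate where adjacent ranks are not statistically distinguishable, the Python fragment below ranks providers on an event rate and applies a simple two-proportion z-test to each adjacent pair. The function names, the input layout (`name`, `events`, `cases`), and the 1.96 cutoff are assumptions made for this example, not a method endorsed by the committee. The sketch also assumes every provider has at least one case, and it ignores risk adjustment and multiple comparisons, both of which a real analysis would need to address.

```python
import math


def two_proportion_z(events_a, n_a, events_b, n_b):
    """Return the z statistic for the difference between two proportions."""
    p_a, p_b = events_a / n_a, events_b / n_b
    pooled = (events_a + events_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    # A zero standard error means both rates are 0 or both are 1.
    return 0.0 if se == 0 else (p_a - p_b) / se


def rank_with_flags(providers, z_crit=1.96):
    """Rank providers from lowest to highest rate.

    Each output row is (rank, name, rate, distinct_from_next), where the last
    element is True or False for an adjacent pair and None for the final row,
    which has no next neighbor.
    """
    ranked = sorted(providers, key=lambda p: p["events"] / p["cases"])
    rows = []
    for i, p in enumerate(ranked):
        distinct = None
        if i + 1 < len(ranked):
            q = ranked[i + 1]
            z = two_proportion_z(p["events"], p["cases"], q["events"], q["cases"])
            distinct = abs(z) >= z_crit
        rows.append((i + 1, p["name"], p["events"] / p["cases"], distinct))
    return rows
```

A report built on such output could print a marker, or merge the ranks, wherever the last field is False, so that readers do not read meaning into a numerical gap that chance alone could explain.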

Mixed approaches—for instance, grouping into thirds, quartiles, quintiles, or essentially equivalent ranks and then ordering alphabetically within the groups—are of course possible. Scores, indexes, or other combinational calculations may be appealing for publication purposes, but they often have technical or methodologic weaknesses that will be difficult to convey to the public.
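
The mixed approach described above can be sketched in a few lines of Python. The function name, the input layout (a mapping from provider name to a score), and the convention that lower scores come first are all assumptions made for illustration. Note that this simple positional split can place providers with tied or nearly identical scores in different groups, which is one of the technical weaknesses that would need explaining to readers.

```python
def group_then_alphabetize(scores, n_groups=4):
    """Split providers into n_groups roughly equal groups by score,
    then list names alphabetically within each group.

    scores: dict mapping provider name -> numeric score (lower sorts first).
    Returns a list of n_groups lists of names.
    """
    ordered = sorted(scores, key=lambda name: scores[name])
    groups = [[] for _ in range(n_groups)]
    for position, name in enumerate(ordered):
        # Map the provider's position in the ordering to a group index.
        group_index = position * n_groups // len(ordered)
        groups[group_index].append(name)
    return [sorted(group) for group in groups]
```

Within each group the alphabetical listing deliberately hides the exact ordering, which avoids implying differences between providers whose scores are close.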

Other aspects of public disclosure involve whether information is representational or symbolic. Data can be presented quantitatively as numbers, rates, dollars, and so forth. Alternatively, data can be rendered qualitatively—for example, as one to five stars or dollar signs; open, half-open, or closed circles; or other symbolic figures. Combinations of such approaches are possible. Exactly which approaches convey what kinds of information best, with the least implicit bias, is open to question and deserves empirical study.

Another aspect of disclosure involves how analyses are released. Up to the present time, most observers would expect HDOs to release analyses in printed form; in the future, however, electronic outlets, such as CD-ROMs or computer bulletin boards, may come into play. A computer-based approach may speed information to some audiences, which would be a positive outcome. Depending on the extent to which the underlying data accompany analytic results in computer-based media, however, some opportunity may arise for unauthorized analysis of data that could distort the original information. From the viewpoint of HDOs, this would be an undesirable outcome.

A final set of factors concerns the extent of explanatory information and technical footnotes. When public disclosure relies on judgmental or symbolic approaches, more explanatory and definitional material is probably needed than when information is given in straightforward, nonqualitative ways. Some of this information might best be left to a technical report for researchers and other very knowledgeable readers. In any case, the committee agrees that HDOs involved in public disclosure must make available, in clear language, the key elements of their methods, including discussion of possible threats to the internal validity and generalizability of the work that analysts believe they have dealt with adequately.

COMMITTEE FINDINGS AND CONCLUSIONS

To this point the committee has considered issues of public disclosure of information, particularly descriptive or evaluative data on costs or quality, by or under the auspices of HDOs. Its views do not extend to certain other kinds of data banks or repositories, such as computer-based patient record systems of individual hospitals and health plans or internal data files of commercial insurance carriers. Furthermore, the positions advanced here logically depend on the databases in HDOs being regional in nature (i.e., serving entire states or large metropolitan areas) and both inclusive and comprehensive within that region as those terms were used in Chapter 2.

The committee further believes that public disclosure of such information, particularly evaluative or comparative data, must give due regard to the possible harms that may unfairly be suffered by institutions and individuals. In the committee's view, disclosure of information about larger aggregations of health caregivers, such as hospitals, will generally be less prone to causing undeserved losses of reputation, income, or career than disclosure of information on individual practitioners. The committee thus takes the position that public disclosure is a valuable goal to pursue, to the extent that it is carried out with due attention to accuracy and clarity and does not undermine the QA/QI programs that health care institutions and organizations conduct internally.9

RECOMMENDATIONS

The stance favoring public disclosure presented so far includes two requirements. One is that the HDOs themselves ought to carry out some minimum number of consumer-oriented studies and analyses and publish them routinely. That view proceeds directly from the definition of HDO developed for this study—that HDOs are entities that have as one of their missions making health-related information publicly available. In elaborating this position, the committee offers a series of recommendations under the general rubric of advocacy of analyses and public disclosure of results. The second requirement is that HDOs make appropriate data available for others to use in such studies and analyses, with the expectation that the results of the work will be publicly disclosed; that is the thrust of a recommendation on advocacy of data release. Finally, to promote these aims, the committee has urged that HDOs keep prices for providing data and related materials as low as possible, as noted in the section on related issues.

Advocacy of Analyses and Public Disclosure of Results

RECOMMENDATION 3.1 CONDUCTING PROVIDER-SPECIFIC EVALUATIONS

The committee recommends that health database organizations produce and make publicly available appropriate and timely summaries, analyses, and multivariate analyses of all or pertinent parts of their databases. More specifically, the committee recommends that health database organizations regularly produce and publish results of provider-specific evaluations of costs, quality, and effectiveness of care.

The subjects of such analyses should include hospitals, HMOs and other capitated systems, fee-for-service group practices, physicians, dentists, podiatrists, nurse-practitioners or other independent practitioners, long-term care facilities, and other health providers on whom the HDOs maintain reliable and valid information. In all cases the identification of providers and practitioners in publicly released reports should be only at a level of disaggregation that will support statistically valid analyses and inferences.

In this context, publish or disclose is intended to mean to the public, not simply to member or sponsoring organizations. This may be easier to state as a principle than to effect in practice. For HDOs with clear public-agency mandates, such as those created by state legislation or governmental fiat or charter and supported with public funds, the requirement to provide information to the public would seem clear, and the use of public funds to support private HDOs could likewise be made contingent on the dissemination of such analyses. Some HDOs may be based in the private sector, operate chiefly for the benefit of for-profit entities, and have no connection with or mandate from states or the federal government. In these cases, the imperative to make information and analytic results available to the public on a broad scale is much less clear. This committee hopes that such groups would act in the public interest and not just in support of parochial or member interests.

This committee assumes that policy and economic forces already exist to encourage HDOs to conduct such studies and to release information to the public. As implied above, however, this presupposition may be in error, particularly if HDOs are supported largely by private interests or professional groups that may have reasons not to want such information publicly disclosed or that may believe that proprietary advantage may be lost by disclosure. Professional groups may have different, but equally self-interested, reasons for wanting information on their members to remain private.

In the committee's view, therefore, the charters of such HDOs ought to include firm commitments to conduct consumer-oriented studies. Furthermore, no public monies or data from publicly supported health programs (for example, Medicare and Medicaid at the federal level, or Medicaid or various health reform efforts at the state level) ought to be available to HDOs that do not subscribe to such principles (except when such data are otherwise publicly available). Where state legislation is used to establish HDOs or similar entities (e.g., data commissions), the enabling statutes themselves should contain such requirements.

RECOMMENDATION 3.2 DESCRIBING ANALYTIC METHODS

The committee recommends that a health database organization report the following for any analysis it releases publicly:

- general methods for ensuring completeness and accuracy of their data;

- a description of the contents and the completeness of all data files and of the variables in each file used in the analyses;

- information documenting any study of the accuracy of variables used in the analyses.

The committee expects HDOs to accompany public disclosure of provider-specific information with the following kinds of information: (1) clear descriptions of the database, including documentation of its completeness and accuracy; (2) material sufficient to characterize the original sources of the data; (3) complete descriptions of all equations or other rules used in risk adjustments, including validations and limitations of the methods; (4) explanations of all terms used in the presentations; and (5) description of appropriate uses by the public, payers, and government of the data and analyses, including notice of uses for which the data and analyses are not valid. When certain disclosures are relatively routine (e.g., appearing quarterly or annually), such information might be made available in some detail only once and modified or updated as appropriate in later publications.

With respect to the quality and accuracy of data, HDOs that do not cover the majority of patients, providers, and health care system encounters—such as those operated mainly for the interests of self-selected employers—will have less to say about cost, quality, and other evaluative matters for the full range of providers in the community. Public disclosure of the results of evaluative investigations in these circumstances may be less important; indeed, it may even be undesirable if the information is open to oversimplification, misinterpretation, or misuse. As noted previously, therefore, it will be crucial to provide explanatory material and clear caveats about how the data might be appropriately applied or understood. Publicizing descriptive facts on providers who do render health care services to the populations covered by these smaller HDOs will, however, likely be a useful step.

Minimizing Potential Harms

Up to this point, the committee has taken an extremely strong pro-disclosure stance toward comparative, evaluative data, but it sees some potential for harm in instantaneous public release of comparative or evaluative studies on costs, quality, or other measures of health care delivery. This might be the case, for example, if those doing such work fail to provide information to hospitals, physician groups, or other study targets in advance of release, even to permit them to check the data or develop responses. Disclosure proponents assume that such studies will be done responsibly, and the public has every right to expect that to be the case. To the extent that is true, the generators of the work will be believed and the public interest will have been served.

What is not clear is how well such initiatives will be carried out and what brake or check will exist to ensure high-quality studies. One option is to require HDOs to exercise formal oversight over their work and, insofar as possible, over work done with data they provide. For instance, HDOs might be expected to impose an expert review mechanism on their own analyses before public release, or to require that such peer review be done for analyses performed by others on HDO data, or both.

Alternatively, reliance might be placed on an essentially market-driven set of checks and balances. This approach holds that poor, biased, or otherwise questionable work will eventually be discovered and those carrying it out discredited, because the "marketplace of ideas" has its own discipline to prevent reckless analysis. Studies cost money and are likely to be done only by organizations large enough to have a reputation to protect; poorly done analyses will be criticized and discounted in the press; analysts will fear diminishment of their professional reputations; and fear of lawsuits for defamation can be a powerful dissuader.

The committee did not, in the end, wish to rely solely on marketplace correctives; it believed that a more protective stance was needed.

RECOMMENDATION 3.3 MINIMIZING POTENTIAL HARM

The committee recommends that, to enhance the fairness and minimize the risk of unintended harm from the publication of evaluative studies that identify individual providers, each HDO should adhere to two principles as a standard procedure prior to publication: (1) to make available to and upon request supply to institutions, practitioners, or providers identified in an analysis all data required to perform an independent analysis, and to do so with reasonable time for such analysis prior to public release of the HDO results; and (2) to accompany publication of its own analyses with notice of the existence and availability of responsible challenges to, alternate analyses of, or explanations of the findings.

This set of recommendations reflects what might be regarded as a fairness doctrine. It holds that an important safeguard for providers, especially individual practitioners, dictates that they be allowed to check their own data and comment thereon.

Meeting a fairness principle could take the form simply of giving the subjects of analyses prerelease copies of the publication or at least of the information about them. Such subjects would not be given a veto over whether their data are used, nor would they necessarily be afforded an opportunity to have their data amended in public studies, but their comments would be maintained by the HDO so that interested parties could review them. The committee assumed that HDOs might well choose to append such comments to their own reports and publications. HCFA adopted this approach for its mortality rate releases, for instance, as did Cleveland Health Quality Choice for its first data release.

The committee actually has gone further, however, to advise that HDOs give providers and practitioners (or their representatives) the relevant data and sufficient time to analyze them, should such requests be made. Because such situations will differ among HDOs in the future, the committee did not develop specific guidance on these points. For example, it cautions that HDOs will have to devise ways, in conveying such data to one requestor, to conceal the identities of other providers or practitioners in the data files to be transferred, but the committee believes that HDOs can accomplish this.
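
One way an HDO might conceal the identities of other providers when preparing a file for a single requestor is to replace every other provider's identifier with a keyed pseudonym. The sketch below is a minimal illustration under stated assumptions: the field name `provider_id`, the `PEER-` prefix, and the function name are hypothetical, and the secret key is assumed to be held only by the HDO. Masking identifiers alone may not be sufficient, because other fields (such as location or unusually high volume) can still point to a particular provider.

```python
import hashlib
import hmac


def mask_other_providers(rows, requester_id, secret_key):
    """Return copies of rows in which every provider other than the
    requester is replaced by a stable keyed pseudonym.

    rows: list of dicts, each with a string "provider_id" field (hypothetical layout).
    secret_key: bytes known only to the HDO.
    """
    masked = []
    for row in rows:
        copy = dict(row)  # leave the HDO's original records untouched
        if copy["provider_id"] != requester_id:
            digest = hmac.new(
                secret_key,
                copy["provider_id"].encode("utf-8"),
                hashlib.sha256,
            ).hexdigest()
            # The same provider always maps to the same pseudonym, so peer
            # comparisons remain possible without revealing who the peer is.
            copy["provider_id"] = "PEER-" + digest[:8]
        masked.append(copy)
    return masked
```

Using a different key for each requestor would prevent two recipients from pooling their files to match pseudonyms across them.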

In addition, the committee did not reach any consensus on what constitutes sufficient time, believing that this would vary by the nature, size, and scope of the analysis in question, but common sense about the difficulties of data analysis and fairness to all parties might dictate the length of waiting time; a delay of a week, or of a year, would doubtless fail both tests, whereas a delay of two months might not. The committee also thought that the time permitted for reanalysis might be longer in the earlier years of HDO operations, when all parties are developing procedures and skills in this area, and for approximately the first year of any new or especially complicated analyses.

This discussion began with the premise that potential harms to institutions and individual practitioners need to be prevented or minimized, and the steps recommended above have that intent. The committee believes, however, that a benefit for HDOs of requiring them to make such data available for review and possible reanalysis is that feedback from providers may reveal problems with data quality and study methods that HDOs would want to remedy.

Advocacy of Data Release

Promoting Wide Applications of Health-related Data

To this point, the chapter has focused on what HDOs might do internally to analyze and publish information on providers and practitioners in their regions. Consistent with the discussion in Chapter 2, however, HDOs might well be expected to do more in the public interest to promote responsible use of health-related data. Specifically, they can serve as a key repository of data to which many other groups should have access.

RECOMMENDATION 3.4 ADVOCACY OF DATA RELEASE: PROMOTING WIDE APPLICATIONS OF HEALTH-RELATED DATA

To foster the presumed benefits of widespread applications of HDO data, the committee recommends that health database organizations should release non-person-identifiable data upon request to other entities once they are in analyzable form. This policy should include release to any organization that meets the following criteria:

- It has a public mission statement indicating that promoting public health or the release of information to the public is a major goal.

- It enforces explicit policies regarding protection of the confidentiality and integrity of data.

- It agrees not to publish, redisclose, or transfer the raw data to any other individual or organization.

- It agrees to disclose analyses in a public forum or publication.

The committee also recommends, as a related matter, that health database organizations make public their own policies governing the release of data.

In referring to "non-person-identifiable data," this recommendation is intended to protect the confidentiality of person-identifiable information in HDO databases. The latter pertains to specific patients or other individuals and might include persons residing in the community who appear in population-based data files but have not received health care services. The distinction is made because individual practitioners or clinicians, who might well appear in the databases as patients or residents of the area, could be identified or identifiable by their professional roles (in line with earlier recommendations in this chapter). Chapter 4 of this report explores issues of privacy and of confidentiality of person-identifiable data in more detail.

The committee debated at length the desirability and propriety of advocating that HDOs make data available to all requestors, rather than constraining the transfer of data as in the above recommendation. It was uncomfortable with flat prohibitions on all transfers of data, but it was equally uncomfortable with the possibility of open-ended or blanket transfers of data to a wide variety of groups who would not be expected to place public dissemination of information high on a list of organizational objectives. To thread a path through this dilemma, therefore, the committee advocated that HDOs make data available to those entities that can demonstrate their clear goal of public disclosure of descriptive or evaluative information and their ability to realize this goal. HDOs should not place prior restraints on which entities might receive such data simply on the grounds that others may conduct analyses or release findings that dispute those of the HDOs themselves.10

To characterize such entities, therefore, the committee devised the criteria in the above recommendation for two purposes. The first is to underscore its view that databases held by HDOs (or at least those mandated by law or supported by public funds) should be available for science and the public good. The second is to constrain the use of such data purely for private gain, particularly in anticompetitive actions. Examples of this second concern might be employers, insurers, PPOs, hospitals, or other health care delivery organizations in a given region using data for price collusion. Thus, it is expected that those requesting data tapes from an HDO will perform and publish analyses that can be said to serve science and/or the public interest.

The committee recognized that a tension may develop between the understandable desire on the part of HDOs to hold data until they have completed studies they wish to conduct and the need to be responsive to requests from eligible organizations for reliable, valid, and up-to-date data. Responding to such requests might even delay or prevent studies and publications by the HDOs themselves. The committee believes, therefore, that HDOs might consider developing independent units; one group could be responsible for data management and release of data to authorized recipients, and the other could take the lead for the HDOs' own internal analyses and public disclosure activities.

Requiring Recipients to Protect Data Privacy and Confidentiality

The committee debated at some length the advisability and feasibility of recommending that HDOs require recipients of their data to protect the confidentiality of the information. Ultimately, the committee elected simply to observe that such behavior on the part of HDOs might be desirable; it is certainly desirable on the part of data recipients. It concluded, however, that the practical aspects of insisting that HDOs police the actions of their data recipients were too difficult to make this step an integral part of HDO operations.

Certain kinds of database organizations, already in existence for some years, have long experience in designing descriptive, public-use data tapes that are consistent with all the principles of privacy and confidentiality advanced in Chapter 4. Thus, to the extent that any affirmative action is required of HDOs, it should at a minimum include that they create data files according to well-known precepts and methods for public-use tapes and transfer data only through those means. Further steps are examined in Chapter 4. In sum, HDOs can probably not be expected to police the proper and responsible use of their data beyond their own walls. They can, however, cut off from further access to their data any users who have abused these principles, and the committee advises that HDOs be authorized or empowered to do so.

Using Valid Analytic Techniques

This chapter has dealt chiefly with descriptive or evaluative studies and related activities that HDOs might pursue and then release publicly; it has also considered the responsibilities that HDOs might have to make data available to others for private QA/QI programs and for other analytic purposes. Consistent with the discussion of data quality in Chapter 2, however, the committee wishes to emphasize that valid studies will require valid analytic techniques, often multivariable methods.

Such techniques are powerful, complex, and arguable in details among experts, but they are easily understood in principle. Properly used, they provide the best methods for comparing institutional, provider, plan, or clinician performance. This is so because only such approaches can isolate performance from the potentially confounding effects of differences in the prevalence of disease, severity of illness, presence of large numbers of elderly or poor patients, and so forth. Variables that reflect these factors are often termed severity-, risk-, or case-mix-adjustors.
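
The principle behind such adjustment can be illustrated with a deliberately simplified stand-in for the multivariable methods discussed here: indirect standardization over a single risk stratum. In the sketch below, each provider's observed deaths are compared with the number expected if its patients had died at the pooled rate for their stratum. The function name, the record layout, and the use of one categorical stratum are assumptions made for the example; real risk-adjustment models typically involve many variables and formal validation.

```python
from collections import defaultdict


def observed_to_expected(records):
    """Compute an observed-to-expected (O/E) death ratio per provider.

    records: list of (provider, risk_stratum, died) tuples, where died is a bool.
    Returns a dict mapping provider -> O/E ratio (None if expected is zero).
    """
    # Step 1: reference death rate for each stratum, pooled over all providers.
    stratum_cases = defaultdict(int)
    stratum_deaths = defaultdict(int)
    for _provider, stratum, died in records:
        stratum_cases[stratum] += 1
        stratum_deaths[stratum] += int(died)
    ref_rate = {s: stratum_deaths[s] / stratum_cases[s] for s in stratum_cases}

    # Step 2: each patient contributes the stratum's reference rate to the
    # provider's expected deaths and the actual outcome to observed deaths.
    observed = defaultdict(int)
    expected = defaultdict(float)
    for provider, stratum, died in records:
        observed[provider] += int(died)
        expected[provider] += ref_rate[stratum]

    return {
        p: (observed[p] / expected[p] if expected[p] > 0 else None)
        for p in observed
    }
```

Two hospitals with very different crude death rates can have the same O/E ratio when one of them simply treats more high-risk patients, which is exactly the confounding that unadjusted comparisons fail to remove.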

Serious criticisms can be leveled at studies with inadequate or inappropriate adjustors and analyses;11 such studies, and the organizations that conduct them, can readily be discredited. The consequences could be devastating to the entire public-disclosure effort. To help protect against such criticisms, the committee advises that HDOs use only proper statistical techniques—particularly multivariable analyses—for comparisons released to the public.

In addition, the committee holds that HDOs ought to use only severity- and risk-adjustment techniques and programs that are available for review and critique by qualified experts. It also believes that HDOs would do well to require, as a condition of data release, that entities or investigators conducting secondary analysis of these data adopt the same precept.

Related Issues

Privacy Protections for Person-identifiable Data

Chapter 4 deals with the issues of privacy and confidentiality of person-identifiable data in depth. In advocating release of data to providers, researchers, or others, however, the committee recognizes the possibility that information identifying patients or other persons may be inadvertently revealed to those with neither a right nor a need to know. This brief commentary on how privacy might be protected is intended to signal the committee's strong view that it must be protected.

Generally, committee members believe that encrypting, encoding, and aggregating patient data can go a long way toward protecting the identity of patients and other persons in HDO databases. Although no one can ever guarantee zero probability that an individual can be identified through concerted effort and ingenious devices, these methods make it possible to issue assurances about privacy at a reasonable level of confidence. Such assurances are stronger regarding tabular or aggregated information than data in discrete records, but they might still be reasonably strong at the level of tapes and other disaggregated databases.
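
As a small illustration of why aggregated tables offer stronger assurances than discrete records, the sketch below counts records by a chosen set of fields and suppresses any cell whose count falls below a minimum size. The function name, the field names, and the threshold of five are assumptions for this example only; the committee does not prescribe a threshold, and suppression of small cells does not by itself guarantee anonymity.

```python
from collections import Counter


def aggregate_with_suppression(records, keys, min_cell=5):
    """Tabulate record counts by the given keys, hiding small cells.

    records: list of dicts (one per patient record, hypothetical layout).
    keys: list of field names to cross-tabulate.
    Returns a dict mapping each cell (a tuple of key values) to its count,
    or to None when the count is below min_cell.
    """
    counts = Counter(tuple(record[k] for k in keys) for record in records)
    return {cell: (n if n >= min_cell else None) for cell, n in counts.items()}
```

The coarser the fields chosen for the table, the fewer cells need suppressing, at the price of less detail for analysts.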

Some committee members were not convinced that such steps will be sufficient to ensure anonymity and protect the identity of patients. They argued that a less ambitious goal—that of protecting the identity of patients to the extent feasible—is more realistic. It has the further advantage of conveying to the public that absolute protection of the identity of individuals when their information is in a computerized data bank is very difficult, if not impossible.

Consistent with the principles developed in Chapter 4 on access to person-identifiable information for researchers, the committee argues that appropriately qualified, institution-based researchers with approvals from their institutions' Institutional Review Boards can receive data with intact identifiers. The committee suspects that most investigators will not wish to acquire data tapes on analyses that HDOs have already performed. Rather, they will wish to obtain data that permit original analyses on a broad array of topics relating to the effectiveness and appropriateness of health care interventions. The committee does make provision in Chapter 4 for researchers interested in HDO data for these purposes to receive person-identifiable information.

Constrained Staff Capabilities

This chapter assumes that HDOs will have an affirmative responsibility to carry out analyses and public dissemination activities. Clearly, however, what studies they choose to do and how actively they pursue communication of results will be constrained by available resources and dictated by their own perceptions of critical issues. HDOs, for reasons of political disinclination, lack of staff, or other factors, may not wish or not be able to conduct certain analyses that outside requestors, such as consumer groups or newspapers, regard as extremely significant or timely. Such outside requestors might even be able to pay for such work, or at least to underwrite the costs of acquiring the data; HDOs may still find it impossible or unattractive to attempt to respond to such requests. The committee developed no consensus on how these problems might be addressed, beyond the points made earlier that HDO charters ought to mandate consumer-oriented studies.12

Obligations to Correct Analyses or Retract Information

A complex issue that proponents of HDOs need to consider is what incentives are required to ensure the accuracy of their analyses and subsequent publications. The market for the work may act as one corrective, as discussed earlier. In theory, if it becomes known that an HDO has published information demonstrated to be false, wrongly interpreted, or inappropriate, then political, economic, and social support for the HDO will falter. In practice, the committee was not convinced a market-oriented solution like this would have much effect on an HDO's later performance—there is essentially no evidence on the point—and it was even less persuaded that harms to individuals could be prevented or redressed in this manner.

An alternative, more activist approach may be to require that HDOs publish retractions in the same way and through the same media that published the original erroneous material. Some remedies for injury to individuals or providers might be available through civil litigation when false information has done material harm to their reputations, incomes, or careers, although bringing libel actions that demonstrate malicious intent or foreknowledge of the falsity of the information may be extremely difficult. A further drawback to this strategy is the same feeling that inhibits individuals whose privacy has been breached from bringing lawsuits: the disinclination to make public again what was painful, defamatory, or otherwise harmful when it first was publicized.

12 To overcome some of the difficulties HDOs might face in responding to outside requests for studies (e.g., staffing constraints or inability to price analytic services appropriately to recoup costs), one committee member proposed that HDOs might contract with at least three "analysis consultants," who will have the same clearance to see patient identifiers as HDO staff. These analysis consultants would compete to do analyses for any outside requestor and to release to the requestor analysis results that do not permit or include patient identification. The rationale for this suggestion is that "outsiders," such as newspapers, employers, consumer organizations, or other nonacademic organizations, will find it difficult to meet the Institutional Review Board requirements for direct access to patient-identified data (a condition elaborated in Chapter 4 on privacy and confidentiality); such entities may also find it difficult to identify experts (within academic institutions) who can or would be willing to do such studies. The full committee was divided on whether this approach was either desirable or feasible but regarded it as worth consideration.

A related question is what to do when data are corrected long after they have been processed, used in analyses, or transferred to other users. For example, should HDOs notify users or recipients of their data when something has been augmented, corrected, or changed? Ought they go further and insist that the users alert the public or others to whom they have disclosed study results or transferred data of such matters? The committee did not develop considered opinions on these questions, but it did believe that individual HDOs should be prepared to devise policies to address these issues in anticipation of the day when the questions will arise in their own operations.

STRENGTHENING QUALITY ASSURANCE AND QUALITY IMPROVEMENT PROGRAMS

Data Feedback

The primary focus of this chapter has been on actions HDOs might take to make reliable, valid, and useful information on health care providers and practitioners easily available to the public. The committee concluded that HDOs could help improve the quality of health care through more direct assistance to health care institutions, facilities, and clinical groups. One technique to accomplish this is termed feedback, namely, efforts to make available to providers and practitioners—in as nonthreatening, nonconfrontational, and constructive a way as possible—the data used or the results of evaluative studies about themselves and their peers.

Despite high hopes and some years of experience with public disclosure of provider-specific information, QA/QI experts are not yet clear whether and how an uninhibited approach to public disclosure will foster better QA/QI initiatives in the health care community. To the best of the committee's knowledge, no systematic or rigorous analysis of the short- or long-term effects of public disclosure has been conducted. Anecdotal evidence suggests that some provider groups may seize the opportunity to take a hard look at their own operations and act aggressively on what they find, whereas others may adopt an essentially defensive posture. Nevertheless, virtually no conclusive information indicates whether public disclosure activities materially improve quality of care or QA/QI programs or, for that matter, make any lasting impact on the public's mind.13 On balance, the committee believes the risk of damage to QA/QI efforts—for instance, if public disclosure forces institutions to divert QA/QI funds to efforts to defend themselves against negative publicity—is likely to be less than the gain from timely public disclosure of such information, but this proposition remains a question for empirical study.

To support advances in QA/QI, the committee advises that HDOs make available to provider organizations the information they need to conduct their own internal QA/QI programs more assertively. This might mean, for example, that HDOs would supply hospitals with their own data and equivalent, but probably nonidentified, data on their peers (e.g., all other hospitals in the region, or all other hospitals of certain types). The notion is generalizable to virtually all kinds of entities that deliver health care in a region, from small fee-for-service practices of physicians, to large multispecialty health plans, to pharmacies and nursing homes. Here the intent is more to transfer raw data than to transmit results of evaluative studies, although in principle HDOs could develop a set of reports that would personalize such results for each of the specific institutions or clinicians included in the study.

Some HDOs may elect to take a lower-profile, less pro-disclosure position. For example, they may opt to postpone public release of their own evaluative studies or insist on delay by groups to whom they provide data until the information has been made available to providers for their use in QA/QI programs. This option would probably entail more delay before public disclosure than is assumed for the earlier recommendation about supplying such data for providers to reanalyze for possible challenge to or comment on HDO studies. Consistent with its stance above, however, the committee would urge HDOs to assess carefully the pros and cons of operating in this manner, with particular attention to whether they are thereby supplying a useful public service or acting more in the interests of the health care community.

Quality Assurance and Quality Improvement

The committee assumed that the QA/QI activity prompted by HDO data would occur chiefly as a part of or an adjunct to the formal QA/QI process that various providers and plans might themselves conduct.14 Information on identified providers and individual clinicians concerning questionable, and perhaps quite poor, performance would be made available to organizations' QA/QI programs so that they could act constructively on that information; information on superior accomplishments as well as on average performance should also be forthcoming. Such feedback implies that health care institutions and individual practitioners will review, analyze, and make judgments about this information and use it to improve the quality of care.

Of course, QA/QI programs can be (and are often accused of being) ineffective. For instance, they may not act meaningfully or in a timely way on information about poor providers and clinicians; and efforts that should be taken to improve performance or remove substandard providers from the scene may be delayed. Here, it is argued, is where near-real-time public disclosure of information can play a significant sentinel role, illuminating problems and perhaps encouraging, if not forcing, provider groups and health institutions to take actions they would otherwise have softened, postponed, or not initiated.

Nevertheless, the committee believes that the health care community today is moving more forcefully toward meaningful QA/QI efforts. Contemporary QA/QI philosophy would call on HDOs to provide information that will accomplish several tasks equally well: identifying poor providers, identifying superior providers, and improving average levels of practice. The committee would encourage HDOs to give these quality-of-care goals equal weight.

Privileging

On a narrower point, quality-related information can be used to grant practitioners various kinds of privileges, for instance, to admit patients to hospital and perform certain kinds of invasive, diagnostic, or therapeutic procedures. This process can also be used to withdraw privileges in certain circumstances, such as for specific surgical procedures. It is applied most often to physicians but can be directed at other clinicians as well. Related applications in this area involve various forms of selective contracting, in which physicians or other providers are selected for or excluded from participation in certain types of health plans.15

HDOs may be asked to provide information on specific practitioners on a private basis to health plan and group administrators, precisely for formal privileging or contracting purposes. Some potential for harm to physicians does exist in this application of HDO data; the particulars depend on who actually receives such information and the presence or absence of due process, in addition to the quality of the information per se. The committee believes that when privileging procedures are used creatively, they are compatible with QA/QI efforts. It cautions, nonetheless, that HDOs need to be alert to the possible drawbacks of making information privately available in these circumstances and to take appropriate steps to minimize them.

Peer Review Information

Some kinds of quality-related information, developed through formal QA/QI and peer review efforts, are not covered by this discussion.16 The content of private peer review efforts, for instance, those of hospital QA committees or other investigative or disciplinary actions, is protected from disclosure.17 In 1981 an IOM committee issued a study (Access to Medical Review Data) on disclosure policy for Professional Standards Review Organizations (PSROs, which were the precursor organizations to Medicare Peer Review Organizations, or PROs); it addressed several questions about the types of information that PSROs collected and generated and the potential benefits and harms of disclosing individual institutional and practitioner profiles and other data. That committee concluded that the protection of patient privacy must be a primary component of any disclosure policy and, indeed, is not really at issue. By contrast, major problems do arise in the context of data about facilities, institutions, systems, and practitioners that can be identified individually. The study concluded that disclosure of utilization data about identified institutions could be justified on the grounds of public benefits such as enhanced consumer choice and public accountability of health care institutions. Conversely, that study did not conclude that utilization data on identified practitioners ought to be released because of concerns about "unwarranted harm to professional reputations caused by misleading or incomplete data, and the likelihood of a chilling effect on peer review stemming from physician fears of data misuse" (p. 8). Finally, that committee decided that when the data in question relate to quality of care, "the potential harms of requiring public disclosure [by PSROs] in identified form would outweigh the potential benefits" (p. 8).

15 Issues relating to selective contracting for HMOs, IPAs, PPOs, and other types of health plans that may emerge in the coming years of health care reform go well beyond those mentioned in Chapter 2. Selective-contracting decision making on the part of health plan managers is likely to differ in philosophical, operational, legal, and other ways from steps that patients and consumers take to choose (or drop) plans, providers, or physicians. In this context, one might speculate that patients and consumers would use information obtained through public disclosure to make judgments or choices about health plans, providers, or physicians, whereas plan executives might rely more on information developed internally. Nevertheless, HDOs (and policymakers) should not underestimate the sensitivity that health plan executives may have to public opinion and image, and this factor may have a synergistic or leveraging effect on the effectiveness of HDO disclosure activities.

16 The rules governing due process and other aspects of quality assurance and peer review are too complex to explore here, but see the IOM report on confidentiality of peer review information in the Professional Standards Review Organization (IOM, 1981) and other work by Gosfield (1975). The IOM report on a quality assurance strategy for Medicare describes the PRO program in detail and reinforces the extent to which information on substandard performance of physicians and hospitals will be kept confidential through many steps before a more public "sanction" step is begun (IOM, 1990).

A decade later, essentially the same approach is in place for PROs. PROs clearly provide considerable information to individual physicians under review and to the hospitals where they practice. PRO regulations, however, hold most quality-related information to be confidential and not subject to public disclosure,18 and PROs are exempted from the requirements of the Freedom of Information Act. PROs are required to disclose some confidential information to appropriate authorities in cases of risk to public health or fraud and abuse.

Public Disclosure and Feedback

Some readers may believe that a tension exists between public disclosure and feedback, but the committee believes that both will be important tools available to HDOs to improve quality and foster informed choices in health care. Thus, it voices support for both functions, believing that one activity does not—or at least need not—discredit the other and that effective combination strategies can be designed. It sees public disclosure as a powerful motivator for physicians to participate more fully in their organizations' QA/QI activities.

The challenge to HDOs may be to decide on policies that will foster the greatest improvements in quality of care, patient outcomes, and use of health care resources. Some advances will be achieved more by public disclosure, particularly actions that aim to get useful descriptive data to the public in a timely way, but others may be aided chiefly by private feedback in the QA/QI context. This clearly is not an either/or situation. Combinations of approaches will probably be the most desirable strategy, and the exact combinations are likely to differ by type of provider, geographic area, nature of the data under consideration, and similar factors.

SUMMARY

This chapter has addressed the challenges posed for HDOs in two critical sets of activities: public disclosure of quality and cost data and information, and private feedback of similar information to health care providers in efforts to help them monitor and improve their own performance. The HDO will need an impeccable reputation for fairness, evenhandedness, objectivity, and intellectual rigor. This will be hard to earn and hard to maintain but essential if HDOs are to play the key roles envisioned for them.

The committee believes that public disclosure of information, particularly evaluative or comparative data, must give due regard to the possible harms that may unfairly be suffered by institutions and individuals. The committee thus takes the position that public disclosure is a valuable goal to pursue, to the extent that it is carried out with due attention to accuracy and clarity and contributes to the QA/QI programs that health care institutions and organizations conduct internally.

The committee identified several important aspects of public disclosure. These included topics for HDO analysis and release, who is identified in material so released, questions of the vulnerability to harm of providers and clinicians so identified, various methodologic questions about analysis, alternative approaches to public disclosure, and specific considerations about using quality-of-care data in QA/QI programs and the protections accorded peer review information.

In explicating its findings and conclusions, the committee advanced several recommendations advocating analyses and public disclosure of results. It specifically recommended that HDOs produce and make publicly available appropriate and timely summaries, analyses, and multivariate analyses of all or pertinent parts of their databases. The committee recommends that HDOs regularly produce and publish results of provider-specific evaluations of costs, quality, and effectiveness of care (Recommendation 3.1). Furthermore, the committee recommends that a health database organization report the following for any analysis it releases publicly: its general methods for ensuring completeness and accuracy of its data; a description of the contents and the completeness of all data files and of the variables in each file used in the analyses; and information documenting any study of the accuracy of variables used in the analyses (Recommendation 3.2).