4

Dealing with and Improving DOD's M&S

SIGNIFICANCE OF THE ISSUES

As discussed in Chapter 3, the quality, substantive content, and proper use of models and simulations are significant issues in being able to achieve the full promise of M&S. These were indeed a motivation for the panel's original tasking, as noted in Chapter 1. We now turn to those issues.

Models as Repositories of Knowledge

One reason that DOD's models, especially the higher-level models, have significant validity problems is that many model builders treat them merely as tools to be manipulated as required in the context of a particular study. With this view, a developer may well set much lower standards for model quality than would have been set if the developer believed that these models were products that would be handed over to others for their own use. From this “tool” perspective, model and simulation quality is associated with the study rather than with the models themselves. Another consideration is that, historically, attempts to build comprehensive general-purpose models have often collapsed under their own weight.

The “model as tool” perspective has immediate practical value in that it permits the particular study to be completed more efficiently; however, it has very bad longer-run consequences. These untoward long-run effects arise in part because the perspective (1) defeats the potentially positive role that M&S can play and (2) forfeits the longer-run benefits that can come from good software process. More specifically,

-

Simulation models are becoming unique repositories of knowledge about complex systems. As such, models must be carefully specified, fully documented, and designed to be evolvable as knowledge about the system or phenomenon being modeled is gained.

-

Simulation models are increasingly becoming an important mechanism by which knowledge is communicated and passed on.

-

In the future, simulation exercises will become a major vehicle through which the intuition and insight of military officers about combat will be developed and enriched. Consequently, it is of great importance that those models convey reality as much as possible.

-

Well-developed, well-documented, and well-calibrated models and simulations can be used in many studies. When such models are reused, a wide audience receives training on them, improvements to the models can be suggested, and an evolutionary process can be established through which those models can be continually improved.

M&S already serves as a repository of insight. For example, when a new analyst begins work in an organization, his or her education is often centered on “learning the model.” The organization's principal model is the frame of reference for discussion and tasking. Even though the analyst may be told which aspects of the model are realistic and which are unrealistic, the model as a whole is frequently at the core of his or her work. In such a case, it is important to institute an improvement process within which the model can continually evolve and be upgraded.

Models, then, are far more than tools. Appropriate models can represent and communicate our knowledge. Inappropriate models (or inappropriate use of models) can distort situation assessment and choices among alternative courses of action, whether in the choice of weapons systems or in the choice of operational strategies and tactics in the midst of war. Successful military operations are increasingly dependent upon the use of sound, well-documented models. Moreover, the advent of distributed simulation and the evolving character of DOD command and control systems are additional reasons why users of models will often not be part of the same organization that developed them. These trends put a far greater onus on the model or simulation developer. In distributed-simulation applications (and in the future world of M&S in which frequent use is made of repositories), models and simulations must be increasingly well developed and well documented and must be constructed so that they can be reused across studies and improved as needs arise.

The potential of M&S for impact on the Department of the Navy, both good and bad, will increase greatly over the next 30 years. The Department of the Navy should have a great interest in capturing the potential benefits while avoiding the many potential problems, but this will not happen without focused institutional attention and some significant changes in current policies toward M&S. For example, the issues of model quality and content have gotten short shrift for years relative to the attention paid to the more tangible underlying computer technologies such as graphical interfaces, processing power, and network connectivity. Model quality and content need much greater institutional attention.

THE MULTIFACETED NATURE OF MODEL QUALITY

What determines the “quality” of a model (including simulation models)? Perhaps the most important elements of quality are the following: 1

-

Knowledge. Validity of the knowledge represented.

-

Design. The structure used to represent the system, which can affect the model's clarity and appropriateness, as well as its maintainability.

-

Implementation. The faithfulness and soundness of the model's implementation as a computer program.

-

Software quality. The quality of that program as software, taking into account considerations such as comprehensibility, modifiability, and reusability.

-

Appropriateness of use. The way in which the model is used substantively, particularly with respect to dealing with uncertainties. Assuming that programming is correct, it is often difficult to assess a model's quality outside of context.

In what follows, the panel discusses these issues in two pieces. First, the issues of knowledge, design, and use will be considered (items 1, 2, and 5) because they are often closely intertwined. In doing so the panel will use the theme of model uncertainty as a focal point. This is unorthodox, but quite useful for the present purposes. It will also help motivate the approach the panel suggests to research, an approach that recommends both research focused on warfare areas (Chapter 5) and more fundamental research in modeling theory (Chapter 6).

UNCERTAINTY AS A CORE REALITY IN BUILDING AND USING MODELS

First, of course, there is uncertainty about how models should represent the world. That is, there are uncertainties in our knowledge. Going beyond this, however, a central reality is that models and data will remain inherently imperfect, especially with respect to higher-level matters such as those depicted in operational- and campaign-level M&S. Therefore, the Department of the Navy must also improve its willingness and ability to deal with uncertainty in models and in the predictions made by them. This will require a major cultural shift (throughout the DOD community).

|

1 |

A different breakdown might be in terms of knowledge, quality as a software artifact, and both cost and benefit to users. See also the discussion in Appendix B. |

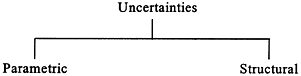

FIGURE 4.1 Taxonomy of model content uncertainty problems.

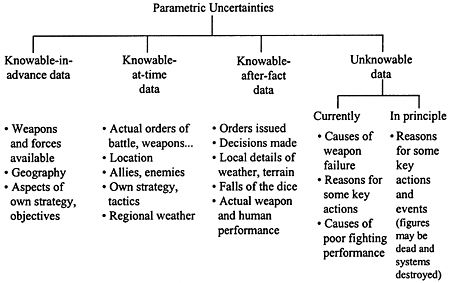

FIGURE 4.2 Taxonomy of uncertainties in parameters.

An initial taxonomy for dealing with uncertainty in models is presented in Figure 4.1. Uncertainties can lie in the models' parameters and, more fundamentally, in the models' basic structures. 2

Figure 4.2 provides a taxonomy of parametric data. Starting on the left, we see the class of data that are knowable in advance, at least with enough effort. This includes data on friendly and enemy weapons and forces.

There is then a class of data that are unknowable at the time of force-planning decisions, but knowable at the time of an actual contingency. This

|

2 |

A different breakdown involves limitations of understanding, computation, and measurement. |

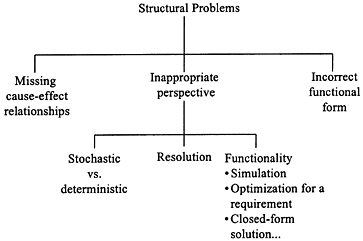

FIGURE 4.3 Taxonomy of structural problems in models.

includes, for example, the real order of battle for both sides, and whether the United States and the enemy have allies.

The next class is data that are not knowable until after the battle or war (and perhaps not even then as a practical matter). For example, the simulated battle may depend on particular decisions made by the commanders of both sides. One might estimate what those decisions would be by drawing upon logic, doctrine, and even cognitive modeling of individual commanders if one knew who they were, but the results of such efforts would still be estimates. The real decisions would not be known until afterward. Similarly, the actual cloud cover over a particular bridge at a particular time is known only when the time comes. But such things can be determined afterward from records. Thus, models using such parameters are by no means unscientific or circular. They are, however, limited in their ability to predict.

Still other classes consist of data that are unknowable. That is, there is some fundamental parametric uncertainty, even after the fact. For example, the morale of a key unit might be bad at a critical time because of illnesses, exhaustion, poor leadership, or a host of other reasons. The unit might be destroyed, along with its memories. Some such unknowable data can be represented as randomness, but it may be randomness of a complex sort.

In summary, even a “perfect” model may have limited predictive capability. It may be quite useful for description and for developing insights about what might happen. And it might be predictive in some circumstances where the unknown factors are not very important.

Parametric uncertainty, then, is quite large. However, it is only part of the story. Figure 4.3 provides one taxonomy of structural problems in models, that is, problems embedded in the choice of entities and, within processes, the logic and algorithms. Since we often do not know precisely what the “correct” structure is, these problems correspond to a different form of uncertainty. 3

|

BOX 4.1 Examples of Structural Problems

Order of magnitude errors possible, also “tail” effects |

To make this less abstract, Box 4.1 lists some examples of structural problems. Some of them are probably quite familiar to military readers; others are known primarily to specialists. Commenting on the last two items as examples, the panel notes that much modern planning for next-generation warfare depends sensitively on the effectiveness of long-range precision strike. Theater-level analyses, however, typically estimate that effectiveness by merely concatenating planning factors about kills per sortie (or volley) and sorties (or volleys) per day. Such analysis is a crude linear and deterministic approximation that can be wrong. To a commander concerned about troops in the field, troops allegedly protected by long-range fire, it might be of interest to know that the correct mathematics would involve a probability distribution for the effectiveness of that fire. Even if the “best estimate” indicated that the enemy forces would be destroyed before engaging small friendly units, there might be a substantial “tail,” that is, a substantial probability that many enemy forces would in fact penetrate and engage. 4

|

3 |

See also Appendix B on virtual engineering, which gives a related but somewhat different taxonomy. |

|

4 |

See also the discussion in the chapter on analysis and modeling in Defense Science Board (1996a), Vol. 2. See also Appendix J on probabilistic dependencies. |
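The contrast drawn above between concatenated planning factors and a probability distribution with a “tail” can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration and is not drawn from the report: the function names, the force size, the number of sorties, the shots per sortie, and the per-shot kill probability are all hypothetical, and each shot is treated as an independent trial, which ignores the probabilistic dependencies that a real analysis would have to address.

```python
import random


def deterministic_survivors(enemy, sorties, kills_per_sortie):
    """Planning-factor estimate: multiply the factors and subtract from the force."""
    return max(0, enemy - round(sorties * kills_per_sortie))


def simulate_survivors(enemy, sorties, shots_per_sortie, p_kill, rng):
    """One stochastic replication; each shot is an independent trial (an assumption)."""
    shots = sorties * shots_per_sortie
    kills = sum(1 for _ in range(shots) if rng.random() < p_kill)
    return max(0, enemy - kills)


def tail_probability(threshold, trials, enemy, sorties, shots_per_sortie, p_kill, seed=0):
    """Estimate the probability that at least `threshold` enemy vehicles survive."""
    rng = random.Random(seed)
    count = 0
    for _ in range(trials):
        survivors = simulate_survivors(enemy, sorties, shots_per_sortie, p_kill, rng)
        if survivors >= threshold:
            count += 1
    return count / trials


if __name__ == "__main__":
    # Hypothetical numbers: 4 shots per sortie at p_kill = 0.5 gives 2 expected kills per sortie.
    best_estimate = deterministic_survivors(enemy=100, sorties=50, kills_per_sortie=2.0)
    tail = tail_probability(threshold=5, trials=2000, enemy=100,
                            sorties=50, shots_per_sortie=4, p_kill=0.5)
    print("planning-factor survivors:", best_estimate)
    print("estimated P(at least 5 survivors):", tail)
```

With these illustrative inputs the planning-factor calculation reports that no enemy vehicles survive, while the stochastic version assigns a non-negligible probability to several vehicles getting through. The specific numbers carry no significance; the sketch only shows why the two calculations can disagree.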

The overall point of Box 4.1 is to dispel the myth that results depend only on model data (i.e., the assumptions about parameter values). In fact, they also depend on built-in features.

APPROACHES TO DEALING WITH UNCERTAINTY

Coping with ubiquitous uncertainty, both parametric and structural, requires something increasingly referred to as “exploratory analysis,” as distinct from analysis focused on the implications of allegedly best-estimate assumptions and some modest sensitivities. Described more fully in Chapter 6 and Appendix D, exploratory analysis is only now becoming computationally feasible as the result of massive increases in computer power. 5 However, current M&S has not been designed for this kind of analysis under uncertainty. Nor have civilian and military leaders, or analysts for that matter, been educated to approach problems with a full confrontation of uncertainty. Changing this circumstance for next-generation M&S is therefore important. This will require basic research, education, and cultural changes.
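As a rough illustration of the idea, and not of the methods described in Chapter 6 or Appendix D, the sketch below runs a toy model over every combination of several uncertain inputs, including one structural switch, and summarizes the spread of outcomes instead of reporting a single best-estimate case. The model, its parameter names, the grid values, and the goal are all hypothetical.

```python
from itertools import product


def toy_halt_model(kills_per_sortie, sorties_per_day, enemy_vehicles, linear):
    """Hypothetical stand-in model: days needed to destroy half the enemy force."""
    target = enemy_vehicles / 2
    killed, day = 0.0, 0
    while killed < target and day < 365:
        day += 1
        # Structural switch: constant daily kills, or kills that fall off as targets thin out.
        fraction_left = 1.0 if linear else (enemy_vehicles - killed) / enemy_vehicles
        killed += kills_per_sortie * sorties_per_day * fraction_left
    return day


def explore(model, grid):
    """Run the model for every combination of values in the grid."""
    names = list(grid)
    cases = []
    for values in product(*(grid[name] for name in names)):
        case = dict(zip(names, values))
        cases.append((case, model(**case)))
    return cases


def summarize(cases, goal_days):
    """Report the range of outcomes and the share of cases that meet the goal."""
    outcomes = [outcome for _, outcome in cases]
    meeting = sum(1 for outcome in outcomes if outcome <= goal_days)
    return {
        "min": min(outcomes),
        "max": max(outcomes),
        "fraction_meeting_goal": meeting / len(outcomes),
    }


if __name__ == "__main__":
    grid = {
        "kills_per_sortie": [0.5, 2.0],   # parametric uncertainty
        "sorties_per_day": [50, 100],     # parametric uncertainty
        "enemy_vehicles": [1000],
        "linear": [True, False],          # structural uncertainty
    }
    print(summarize(explore(toy_halt_model, grid), goal_days=7))
```

The design choice worth noting is that the structural assumption is swept alongside the parameters, so the summary reflects both kinds of uncertainty discussed in this chapter. A full-factorial grid grows quickly with the number of inputs, which is one reason such analysis depends on ample computing power.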

“DOING BETTER” ON MODEL CONTENT: NEED FOR MANAGERIAL CHANGES, NOT JUST TOKEN EXHORTATION

Given this quick review of types of problems, what can be done? The most important point is that doing better requires commitment to model content. The panel suggests an approach for the Department of the Navy as indicated in Box 4.2. It starts with an expression of top-level interest and concern. It then involves a strategy. The principal notion is that model quality tends to be highest when the modeling has been done by people working on relatively specific problems. Thus, the strategy does not call for increasing money for M&S content per se, much less for investing in “hobby shops,” but rather for investing in research in each of a number of important warfare areas that need such research to avoid serious errors that might cost lives or lose wars. For each such program the panel suggests providing terms of reference that explicitly demand empirical and theoretical research, not mere modeling. Further, the panel recommends review by science advisory panels, whose members are highly qualified in terms of education and research experience.

The panel also sees the need, therefore, to increase the supply of military officers qualified to oversee the M&S efforts. The qualifications would involve solid technical education (e.g., M.S.s or Ph.D.s requiring research), experience, and, perhaps, certification in a “short course” for technically educated mid-career officers about to take on managerial responsibilities involving M&S. There is already a shortage of such officers, with the result that some officers find themselves managing M&S with little or no relevant background except for general-purpose managerial skills. 6

|

5 |

See Bankes (1993, 1996) for a technologist's perspective. See Davis et al. (1996) for applications to force-planning analysis. |

|

BOX 4.2 An Approach to Improving Model Content (Quality) Possible Instruments and Approach

Strategy: Establishing Good Exemplar Programs in Key Warfare Areas

|

The panel also sees a need to improve incentives for young officers to work on model quality, and to do a better job of matching background to responsibility. More investment in education, and changes in promotion criteria, are needed.

|

6 |

This problem cuts across the services, but with respect to the Navy, it is probably worsened by the lack of a substantial and prestigious analytic shop analogous to Air Force Studies and Analysis in its peak years or to the OP-96 organization disbanded in the 1980s. The panel notes, however, that it is not advocating that M&S and analysis be the province solely of “analysts.” Involving military officers with substantial operational experience, and forcing modelers and analysts to become acquainted with operational realities, is critical. It is now arguably more feasible with the advent of distributed simulation as a key factor in training and exercises. See Davis (1995b). |

VERIFICATION, VALIDATION, AND ACCREDITATION

General Observations

Any report discussing model quality, content, and validity must address what DOD calls verification, validation, and accreditation (VV&A). Much has been written about the subject, however, so the panel merely touches here upon highlights relevant to the current study. 7

Roughly speaking, verification testing establishes whether a computer program correctly implements what was intended by the designer. A verified program should run without crashing, should accomplish its numerical calculations correctly, and so on. In practice, verification testing not only uncovers a variety of “bugs” ranging from typographical errors to incorrect bounds on variable values, it also uncovers errors or shortcomings that trace back to design (e.g., omitted logical cases). Nonetheless, verification's purpose is primarily to test implementation, not the correctness of the underlying model. Validation is the continuing process of establishing the degree to which the model and program describe the real world adequately for the intended purpose. Accreditation is an official determination that a model is adequate for the intended purpose.

In the early 1990s, when DOD established the Defense Modeling and Simulation Office (DMSO), one priority was to establish procedures for assuring validity. DMSO prepared a formal instruction to DOD components, defining terms and objectives and requiring that the components develop VV&A plans. The DMSO also sponsored or encouraged a number of efforts to define VV&A issues with some care and establish guidelines that could be adopted by project leaders and managers throughout the DOD. There now exists a good deal of related documentation. 8 One prominent feature of that documentation is a community consensus on a number of important principles, notably, 9

-

There is no such thing as an absolutely valid model.

-

VV&A should be an integral part of the entire M&S life cycle (i.e., quality cannot be “inspected in,” to quote a well-known aphorism).

-

A well-formulated problem is essential to the acceptability and accreditation of M&S results.

-

Credibility can be claimed only for the intended use of the model or simulation and for the prescribed conditions under which it has been tested.

-

M&S validation does not guarantee the credibility and acceptability of analytical results derived from the use of simulation.

-

V&V of each submodel or federate does not imply overall simulation or federation credibility and vice versa.

-

Accreditation is not a binary choice.

-

VV&A is both an art and a science, requiring creativity and insight.

-

The success of any VV&A effort is directly affected by the analyst.

-

VV&A must be planned and documented.

-

VV&A requires some level of independence to minimize the effects of developer bias.

-

Successful VV&A requires data that have been verified, validated, and certified.

|

7 |

For a range of current views of VV&A, see Sikora and Williams (1997), Muessig (1997), Chew (1997), Stanley (1997), Youngblood (1997), and Lewis (1997). |

|

8 |

The most comprehensive DOD study on the matter may be Defense Modeling and Simulation Office (DMSO, 1996a) which describes a number of consensus principles, prescriptive material drawn from academic and industrial experience with model development and testing, and illustrative formats for reporting results of VV&A activities. See also Davis (1992), MORS (1992), Youngblood et al. (1993), and Sanders and Miller (1995). |

|

9 |

Many of these principles are discussed by Hillestad et al. (1996) based on experiences at RAND in developing and maintaining campaign models. |

These principles are significant in large part because they are not obvious to many users of M&S, and even to many military officers and civilian officials who find themselves involved in M&S. Indeed, it is apparently natural for many individuals to assume that models can be tested, once and for all, and either certified or rejected. Such people are implicitly seeing models as commodities (or as physics models).

Implications for Management

The consensus principles accepted by workers, if not their managers, have many implications for model management (Davis, 1992). Perhaps the most important is recognizing that model quality must be built in from the outset and that assuring high-quality knowledge content will often require years of continued effort and a permanent effort to maintain and update. With respect to VV&A,

-

VV&A should be seen as merely one part of a much larger effort to ensure model quality, an effort that depends for its success on understanding the relevant phenomena, representing them in modeling terms appropriate for intended applications, implementing that representation in a computer model, and aggressively seeking out the data needed to use the model.

-

It is not generally possible to assess a model's validity once and for all. First, validity depends on contextual details; second, most models and/or their data change frequently. Indeed, the best models for a particular effort—whether training, acquisition, or operations related—are often assembled (or patched together, to use an older metaphor) for that specific purpose.

-

An enlightened approach to model development will anticipate and support long-term continuing research on the warfare phenomena at issue, as well as

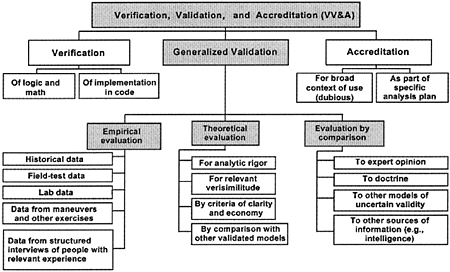

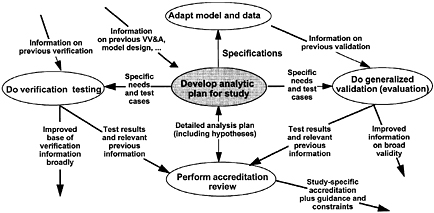

FIGURE 4.4 A taxonomy of VV&A methods to assist managers in mid- and long-term planning to ensure model quality.

-

related data collection. It will also support a broad range of information collection and empirical testing.

Consistent with this, managers and sponsors of M&S need to support the knowledge base with a broad range of information collection and empirical testing, as suggested by the shaded portion of Figure 4.4. Doing so calls for a long-term program because, in practice, shorter-term efforts such as the computer coding for a model can seldom if ever accomplish more than a tiny fraction of what Figure 4.4 suggests. In organizational terms, Figure 4.4 implies that the research may need to be supported by an office with a longer time horizon and a research orientation, even though the intention is to have the research be strongly focused. Over a period of years, such an organization could build a strong knowledge base in a given area with the combination of activities suggested. Note that these include not only the commonly used evaluation by comparison with expert opinion, doctrine, and other models, but also empirical work and theoretical studies. 10

|

10 |

Our discussion is too brief with respect to verification. Verification testing is essential and often insufficiently pursued. Even numerical methods are sometimes inadequate to deal with nonlinearities. A key to success is assuring quality up front with clear and well-reviewed designs and routine component testing along the way. Also, many software engineering tools can help a great deal if employed from the outset. |

FIGURE 4.5 VV&A as elements of an application-centered process over time.

A second managerial/organizational implication of the conclusions about VV&A principles is that accreditation should be seen as applying to a specific activity such as a particular study or exercise, and not to a model. The panel recognizes that this runs counter to the natural desire of the military and civilian managers who want to have their models certified once and for all, but the conclusion is central. If one truly cares about M&S quality, rather than about having gotten a “check mark” for that M&S, then the context of use is critical. To be sure, an M&S can and should be reviewed and certified for basic soundness and performance, and most important, perhaps, for whether it is adequately documented with “truth in advertising” about what its strengths and limitations are, and cautions for users. Such reviews should be strongly encouraged. However, they have essentially nothing to do with whether a subsequent application is sound. That application, for example, will be likely to require a special database. The data will probably dictate results. What significance, then, would an earlier model review have? Nor should the data be held constant, because in practice that can often undercut the quality or relevance of the work.

The inexorable conclusion, again, is that the quality of an application must be accredited with full appreciation of the specific context. This, then, is not so much a VV&A activity for an M&S as it is a more general review of a substantive activity such as a study or fleet exercise.

If one takes this view, then Figure 4.5 suggests how to implement it. It shows the critical element of accreditation as the development of an analytic plan for the study (or exercise, etc.). This plan will then dictate model adaptations, specialized verification and validation testing, and the criteria to be used in that testing. The result (bottom right) is a study-specific accreditation, probably with guidance such as “Do not purport to reach conclusions on . . . because the study is not adequate for that purpose. Further, report results as ranges over the specified uncertainty band, because ‘point results’ would be misleading.”

As of the time this report was being prepared, the Department of the Navy did not appear to have a managerial concept for validation-related research. Further, its VV&A plan was deliberately permissive, in keeping with the Navy's tradition of decentralized activity on M&S. That approach may be desirable in many respects, but the failure to plan supportive research activities is a problem, albeit one that is DOD-wide in scope.

An Alternative to Emphasis on VV&A Processes

While VV&A is unquestionably important, it is doubtful that a focus on bureaucratic process will greatly improve the quality of models and simulations. Too many of the problems start at the outset as noted above. In Chapter 7 the panel recommends a “market-oriented approach” that emphasizes increasing the testability of M&S and then exposing the M&S to extensive “beta testing” by organizations such as the Naval War College. The panel also recommends demanding and then exposing to outside scientific review the “conceptual models” on which M&S should be based. Today, such conceptual models often do not even exist and hence cannot be reviewed, but that situation should change. The current emphasis on building and publishing object models is an important step in the right direction, as is work on common models of the mission space (CMMS).