6

Reliability and Quality Assurance Issues for Reuse Systems

In recent years, the term "barriers" has come to serve as a comprehensive descriptor of processes that tend to reduce the risk of waterborne contaminants. Watershed protection programs, water treatment processes, and maintenance of the water distribution infrastructure are all considered barriers to certain types of contamination. The concept of barriers is attractive because it promotes integrated thinking about actions that at first appear unrelated. Potable reuse projects require more robust multiple barriers than conventional water systems do, especially for microbiological contaminants. For example, the presence of high concentrations of Cryptosporidium in a water supply for even a very short period poses a significant risk, whereas high levels of lead would be expected to cause detrimental effects only if the lead persisted for much longer periods of exposure.

As an engineered system, every water reclamation facility has the potential for out-of-specification performance. This chapter considers the practical management alternatives for reducing the risks posed by the potential for such performance failures. The chapter also considers the role of public health surveillance in providing an early warning system for potential health effects.

Multiple Barriers

From 1946 until 1980, 41.7 percent of drinking water disease outbreaks in community water supplies were attributed to inadequate or interrupted treatment (Lippy and Waltrip, 1984), and similar treatment failures have been noted in more recent years (Herwaldt, 1991). Multiple barriers to contaminant breakthrough are seen as one way of preventing such outbreaks. In addition, increased concern over resistant viruses and protozoa has led the water treatment community to pay more attention to the concept of incorporating multiple barriers to such pathogens in the water system.

Velz (1970) employed the term "multiple barriers" for the concept of providing wastewater treatment when a receiving water is used for water supply. In conventional (non-reuse) water treatment, the use of multiple barriers to pathogens within a single facility was advocated by the American Water Works Association Organisms in Water Committee (1987). The multiple barriers concept has, in effect, been embodied in the federal Surface Water Treatment Rule, as well as a number of the state-specific reuse requirements noted earlier in this report. Currently, all processes that help to reduce the risk of waterborne contaminants are referred to as barriers. Thus, watershed protection programs, engineered water treatment processes such as disinfection and filtration, and maintenance of the water distribution infrastructure are all considered barriers to certain types of contamination (even though some of these might cause certain aspects of water quality to deteriorate).

Different types of contamination require different types of barriers. For instance, disinfectants aimed at microbial pathogens do not mitigate chemically based risks and in fact may exacerbate them. Likewise, activated carbon will remove many chemical contaminants but does little to remove viruses. Accordingly, each barrier must be examined separately for its efficacy for removal of each contaminant. The cumulative capability of all barriers to accomplish removal should be evaluated considering the levels of the contaminants in the source water, the nature of the expected health effect associated with the contaminants, the goals that have been set for the potable supply, and any additional safety factors.

The concept of multiple barriers is implicit in the design of many advanced wastewater treatment projects investigating the feasibility of reuse. These projects typically use several physical and chemical barriers to pathogens, which can cause problems if present at high levels for even a short time, but only one or two aimed at chemical contaminants, which must generally be present longer to affect health. One such design is the San Diego project, where a failure in the ion exchange process would probably cause the finished water to exceed the nitrate target until the ion exchange unit was repaired, but where there is no one process whose failure would prevent virus goals from being met.

For drinking water supplies in general, using multiple barriers might mean choosing the most pristine available water source, protecting it from current and future contamination, providing multiple engineering processes to remove contaminants in a water treatment plant (e.g., pre-oxidation, coagulation, filtration, and disinfection), and protecting the water quality from deterioration in the distribution system (e.g., by adding corrosion inhibitors and additional disinfectant and keeping the water under pressure to prevent contaminated water from entering the system). In places where reclaimed water is used to augment natural supplies or where source water cannot be protected from upstream discharges of water with impaired quality, the importance of the barriers at the water treatment plant or wastewater reclamation plant is correspondingly increased.

Evaluating Barrier Independence

The independence of multiple barriers is a key aspect of system reliability and safety, especially for removal of pathogens. The greater the degree of independence among different barriers, the more they can be relied upon to serve as backups for one another. The water treatment process train should incorporate multiple, independent treatment barriers of sufficient redundancy that required contaminant removal levels will be achieved even if the single most effective treatment barrier is not performing. For example, sedimentation and filtration should not be considered independent barriers if the success or failure of both depends on proper coagulation prior to the sedimentation step. Failure in this type of system contributed to the 1993 Cryptosporidium outbreak in Milwaukee. On the other hand, design of a sufficiently deep intake pipe for surface water extraction and the use of disinfection are independent barriers to microbial contamination.

Individual treatment barriers (or unit processes) should be evaluated individually and collectively with regard to their capacity for contaminant removal and their prospects for failure. Analysis of the contaminant-removal capabilities of independent barriers involves several steps. First, the contaminants of concern are identified, and reasonable maximum and target levels are determined. (The difference between target level and maximum level provides a margin of error.) Based on information on contaminant levels in the source water, the necessary contaminant reduction, usually expressed as logs of removal, is estimated. Each treatment process is evaluated for its removal capability, and this information is used to estimate the overall removal to be accomplished by the process train. Finally, an estimate is made for how much the overall removal will be compromised if the single most important independent barrier (unit process) were to fail.

Table 6-1 illustrates this analysis for a hypothetical comparison of two treatment trains. The table compares overall efficiencies of the process trains for removing four hypothetical microbial pathogens (A, B, C, and D). This hypothetical analysis illustrates that both process trains provide similar protection against pathogens A and B, but the train that includes the membrane process provides considerably greater protection against pathogens C and D.
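The steps of this analysis can be sketched in a few lines of code. The following is a minimal illustration, not a method prescribed by this report: the function name `barrier_analysis` and the dictionary layout are assumptions made for the example, and the inputs are the hypothetical values that Table 6-1 lists for pathogen C in the physical/chemical process train.

```python
import math


def barrier_analysis(source_level, safe_level, safety_factor, log_removals):
    """Summarize a process train for one contaminant.

    log_removals maps each unit process to its log10 removal credit.
    The "key barrier" is taken to be the process with the largest credit.
    """
    goal = safe_level / safety_factor                # target after safety factor
    required = math.log10(source_level / goal)       # logs of removal needed
    total = sum(log_removals.values())               # logs the full train delivers
    key_barrier = max(log_removals, key=log_removals.get)
    without_key = total - log_removals[key_barrier]  # train with key barrier failed
    return {
        "required": required,
        "total": total,
        "key_barrier": key_barrier,
        "without_key": without_key,
        "excess": without_key - required,            # negative means a shortfall
    }


# Hypothetical values from Table 6-1, pathogen C, physical/chemical train.
pathogen_c_removals = {
    "lime": 0.0,
    "recarbonation": 0.0,
    "filtration": 0.3,
    "GAC": 0.3,
    "AOP": 3.0,
    "UV": 2.0,
    "Cl2": 2.0,
}

result = barrier_analysis(
    source_level=1.0e2,
    safe_level=1.0e-5,
    safety_factor=1.0e3,
    log_removals=pathogen_c_removals,
)
print(result)
```

In this sketch the excess is computed from unrounded values, so it can differ by a fraction of a log from figures obtained by subtracting already-rounded table entries.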

Use of Environmental Buffers in Reuse Systems

By definition, indirect potable reuse projects include an "environmental buffer," that is, a natural water body that physically separates the product water of the wastewater reclamation plant from the intake to the drinking water treatment plant. A reservoir, river, or lake would be the environmental buffer for planned surface water augmentation. With ground water augmentation, the aquifer and/or soil (depending on whether direct injection is used) acts as the environmental buffer between the reclamation plant and the water production well. Surface water always receives subsequent treatment prior to distribution, while ground water may or may not.

The effectiveness of environmental buffers as barriers to various types of contamination is less well understood than that of engineered treatment processes. In different wastewater reuse applications the environmental barriers might include dispersion, dilution, sorption to the sediment and removal by deposition, chemical reaction, biodegradation, and abiotic transformation processes (photolysis and hydrolysis). Analyzing the effectiveness of environmental buffers for contaminant removal is complex, for a number of reasons. There are different removal processes for different contaminants, and different processes are expected to dominate depending on whether the water infiltrates through surface layers of the ground, is injected into deeper underground layers, or is discharged to a surface water reservoir. Even if all controllable factors are identical, local geology, biology, and climate undoubtedly affect the outcome.

In the absence of definitive research on the effectiveness of environmental buffers, attitudes about this topic have developed from a combination of anecdotal evidence and attempts to extrapolate from the behavior of other systems. The three perceived benefits of buffers are that they provide (1) an opportunity to further reduce contaminants through natural processes, (2) a substantial lag time between the exit of the advanced wastewater treatment system and entrance into the potable system, and (3) the opportunity for the water of wastewater origin to blend with natural waters in the environment. These three potential benefits are discussed below.

TABLE 6-1 Hypothetical Comparison of Two Multiple-Barrier Treatment Process Trains

| | Pathogen A | Pathogen B | Pathogen C | Pathogen D |
|---|---|---|---|---|
| Physical/Chemical Process Train | | | | |
| Secondary effluent | 1.00E+07 | 1.00E+01 | 1.00E+02 | 1.00E+01 |
| Nominal "safe" level | 1.00E+00 | 1.00E-05 | 1.00E-05 | 1.00E-05 |
| Safety factor | 1.00E+02 | 1.00E+03 | 1.00E+03 | 1.00E+03 |
| New goal | 1.00E-02 | 1.00E-08 | 1.00E-08 | 1.00E-08 |
| Required logs removal | 9 | 9 | 10 | 9 |
| Treatments | | | | |
| Lime | 1.7 | 2.0 | 0.0 | 0.0 |
| Recarbonation | 0.0 | 0.0 | 0.0 | 0.0 |
| Filtration | 0.3 | 0.3 | 0.3 | 0.3 |
| GAC^a | 1.0 | 1.0 | 0.3 | 0.3 |
| AOP^b | 5.0 | 6.0 | 3.0 | 1.5 |
| UV^c | 4.0 | 3.0 | 2.0 | 1.0 |
| Cl2^d | 4.0 | 5.0 | 2.0 | 0.0 |
| Total logs removal | 16 | 17 | 8 | 3 |
| Logs w/o key barrier | 11 | 11 | 5 | 2 |
| Excess logs | 2 | 2 | -5 | -7 |
| Process Train with Membrane Filtration | | | | |
| Secondary effluent | 1.00E+07 | 1.00E+01 | 1.00E+02 | 1.00E+01 |
| Nominal "safe" level | 1.00E+00 | 1.00E-05 | 1.00E-05 | 1.00E-05 |
| Safety factor | 1.00E+02 | 1.00E+03 | 1.00E+03 | 1.00E+03 |
| New goal | 1.00E-02 | 1.00E-08 | 1.00E-08 | 1.00E-08 |
| Required logs removal | 9 | 9 | 10 | 9 |
| Treatments | | | | |
| MF^e | 5.0 | 0.5 | 5.0 | 5.0 |
| RO^f | 4.0 | 4.0 | 5.0 | 5.0 |
| Stripper | 0.0 | 0.0 | 0.0 | 0.0 |
| AOP^b | 5.0 | 6.0 | 3.0 | 1.5 |
| UV^c | 4.0 | 3.0 | 2.0 | 1.0 |
| Cl2^d | 4.0 | 5.0 | 2.0 | 0.0 |
| Total logs removal | 22 | 19 | 17 | 13 |
| Logs w/o key barrier | 17 | 13 | 12 | 8 |
| Excess logs | 8 | 4 | 2 | -2 |

^a GAC = granular activated carbon filtration. ^b AOP = advanced oxidation process. ^c UV = ultraviolet disinfection. ^d Cl2 = chlorination. ^e MF = microfiltration. ^f RO = reverse osmosis.

Reduction of Contaminants Through Natural Processes

The value of the soil-aquifer system in attenuating pathogens has long been known. During the middle of the nineteenth century, scientists and doctors observed that epidemics of cholera and typhoid were more common in cities served by river water than in cities served by ground water. These observations stimulated the development of "natural filters," a concept similar to the filtration of drinking water through sand banks as practiced in Germany today (Sontheimer, 1980). These techniques reportedly worked well in controlling bacterial pathogens. Soil-aquifer systems have demonstrated substantial capacity to remove bacterial pathogens and organic chemicals (NRC, 1994). The ability of aquifer systems to reduce viruses is less certain, and in some instances, viruses have been reported to survive transport for long distances in ground water (NRC, 1994).

The differences between surface water and ground water storage as environmental buffers are profound, both in terms of processes that may reduce the levels of contaminants of concern and in terms of the degree to which the environmental buffer can be influenced by short-circuiting. The potential for short-circuiting of flows introduced into a surface water body deserves special attention.

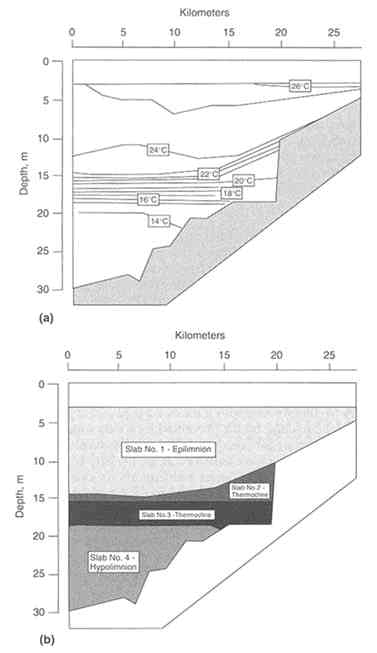

More often than not, surface water bodies are vertically stratified due to differences in density. Temperature is usually the primary driver of this density difference, although salinity also plays a role. Raphael (1962) demonstrated the importance of depth in determining mixing in reservoirs. He characterized the reservoir as a series of horizontal slabs, each corresponding to a different density layer. Since then it has been shown that this density stratification is extremely stable, that very little mixing occurs between stratified layers, and that a small temperature difference will induce stratification (Fischer et al., 1979). Many reservoirs are stratified in this way for most of the year. In warm climates, stratification may persist nearly year-round.

Figure 6-1(a) shows the structure of the Wellington Reservoir during the summer months. The Wellington Reservoir is modeled as four slabs of water covering a temperature range of about 3 to 4°C each. The top slab (23-26°C) is the epilimnion and extends from a depth of 0 to 15 m. The second slab (19-22°C) is the upper half of the thermocline, which is a much thinner layer at a depth between 15 and 16 m. The third slab (16-19°C) represents the bottom half of the thermocline, another thin layer located 16-18 m deep. The fourth and last slab (13-15°C) is the hypolimnion, located between 18 and 30 m of depth.

Water introduced into a reservoir will travel vertically up or down to the slab that corresponds to its temperature, after which it will spread horizontally. Horizontal mixing within the slabs is easily accomplished. Vertical mixing between slabs is very limited, hence the term "slab." In the reservoir model shown in Figure 6-1(b), an influent water at a temperature of 23°C would mix throughout slab 1 (epilimnion) and be diluted by mixing with nearly half the contents of the reservoir. An influent water at a temperature of 21°C, on the other hand, would mix only within the much smaller percentage of reservoir water in slab 2 (the upper thermocline).

The location and depth of reservoir inlets do not heavily influence this dynamic. No matter what depth water is introduced, it will seek water of its own density. Cold water discharged into the warm layers on the top will sink; warm water discharged into the cold layers on the bottom will rise.

In contrast, the depth of the reservoir outlet can have an important impact on whether the reclaimed water finds a "short-circuit" through the natural system, preventing it from receiving all the benefits of natural treatment. In Figure 6-1(b), if the reservoir's outlet is 15.5 m deep and water is discharged into the reservoir at 21°C, the mixing will be only within slab 2, which also contains the outlet, and the discharged water will move almost directly to the outlet. The result will be a very serious short-circuiting problem. Tests conducted at Lake Youngs for the Seattle Water Department under such conditions showed that water marked by tracers passed through the system in 31 hours, even though the lake's nominal detention time was about 30 days (Ellis, 1995).

In the Wellington example, if the outlet is placed at a depth of 25 m in slab 4, short-circuiting can be prevented for a very long time because of the poor circulation between slabs. Tracer tests conducted under such conditions in the San Vicente Reservoir for the City of San Diego (Flow Science for Montgomery Watson and City of San Diego, 1995) showed that less than 0.2 percent of the tracer had passed through in 30 days. At the time, this reservoir had a hydraulic detention time of approximately 12 months. This strategy of discharging to the epilimnion (top layer) and withdrawing from the hypolimnion (bottom layer) is often a sound one for indirect potable reuse projects. One of the principal reasons is that treated wastewater is usually as warm as or warmer than the epilimnion for most of the year.
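The slab reasoning can be expressed as a simple lookup. The sketch below is illustrative only: it encodes the slab temperatures and depths given for the Wellington Reservoir example, and it flags a short-circuit risk when the influent settles in the same slab that contains the outlet. The names `Slab`, `slab_for_temperature`, `slab_for_depth`, and `short_circuit_risk` are hypothetical, and the rule for temperatures that fall between the stated ranges (choose the nearest range) is an assumption of this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Slab:
    name: str
    temp_min_c: float
    temp_max_c: float
    depth_top_m: float
    depth_bottom_m: float


# Slab temperatures and depths from the Wellington Reservoir description.
SLABS = [
    Slab("slab 1 (epilimnion)", 23.0, 26.0, 0.0, 15.0),
    Slab("slab 2 (upper thermocline)", 19.0, 22.0, 15.0, 16.0),
    Slab("slab 3 (lower thermocline)", 16.0, 19.0, 16.0, 18.0),
    Slab("slab 4 (hypolimnion)", 13.0, 15.0, 18.0, 30.0),
]


def slab_for_temperature(temp_c):
    """Return the slab an influent of this temperature would settle in.

    Assumption: a temperature between two stated ranges goes to the
    nearest range; ties go to the shallower slab.
    """
    def distance(slab):
        if slab.temp_min_c <= temp_c <= slab.temp_max_c:
            return 0.0
        return min(abs(temp_c - slab.temp_min_c), abs(temp_c - slab.temp_max_c))

    return min(SLABS, key=distance)


def slab_for_depth(depth_m):
    """Return the slab that contains an outlet at the given depth."""
    if depth_m < 0:
        raise ValueError("depth must be non-negative")
    for slab in SLABS:
        if depth_m <= slab.depth_bottom_m:
            return slab
    raise ValueError("depth exceeds the modeled reservoir depth")


def short_circuit_risk(influent_temp_c, outlet_depth_m):
    """True when the influent and the outlet share a slab."""
    return slab_for_temperature(influent_temp_c) == slab_for_depth(outlet_depth_m)


print(short_circuit_risk(21.0, 15.5))  # influent and outlet both in slab 2
print(short_circuit_risk(23.0, 25.0))  # influent in slab 1, outlet in slab 4
```

A real assessment would rest on measured temperature profiles over the year and on tracer studies rather than on a fixed four-layer table.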

Thus, the holding time that can be accomplished in a surface water body can be much less than it might appear. While such a water body might appear to be well mixed, it is more likely stratified into numerous horizontal layers of different densities. Calculations of the hydraulic detention time may have little relevance because water discharged to the reservoir will seek a layer of equal buoyancy and mix with that layer only. If water mixes and is also withdrawn from the thin thermocline, short-circuiting can be surprisingly rapid. Therefore, water repurification projects using surface water bodies as environmental buffers should be careful to locate drinking water intakes so that any introduced wastewater will have to pass through several layers of water to reach the intakes. It should not be assumed that long hydraulic detention times will control short-circuiting. Investigations into the stratification of surface water bodies during the year are critical to designing effective ways to use them as environmental buffers.

Little study has been devoted to the removal of contaminants from water during transport or storage in surface water bodies. The limited literature on virus removal suggests some removal is possible. The discussion in Chapter 3 on microorganism survival in ambient water notes the importance of temperature. The literature also suggests that the removal of some particularly volatile synthetic organic contaminants can be expected during storage in a large surface water body, but the committee is unaware of data demonstrating removal of disinfection by-product precursors or other organic components of health concern.

In summary, present evidence suggests that some contaminant removal does occur during storage in or passage through environmental buffers for soil-aquifer treatment systems and in aquifer and surface water systems where residence times are long and short-circuiting is avoided. On the other hand, the performance of environmental buffers has not been extensively documented, the conditions that benefit or confound performance are poorly understood, and the necessary data on underground conditions are difficult to obtain. As a result, while environmental buffers can be expected to play a role in public health protection, particularly from microbial disease, the level of protection provided is difficult to quantify.

Lag Time Between Discharge of Wastewater and Entry Into the Potable Water System

When short-circuiting is controlled, using a large environmental buffer provides a significant lag time between the time the reclaimed water is produced and the time it enters the domestic supply. This increased lag time allows any processes contributing to contaminant reduction to proceed longer and, presumably, accomplish more removal.

However, the lag time should be viewed as simply one factor affecting the contaminant removal processes described above. A more subtle yet important value of lag time is that it allows flexibility in responding to changes in the quality of the treated water that are not understood at the time the water is produced but become clear later. For instance, a substantial lag time increases the chance that a flaw in treatment performance that goes undetected at one time will be discovered before the affected water enters the potable water system. There is always value in having additional time to react. This extra precaution might become less necessary after decades of experience with potable reuse systems, but at present it seems quite valuable.

On the other hand, some argue that storage time accomplishes nothing, that it only ensures that the project will cost more and that if contaminants are inadvertently released, they could affect water supplies over a longer term. Admittedly the alternatives for action are fewer when water of poor quality has been discharged into a supply lake or aquifer. Yet even when the quality of the reclaimed water is poor, mixing it with higher quality water that is subsequently treated is probably a better option than having that same poor quality enter the potable system more quickly with little or no dilution and time lag. As long as the water is in storage, measures can be taken to protect the public. In California, regulations have been proposed requiring 6 to 12 months of hydraulic retention time in an environmental buffer before treated wastewater can be reused as a potable water source. These storage times seem appropriate for allowing the development and execution of alternative actions if needed.

Loss of Identity of the Water of Wastewater Origin

"Loss of identity," meaning mixing of the reclaimed water with water in a natural system, is certainly an important element in the conceptual view of water reuse by both public health authorities and the average citizen. When reclaimed water is introduced into a large aquifer, lake, or further upstream in a river, the process of water reuse is less visible.

Nevertheless, loss of identity alone would seem to have little serious value where public health protection is concerned, because it is hard to associate the loss of identity with any tangible reduction in risk. In the absence of other documented conversion or removal processes, loss of identity is simply dilution. While there might be some risk reduction associated with such dilution, an honest appraisal of the benefit of loss of identity should be presented in those terms.

Moreover, the loss-of-identity argument is not always well received by the public. This can be particularly true when the quality of the reclaimed water is perceived to exceed the quality of the water presently in the environmental buffer. Under these conditions, blending the repurified water with these sources can be seen as paying a premium to satisfy arbitrary requirements while risking contamination of the high-quality reclaimed water. This was one of the reactions received from public workshops dealing with water reuse in San Diego, where there are plans to introduce high-quality reclaimed water into Lake San Vicente.

In summary, it is clear that including an environmental buffer in a potable reuse project can substantially reduce public health risk but that this risk reduction cannot presently be assessed with any confidence. The benefits that do accrue are likely to be associated with reduction in contaminant concentration and the introduction of a lag time. For these benefits to be realized, short-circuiting must be avoided. Aquifers appear to provide more protection as environmental buffers than do surface waters. Soil-aquifer treatment adds a further dimension of potential contaminant reduction. When surface water storage alone is used, more sophisticated treatment and longer storage times should be required. Finally, loss of identity is an issue that seems more relevant to public relations than public health protection. However, an environmental buffer large enough to contribute to a loss of identity is probably also large enough to bring real reductions in risk.

Consequence-Frequency Assessment

A water reclamation plant is an engineered system. Environmental engineering texts have stated that "every water treatment facility must be so designed that, when properly operated, it can produce continuously the design rate of flow and meet the established water quality standards" (James M. Montgomery Consulting Engineers Inc., 1985). However, any engineered system has the potential for out-of-specification performance. It is critical to understand the likelihood of failures that may compromise product safety. The tools and concepts used to analyze reliability of other engineered systems should also be used in analyzing potable reuse systems (Kumamoto and Henley, 1996).

Regardless of the treatment processes used, treated drinking water from a given facility will vary in quality because of variations in the influent stream as well as variability in the performance of the individual process elements. For contaminants (such as carcinogens) associated only with long-term health risks, variability is relatively unimportant, since the effect depends primarily upon the long-term average dose. However, variability becomes quite important for contaminants, such as infectious microbes or acute chemical toxins, that cause acute health effects at high or single-dose exposures. Figure 6-2 presents two hypothetical data sets showing concentrations of a hypothetical contaminant. In both cases the mean concentration is 3. If the contaminant were one that caused an acute effect when present above a concentration of 4, then the high-variability situation would be undesirable, while the low-variability situation would be acceptable.

FIGURE 6-2 Effect of treated water variability on the concentration of a hypothetical contaminant.
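The point about variability can be made concrete with two small data sets. The numbers below are invented for illustration and are not the data plotted in Figure 6-2; they are chosen only so that both series have a mean of 3, with an acute-effect threshold of 4 as in the text. The function name `exceedance_fraction` is likewise an assumption of this sketch.

```python
from statistics import mean, pstdev

ACUTE_THRESHOLD = 4.0

# Invented illustrative series; both have a mean concentration of 3.
low_variability = [3.0, 2.5, 3.5, 3.0, 3.0, 3.0]
high_variability = [1.0, 5.5, 2.0, 4.5, 0.5, 4.5]


def exceedance_fraction(samples, threshold):
    """Fraction of samples strictly above the threshold."""
    return sum(1 for value in samples if value > threshold) / len(samples)


for label, series in (("low", low_variability), ("high", high_variability)):
    print(
        label,
        "mean =", mean(series),
        "std dev =", round(pstdev(series), 2),
        "fraction above threshold =", exceedance_fraction(series, ACUTE_THRESHOLD),
    )
```

Both series would look identical if only the average were reported, yet only the high-variability series ever crosses the threshold.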

Multiple-barrier systems tend to produce less variability in the levels of contaminants that pass through the system. For instance, while single- and dual-process systems may pose similar mean levels of risk for contaminant breakthrough, dual-process systems greatly reduce the chance of high levels of contaminant breakthrough. This reduced variability makes multiple-barrier systems preferable to single-barrier systems. Realistic plant designs therefore contain many barriers, with the performance characteristics of various barriers carefully considered so that they complement each other.

Conceptually, the reliability of the overall treatment train can be approximated using event chain analysis, which has been formulated as a systematic technique for some other types of engineered systems (Kumamoto and Henley, 1996). The application of event chain analysis to a portion of a hypothetical reclamation facility is indicated in Figure 6-3, following Keeney et al. (1978).

In most cases, barriers neither perform perfectly nor fail completely, but rather have performance distributions over a broad continuum. A more precise method of reliability assessment than event chain analysis would need to use statistical methods to reflect the performance distributions of each barrier. Such statistical analysis, known as consequence-frequency assessment, has been previously used in microbial risk assessment for evaluating the probability of contaminant breakthrough for individual elements along a process connecting a source of microorganisms to a potential receptor (Gerba and Haas, 1988; Haas et al., 1993; Regli et al., 1991; Rose et al., 1991). Appendix A provides a detailed example of the use of this method to assess the reliability of an advanced wastewater treatment facility.

FIGURE 6-3 Schematic event tree analysis, as applied to a hypothetical water reclamation facility. Sets of arrows branching from a common point indicate alternative occurrences. Numbers on the arrows indicate occurrence probabilities. At the extreme right (in boxes) are the final occurrence magnitudes, here given as the microorganism density in the final product water (after filtration and disinfection), and probabilities of occurrence. The initiating event (with a probability of 0.1) is the occurrence of a microbial concentration of 1 to 10 Cryptosporidium oocysts per liter in a sand filter influent.
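An event tree of the kind described for Figure 6-3 can be enumerated mechanically. The sketch below is a simplified illustration rather than a reproduction of the figure: only the initiating probability (0.1) and the upper influent concentration (10 oocysts per liter) come from the caption. The branch probabilities, the log removal credits, the two-state (works/fails) treatment of each barrier, and the assumption that the barriers fail independently are all hypothetical.

```python
from itertools import product
from math import prod

INITIATING_PROBABILITY = 0.1    # from the Figure 6-3 caption
INFLUENT_OOCYSTS_PER_L = 10.0   # upper end of the 1 to 10 range in the caption

# Hypothetical barriers: (state, branch probability, log10 removal in that state).
BARRIERS = [
    ("filtration", [("works", 0.90, 2.0), ("fails", 0.10, 0.0)]),
    ("disinfection", [("works", 0.95, 1.0), ("fails", 0.05, 0.0)]),
]


def enumerate_outcomes():
    """Return (path, probability, final concentration) for every branch.

    Branch probabilities are multiplied along each path, which assumes
    the barriers perform independently of one another.
    """
    outcomes = []
    for branch in product(*(states for _, states in BARRIERS)):
        probability = INITIATING_PROBABILITY * prod(p for _, p, _ in branch)
        total_logs = sum(logs for _, _, logs in branch)
        concentration = INFLUENT_OOCYSTS_PER_L * 10 ** (-total_logs)
        path = tuple(
            f"{name} {state}"
            for (name, _), (state, _, _) in zip(BARRIERS, branch)
        )
        outcomes.append((path, probability, concentration))
    return outcomes


outcomes = enumerate_outcomes()
for path, probability, concentration in outcomes:
    print(path, f"p = {probability:.4f}", f"{concentration:g} oocysts/L")
```

Replacing the two-state branches with full performance distributions for each barrier is the step that moves from this kind of event tree toward the consequence-frequency assessment described above.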

Performance Evaluation, Monitoring, and Response

One of the most important uses of reliability analysis is to determine critical processes that must be kept under tight control to limit the probability of high levels of contaminant exposure. Operational variables that provide early warning of failures in water treatment processes should be identified and incorporated into an ongoing monitoring-and-control strategy. These variables, which will be termed "sentinel parameters," should ideally be readily measurable on a rapid (even instantaneous) basis and should correlate well with high contaminant breakthrough. They need not be of particular health or environmental interest in and of themselves.

Sentinel Parameters

Appropriate sentinel parameters will depend upon the contaminant of interest and the particular process. The following are potential sentinel parameters for microbial breakthrough for some unit processes:

- For lime treatment and clarification, pH and turbidity can serve as sentinel parameters.

- For filtration, elevated particle counts or turbidity can signal problems.

- For reverse osmosis, sentinel parameters could include conductivity, chloride levels, total organic carbon, or transmembrane pressure.

- For ozonation, the dissolved ozone residual or UV254 could serve as sentinel parameters.

The use of sentinel parameters, combined with a sufficiently large environmental buffer or alternatives for diversion, might permit rapid operational adjustments to prevent microbial or other contaminant exposure of the population. The application of sentinel parameter monitoring to water reclamation facilities is analogous to the application of HACCP (hazard analysis and critical control points) methods to the food processing industry (Havelaar, 1994; Jay, 1992; Notermans et al., 1994).
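How sentinel parameters might feed an alarm check can be sketched as follows. This is an illustration of the idea only: the parameter names echo the unit processes listed above, but every numerical limit is a hypothetical placeholder rather than a recommended or regulatory value, and the function name `check_readings` is an assumption of the example.

```python
# Hypothetical alarm limits, as (lower limit, upper limit); None means no limit.
# These numbers are placeholders for illustration, not recommended values.
SENTINEL_LIMITS = {
    ("lime_clarification", "ph"): (10.5, None),
    ("filtration", "turbidity_ntu"): (None, 0.3),
    ("reverse_osmosis", "conductivity_us_per_cm"): (None, 50.0),
    ("ozonation", "ozone_residual_mg_per_l"): (0.2, None),
}


def check_readings(readings):
    """Return (process, parameter, value, reason) for each out-of-range reading."""
    alarms = []
    for (process, parameter), value in readings.items():
        low, high = SENTINEL_LIMITS[(process, parameter)]
        if low is not None and value < low:
            alarms.append((process, parameter, value, "below lower limit"))
        elif high is not None and value > high:
            alarms.append((process, parameter, value, "above upper limit"))
    return alarms


# Example readings (invented): only the filter turbidity is out of range.
example_readings = {
    ("lime_clarification", "ph"): 11.2,
    ("filtration", "turbidity_ntu"): 0.5,
    ("ozonation", "ozone_residual_mg_per_l"): 0.4,
}

alarms = check_readings(example_readings)
print(alarms)
```

In practice each alarm would be tied to a defined response, such as diverting flow or adjusting the affected process, in the same spirit as the critical control points used in HACCP.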

The concept of sentinel parameters should be separated from the measurement of particular constituents that have direct health or regulatory interest. Sentinel parameters should be capable of being rapidly measured with respect to the rate at which underlying process fluctuations are anticipated and should be associated directly with potential contaminant breakthrough. Parameters measured for compliance purposes may require more time-consuming sample collection, preparation, and analysis procedures. The term "sentinel" is also used in contrast to "surrogate." Surrogate parameters are those whose measurement is intended to serve as a particular flag for the potential presence of a contaminant of health concern. Sentinel parameters are those designed to serve as a flag for the potential deterioration in process performance. While it is possible that some sentinel parameters may be surrogate parameters, and vice versa, this is not necessary.

Monitoring and Response

To ensure safety, every treatment facility should have in place rigorous monitoring and control systems to detect and correct lapses in performance.

Monitoring

The role of monitoring in a water reclamation plant, as in any water treatment plant, is to verify that the routine operational characteristics are in fact achieving the intended objectives. Typical routine monitoring parameters might include total organic carbon (TOC), nitrogen, phosphorus, coliforms, phage, Clostridia, and plate counts (see Chapters 2 and 3). Less routine parameters used for monitoring might include viruses, protozoa, pesticides, total organic halides, and heavy metals. Special studies might also use toxicological responses involving appropriate assays (see Chapter 5). Ideally, the entire battery of monitoring should be able to confirm that the routine operation of the facility and its design are in control.

Advances in analytical capability should make new monitoring tools and analytes available. However, as in any other environmental application, the development of more sensitive or selective methods does not necessarily mean that they should be employed on a routine basis. Instead, such developments in methodology should be used to verify that plant design and operation continue to protect public health. Where warranted, special (nonroutine) studies might be undertaken to develop new sentinel parameters for incorporation into the plant operational strategy.

Strategies for Monitoring and Control

A strategy for monitoring and controlling an indirect potable reuse system can include (1) continuous monitoring, (2) routine monitoring, and (3) ad hoc monitoring.

Continuous monitoring uses sentinel parameters that are directly relevant to the control of the process and for which reliable instruments for continuous monitoring are available. Monitoring must be at sampling locations that are relevant to process control. Continuous monitoring should always be carefully designed to allow sufficient time for blending and chemical reactions upstream of the point of sampling. When continuous monitoring is used directly in process control, the design should also consider lag times between the point where process changes are made and the point where samples are taken, as well as the response time for equipment conducting sample analysis and equipment involved in making process changes. Reliable instruments are essential.
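The lag-time consideration described above can be illustrated with a rough calculation. The following is a minimal sketch only: the function name, the plug-flow idealization, and every number are hypothetical and are not drawn from any particular facility.

```python
def control_loop_lag_minutes(volume_m3, flow_m3_per_min,
                             analyzer_response_min, actuator_response_min):
    """Estimate the delay between a process change and its observed effect.

    Assumes plug flow between the adjustment point and the sampling point,
    so hydraulic travel time is simply volume / flow (an idealization).
    """
    if flow_m3_per_min <= 0:
        raise ValueError("flow must be positive")
    travel_min = volume_m3 / flow_m3_per_min
    return travel_min + analyzer_response_min + actuator_response_min


# Hypothetical example: 120 m3 between dosing point and sampler at 8 m3/min,
# a 5-minute analyzer response, and a 2-minute dosing-pump response.
lag = control_loop_lag_minutes(120.0, 8.0, 5.0, 2.0)
print(f"Estimated loop lag: {lag:.1f} min")
```

A control scheme that acts on readings more often than this lag allows may overcorrect, which is one reason the sampling location and instrument response time belong in the design.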

Routine monitoring refers to regularly scheduled sampling and analysis that support both operation and regulatory compliance. For operational support, samples of sentinel parameters are generally obtained by grab samples and immediately analyzed on-site to help with hour-to-hour decision making. Samples taken for routine monitoring to support regulatory compliance are flow-proportioned composite samples taken on-site but analyzed by a certified drinking water laboratory. A routine monitoring program should comply with all regulatory monitoring requirements, in terms of both sampling frequency and analytes selected.

Ad hoc monitoring refers to special sampling and analysis designed to investigate process performance or to demonstrate the removal of special analytes that are not part of a permanent, routine monitoring program. Examples of ad hoc monitoring for process performance would be examining the profile of TOC removal throughout the process train or conducting special seeding challenges to demonstrate the removal of a particular contaminant in a particular unit process. Such ad hoc programs may use continuous, composite, or grab samples as appropriate.

The removal of special analytes, such as viruses, might be evaluated by a special ad hoc monitoring program during the first year of operation—an analysis that might be too expensive for long-term routine monitoring. An ad hoc monitoring program may include an extensive list of synthetic organic chemicals in order to determine those that should be included in future routine monitoring. Another example might be an ad hoc program to characterize the components of the organic carbon that still persist in the advanced wastewater treatment (AWT) product water.

Response

When a sentinel parameter monitoring program shows lapses in performance, appropriate actions should be taken to correct the situation. The appropriate action will depend upon the particular plant design. Ideally, the design should allow the operator to respond to a variety of circumstances. Such design flexibility might include extra pump capacity with reconfigurable piping and intermediate storage capacity, such as equalization and surge tanks.

Particular actions taken in response to process excursions might include the following:

- increasing the number of parallel process modules (filters, membranes, etc.) in service;

- removing and regenerating individual parallel process modules;

- adding supplemental chemicals or increasing chemical dosing;

- recirculating product water to reclamation plant headworks; or

- diverting product flow to an outfall, or to a nonpotable use, rather than into the potable supply stream.
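One way to picture how such actions might be tied to a sentinel parameter is a tiered decision rule. The sketch below is an assumption for illustration, not a procedure described in this report: the two set points, the three tiers, and the convention that a higher reading means worse performance are all hypothetical and would be site-specific.

```python
from enum import Enum


class Action(Enum):
    NORMAL = "continue normal operation"
    ADJUST = "adjust process (e.g., add modules or increase chemical dose)"
    DIVERT = "divert product water away from the potable supply"


def choose_action(reading, adjust_limit, divert_limit):
    """Map one sentinel reading to a response tier.

    Both limits are hypothetical, site-specific set points; a higher
    reading is assumed to indicate worse process performance.
    """
    if adjust_limit > divert_limit:
        raise ValueError("adjust_limit must not exceed divert_limit")
    if reading >= divert_limit:
        return Action.DIVERT
    if reading >= adjust_limit:
        return Action.ADJUST
    return Action.NORMAL


# Hypothetical sentinel readings and set points (arbitrary units).
readings = [0.08, 0.12, 0.35, 0.09]
actions = [choose_action(r, adjust_limit=0.10, divert_limit=0.30)
           for r in readings]
for r, a in zip(readings, actions):
    print(f"{r:.2f} -> {a.value}")
```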

Diversions should be implementable from all points within the reclamation facility, as shown in Figure 6-4 in the conceptual design for the San Diego Reclamation Facility (Bernados, 1996). In addition, process excursions might be used to trigger public health responses such as additional population surveillance, notification of sensitive subpopulations, or provision of emergency alternative supplies. The nature of any public health response will depend on the duration and magnitude of process excursions and the delay time provided by the environmental buffer and by finished water storage.

The existence of finished water storage (including perhaps storage of injected product water into an aquifer prior to use) either within or following a reclamation plant provides increased flexibility for operations. Such storage is beneficial in at least two respects. First, the additional holding time further removes contaminants by processes such as natural decay and biological and chemical reaction. Second, the storage may act to buffer fluctuations in quality so that short-lived transient spikes in undesirable contaminants are smoothed out prior to being sent for consumption. Such finished water storage may help to mitigate the urgency of process excursions. Conversely, the absence of such storage makes it more vital to continuously monitor processes and attend to deviant conditions. There is thus a trade-off between the stringency of reliability that may be needed in treatment and the size of the finished water storage area. Site-specific work will be necessary to quantify these trade-offs.
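The two benefits of storage noted above, buffering of transient spikes and additional removal by decay, can be sketched with an idealized completely mixed basin. This is an illustrative model chosen here, not one prescribed by the report; the basin volume, flow, first-order decay rate, and spike size are hypothetical, and real reservoirs or aquifers rarely mix completely.

```python
def simulate_storage(c_in_series, dt_h, volume_m3, flow_m3_per_h,
                     decay_per_h=0.0, c0=0.0):
    """Outlet concentration of an idealized completely mixed storage basin.

    Integrates dC/dt = (Q/V) * (C_in - C) - k * C with explicit Euler steps.
    """
    rate = flow_m3_per_h / volume_m3
    if dt_h * (rate + decay_per_h) >= 1.0:
        raise ValueError("time step too large for a stable Euler update")
    c = c0
    out = []
    for c_in in c_in_series:
        c += dt_h * (rate * (c_in - c) - decay_per_h * c)
        out.append(c)
    return out


# Hypothetical case: a 2-hour spike of 100 units entering a basin with a
# 24-hour mean residence time, simulated for 72 hours in 0.1-hour steps.
dt = 0.1
n_steps = 720
inflow = [100.0 if 100 <= i < 120 else 0.0 for i in range(n_steps)]
outflow = simulate_storage(inflow, dt, volume_m3=24000.0,
                           flow_m3_per_h=1000.0, decay_per_h=0.01)
peak_out = max(outflow)
print(f"Peak in: {max(inflow):.0f}, peak out: {peak_out:.1f}")
```

Under these assumptions the outlet peak is a small fraction of the inlet spike; shrinking the basin volume in the sketch raises the outlet peak, which is the trade-off between storage size and treatment reliability described above.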

FIGURE 6-4 Diagram of conceptual design of San Diego Reclamation Plant (after Bernados, 1996). Dashed lines indicate potential emergency diversion points. NOTE: POTW = publicly owned treatment works. SOURCE: Reprinted from AWWA Water Reuse Conference Proceedings (San Diego, California, February 25-28, 1996), by permission, © 1996, American Water Works Association.

Reducing Risk from Unidentified Trace Organic Contaminants

The organic matter in the water produced by an aerobic biological wastewater treatment plant consists of essentially two components: (1) synthetic organic chemicals (SOCs) of anthropogenic origin, as defined by the Environmental Protection Agency (EPA) Office of Drinking Water, and (2) natural organic matter (NOM), which is mostly an ill-defined set of compounds generated through microbial metabolism (see discussion in Chapter 2). Reclaimed water generally holds higher concentrations of both types of components than conventional drinking water supplies do. However, in both reclaimed water and conventional drinking water supplies, the SOCs may not be distinguishable from the NOM. And either water may hold significant quantities of chemicals having potent toxicological properties (e.g., endocrine disrupters, pharmaceutical agents or metabolites, or hormones).

The state of our knowledge concerning SOCs has improved since the 1982 National Research Council report Quality Criteria for Water Reuse (NRC, 1982). Extensive lists of organic compounds have been generated based on known ground water contaminants, the priority and toxic pollutant lists of the Clean Water Act, and the Drinking Water Priority List (53 Federal Register 1892, January 1988, updated 56 Federal Register 1470, January 1991).

Using these lists as a guide, analytical methods have been developed to detect most of the organic compounds of concern in industrial discharges, in raw sewage and treated sewage, and in drinking water supplies. The discharge of priority pollutants to the sewer and to the environment has been regulated and enforced by monitoring requirements. As a result of these efforts, more is known about the anthropogenic chemicals in sewage today, and discharge of these compounds to the nation's sewers is more tightly controlled than was the case in 1982. While concern about chemical risk from reclaimed water remains, the potential for risk management is greater today than in 1982.

The largely uncharacterized organic matter in natural water, in conventionally treated wastewater, and in reclaimed water consists of large organic macromolecules usually identifiable in only the broadest way (e.g., by functional groups, molecular weight, aromaticity, or acid/base solubility). As explained in Chapter 2, we have a poor understanding of the differences, if any, between NOM generated in biological sewage treatment processes and NOM generated in a conventional watershed. A credible argument can be made that the same biochemical processes produce the organic matter in both circumstances, and their chemistry is likely to be much the same, with only subtle differences in molecular weight and functional groups.

As in conventional drinking water supplies, the toxicity of uncharacterized organic materials in reclaimed water may be of less concern than the toxicity of by-products produced when these materials are transformed by disinfection processes. Some experimental work with concentrates of organic chemicals from reclaimed water has shown no toxic effects on animals (for example, the Tampa and Denver studies described in Chapter 5). Nevertheless, as a society, we have less experience with exposure to wastewater-derived organic matter than we do with the NOM in conventional water supplies. Therefore, the concentration of wastewater-derived organic matter should be minimized as part of any prudent potable reuse plan.

To address SOCs, the EPA should develop a priority list of contaminants of public health significance that are known or anticipated to occur in sewage. This list should then be used by utilities planning potable reuse as they manage their industrial pretreatment programs so that the introduction of these compounds into the sewer is regulated and monitored. Finally, potable reuse operations should include a program to monitor for these chemicals in the AWT effluent. Chemicals that occasionally occur at measurable concentrations should be monitored at greater frequency than those that are not typically detected.

Every community considering potable reuse should carefully review its industrial pretreatment program to ensure that this program serves sufficiently as the principal barrier to SOCs. The industrial pretreatment program must accomplish three essential goals: (1) identify the potentially toxic compounds used or produced by industry, (2) establish regulations to prevent their discharge to the sewer, and (3) establish monitoring to ensure that discharge does not occur and to detect the presence of these compounds in the wastewater should controls fail. This same monitoring program can serve as a basis for designing the chemical monitoring system for the reclaimed water.

To address health risks associated with the contribution of NOM to the potential for disinfection by-product formation, the TOC of the reclaimed water must be reduced to the lowest feasible level, as recommended in Chapter 2. The additional barriers in indirect potable reuse, such as dilution, soil-aquifer treatment, and long retention times in surface reservoirs and/or ground water aquifers, will contribute to the overall reduction of organic carbon of wastewater origin to the water supply. As TOC of wastewater origin diminishes, so do the health concerns associated with it. In principle there comes a point where these concerns are less important than other concerns already being addressed by the current drinking water regulations and their associated monitoring requirements. When the wastewater-derived TOC is below this level, no special toxicological monitoring should be required. When the wastewater-derived TOC is above this level, public safety should be protected with continuous toxicological monitoring using in vivo systems (see Chapter 5).

Although establishing such a TOC level appears to be a legitimate risk management strategy, there is no scientific basis for determining what that level should be. The committee believes this judgment should be made by local regulators, integrating all the information they have available to them concerning a specific project.

Public Health Surveillance

Public health surveillance programs are essential components of a community-wide strategy to provide early warning of possible health problems. Adequate disease surveillance requires continuing scrutiny of all aspects of occurrence and spread of a disease that are pertinent to effective control.

The most comprehensive and internationally accepted definition of public health surveillance is that found in the American Public Health Association report entitled Control of Communicable Diseases Manual (Benenson, 1995). That report calls for the systematic collection and evaluation of

- morbidity and mortality reports;

- special reports of field investigations of epidemics and individual cases;

- isolation and identification of infectious agents by laboratories;

- data concerning the availability, use, and untoward effects of vaccines and toxins, immune globulins, insecticides, and other substances used in disease control;

- information regarding immunity levels in segments of the population; and

- other relevant epidemiologic data.

A report summarizing the above data should be prepared and distributed to all of those involved in public health protection. This procedure applies to all jurisdictional levels of public health protection, from local to international (Benenson, 1995).

This definition of surveillance of disease is distinct from health surveillance of specific persons, which is a form of public health quarantine. Any surveillance program should be tailored to the needs of its community, and not all of the elements of the definition above necessarily apply to populations exposed to potable water supplies augmented with reclaimed water. Figure 6-5 diagrams relationships between the types of public health surveillance (hazard surveillance, exposure surveillance, and outcome surveillance) and the corresponding process by which an environmental agent produces an adverse effect (Thacker et al., 1996).

Surveillance is distinct from epidemiological studies in that surveillance is an ongoing public health program analogous to continuous monitoring. Epidemiology, in contrast, is an investigative activity on the patterns of disease occurrence in particular human populations (or study groups) to identify the factors that influence these patterns of disease. The investigative activity may be descriptive, analytical, or experimental in form. Findings identified in surveillance programs may generate hypotheses that could be tested by epidemiological studies.

The ability of a surveillance system to provide early warning of a possible health problem in the community depends on its sensitivity or threshold of detection. The sensitivity varies depending on the type of surveillance (passive vs. active) and the resources available to maintain the system. In most waterborne diseases, there is normally a low level of sporadic cases in the population, known as "endemic" illness. As the number of exposed individuals increases, the number of recognized cases will rise. This may occur rapidly for an infectious disease with a short incubation period or slowly for a chronic disease with a long or variable latency period. An increase in the illness rate will be recognized by the surveillance system as an outbreak when the disease rate exceeds the threshold of detection. The outbreak event should trigger an epidemiologic investigation to determine (1) whether the reported increases in disease are real or an artifact of the reporting or detection methods; (2) whether the cases are related, as by geographic proximity or common exposure; (3) whether the diseases may be related to any changes in measured water quality parameters; and (4) how the outbreak can be controlled and further disease prevented.
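The notion of a threshold of detection can be made concrete with a simple statistical rule. The sketch below assumes, purely for illustration, that an outbreak signal is raised when a weekly case count exceeds the baseline mean plus two sample standard deviations; this rule, the function names, and the case counts are hypothetical and are not a method endorsed in this report.

```python
from statistics import mean, stdev


def detection_threshold(baseline_counts, z=2.0):
    """Threshold = baseline mean + z sample standard deviations (assumed rule)."""
    return mean(baseline_counts) + z * stdev(baseline_counts)


def flag_weeks(baseline_counts, new_counts, z=2.0):
    """Return True for each new weekly count that exceeds the threshold."""
    threshold = detection_threshold(baseline_counts, z)
    return [count > threshold for count in new_counts]


# Hypothetical weekly counts of reported diarrheal illness.
baseline = [4, 6, 5, 3, 7, 5, 4, 6]   # endemic background
recent = [5, 6, 12, 19]               # weeks under review
flags = flag_weeks(baseline, recent)
print(f"Threshold: {detection_threshold(baseline):.2f}")
print(flags)
```

A flagged week is only a prompt for the epidemiologic investigation described above; it does not by itself establish that the increase is real or water related.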

The Uses of Surveillance

Public health surveillance can be put to many uses, including the following (Teutsch and Churchill, 1994):

- developing quantitative estimates of the magnitude of a health problem;

- portraying the natural history of disease;

- detecting epidemics;

- documenting the distribution and spread of a health event;

- facilitating epidemiologic and laboratory research;

- testing hypotheses;

- evaluating control and prevention measures;

- monitoring changes in infectious agents;

- detecting changes in health practice; and

- planning.

FIGURE 6-5 The process by which an environmental agent produces an adverse effect and the corresponding types of public health surveillance necessary to monitor that effect. SOURCE: Reprinted, with permission, from Thacker et al., 1996. © 1996 by American Journal of Public Health.

Surveillance may be used to identify and track waterborne health hazards even when the water reclamation facility is operating in accordance with applicable regulations and within generally accepted standards. In a report by Tilden et al. (1991), for example, dry fermented salami was shown to serve as a vehicle of transmission for O157:H7 strains of Escherichia coli, even when all the food production methods used complied with existing regulations and recommended good manufacturing practices. In this case, Tilden et al. noted that "surveillance ... provides the ultimate feedback on the efficacy of the standard industry safety plans."

Public Health Surveillance and Water Reclamation

Recent developments in the application of public health surveillance to drinking water uses in general are also relevant to reclaimed water projects. The 1993 outbreak of cryptosporidiosis affecting more than 400,000 persons in Milwaukee stimulated a great deal of interest in the role of public health surveillance in the early detection of harmful agents in municipal water supplies.

The Centers for Disease Control and Prevention (CDC) held a workshop in September 1994 to assess the public health threat associated with waterborne cryptosporidiosis (CDC, 1994). One of the workshop's major objectives was "to identify surveillance systems ... for assessing the public health importance of low levels of Cryptosporidium oocysts or elevated turbidity in public drinking water." The major observations and recommendations published in the report of that workshop are as follows:

- Local public health officials should consider developing one or more surveillance systems to establish baseline data on the occurrence of cryptosporidiosis among residents of their community and to identify potential sources of infection.

- No single surveillance strategy is appropriate for all locations; therefore, communities should select a method that meets local needs and is most compatible with existing disease surveillance systems.

- Cryptosporidiosis should be reportable to the CDC.

- Sales of antidiarrheal medications should be monitored. The development of an information exchange system between local pharmacists and state or local public health officials is a cost-effective and timely way to detect increases in diarrheal illness in some counties.

- Logs maintained by health maintenance organizations and hospitals should be monitored for diarrheal illness.

- Information entered promptly into a computerized database can effectively monitor both complaints of diarrhea and severity of gastrointestinal disease in a community.

- Incidence of diarrhea in nursing homes should be monitored. Diarrheal illness rates in residents of nursing homes that use municipal drinking water can be compared with illness rates in residents of other nursing homes in the same community that use a different water source (e.g., private well water).

- Laboratories should routinely test for Cryptosporidium in stool specimens. Most laboratories presently do not look for Cryptosporidium in specimens submitted for routine parasitologic examination.

- Tap water should be monitored for Cryptosporidium. This information will allow researchers to see how often an increase in diarrheal disease or Cryptosporidium diagnosis occurs during the first week to two weeks after oocysts are found in drinking water.

- Local, state, and national public health agencies should cooperatively initiate and develop surveillance systems to assess the public health significance of low levels of Cryptosporidium oocysts in public drinking water.
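The nursing home comparison suggested in the list above amounts to comparing two illness rates. A minimal sketch follows; the function names, the use of resident-days as the denominator, and the case counts are hypothetical choices made here for illustration.

```python
def illness_rate(cases, resident_days):
    """Cases per resident-day of observation."""
    if resident_days <= 0:
        raise ValueError("resident_days must be positive")
    return cases / resident_days


def rate_ratio(cases_a, days_a, cases_b, days_b):
    """Ratio of the illness rate in group A to that in comparison group B."""
    rate_b = illness_rate(cases_b, days_b)
    if rate_b == 0:
        raise ValueError("comparison group has no cases; ratio is undefined")
    return illness_rate(cases_a, days_a) / rate_b


# Hypothetical data: homes on municipal water vs. homes on private wells.
ratio = rate_ratio(cases_a=30, days_a=10_000, cases_b=12, days_b=8_000)
print(f"Rate ratio (municipal / well): {ratio:.2f}")
```

A ratio above 1 would be a signal worth investigating rather than evidence of a waterborne cause, since the two groups may differ in other ways.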

A similar investigation was undertaken by the New York Department of Environmental Protection (DEP). DEP commissioned an advisory panel on waterborne disease assessment to determine whether New York City's active disease surveillance program (1) was adequate to detect a waterborne disease outbreak; (2) could provide sufficient information to assess the endemic rates of cryptosporidiosis and giardiasis; and (3) could provide sufficient information to assess endemic waterborne disease risks. The panel was also asked to assess whether an epidemiologic study or studies could be designed to address the question of increased risk of enteric illness or infection associated with the consumption of tap water.

The approach of the New York advisory panel on waterborne disease assessment was similar to that of the CDC workshop, and its conclusions are likewise relevant to the issue of augmenting potable water supplies with reclaimed water. The panel made five major recommendations:

- Designate a waterborne disease coordinator. The most important aspect of a successful waterborne disease surveillance program is the designation of an individual who is specifically responsible for developing, supervising, and coordinating all aspects of the program.

- Initiate rapid reporting and analysis of disease surveillance data. Early detection of a waterborne outbreak requires that disease surveillance information be quickly transmitted and analyzed.

- Initiate special waterborne disease surveillance studies. Studies should include (1) surveillance of diarrheal illness and selected infectious diseases in populations using managed care programs (e.g., health maintenance organizations), in selected emergency treatment facilities, and in sentinel populations residing in nursing and/or retirement homes; and (2) monitoring of the sales or use of medications for diarrheal illness.

- Improve reporting of cryptosporidiosis. The reporting of cryptosporidiosis can be improved by educating physicians and health care providers about the disease and encouraging laboratories to examine stool specimens for Cryptosporidium.

- Institute an annual evaluation of the waterborne disease surveillance program to make sure it is effective for the detection and early recognition of waterborne outbreaks or emerging pathogens.

The designation of a waterborne disease coordinator is not the norm, as was documented by Frost et al. (1995) in their report of a survey of state and territorial programs. Frost et al. found that in 49 states and 3 territories surveyed, fewer than half of the programs had designated a waterborne disease coordinator. Of these coordinators, only 24 percent had training or work experience in water treatment and only 28 percent met regularly with drinking water regulatory staff. Only 46 percent of states and territories surveyed indicated that an individual from the drinking water regulatory staff had been designated to assist in investigation of outbreaks, and almost a third of those designated individuals could not be named by the epidemiology program staff. No state used computerized illness data from health maintenance organizations or the Indian Health Service as a waterborne disease surveillance tool. With fewer than half of the state/territorial epidemiology programs having a designated coordinator for waterborne disease outbreaks and with few of these coordinators having training or experience in drinking water treatment or maintaining contact with the state water treatment specialists, the timeliness of initiation and the effectiveness of outbreak investigations may be compromised.

Strengths and Limitations of Surveillance Systems

The basic structures for public health surveillance are already in place in most communities. Mandatory disease reporting systems have been established by statute or regulations in all states and many local jurisdictions, and all states require that physicians report cases of specified diseases to the appropriate state or local health department. The diseases that are reportable, however, vary from state to state. Reporting agencies such as physicians and laboratories are repeatedly reminded of their obligations in this regard (Rutherford, 1992), and lists of diseases to be reported are frequently updated. In California, for example, Escherichia coli O157:H7 and any "waterborne disease" were added to the California Code of Regulations and were required to be reported by telephone. The list already contained cryptosporidiosis and giardiasis as well as the more classical salmonellosis and shigellosis (Ross, 1995).

However, as stated by Teutsch and Churchill (1994), "Under-reporting is a consistent and well-characterized problem of notifiable-disease reporting systems. In the United States, estimates of completeness of reporting range from 6 percent to 90 percent for many of the common notifiable diseases." Many factors contribute to this lack of complete reporting, but they are generally well known and can be addressed by positive action if the will to do so exists. As Teutsch and Churchill (1994) note,

Some approaches that appear to be successful include (a) providing physicians with feedback on the health department's disposition of individual cases; (b) matching laboratory reports with physicians' reports, and for those cases reported only by laboratories, notifying physicians that a specific case should have been reported to the health department; and (c) conducting in-person site visits to review reporting procedures.

The ultimate purpose of surveillance systems is to prevent or control the occurrence of adverse health events associated with the ingestion of drinking water sources augmented with reclaimed water. Therefore, any such surveillance system must be jointly planned and operated not only by those who collect the data, but by those who would use these data in conjunction with other monitoring processes to ensure the quality of the water delivered to the consumer. As a minimum, this would include the health, water, and wastewater departments. Essential to the effective functioning of such a system is the identification of key individuals in each agency who would plan, coordinate, rehearse, and communicate frequently. This might be tied in with the community's general emergency response plan. It would also be appropriate to include and advise interested consumer groups of the surveillance plan and its purpose.

The strength of the surveillance system is directly proportional to the degree to which this coordinated joint system is effective, and it will be limited to the degree that coordination is lacking.

Operator Training and Certification

Proper operation of an advanced water treatment plant intended to improve potability of wastewater requires special training. Neither the conventional wastewater treatment operator nor the conventional water treatment operator gets sufficient training or experience in physicochemical treatment or in public health microbiology to serve the needs that must be met at an AWT plant.

Such training should include at a minimum the principles of operation of processes for coagulation with ferric chloride or lime (especially high-lime treatment), granular media filtration, membrane filtration, reverse osmosis, air stripping, ion exchange, advanced oxidation, and high level disinfection with free chlorine, ozone, UV light, and other means.

Training should include courses on the various microbial pathogens and indicators. Operators should be familiar with many of the most important microbial organisms, the diseases they cause, the symptoms of those diseases, the likely density of the organisms in wastewater, and the relative effectiveness of the various treatment processes in removing each one. Operators should also be generally familiar with the procedures for isolating these organisms from drinking water as well as some of the strengths and weaknesses of the various analytic techniques.

Conclusions and Recommendations

The safe, reliable operation of a potable reuse water system depends both on well-designed treatment trains that provide redundant safety measures, or "multiple barriers," and on monitoring efforts designed to detect variations in system operation as well as any signs of contaminant breakthrough in the system. Such duplicative barriers and monitoring efforts are essential to reducing, detecting, and mitigating any weaknesses or lapses in the system's safety performance.

To provide these margins of safety, the committee recommends the following:

- Potable water reuse systems should employ independent multiple barriers to contaminants, and each barrier should be examined separately for its efficacy for removal of each contaminant. Further, the cumulative capability of all barriers to accomplish removal should be evaluated, and this evaluation should consider the levels of the contaminant in the source water, the expected health effect associated with the contaminant, the goals that have been set for the potable supply, and any additional factors of safety.

- The multiple barriers for microbiological contaminants should be more robust than those for many other forms of contamination, due to the acute danger such contaminants pose at high doses even for short time periods. Where reclaimed water is used to augment natural supplies or where source water cannot be protected from upstream discharges of water with impaired quality, the importance of the barriers at the water treatment plant or wastewater reclamation plant is correspondingly increased.

- Because the performance of wastewater treatment processes may vary considerably from time to time, such systems should employ quantitative reliability assessments to gauge the probability of contaminant breakthrough among individual unit processes. Such a quantitative approach can be combined with dose-response assessments to better ascertain the likelihood of a risk of infection or illness of a given magnitude. Sentinel parameters, which are readily measurable on a rapid (even instantaneous) basis and which correlate well with high contaminant breakthrough, should be used for monitoring critical processes that must be kept under tight control.

- Utilities using surface waters or aquifers as environmental buffers should take care to prevent "short-circuiting," by which influent treated wastewater either fails to mix with the ambient water fully or moves through the system to the drinking water intake faster than expected. In addition, the buffer's expected retention time should be long enough to give the buffer time to provide additional contaminant removal. California has proposed regulations requiring retention times of 6 to 12 months before treated wastewater can be reused as a potable water source; these storage times seem appropriate for enabling the development and execution of alternative actions.

- Risk management strategies should be used to reduce the risk from the wide variety of synthetic organic chemicals that may be present in municipal wastewater and consequently in reclaimed water. Utilities involved with planning for potable reuse should implement a stringent industrial pretreatment and pollutant source control program that not only includes existing priority pollutants, but considers additional contaminants of public health significance that are known or anticipated to occur in sewage. Guidelines for developing lists of wastewater-derived SOCs should be prepared by the Environmental Protection Agency and modified for local use. Finally, potable reuse operations should include a program to monitor for these chemicals in the AWT effluent, tracking those that occasionally occur at measurable concentration with greater frequency than those that do not.

- Potable reuse operations should have alternative means for disposing of the reclaimed water in the event that it does not meet required standards. Such alternative disposal routes protect the environmental buffer from contamination.

- Every water agency using reclaimed waters as drinking water should implement well-coordinated public health surveillance systems to document and possibly provide early warning of any adverse health events associated with exposure to reclaimed water. Such surveillance data should be interpreted with care, because there are many sources of exposure for all diseases categorized as "waterborne." (That is, suggested elevated levels of disease may be due to other transmission routes and not related to drinking water.) There is little scientific documentation of the degree to which most specific diseases are due to exposure to water. It is important to have good communication and cooperation between health authorities and water utilities to quickly recognize and act upon any health problems that may be related to water supply. Any such surveillance system must be jointly planned and operated by the health, water, and wastewater departments and should take advantage of recommendations made in conjunction with recent public health workshops on the subject sponsored by the Centers for Disease Control and Prevention. Essential to the effective functioning of such a system is the identification of key individuals in each agency who would coordinate planning and rehearse emergency procedures. Further, appropriate interested consumer groups should be involved and informed as to the public health surveillance plan and its purpose.

- Operators of water reclamation facilities should receive adequate training, including the principles of operation of advanced treatment processes, a knowledge of pathogenic organisms, and the relative effectiveness of the various treatment processes in reducing pathogen concentrations. Operators of such facilities need training beyond that typically provided to operators of conventional water and wastewater treatment systems.
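
The quantitative reliability assessment recommended above, combined with a dose-response assessment, can be illustrated with a minimal sketch. It rests on assumptions that are not taken from this chapter: the unit processes are treated as failing independently, infection risk follows a simple exponential dose-response model, and every function name and numerical value is hypothetical and chosen only for illustration. A real assessment would need site-specific failure data and would have to examine whether failures are correlated.

```python
import math


def breakthrough_probability(unit_failure_probs):
    """Probability that every barrier in series fails at the same time.

    Assumes (for illustration only) that unit processes fail independently,
    so the joint probability is the product of the individual ones.
    """
    joint = 1.0
    for p in unit_failure_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError("each failure probability must lie in [0, 1]")
        joint *= p
    return joint


def exponential_dose_response(dose, r):
    """Exponential dose-response model: P(infection) = 1 - exp(-r * dose).

    `dose` is the number of organisms ingested; `r` is a hypothetical
    organism-specific parameter.
    """
    return -math.expm1(-r * dose)


def expected_daily_risk(unit_failure_probs, dose_normal, dose_failure, r):
    """Risk averaged over normal operation and simultaneous barrier failure."""
    p_fail = breakthrough_probability(unit_failure_probs)
    risk_normal = exponential_dose_response(dose_normal, r)
    risk_failure = exponential_dose_response(dose_failure, r)
    return (1.0 - p_fail) * risk_normal + p_fail * risk_failure


if __name__ == "__main__":
    # Hypothetical daily failure probabilities for three unit processes.
    failures = [0.01, 0.02, 0.05]
    risk = expected_daily_risk(failures, dose_normal=1e-6, dose_failure=10.0, r=0.02)
    print(f"joint breakthrough probability: {breakthrough_probability(failures):.2e}")
    print(f"expected daily infection risk:  {risk:.2e}")
```

Under the independence assumption, each added barrier multiplies the joint failure probability by a factor smaller than one, which is the numerical expression of the multiple-barrier argument. Correlated failures, such as a power outage affecting several processes at once, would make the true breakthrough probability higher than this product suggests.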

References

American Water Works Association. Organisms in Water Committee. 1987. Committee report: microbiological considerations for drinking water regulation revisions. Journal of the American Water Works Association 79(5): 81-88.

Benenson, A. S., ed. 1995. Control of Communicable Diseases Manual, 16th ed. Washington, D.C.: American Public Health Association.

Bernados, B. 1996. The Importance of Reliability in Potable Reuse. AWWA Water Reuse Conference. San Diego, Calif., February 25-28.

Centers for Disease Control and Prevention (CDC). 1995. Morbidity and Mortality Weekly Report 44 (No. RR-6:1-19). Atlanta, Ga.: CDC.

Ellis, R. H. 1995. Seattle Water Department: Cedar River Surface Water Treatment Rule Compliance Project. Seattle, Wash.: Seattle Water Department.

Englehardt, J. D. 1995. Predicting incident size from limited information. Journal of Environmental Engineering 121(6): 455-464.

Fischer, H., J. List, R. Koh, H. Imberger, and N. Brooks. 1979. Mixing in Inland and Coastal Water. New York: Academic Press.

Flow Science for Montgomery Watson and City of San Diego. 1995. Vicente Reclamation Project: Results of Tracer Studies. Pasadena, Calif.: Flow Science, Inc.

Frost, F. J., R. L. Calderon, and G. F. Craun. 1995. Waterborne disease surveillance: Findings of survey of state and territorial epidemiology programs. Environmental Health Dec.

Gerba, C. P., and C. N. Haas. 1988. Assessment of risks associated with enteric viruses in contaminated drinking water. ASTM Special Technical Publication 976: 489-494.

Haas, C. N. 1997. Importance of distributional form in characterizing inputs to Monte Carlo risk assessment. Risk Analysis 17(1):107-113.

Haas, C. N., J. B. Rose, C. P. Gerba, and S. Regli. 1993. Risk assessment of virus in drinking water. Risk Analysis 13(5): 545-552.

Havelaar, A. 1994. Application of HACCP to drinking water supply. Food Control 5(3):145-152.

Herwaldt, B. L., et al. 1991. Waterborne-disease outbreaks, 1989-1990. Morbidity and Mortality Weekly Report 40(SS-3):1-21.

James M. Montgomery Consulting Engineers Inc. 1985. Water Treatment Principles and Design. New York: John Wiley.

Jay, J. M. 1992. Microbiological food safety. Critical Reviews in Food Science and Nutrition 31(3):177-190.

Keeney, R., et al. 1978. Assessing the risk of an LNG terminal. Technology Review 81(1): 64-78.

Kumamoto, H., and E. Henley. 1996. Probabilistic Risk Assessment and Management for Engineers and Scientists. New York: Institute of Electrical and Electronics Engineers, Inc.

Lippy, E., and S. Waltrip. 1984. Waterborne disease outbreaks-1946-1980: a thirty-five year perspective. Journal of the American Water Works Association 76(2):60-67.

National Research Council (NRC). 1982. Quality Criteria for Water Reuse. Washington, D.C.: National Academy Press.

National Research Council (NRC). 1994. Ground Water Recharge Using Waters of Impaired Quality. Washington, D.C.: National Academy Press.

New York City's Advisory Panel on Waterborne Diseases Assessment. 1994. Report of New York City's Panel on Waterborne Disease Assessment. The New York City Department of Environmental Protection.

Notermans, S., et al. 1994. The HACCP concept: specification of criteria using quantitative risk assessment. Food Microbiology 11(5):397-408.

Raphael, J. M. 1962. Prediction of temperature in rivers and reservoirs. J. Power Div. Am. Soc. Civ. Engr. 99:475-1496.

Regli, S., et al. 1991. Modeling risk for pathogens in drinking water. Journal of the American Water Works Association 83(11):76-84.

Rose, J. B., C. N. Haas, and S. Regli. 1991. Risk assessment and the control of waterborne giardiasis. American Journal of Public Health 81:709-713.

Ross, R. K. 1995. Additional diseases to be reported. Physician's Bulletin. June (No. 398). County of San Diego.

Rutherford, G. W. 1992. Why-when-how to report communicable diseases. Medical Board of California Action Report 45(May):24.

Smith, R. L. 1994. Use of Monte Carlo simulation for human exposure assessment at a Superfund site. Risk Analysis 14(4):433-439.

Sontheimer, H. 1980. Experience with riverbank filtration along the Rhine River. Journal of the American Water Works Association 72(7):386.

Teutsch, S. M., and R. E. Churchill. 1994. Principles of Public Health Surveillance. New York: Oxford University Press.

Thacker, S. B., D. F. Stroup, R. G. Parrish, and H. A. Anderson. 1996. Surveillance in environmental public health: issues, systems, and sources. Am. J. Public Health 1996: 633-638.

Tilden, J., Jr., W. Young, A. M. McNamara, C. Custer, B. Boesel, M. A. Lambert-Fair, J. Majkowski, D. Vugia, S. B. Werner, J. Hollingsworth, and J. G. Morris, Jr. 1991. A new route of transmission for Escherichia coli: infection from dry fermented salami. Am. J. Public Health 86:1076-1077.

Velz, C. 1970. Applied Stream Sanitation. New York: John Wiley.