IV. Materials R&D—A Vision of the Scientific Frontier

The Science of Modern Technology

Paul Peercy

SEMI/SEMATECH

Electronic, Optical, and Magnetic Materials and Phenomena

We have seen numerous important unexpected discoveries in all areas of condensed-matter and materials physics in the decade since Physics Through the 1990s was published. Although these scientific discoveries are extremely impressive, perhaps equally impressive are the technological advances based on our ever-increasing understanding of the basic physics of materials along with our increasing ability to tailor the composition and structure of materials in a cost-effective manner. Today's technological revolution would not be possible without the continuing increase in our scientific understanding of materials and phenomena, along with the processing and synthesis required for high-volume, low-cost manufacturing. This article examines selected examples of the scientific and technological impact of electronic, optical, and magnetic materials and phenomena.

Technology based on electronic, optical, and magnetic materials is driving the information age through revolutions in computing and communications. With the miniaturization made possible by the invention of the transistor and the integrated circuit (IC), enormous computing and communication capabilities are becoming readily available worldwide. These technological capabilities enabled the Information Age and are fundamentally changing how we live, interact, and transact business. These technologies provide an excellent demonstration of the strong interdependence and interplay of science and technology. They have greatly expanded the tools and capabilities available to scientists and engineers in all areas of research and development, ranging from basic physics and materials research to other areas of physics and to such diverse fields as medicine and biotechnology.

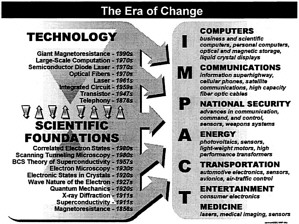

Incorporation of major scientific and technological advances into new products can take decades and often follows unpredictable paths. Selected technologies supported by the foundations of electronic, photonic, and magnetic phenomena and materials are illustrated in Figure 1. These technologies have enabled breakthroughs in virtually every sector of the national economy. The two-way interplay between foundations and technology is a major driving force in this field. The most recent fundamental advances and technological discoveries have yet to realize their potential.

Figure 1. Examples of how major scientific and technological advances have an impact on new products.

The Science of Information Age Technology

The predominant semiconductor technology today is the silicon-based integrated circuit. The silicon integrated circuit is the engine that drives the information revolution. For the past 30 years, the technology has been dominated by Moore's law, the statement that the density of transistors on a silicon integrated circuit doubles about every 18 months. The relentless reduction in transistor size and increase in circuit density have provided the increased functionality per unit cost that underlies the information revolution. Today's computing and communications capability would not be possible without the phenomenal 25 to 30 percent per year exponential growth in capability per unit cost since the introduction of the integrated circuit in about 1960. That sustained rate of progress has resulted in low-cost volume manufacturing of high-density memories with 64 million bits of memory on a chip and complex, high-performance logic chips with ~10 million transistors on a chip. This trend is projected to continue for the next several years.

NOTE: This article was prepared from written material provided to the Solid State Sciences Committee by the speaker.

If the silicon integrated circuit is the engine that powers the computing and communications revolution, optical fibers are the highways for the Information Age. Although fiber optics is a relatively recent entrant in the high technology arena, the impact of this technology is enormous and growing. It is now the preferred technology for transmission of information over long distances. There are already approximately 30 million km of fiber installed in the United States and an estimated 100 million km installed worldwide. Due in part to the faster than exponential growth of connections to the Internet, the installation of optical fiber worldwide is occurring at an accelerated rate of over 20 million km per year—more than 2,000 km/h, or around Mach 2. In addition, the rate of information transmission down a single fiber is increasing exponentially at a rate of a factor of 100 every decade. Transmission in excess of 1 terabit per second has been demonstrated in the research laboratory, and the time lag between laboratory demonstration and commercial system deployment is about 5 years.
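The growth figures quoted above can be cross-checked with a few lines of arithmetic. The sketch below is only an illustration: the function names are hypothetical, and the sea-level speed of sound used for the Mach comparison (about 1,225 km/h) is an assumption not stated in the text.

```python
import math

HOURS_PER_YEAR = 365.25 * 24          # 8,766 hours in an average year
SPEED_OF_SOUND_KMH = 1225.0           # assumption: sea level, about 15 C


def annual_factor_from_doubling(months_to_double):
    """Annual growth factor implied by a fixed doubling time in months."""
    return 2 ** (12 / months_to_double)


def annual_factor_from_decade(factor_per_decade):
    """Annual growth factor implied by a fixed growth factor per decade."""
    return factor_per_decade ** (1 / 10)


def doubling_time_years(annual_factor):
    """Doubling time in years for a given annual growth factor."""
    return math.log(2) / math.log(annual_factor)


# Fiber installation: 20 million km per year expressed as a speed.
fiber_km_per_hour = 20e6 / HOURS_PER_YEAR
fiber_mach = fiber_km_per_hour / SPEED_OF_SOUND_KMH

# Transistor density doubling every 18 months, as an annual factor.
density_growth = annual_factor_from_doubling(18)

# Single-fiber bit rate growing by a factor of 100 per decade.
bitrate_growth = annual_factor_from_decade(100)

print(f"fiber installation: {fiber_km_per_hour:.0f} km/h (Mach {fiber_mach:.2f})")
print(f"density growth per year: x{density_growth:.3f}")
print(f"bit-rate growth per year: x{bitrate_growth:.3f}")
print(f"bit-rate doubling time: {doubling_time_years(bitrate_growth):.2f} years")
```

Under these assumptions, a factor of 100 per decade corresponds to a doubling time of roughly a year and a half, so the single-fiber transmission rate grows at close to the same pace as transistor density under Moore's law.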

Compound semiconductor diode lasers provide the laser photons that are the vehicles that transport information along the optical information highways. Semiconductor diode lasers are also at the heart of optical storage and compact disk technology. In addition to their use in very-high-performance microelectronics applications, compound semiconductors have proven to be an extremely fertile field for advancing our understanding of fundamental physical phenomena. Exploiting decades of basic research, we are now beginning to be able to understand and control all aspects of compound semiconductor structures, from mechanical through electronic to optical, and to grow devices and structures with atomic layer control, in a few specific materials systems. This capability allows the manufacture of high-performance, high-reliability, compound semiconductor diode lasers that can be modulated at gigahertz frequencies to send information over the fiber-optical networks. High-speed semiconductor-based detectors receive and decode this information. These same materials provide the billions of light-emitting diodes sold annually for displays, free-space or short-range high-speed communication, and other applications. In addition, very-high-speed, low-power compound semiconductor electronics play a major role in wireless communication, especially for portable units and satellite systems.

Another key enabler of the information revolution is low-cost, low-power, high-density information storage that keeps pace with the exponential growth of computing and communication capability. Both magnetic and optical storage are in wide use. Very recently, the highest-performance magnetic storage/readout devices have begun to rely on giant magnetoresistance (GMR), a phenomenon that was discovered by building on more than a century of research in magnetic materials. Although Lord Kelvin discovered magnetoresistance in 1856, it was not until the early 1990s that commercial products using this technology were introduced. In the last decade, the condensed-matter and materials understanding converged with advances in our ability to deposit materials with atomic-level control to produce the GMR heads that were introduced in workstations in late 1997. It is hoped that, with additional research and development, spin valve and colossal magnetoresistance technology may be understood and applied to workstations of the future. This increased understanding, provided in part by our increased computational ability arising from the increasing power of silicon ICs, coupled with atomic-level control of materials, led to exponential growth in the storage density of magnetic materials analogous to Moore's law for transistor density in silicon ICs.

Future Directions and Research Priorities

Numerous outstanding scientific and technological research needs have been identified in electronic, photonic, and magnetic materials and phenomena. If those needs are met, it is anticipated that these technology areas will continue to follow their historical exponential growth in capability per unit cost for the next few years. Silicon integrated circuits are expected to follow Moore's law at least until the limits of optical lithography are reached, transmission bandwidth of optical fibers is expected to grow exponentially with advances in optical technology and the development of soliton propagation, and storage density in magnetic media is expected to grow exponentially with the maturation of GMR and development of colossal magnetoresistance in the not too distant future. Although these changes will have a major impact on computing and communications over the next few years, it is clear that extensive research will be required to produce new concepts and that new approaches must be developed to reduce research concepts to practice if these industries are to maintain their historical growth rate over the long term.

Continued research is needed to advance the fundamental understanding of materials and phenomena in all areas. More than a century of research in magnetic materials and phenomena has given us an understanding of many aspects of magnetism, but we still lack a comprehensive first-principles understanding of magnetism. By comparison, the technology underlying optical communication is very young. The past few years have seen enormous scientific and technological advances in optical structures, devices, and systems. New concepts such as photonic lattices, which are expected to have significant technological impact, are emerging. We have every reason to believe that this field will continue to advance rapidly with commensurate impact on communications and computing.

As device and feature sizes continue to shrink in integrated circuits, scaling will encounter fundamental physical limits. The feature sizes at which these limits will be encountered and their implications are not understood. Extensive research is needed to develop interconnect technologies that go beyond normal metal and dielectrics in the relatively near term. Longer term, technologies are needed to replace today's Si field-effect transistors. One approach that bears investigation is quantum state switching and logic as devices and structures move further into the quantum mechanical regime.

A major future direction is nanostructures and artificially structured materials, which was a general theme in all three areas. In all cases, artificially structured materials with properties not available in nature revealed unexpected new scientific phenomena and led to important technological applications. As sizes continue to decrease, new synthesis and processing technologies will be required. A particularly promising area is self-assembled materials. We need to expand research in self-assembled materials to address such questions as how to controllably create the desired one-, two-, and three-dimensional structures.

As our scientific understanding increases and synthesis and processing of organic materials systems mature, these materials are expected to increase in importance for optoelectronic, and perhaps electronic, applications. Many of the recent technological advances are the result of strong interdisciplinary efforts as research results from complementary fields are harvested at the interface between the fields. This is expected to be the case for organic materials; increased interdisciplinary efforts, for example between CMMP, chemistry, and biology, offer the promise of equally impressive advances in biotechnology.

Conclusion

In conclusion, we identify a few major scientific and technological questions that are still outstanding and call attention to research and development priorities.

Selected Major Unresolved Scientific and Technology Questions

- What technology will replace normal metals and dielectrics for interconnect in silicon ICs as speed continues to increase?
- What is beyond today's field-effect transistor-based Si technology?
- Can we create an all-optical communications/computing network?
- Can we understand magnetism on the mesoscales and nanoscales needed to continue to advance technology?
- Can we fabricate devices with 100 percent spin-polarized current injection?

Priorities

- Advance synthesis and processing techniques, including nanostructures and self-assembled one-, two-, and three-dimensional structures;
- Pursue quantum state logic;
- Exploit physics and materials science for low-cost manufacturing;
- Pursue the physics and chemistry of organic and other complex materials for optical, electrical, and magnetic applications;
- Develop techniques to magnetically detect individual electron and nuclear spins with atomic-scale resolution; and
- Increase partnerships and cross-education/communications among industry, university, and government laboratories.

Novel Quantum Phenomena in Condensed-Matter Systems

Steven M. Girvin

Indiana University

The various quantum Hall effects (QHEs) are arguably some of the most remarkable many-body phenomena discovered in the second half of the 20th century, comparable in intellectual importance to superconductivity and superfluidity. They are an extremely rich set of phenomena with deep and truly fundamental theoretical implications. The fractional effect, for which the 1998 Nobel Prize in Physics was awarded, has yielded fractional charge, spin, and statistics, as well as unprecedented order parameters. There are beautiful connections with a variety of different topological and conformal field theories studied as formal models in particle theory, each here made manifest by the twist of an experimental knob. Where else but in condensed-matter physics can an experimentalist change the number of flavors of relativistic chiral Fermions or set by hand the Chern-Simons coupling that controls the mixing angle for charge and flux in 2+1D electrodynamics?

Because of recent technological advances in molecular beam epitaxy and the fabrication of artificial structures, the field continues to advance with new discoveries even well into the second decade of its existence. Experiments in the field were limited for many years to simple transport measurements that indirectly determine charge gaps. However, recent advances have led to many successful new optical, acoustic, microwave, specific heat, and nuclear magnetic resonance (NMR) probes, which continue to advance our knowledge as well as raise intriguing new puzzles.

The QHE takes place in a two-dimensional electron gas subjected to a high magnetic field. In essence, it is a result of commensuration between the number of electrons, $N$, and the number of flux quanta, $N_\Phi$, in the applied magnetic field. The electrons undergo a series of condensations into new states with highly nontrivial properties whenever the filling factor $\nu = N/N_\Phi$ takes on simple rational values. The original experimental manifestation of the effect was the observation of an energy gap yielding dissipationless transport (at zero temperature) much like in a superconductor. The Hall conductivity in this dissipationless state is universal, given by $\sigma_{xy} = \nu e^2/h$, independent of microscopic details. As a result of this, it is possible to make a high-precision determination of the fine structure constant and to realize a highly reproducible quantum mechanical unit of electrical resistance, now used by standards laboratories around the world to maintain the ohm.
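To make the quantization concrete, the following minimal sketch evaluates the Hall resistance that corresponds to the universal conductivity above, taking it as the inverse of the Hall conductivity in the dissipationless state, together with the filling factor for a given electron density and field. The function names and the sample density and field are hypothetical, and the constants are the exact SI values of the Planck constant and the elementary charge.

```python
PLANCK_H = 6.62607015e-34             # Planck constant, J s (exact SI value)
ELEMENTARY_CHARGE = 1.602176634e-19   # elementary charge, C (exact SI value)


def hall_resistance_ohms(nu):
    """Quantized Hall resistance h / (nu e^2), the inverse of sigma_xy."""
    return PLANCK_H / (nu * ELEMENTARY_CHARGE ** 2)


def filling_factor(electron_density_per_m2, field_tesla):
    """Electrons per flux quantum: nu = N / N_Phi for a 2D electron gas."""
    flux_quantum = PLANCK_H / ELEMENTARY_CHARGE       # h/e, in webers
    flux_quanta_per_m2 = field_tesla / flux_quantum
    return electron_density_per_m2 / flux_quanta_per_m2


if __name__ == "__main__":
    for nu in (1, 2, 1 / 3):
        print(f"nu = {nu:.3f}: R_xy = {hall_resistance_ohms(nu):.3f} ohm")
    # Hypothetical sample: 1e15 electrons per m^2 in a 12.4 T field.
    print(f"filling factor: {filling_factor(1e15, 12.4):.3f}")
```

The resistance depends only on the filling factor and two fundamental constants, which is why the effect can serve as a resistance standard regardless of sample details.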

The integer quantum Hall effect owes its origin to an excitation gap associated with the discrete kinetic energy levels (Landau levels) in a magnetic field. The fractional quantum Hall effect has its origins in very different physics of strong Coulomb correlations, which produce a Mott-insulator-like excitation gap. In some ways, however, this gap is more like that in a superconductor, because it is not tied to a periodic lattice potential. This permits uniform charge flow of the incompressible electron liquid and hence a quantized Hall conductivity.

The microscopic correlations leading to the excitation gap are captured in a revolutionary wave function developed by R.B. Laughlin that describes an incompressible quantum liquid. The charged quasiparticle excitations in this system are “anyons” carrying fractional statistics intermediate between bosons and Fermions and carrying fractional charge. This sharp fractional charge, which despite its bizarre nature has always been on solid theoretical ground, has recently been directly observed in two different ways. The first is an equilibrium thermodynamic measurement using an ultrasensitive electrometer built from quantum dots. The second is a dynamical measurement using exquisitely sensitive detection of the shot noise for quasiparticles tunneling across a quantum Hall device.

NOTE: This article was prepared from written material provided to the Solid State Sciences Committee by the speaker.

Quantum mechanics allows for the possibility of fractional average charge in both a trivial way and a highly nontrivial way. As an example of the former, consider a system of three protons forming an equilateral triangle and one electron tunneling among the 1S atomic bound states on the different protons. The electronic ground state is a symmetric linear superposition of quantum amplitudes to be in each of the three different 1S orbitals. In this trivial case, the mean electron number for a given orbital is 1/3. This, however, is a result of statistical fluctuations because a measurement will yield electron number 0 two-thirds of the time and electron number 1 one-third of the time. These fluctuations occur on a very slow time scale and are associated with the fact that the electronic spectrum consists of three very nearly degenerate states corresponding to the different orthogonal combinations of the three atomic orbitals.
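The trivial three-proton case can be written out explicitly. The sketch below assumes a single tunneling amplitude $t > 0$ between each pair of 1S orbitals $|1\rangle$, $|2\rangle$, $|3\rangle$ and neglects their overlap; the symbols $t$, $E_{1S}$, and $n_i$ are introduced here for illustration and do not appear in the original text.

```latex
\begin{align}
H &= E_{1S} \sum_{i=1}^{3} |i\rangle\langle i|
     \;-\; t \sum_{i \neq j} |i\rangle\langle j| , \\
|\psi_0\rangle &= \tfrac{1}{\sqrt{3}}
     \bigl( |1\rangle + |2\rangle + |3\rangle \bigr),
     \qquad E_0 = E_{1S} - 2t , \\
E_{1} &= E_{2} = E_{1S} + t
     \quad \text{(the two combinations orthogonal to } |\psi_0\rangle \text{)} , \\
\langle n_i \rangle &= |\langle i | \psi_0 \rangle|^2 = \tfrac{1}{3} , \\
\langle n_i^2 \rangle - \langle n_i \rangle^2
     &= \tfrac{1}{3} - \tfrac{1}{9} = \tfrac{2}{9} .
\end{align}
```

Because $n_i$ takes only the values 0 and 1, the nonzero variance shows that the mean of 1/3 is an average over fluctuating outcomes rather than a sharp value. In this model the level splitting is $3t$, which becomes small when the protons are far apart, consistent with the nearly degenerate spectrum and slow fluctuations described above.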

The ν = 1/3 QHE has charge 1/3 quasiparticles but is profoundly different from the trivial scenario just described. An electron added to a ν = 1/3 system breaks up into three charge 1/3 quasiparticles. If the locations of the quasiparticles are pinned by (say) an impurity potential, the excitation gap still remains robust and the resulting ground state is nondegenerate. This means that a quasiparticle is not a place (like the proton above) where an extra electron spends one-third of its time. The lack of degeneracy implies that the location of the quasiparticle completely specifies the state of the system, that is, implies that these are fundamental elementary particles with charge 1/3. Because there is a finite gap, this charge is a sharp quantum observable that does not fluctuate (for frequencies below the gap scale).

The message here is that the charge of the quasiparticles appears sharp to an observer as long as the gap is large compared with the energy scale at which the observer probes the system. If the gap were 10 GeV instead of 10 K, we (living at room temperature) would have no trouble accepting the concept of fractional charge.

Magnetic Order of Spins and Pseudospins

At certain filling factors (ν = 1, in particular) quantum Hall systems exhibit spontaneous magnetic order. For reasons peculiar to the band structure of the GaAs host semiconductor, the external magnetic field couples exceptionally strongly to the orbital motion (giving a large Landau level splitting) and exceptionally weakly to the spin degrees of freedom (giving a very small Zeeman gap). The resulting low-energy spin degrees of freedom of this ferromagnet have some rather novel properties that have recently begun to be probed by NMR, specific heat, and other measurements.

Because the lowest spin state of the lowest Landau level is completely filled at ν = 1, the only way to add charge is with reversed spin. However, because the exchange energy is large and prefers locally parallel spins (and because the Zeeman energy is small), it is cheaper to partially turn over several spins forming a smooth topological spin “texture.” Because this is an itinerant magnet with a quantized Hall conductivity, it turns out that this texture (called a skyrmion by analogy with the corresponding object in the Skyrme model of nuclear physics) accommodates precisely one extra unit of charge. NMR Knight shift measurements have confirmed the prediction that each charge added (or removed) from the ν = 1 state flips over several (~ 4 to 30 depending on the pressure) spins. In the presence of skyrmions, the ferromagnetic order is no longer collinear, leading to the possibility of additional low-energy spin wave modes, which remain gapless even in the presence of the Zeeman field (somewhat analogous to an antiferromagnet). These low-frequency spin fluctuations have been indirectly observed through a dramatic enhancement of the nuclear spin relaxation rate 1/T1. In fact, under some conditions T1 becomes so short that the nuclei come into thermal equilibrium with the lattice via interactions with the inversion layer electrons. This has recently been observed experimentally through an enormous enhancement of the specific heat by more than five orders of magnitude.

Spin is not the only internal degree of freedom that can spontaneously order. There has been considerable recent experimental progress in overcoming technical difficulties in the MBE fabrication of high-quality multiple-well systems. It is now possible, for example, to make a pair of identical electron gases in quantum wells separated by a distance (~ 100 Å) comparable to the electron spacing within a single quantum well. Under these conditions, strong interlayer correlations can be expected. One of the peculiarities of quantum mechanics is that, even in the absence of tunneling between the layers, it is possible for the electrons to be in a coherent state in which their layer index is uncertain. To understand the implications of this, we can define a pseudospin that is up if the electron is in the first layer and down if it is in the second. Spontaneous interlayer coherence corresponds to spontaneous pseudospin magnetization lying in the XY plane (corresponding to a coherent mixture of pseudospin up and down). If the total filling factor for the two layers is ν = 1, then the Coulomb exchange energy will strongly favor this magnetic order just as it does for real spins as discussed above. This long-range transverse order has been observed experimentally through the strong response of the system to a weak magnetic field applied in the plane of the electron gases in the presence of weak tunneling between the layers.

Another interesting aspect of two-layer systems is that, despite their extreme proximity, it is possible to make separate electrical contact to each layer and perform drag experiments in which current in one layer induces a voltage in the other due to Coulomb or phonon-mediated interactions.

Stacking together many quantum wells gives an artificial three-dimensional structure analogous to certain organic Bechgaard salts in which the QHE has been observed. There is growing interest in the bulk and edge (“surface”) states of such three-dimensional systems and in the nature of possible Anderson localization transitions.

These phenomena and numerous others, which cannot be mentioned because of space limitations, have provided a wonderful testing ground for our understanding of strongly correlated quantum ground states that do not fit into the old framework of Landau's Fermi liquid picture. As such, they are providing valuable hints on how to think about other strongly correlated systems such as heavy Fermion materials and high-temperature superconductors.

Nonequilibrium Physics

James S. Langer

University of California, Santa Barbara

Nonequilibrium physics is concerned with systems that are not in mechanical or thermal equilibrium with their surroundings. Examples include flowing fluids under pressure gradients, solids deforming or fracturing under external stresses, and quantum systems driven by magnetic fields. These systems often lead to very familiar patterns such as snowflakes, dendritic microstructures in alloys, or chaotic motions in turbulent fluids. Many of these are familiar phenomena governed by well-understood equations of motion (e.g., the Navier-Stokes equations), but in some of the most interesting cases, the implications of these equations are not understood.

The Brinkman report (Physics Through the 1990s, National Academy Press, Washington, D.C., 1986) recognized the significance of the emerging field of nonequilibrium physics but missed some of the most important topics of current research such as friction, fracture, and granular materials. Notable progress has been made in the last decade regarding patterns in convecting and vibrating fluids, reaction-diffusion systems, aggregation, and membrane morphology.

The patterns observed in nonequilibrium systems are especially sensitive to small perturbations. Weather phenomena are a prime example. Long-range weather forecasting requires precise characterization of current and past weather conditions. As such characterization becomes more detailed, it is possible to predict future patterns with increasing accuracy. One task of nonequilibrium physics is quantifying the relationship between precision and predictability.
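The link between precision and predictability can be illustrated with a toy model. The sketch below uses the logistic map as a stand-in for a chaotic system; it is an assumption chosen for simplicity, not a weather model and not something taken from the original text, and the function names and parameter values are hypothetical.

```python
def logistic_trajectory(x0, steps, r=4.0):
    """Iterate the logistic map x -> r x (1 - x) and return all values."""
    xs = [x0]
    for _ in range(steps):
        xs.append(r * xs[-1] * (1.0 - xs[-1]))
    return xs


def steps_until_divergence(x0, initial_error, tolerance=0.1,
                           max_steps=200, r=4.0):
    """Count iterations until two nearby starting points differ by more
    than `tolerance`. Returns None if that never happens in max_steps."""
    a, b = x0, x0 + initial_error
    for step in range(1, max_steps + 1):
        a = r * a * (1.0 - a)
        b = r * b * (1.0 - b)
        if abs(a - b) > tolerance:
            return step
    return None


if __name__ == "__main__":
    # Better knowledge of the initial state buys a longer forecast horizon.
    for error in (1e-3, 1e-6, 1e-9, 1e-12):
        horizon = steps_until_divergence(0.2, error)
        print(f"initial error {error:.0e}: usable for {horizon} steps")
```

In this toy system small errors grow roughly exponentially, so each large improvement in initial precision extends the usable horizon by only a modest, roughly constant number of steps.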

In spite of decades of study, the origin of ductility in materials remains a key unsolved problem of nonequilibrium physics. Traditional explanations based on dislocations do not explain observations such as ductility in glassy materials. We lack a good theory of ductile yielding in situations where stresses and strains vary rapidly in space and time.

One of the most important recent observations is that fast brittle cracks undergo materials-specific instabilities leading to roughness on the fracture surface. Stick-slip friction is also observed on large scales, for example, in earthquake dynamics. The nature of these processes, including the issue of lubricated friction, is a key problem of nonequilibrium physics.

Granular materials are an example of a familiar class of materials of considerable industrial importance that have escaped scrutiny by physicists until recently. These materials are highly inelastic in their interactions. When granular materials cohere slightly, they can behave like viscous fluids as in saturated soil. If the coherence is strong, then we have sandstone or concrete, which behaves more like ordinary solids. If complex dynamics are added, we have foams or dense colloidal suspensions. The nature of lubrication is also relevant to these problems.

These and many other open problems show that the frontiers of physics include many very familiar phenomena. In many cases, these problems are of great importance to materials properties and industrial processes.

Soft Condensed Matter

V. Adrian Parsegian

National Institutes of Health

Adrian Parsegian opened his remarks on soft condensed matter research with the question, “When was American poetry born?” He quoted William Saroyan's response, “When people not trained in poetry began writing it.” No one can imagine Walt Whitman's poetry being written in England or anywhere except America. It was “an instantaneous flop” because people did not think it was even “poetry.” The clear implication for the field of condensed-matter and materials physics is that soft materials physics will flourish only after much initial skepticism and even resistance are overcome.

Advancing our understanding of soft materials requires an unprecedented combination of traditionally distinct, and noninteracting, fields. One of the greatest challenges is the simple recognition and appreciation of the disparate skills required for attacking problems with relentlessly increasing levels of complexity. For example, as the genome project unfolds, uncovering seemingly endless genetic information, synthetic chemists and physicists face the daunting task of producing and understanding the complex interactions that govern biological function occurring at length scales ranging from atomic to supramolecular dimensions. Such problems may not succumb to the conventional reductive methods familiar to most physicists. Modern instrumentation such as third- and even fourth-generation synchrotrons, high-flux neutron sources, and high-resolution nuclear magnetic resonance spectrometers provide powerful means for exploring these issues. However, are these potent tools the key to uncovering the secrets of biology? “What constitutes understanding in this business?” asks Parsegian, adding, “An explanation that satisfies the physicist may be thoroughly irrelevant to the gene therapist.” Established approaches toward education and funding and even attitudes about industrial interactions must change if the physics community is to have a demonstrable and meaningful impact on this burgeoning field.

Many of the macroscopic properties of soft materials are foreign to the condensed-matter physicist. Softness is substituted for hardness as a desirable property; malleability, extensibility, and compliance replace stiffness and shape retention; and fragility is often more valuable than durability. These themes are stimulating a new type of physics that relies on the same bedrock principles enumerated in elementary physics education but must be augmented by the targeted interdisciplinary studies of medicine, food, polymers, and many others.

The field of polymers provides a bridge between conventional physics and the biologically oriented sciences and engineering. Both natural and synthetic macromolecules offer numerous research and development opportunities. Polysaccharides and milk proteins are identified by Parsegian as examples of ubiquitous and naturally occurring macromolecules that may be formulated into novel items of commerce for use in foods and environmentally benign—even biodegradable—plastics. Advances in synthetic chemistry during the past decade have greatly expanded our ability to tailor molecular architecture, even in the simplest of polymers known as polyolefins, prepared from just carbon and hydrogen. This class of synthetic polymers makes up roughly 60 percent of the entire synthetic polymer market. Yet the commercial consequences of varying the number and length of side branches, grafts, and block sequencing on the melt flow properties, crystallization kinetics, and ultimate mechanical properties are just now being realized. Dendrimers, precisely and highly branched giant molecules that can assume a nearly perfect spherical topology, offer fresh strategies for manipulating polymer rheology and may provide ideal substrates for delivering drugs to the human body.

Parsegian noted that polyelectrolytes are an especially important class of materials, since almost all forms of biologically relevant matter contain macromolecules with some degree of ionic charging. Yet polyelectrolytes present some of the toughest challenges to condensed matter physics, convoluting electrostatic interactions, self-avoiding chain statistics, and traditional solution thermodynamics with self-assembly into higher-order structures that rely on tertiary and quaternary interactions.

Application of physics to biological problems is not a new phenomenon. Physicists have made significant contributions to the field of protein folding for nearly 35 years. In fact, physicists have defined the “language” of the field and created exquisite tools for simulating and even “watching” individual molecules. For example, optical tweezers techniques permit quantification of the force versus extension relationship of individual biomolecules such as DNA. These studies do not yet amount to a clinical analysis of protein-folding and prion-related illnesses such as Alzheimer's and “Mad Cow” disease.

“Why are physicists frequently off the biological radar screen?” asks Parsegian.

“In large part they are seen as insular and parochial,” is the response he has received to this question. He points out key differences in how physicists approach problem solving through a simple example: understanding the force required to separate two interacting biomolecules, as might be encountered in cell adhesion. This delicate problem depends on the time scale of the experiment as much as the force applied and the displacement measured. Given enough time, the molecules will disengage without any applied force. Physicists must resist the temptation to connect or reconfigure anything biological into a conventional, solved, physics problem, in this case treating the biomolecular interactions with traditional static intermolecular potentials.
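The time-scale dependence described above can be sketched with a simple kinetic picture. The example below assumes a Bell-type model, in which an applied force lowers the bond lifetime exponentially; this model is a common phenomenological choice brought in here for illustration, not something stated in the original remarks, and the function names and the parameter values (a 1 s zero-force lifetime and a 0.5 nm barrier distance) are hypothetical.

```python
import math

BOLTZMANN_K = 1.380649e-23   # Boltzmann constant, J/K (exact SI value)


def bond_lifetime_s(force_newton, zero_force_lifetime_s,
                    barrier_distance_m, temperature_k=298.0):
    """Bell-type estimate: lifetime falls exponentially with applied force."""
    thermal_energy = BOLTZMANN_K * temperature_k
    return zero_force_lifetime_s * math.exp(
        -force_newton * barrier_distance_m / thermal_energy)


def survival_probability(elapsed_s, force_newton, zero_force_lifetime_s,
                         barrier_distance_m, temperature_k=298.0):
    """Probability that the bond is still intact after elapsed_s seconds."""
    lifetime = bond_lifetime_s(force_newton, zero_force_lifetime_s,
                               barrier_distance_m, temperature_k)
    return math.exp(-elapsed_s / lifetime)


if __name__ == "__main__":
    tau0, x_b = 1.0, 0.5e-9   # hypothetical bond parameters
    for force_pn in (0, 10, 50):
        tau = bond_lifetime_s(force_pn * 1e-12, tau0, x_b)
        print(f"{force_pn:>3} pN: lifetime {tau:.3g} s")
    # With no force at all, the bond still fails if one waits long enough.
    print(survival_probability(10.0, 0.0, tau0, x_b))
```

In this picture there is no single "separation force": the force at which the bond appears to break depends on how long the experiment waits, which is the point of the cell-adhesion example.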

Rectifying these shortcomings, that is, making physics visible and relevant to the biological sciences, will require education on the part of biologists as well as physicists. Biologists and medical researchers must understand the utility and importance of physics to their work. For example, the sophisticated instrumentation that is often the source of so many scientific revelations (e.g., three-dimensional NMR and x-ray imaging) often comes from fundamental advances in physics. Medical doctors and researchers should understand the origins of their equipment and appreciate the underlying principles of operation. Parsegian offered an assortment of recommendations for improving the current situation. Basic research, which fuels practical developments in industry and medicine, would benefit from the following innovations: (1) grant mechanisms that encourage interdisciplinary work; (2) special grants that circumvent the double jeopardy of being judged both as a biologist and a physicist; (3) fellowships for physicists, including theorists, to work in biological laboratories; and (4) maximized contact between university researchers and industrial scientists, especially those from the chemical, medical, and pharmaceutical industries.

Changes in education were also prescribed. New physics courses must be developed that are targeted at biologists; and physicists should be trained in chemistry, biochemistry, and molecular biology. Introductory physics courses must begin to emphasize soft systems in addition to the traditional curriculum. There should be “bilingual” textbooks, aimed at both physicists and biologists. Summer schools with laboratories for scientists at all stages of their careers and interdisciplinary workshops can be established. In short, the field of physics should spread itself out from the confines of physics departments, while broadening its horizons to encompass the emerging exciting world of soft matter.

Fractional Charges and Other Tales from Flatland

Horst Störmer

Bell Laboratories and Columbia University

Flatland is two-dimensional space. In nature it is found at surfaces or at interfaces. The quantum Hall effect (QHE) and fractional quantum Hall effect (FQHE) are properties of electrons confined to the interface region of semiconductor quantum wells. The electrons can move along the two-dimensional surface of the interface but are confined in the third direction. A Nobel Prize was awarded to Klaus von Klitzing in 1985 for the discovery of the QHE, and Störmer, D.C. Tsui, and Robert Laughlin shared the 1998 Nobel Prize in Physics for their discovery of the FQHE. This research area remains active, with many new ideas and discoveries.

E.H. Hall discovered in 1879 that a transverse voltage V_xy appears when a magnetic field B is imposed perpendicular (z-direction) to the direction of electrical current. The voltage is proportional to the current I_x in the layer. The ratio V_xy/I_x = R_xy defines the transverse resistance. The QHE is the observation of plateaus in the transverse resistance when measured as a function of magnetic field. These plateaus occur when Landau levels are completely filled with electrons. During these plateaus, the longitudinal resistance (R_xx = V_xx/I_x) appears to vanish: it actually declines to a very small value. The plateau resistances are integer fractions of the fundamental value h/e² = 25,812 Ω, that is, h/e² divided by the number of filled Landau levels, where h is Planck's constant and e is the unit of charge. The plateaus have the same value in each sample. They have become the new international standard of resistance.
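The plateau values can be checked numerically. The short Python sketch below assumes the standard relation R_xy = h/(i e²) for i completely filled Landau levels and uses the exact SI values of h and e; the function name is illustrative and does not come from the talk.

```python
# Exact SI values (2019 redefinition) of Planck's constant and the elementary charge.
PLANCK_H = 6.62607015e-34            # J s
ELEMENTARY_CHARGE = 1.602176634e-19  # C


def hall_plateau_resistance(filled_levels: int) -> float:
    """Transverse resistance (ohms) on the plateau with `filled_levels` full Landau levels.

    Assumes the standard integer QHE relation R_xy = h / (i * e^2).
    """
    if filled_levels < 1:
        raise ValueError("filled_levels must be a positive integer")
    return PLANCK_H / (filled_levels * ELEMENTARY_CHARGE ** 2)


if __name__ == "__main__":
    # Print the first few plateau resistances.
    for i in range(1, 5):
        print(f"i = {i}: R_xy = {hall_plateau_resistance(i):.1f} ohm")
```

For i = 1 the function returns the fundamental value h/e², about 25,812.8 Ω; higher plateaus are this value divided by the integer i.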

Störmer, Tsui, and Gossard continued the measurements of the Hall effect to very high values of magnetic field. They discovered additional plateaus in the transverse resistance. These plateaus occur at values that imply that the Landau levels are fractionally occupied: 1/3, 2/5, and so on. The experiments continue, with samples of increasing purity, at lower temperature, and with higher magnetic field. Low-temperature values of the mobility in GaAs/AlGaAs wells are now μ = 2 × 10⁷ cm²/(V·s). The mean free path of the electrons is on the order of 100 × 10⁻⁶ m. In these recent measurements, the list of fractions is quite large, with unlikely numbers such as 5/23 appearing.
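The quoted mobility and mean free path can be related by a rough estimate. The sketch below assumes a simple Drude picture at zero magnetic field, in which the mean free path is ħ k_F μ / e with k_F = √(2πn) for a spin-degenerate two-dimensional electron gas. The sheet density of 10¹¹ cm⁻² is an assumed, typical-order value that is not given in the text, and the function name is hypothetical.

```python
import math

HBAR = 1.054571817e-34               # reduced Planck constant, J s
ELEMENTARY_CHARGE = 1.602176634e-19  # C


def mean_free_path_m(mobility_cm2_per_vs: float, density_per_cm2: float) -> float:
    """Drude estimate of the mean free path (metres) in a 2D electron gas.

    l = v_F * tau = hbar * k_F * mu / e, with k_F = sqrt(2 * pi * n).
    The effective mass cancels out of this expression.
    """
    mobility = mobility_cm2_per_vs * 1e-4   # convert cm^2/(V s) to m^2/(V s)
    density = density_per_cm2 * 1e4         # convert cm^-2 to m^-2
    k_fermi = math.sqrt(2.0 * math.pi * density)
    return HBAR * k_fermi * mobility / ELEMENTARY_CHARGE


if __name__ == "__main__":
    # Mobility from the text; the density is an assumption for illustration only.
    path = mean_free_path_m(mobility_cm2_per_vs=2e7, density_per_cm2=1e11)
    print(f"mean free path ~ {path * 1e6:.0f} micrometres")
```

With these inputs the estimate comes out at roughly 100 micrometres, consistent with the order of magnitude quoted above.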

Why are there fractions? The two-dimensional gas of electrons becomes highly correlated. It is difficult to imagine a many-body system that is as simple and as profound. At these high values of magnetic field, the field forces the electrons into circular orbits of small radius. The separation between electrons is much larger than the diameter of the orbits. The electrons are becoming localized into a kind of Wigner crystal. However, their motion is unusual, because the magnetic field quenches their kinetic energy. Their correlation is entirely due to their mutual Coulomb interaction. The fractions indicate the existence of highly correlated states that occur at these fractional fillings. The topic is fascinating to theoretical physicists, who try to explain the origin of all of these states.

The most important fraction is 1/3. It was discovered first and has a large plateau in the Hall resistance. Each quantum of flux can be considered to be a vortex in the plane. For the 1/3 state, there are three vortices for each electron. The three vortices get attached to the electron and form a quasiparticle called a “composite.” For the 1/3 state, it is a “composite Boson,” and the collective state is due to a Bose-Einstein condensation of these quasiparticles. Noise measurements in the resistivity confirm that the charge on the current carriers is actually e/3. The energy required to excite an electron out of the correlated state is ∆E = 10 K in temperature units. This energy is large compared with the thermal energy available, because the experiments are performed at a small fraction of a degree.
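The count of three vortices per electron follows from the filling fraction. The sketch below assumes the standard definition ν = n h/(e B), so that the number of flux quanta per electron is 1/ν. The sheet density used in the example is an assumed value, not one taken from the text, and the function names are illustrative.

```python
from fractions import Fraction

PLANCK_H = 6.62607015e-34            # J s
ELEMENTARY_CHARGE = 1.602176634e-19  # C


def flux_quanta_per_electron(filling: Fraction) -> Fraction:
    """Number of flux quanta (vortices) per electron at filling fraction nu."""
    return 1 / filling


def field_for_filling(density_per_m2: float, filling: Fraction) -> float:
    """Magnetic field (tesla) at which a 2D gas of given density reaches filling nu.

    Uses nu = n * h / (e * B), i.e. B = n * h / (e * nu).
    """
    return density_per_m2 * PLANCK_H / (ELEMENTARY_CHARGE * float(filling))


if __name__ == "__main__":
    one_third = Fraction(1, 3)
    print("flux quanta per electron:", flux_quanta_per_electron(one_third))
    # Assumed density of 1e15 m^-2 (1e11 cm^-2), for illustration only.
    print(f"field at nu = 1/3: {field_for_filling(1e15, one_third):.1f} T")
```

For the assumed density, the 1/3 state falls at a field of roughly 12 T, which illustrates why very high magnetic fields are needed to reach the fractional regime.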

At the fraction 1/2, the quasiparticles are “composite Fermions,” which cannot form a condensate. There are no plateaus at this fraction, although it is speculated that the Fermions form a superfluid state akin to the Bardeen-Cooper-Schrieffer state in a superconductor. This pairing of Fermions is thought to explain the features of the 5/2 state in particular. For fractions written as the ratio of two integers q/p, even values of p correspond to composite Fermions and odd values of p to composite Bosons.
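The parity rule stated above is easy to apply to the fractions mentioned in this summary. The sketch below only encodes that rule as given (even denominator: composite Fermion; odd denominator: composite Boson) and is not a model of the underlying physics; the function name is hypothetical.

```python
from fractions import Fraction


def composite_type(filling: Fraction) -> str:
    """Classify a filling fraction q/p by the parity of its denominator p."""
    if filling.denominator % 2 == 0:
        return "composite Fermion"
    return "composite Boson"


if __name__ == "__main__":
    # Fractions mentioned in the text.
    for nu in (Fraction(1, 3), Fraction(2, 5), Fraction(1, 2),
               Fraction(5, 2), Fraction(5, 23)):
        print(f"{nu}: {composite_type(nu)}")
```

Fraction reduces each ratio to lowest terms, so the parity test is always applied to the reduced denominator.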

New fractions continue to be discovered. The graph of the transverse resistance appears to be a “devil's staircase” of an infinite number of steps of irregular width. Physicists have always been fascinated by the highly correlated electron gas. There is no more highly correlated system of electrons than is found in the FQHE.