1

The Challenge of Earthquake Science

Few natural events disrupt human society more than large earthquakes. Civilization depends on a massive “built environment” anchored in the Earth’s active crust, framing a habitat extremely vulnerable to seismic shaking. To cope with the all-too-frequent destruction caused by earthquakes, people have long sought to improve their practical knowledge about where and when such events might occur, and what happens when they do. Science has shown that seismic activity can be understood in terms of a basic machinery of deformation that shapes the face of the planet. Consequently, the pragmatic inquiry into the causes and effects of earthquakes has become increasingly fused with the quest for a more fundamental understanding of the geologically active Earth.

This report surveys all aspects of earthquake science, basic and applied, from ancient times to the present day (1). Despite rapid progress in the latter part of the twentieth century, the study of earthquakes, like the science of many other complex natural systems, is still in its juvenile stages of exploration and discovery. The brittleness and opacity of the deforming crust have made progress arduous and, in some respects, slower than in the study of the Earth’s oceans and atmosphere. However, in just the last decade, new instrumental networks for recording seismic waves and geodetic motions have mushroomed across the planet, and new methods for deciphering the geological record of earthquakes have been applied to active faults in many tectonic environments. High-performance computing is now furnishing the means to process massive streams of observations and, through numerical simulation, to quantify many aspects of earthquake behavior that are completely resistant to theoretical manipulation and manual calculations. These new research capabilities are transforming the field from a haphazard collection of disciplinary activities to a more coordinated “system-level” science, one that seeks to describe seismic activity not just in terms of individual events, but as an evolutionary process involving dynamic interactions within networks of faults.

The scientific challenge is to leverage these advances into an understanding of earthquake phenomena that is both profound and practical. The research needed to move toward these objectives is the focus of this report. The National Earthquake Hazards Reduction Program, the mainstay for federal earthquake research over the past 25 years (Appendix A), has opened many areas of fruitful inquiry. New possibilities are arising from the system-level approach that now organizes the study of active faulting and crustal deformation. In appraising research opportunities, the Committee on the Science of Earthquakes has sought to keep in focus the rationale for future earthquake research from four complementary perspectives: (1) the need to improve seismic safety and performance of the built environment, especially in highly exposed urban areas; (2) the requirements for disseminating information rapidly during earthquake crises; (3) the fresh opportunities for exciting basic science, particularly in the context of current research on complex natural systems; and (4) the responsibility for educating people at all levels of society about the active Earth on which they live. These perspectives are summarized below.

1.1 SEISMIC SAFETY AND PERFORMANCE

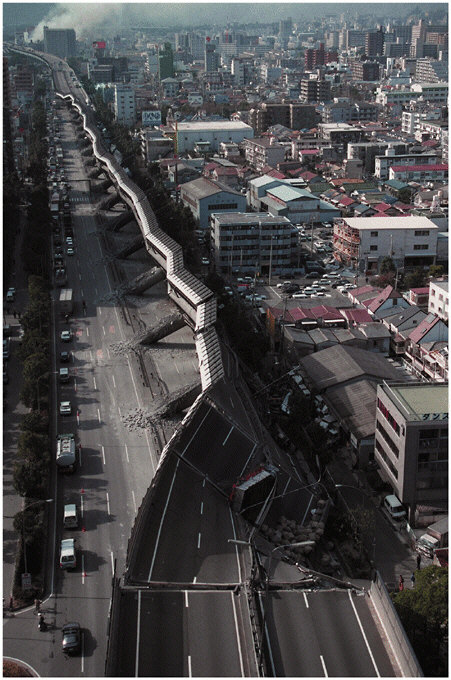

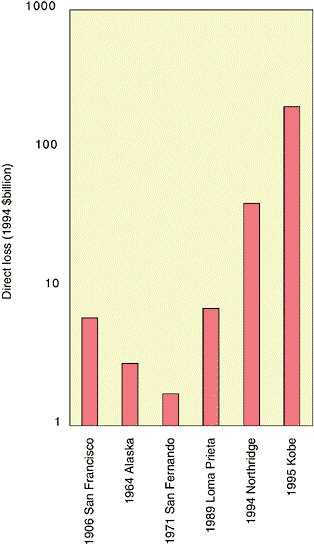

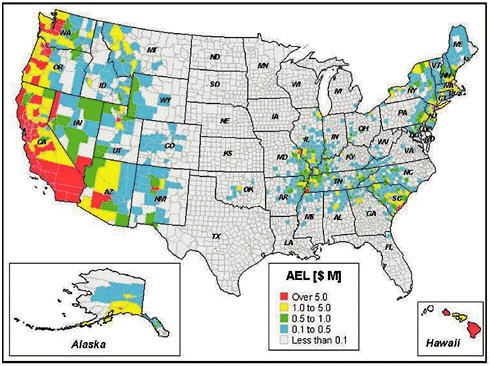

Earthquakes are hazards primarily because strong ground shaking destroys things that people have constructed—buildings, transportation lifelines, and communication systems (Figure 1.1). Earthquakes are also responsible for secondary, though often very damaging, effects such as soil liquefaction, landslides, tsunamis, and fires. Over the last century, earthquakes have caused an average of 10,000 deaths per year worldwide and hundreds of billions of dollars in economic losses. The United States has seen less seismic devastation than many other countries, primarily owing to its lower population density and superior building construction (2). Nevertheless, the annualized long-term loss due to U.S. earthquakes is currently estimated at $4.4 billion per year (3), and this figure appears to be rising rapidly, despite continuing improvements in building codes and structural design (Figure 1.2). California leads with the highest risk, but the problem is truly national: 38 other states face substantial earthquake hazards, including 46 million people in metropolitan areas at moderate to high risk outside of California (Figure 1.3).

FIGURE 1.1 Scene of destruction in Kobe, Japan, from the 1995 Hyogo-ken Nanbu earthquake (magnitude 6.9), showing a collapsed section of the Hanshin Expressway. This event killed at least 5500 people, injured more than 26,000, and was responsible for approximately $200 billion in direct economic losses. SOURCE: Pan-Asia Newspaper Alliance.

FIGURE 1.2 Direct economic losses, given in inflation-adjusted 1994 dollars on a logarithmic scale, from some major earthquakes in the United States and Japan. The plot shows a near-exponential rise in the losses caused by urban earthquakes of approximately equal size from 1971 to 1995. SOURCE: Compiled from Office of Technology Assessment, Reducing Earthquake Losses, OTA-ETI-623, U.S. Government Printing Office, Washington, D.C., 162 pp., 1995; R.T. Eguchi, J.D. Goltz, C.E. Taylor, S.E. Chang, P.J. Flores, L.A. Johnson, H.A. Seligson, and N.C. Blais, Direct economic losses in the Northridge earthquake: A three-year post-event perspective, Earthquake Spectra, 14, 245-264, 1998; National Institute of Standards and Technology, January 17, 1995 Hyogoken-Nanbu (Kobe) Earthquake: Performance of Structures, Lifelines, and Fire Protection Systems, NIST Special Publication 901, Washington, D.C., 573 pp., July 1996.

FIGURE 1.3 Current annualized earthquake losses (AEL) in millions of dollars, estimated by the Federal Emergency Management Agency (FEMA) on a county-by-county basis using the HAZUS method. Twenty-four states have an AEL greater than $10 million. The total AEL estimated for the entire United States is about $4.4 billion. SOURCE: FEMA, HAZUS 99 Estimated Annualized Earthquake Losses for the United States, FEMA Report 366, Washington, D.C., 33 pp., February 2001.

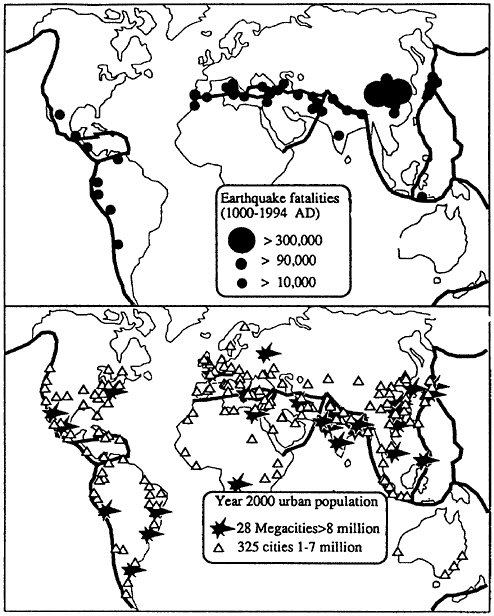

The prediction of losses from future natural disasters is notoriously uncertain (4), but more accurate projections are being developed with better technical input from earthquake science, engineering, and economics. Scenarios constructed using loss estimation tools have begun to quantify the magnitude of the risk that now faces large population centers in earthquake-prone regions. According to a 1995 report (5), a repeat of the 1906 San Francisco earthquake would likely result in a total loss of $170 billion to $225 billion (in 1994 dollars). The comparable loss for the 1994 Northridge earthquake, the costliest U.S. disaster on record, was roughly one-fifth as large. The direct losses in a repeat of the 1923 Kanto earthquake, near Tokyo, would be truly staggering ($2 trillion to $3 trillion), and the indirect economic costs could be much higher (6). Moreover, many parts of the Pacific Rim and other earthquake-prone regions are urbanizing rapidly (Figure 1.4) and will face risks similar to those of existing megacities, such as Los Angeles, Istanbul, and Singapore. One expert has speculated that urbanization in earthquake-prone areas of the developing world may result in a four- to tenfold increase in the annual fatality rate over the next 30 years, reversing a long-standing trend (7).

The losses expected from future events (risk) depend on the population and amount of infrastructure concentrated in a given area (exposure) and the vulnerability of the built environment (fragility), as well as on the hazard itself. The seismic hazard levels in Alaska and California are both high, but California’s exposure is much greater, which yields a much larger total risk (Figure 1.3). The growth in losses charted in Figure 1.2 comes primarily from increased exposure, especially in urbanizing regions (8). Exposure can be lowered by judicious choice of building sites and careful land-use planning, but only to a limited extent: in seismically active regions, all sites face significant hazard. Earthquake risk reduction must thus rely on lowering vulnerability through earthquake-resilient design of new structures and retrofitting or rehabilitating inadequate older structures to improve their seismic safety. Provisions for earthquake design are now an integral part of building codes in most seismically active parts of the United States, although some states with moderate earthquake risk do not have state seismic codes (see Chapter 2). Code improvements and related problems of implementation continue to require scientific guidance to take into account regional and local variations in seismic hazards, as well as uncertainties and improvements in hazard estimates (9).
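The way hazard, exposure, and fragility combine into an expected annual loss can be made concrete with a small calculation. The sketch below is a simplified illustration of the idea, not the HAZUS procedure: it assumes a hypothetical hazard curve reduced to a few discrete shaking levels with annual rates, a single exposed value, and an assumed mean damage ratio (fraction of value lost) at each level. All numbers are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class ShakingLevel:
    """One discrete shaking level on a hypothetical hazard curve."""
    annual_rate: float   # expected occurrences per year (hazard)
    damage_ratio: float  # assumed mean fraction of value lost (fragility)


def annualized_loss(levels: list[ShakingLevel], exposed_value: float) -> float:
    """Expected loss per year: sum of rate * damage ratio * exposure over levels."""
    return sum(l.annual_rate * l.damage_ratio * exposed_value for l in levels)


# Illustrative values only (assumed, not taken from the report).
levels = [
    ShakingLevel(annual_rate=0.02, damage_ratio=0.01),    # moderate shaking
    ShakingLevel(annual_rate=0.004, damage_ratio=0.10),   # strong shaking
    ShakingLevel(annual_rate=0.0004, damage_ratio=0.50),  # severe shaking
]
value = 1_000_000_000  # exposure: $1 billion of buildings in one region

print(f"annualized loss: ${annualized_loss(levels, value):,.0f} per year")
```

Because the result is linear in the exposed value, doubling exposure with the same hazard and fragility doubles the expected loss, which is the mechanism behind loss growth in urbanizing regions; lowering the damage ratios (better design or retrofitting) reduces it in the same proportion.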

The fundamental question of how much to invest in seismic safety has become a pressing problem for the national economy. Several hundred billion dollars are currently spent per annum on new construction in seismically active areas of the United States, and about 1 percent of this overall investment is associated with seismic reinforcement (10). Seismic retrofitting is much more expensive; the costs for unreinforced masonry buildings are typically about 20 percent of the cost of new construction, and the values for other building types are comparably high. An alarming example can be found in Los Angeles County, where a recent study showed that more than half of the hospitals are vulnerable to collapse in a strong earthquake. The cost for upgrading these facilities to state-mandated levels of seismic safety has been estimated conservatively at $7 billion to $8 billion, which exceeds the entire assessed value of all hospital property in the county and comes at a time when nearly all hospitals are losing money (11). Resolving such economic and political issues is at least as difficult as resolving the engineering and science issues, and it illustrates the need for coordinated planning across all aspects of seismic safety. To facilitate planning, understandable earthquake information must be made available to a wide range of professionals, as well as people at large, who ultimately decide what level of public safety is acceptable.

FIGURE 1.4 Major cities (1 million to 7 million inhabitants, open triangles) and megacities (more than 8 million inhabitants, solid stars) in the year 2000, relative to the major earthquake belts (shaded regions). These cities, which house approximately 20 percent of the global population, tend to be concentrated in regions of high seismic risk. SOURCE: R. Bilham, Global fatalities in the past 2000 years: Prognosis for the next 30, in Reduction and Predictability of Natural Disasters, J. Rundle, F. Klein, and D. Turcotte, eds., Santa Fe Institute Studies in the Sciences of Complexity, vol. 25, Addison-Wesley, Boston, Mass., pp. 19-31, 1995. Copyright 1995 by Westview Press. Reprinted with permission of Westview Press, a member of Perseus Books, L.L.C.

Requirements for a built environment that can withstand seismic shaking have motivated much research on specialized construction materials and advanced engineering methods. As these efforts have matured, engineers have begun to employ more detailed characterization of strong ground motions in structural design and testing. In the process, the coupling between earthquake engineering and science has been strengthened. Engineers are now interested in going beyond the basic life-safety requirement of preventing structural collapse. Demand is growing for techniques to design buildings that can retain specified levels of functionality after earthquake shaking of a specified probability of occurrence (see Chapter 3). The success of “performance-based engineering” will depend on two related developments: (1) the formulation of new, more diagnostic measures of structural damage (primarily an engineering task) and (2) more sophisticated treatments of ground shaking, including parameterizations of seismograms that are better suited to the probabilistic prediction of damage states than peak ground acceleration and other classical intensity measures (primarily a seismological task). Thus, collaboration between the earthquake science and engineering communities is essential.
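Probabilistic prediction of damage states is often expressed through a fragility curve: the probability that a structure reaches or exceeds a damage state, given a ground-motion intensity measure. A widely used form, assumed here, is the lognormal fragility curve P(DS ≥ ds | IM = x) = Φ(ln(x/θ)/β), with median capacity θ and logarithmic standard deviation β. In this minimal sketch, peak ground acceleration serves as the intensity measure purely for illustration (the text's point is that better-suited measures may replace it), and the parameter values are hypothetical.

```python
import math


def lognormal_fragility(im: float, median: float, beta: float) -> float:
    """Probability of reaching or exceeding a damage state at intensity im.

    Uses the standard normal CDF Phi(z) = 0.5 * (1 + erf(z / sqrt(2))).
    """
    if im <= 0:
        return 0.0
    z = math.log(im / median) / beta
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


# Hypothetical "collapse" fragility: median capacity 1.2 g, beta 0.6.
for pga_g in (0.2, 0.6, 1.2, 2.0):
    p = lognormal_fragility(pga_g, median=1.2, beta=0.6)
    print(f"PGA {pga_g:.1f} g -> P(collapse) = {p:.3f}")
```

Swapping in a different intensity measure changes only the input and the fitted θ and β; the structure of the calculation stays the same, which is why the choice of a more diagnostic ground-motion parameter matters so much.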

1.2 SEISMIC INFORMATION FOR EMERGENCY RESPONSE

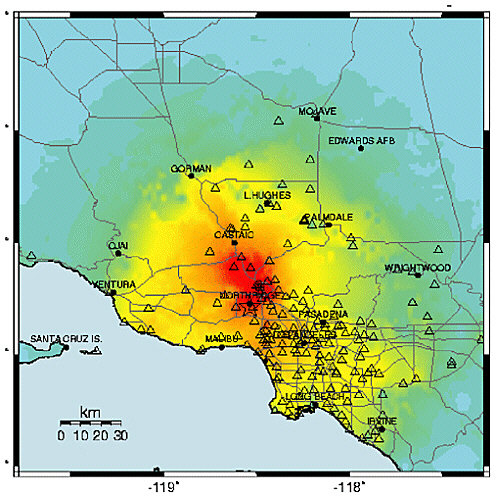

Science and technology can do nothing to prevent or control large earthquakes, and as yet no known method can reliably predict when, where, and how big future tremors will be. However, once an earthquake occurs, advanced seismic information systems can transmit signals from dense networks of seismometers to central processing facilities and, in a fraction of a minute, pinpoint the initial fault rupture (hypocenter) and determine other diagnostic features (e.g., fault orientation). If equipped with strong-motion sensors that accurately record the most violent shaking (when velocity reaches a meter per second and acceleration sometimes exceeds gravity), these automated systems can also deliver accurate maps in nearly real time of where the ground shaking was strong enough to cause significant damage (Figure 1.5). This information about ground shaking can be incorporated into earthquake loss estimation programs such as HAZUS, which provide assessments of potential damage (12). Such information can be crucial in helping emergency managers and other officials deploy equipment and personnel as quickly as possible to save people trapped in rubble and to reduce further property losses from fires and other secondary effects. Bulletins about the magnitude and boundaries of the shaking can also be channeled through the mass media, reducing public confusion during disasters and allaying fears aroused by minor tremors.

FIGURE 1.5 A map of the shaking intensity for the 1994 Northridge earthquake derived from peak ground accelerations measured by strong-motion instruments (triangles) in the Los Angeles area. In regions with advanced seismic information systems, maps such as this can be broadcast to emergency management agencies within a few minutes, providing critical information for organizing emergency response. SOURCE: U.S. Geological Survey.
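The automated location step can be illustrated with a deliberately simplified sketch. It assumes a uniform medium with a constant P-wave speed (6 km/s, an assumed value), a source at the surface (so it finds the epicenter rather than the full hypocenter), stations on a flat local grid in kilometers, and synthetic arrival times. For each trial epicenter on a grid it fits the best origin time and keeps the location with the smallest root-mean-square residual. Operational networks use layered or three-dimensional velocity models and iterative inversions instead.

```python
import math

VP_KM_S = 6.0  # assumed constant P-wave speed


def locate_epicenter(stations, arrivals, extent=100.0, step=1.0):
    """Grid-search epicenter location in a uniform medium.

    stations: list of (x_km, y_km); arrivals: P arrival times in seconds.
    Returns (x, y, origin_time, rms_residual) for the best grid node.
    """
    best = None
    n = int(2 * extent / step) + 1
    for i in range(n):
        x = -extent + i * step
        for j in range(n):
            y = -extent + j * step
            travel = [math.hypot(x - sx, y - sy) / VP_KM_S for sx, sy in stations]
            # For a fixed location, the least-squares origin time is the mean offset.
            t0 = sum(a - t for a, t in zip(arrivals, travel)) / len(arrivals)
            rms = math.sqrt(
                sum((a - t0 - t) ** 2 for a, t in zip(arrivals, travel)) / len(arrivals)
            )
            if best is None or rms < best[3]:
                best = (x, y, t0, rms)
    return best


# Synthetic test: event at (12, -30) km with origin time 5.0 s.
stations = [(0, 0), (50, 10), (-40, 20), (10, -70), (-20, -50)]
true_x, true_y, true_t0 = 12.0, -30.0, 5.0
arrivals = [true_t0 + math.hypot(true_x - sx, true_y - sy) / VP_KM_S for sx, sy in stations]
x, y, t0, rms = locate_epicenter(stations, arrivals)
print(f"epicenter ~ ({x:.0f}, {y:.0f}) km, origin time {t0:.2f} s, rms {rms:.3f} s")
```

A grid search is slow compared with linearized inversion, but it is easy to follow and never gets trapped in a local minimum, which is why it is a common teaching example.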

Seismic information systems with the capabilities described above are now operational or under development in high-risk areas such as California, the Pacific Northwest, and the intermountain West, but the delivery of rapid information following earthquakes poses many technological and scientific challenges. Experience in recording large earthquakes is still limited, and, with rare exceptions, areas of more moderate risk are served only by sparse seismographic networks with antiquated instrumentation and uneven capabilities for digital recording and processing. To remedy some of these deficiencies, the U.S. Geological Survey has begun to deploy the Advanced National Seismic System, which will improve regional networks, expand the distribution of strong-motion and building-response sensors in high-risk urban areas, and package and deliver earthquake information automatically (see Chapter 4). Research is needed in many aspects of post-event analysis, for example, assimilating data into strong-motion predictions, increasing the reliability of aftershock predictions, and identifying areas of enhanced short-term risk by developing models of how earthquakes transfer stress from one fault to another.
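Aftershock forecasts are often built on the modified Omori law, in which the aftershock rate decays with time t after the mainshock as n(t) = K/(t + c)^p. The sketch below, under that assumption, integrates the rate over a future window and converts the expected count into a Poisson probability of at least one aftershock. K, c, and p are hypothetical values; in practice they are fit to the ongoing sequence or taken from regional generic models, and a magnitude dependence (as in the Reasenberg and Jones formulation) would be added.

```python
import math


def omori_expected_count(k: float, c: float, p: float, t1: float, t2: float) -> float:
    """Expected number of aftershocks between t1 and t2 (days after the mainshock),
    obtained by integrating the modified Omori rate K / (t + c)**p."""
    if t2 <= t1:
        return 0.0
    if math.isclose(p, 1.0):
        return k * math.log((t2 + c) / (t1 + c))
    return k / (1.0 - p) * ((t2 + c) ** (1.0 - p) - (t1 + c) ** (1.0 - p))


def prob_at_least_one(expected: float) -> float:
    """Poisson probability of one or more events, given an expected count."""
    return 1.0 - math.exp(-expected)


# Hypothetical parameters for aftershocks above some magnitude threshold.
K, C, P = 20.0, 0.05, 1.1
for start, end in [(1, 2), (1, 8), (30, 60)]:
    n = omori_expected_count(K, C, P, start, end)
    print(f"days {start}-{end}: expected {n:.2f}, P(>=1) = {prob_at_least_one(n):.2f}")
```

The rapid decay means that most of the short-term risk is concentrated in the first days, which is why timely post-event updates matter.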

With appropriate technology, seismic information systems can be used as earthquake warning systems. Because electronic signals travel much faster than seismic disturbances, it is possible to notify regions away from the epicenter that an earthquake is in progress before any damaging waves arrive. Suboceanic earthquakes sometimes generate tsunamis (sea waves) that can inundate shorelines thousands of kilometers from the source. For example, the great 1964 Alaska earthquake generated tsunamis that killed 17 people along the Oregon-California coast, and tsunamis generated by the 1960 Chile earthquake killed 61 people in Hawaii and 122 people in Japan. These waves travel relatively slowly (500 to 700 kilometers per hour), so post-event predictions of tsunami arrival times and amplitudes can be used to warn coastal communities soon enough to allow for preparation and evacuation. Agencies such as the National Oceanic and Atmospheric Administration operate tsunami-warning networks that depend on precise seismic information to function properly (13). However, how tsunamis are generated by suboceanic earthquakes and how they run up along coastlines are still poorly understood.
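The tsunami speeds quoted above follow from the shallow-water (long-wave) approximation, in which a tsunami travels at c = √(g h) over ocean depth h; depths of roughly 2 to 4 kilometers give about 500 to 700 kilometers per hour. A minimal sketch, assuming a single uniform depth along the whole path (real forecasts integrate over variable bathymetry), converts depth to speed and estimates an arrival time for a hypothetical 10,000-kilometer path.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def tsunami_speed_kmh(depth_m: float) -> float:
    """Long-wave (shallow-water) speed sqrt(g*h), converted from m/s to km/h."""
    return math.sqrt(G * depth_m) * 3.6


def arrival_hours(distance_km: float, depth_m: float) -> float:
    """Travel time over a path of uniform depth (a simplifying assumption)."""
    return distance_km / tsunami_speed_kmh(depth_m)


for depth in (2000, 3000, 4000):
    print(f"depth {depth} m: {tsunami_speed_kmh(depth):.0f} km/h, "
          f"10,000 km in {arrival_hours(10_000, depth):.1f} h")
```

Travel times of many hours across an ocean basin are what make tsunami warnings practical, in contrast to warnings for seismic waves.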

Advance warnings of strong motions caused by the fast-moving (2 to 8 kilometers per second) ground waves are more problematic than for tsunamis, because there is so little time for an event to be evaluated and an advisory broadcast through civil defense or other warning systems. The damage zones of large earthquakes usually have radii of 200 kilometers or less, and it takes only 60 to 100 seconds for the most damaging waves (shear and surface waves) to propagate to this distance. Nevertheless, the time is adequate to issue electronic warnings that can initiate emergency shutdowns and other protective actions within power generation, transportation, and computer systems, provided that decisions can be automated reliably (see Chapter 3). Implementing this capability will require seismic information systems that are robust to regional disruptions in power and communications, methods for making (and aborting) decisions under stressful conditions, and research on the basic problems of rupture dynamics, wave propagation, and site response.
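The available warning time is essentially the gap between the moment an alert can be issued and the arrival of the damaging shear waves at a site. This sketch assumes constant wave speeds (6.0 km/s for P and 3.5 km/s for S, typical crustal values taken here as assumptions), straight paths measured from the epicenter, and a hypothetical station spacing and processing delay; it ignores source depth and rupture length, both of which change real warning times. The alert is assumed to go out once the P wave reaches the nearest station and processing finishes.

```python
VP_KM_S = 6.0  # assumed P-wave speed
VS_KM_S = 3.5  # assumed S-wave speed


def warning_time_s(site_km: float, nearest_station_km: float = 10.0,
                   processing_s: float = 4.0) -> float:
    """Seconds between an automated alert and S-wave arrival at a site.

    Negative values mean the site lies in the 'blind zone', where strong
    shaking arrives before any warning can be issued.
    """
    alert_time = nearest_station_km / VP_KM_S + processing_s
    s_arrival = site_km / VS_KM_S
    return s_arrival - alert_time


for d in (10, 20, 50, 100, 200):
    print(f"{d:>4} km: {warning_time_s(d):6.1f} s")
```

Under these assumptions, sites within about 20 kilometers get no warning, while sites near the edge of a 200-kilometer damage zone get roughly 50 seconds, enough for automated shutdowns but not for deliberation.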

1.3 BASIC GEOSCIENCE

In its broadest sense, the science of earthquakes seeks a comprehensive, physics-based understanding of seismic behavior and associated geological phenomena. While plate tectonics has provided a simple and precise kinematic framework for relating individual earthquakes to geological deformations on a global scale (see Chapter 2), its extension to a fully dynamic theory of Earth deformation remains a work in progress. Among the fundamental questions that still lack satisfactory answers are: Why do crustal strains on the Earth tend to localize on major faults, rather than be distributed over broad zones of more continuous deformation, as has been inferred for the surface of Venus? How does brittle crustal deformation couple to the ductile motion of the convecting solid mantle? What physical mechanisms explain the large earthquakes that occur in the descending limbs of mantle convection down to depths of nearly 700 kilometers? How predictable are large earthquakes? Such questions connect the study of earthquakes to many basic aspects of solid-Earth research.

Correspondingly, many disciplines—seismology, tectonic geodesy, earthquake geology, rock mechanics, complex systems theory, and information technology—are producing the conceptual innovations needed for the practical issues of seismic hazard analysis, risk reduction, and rapid emergency response. Fundamental work on elastic wave scattering in fractal media has been applied to prediction of the strong ground motions that damage buildings. The ultraprecise techniques of space geodesy have measured the deformation across the broad zone of faulting that extends from the Wasatch Range in Utah to the Pacific coast, improving constraints on probabilistic earthquake forecasting. The need to predict where earthquakes will occur has inspired novel techniques for deciphering where and when earthquakes have occurred in prehistory. This practical focus has provided new opportunities for basic geological research in the youthful fields of paleoseismology and neotectonics.

Other applications of earthquake science include the study of volcanic seismicity, which has led to improvements in predicting volcanic eruptions, and the detection of nuclear explosions, a strategic capability for the verification of international arms control treaties. The coupling of seismological and archaeological studies in the Middle East has shed light on the role of earthquakes in the rise and fall of ancient urban centers and political systems. Laboratory studies of fault friction and earthquake fracture mechanics have led to constitutive relations and dynamical models that have found applications in materials engineering. The power-law scaling relations between earthquake frequency and size, combined with the recognition that nearly all of the Earth’s crust may be close to its critical state of failure, have stimulated theories of nonlinear system dynamics (14).
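The power-law scaling between earthquake frequency and size is usually written as the Gutenberg-Richter relation, log10 N(≥M) = a − bM, with b close to 1 in most regions. A common way to estimate b from a catalog is Aki's (1965) maximum-likelihood formula, b = log10(e) / (M̄ − Mc), where M̄ is the mean magnitude of events at or above the completeness magnitude Mc. The sketch below applies it to a synthetic catalog drawn with an assumed b of 1.0, treating magnitudes as continuous (binned real catalogs need a half-bin correction to Mc).

```python
import math
import random


def aki_b_value(mags: list[float], mc: float) -> float:
    """Maximum-likelihood b-value (Aki, 1965) for continuous magnitudes >= mc."""
    above = [m for m in mags if m >= mc]
    mean_excess = sum(above) / len(above) - mc
    return math.log10(math.e) / mean_excess


# Synthetic catalog: under Gutenberg-Richter, magnitudes above Mc are
# exponentially distributed with rate b * ln(10).
rng = random.Random(42)
TRUE_B, MC = 1.0, 2.0
catalog = [MC + rng.expovariate(TRUE_B * math.log(10)) for _ in range(20_000)]

b_hat = aki_b_value(catalog, MC)
print(f"estimated b = {b_hat:.3f}")

# With b = 1, each unit increase in magnitude means about ten times fewer events.
n_ge_3 = sum(m >= 3.0 for m in catalog)
n_ge_4 = sum(m >= 4.0 for m in catalog)
print(f"N(M>=3) = {n_ge_3}, N(M>=4) = {n_ge_4}")
```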

The fundamental interactions that govern active faulting are distributed over an enormous range of spatial and temporal scales—sequences of great earthquakes on thousand-kilometer faults over hundreds of years are coupled dynamically to deformation processes operating in milliseconds over millimeters. These processes, difficult to study directly in the field, can be replicated in the laboratory only at the lower end of this range. Sampling and in situ observation by trenching, tunneling, and drilling are confined to the outer few kilometers. Understanding the physical processes at the much greater depths where earthquakes typically nucleate depends on the ability to construct models that combine surface observations with seismic imaging and other remote-sensing data.

Earthquakes in nearly all tectonic environments share similar scaling laws (e.g., frequency-magnitude statistics, aftershock decay rates, stress drops), which suggests that some of the most basic aspects of earthquake behavior are universal and not sensitive to the details of deformation processes. However, differing theories have sparked considerable controversy about how small-scale processes such as rock damage and fluid flow are involved in active faulting. A comprehensive theory must integrate earthquake behavior across all dimensions of the problem. This challenge is a primary motivation for the National Science Foundation’s ambitious EarthScope initiative, which will employ four new technologies for observing active deformation in the United States over a wide range of scales (15).
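Stress drop, one of the scaling quantities listed above, links an event's seismic moment to its rupture size. A common simplification, assumed here, treats the rupture as a circular crack of radius r, for which Δσ = 7M0/(16r³), and recovers the seismic moment M0 (in newton-meters) from moment magnitude through Mw = (2/3)(log10 M0 − 9.1). The radii below are hypothetical and chosen to grow tenfold for every two magnitude units, so the sketch reproduces a constant stress drop, an illustration of the self-similar scaling described in the text.

```python
def seismic_moment_nm(mw: float) -> float:
    """Seismic moment in N*m from moment magnitude, Mw = (2/3)(log10 M0 - 9.1)."""
    return 10 ** (1.5 * mw + 9.1)


def circular_crack_stress_drop_mpa(m0_nm: float, radius_m: float) -> float:
    """Static stress drop for a circular crack, 7*M0 / (16*r^3), in MPa."""
    return 7.0 * m0_nm / (16.0 * radius_m ** 3) / 1e6


# Hypothetical rupture radii that grow tenfold per two magnitude units.
for mw, radius_km in [(4.0, 0.5), (6.0, 5.0)]:
    m0 = seismic_moment_nm(mw)
    ds = circular_crack_stress_drop_mpa(m0, radius_km * 1000)
    print(f"Mw {mw}: M0 = {m0:.2e} N*m, r = {radius_km} km, stress drop = {ds:.1f} MPa")
```

Because M0 grows by a factor of 1000 per two magnitude units while r³ grows by the same factor, the stress drop stays the same; observed departures from this pattern are one way to test whether small- and large-scale processes differ.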

1.4 EDUCATION

Educating people about earthquakes can effectively reduce human and economic losses during seismic disasters. The pedagogical responsibilities of earthquake science are expanding as concerns about the vulnerability of the built environment increase. The issues encompass the delivery of earthquake information to the public; earthquake-oriented curricula in schools at all levels; the career focus of young researchers; and the transfer of knowledge to engineers, emergency managers, and government officials.

The Internet has greatly enhanced the capability for delivering a wide variety of earthquake information. Many public and private organizations maintain web sites that host a variety of earthquake information services. These sites display up-to-the-minute maps of earthquake epicenters and strong motions, describe seismic hazards and damage, offer tips to homeowners about retrofitting and insurance, and make available curricular material. Regional seismic networks and earthquake response organizations update their web sites regularly. The Hector Mine (California) earthquake of October 16, 1999, and the Nisqually (Washington) earthquake of February 28, 2001, were among the first U.S. “cyber-quakes” in the sense that the Internet became the dominant medium for exchanging data and posting results in the minutes and hours following the ruptures (16).

Information and communication technologies have irrevocably altered how scientists interact with the public in several subtle ways. New tools for digital representation and visualization are available to animate scientific descriptions of earthquakes and present research results in more attractive and intelligible formats. Renderings of numerical simulations now allow people to visualize more readily the complex physical processes on space-time scales too large or small, or places too remote, to be observed directly. Worldwide improvement in communication systems is feeding the public’s interest in global problems. When devastating earthquakes occurred in El Salvador and the Indian state of Gujarat in January 2001, coverage by U.S. broadcast and print media, as well as through the Internet, was quicker and more comprehensive than it had been for most previous, remote earthquakes.

Every effort should be made to educate the public that earthquakes are natural phenomena that cannot be controlled (17) but can be understood. The goal is to teach people enough about earthquake science for them to become smart users of information and know how to prepare for and react to seismic events. Earthquakes furnish compelling examples of physics and mathematics in real-world action, and research on earthquakes illustrates the process of empirical inquiry and the scientific method in many interesting ways. The silver lining of seismic disruption is perhaps the “teachable moment” when a major earthquake captures public attention and the media are filled with technical explanations. Properly prepared students can learn a lot about natural science from these intense periods of reporting.

The content and methods for teaching earthquake science in primary and secondary schools remain pressing subjects that should engage more scientists involved in basic research. National science education standards recommend that Earth science be taught at all grade levels (18). For example, students should learn about fossils and rock and soil properties in kindergarten through fourth grade; geologic history and the structure of the Earth in grades 5 through 8; and the origin and evolution of the Earth system in grades 9 through 12. A number of states have adopted these standards, which provide many opportunities for giving students a better understanding of how the Earth works and how its internal forces are acting to shape their environment.

Researchers tend to be more concerned with higher education. Many four-year colleges and universities offer coursework in Earth science that satisfies the general science requirements for nonmajors. Most of these classes include material on earthquake processes and active tectonics, and they can be quite popular. However, fewer college students are majoring in Earth science (19). The baccalaureate in this field is rarely an adequate degree, and its status as a pre-professional degree (e.g., premedical or prelaw) has never been high, except as preparation for graduate studies in Earth science (20). Graduate education, especially at the Ph.D. level, is research oriented and therefore sustained by the migration of young geoscientists into teaching careers at research universities. Earth science needs a healthy program of earthquake research to attract the best candidates.

1.5 PREDICTIVE UNDERSTANDING

The main goal of earthquake research is to learn how to predict the behavior of earthquake systems. This goal drives the science for two distinct reasons. First, society has a compelling strategic need to anticipate earthquake devastation, which is becoming more costly as large urban centers expand in tectonically active regions. In this context, prediction has come to mean the accurate forecasting of the time, place, and size of specific large earthquakes, ideally in a short enough time to allow nearby communities to prepare for a calamity. Three decades ago, many geoscientists thought this type of short-term, event-specific earthquake prediction was right around the corner. However, proposed schemes for short-term earthquake prediction have not proven successful. In particular, no unambiguous signals precursory to large earthquakes have been identified, even in areas such as Japan and California where monitoring capability has improved substantially. Although a categorical statement about the theoretical infeasibility of event-specific prediction appears to be premature, few seismologists remain optimistic that short-term, event-specific prediction will be feasible in the foreseeable future.

It is true, nevertheless, that many aspects of earthquake behavior can be anticipated with enough precision to be useful in mitigating risk. The long-term potential of near-surface faults to cause future large earthquakes can be assessed by combining geological field studies of previous slippage with seismic and geodetic monitoring of current activity. Seismologists are learning how geological complexity controls the strong ground motion during large earthquakes, and engineers are learning how to predict the effects of seismic waves on buildings, lifelines, and critical facilities such as large bridges and nuclear power plants. Together they have quantified the long-term expectations for potentially destructive shaking in the form of seismic hazard maps, and they are striving to improve these forecasts by understanding how past earthquakes influence the likelihood of future events. Consideration of slip models along faults is now leading to predictions of site-specific ground motions, needed by engineers for the design of large urban structures that can withstand seismic shaking.
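The simplest way to turn geological and geodetic observations into a long-term forecast, sketched here under strong assumptions, is to divide the average slip per large earthquake (from paleoseismic studies) by the long-term slip rate (from geology or geodesy) to obtain a mean recurrence interval, and then to treat events as a time-independent Poisson process. Both fault parameters below are hypothetical. Many real forecasts instead use time-dependent renewal models that account for the time since the last event, which is one way past earthquakes influence the likelihood of future ones.

```python
import math


def recurrence_interval_yr(slip_per_event_m: float, slip_rate_mm_yr: float) -> float:
    """Mean recurrence interval = slip released per event / long-term slip rate."""
    return slip_per_event_m * 1000.0 / slip_rate_mm_yr


def poisson_probability(window_yr: float, recurrence_yr: float) -> float:
    """Probability of at least one event in the window for a Poisson process."""
    return 1.0 - math.exp(-window_yr / recurrence_yr)


def return_period_yr(prob: float, window_yr: float) -> float:
    """Return period implied by an exceedance probability over a time window."""
    return -window_yr / math.log(1.0 - prob)


# Hypothetical fault: 4 m of slip per large event, 20 mm/yr long-term slip rate.
tau = recurrence_interval_yr(4.0, 20.0)
print(f"recurrence ~ {tau:.0f} yr; 30-yr probability ~ {poisson_probability(30, tau):.2f}")

# Hazard maps often show shaking with a 2% chance of exceedance in 50 years.
print(f"2% in 50 yr ~ {return_period_yr(0.02, 50):.0f}-yr return period")
```

The same Poisson conversion links the probability levels printed on hazard maps to return periods, so a map for 2 percent in 50 years corresponds to shaking expected about once every 2475 years at a site.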

The second reason for seeking a predictive understanding is epistemological and generic to fundamental research on a variety of geosystems, be they localized—like volcanoes, petroleum reservoirs, and groundwater systems—or global—like the oceans and atmosphere. These geosystems are so complex and their underlying physical and chemical processes are so difficult to characterize that the traditional reductionist approach, based on the elucidation of fundamental laws, is incomplete as an investigative method. In the face of such complexity, the ability to routinely extrapolate observable behavior becomes an essential measure of how well a system is understood. Model-based prediction plays an integral role in this type of empiricism as the first step in an iterated cycle of data gathering and analysis, hypothesis testing, and model improvement.

1.6 ORGANIZATION OF THE REPORT

This report summarizes progress in earthquake science and assesses the opportunities for advancement. Although not intended to be exhaustive, the report documents major scientific achievements through extensive references and technical notes. Chapter 2 charts the rise of earthquake science and engineering, introducing the technical concepts and terminology in their historical context. Chapter 3 gives the current view of seismic hazards on a national and global scale and shows how an improved characterization of these hazards can reduce earthquake losses by lessening risk and speeding response. Chapter 4 describes the observational activities and research methods in four major disciplines (seismology, tectonic geodesy, earthquake geology, and rock mechanics) and discusses the advanced technologies being employed to gather new information on the detailed workings of active fault systems. The integration of this information into a predictive, physics-based framework is the subject of Chapter 5, which lays out key scientific questions in five areas of interdisciplinary research: fault systems, fault-zone processes, rupture dynamics, wave propagation, and seismic hazard analysis. Chapter 6 examines the goals of earthquake research over the next decade and the resources and technological investments needed to achieve these goals in nine major areas of interest. Federal programs supporting earthquake research and engineering are summarized in Appendix A. Finally, a list of acronyms is given in Appendix B.

NOTES

the Japanese government and private corporations would need to sell foreign assets and/or issue new securities [which] would upset the supply and demand balance at the bond markets and push up long-term interest rates.” For further comments on indirect losses, see T. Uchida (Thoughts about the great Tokyo Earthquake, By the Way, May/June 1995).

7. R. Bilham, Global fatalities in the past 2000 years: Prognosis for the next 30, in Reduction and Predictability of Natural Disasters, J. Rundle, F. Klein, and D. Turcotte, eds., Santa Fe Institute Studies in the Sciences of Complexity, vol. 25, Addison-Wesley, Boston, Mass., pp. 19-31, 1995. Over the past 400 years, the annualized global mean fatality rate from earthquakes has fallen by more than an order of magnitude, from 10 to 100 per million before 1600 to about 1 per million during the last half of the twentieth century. Yet Bilham raises the specter of more than 1 million fatalities from a single event if a major earthquake occurs near one of the dozens of supercities in developing countries within seismically active zones.

8. Increases in economic exposure are not limited to urban centers, however. The moderate (M 5.2) Yountville earthquake of September 3, 2000, in a rural area 10 miles northwest of Napa, California, caused a direct loss of at least $50 million; 25 people were injured, 2 of them critically, and building inspectors red-tagged 16 buildings and yellow-tagged 168 others. The governor declared a state of emergency and obtained federal disaster relief funds (<http://quake.wr.usgs.gov/recent/reports/napa>).

9. California Seismic Safety Commission, California at Risk, 1994 Status Report, SSC 94-01, Sacramento, Calif., p. 1, 1994.

10. Current Construction Reports, Value of Construction Put in Place, C30/01-5, U.S. Department of Commerce, U.S. Census Bureau, Washington, D.C., 21 pp., 2001; Office of Technology Assessment, Reducing Earthquake Losses, OTA-ETI-623, U.S. Government Printing Office, Washington, D.C., 162 pp., 1995.

11. Summary of Hospital Performance Ratings, Office of Statewide Health Planning and Development, Sacramento, Calif., 20 pp., March 2001; Study Finds High Quake Risk for Hospitals, Los Angeles Times, March 29, 2001.

12. HAZUS is a public-domain software package and database that estimates losses from ground motions. (See Chapter 3, Section 3.4.) HAZUS was run by the Federal Emergency Management Agency immediately following the 2001 Nisqually, Washington, earthquake and played a role in the decision for a Presidential Disaster Declaration (FEMA Disaster Federal Register Notice 1361, March 2001, <http://www.appl.fema.gov/library/dfrn/2001/d1361in.htm>).

13. Earthquake information is provided by seismic stations operated by tsunami warning centers, the U.S. Geological Survey National Earthquake Information Center, and international sources. If the location and magnitude of an earthquake meet the known criteria for generating a tsunami, a warning is issued to emergency managers and the general public. The warning includes predicted tsunami arrival times at selected coastal communities within the geographic area defined by the maximum distance the tsunami could travel in a few hours. See <http://www.geophys.washington.edu/tsunami/general/warning/warning.html>.

14. P. Bak, How Nature Works: The Science of Self-Organized Criticality, Springer-Verlag, New York, 212 pp., 1996; J.S. Langer, J.M. Carlson, C.R. Myers, and B.E. Shaw, Slip complexity in dynamic models of earthquake faults, Proc. Natl. Acad. Sci., 93, 3825-3829, 1996; B.E. Shaw and J.R. Rice, Existence of continuum complexity in the elastodynamics of repeated fault ruptures, J. Geophys. Res., 105, 791-810, 2000.

15. The EarthScope program will deploy four technologies for observing the active tectonics and structure of the North American continent: (1) USArray, for high-resolution seismological imaging of the structure of the continental crust and upper mantle beneath the conterminous United States, Alaska, and adjacent regions; (2) San Andreas Fault Observatory at Depth (SAFOD), for probing and monitoring the San Andreas fault at seismogenic depths; (3) Plate Boundary Observatory (PBO), for measuring deformations of the western United States using strainmeters and ultraprecise geodesy of the Global Positioning System; and (4) Interferometric Synthetic Aperture Radar (InSAR) Initiative, for using satellite-based InSAR to map surface deformations, especially the deformation fields associated with active faults and volcanoes (<http://www.earthscope.org>).

16. For example, see the web-based clearinghouse for the Nisqually earthquake set up by the University of Washington at <http://maximus.ce.washington.edu/~nisqually/>.

17. In the 1960s, studies of seismicity induced by reservoir loading (D. Simpson, W. Leith, and C. Scholz, Two types of reservoir-induced seismicity, Bull. Seis. Soc. Am., 78, 2025-2040, 1988) and injection of fluids into rock masses (J. Healy, W. Rubey, D. Griggs, and C.B. Raleigh, The Denver earthquakes, Colorado, USA, Science, 161, 1301-1310, 1968) stimulated speculations that similar methods might be adaptable to relieving tectonic stresses prior to large earthquakes. In 1969, a National Research Council report put forward the argument not only for earthquake prediction, but also for earthquake control: “Prevention or control of destructive earthquakes must rank as a major goal of seismology” (National Research Council, Seismology: Responsibilities and Requirements of a Growing Science, Part 1: Summary and Recommendations, National Academy Press, Washington, D.C., p. 15, 1969). This can-do attitude was quickly replaced by the recognition that the stresses and strains in the brittle crust are such complex functions of space and time and so poorly known that any program aimed at beneficial control would be expensive, ineffective, and potentially dangerous, especially in regions of high seismic activity.

18. National Research Council, National Science Education Standards, National Academy Press, Washington, D.C., 262 pp., 1996.

19. Over the past two decades, undergraduate enrollment in the geosciences has declined from a high of 36,893 in 1983 to 10,454 in 2000. See American Geological Institute’s survey of historical enrollment and degree information at <http://www.agiweb.org/career/enroll.html>.

20. According to the Bureau of Labor Statistics, a bachelor’s degree in geology or geophysics is adequate for some entry-level jobs, but more job opportunities and better jobs with good advancement potential usually require at least a master’s degree. See U.S. Department of Labor, Occupational Outlook Handbook, 2000-2001 edition, Bulletin 2520, <http://www.bls.gov/oco/ocos050.htm>.
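Note 12 describes HAZUS as software that estimates losses from ground motions. HAZUS itself is a large, calibrated methodology; the Python fragment below is only a minimal sketch of the general idea and is not its implementation. It assumes lognormal fragility curves, which give the probability that a structure reaches or exceeds a damage state at a given level of shaking. The damage-state names, median spectral accelerations, dispersion values, and loss ratios are hypothetical placeholders, not HAZUS parameters.

```python
import math

def normal_cdf(x: float) -> float:
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def exceedance(sa_g: float, median_g: float, beta: float) -> float:
    """P(damage >= state | shaking) for a lognormal fragility curve."""
    if sa_g <= 0.0:
        return 0.0
    return normal_cdf(math.log(sa_g / median_g) / beta)

# HYPOTHETICAL fragility parameters, ordered from least to most severe:
# (state name, median spectral acceleration in g, lognormal dispersion,
#  repair cost as a fraction of replacement value).
# Equal dispersions with increasing medians keep the curves from crossing,
# so the per-state probabilities computed below are never negative.
STATES = [
    ("slight", 0.2, 0.6, 0.02),
    ("moderate", 0.4, 0.6, 0.10),
    ("extensive", 0.8, 0.6, 0.50),
    ("complete", 1.5, 0.6, 1.00),
]

def expected_loss(sa_g: float, replacement_value: float, states=STATES) -> float:
    """Expected direct loss for one building at a given shaking level."""
    exceed = [exceedance(sa_g, median, beta) for _, median, beta, _ in states]
    exceed.append(0.0)  # nothing exceeds the most severe state
    loss_fraction = 0.0
    for i, (_, _, _, ratio) in enumerate(states):
        p_in_state = exceed[i] - exceed[i + 1]  # P(exactly this state)
        loss_fraction += p_in_state * ratio
    return loss_fraction * replacement_value

if __name__ == "__main__":
    for sa in (0.1, 0.4, 1.0):
        print(sa, round(expected_loss(sa, 1_000_000)))
```

Summing such building-level estimates over an inventory, for a map of shaking from a scenario or recorded earthquake, is the kind of aggregation that lets a regional loss estimate be produced quickly after an event.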
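Note 13 outlines the warning logic: screen an earthquake against criteria, then predict arrival times at coastal communities within the distance a tsunami could travel in a few hours. The Python sketch below illustrates that logic under simplifying assumptions. It uses the shallow-water (long-wave) speed c = sqrt(g h) over a single uniform ocean depth and great-circle distances on a spherical Earth. The screening thresholds (magnitude at least 7.0, focal depth at most 100 km), the 4000 m depth, and the 3-hour horizon are hypothetical values chosen for illustration. Operational warning centers apply their own criteria and account for actual bathymetry.

```python
import math
from dataclasses import dataclass

G = 9.81                 # gravitational acceleration, m/s^2
EARTH_RADIUS_KM = 6371.0  # mean Earth radius for a spherical approximation

@dataclass
class Quake:
    lat: float
    lon: float
    depth_km: float
    magnitude: float

def meets_criteria(q: Quake, min_mag: float = 7.0, max_depth_km: float = 100.0) -> bool:
    """HYPOTHETICAL screening rule: only large, shallow events qualify."""
    return q.magnitude >= min_mag and q.depth_km <= max_depth_km

def great_circle_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Haversine distance between two points on a spherical Earth."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))

def arrival_hours(distance_km: float, ocean_depth_m: float = 4000.0) -> float:
    """Travel time of a long (shallow-water) wave over a uniform depth."""
    speed_m_s = math.sqrt(G * ocean_depth_m)
    return distance_km * 1000.0 / speed_m_s / 3600.0

def warning(q: Quake, communities: dict, max_hours: float = 3.0) -> dict:
    """Predicted arrival times (hours) for communities reachable within max_hours."""
    if not meets_criteria(q):
        return {}
    result = {}
    for name, (lat, lon) in communities.items():
        t = arrival_hours(great_circle_km(q.lat, q.lon, lat, lon))
        if t <= max_hours:
            result[name] = round(t, 2)
    return result

if __name__ == "__main__":
    quake = Quake(lat=0.0, lon=0.0, depth_km=20.0, magnitude=8.0)
    print(warning(quake, {"near": (0.0, 1.0), "far": (0.0, 60.0)}))
    # prints {'near': 0.16}
```

With a 4000 m depth the assumed wave speed is about 198 m/s, roughly 710 km per hour. A 3-hour horizon therefore covers communities within about 2100 km of the source.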