2

Concepts, Methods, and Data Sources

“… the Coalition is committed to measuring its efforts and showing quantifiable progress towards its goals.”

Mobilizing Georgia, Immobilizing Cancer

Georgia Cancer Coalition, 2003

“Quality health care means doing the right thing, at the right time, in the right way, for the right person—and having the best possible results.”

Agency for Healthcare Research and Quality, 2001

The Institute of Medicine (IOM) Committee on Assessing Improvements in Cancer Care in Georgia began its work by developing a conceptual framework and approach for the selection of quality measures that could be used by states, Georgia in particular, to assess progress in improving the quality of cancer care and in reducing cancer-related morbidity and mortality. The committee assumed it would recommend a rather slim set of measures. Ultimately, as the state’s data collection and reporting system proves its workability and value to funding sources, the Georgia Cancer Coalition (GCC) can invest in expanding the scope and type of measures it employs. At a minimum, the state should regularly revisit the measure set to make adjustments. Oncology is a dynamic field of medicine; today’s indicators of quality care may become irrelevant in a few years.

The committee’s conceptual framework and approach to selecting quality-of-cancer-care measures for Georgia, including the expert panel process and other methods, are described in this chapter. The final section of the chapter identifies and discusses the strengths and weaknesses of key sources of data for the quality measures recommended in this report.

KEY CONCEPTS

The committee began by establishing some basic definitions and concepts. As discussed below, these included what constituted good quality health care, how to define quality measures, and what principles and criteria the committee should use to select quality measures for cancer care.

It is important to note that the concepts and methods used by the IOM committee were built on important foundational work by others—most notably Avedis Donabedian’s classic body of work on quality of care; IOM’s National Roundtable on Health Care Quality and subsequent IOM inquiries into quality of care, including the IOM National Cancer Policy Board’s research on the quality of cancer care; RAND Health’s groundbreaking work in developing indicators of quality health care and in documenting basic deficits in U.S. health care; and the developmental work of the Strategic Framework Board of the National Quality Forum (Donabedian, 1980; IOM, 1998, 1999, 2000a,b, 2001a,c; Asch et al., 2000; McGlynn, 2002, 2003a,b; McGlynn and Malin, 2002; McGlynn et al., 2003; NQF, 2003). Also important was the work of the Comprehensive Cancer Control Program of the Centers for Disease Control and Prevention (CDC) and the Outcomes Research Branch of the Cancer Control and Population Sciences Division of the National Cancer Institute.

What Is Good Quality Health Care?

The IOM committee defined good quality care as care that increases the likelihood of desired health outcomes and is consistent with current professional knowledge, a definition first put forth by IOM in 1990 (IOM, 1990). In other words, as articulated by the federal Agency for Healthcare Research and Quality, good quality care is “doing the right thing, at the right time, in the right way, for the right person—and having the best possible results” (AHRQ, 2001).

Several important concepts are implicit in the perspectives on health care quality adopted by the committee. One is that the roots of poor quality health care are systemic. Health care systems, along with many individual and institutional participants, determine the quality of care—and considering these systems and participants is essential to quality improvement. Moreover, some factors are beyond the control of health care providers. A person’s health is a result of many forces—genetic, environmental, behavioral factors, exposure to risk, and health history, as well as the person’s personal preferences regarding such things as the invasiveness of medical or

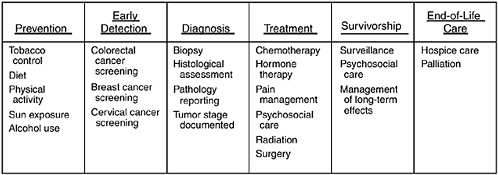

surgical procedures or desire for extreme life-saving procedures (Palmer, 1997). Furthermore, the quality of health care is multidimensional and continuous. As shown in Figure 2-1, “the cancer control continuum”—which includes the domains of prevention; early detection; diagnosis; treatment (delivering evidence-based treatment); minimizing pain and providing humane, end-of-life care; and maintaining the health of survivors—is a useful framework for assessing the impact of the GCC initiative or similar state-level cancer initiatives. This framework takes the broad view that an integrated and coordinated approach is key to reducing cancer incidence, morbidity, and mortality (Richard-Lee and Rochester, 2003). It also recognizes that for many patients, cancer is a life-altering, chronic illness with a prolonged course.

What Are Health Care Quality Measures?

This section reviews how the committee chose quality measures using a process which was the same for all four cancers considered in this report. The IOM committee adopted the classification of health care quality measures suggested by Donabedian’s framework (Donabedian, 1980) for measuring quality of care: (1) structural measures—the features of health care facilities, equipment, staffing, and organization of delivery of care that establish the capacity to provide good quality care; (2) process measures—what health care providers do to or for patients in both a technical and interpersonal way; and (3) outcome measures—what happens to patients, their health status, functional status, and quality of life that can be directly

FIGURE 2-1 Domains of the cancer control continuum with selected examples of activities in each domain.

SOURCE: Adapted from National Cancer Institute figure on the “Cancer Control Continuum”: http://cancercontrol.cancer.gov/od/continuum.html.

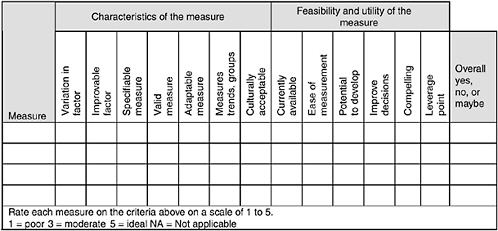

attributed to the health care they have received. The committee found it useful to think of measures in this way for at least two reasons: when possible, measures should deal with outcomes, that is, assess what good care actually achieves for patients; and thinking of measures in terms of structure, process, or outcomes focuses attention on the part of the health system that may require attention. But, as indicated in Figure 2-2, the committee evaluated measures as described in that figure and the accompanying text.

The committee also agreed with the classification, first suggested by the National Roundtable on Health Care Quality, that there are three types of quality problems in cancer care: too little care; too much care; and the wrong care (IOM, 1998). Too little care (“underuse”) is when patients do not receive evidence-based preventive care, diagnostic tests and procedures, treatment, or palliative care. Too much care (“overuse”) is when patients receive unnecessary diagnostic tests, medications, surgeries, or other health care services that may cause side effects or pose other health risks. The wrong care (“misuse”) is when diagnoses are missed or delayed, ineffective treatments are used, effective procedures are done poorly, or errors are made. This classification is useful to have in mind as a concept because it may bear on the feasibility or utility of a measure, but, as noted above, the committee focused on scoring as shown in Figure 2-2 and used the excellent work of Donabedian and the Roundtable only as background.

Finally, the committee took the view that patient-centeredness is a fundamental attribute of high-quality health care (IOM, 2001a). Thus, measuring patients’ perspectives, experiences, and preferences regarding the structure, process, and outcomes of cancer care is fundamental to measuring quality. This important attribute led the committee to discuss, and strongly urge, that Georgia survey patients, as described in Chapter 7. The committee often referred to patient-centeredness in evaluating potential measures, although clearly not every measure focuses on this attribute.

FIGURE 2-2 Sample scoring sheet used to evaluate potential quality measures.

KEY METHODS

Guiding Principles and Criteria Used to Select Quality Measures for Cancer Care

In deciding which quality-of-cancer-care measures to recommend, the committee adapted the guiding principles and selection criteria of the National Quality Forum’s Strategic Framework Board (McGlynn, 2002). Thus, the committee’s decisions about the selection of quality-of-cancer-care measures were guided by the following five general principles:

- Each measure should relate directly to a GCC strategic goal (Box 2-1).

- Each measure should have a clear and compelling use.

- Each measure should avoid imposing an undue burden on those providing data (with the understanding that important improvements to Georgia’s cancer information infrastructure may be necessary).

- Each measure should be actionable so that Georgia providers and other stakeholders can use the measure for making decisions or taking steps to improve the state’s cancer care.

- Each measure should help GCC lead the improvement of cancer care in Georgia.

As additional ideals to guide its decisions about whether to accept or reject specific quality-of-cancer-care measures, the committee considered each measure’s importance, scientific acceptability, and feasibility/utility (NQF, 2003). These criteria were part of the discussions and were reflected in the scoring sheet used to evaluate potential measures and in the one-page descriptions defined below and presented in Chapters 3-6:

- Importance. Candidate quality-of-cancer-care measures were considered important if, from the perspective of GCC, they represented one or more of the following: a significant leverage point for improving the quality of cancer care; an aspect of cancer care where current practice does not meet the best available, evidence-based standards of care; a standard of care that is inconsistently practiced throughout the state, varying by location of care, region of the state, socioeconomic factors, race and ethnicity, or other factors; and an area of cancer care that GCC could realistically act on and improve.

- Scientific acceptability. Candidate measures were judged to be scientifically acceptable if they were (1) precisely specified and described in a standard format including explicit specifications for the numerator and denominator (where applicable); (2) valid, that is, clearly able to reflect the concept being evaluated and to discern good from bad quality; and (3) adaptable, that is, useful in a variety of real-world circumstances where patient preferences often differ, clinical scenarios vary, and similar services are provided in different organizational settings.

- Feasibility/utility. Candidate quality-of-cancer-care measures had to be both feasible and usable to be selected by the IOM committee. A measure was judged to be feasible if it could be produced using data that are currently available or data that could be developed with reasonable improvements to Georgia’s cancer information infrastructure (e.g., by enhancing registry data or expanding or introducing new patient- or population-based surveys). A measure was judged to have utility if it had practical and compelling applications (e.g., as potential management tools to drive quality improvements). GCC and other users of the quality indicators would have to be able to use the measure to track statistical trends and group disparities and also to present findings that could be easily interpreted by key audiences, including the governor, state legislature, providers of cancer care services, patients, and the public.

BOX 2-1 GCC GOALS THAT ARE THE FOCUS OF THIS IOM STUDY

1. Prevent Cancer and Detect Existing Cancers Earlier. Reduce the number of deaths due to cancer through a focused cancer prevention and early detection effort; and provide education to and screen Georgians for cancer, emphasizing the cancers that are the major causes of death.

2. Improve Access to Quality Care for All Georgians with Cancer. Increase access to quality care and upgrade the availability of world-class medical care for Georgians with cancer through state-of-the-art technology and methods.

OTHER GCC GOALS

3. Save More Lives in the Future. Create a new leading body of knowledge and leading products that contribute to the ultimate eradication of cancer in Georgia and for humankind.

4. Train Future Cancer Researchers and Caregivers. Leverage the overall effort to benefit future generations by training the next wave of cancer researchers and caregivers.

5. Turn the Eradication of Cancer into Economic Growth. Create and enhance existing partnerships with pharmaceutical and biotechnology companies that will provide quality jobs to Georgians and environmentally clean additions to the economy.

SOURCE: GCC Strategic Plan, 2001.
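The scientific acceptability criterion above asks that each measure be precisely specified with an explicit numerator and denominator, and the utility criterion asks that it support tracking of group disparities. A minimal sketch of what such a specification could look like in code follows. The `MeasureSpec` class, the record fields (`age`, `screened`, `region`), and the example measure are all hypothetical illustrations and are not among the committee's recommended measures.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, List, Optional


@dataclass(frozen=True)
class MeasureSpec:
    """Hypothetical container for one precisely specified quality measure."""
    name: str
    in_denominator: Callable[[dict], bool]  # who is eligible for the measure
    in_numerator: Callable[[dict], bool]    # who received the indicated care

    def rate(self, records: Iterable[dict]) -> Optional[float]:
        """Return numerator / denominator, or None if nobody is eligible."""
        eligible = [r for r in records if self.in_denominator(r)]
        if not eligible:
            return None
        met = sum(1 for r in eligible if self.in_numerator(r))
        return met / len(eligible)


def rate_by_group(spec: MeasureSpec, records: Iterable[dict],
                  group_key: str) -> Dict[str, Optional[float]]:
    """Compute the measure separately for each value of group_key."""
    groups: Dict[str, List[dict]] = {}
    for r in records:
        groups.setdefault(r[group_key], []).append(r)
    return {g: spec.rate(rs) for g, rs in groups.items()}


# Illustrative (made-up) records and a made-up measure definition.
records = [
    {"age": 55, "screened": True, "region": "north"},
    {"age": 62, "screened": False, "region": "north"},
    {"age": 45, "screened": True, "region": "south"},   # outside age range
    {"age": 58, "screened": True, "region": "south"},
]
spec = MeasureSpec(
    name="hypothetical screening measure",
    in_denominator=lambda r: 50 <= r["age"] <= 69,
    in_numerator=lambda r: r["screened"],
)
overall = spec.rate(records)
by_region = rate_by_group(spec, records, "region")
print(overall, by_region)
```

Keeping the denominator and numerator rules as explicit, separate predicates makes the eligibility definition auditable and lets the same specification be reused across subgroups.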

Consideration of Levels of Evidence for Quality-of-Cancer-Care Measures

In addition to using the selection criteria just described, the IOM committee considered the strength of the evidence underlying the candidate quality measures when deciding on acceptance or rejection. The committee adopted the U.S. Preventive Services Task Force’s (USPSTF) three-level hierarchy of evidence (Box 2-2). The committee’s gold standard, referred to as Grade I evidence by USPSTF, is evidence from a properly conducted randomized controlled trial. Grade II refers to evidence from well-designed, nonrandomized controlled trials; well-designed cohort or case-control studies; or multiple time series. Grade III, the least reliable type of evidence, includes expert opinion, descriptive studies, and case reports. The strength of evidence varied among measures, but the committee assigned great importance to evidence-based measures, and this almost always meant at least some Grade I support.

BOX 2-2

The “hierarchy of evidence” applied to clinical research (e.g., examining whether a given treatment is effective in patients with a specific type of cancer) is well established and agreed upon. The following version is taken from the well-respected U.S. Preventive Services Task Force, proceeding from the most reliable to the least reliable type of evidence (i.e., from Grade I to Grade III):

Grade I Evidence: Evidence obtained from at least one properly randomized controlled trial.

Grade II Evidence: Evidence obtained from well-designed, nonrandomized controlled trials; well-designed cohort or case-control studies; or multiple time series.

Grade III Evidence: Opinions of respected authorities, based on clinical experience, descriptive studies and case reports, or reports of expert committees.

SOURCE: USPSTF, 1996.

Focus on Clinical Indicators of the Quality of Cancer Care

The IOM committee chose to focus primarily on potential measures of clinical quality, that is, measures useful in assessing the quality of preventive, diagnostic, or therapeutic patient care, because of earlier IOM reports that identified a “wide gulf” between what is known about cancer care and what is actually experienced by many Americans (IOM, 1999). A series of reports by IOM’s National Cancer Policy Board has found extensive evidence that the public cannot depend on receiving even the most basic elements of quality care, such as cancer prevention and early detection, appropriate diagnosis and treatment, palliative care, and follow-up of survivors (IOM, 2001c, 2003a,b).

Focus on Four Common Cancers in Adults

The IOM committee limited its review to potential quality measures that might be used to track progress in controlling the four most common cancers in Georgia and the United States—namely, breast, colorectal, lung, and prostate cancers. Together these cancers account for approximately 58 percent of cancer incidence and 53 percent of cancer-related mortality in Georgia (Table 2-1). In 2000, these four cancers contributed 18,194 of the state’s 31,591 new cases of cancer and 7,213 of the 13,628 cancer-related deaths (NCI and CDC, 2004; GDPH, 2004).
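The shares quoted above can be checked directly against the counts reported in Table 2-1. The short sketch below recomputes them; the variable and function names are illustrative only. It also suggests why the Subtotal row of Table 2-1 shows 52 percent for mortality while the text says 53 percent: the table value appears consistent with summing the already-rounded per-site percentages, whereas dividing the summed deaths by the all-sites total gives about 52.9 percent.

```python
# Counts for Georgia in 2000, copied from Table 2-1.
cases = {
    "Lung and bronchus": 5060,
    "Female breast": 4953,
    "Colorectal": 3452,
    "Prostate": 4729,
}
deaths = {
    "Lung and bronchus": 4143,
    "Female breast": 996,
    "Colorectal": 1293,
    "Prostate": 781,
}
ALL_SITE_CASES = 31591
ALL_SITE_DEATHS = 13628


def share(part, whole):
    """Return part as a percentage of whole."""
    return 100.0 * part / whole


four_site_cases = sum(cases.values())
four_site_deaths = sum(deaths.values())

# Shares computed from the summed counts.
incidence_share = share(four_site_cases, ALL_SITE_CASES)
mortality_share = share(four_site_deaths, ALL_SITE_DEATHS)

# Shares obtained by rounding each site first and then summing.
rounded_incidence_sum = sum(round(share(c, ALL_SITE_CASES)) for c in cases.values())
rounded_mortality_sum = sum(round(share(d, ALL_SITE_DEATHS)) for d in deaths.values())

print(f"Incidence: {four_site_cases} of {ALL_SITE_CASES} = {incidence_share:.1f}%")
print(f"Mortality: {four_site_deaths} of {ALL_SITE_DEATHS} = {mortality_share:.1f}%")
print(f"Sum of rounded site percentages: {rounded_incidence_sum}% and {rounded_mortality_sum}%")
```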

The overwhelming majority of cancers are diagnosed in adults, so the committee chose to focus exclusively on adult cancers. This focus on the most common cancers in adults is a pragmatic one. Almost 9 in 10 incident cancer cases are diagnosed among adults aged 45 and older (Ries et al., 2004). In fact, cancers of the breast, colon and rectum, lung, and prostate almost never occur in children. In Georgia, only an estimated 150 children are diagnosed with cancer each year (McNamara et al., 2002).

Expert Panel Process

A technique that is used widely to define the attributes of good quality health care and to review and select measures of health care quality is an expert panel process (Brook, 1994; Shekelle et al., 1998; Asch et al., 2000). The IOM Committee on Assessing Improvements in Cancer Care in Georgia was constituted so that the committee could function as an expert panel.

TABLE 2-1 Incidence and Mortality in Georgia from the Four Most Common Cancers, 2000

| Cancer site | Incidence: no. of cases/year | Incidence: percent of all sites | Mortality: no. of deaths/year | Mortality: percent of all sites |
|---|---|---|---|---|
| Lung and bronchus | 5,060 | 16 | 4,143 | 30 |
| Female breast | 4,953 | 16 | 996 | 7 |
| Colorectal | 3,452 | 11 | 1,293 | 9 |
| Prostate | 4,729 | 15 | 781 | 6 |
| Subtotal | 18,194 | 58 | 7,213 | 52 |
| All sites | 31,591 | 100 | 13,628 | 100 |

SOURCE: NCI and CDC, 2004; GDPH, 2004.

Members of the committee were recruited to ensure national-level leadership in the following disciplines:1

- Academic-based cancer care

- Cancer epidemiology

- Community cancer care

- Consumer and patient perspective

- Disparities in care

- Evaluation methods

- Health policy/health services research

- Management/academic cancer centers

- Medical informatics

- Oncology nursing

- Outcomes research

- Palliative care

- Prevention

- Primary care

- Quality measurement/improvement

- Radiation therapy

- State cancer control

- Tumor registries

In a series of six monthly sessions, the IOM committee held conference calls to individually review and vote on more than 80 candidate measures. The committee’s review process began with cancer prevention- and early detection-related quality measures, followed by diagnosis and treatment measures, and then palliative and end-of-life measures.

Before each review session, IOM staff sent committee members a one-page description of each potential quality measure. Each summary description included the following:2

- a one- or two-line description of the measure;

- the origin or source of the measure;

- capsule summaries of the consensus on care, including the level of evidence supporting the underlying process to be measured and what is known about the gap between the consensus on care and actual care delivery;

- the method for calculating the measure (including the numerator, denominator, population for whom the measure should be constructed, and comments);

- potential sources of data and performance benchmarks, including any known data limitations; and

- key references for the capsule summaries.

The staff also sent committee members a scoring tool to facilitate their evaluation of the potential quality measures (Figure 2-2). Committee members were asked to examine each summary and complete the scoring tools in advance of each review session. The scoring tool served as a decision aid and device for organizing the committee’s review—scores and numerical grades were tallied for discussion purposes only. The review process was iterative. During the first round of reviews, the committee discussed and voted “yes, no, or maybe” on each individual measure. “Maybe” measures were revisited in subsequent review sessions. “No” measures were discarded.
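The iterative yes/no/maybe review described above can be pictured as a simple triage loop. The sketch below is only an illustration of that flow: the names `Outcome`, `run_review_round`, and `review`, and the measure identifiers, are hypothetical, and the committee's decision for each measure is supplied as an input because the text does not say how individual votes were combined.

```python
from enum import Enum


class Outcome(Enum):
    YES = "yes"
    NO = "no"
    MAYBE = "maybe"


def run_review_round(candidates, decide):
    """Sort candidates into accepted, carried-over, and discarded lists.

    `decide` maps a measure to the committee's Outcome for this round.
    """
    accepted, carried_over, discarded = [], [], []
    for measure in candidates:
        outcome = decide(measure)
        if outcome is Outcome.YES:
            accepted.append(measure)
        elif outcome is Outcome.MAYBE:
            carried_over.append(measure)   # revisited in a later session
        else:
            discarded.append(measure)      # "no" measures are dropped
    return accepted, carried_over, discarded


def review(candidates, rounds):
    """Apply successive rounds; only 'maybe' measures move to the next round."""
    accepted, discarded = [], []
    pending = list(candidates)
    for decide in rounds:
        yes, pending, no = run_review_round(pending, decide)
        accepted.extend(yes)
        discarded.extend(no)
        if not pending:
            break
    return accepted, pending, discarded


# Made-up outcomes for three made-up measures over two sessions.
round1 = {"M1": Outcome.YES, "M2": Outcome.MAYBE, "M3": Outcome.NO}
round2 = {"M2": Outcome.YES}
accepted, pending, discarded = review(["M1", "M2", "M3"], [round1.get, round2.get])
print(accepted, pending, discarded)
```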

SOURCES OF QUALITY-OF-CANCER-CARE MEASURES CONSIDERED

The IOM committee drew its pool of candidate quality-of-cancer-care measures for Georgia from the cancer-related quality measures and clinical guidelines of more than 20 leading organizations—including the federal Agency for Healthcare Research and Quality’s newly released National Healthcare Quality Report, the U.S. Preventive Services Task Force, the Foundation for Accountability, the National Quality Forum, the National Committee for Quality Assurance, selected state cancer control programs, and RAND Health (Box 2-3). Descriptions of selected sources of cancer-related clinical guidelines and quality measures are presented in Appendix A, Sources of Cancer-Related Clinical Guidelines and Quality Indicators.

DATA FOR RECOMMENDED QUALITY-OF-CANCER-CARE MEASURES IN GEORGIA

The IOM committee strongly urges that Georgia make the necessary investment required to generate reliable data inputs into its quality-of-cancer-care information system. If the state’s cancer information system includes accurate, complete, and timely data, it will enable the state to identify where quality problems exist, to stimulate quality improvements, and to measure progress. The integrity of Georgia’s quality-of-cancer-care information system will depend on how the quality data inputs are defined and collected (Kahn et al., 2002). Methods must be uniform across multiple health care providers and settings throughout the state.

The data inputs to the state’s quality-of-cancer-care information system will originate in a variety of subsidiary information systems, including tumor registries; administrative claims databases (e.g., for Medicare beneficiaries and Medicaid enrollees); the medical records of hospitals, physician offices, and pathology laboratories; patient- and population-based surveys; state and national datasets; and linkages between registry data and other data sources on, for example, comorbidities and use of cancer-related services (IOM, 2000a; McGlynn, 2003b).

BOX 2-3

- Accreditation Organizations

- Federal Health Agencies

- Provider Groups and Professional Associations

- State Cancer Control Programs

- Others

As described in the discussion that follows, four types of data will be integral to Georgia’s quality monitoring system:

- cancer registries,

- medical records,

- administrative claims (Medicare claims in particular), and

- surveys.

Table 2-2 lists the potential data sources for selected quality-of-cancer-care measures recommended for Georgia. Table 2-3 summarizes the strengths and weaknesses of these critical data sources. Although it is beyond the scope of this report to provide more than this brief summary of these or other relevant data sources, additional information on potential data sources can be found elsewhere (see, for example, IOM, 2000a; Malin et al., 2002a; Howe et al., 2003; Clarke et al., 2003).

Cancer Registries

Cancer registries play a critical role in cancer surveillance and are also a vital resource for measuring the quality of cancer care (IOM, 2000a; Kahn et al., 2002; McGlynn, 2002; Malin et al., 2002b; Wingo et al., 2003). Population-based cancer registries maintain a complete enumeration of cancer cases in a specific geographic area, thereby providing the data that are integral to determining the risk of developing and dying from cancer in that area and to building an information base for studying the impact of cancer on important subgroups (Howe et al., 2003).

In Georgia, hospitals and outpatient facilities, including pathology laboratories, radiation therapy and medical oncology centers, and physicians’ offices, are required by law to report information on newly diagnosed cancer patients to the state’s population-based cancer registries (GCCR, 2003). These include (1) the Georgia Comprehensive Cancer Registry (GCCR), to which data on more than 60 percent of Georgia’s cancer cases are submitted; and (2) the Surveillance, Epidemiology, and End Results (SEER) registry, which covers an estimated 37.1 percent of Georgia’s population (35.6 percent in the five-county metropolitan Atlanta region and 1.5 percent in 10 rural counties with substantial African-American populations) (NCI, 2004b). These and several other cancer registries are discussed further below.

Georgia Comprehensive Cancer Registry

The GCCR is a unit of the Georgia Department of Human Resources, in the state’s Division of Public Health. The GCCR’s data collection is managed by the Georgia Center for Cancer Statistics, a research division of the Rollins School of Public Health of Emory University, under contract with the state. In addition to collecting, editing, and processing the GCCR data, the Georgia Center for Cancer Statistics manages Georgia’s other central population-based registry, Georgia’s SEER registry.

TABLE 2-2 Potential Sources of Data for Quality-of-Cancer-Care Measures and Benchmarks

TABLE 2-3 Strengths and Weaknesses of Key Sources of Data for Quality-of-Cancer-Care Measures and Benchmarks

| Data source | Purpose | Strengths | Weaknesses |
|---|---|---|---|
| Population-based tumor registries | To track new cases of cancer, analyze cancer incidence, and monitor cancer trends | Complete enumeration of incident cancer cases; detailed information on cancer stage, survival; data on hospital-based services; records can be linked with claims databases to provide a more complete picture of patient care | Relevant only to persons with confirmed cancers; data on ambulatory care may be incomplete; time lag can be 2 years; secondary treatment data less reliable than data on initial treatment; incomplete death ascertainment |
| SEER registries | To collect information on newly diagnosed cancers in nine U.S. regions, including metro Atlanta and 10 rural Georgia counties | High-quality data; detailed public use datasets are available; includes demographics, anatomic/histologic details, stage, diagnostic techniques, first course of treatment, stage-specific survival; 98 percent completeness in case ascertainment | Time lag greater than 2 years; in Georgia, not representative of state overall, and in United States, not representative of racial/ethnic makeup; treatment data are limited in scope; provides no information on quality of life; lacks staging data to correlate services with stage at diagnosis |
| Claims | To generate bills for health care services | Available in electronic format; some can be linked with registry data; data on discrete, billable services | Poor detail on patient diagnosis, comorbidities, unreimbursable services (e.g., provider advice on treatment options, bundled services); excludes persons without health insurance |

The GCCR meets and exceeds the highest standards—gold certification—of the North American Association of Central Cancer Registries (NAACCR) (GCC, 2003). It is also a participant in the National Program of Cancer Registries. GCC is to be lauded for its early attention to improving the GCCR. GCC’s investment in the GCCR is largely responsible for the registry’s current high level of performance. Currently, GCCR data are 97 percent complete—a dramatic improvement from the pre-GCC era of 75 percent. NAACCR gold certification requires that the GCCR identify at least 95 percent of its region’s cancer cases; record all required data within 23 months of the diagnosis year; and meet other NAACCR standards for internal consistency, timeliness, minimal duplication of records, minimal reports by death certificate only, and minimal missing data fields (NAACCR, 2004).
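The two numeric thresholds stated above for NAACCR gold certification (at least 95 percent case completeness and all required data recorded within 23 months of the diagnosis year) can be expressed as a simple check. This is a sketch under stated assumptions: the `RegistryYear` class and function name are hypothetical, the `months_to_record` values are made up for illustration, and the other NAACCR standards mentioned in the text (internal consistency, duplication, death-certificate-only reports, missing fields) are not modeled because the text gives no thresholds for them.

```python
from dataclasses import dataclass

# Thresholds as stated in the text for NAACCR gold certification.
GOLD_MIN_COMPLETENESS_PCT = 95.0
GOLD_MAX_MONTHS_TO_RECORD = 23


@dataclass
class RegistryYear:
    """Hypothetical summary of one diagnosis year of registry data."""
    completeness_pct: float   # percent of the region's cancer cases identified
    months_to_record: int     # months after the diagnosis year until data are recorded


def meets_stated_gold_thresholds(year: RegistryYear) -> bool:
    """Check only the two numeric criteria quoted in the text."""
    return (
        year.completeness_pct >= GOLD_MIN_COMPLETENESS_PCT
        and year.months_to_record <= GOLD_MAX_MONTHS_TO_RECORD
    )


# Completeness figures come from the text; months_to_record is illustrative.
current = RegistryYear(completeness_pct=97.0, months_to_record=23)
pre_gcc = RegistryYear(completeness_pct=75.0, months_to_record=23)
print(meets_stated_gold_thresholds(current), meets_stated_gold_thresholds(pre_gcc))
```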

The GCCR collects data on patient demographics (gender, age at diagnosis, race, Hispanic ethnicity, county of residence, etc.); cancer site (tumor topography and histology); SEER tumor stage (in situ, local, regional, or distant); SEER extent of disease;3 initial course of treatment including type and date of surgery and radiation therapy; reason for no surgery (if none); date and cause of death, if applicable; and other data elements (Bayakly, 2003).

Surveillance, Epidemiology, and End Results (SEER) Registries

The SEER registries are the nation’s most complete source of cancer incidence and survival data, and are considered the standard of quality for cancer registries worldwide (NCI, 2002). SEER registries, like Georgia’s, are considered superior for three important reasons. First, unlike other registry data, SEER data can be used to determine cancer survival rates because the registries actively follow up at least 95 percent of cancer cases to determine vital status and cause of death (if applicable). Second, the SEER program conducts extensive quality assurance that includes annual audits of data quality and completeness (Warren et al., 2002b). Third, SEER data are routinely linked with Medicare claims data, and this linkage greatly enhances the usefulness of SEER data, as discussed below.

SEER/Medicare Database

The SEER/Medicare database is a collaborative program of the National Cancer Institute, the SEER registries, and the Centers for Medicare and Medicaid Services.4 It is a unique and indispensable resource for investigating the quality of cancer care. Unlike any other information source, SEER/Medicare combines SEER’s patient-level information on cancer site, tumor pathology, stage, and cause of death with Medicare’s longitudinal data on services before, during, and after diagnosis (Warren et al., 2002b).

Although this combined SEER/Medicare database is greater than the sum of its parts, it carries some of the key deficits of its component parts. One problem is that with the combined SEER/Medicare database, as with SEER, there is a significant time lag, because registry data are approximately 2 years behind and the linkage is updated only every 3 years. The next linkage update, consisting of data on cases diagnosed in 2000 to 2002, is scheduled for completion in 2005 (Riley and Warren, 2005). Another problem with the combined SEER/Medicare database, like the Medicare database, is that the data are not useful for studies of cancers that are common to the under-65 age group and cannot be used to assess the unique concerns of individuals without health insurance.

Limitations of GCCR and SEER Registry Data

Both GCCR and SEER registry data have several limitations:

- Registry data for a given year are not available for almost 2 years after the end of the diagnosis year (Clarke et al., 2003; Bayakly, 2003).

- Registry records contain no information on patients’ comorbidities, therapy beyond the first course of cancer treatment, adjuvant therapies that are not completed (regardless of date received), and recurrence and long-term disease status. For patients with multiple surgical procedures, only the most definitive surgery is reported in registry records (Warren et al., 2002b).

- Registry data may be incomplete unless vigorous attempts are made to ensure that all eligible patients and data are included.

- Registries have limited value for studying the diagnostic process because they only maintain data on confirmed cancer cases. Assessing the quality of diagnosis requires data on people whose possible cancer is ruled out and people who get “lost” in the midst of the diagnostic process.

- Cancer-related care is more likely to be documented in registries if it is hospital-based than if it is provided in an ambulatory setting (Bickell and Chassin, 2000; Malin et al., 2002b). This observation holds especially true for early-stage breast and prostate cancers and skin melanomas, compared with other types of cancer, because they are typically identified and treated in physicians’ offices (Wingo et al., 2003). SEER registries appear to do a better job of documenting treatment than do non-SEER cancer registries (Brown et al., 2000).

- NAACCR-certified registries, including the GCCR, are not required to actively follow registered cancer cases. Consequently, neither vital status nor other follow-up information is available for many registered cases (Wingo et al., 2003; Howe et al., 2003). However, most registries, including the GCCR, do passive follow-up by linking with vital records to obtain death information.

4 Extensive information about SEER is available at http://seer.cancer.gov.

Special Cancer Registries That Focus on Specific Cancers or Aspects of Cancer Care

Elsewhere in the nation, there are special cancer registries that focus on specific types of cancer or aspects of cancer care and collect extensive data to support a wide range of research. Such registries include, for example, seven mammography data collection and research sites that collaborate as part of the National Cancer Institute’s Breast Cancer Surveillance Consortium (BCSC).5 The BCSC registries and data centers link mammography data with local SEER registry data and collect extensive follow-up data on women who undergo screening mammography. The result is an especially rich database and powerful tool for studying how breast cancer screening relates to changes in stage at diagnosis, survival, or breast cancer mortality. Approximately 150 papers, published in peer-reviewed journals, have used the BCSC data to address a wide array of questions about screening mammography (NCI, 2004a). GCC should consider expanding its own registry operations to foster this kind of research.

Medical Records

Medical record data have been used extensively in health services research, including research into the quality of care. Much of the available research on the quality of cancer care draws from detailed abstracts of medical records (see, for example, Asch et al., 2000; McGlynn, 2002; Malin et al., 2002b). Medical records are an important data source because they contain extensive documentation of patients’ characteristics, comorbidities, and descriptions of the disease, its progression, the recommended course of treatment, and other important clinical details.

The obstacles to relying on medical records as a routine source of quality monitoring information are substantial (McGlynn, 2002). Data usually have to be manually abstracted from handwritten, paper records by trained personnel with clinical expertise, often nurses. The process is labor intensive and costly. Furthermore, the content and format of medical records are not standardized. Multiple records may have to be consulted to reconstruct one episode of patient care, and some records are frequently missing. Considerable travel time from delivery site to delivery site is sometimes required, particularly in a state as large as Georgia (photocopying or scanning and transmitting encrypted electronic records may be a workable alternative if privacy issues can be appropriately handled). Some medical records may be inaccurate or incomplete. In light of these factors, the IOM committee recommends that GCC limit its use of paper medical records to occasional, high-priority studies.

An electronic health record system could help Georgia effectively and efficiently use medical records to assess the quality of cancer care. Perhaps more importantly, an electronic record system could also be a potent force for quality improvement (IOM, 2003c). Electronically managing the diagnostic phase of cancer care would have several advantages over paper-based reporting. With computerized recordkeeping, results from biopsies and radiology procedures, for instance, might be more readily obtained by providers at the time and place they are needed and thus reduce waiting time for results, expedite treatment, and ameliorate patient anxiety (Overhage et al., 2001; Olivotto et al., 2001; Schiff et al., 2003; Bates et al., 2003).

At present, few, if any, of Georgia’s cancer care providers use electronic health records. It should be noted, however, that a key aspect of the Georgia Center for Oncology Research and Education—one of GCC’s most significant initiatives—is to introduce electronic recordkeeping for its patients in clinical trials. Plans are now underway to expand the availability of cancer clinical trials in urban and rural areas throughout Georgia (GCC, 2003). GCC estimates that the percentage of Georgians with cancer who participated in a clinical trial in 2000 was under 2 percent (Russell, 2004).

Administrative Claims

Administrative claims are a relatively inexpensive, electronic data source for measuring quality of care. Administrative claims exist for payment purposes and are best used for determining patients’ receipt of particular services that are likely to generate a bill for a reimbursable service (e.g., for certain cancer screening tests, diagnostic procedures, or treatments) (McGlynn, 2002). Claims data are least useful for obtaining clinical details such as tumor stage or test results and most valuable when claims are linked with registry data as in the SEER/Medicare dataset.
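The value of linking claims with registry data can be illustrated with a minimal sketch. It assumes, purely for illustration, that both sources share a common patient identifier; the field names (`patient_id`, `stage`, `service`) and the records themselves are hypothetical, and actual linkages involve more elaborate matching and privacy safeguards than this exact-match join.

```python
from collections import defaultdict


def link_claims_to_registry(registry_cases, claims):
    """Attach billed services from claims to each registry case.

    Assumes an exact match on a shared 'patient_id' field (hypothetical).
    """
    services_by_patient = defaultdict(set)
    for claim in claims:
        services_by_patient[claim["patient_id"]].add(claim["service"])

    linked = []
    for case in registry_cases:
        # .get() avoids creating empty entries for patients with no claims
        services = services_by_patient.get(case["patient_id"], set())
        linked.append({**case, "services": sorted(services)})
    return linked


# Hypothetical records: the registry supplies clinical detail (stage),
# while claims supply evidence that a billable service was delivered.
registry_cases = [
    {"patient_id": "P1", "stage": "I"},
    {"patient_id": "P2", "stage": "III"},
]
claims = [
    {"patient_id": "P1", "service": "radiation"},
    {"patient_id": "P1", "service": "chemotherapy"},
    {"patient_id": "P3", "service": "mammography"},
]

linked = link_claims_to_registry(registry_cases, claims)
for row in linked:
    print(row)
```

In this sketch, the registry case with no matching claim keeps an empty service list, and the claim with no registry record is dropped, which mirrors the point that each source alone gives an incomplete picture.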

Medicare claims have been used extensively in studies of cancer care and validated as a data source for information on use of cancer-related surgery, chemotherapy, and radiation treatments as well as home health and hospice care (Du et al., 1999, 2000; Cooper et al., 2002; Warren et al., 2002a). The Medicare program covers most adults aged 65 and older, as well as some other adults with a disability or end-stage renal disease. Because cancer occurs most frequently among older adults, Medicare claims are an important data source for quality assessments of cancer care. Nationwide, almost 57 percent of all cancer cases, from 1997 to 2001, occurred among persons aged 65 or older (Ries et al., 2004). In Georgia, 955,000 persons (11.2 percent of the total population) were Medicare beneficiaries in 2002 (CMS, 2003).

Medicare claims do have limitations. They tend to be less useful for studies of cancers that occur more frequently among younger people, such as testicular cancer, leukemia, and lymphoma (Warren et al., 2002b). In addition, Medicare claims cannot be used to assess services that are not reimbursed by Medicare, such as long-term care outside of skilled nursing facilities. Accurate determination of drug doses is difficult, if not impossible, from administrative data. It is also sometimes difficult to distinguish recurrent cases from follow-up cases in the absence of longitudinal patient files.

Patient and Population Surveys

Surveys are the one data source that can capture the perspective of cancer patients, their families, health care providers, and the public on many aspects of quality of care (McGlynn, 2002).6 Surveys that target patients and their families provide critical insights into issues such as patient involvement in treatment decisions, satisfaction with health care after a cancer diagnosis, access to recommended services, pain management, and quality of life including health and functional status. Surveys that target the broader Georgia population are also important because they offer insights into persons who are well and unwell, insured and uninsured, and users and nonusers of health care services. There are a number of ongoing population-based surveys, sponsored by the federal government and private sources, which collect data that are relevant to the quality of cancer care.

Unfortunately, most population-based surveys are not designed to provide state-level statistics. An important exception is the Behavioral Risk Factor Surveillance System (BRFSS).7 The BRFSS is an ongoing survey research collaborative of the CDC and U.S. states and territories (CDC, 2004). BRFSS field operations are managed by state health departments in accordance with CDC guidelines.8 The core activity of the BRFSS is a computer-assisted telephone-interview survey of a sample of each state’s adult population. The survey is designed to collect uniform, state-specific data on preventive health practices and risk behaviors that are linked to cancer and other chronic diseases, injuries, and preventable infectious diseases. States may oversample regional populations to ensure adequate sample size for smaller geographically defined populations of interest. Aggregate, national-level findings are available on the CDC website and provide useful benchmarks for each state to assess its progress in, for example, reducing smoking and advancing preventive health practices. The BRFSS design also allows for comparisons between individual states.

| 6 | See Chapter 7, Crosscutting Issues in Assessing the Quality of Cancer Care, for the IOM committee’s recommendations on how to use surveys to capture the experience of cancer patients. |

| 7 | Further details on the BRFSS are provided in Appendix B. |

The BRFSS survey instrument has three parts: a core module used by all states, optional modules, and state-added questions. The core module includes questions on health-related perceptions, conditions, and behaviors. In 2004, several sections of the core module had direct relevance to the quality of cancer care, including series of questions on tobacco use, alcohol consumption, excessive sun exposure, and breast, colorectal, and prostate cancer screening.

The BRFSS optional modules are sets of standardized survey questions on a variety of topics. In 2004, there were three cancer-related, optional modules—smoking cessation, other tobacco products, and secondhand smoke policy. States may add their own questions to the BRFSS, but state-added questions, unlike the core and optional modules, are not edited or evaluated by CDC.

BRFSS data do have limitations. Telephone surveys underrepresent households without telephones (approximately 8 percent in Georgia) and households that use only mobile telephones. In addition, telephone surveys tend to have low participation rates. Also, like other survey data, BRFSS data are self-reported and thus subject to recall error. Individual recollections have been found to be accurate for certain health care services, such as surgery, but less so for medications used or other aspects of health care (Kahn et al., 2002). Recent research raises some concerns about the reliability of mammography self-reports in the BRFSS (IOM, 2004).

In addition, although the BRFSS sampling frame is designed to generate state-level estimates, it is insufficient for comparing regions of the state or for assessing trends among subgroups in the state. GCC must oversample regional populations to ensure adequate sample size for smaller geographically defined populations of interest.
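To give a rough sense of what "adequate sample size" can mean for a regional estimate, the sketch below applies the standard sample-size formula for a proportion, n = z²p(1 − p)/e², under simple random sampling. This is an illustration only and is not the BRFSS sampling methodology; the function name, the margin of error, and the `design_effect` multiplier (a common allowance for complex survey designs) are assumptions introduced here.

```python
import math


def required_sample_size(expected_proportion, margin_of_error,
                         z=1.96, design_effect=1.0):
    """Respondents needed to estimate a proportion within +/- margin_of_error.

    Uses n = z^2 * p * (1 - p) / e^2 (simple random sampling), inflated by
    an assumed design effect. z=1.96 corresponds to 95 percent confidence.
    """
    if not 0 < expected_proportion < 1:
        raise ValueError("expected_proportion must be between 0 and 1")
    if margin_of_error <= 0:
        raise ValueError("margin_of_error must be positive")
    p = expected_proportion
    n = (z ** 2) * p * (1 - p) / (margin_of_error ** 2)
    return math.ceil(n * design_effect)


# Hypothetical planning scenario: a screening rate near 50 percent
# (the most conservative assumption) estimated to within 5 points.
n_per_region = required_sample_size(0.5, 0.05)
n_per_region_tighter = required_sample_size(0.5, 0.03)
print(n_per_region, n_per_region_tighter)
```

Because the required number applies to each region or subgroup reported separately, tightening the margin of error or adding reporting areas raises the total survey size quickly.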

Finally, BRFSS does not collect diagnostic data. Even if Georgia were to add cancer-related questions to the survey, the sample is too small to yield sufficient numbers of respondents with a cancer diagnosis.

Issues in Interpreting Quality-of-Cancer-Care Data

Quality monitoring must be an ongoing, iterative process. First-time results can be used to identify problems and to establish baseline results. Subsequent measures will track progress over time, providing comparisons with past performance to help determine the impact of the GCC initiative. The significance of the quality measures will be clearer when the measures are presented along with a corresponding reference point or benchmark (McGlynn, 2003b; AHRQ, 2004). Using benchmarks or other standards makes information on quality more meaningful by providing a context for understanding the information (IOM, 2001b). Benchmarks can be drawn from past or baseline performance, clinical guidelines, expert groups, and other sources. Potential sources for performance benchmarks—for the quality measures recommended in this report—are detailed in the one-page measure descriptions that appear at the end of Chapter 3 through Chapter 6.

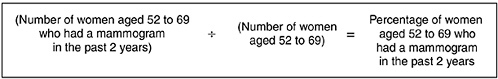

Quality measures are often reported as simple proportions that are calculated with a numerator equal to the number of individuals who received a recommended service and a corresponding denominator equal to the number of individuals who should have received the recommended service (Figure 2-3). Robust sample size is fundamental to this calculation, and it becomes an increasingly important and limiting factor if the analysis focuses on smaller subgroups or specific geographic areas such as adults with lung cancer in rural counties or African-American men with prostate cancer who reside in southwest Georgia (Clarke et al., 2003). The analytic challenge is further exacerbated when the objective is to compare the performance of hospitals or regions within the state or to assess subgroup disparities.9 In such cases, it may be necessary to pool data over several years in order to develop reliable estimates.
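The dependence of such a proportion on sample size can be sketched in a few lines. The example below computes a measure as numerator over denominator and attaches a Wilson score interval, one common choice of confidence interval for proportions; the report does not prescribe a particular interval method, and the yearly counts are hypothetical.

```python
import math


def measure_rate(numerator, denominator, z=1.96):
    """Return (proportion, lower, upper) with a Wilson score interval."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    if not 0 <= numerator <= denominator:
        raise ValueError("numerator must be between 0 and denominator")
    p = numerator / denominator
    z2 = z * z
    scale = 1 + z2 / denominator
    centre = (p + z2 / (2 * denominator)) / scale
    half_width = (z / scale) * math.sqrt(
        p * (1 - p) / denominator + z2 / (4 * denominator ** 2)
    )
    return p, centre - half_width, centre + half_width


# Hypothetical counts for a small subgroup:
# (received recommended service, should have received it) per year.
yearly_counts = [(18, 30), (21, 33), (19, 29)]

single_year = measure_rate(*yearly_counts[0])

# Pooling several years enlarges the denominator and narrows the interval.
pooled_numerator = sum(n for n, _ in yearly_counts)
pooled_denominator = sum(d for _, d in yearly_counts)
pooled = measure_rate(pooled_numerator, pooled_denominator)

for label, (p, low, high) in [("single year", single_year), ("pooled", pooled)]:
    print(f"{label}: {p:.3f} (95% CI {low:.3f} to {high:.3f})")
```

With these illustrative counts, the pooled interval is noticeably narrower than the single-year interval, at the cost of obscuring year-to-year change.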

| 9 | See Chapter 7, Crosscutting Issues for Assessing the Quality of Cancer Care, for further discussion on addressing disparities in the quality of cancer care. |

FIGURE 2-3 Sample calculation of a quality-of-cancer-care measure (breast cancer screening).

Quality measures, particularly health outcome measures, may need to be risk-adjusted to account for individual patient factors, such as cancer stage, age, and other demographic characteristics, which would otherwise confound the results. In general, risk adjustment will be less important when monitoring progress toward specific state goals than for comparing performance among Georgia’s various regional cancer centers (McGlynn, 2003b).
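One simple form of risk adjustment is direct standardization, in which stratum-specific rates are reweighted to a common reference case mix. The sketch below is offered as an illustration of the idea rather than as the committee's method; the two centers, the stage categories, the counts, and the reference weights are all hypothetical.

```python
def crude_rate(strata):
    """Overall rate ignoring case mix: total events / total patients."""
    events = sum(e for e, _ in strata.values())
    patients = sum(n for _, n in strata.values())
    return events / patients


def standardized_rate(strata, reference_weights):
    """Directly standardized rate: stratum rates weighted by a reference mix."""
    if abs(sum(reference_weights.values()) - 1.0) > 1e-9:
        raise ValueError("reference weights must sum to 1")
    return sum(
        reference_weights[stage] * events / patients
        for stage, (events, patients) in strata.items()
    )


# Hypothetical outcome counts by stage: (patients with good outcome, patients).
# Both centers perform identically within each stage, but center A treats
# proportionally more early-stage patients.
center_a = {"early": (90, 100), "late": (20, 50)}
center_b = {"early": (45, 50), "late": (40, 100)}

# Assumed reference case mix shared by both centers.
reference_weights = {"early": 0.5, "late": 0.5}

crude_a, crude_b = crude_rate(center_a), crude_rate(center_b)
adj_a = standardized_rate(center_a, reference_weights)
adj_b = standardized_rate(center_b, reference_weights)

print(f"crude:        A={crude_a:.3f}  B={crude_b:.3f}")
print(f"standardized: A={adj_a:.3f}  B={adj_b:.3f}")
```

In this constructed example the crude rates differ only because of case mix, and the standardized rates coincide, which is the kind of confounding that matters most when centers are compared with one another.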

Quality indicators, particularly process measures, often have a natural optimal standard of 100 percent (Landon et al., 2003). For example, all smokers should be offered help in quitting tobacco use. However, for some measures, optimal care does not imply a 100 percent standard because patients’ comorbidities or other clinical considerations preclude using the recommended process. In other circumstances, patients may prefer a different treatment approach, or nonclinical factors, such as health insurance coverage, may impede access to care. Today’s clinical guidelines, for example, recommend that women with early-stage breast cancer receive adjuvant therapy if they undergo breast-conserving surgery (NCCN, 2004). The proportion of affected women who undergo breast-conserving surgery and receive appropriate adjuvant therapy might arguably be close to 100 percent. However, some women, after being fully informed of their treatment options, will opt for full mastectomies. Such realities must be taken into account, but evaluators should also be vigilant in following up performance that falls short of a specific standard or 100 percent (whichever is appropriate).

SUMMARY

This chapter has described the conceptual framework and method for selecting quality-of-cancer-care measures to assess the progress of GCC’s impressive cancer initiative. There is a well-established body of research and a sound, scientific evidence base for selecting valid and usable quality indicators for cancer care in Georgia. GCC will need a quality-of-cancer-care information infrastructure to monitor its progress. If this cancer information system includes accurate, complete, and timely data, it will enable the state to identify where quality problems exist, to stimulate quality improvements, and to measure progress.

REFERENCES

AHRQ (Agency for Healthcare Research and Quality). 2001. Your Guide to Choosing Quality Healthcare. [Online] Available: http://www.ahrq.gov/consumer/qualguid.pdf [accessed July 9, 2004].

——. 2004. Using Measures. [Online] Available: http://www.qualitymeasures.ahrq.gov/resources/measure_use.aspx [accessed December 10, 2004].

Asch SM, Kerr EA, Hamilton EG, Reifel JL, McGlynn EA, Editors. 2000. Quality of Care for Oncologic Conditions and HIV: A Review of the Literature and Quality Indicators. Santa Monica, CA: RAND Health.

Bates DW, Ebell M, Gotlieb E, Zapp J, Mullins HC. 2003. A proposal for electronic medical records in U.S. primary care. J Am Med Inform Assoc. 10(1): 1-10.

Bayakly AR. 2003. Georgia Comprehensive Cancer Registry. Presentation at the December 18, 2003, meeting of the IOM Committee on Assessing Improvements in Cancer Care in Georgia, Washington, DC.

Bickell NA, Chassin MR. 2000. Determining the quality of breast cancer care: do tumor registries measure up? Ann Intern Med. 132(9): 705-10.

Brook RH. 1994. The RAND/UCLA appropriateness measure. In: McCormick KA, Moore SR, Siegel RA, Editors. Clinical Practice Guideline Development: Methodology Perspectives. Rockville, MD: Public Health Service.

Brown ML, Hankey BF, Ballard-Barbash R. 2000. Measuring the quality of breast cancer care. Ann Intern Med. 133(11): 920.

CDC (Centers for Disease Control and Prevention). 2004. Behavioral Risk Factor Surveillance System Technical Information and Data. Overview: BRFSS 2003. [Online] Available: http://www.cdc.gov/BRFSS/technical_infodata/surveydata/2003/overview_03.rtf [accessed July 13, 2004].

Clarke CA, West DW, Edwards BK, Figgs LW, Kerner J, Schwartz AG. 2003. Existing data on breast cancer in African-American women: what we know and what we need to know. Cancer. 97(1 Suppl): 211-21.

CMS (Centers for Medicare & Medicaid Services). 2003. Medicare Enrollment by State 2002. [Online] Available: http://www.cms.hhs.gov/researchers/pubs/datacompendium/2003/03pg74.pdf [accessed July 13, 2004].

Cooper GS, Virnig B, Klabunde CN, Schussler N, Freeman J, Warren JL. 2002. Use of SEER-Medicare data for measuring cancer surgery. Med Care. 40(8 Suppl): IV-43-8.

Donabedian A. 1980. The definition of quality and approaches to its assessment. In: Explorations in Quality Assessment and Monitoring, Vol. I. Ann Arbor, MI: Health Administration Press.

Du X, Freeman JL, Goodwin JS. 1999. Information on radiation treatment in patients with breast cancer: the advantages of the linked Medicare and SEER data. Surveillance, Epidemiology and End Results. J Clin Epidemiol. 52(5): 463-70.

Du X, Freeman JL, Warren JL, Nattinger AB, Zhang D, Goodwin JS. 2000. Accuracy and completeness of Medicare claims data for surgical treatment of breast cancer. Med Care. 38(7): 719-27.

GCC (Georgia Cancer Coalition). 2001. Strategic Plan. Atlanta, GA: GCC.

——. 2003. Mobilizing Georgia, Immobilizing Cancer. Atlanta, GA: GCC.

GCCR (Georgia Comprehensive Cancer Registry). 2003. Policy and Procedure Manual for Reporting Facilities. Atlanta, GA: Georgia Department of Human Resources, Division of Public Health, CCR.

GDPH (Georgia Division of Public Health). 2004. OASIS Web Query—Death Statistics. [Online] Available: http://oasis.state.ga.us/webquery/death.html [accessed April 2004].

Howe HL, Edwards BK, Young JL, Shen T, West DW, Hutton M, Correa CN. 2003. A vision for cancer incidence surveillance in the United States. Cancer Causes Control. 14(7): 663-72.

IOM (Institute of Medicine). 1990. Medicare: A Strategy for Quality Assurance, Volume II: Sources and Methods. Lohr KN, Editor. Washington, DC: National Academy Press.

——. 1998. Statement on Quality of Care. National Roundtable on Health Care Quality, Washington, DC: National Academy Press.

——. 1999. Ensuring Quality Cancer Care. Hewitt M, Simone JV, Editors. Washington, DC: National Academy Press.

——. 2000a. Enhancing Data Systems to Improve the Quality of Cancer Care. Hewitt M, Simone JV, Editors. Washington, DC: National Academy Press.

——. 2000b. To Err Is Human: Building a Safer Health System. Kohn LT, Corrigan JM, Donaldson MS, Editors. Washington, DC: National Academy Press.

——. 2001a. Crossing the Quality Chasm: A New Health System for the 21st Century. Washington, DC: National Academy Press.

——. 2001b. Envisioning the National Health Care Quality Report. Hurtado MP, Swift EK, Corrigan JM, Editors. Washington, DC: National Academy Press.

——. 2001c. Improving Palliative Care for Cancer. Foley KM, Gelband H, Editors. Washington, DC: National Academy Press.

——. 2003a. Childhood Cancer Survivorship: Improving Care and Quality of Life. Hewitt M, Weiner SL, Simone JV, Editors. Washington, DC: The National Academies Press.

——. 2003b. Fulfilling the Potential of Cancer Prevention and Early Detection. Curry S, Byers T, Hewitt M, Editors. Washington, DC: The National Academies Press.

——. 2003c. Key Capabilities of an Electronic Health Record System. Washington, DC: The National Academies Press.

——. 2004. Saving Women’s Lives: Strategies for Improving Breast Cancer Detection and Diagnosis: A Breast Cancer Research Foundation and Institute of Medicine Symposium. Herdman R and Norton L, Editors. Washington, DC: The National Academies Press.

Kahn KL, Malin JL, Adams J, Ganz PA. 2002. Developing a reliable, valid, and feasible plan for quality-of-care measurement for cancer: how should we measure? Med Care. 40(6 Suppl): III73-85.

Landon BE, Normand SL, Blumenthal D, Daley J. 2003. Physician clinical performance assessment: prospects and barriers. JAMA. 290(9): 1183-9.

Malin JL, Kahn KL, Adams J, Kwan L, Laouri M, Ganz PA. 2002a. Validity of cancer registry data for measuring the quality of breast cancer care. J Natl Cancer Inst. 94(11): 835-44.

Malin JL, Schuster MA, Kahn KA, Brook RH. 2002b. Quality of breast cancer care: what do we know? J Clin Oncol. 20(21): 4381-93.

Martin LM, Chowdhury PP, Powell KE, Clanton J. 2004. Georgia Behavioral Risk Factor Surveillance System, 2002 Report. Atlanta, GA: Georgia Department of Human Resources, Division of Public Health, Chronic Disease, Injury, and Environmental Epidemiology Section.

McGlynn EA (RAND). 2002. Applying the Strategic Framework Board’s Model to Selecting National Goals and Core Measures for Stimulating Improved Quality for Cancer Care (Background Paper #1). Bethesda, MD: National Cancer Institute.

——. 2003a. Introduction and overview of the conceptual framework for a national quality measurement and reporting system. Med Care. 41(1 Suppl): I1-7.

——. 2003b. Selecting common measures of quality and system performance. Med Care. 41(1 Suppl): I39-47.

McGlynn EA, Malin J (RAND). 2002. Selecting National Goals and Core Measures of Cancer Care Quality (Background Paper #2). Bethesda, MD: National Cancer Institute.

McGlynn EA, Asch SM, Adams J, Keesey J, Hicks J, DeCristofaro A, Kerr EA. 2003. The quality of health care delivered to adults in the United States. N Engl J Med. 348(26): 2635-45.

McNamara C, Bayakly AR, Powell KE. 2002. Georgia Childhood Cancer Report, 2002. Atlanta, GA: Georgia Department of Human Resources, Division of Public Health, Chronic Disease, Injury, and Environmental Epidemiology Section.

NAACCR (North American Association of Central Cancer Registries). 2004. Criteria and Standards for NAACCR Certification. [Online] Available: http://www.naaccr.org/filesystem/pdf/finalcriteriaforRegistryCertificationPage.pdf [accessed July 16, 2004].

NCCN (National Comprehensive Cancer Network). 2004. Clinical Practice Guidelines in Oncology-v.1.2004. Breast Cancer. [Online] Available: http://www.nccn.org/professionals/physician_gls/PDF/breast.pdf [accessed 2004].

NCI (National Cancer Institute). 2002. About SEER. [Online] Available: www.seer.cancer.gov/about [accessed May 20, 2004].

——. 2004a. Breast Cancer Surveillance Consortium: Evaluating Screening Performance in Practice. [Online] Available: http://breastscreening.cancer.gov/espp.pdf [accessed July 7, 2004].

——. 2004b. Rural Georgia Registry. [Online] Available: http://seer.cancer.gov/registries/rural_ga.html [accessed July 16, 2004].

——. 2004c. About Cancer Control & Population Sciences: Cancer Control Continuum. [Online] Available: http://cancercontrol.cancer.gov/od/continuum.html [accessed November 28, 2004].

NCI and CDC (National Cancer Institute and Centers for Disease Control and Prevention). 2004. State Cancer Profiles. [Online] Available: http://statecancerprofiles.cancer.gov/incidencerates/incidencerates.html [accessed July 9, 2004].

NQF (National Quality Forum). 2003. Standardizing Quality Measures for Cancer Care Summary Report. Washington, DC: NQF.

Olivotto IA, Borugian MJ, Kan L, Harris SR, Rousseau EJ, Thorne SE, Vestrup JA, Wright CJ, Coldman AJ, Hislop TG. 2001. Improving the time to diagnosis after an abnormal screening mammogram. Can J Public Health. 92(5): 366-71.

Overhage JM, Suico J, McDonald CJ. 2001. Electronic laboratory reporting: barriers, solutions and findings. J Public Health Manag Pract. 7(6): 60-6.

Palmer RH. 1997. Using clinical performance measures to drive quality improvement. Total Qual Manage. 8(5): 305-11.

Richard-Lee KM, Rochester PW. 2003. A comprehensive approach to cancer prevention and control: a vision for the future. In: CDC, Promising Practices in Chronic Disease Prevention and Control: A Public Health Framework for Action. Atlanta, GA: U.S. DHHS.

Ries LAG, Eisner MP, Kosary CL, Hankey BF, Miller BA, Clegg L, Mariotto A, Feuer EJ, Edwards BK, Editors. 2004. SEER Cancer Statistics Review, 1975-2001. Bethesda, MD: National Cancer Institute.

Riley G, Warren J (CDC, NCI). 2005. Surveillance, Epidemiology and End Results (SEER)—Medicare Linked Database. [Online] Available: http://www.academyhealth.org/2004/ppt/riley.ppt#256,1,Surveillance,Epidemiology,andEndResults(SEER)—MedicareLinkedDatabase [accessed January 24, 2005].

Russell K (GCC). 2004. Georgia Clinical Trials. Personal communication to Jill Eden.

Schiff GD, Klass D, Peterson J, Shah G, Bates DW. 2003. Linking laboratory and pharmacy: opportunities for reducing errors and improving care. Arch Intern Med. 163(8): 893-900.

Shekelle PG, Kahan JP, Bernstein SJ, Leape LL, Kamberg CJ, Park RE. 1998. The reproducibility of a method to identify the overuse and underuse of medical procedures. N Engl J Med. 338(26): 1888-95.

USPSTF (U.S. Preventive Services Task Force). 1996. Guide to Clinical Preventive Services. 2nd Ed. Rockville, MD: U.S. DHHS.

Warren JL, Harlan LC, Fahey A, Virnig BA, Freeman JL, Klabunde CN, Cooper GS, Knopf KB. 2002a. Utility of the SEER-Medicare data to identify chemotherapy use. Med Care. 40(8 Suppl): IV-55-61.

Warren JL, Klabunde CN, Schrag D, Bach PB, Riley GF. 2002b. Overview of the SEER-Medicare data: content, research applications, and generalizability to the United States elderly population. Med Care. 40(8 Suppl): IV-3-18.

Wingo PA, Jamison PM, Hiatt RA, Weir HK, Gargiullo PM, Hutton M, Lee NC, Hall HI. 2003. Building the infrastructure for nationwide cancer surveillance and control—a comparison between the National Program of Cancer Registries (NPCR) and the Surveillance, Epidemiology, and End Results (SEER) Program (United States). Cancer Causes Control. 14(2): 175-93.