Evaluation of Research Training and Career Development Programs at NIH: Current Capabilities and Continuing Needs

Charles R. Sherman

The National Institutes of Health (NIH) supports various manpower development programs needed to carry out its principal statutory responsibility: to support a high-quality national biomedical research enterprise. In earlier times, NIH had additional responsibility to increase the supply of well-trained manpower in emerging clinical specialties.

The information presented here focuses on extramural programs. There are also training programs and experiences in NIH’s own clinics and campus laboratories (the intramural program), but the vast majority of the manpower development is supported and conducted in extramural settings, such as degree-granting universities and affiliated training hospitals. Formal training programs and fellowship and career development applications are evaluated and awarded based on merit by the NIH peer review system. Much training is also conducted in the course of research studies that are likewise supported after competitive peer review.

The effectiveness of many of NIH’s training and career development programs can be evaluated because NIH receives and keeps records of who the individual trainees are. The careers and productivity of the unknown students and postdoctorals supported by research grants—a number estimated to be about twice the number of programmatically supported postdoctorals—cannot yet be examined.

CONTEXT OF EVALUATION

Evaluation of training and career development is one aspect of a longstanding program of financial incentives in support of good management practices at NIH. The original “One Percent Set-aside” was established to provide extra resources to managers and administrators to conduct independent assessments of how well their programs work. For many programs, this is a challenge. For training programs it is somewhat straightforward. The goals are clearer than for research centers and programs: Training programs are expected to produce scientists who can compete, and compete successfully, for research support and who develop and publish new knowledge and discover and test new treatments for human disorders.

There is an additional strong incentive to evaluate our training programs within the context of the labor market: Congress tells the NIH to do this. Since 1974 the NIH has been required to ask the National Academies to establish the level of need for training of biomedical and behavioral researchers to keep this enterprise going. In the past 30 years, the National Academy of Sciences (NAS) has delivered 11 such reports, and the twelfth is being birthed right now. In addition to recommendations and justifications for the number of trainees NIH should be supporting, the academy has conducted or encouraged separate studies of the outcomes of these programs. The academy has also experimented with various mathematical models to build its understanding of the dynamics of the workforce.

But NIH has supported many more studies, reviews, and task forces over the years to see how it is doing and what should be done differently. NIH typically does not focus on the achievements of individual scholars but on aggregate statistics describing the training experiences or settings and subsequent careers of groups of new scientists.

This paper describes some of the data resources NIH has developed and a few examples of how they are used to evaluate the influence or productivity of the training programs, both longitudinally and retrospectively, and examines some characteristics of NIH’s training and workforce. Some data shortcomings and emerging difficulties will be mentioned, as well as the need for additional data resources.

Here are some important data, provided by the Office of Extramural Research, that show the size of NIH’s known research training enterprise:

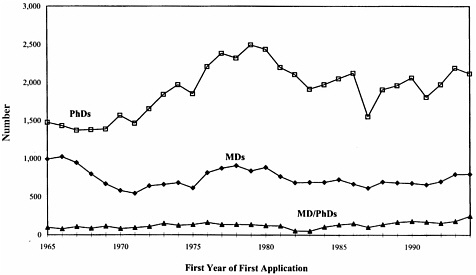

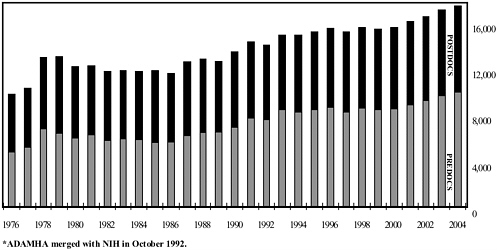

First, the numbers of trainees and fellows annually since 1976 are seen in Figure 1. There has been a gradual and persistent increase in the number of trainees during the past 25 years. In addition, the predoctoral share of awards has increased slightly, from roughly 50 percent in 1980 to 57 percent in 2004.

FIGURE 1 Total number of predoctoral and postdoctoral positions on NIH training grants and fellowships (fiscal years 1976–2004).

NOTE: ADAMHA merged with NIH in October 1992.

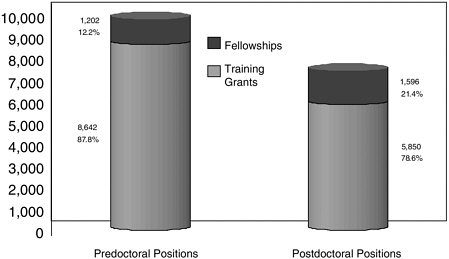

Second, the relative numbers of trainees and fellows in predoctoral and postdoctoral positions in 2004 are seen in Figure 2. The ratio of postdoctoral fellowships to traineeships is considerably higher than it is for predoctoral training awards.

FIGURE 2 Predoctoral and postdoctoral research training positions on NIH training grants and fellowships, fiscal year 2004.

Finally, the FY 2005 budget for traineeships, fellowships, and career development activities was $1.376 billion, out of a total NIH budget of $28.6 billion. For traineeships and fellowships (T and F awards) the budgeted amount was $762 million, and for career awards (K awards) the budgeted amount was $614 million.

It is called the “known” training enterprise because a system is still under development to identify the people in training with support from research grants. The departmental survey of graduate students and postdoctorals in science and engineering suggests this group is twice as large as the formal training group, but it is assumed that many are the same people at later stages of their training.

EVALUATION RESOURCES AND PERSPECTIVES

Trainee and fellowship appointment records are extracted from the NIH grants management and reporting systems (see Table 1) and compiled into a separate system of records known as the Trainee and Fellows File, or TFF. The multiple training and fellowship appointment records for each individual scholar, for all years and institutions where he or she may have been supported, are combined into one set of records that can be summarized and linked to other files. Similarly, all of the NIH grant application records are sorted by applicant into the Consolidated Grant Applicant File, or CGAF. These files were first created as part of an NAS research project in the early 1970s and have been refined and updated annually ever since. The files are linked so that the sum of the training experiences NIH supported and the grant applications and awards record of each person can be known. The utility of this combined resource should be obvious, and some simple examples of its use will be given. There are some inherent uncertainties and inaccuracies to minimize, but restructuring of the data outside the management systems simplifies analysis.
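The consolidation and linkage described above can be illustrated with a minimal sketch. It is not the actual TFF or CGAF layout: the record fields, the sample rows, and above all the shared `person_id` key are assumptions made for illustration. In practice, matching individuals across files is where the uncertainties mentioned above arise.

```python
from collections import defaultdict

# Hypothetical appointment rows: (person_id, fiscal_year, institution, months)
appointments = [
    ("P001", 1985, "Univ A", 12),
    ("P001", 1986, "Univ A", 12),
    ("P002", 1986, "Univ B", 9),
    ("P001", 1988, "Univ C", 6),
]

# Hypothetical grant application rows: (person_id, fiscal_year, activity, awarded)
applications = [
    ("P001", 1990, "R01", False),
    ("P001", 1991, "R01", True),
    ("P003", 1989, "R01", True),
]


def consolidate(appointment_rows):
    """Collapse many appointment rows into one training summary per person."""
    summary = {}
    for pid, fy, institution, months in appointment_rows:
        rec = summary.setdefault(
            pid, {"first_fy": fy, "total_months": 0, "institutions": set()}
        )
        rec["first_fy"] = min(rec["first_fy"], fy)
        rec["total_months"] += months
        rec["institutions"].add(institution)
    return summary


def link(training, application_rows):
    """Attach each trained person's application history to the training summary."""
    by_person = defaultdict(list)
    for pid, fy, activity, awarded in application_rows:
        by_person[pid].append((fy, activity, awarded))
    return {
        pid: {**rec, "applications": sorted(by_person.get(pid, []))}
        for pid, rec in training.items()
    }


linked = link(consolidate(appointments), applications)
```

Here `linked["P001"]` carries both the summed training months across institutions and the later R01 history, while a trainee with no applications keeps an empty list.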

There are other data resources that are useful for evaluation of NIH’s programs. In collaboration with the National Science Foundation (NSF) and other federal agencies, NIH supports the Survey of Earned Doctorates, which adds annually to the Doctorate Records File, or DRF, and the biennial Survey of Doctorate Recipients, or SDR, tracking the careers of a 20 percent longitudinal sample of all Ph.D.s awarded in the United States.

TABLE 1 Evaluation Databases Available to NIH

The DRF and SDR are linked to the CGAF and TFF, thus enabling the comparison and cleaning of some data fields and the extraction of selected data sets for specific analyses and studies. Additionally, the Faculty Roster System from the Association of American Medical Colleges is cross-linked with the above files, adding faculty career development to the outcomes that can be tracked relatively easily for many NIH trainees, fellows, and career award recipients.

SOME USES OF THE EVALUATION DATABASES

Counting Groups and Subgroups

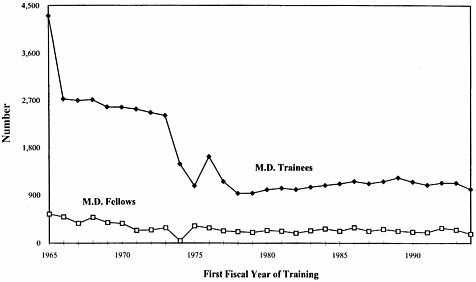

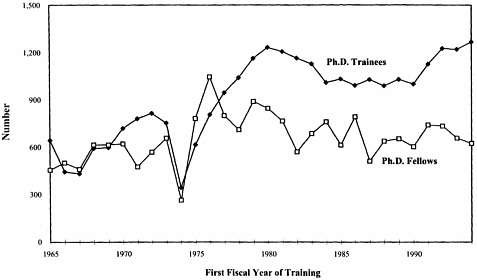

One way these databases are used is to simply count the numbers of people involved and observe how the numbers change over time. Do the numbers match the program’s goals or expectations? How many M.D.s are supported as fellows or on training grants (see Figure 3)? How many Ph.D. trainees and fellows (see Figure 4)? The sorted files allow us to count people, rather than positions. Each trainee is tallied only in the first year of training. Figures 3 and 4 show the number of M.D. and Ph.D. trainees and fellows supported from 1965 to 1994.
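Counting people rather than positions can be sketched as follows. The row layout and sample data are hypothetical, and the nine-month threshold (taken from the figure captions) is assumed here to apply to cumulative months of support.

```python
from collections import Counter

# Hypothetical appointment rows: (person_id, fiscal_year, degree, months_supported)
rows = [
    ("A", 1980, "MD", 12),
    ("A", 1981, "MD", 12),
    ("B", 1980, "PhD", 6),
    ("B", 1981, "PhD", 6),
    ("C", 1981, "PhD", 4),
    ("D", 1981, "MD", 9),
]


def count_people_by_first_year(appointment_rows, min_months=9):
    """Tally each person once, in the first fiscal year of support.

    Assumption: min_months is compared with cumulative months of support.
    """
    people = {}  # person_id -> (first_fy, degree, total_months)
    for pid, fy, degree, months in appointment_rows:
        first_fy, _, total = people.get(pid, (fy, degree, 0))
        people[pid] = (min(first_fy, fy), degree, total + months)
    return Counter(
        (degree, first_fy)
        for first_fy, degree, total in people.values()
        if total >= min_months
    )


counts = count_people_by_first_year(rows)
```

The six appointment rows represent four people, three of whom meet the threshold; each appears in `counts` only under the first year of support.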

Parenthetically, it should be said that these graphs are out of date. A comprehensive set of tables and graphs used to be prepared annually, but for some reason, this simple procedure was discontinued after 1996. The capability is still there for all of NIH or for any single institute or center that may want to do it.

FIGURE 3 Number of M.D. postdoctoral trainees and fellows with nine or more months of training support by first fiscal year of training, 1965 to 1994.

FIGURE 4 Number of Ph.D. postdoctoral trainees and fellows with nine or more months of training support by first fiscal year of training, 1965 to 1994.

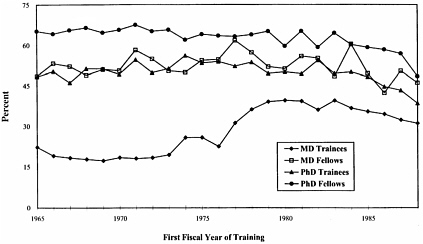

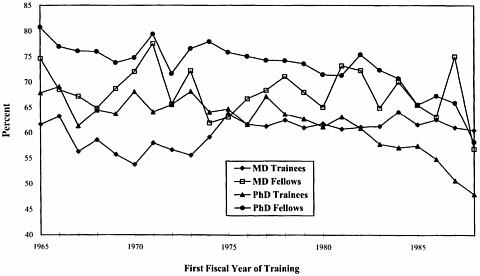

FIGURE 5 M.D. and Ph.D. postdoctorals: KRUMP application rates by first fiscal year of training, 1965 to 1988.

SOURCE: Consolidated Grant Applicant File and Fellow File.

FIGURE 6 M.D. and Ph.D. postdoctorals: KRUMP application rates by first fiscal year of training, 1965 to 1988.

Second, these databases can be used to examine trainees and fellows longitudinally. Figures 5 and 6 show the percentage of these people, counted once each (arbitrarily, in the first year they were supported), who eventually applied for a grant or received a grant. M.D.s supported on training grants did not do so well, and corrective action was taken. Missing from these tallies, ironically, were individuals who worked at the NIH and did not have to apply for “extramural” funding. Now, there is finally a new database of intramural staff.
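A longitudinal tally of this kind can be sketched as below, assuming a hypothetical linked file with one record per person giving the degree, the first fiscal year of training, and flags for whether the person ever applied for or received a grant. The field names and sample values are illustrative only.

```python
# Hypothetical linked records, one per person.
people = [
    {"degree": "MD", "first_fy": 1975, "applied": True, "awarded": True},
    {"degree": "MD", "first_fy": 1975, "applied": True, "awarded": False},
    {"degree": "MD", "first_fy": 1975, "applied": False, "awarded": False},
    {"degree": "MD", "first_fy": 1975, "applied": False, "awarded": False},
    {"degree": "PhD", "first_fy": 1975, "applied": True, "awarded": True},
    {"degree": "PhD", "first_fy": 1975, "applied": True, "awarded": False},
]


def cohort_rates(records):
    """Application and award rates per (degree, first fiscal year) cohort."""
    tallies = {}
    for p in records:
        key = (p["degree"], p["first_fy"])
        t = tallies.setdefault(key, {"n": 0, "applied": 0, "awarded": 0})
        t["n"] += 1
        t["applied"] += int(p["applied"])
        t["awarded"] += int(p["awarded"])
    return {
        key: {
            "n": t["n"],
            "application_rate": t["applied"] / t["n"],
            "award_rate": t["awarded"] / t["n"],
        }
        for key, t in tallies.items()
    }


rates = cohort_rates(people)
```

Both rates use the whole cohort as the denominator, so the award rate here is the share of all trainees who eventually received a grant, not the success rate among applicants.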

There are many ways to count first-time grant applicants. In this case only first-time R01 grant applications were counted (see Figure 7). Sometimes the first K or R or U or M or P activity applications are counted. Once identified, these newest members of the grant applicant pool can be examined to observe the importance of NIH’s training programs in sustaining and regenerating the bioscience workforce, and whether this self-renewal is changing.

Retrospectively, new entrants to the pool of grant applicants can be viewed, and how many received NIH training support can be assessed (see Figure 8).
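The retrospective view runs in the opposite direction: start from first-time applicants and look back for training support. The sketch below assumes hypothetical application rows and a set of person identifiers found in the trainee file. For simplicity it does not check that the training preceded the application, which a real analysis would need to do.

```python
# Hypothetical application rows: (person_id, fiscal_year, activity)
applications = [
    ("P1", 1990, "R01"),
    ("P1", 1992, "R01"),
    ("P2", 1990, "R01"),
    ("P3", 1991, "K08"),
    ("P3", 1992, "R01"),
    ("P4", 1992, "R01"),
]

# Hypothetical set of people who appear in the trainee and fellow records.
trained = {"P1", "P3", "P4"}


def first_time_applicants(rows, activity="R01"):
    """Map each person to the fiscal year of the first application of this type."""
    first = {}
    for pid, fy, act in rows:
        if act == activity and (pid not in first or fy < first[pid]):
            first[pid] = fy
    return first


def share_with_training(first, trained_ids):
    """Fraction of each year's first-time applicants who had NIH training support."""
    by_year = {}
    for pid, fy in first.items():
        n, k = by_year.get(fy, (0, 0))
        by_year[fy] = (n + 1, k + (pid in trained_ids))
    return {fy: k / n for fy, (n, k) in by_year.items()}


first = first_time_applicants(applications)
shares = share_with_training(first, trained)
```

Changing the `activity` argument, or widening the test to any K, R, U, M, or P activity, gives the alternative definitions of a first-time applicant mentioned above.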

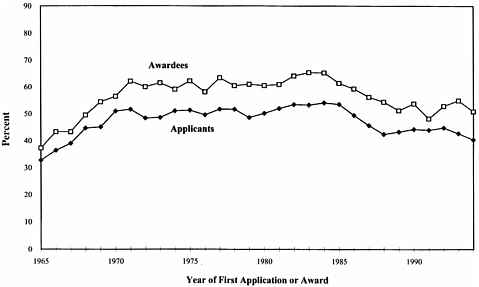

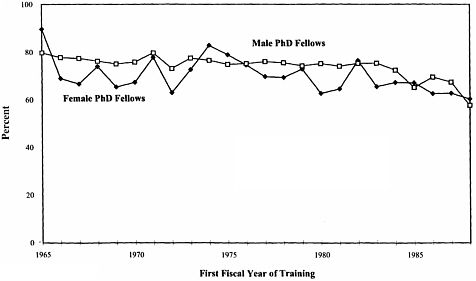

To the extent the gender and ethnicity data are complete and accurate, subpopulations of trainees can be examined to assess, for example, if women trainees have fared differently from men in being awarded research grants (see Figure 9).

FIGURE 9 Ph.D. postdoctoral fellows: KRUMP award rates by first fiscal year of training and gender, 1965 to 1988.

An analysis done in 1983 observed that the total number of months of training received by M.D.s correlated with their subsequent participation in research. This was not the case for Ph.D.s. This issue was discussed by the NIH Director’s Advisory Committee, and new directives were issued by Dr. James Wyngaarden to urge the selection of M.D. trainees who were willing to receive at least two years of training for research. Also, new physician-scientist award programs were subsequently introduced in the K series.

OTHER MEASURABLE OUTCOMES OF RESEARCH TRAINING AND COMPARISON GROUPS

Becoming an active member of the community of NIH grant-supported bioscience scholars is one countable career outcome, but it is not the only measurable, positive outcome. And sizable numbers of people make this achievement without the benefit of NIH-supported training. Additional measurements were taken in the more comprehensive evaluations of the impact of NIH predoctoral and postdoctoral programs, conducted in the 1980s by NAS and NIH (Coggeshall and Brown, 1984; Garrison and Brown, 1986) and by NIH in this decade with help from Pion (2001; Pion et al., in progress). These measures included time to complete training and earn the degree, pursuit of further (postdoctoral) training, working in a tenure-track position, ratings of the employing institution, application for NSF or other non-NIH grants, numbers of publications, and numbers of citations to published articles.

Furthermore, the achievements of NIH-supported trainees and fellows are compared with scholars who did not receive NIH training funds but who were (1) at the same departments/institutions, or (2) at departments/institutions that did not have NIH training grants. In such comparisons those anointed by NIH frequently performed somewhat better, as can be seen in Table 2.

TABLE 2 Overview of Early Career Outcomes and Group Comparisons in the Biomedical Sciences

MENTORED CAREER DEVELOPMENT AWARDS

Career development awards were tallied in the training column before the National Research Service Award (NRSA) authority was established in 1974, and they have been largely overlooked in subsequent assessments of NIH training needs and programs. Nevertheless, there were excellent evaluations of career-enhancing fellowships such as the Markle Scholars program (Strickland and Strickland, 1976), the Hartford fellowships (a companion report by Carter, Robyn, and Singer of RAND was released in 1983), and the NIH Research Career Development Award (RCDA) program (Carter et al., 1987).

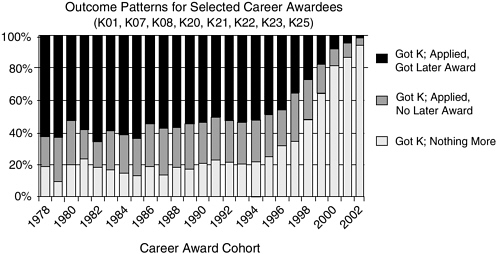

The goals of career development awards are mostly the same as for training, and the outcomes and trends can be measured, as shown in Figure 10. The apparent decrease in subsequent grant applications by recent cohorts is an artifact of the limited time those cohorts have had to apply. NIH is now in the planning stages of a comprehensive assessment of the multiple career development programs.

FIGURE 10 Mentored career development awards: outcome patterns.

NEED FOR DATA MAINTENANCE AND IMPROVEMENT

NIH has done much, and for years it has wanted to do more. Each new major report from NAS calls for more data, but there have been persistent difficulties, and some new difficulties have emerged as well. Knowledgeable staff retire; personnel have not been replaced; and new responsibilities are added. There are several additional needs and concerns. Some of these are:

- degree/data quality issues;
- cost-benefit comparisons of training/career activities;
- data capture improvement (e.g., “program” Ks and nonprincipal investigator personnel on research grants);
- SDR-like tracking for researcher M.D.s, R.N.s, and D.D.S.s, as well as for foreign-earned Ph.D.s;
- collaboration between NIH and outside organizations with similar career development evaluation interests (e.g., HHMI); and
- new data access/privacy issues.

NIH leadership is becoming aware of these issues and is likely to address them to improve evaluation of its research training portfolio.

REFERENCES

Carter, G. M., A. E. Robyn, and A. M. Singer. 1983. The Supply of Physician Researchers and Support for Research Training: Part I of an Evaluation of the Hartford Fellowship Program.

Carter, G. M., J. D. Winkler, and A. K. Biddle-Zehnder. 1987. An Evaluation of the NIH Research Career Development Award.

Coggeshall, P., and P. W. Brown. 1984. The Career Achievements of NIH Predoctoral Trainees and Fellows. Washington, D.C.: National Academy Press.

Garrison, H. H., and P. W. Brown, eds. 1986. The Career Achievements of NIH Postdoctoral Trainees and Fellows. Washington, D.C.: National Academy Press.

Pion, G. M. 2001. The Early Career Progress of NRSA Predoctoral Trainees and Fellows. Bethesda, Md.: National Institutes of Health.

Strickland, T. G., and S. P. Strickland. 1976. The Markle Scholars: A Brief History. New York: John & Mary R. Markle Foundation.