Using Computational Cognitive Models to Improve Human-Robot Interaction

ALAN C. SCHULTZ

Naval Research Laboratory

Washington, D.C.

We propose an approach for creating more cognitively capable robots that can interact more naturally with humans. Through analysis of human team behavior, we build computational cognitive models of particular high-level human skills that we have determined to be critical for good peer-to-peer collaboration and interaction. We then use these cognitive models as reasoning mechanisms on the robot, enabling the robot to make decisions that are conducive to good interaction with humans.

COGNITIVELY ENHANCED INTELLIGENT SYSTEMS

We hypothesize that adding computational cognitive reasoning components to intelligent systems such as robots will result in three benefits:

- Most, if not all, intelligent systems must interact with humans, who are the ultimate users of these systems. Giving the system cognitive models can improve the human-system interface by creating more common ground in the form of cognitively plausible representations and qualitative reasoning. For example, mobile robots generally represent motion and spatial references with rotational and translational matrices. Because this is not a natural mechanism for humans, however, additional computation is needed to translate between these matrices and the qualitative spatial reasoning used by humans. By using cognitive models, reasoning mechanisms, and representations, we believe we can achieve a more effective and efficient interface.

- Because the resulting system interacts with humans, giving it behaviors that are more natural and compatible with human behaviors can also result in more natural interactions between humans and intelligent systems. For example, mobile robots that must work collaboratively with humans can have less-than-effective interactions with them if their behaviors are alien or non-intuitive to humans. By incorporating cognitive models, we can develop systems whose behavior is more natural and easier for humans to anticipate, and which are therefore more compatible with human team members.

- One key area of interest is measuring the performance of intelligent systems. We propose that the performance of a cognitively enhanced intelligent system can be compared directly to human-level performance. In addition, if cognitive models of human performance were developed while creating an intelligent system, we can directly compare the system's behavior and task performance with those of the human subjects.
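To make the extra translation step in the first benefit concrete, here is a minimal sketch, assuming a planar robot pose (position plus heading angle) and two hypothetical helpers, `to_robot_frame` and `qualitative_direction`. A world-frame point is expressed in the robot's frame with the inverse rotation and translation, then binned into one of four qualitative terms. The 90-degree sectors centered on each axis are an illustrative choice, not taken from the original work.

```python
import math

def to_robot_frame(obj_xy, robot_xy, robot_heading):
    """Express a world-frame point in the robot's frame (x forward, y left).

    Applies the inverse of the robot's pose: translate by -robot_xy,
    then rotate by -robot_heading (radians).
    """
    dx = obj_xy[0] - robot_xy[0]
    dy = obj_xy[1] - robot_xy[1]
    c, s = math.cos(robot_heading), math.sin(robot_heading)
    # R(-theta) is the transpose of R(theta) = [[c, -s], [s, c]].
    return (c * dx + s * dy, -s * dx + c * dy)

def qualitative_direction(local_xy):
    """Map a robot-frame point to 'front', 'left', 'right', or 'behind'."""
    angle = math.degrees(math.atan2(local_xy[1], local_xy[0]))  # in (-180, 180]
    if -45 <= angle <= 45:
        return "front"
    if 45 < angle <= 135:
        return "left"
    if -135 <= angle < -45:
        return "right"
    return "behind"

if __name__ == "__main__":
    # Robot at the origin facing +y; an object at (-1, 0) lies to its left.
    local = to_robot_frame((-1.0, 0.0), (0.0, 0.0), math.pi / 2)
    print(local, qualitative_direction(local))
```

The matrix arithmetic is what the robot computes natively; only the final binning step produces something close to what a human would say.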

Hide and Seek

Our foray into this area began when we were developing computational cognitive models of how young children learn the game of hide and seek (Trafton et al., 2005b; Trafton and Schultz, 2006). The purpose was to create robots that could use human-level cognitive skills to make decisions about where to look for people or things hidden by people. The research resulted in a hybrid architecture with a reactive/probabilistic system for robot mobility (Schultz et al., 1999) and a high-level cognitive system based on ACT-R (Anderson and Lebiere, 1998) that made the high-level decisions for where to hide or seek (depending on which role the robot was playing). Not only was this work interesting in its own right, but it also led us to the realization that “perspective taking” is a critical cognitive ability for humans, particularly when they want to collaborate.
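The division of labor in the hybrid architecture (a high-level layer deciding *where* to go, a mobility layer handling *how* to get there) can be illustrated with a deliberately simplified sketch. This is not the ACT-R model from the cited work: the classes `Place`, `DecisionLayer`, and `MobilityLayer` are hypothetical, the qualitative feature labels are made up for illustration, and a running average of hide outcomes stands in for ACT-R's own learning mechanisms. The mobility layer is a stub in place of a real navigation system.

```python
from dataclasses import dataclass, field

@dataclass
class Place:
    """A candidate hiding place, described both qualitatively and metrically."""
    name: str
    feature: str   # hypothetical qualitative label, e.g. "behind", "under", "open"
    xy: tuple      # metric goal, used only by the mobility layer

@dataclass
class DecisionLayer:
    """Hypothetical high-level layer: picks where to hide from learned feature values."""
    values: dict = field(default_factory=dict)
    counts: dict = field(default_factory=dict)

    def choose(self, places):
        # Features never tried before get a neutral default value of 0.5.
        return max(places, key=lambda p: self.values.get(p.feature, 0.5))

    def learn(self, feature, success):
        # Running mean of outcomes per feature (1.0 = not found, 0.0 = found).
        n = self.counts.get(feature, 0) + 1
        old = self.values.get(feature, 0.5)
        self.values[feature] = old + (float(success) - old) / n
        self.counts[feature] = n

class MobilityLayer:
    """Stub for the reactive/probabilistic navigation layer."""
    def go_to(self, xy):
        # A real robot would plan a path and localize here; the stub only reports the goal.
        return f"navigating to {xy}"

if __name__ == "__main__":
    places = [Place("couch", "behind", (2.0, 1.0)), Place("room center", "open", (0.0, 0.0))]
    brain = DecisionLayer()
    brain.learn("open", success=False)   # hid in the open and was found
    brain.learn("behind", success=True)  # hid behind the couch and was not found
    target = brain.choose(places)
    print(target.name, "->", MobilityLayer().go_to(target.xy))
```

Keeping qualitative features in the decision layer and coordinates in the mobility layer mirrors the split the paragraph describes, while leaving each layer replaceable.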

Spatial Perspective Taking

To determine how important perspective and frames of reference are in collaborative tasks in shared space (and also because we were working on a DARPA-funded project to move these capabilities to the NASA Robonaut), we analyzed a series of tapes of a ground controller and two astronauts undergoing training in the NASA Neutral Buoyancy Tank facility for an assembly task for Space Station Mission 9A. When we performed a protocol analysis of these tapes (approximately 4000 utterances) focusing on the use of spatial language and commands from one person to another, we found that the astronauts changed their frame of reference approximately every other utterance. As an example of how prevalent these changes in frame of reference are, consider the following utterance from ground control:

“… if you come straight down from where you are, uh, and uh, kind of peek down under the rail on the nadir side, by your right hand, almost straight nadir, you should see the…”

Here we see five changes in frame of reference in a single sentence! This rate of change is consistent with the results found by Franklin et al. (1992). In addition, we found that the astronauts had to adopt others’ perspectives, or force others to adopt their perspective, about 25 percent of the time (Trafton et al., 2005a). Obviously, the ability to handle changing frames of reference and to understand spatial perspective will be a critical skill for the NASA Robonaut and, we would argue, for any other robotic system that must communicate with people in spatial contexts (e.g., construction tasks or direction giving).
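The quoted instruction mixes frames: “by your right hand” is anchored to the addressee, while “nadir” points toward Earth regardless of who is speaking. A minimal sketch, assuming a z-up world frame, viewers described only by their heading (yaw) in degrees, and a hypothetical `resolve_direction` helper, shows why a robot must know whose frame a term is in before it can act on it. Object-centered frames such as “under the rail” are left out for brevity.

```python
import math

# Environment-fixed terms: the same world direction regardless of the viewer.
ABSOLUTE = {"nadir": (0.0, 0.0, -1.0), "zenith": (0.0, 0.0, 1.0)}
# Viewer-relative terms: degrees counterclockwise from the viewer's facing direction.
VIEWER_RELATIVE = {"front": 0.0, "left": 90.0, "behind": 180.0, "right": -90.0}

def resolve_direction(term, viewer_heading_deg):
    """World-frame unit vector for `term`, interpreted from a viewer with the given yaw."""
    if term in ABSOLUTE:
        return ABSOLUTE[term]
    angle = math.radians(viewer_heading_deg + VIEWER_RELATIVE[term])
    # Rounding removes floating-point noise such as cos(90 deg) = 6e-17.
    return (round(math.cos(angle), 9), round(math.sin(angle), 9), 0.0)

if __name__ == "__main__":
    robot_heading, astronaut_heading = 0.0, 180.0  # facing each other
    print("robot's right:    ", resolve_direction("right", robot_heading))
    print("astronaut's right:", resolve_direction("right", astronaut_heading))
    print("nadir (anyone):   ", resolve_direction("nadir", astronaut_heading))
```

When the two agents face each other, “right” resolves to opposite world directions depending on whose perspective is adopted, whereas “nadir” does not change.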

Models of Perspective Taking

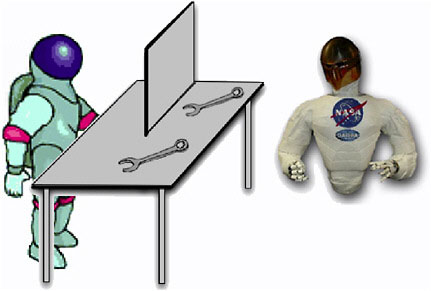

Imagine the following task, as illustrated in Figure 1. An astronaut and his robotic assistant are working together to assemble a structure in shared space. The human, who because of an occluded view can see only one wrench, says to the robot, “Pass me the wrench.” From the robot’s point of view, however, two

FIGURE 1 A scenario in which an astronaut asks a robot to “Pass me the wrench.”

wrenches are visible. What should the robot do? Evidence suggests that humans, in similar situations, will pass the wrench they know the other human can see because this is a jointly salient feature (Clark, 1996).
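The decision rule in the wrench scenario (pass the wrench the other person can see) can be approximated geometrically. This sketch is not the ACT-R/S or Polyscheme model: it assumes a 2-D world, circular obstacles, and straight-line visibility, and the functions `sight_blocked`, `visible_from`, and `choose_referent` are hypothetical. The robot computes what each agent can see and, if exactly one wrench is visible to both, treats it as the referent; otherwise it returns `None` to signal that it should ask for clarification.

```python
import math

def sight_blocked(a, b, center, radius):
    """True if the straight line of sight from point a to point b passes through a circle."""
    ax, ay = a
    bx, by = b
    cx, cy = center
    dx, dy = bx - ax, by - ay
    length_sq = dx * dx + dy * dy
    if length_sq == 0:
        return math.hypot(cx - ax, cy - ay) < radius
    # Closest point on segment ab to the circle's center (projection clamped to [0, 1]).
    t = max(0.0, min(1.0, ((cx - ax) * dx + (cy - ay) * dy) / length_sq))
    px, py = ax + t * dx, ay + t * dy
    return math.hypot(cx - px, cy - py) < radius

def visible_from(viewer, objects, obstacles):
    """Names of objects with an unobstructed line of sight from `viewer`."""
    return [name for name, xy in objects.items()
            if not any(sight_blocked(viewer, xy, c, r) for c, r in obstacles)]

def choose_referent(robot, human, objects, obstacles):
    """Return the one object both agents can see, or None if the robot should ask."""
    robot_sees = set(visible_from(robot, objects, obstacles))
    shared = [n for n in visible_from(human, objects, obstacles) if n in robot_sees]
    return shared[0] if len(shared) == 1 else None

if __name__ == "__main__":
    wrenches = {"wrench_a": (2.0, 2.0), "wrench_b": (2.0, -2.0)}
    panel = [((1.0, -1.0), 0.5)]  # obstacle hiding wrench_b from the human
    print(choose_referent((4.0, 0.0), (0.0, 0.0), wrenches, panel))
```

Returning `None` when the shared set is empty or ambiguous keeps the robot from guessing in exactly the cases where a human partner would also ask.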

We developed two models of perspective taking that could handle this scenario in a general sense. In the first approach, we used the ACT-R/S system (Harrison and Schunn, 2002) to model perspective taking using a cognitively plausible spatial representation. In the second approach, we used Polyscheme (Cassimatis et al., 2004) to model the cognitive process of mental simulation; humans tend to simulate situations mentally to solve problems. Using these models, we demonstrated a robot that could solve problems similar to the wrench problem.

FUTURE WORK

We are now exploring other human cognitive skills that seem important for peer-to-peer collaborative tasks and that are appropriate for building computational cognitive models that can be added to our robots. One new skill we are considering is nonvisual, high-level focus of attention, which directs a person’s attention to the relevant parts of the environment or situation based on current conditions, the task, expectations, models of other agents in the environment, and other factors. Another skill we are considering is anticipation and its role in human interaction and decision making.

CONCLUSION

Clearly, for humans to work as peers with robots in shared space, the robot must be able to understand the natural human tendency to use different frames of reference and must be able to take the human’s perspective. To create robots with these capabilities, we propose using computational cognitive models, rather than more traditional programming paradigms for robots, for the following reasons. First, a natural and intuitive interaction reduces cognitive load. Second, more predictable behavior engenders trust. Finally, more understandable decisions by the robot enable the human to recognize and more quickly repair mistakes. We believe that computational cognitive models will give our robots the cognitive skills they need to interact more naturally with humans, particularly in peer-to-peer relationships.

REFERENCES

Anderson, J.R., and C. Lebiere. 1998. Atomic Components of Thought. Mahwah, N.J.: Lawrence Erlbaum Associates.

Cassimatis, N., J.G. Trafton, M. Bugajska, and A.C. Schultz. 2004. Integrating cognition, perception, and action through mental simulation in robots. Robotics and Autonomous Systems 49(1–2): 13–23.

Clark, H.H. 1996. Using Language. New York: Cambridge University Press.

Franklin, N., B. Tversky, and V. Coon. 1992. Switching points of view in spatial mental models. Memory & Cognition 20(5): 507–518.

Harrison, A.M., and C.D. Schunn. 2002. ACT-R/S: A Computational and Neurologically Inspired Model of Spatial Reasoning. P. 1008 in Proceedings of the 24th Annual Meeting of the Cognitive Science Society, edited by W.D. Gray and C.D. Schunn. Fairfax, Va.: Lawrence Erlbaum Associates.

Schultz, A., W. Adams, and B. Yamauchi. 1999. Integrating exploration, localization, navigation and planning through a common representation. Autonomous Robots 6(3): 293–308.

Trafton, J.G., and A.C. Schultz. 2006. Children and Robots Learning to Play Hide and Seek. Pp. 242–249 in Proceedings of ACM Conference on Human-Robot Interaction, March 2006. Salt Lake City, Utah: Association for Computing Machinery.

Trafton, J.G., N.L. Cassimatis, D.P. Brock, M.D. Bugajska, F.E. Mintz, and A.C. Schultz. 2005a. Enabling effective human-robot interaction using perspective-taking in robots. IEEE Transactions on Systems, Man, and Cybernetics, Part A: Systems and Humans 35(4): 460–470.

Trafton, J.G., A.C. Schultz, N.L. Cassimatis, L. Hiatt, D. Perzanowski, D.P. Brock, M. Bugajska, and W. Adams. 2005b. Communicating and Collaborating with Robotic Agents. Pp. 252–278 in Cognitive and Multiagent Interaction: From Cognitive Modeling to Social Simulation, edited by R. Sun. Mahwah, N.J.: Lawrence Erlbaum Associates.