8

Methods for Evaluation

This chapter presents two classes of methods for evaluating human performance and the interaction between humans and systems. The first class of methods, risk analysis, discusses the approaches to identifying and addressing business risks and safety and survivability risks. The second class of methods, usability evaluation, describes the range of experimental and observational approaches used to determine the usability of system features in all stages of the system development life cycle. Figure 8-1 provides an overview. This figure lists the foundational methods (e.g., surveys, interviews, experiment design) noted in the Introduction to Part II because they play a central role in evaluation.

RISK ANALYSIS

Overview

This section describes some commonly used tools for risk management, including failure modes and effects analysis (FMEA) and fault tree analysis (FTA). These tools are flexible and can be used to assess, manage, and mitigate

- business risk due to faults in the development process, including failed steps to consider human-system integration (HSI).
- failed usability outcomes (e.g., failure to meet customer usability objectives, which results in product failure in the marketplace).
- use-error faults that result in harm to product users (e.g., medical devices, failed mission objectives, such as failure to destroy enemy targets in a military system).

FIGURE 8-1 Representative methods and sample shared representations for evaluation.

The emphasis is on use of these tools to evaluate and control negative outcomes related to use error or errors resulting from defects in the user interface element of human-system integration. By simple extensions, they can also be used to evaluate and control business risk related to the development cycle. Most of the following text is focused on use errors, but we make the case for the relative ease of using the philosophy behind these tools for many other purposes, including assessing and controlling business risk. In the military, the analysis of use error is especially relevant to the HSI domains of human factors, safety and occupational health, and survivability.

As noted, these tools and related methods are frequently applied to understanding use errors made with medical and other commercial devices.

Use errors are defined as predictable patterns of human errors that can be attributable to inadequate or improper design. Use errors can be predicted through analytical task walkthrough techniques and via empirically based usability testing. Here we explain and discuss the special methodology of use-error focused risk analysis and some of its history. Examples are presented that illustrate the methods of use-error risk analysis such as FTA and FMEA and some pitfalls to be avoided. These methods are widely used in safety engineering. The concepts are illustrated with a medical device case study using an automatic external defibrillator and a business risk example.

Risk-Management Techniques

Risk analysis in the context of use errors in products and processes has received increasing attention in recent years, particularly for medical devices. These techniques have been used for decades to assess the effect of human behavior on critical systems, such as in aerospace, defense systems, and nuclear power applications. Use errors are defined as a pattern of predictable human errors that can be attributable to inadequate or improper design. Use error can also produce faults that create failures for many types of systems and products, including

- E-commerce web sites: the user fails to complete the checkout process and revenue from orders is lost.
- Weapons systems: the user fails to arm and deploy the weapons system and a critical enemy target survives and goes on to destroy combat systems and personnel.
- Energy systems: the operator fails to detect and isolate a component failure and the entire energy plant fails.
- Transportation system: the driver fails to avoid another approaching vehicle and all occupants of both vehicles are killed in the subsequent collision.

Defining Use Error

Use error is characterized by a repetitive pattern of failure, which indicates that a failure mode is likely to occur with use and that its occurrence can therefore reasonably be predicted. Use error can be addressed and minimized by the device designer and proactively identified through the use of such techniques as usability testing and hazard analysis. An important point is that, in the area of medical products, regulatory and standards bodies make a clear distinction between the common terms “human error” and “user error” and the term “use error.” The term “use error” attempts to remove the blame from the user and open up the analyst to consider other causes, including the following:

- Poor user interface design (e.g., poor usability).
- Organizational elements (e.g., inadequate training or support structure).
- Use environment not properly anticipated in the design.
- Not understanding the user’s tasks and task flow.
- Not understanding the user profile in terms of individual differences in training, experience, task performance, incentives, and motivation.

ANALYSIS OF HUMAN ERROR

The analysis of human error has played a central role in risk analysis since the 1950s: initially in nuclear weapons assembly, then in the nuclear power industry and in industry more generally, particularly after the Three Mile Island accident in 1979. Although risk analysis in this chapter focuses on safety-critical systems, the risk of human error is relevant to human-system integration more generally because errors can also result in inefficiencies, excessive cost of operations, and wasted resources.

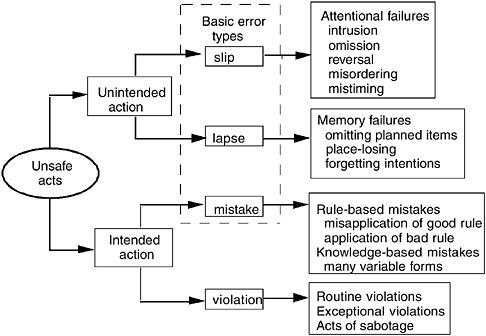

Reason (1990) provides a comprehensive classification of errors as shown in Figure 8-2. This classification makes clear that even though every error is identified by an action, the source of the error can be a much wider set of alternative failures. The category of knowledge-based mistakes can be expanded to include the many additional psychological sources of mistakes, including the following:

- Situation awareness.
- Decision making.
- Estimation.
- Computation.

Embry (1987) summarizes approaches to human reliability assessment, that is, assessment of the risk of human error. The oldest and most well-known technique is the technique for human error rate prediction (THERP) (Swain, 1963; Swain and Guttman, 1983). This approach is based on probabilistic risk analysis and fault tree task decomposition methods, and it has been applied extensively in nuclear power plant design and procedure assessment. The techniques described in this chapter are the basic building blocks of quantitative methods, such as THERP; the degree to which complex models involving estimates of error probability are necessary depends largely on the application and extent to which quantification is necessary. However, basic error risk analysis as described in this chapter is relatively straightforward and is warranted in virtually all HSI applications.

FIGURE 8-2 Reason’s error classification. SOURCE: Reason (1990). Reprinted with the permission of Cambridge University Press.

Identification of Hazards and When Risk Management Is Conducted

An important first step in risk management is to understand and catalogue the hazards and possible resulting harms that might be caused by a product or system. This step is sometimes called hazard analysis, although others use that term more generally as a synonym for risk management. Hazard analysis is often accomplished as an iterative process, with a first draft being updated and expanded as additional risk management methods (e.g., FMEA, FTA) are used. Medical experts and those in quality control and product development, among other commercial product disciplines, can brainstorm on harms and hazards. Technically, hazards are the potential for harms. Harms are defined as physical injury or damage to the health of people or damage to property or the environment. Box 8-1 shows examples of harms from hazards for a penlike automatic needle injector device and shows similar harms and hazards from an automatic external defibrillator. Box 8-2 extends the notion of harm to negative business outcomes resulting from HSI faults.

BOX 8-1 Possible Harms and Hazards from the Use of Medical Equipment (Use of an Automatic Needle Injection Device; Use of an Automatic External Defibrillator)

Below we describe the two most commonly used tools in use-error risk analysis, FMEA and FTA. These tools can also be used to assess and control business risk. The shared representations typically resulting from these methods are reports containing graphical portrayals of the fault trees or tabular descriptions of the failure modes. The FTA representations show cumulative probabilities of logically combined fault events demonstrating the overall risk levels. The tabular shared representations documented with FMEA tables show calculated risk levels associated with different business or operational hazard outcomes.

BOX 8-2 Negative Business Outcomes Resulting from HSI Faults

Shared Representations

FMEA

The recommended steps for conducting a use-error risk analysis are the same as for traditional risk analysis with one significant addition, namely the need to perform a task analysis. Possible use errors are then deduced from the tasks (Israelski and Muto, 2006). Each of the use errors or faults is rated in terms of the severity of its effects and the probability of its occurrence. A risk index is calculated by combining these two elements and can then be used for risk prioritization. For each of the high-priority items, modes (or methods) of control are assumed for the system or subsystem and reassessed in terms of risk. The process is iterated until all higher level risks are eliminated and any residual risk is as low as reasonably practicable (sometimes referred to as ALARP).

Among the most widely used of the risk analysis tools are FMEA and its close relative, failure modes, effects, and criticality analysis (FMECA).1

FMEA is a design evaluation technique used to define, identify, and eliminate known or potential failures, problems, and errors from the system. The basic approach of FMEA from an engineering perspective is to answer the question: If a system component fails, what is the effect on system performance or safety? Similarly, from a human factors perspective, FMEA addresses the question, “If a user commits an error, what is the effect on system performance from a safety or financial perspective?” A human factors risk analysis has several components that help define and prioritize such faults: (1) the identified fault or use error, (2) occurrence (frequency of failure), (3) severity (seriousness of the hazard and harm resulting from the failure), (4) selection of controls to mitigate the failure before it has an adverse effect, and (5) an assessment of the risk after controls are applied.

A use-error risk analysis is not substantially different from a conventional design FMEA. The main difference is that, rather than focusing on component or system-level faults, it focuses on user actions that deviate from expected or ideal user performance. For business risk, the development faults would include the items shown in Box 8-2. Table 8-1 summarizes the steps in performing FMEA.
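The rating and prioritization steps can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the 1-5 rating scales, the priority threshold of 10, the `UseError` and `prioritize` names, and the example worksheet rows are all hypothetical and are not taken from any standard or from the case study.

```python
from dataclasses import dataclass


@dataclass
class UseError:
    """One row of a hypothetical use-error FMEA worksheet."""
    task: str
    description: str
    severity: int    # assumed scale: 1 (negligible) to 5 (catastrophic)
    occurrence: int  # assumed scale: 1 (remote) to 5 (frequent)

    @property
    def risk_index(self) -> int:
        # Numeric risk index: severity rating times occurrence rating.
        return self.severity * self.occurrence


def prioritize(rows, threshold=10):
    """Return rows at or above the (assumed) threshold, highest risk first."""
    high = [r for r in rows if r.risk_index >= threshold]
    return sorted(high, key=lambda r: r.risk_index, reverse=True)


# Illustrative entries only; the ratings are invented for the example.
worksheet = [
    UseError("Attach pads", "Pads placed in wrong position", 4, 3),
    UseError("Power on", "User cannot find power button", 5, 2),
    UseError("Read prompt", "User ignores low-priority voice prompt", 2, 2),
]

for row in prioritize(worksheet):
    print(f"{row.risk_index:>2}  {row.task}: {row.description}")
```

After modes of control are selected, the same rows could be re-rated and the index recomputed to document residual risk.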

FTA and Other Technique Variations

Other commonly used tools for analyzing and predicting failure and consequences are fault tree and event tree analysis. FTA is a top-down deductive method used to determine overall system reliability and safety (Stamatis, 1995). A fault tree, depicted graphically, starts with a single undesired event (failure) at the top of an inverted tree, and the branches show the faults that can lead to the undesired event—the root causes are shown at the bottom of the tree. For human factors and safety applications, FTA can be a useful tool for visualizing the effects of human error combined with device faults or normal conditions on the overall system. Furthermore, by assigning probability estimates to the faults, combinatorial probabilistic rules can be used to calculate an estimated probability of the top-level event or hazard.
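The combinatorial rules for the two most common gates can be sketched as follows, assuming that the input events are statistically independent (an assumption that often does not hold for human actions). The event names and probability values are hypothetical and chosen only for illustration.

```python
from math import prod


def and_gate(probabilities):
    """All inputs must occur: multiply the (assumed independent) probabilities."""
    return prod(probabilities)


def or_gate(probabilities):
    """At least one input occurs: 1 minus the chance that none occur."""
    return 1.0 - prod(1.0 - p for p in probabilities)


# Hypothetical basic-event probabilities, invented for the example.
p_user_skips_check = 0.05
p_alarm_fails = 0.01
p_battery_depleted = 0.002

# The skipped check only leads to harm if the alarm also fails (AND gate).
p_undetected_fault = and_gate([p_user_skips_check, p_alarm_fails])

# The top-level event occurs if either branch occurs (OR gate).
p_top = or_gate([p_undetected_fault, p_battery_depleted])

print(f"undetected fault: {p_undetected_fault:.6f}")
print(f"top-level event:  {p_top:.6f}")
```

Probabilities propagate upward from the basic events through each gate, so a change to one estimate at the bottom of the tree changes the estimate for the top-level event.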

An event tree is a visual representation of all the events that can occur in a system. As the number of events increases, the picture fans out like the branches of a tree. Event trees can be used to analyze systems that involve sequential operational logic and switching. Whereas fault trees trace the precursors or root causes of events, event trees trace the alternative consequences of events. The starting point (referred to as the initiating event) disrupts normal system operation. The event tree displays the sequences of events involving success and/or failure of the system components. In human factors analysis the events that are traced are the contingent sequences of human operator actions (Swain and Guttman, 1983).
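A simplified event tree can be enumerated in code. The sketch below assumes that each operator action either succeeds or fails, that the steps are independent, and that every branch is followed to the end; real event trees usually prune branches once a failure makes later steps irrelevant. The step names and success probabilities are hypothetical.

```python
from itertools import product

# Hypothetical operator actions after an initiating event, each with an
# assumed probability of success; values are invented for the example.
steps = [
    ("detects alarm", 0.95),
    ("diagnoses fault", 0.90),
    ("isolates component", 0.98),
]


def event_tree(steps):
    """Yield (outcomes, probability) for every success/failure sequence."""
    for outcomes in product([True, False], repeat=len(steps)):
        probability = 1.0
        for (_, p_success), ok in zip(steps, outcomes):
            probability *= p_success if ok else (1.0 - p_success)
        yield outcomes, probability


branches = list(event_tree(steps))
for outcomes, probability in branches:
    label = ", ".join(
        f"{name}: {'ok' if ok else 'FAIL'}"
        for (name, _), ok in zip(steps, outcomes)
    )
    print(f"{probability:.5f}  {label}")
```

Because the branches are mutually exclusive and exhaustive, their probabilities sum to 1, which is a useful check on a hand-built tree.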

TABLE 8-1 Steps in Performing FMEA

| Steps | Description |
|---|---|
| 1 | The most effective risk analyses are performed by a team of stakeholders. |
| 2 | A task analysis is a detailed sequential description (in graphic, tabular, or narrative form) of tasks performed while operating a device or system. The analysis should cover the major task flows performed by users. |
| 3 | There are a variety of FMEA worksheets for documenting use errors. Computer spreadsheets can be useful. |
| 4 | Brainstorming involves identifying possible operator errors and actions that deviate from the expected or optimal behavior for each task identified in the task analysis (step 2 above). |
| 5 | The team identifies potential harms associated with each failure mode. This step is important for subsequent determination of risk ratings. |
| 6 | A severity rating determines the seriousness of the effects of a given fault if it occurs. Severity can be assigned a numeric value or a qualitative descriptive rating. |
| 7 | Occurrence ratings are estimates of the predicted frequency or likelihood of the occurrence of a fault. These ratings should be based on existing data such as customer complaints or usability test results. |
| 8 | A numeric risk index is calculated by multiplying the severity rating by the occurrence rating. A qualitative risk rating requires the development of criteria establishing risk levels based on combinations of severity and occurrence ratings. |
| 9 | Risks are prioritized to determine how, when, and whether identified failure modes should be addressed. |
| 10 | Organized problem-solving approaches are used by the team to select modes of control for each high-priority failure. The most desirable mode of control is design; training and warnings may also be considered. |
| 11 | Effectiveness ratings are assigned to each mode of control selected/identified in step 10 above. Depending on the stage in the development life cycle, these ratings may be based on either formative or summative evaluation data. |
| 12 | When modes of control are in place, numerical or qualitative risk indices are revised or recomputed. |

As with FMEAs, fault trees and event trees can be developed by teams or by individuals with team review. For more information, refer to the literature in reliability engineering or systems safety engineering (e.g., Nuclear Regulatory Commission, 1981).

In recent years, graphical software programs have become available for personal computers that enable users to rapidly assemble fault trees by “dragging and dropping” standard logic symbols onto a drawing area, with connections made (and maintained) automatically. These tools automatically calculate branch and top-level probabilities based on the estimated event probabilities entered. Such tools make FTAs much more accessible and much less labor intensive. Figure 8-3 is an example of a fault tree diagram for an automatic external defibrillator. Table 8-2 shows a summary of the steps in creating an FTA.

Contributions to System Design Phases

Use-error-focused risk analyses, including FMEA and FTA, are particular methods in the user-centered or human factors design process. They are the analytical complement to empirical usability assessment, commonly called usability testing. Risk analysis, evaluation, and control start early in any development process (e.g., the incremental commitment model development process) and are iterated and reassessed as the development process progresses and the system design matures. For human-system integration, risk is assessed for usability, systems safety, and survivability issues. There are opportunities to develop single analyses that would serve all of these purposes, with the proper coordination across these development teams.

Strengths, Limitations, and Gaps

These risk-management techniques can be powerful analytic tools. Tables 8-3 and 8-4 list advantages and disadvantages of both FMEAs and FTAs.

The limitations for FTAs and FMEAs are similar:

- Achieving group consensus is difficult. Research is needed on more effective and reliable techniques, such as modifications of the Delphi group decision-making technique. Groups can be dominated by individuals based on their rank, power, or personality, and the final ratings may not really reflect the group opinion.
- Estimating likelihood of occurrence is very unreliable. Better probability modeling is needed.
- Better tools are needed to integrate FTAs and FMEAs to make them easier to modify and apply.

TABLE 8-2 Steps in Performing an FTA

| Steps | Description |
|---|---|
| 1 | The team will brainstorm to identify the top-level hazards (undesired events) to be addressed. A fault tree will be developed for each of these hazards. |
| 2 | Identify faults and other events (including normal events) that could result in the top-level undesired event. These can be documented in a list or on notes posted on a wall. |
| 3 | These include events that may lead directly to harm (single-point failure) or cause another fault without other events occurring; events that must happen in conjunction with other events to cause failure; and events that must happen in sequence to cause a failure. |
| 4 | A fault tree is constructed using symbols that represent individual events and the most significant logic symbols, the “OR gate” and the “AND gate.” |
| 5 | If the fault tree does not sufficiently characterize the system and human interactions, the team will assign probabilities to each of the events based on quantitative data or estimates based on expert judgment. |
| 6 | Fault tree probabilities propagate upward from the individual events. The probability of the individual gates and the overall fault tree probability are computed by using numerical combinatorial rules for various logic gates. |

TABLE 8-3 Advantages and Disadvantages of FMEA

| Advantages | Disadvantages |
|---|---|
| Risk index/RPN enables prioritization of faults. | Difficult to assess combinations of events and complex interactions (unless explicitly documented). |
| Explicitly documents modes of control/mitigation. | Large documents can be difficult to manage: minimize inconsistencies and redundant items. |
| Format useful for tracking action items. | Severity and occurrence ratings are often difficult for individuals or teams to estimate. Much time can be spent in discussions. |
| Easily constructed using handwritten spreadsheets or computer-based software tools: spreadsheets, word processing tables, or specialized FMEA tools. | Sometimes can be overly conservative. With each fault isolated, failure to consider combinatorial events (as do fault trees) may lead to the false conclusion that every item requires explicit mitigation. |

TABLE 8-4 Advantages and Disadvantages of FTA

| Advantages | Disadvantages |
|---|---|
| Graphical format enables visualization of combination of events. | Drawings can become large and unwieldy in complex systems. |
| Enables estimation of overall probability of failure based on estimates of root causes. | Modes of control are not always explicit. |
| Small fault trees can be developed using common flowchart drawing tools. | Requires more training than FMEA. |
| | Special software required for rapid development of fault trees. |

USABILITY EVALUATION METHODS

Overview

This section explains how usability evaluation methods can contribute to systems development by providing feedback on usability problems and validating the usability of a system. These methods not only help improve the user interface, but also often provide insight into the extent to which the product will meet user requirements.

There are four broad approaches to ensuring the usability of a product or system:

- Evaluation of the user’s performance and satisfaction when using the product or system in a real or simulated working environment, also called evaluation of “quality in use” or “human in the loop.”
- Evaluation of the characteristics of the interactive system, tasks, users, and the working environment to identify any obstacles to usability.
- Evaluation of the process used for systems development to assess whether appropriate HSI methods and techniques were used.
- Evaluation of the capability of an organization to routinely employ appropriate HSI methods and techniques.

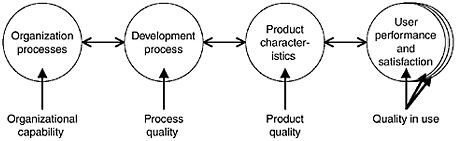

Thus, an organization needs the capability to apply human-system integration in the development process in order to be able to design a product or interactive system with characteristics that will enable adequate user performance and satisfaction in the intended contexts of use (Figure 8-4).

Evaluation of the usability of a product provides feedback on the extent to which a design meets user needs, and thus it is central to a user-centered design process. Feedback from usability evaluation is particularly important because developers seldom have an intimate understanding of the user’s perspective and work practices. In the collective experience of the committee, initial designs therefore very rarely fully meet user requirements. The cost of rectifying any divergence between the design and user needs increases rapidly as development proceeds, which means that user feedback should be obtained as early as possible.

FIGURE 8-4 Approaches to ensuring usability. The quality in use is determined by the quality of the product, which is determined by the quality of the development process, which is determined by the organizational capability.

Without proper usability evaluation, a project runs a high risk of expensive rework to adapt a system to actual user needs or of potential rejection of the product or system. Usability evaluation can be used to assess the magnitude of these risks. For more information on evaluating the development process and organizational capability, see Earthy, Sherwood Jones, and Bevan (2001).

Uses and Types of Methods

Usability is determined not only by the characteristics of the interactive product or system, but also by the whole context of use, including the nature of the users, tasks, and operational environment (see Chapter 6). In the broadest context, usability is concerned with optimizing all the factors that determine effective interaction between users and systems in a working environment. But in some cases, the scope of the evaluation is more limited, for example to support the design of a particular interactive component in an otherwise predetermined system. The word “product” is used below to refer to the component or system being evaluated.

Evaluations of user behavior and product characteristics are complementary. Although a user-based evaluation is the ultimate test of usability, it is not usually practical to evaluate all permutations of user type, task, and operational conditions. Evaluation of the characteristics of the product or interactive system can anticipate and explain potential usability problems, and it can be carried out before there is a working system. However, evaluation of detailed characteristics alone can never be sufficient, as this does not provide enough information to accurately predict the eventual user behavior.

Uses of Methods: Formative and Summative Evaluation

The most common type of usability evaluation is formative: to improve a product by identifying and fixing usability problems. Formative evaluation of early mock-ups can also be used to obtain a better understanding of user needs and to refine requirements. An iterative process of repeated formative evaluation of prototypes can be used to monitor how closely the prototype designs match user needs. The feedback can be used to improve the design for further testing. Early formative evaluation reduces the risk of expensive rework. Formative evaluation is most effective when it involves a combination of expert and user-based methods.

Some examples of prototypes that can be evaluated are

- Paper-based, low-fidelity simulations for exploratory testing.
- Computer simulations (typically screen-based, e.g., Flash™, Macromedia Director™, Visual Basic™, Java, HTML). These can simulate the user interface while sacrificing full fidelity.
- Working early prototypes of the actual product.

Prototypes are discussed in more detail in Chapter 7.

In a more mature design process, formative evaluation should be complemented by establishing usability requirements (see Chapter 7) and testing whether these have been achieved by using a more formal summative evaluation process. Summative testing reduces the risk of delivering a product that fails as a result of poor user performance. Usability has also been incorporated into six sigma quality methods (Sauro and Kindlund, 2005).

Summative usability testing of an existing system can be used to provide baseline measures that can form the basis for usability requirements (i.e., objectives for human performance and user satisfaction ratings) for the next modification or release. A Common Industry Specification for Usability Requirements (Theofanos, 2006) has been developed to support iterative development and the sharing of such requirements.

Summative tests at the end of development should have formal acceptance criteria derived from the usability requirements. Summative methods can also be elaborated to identify usability problems, but if prior iterative rounds of formative usability testing are performed, then typically there will be few usability surprises uncovered during this late-stage testing (Theofanos, 2006).

Types of Methods

The remainder of this section describes methods in the following categories:

User Behavior Evaluation Methods

- Methods based on observing users of a real or simulated system.
- Methods that collect data from usage of an existing system.
- Methods based on models and simulation.

Product Usability Characteristics Evaluation Methods

- Methods based on expert assessment of the characteristics of a system.
- Automated methods based on rules and guidelines.

All the methods can provide formative information about usability problems. The first two types of user behavior methods can also provide summative data. Other more informal techniques (such as a focus group) often do not provide reliable information for evaluation.

Methods based on observing users of a real or simulated system. Methods in this category are used

- at all stages of development if possible.
- to provide evidence for management.
- in observing user trials, as a good way of providing incontrovertible evidence to developers.

In these user-based methods, users step through the design attempting to complete a task with the minimum of assistance. There are different types of user-based methods adapted specifically for formative testing or to also provide summative data (see Table 8-5).

- Formative methods focus on understanding the user’s behavior, intentions, and expectations and typically employ a think-aloud protocol.
- Summative methods measure the quality in use of a product and can be used to establish and test usability requirements. Summative usability testing, normally based on the principles of ISO 9241-11 (International Organization for Standardization, 1998), obtains quality in use measures for

TABLE 8-5 Types of User-Based Evaluation Method

| Type | Description | When in Design Cycle | Typical Sample Size (per group) | Considerations |
|---|---|---|---|---|
| Formative Usability Testing | | | | |
| Exploratory | High-level test of users performing tasks | Conceptual design | 5-8 | Simulate early concepts, for example, with very low-fidelity paper prototypes or foam core models. |
| Diagnostic | Give representative users real tasks to perform | Iterative throughout the design cycle | 5-8 | Early designs or computer simulations. Used to identify usability problems. |
| Comparison | Identify strengths and weaknesses of an existing design | Early in design | 5-8 | Can be combined with benchmarking. |
| Summative Usability Testing | | | | |
| Benchmarking/Competitive | Real users and real tasks are tested with existing design | Prior to design | 8-30 | To provide a basis for setting usability criteria. Can be combined with competitive comparison. |
| Validation | Real users and real tasks are tested with final design | End of design cycle | 8-30 | To validate the design by having usability objectives as acceptance criteria and should include any training and documentation. |

- Effectiveness: “the accuracy and completeness.” Error-free completion of tasks is important in both business and military applications.
- Efficiency: “the resources expended.” How quickly a user can perform work is critical for productivity.
- Satisfaction: “positive attitudes toward the use of the product.” Satisfaction is a success factor for any products with discretionary use and essential to maintain workforce motivation.

Each type of measure is usually regarded as an independent factor with a relative importance that depends on the context of use (e.g., efficiency may be paramount for employers, while satisfaction is essential for public users of a web site).

These measures can also be used to assess accessibility (the performance and satisfaction of users with disabilities), and learnability (e.g., the duration of a course or use of training materials), and the user performance and satisfaction expected immediately after training and after a designated length of use. In summative testing, the system to be evaluated may be a functioning prototype (e.g., in alpha or beta testing) or controlled trials of an existing system.
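One simple way to turn summative test sessions into the three quality in use measures is sketched below. The operationalization is an assumption made for illustration (completion rate for effectiveness, mean time on completed tasks for efficiency, mean questionnaire rating for satisfaction); ISO 9241-11 does not prescribe these particular formulas, and the session data and the 1-7 rating scale are invented.

```python
from statistics import mean

# Hypothetical summative test sessions: one record per participant.
# 'completed' = task finished without error; 'seconds' = time on task;
# 'rating' = satisfaction on an assumed 1-7 questionnaire scale.
sessions = [
    {"completed": True, "seconds": 95.0, "rating": 6},
    {"completed": True, "seconds": 120.0, "rating": 5},
    {"completed": False, "seconds": 240.0, "rating": 2},
    {"completed": True, "seconds": 85.0, "rating": 6},
]


def summarize(sessions):
    """Return simple effectiveness, efficiency, and satisfaction measures."""
    completed = [s for s in sessions if s["completed"]]
    return {
        # Effectiveness: share of participants completing the task.
        "effectiveness": len(completed) / len(sessions),
        # Efficiency: mean time spent by those who completed the task.
        "mean_time_s": mean(s["seconds"] for s in completed),
        # Satisfaction: mean questionnaire rating across all participants.
        "satisfaction": mean(s["rating"] for s in sessions),
    }


results = summarize(sessions)
print(results)
```

Results of this kind can be compared against acceptance criteria derived from the usability requirements. Note that this sketch raises an error if no participant completes the task, since the mean time is then undefined.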

Methods that collect data from usage of an existing system. This category of methods is used when planning to improve an existing system. They include

- Satisfaction surveys: Satisfaction questionnaires distributed to a sample of existing users can provide an economical way of obtaining feedback on the usability of an existing product or system.
- Web metrics: A web site can be instrumented to provide information on entrance and exit pages, frequency of particular paths through the site, and the extent to which search is successful. If combined with pop-up questions, the results can be related to particular user groups and tasks.
- Application instrumentation: Data points can be built into code that “count” when an event occurs (e.g., in Microsoft Office; Harris, 2005). This could be the frequency with which commands are used or the number of times a sequence results in a particular type of error. The data are sent anonymously to the development organization. This real-world data from large populations can help guide future design decisions.

For more information, see the section on event data analysis in Chapter 6.
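The idea of application instrumentation can be illustrated with a small counter. This is a hypothetical sketch, not a description of how any particular product collects data: the `UsageLog` class, its method names, and the command and error names are assumptions, and the step of transmitting the report to the development organization is omitted.

```python
from collections import Counter


class UsageLog:
    """Hypothetical in-application counter for commands and errors."""

    def __init__(self):
        self.commands = Counter()
        self.errors = Counter()

    def record_command(self, name):
        self.commands[name] += 1

    def record_error(self, command, error_type):
        # Count which command led to which type of error.
        self.errors[(command, error_type)] += 1

    def report(self):
        """Return aggregate counts only (no user identifiers are stored)."""
        return {
            "commands": dict(self.commands),
            "errors": {f"{c}:{e}": n for (c, e), n in self.errors.items()},
        }


log = UsageLog()
for name in ["paste", "save", "paste", "undo"]:
    log.record_command(name)
log.record_error("save", "disk_full")

print(log.commands.most_common(1))
print(log.report())
```

Keeping only aggregate counts, rather than per-user event streams, is one way to keep such data anonymous.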

Methods based on models and simulation. This category of methods is used when models can be constructed economically, particularly if user testing is not practical. Model-based evaluation methods can predict such measures as the time to complete a task or the difficulty of learning to use an interface. Some models have the potential advantage that they can be used without the need for any prototype. However, setting up a model usually requires a detailed task analysis, so model-based methods are most cost-effective in situations in which other methods are impracticable, or the information provided by the model is a cost-effective means of managing particular risks. See Chapter 7 for more information on modeling.

Methods based on expert assessment of the characteristics of a system. These methods are used for the following purposes and in the following situations:

-

To provide breadth that complements the depth of user-based testing.

-

When there are too many tasks to include all of them in a usability test.

-

Before user-based testing.

-

When it is not possible to obtain users.

-

When there is little time.

-

To train developers.

The several approaches to expert-based evaluation are discussed briefly in the paragraphs below:

Guidelines and style guides. Conformance to detailed user interface guidelines or style guides is an important prerequisite for usability, as it can impose consistency and conformance with good practice. However, because published sources typically contain several hundred guidelines (e.g., the ISO 9241 series), they are difficult to apply or assess unless they are simplified and customized to project needs.

Interfaces can be assessed for conformance with general guidelines, such as the usability heuristics recommended by Nielsen (Nielsen and Mack, 1994) and the ISO 9241-10 dialogue principles (International Organization for Standardization, 1996). Checking conformance with ISO 9241-10 forms part of the usability test procedure approved by DATech in Germany (Dzida, Geis, and Freitag, 2001).

Parts 12-17 of the ISO 9241 series of standards contain very detailed user interface guidelines. Although these are an excellent source of reference, they are very time-consuming to employ in testing. Further information on standards can be found in Bevan (2005).

Detailed guidelines for web design have proved more useful to both usability specialists and web designers. The most comprehensive, well-researched, and easy-to-use set has been produced by the U.S. Department of Health and Human Services (2006).

Following guidelines usually improves an interface, but guidelines are only generalizations, so in particular circumstances they may conflict or not apply (for example, because of the use of new features that the guideline did not anticipate).

Heuristic evaluation. Heuristic evaluation assesses whether each dialogue element follows established heuristics. Although heuristic evaluation (Nielsen and Mack, 1994) is a popular technique and research has shown that heuristics are a useful training aid (Cockton et al., 2003), using heuristics in the context of a task-based walkthrough is usually more effective.

Usability walkthrough. Usability walkthrough identifies usability problems while attempting to achieve tasks in the same way as a user, making use of the expert’s knowledge and experience with relevant usability research. A variation is pluralistic walkthrough, in which a group of users, developers, and human factors people step through a scenario, discussing each dialogue element.

Cognitive walkthrough. The term originally referred to a detailed process of analyzing the cognitive processes of a user carrying out a task, although it is now also sometimes used to refer to a usability walkthrough. The distinctions are summarized in Table 8-6.

Methods such as a usability walkthrough that employ task scenarios are generally the most cost-effective and can be combined with using heuristic principles or checking conformance to guidelines.

Expert evaluation is simpler and quicker to carry out than user-based evaluation and can, in principle, take account of a wider range of users and tasks than user-based evaluation, but it tends to emphasize more superficial problems (Jeffries and Desurvire, 1992) and may not scale well for complex interfaces (Slavkovic and Cross, 1999). To obtain results comparable to user-based evaluation, the assessment of several experts must be combined. The greater the difference between the knowledge and experience of the experts and the real users, the less reliable are the results.
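The point about combining the assessments of several experts can be sketched as follows. The expert names and problem descriptions are hypothetical, and the sketch assumes that matching problems have already been given identical labels, which in practice requires a manual merging step.

```python
from collections import Counter

# Hypothetical findings: the set of problems each expert reported.
expert_findings = {
    "expert_a": {"unclear error message", "hidden save button"},
    "expert_b": {"hidden save button", "inconsistent menu labels"},
    "expert_c": {"hidden save button", "unclear error message",
                 "small click targets"},
}

# Union of all findings: the combined problem list.
all_problems = set().union(*expert_findings.values())

# How many experts reported each problem.
found_by = Counter(p for findings in expert_findings.values() for p in findings)

# List problems, most widely reported first (ties broken alphabetically).
for problem, n in sorted(found_by.items(), key=lambda kv: (-kv[1], kv[0])):
    print(f"{n}/{len(expert_findings)} experts: {problem}")
```

In this invented data set no single expert reports all four problems, which is the reason for pooling the assessments.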

Automated methods based on rules and guidelines. This category of methods is used primarily for basic screening. Some automated tools (such as WebSAT, LIFT, and Bobby) test for conformance with some basic usability and accessibility rules. Although these tools are useful for screening for basic problems, they test only a very limited range of issues (Ivory and Hearst, 2001).

TABLE 8-6 Types of Expert-Based Evaluation Methods

| Guidelines | Task Scenarios: No | Task Scenarios: Yes |
|---|---|---|
| None | Expert review | Usability walkthrough; pluralistic walkthrough |
| General guidelines | Heuristic inspection | Heuristic walkthrough |
| Detailed usability guidelines | Guidelines inspection | Guidelines walkthrough |
| Information processing view | N/A | Cognitive walkthrough |

SOURCE: Adapted from Gray and Salzman (1998).

Shared Representations

All evaluations result in a list of usability problems, and these may be reported to the stakeholders in a written report, a presentation, or a video. While the people responsible for sponsoring the usability work may be quite receptive, those who have to act on the results may be less sympathetic. So it is good practice to praise the strengths of the system from a user perspective before listing the problems.

The list of problems can be categorized (typically by task or screen) and prioritized, either from a user perspective or by the estimated costs and benefits of fixing the problem. It may make sense to ignore very low-priority issues, although they are worth reporting if they are easy to fix.
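One way to carry out the categorization and prioritization described above is sketched below. The 1-to-5 `benefit` and `cost` scales, the thresholds, and the sample problems are all hypothetical; any real scheme would be agreed on with the project stakeholders.

```python
from dataclasses import dataclass


@dataclass
class Problem:
    description: str
    task: str      # category, here the task in which the problem occurs
    benefit: int   # hypothetical 1-5 estimate of user benefit from a fix
    cost: int      # hypothetical 1-5 estimate of effort to fix


problems = [
    Problem("Checkout button hidden below the fold", "checkout", 5, 2),
    Problem("Jargon in search filters", "search", 3, 1),
    Problem("Slightly misaligned footer", "browse", 1, 1),
    Problem("Rare locale date format issue", "checkout", 1, 4),
]


def report_worthy(p, low_benefit=1, easy_cost=1):
    # Report everything above the low-priority threshold, plus
    # low-priority problems that are easy to fix.
    return p.benefit > low_benefit or p.cost <= easy_cost


# Rank the reportable problems by estimated benefit per unit of cost.
ranked = sorted(
    (p for p in problems if report_worthy(p)),
    key=lambda p: p.benefit / p.cost,
    reverse=True,
)

for p in ranked:
    print(f"[{p.task}] {p.description} (benefit {p.benefit}, cost {p.cost})")
```

The low-priority footer problem stays in the report because it is cheap to fix, while the equally low-priority but expensive date format problem is left out.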

For maximum impact, stakeholders should be invited to view user-based evaluations. If this is not practical, edited videos of major issues have a much higher impact than other types of report or presentation.

If the evaluation results are being used to validate requirements, they will probably be incorporated into an existing quality control process.

Contributions to System Design Phases

Evaluation from a user perspective should be an integral part of systems development. In a risk-driven development process, the question is not whether to evaluate, but how to evaluate and how often. Early expert or user-based evaluation of mock-ups of new designs is essential to clarify requirements and to assess the viability of design concepts.

Evaluation of working prototypes can assess both their ease of use and whether they support the needs and tasks of real users. Late evaluation can validate whether a system has met the usability requirements.

Strengths, Limitations, and Gaps

There is a long history of research into the usefulness of different types of usability evaluation, resulting in broad agreement on the value and importance of including it in any system development for human-intensive systems.

While the costs and benefits of usability evaluation are well established (Bias and Mayhew, 2005), there is no way to be sure that all the important problems have been found. For some complex applications, such as an e-commerce web site, 15 or more users may be required to identify all the serious problems (Spool and Schroeder, 2001). In some situations, testing with this many participants or more will be cost-effective.
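The relationship between the number of test users and the share of problems found is often approximated with a simple discovery model, which is not taken from this chapter: if each user independently reveals a given problem with probability p, then n users are expected to reveal a proportion 1 - (1 - p)^n of the problems. The sketch below uses that assumption, and the values of p are purely illustrative.

```python
import math


def proportion_found(p, n):
    """Expected share of problems found by n users under the simple model."""
    return 1 - (1 - p) ** n


def users_needed(p, target):
    """Smallest n for which the expected share found reaches the target."""
    if not (0 < p < 1 and 0 < target < 1):
        raise ValueError("p and target must be strictly between 0 and 1")
    return math.ceil(math.log(1 - target) / math.log(1 - p))


# Illustrative per-user detection probabilities (assumptions, not data).
for p in (0.31, 0.10):
    n = users_needed(p, 0.80)
    print(f"p = {p:.2f}: {n} users for 80% "
          f"(expected {proportion_found(p, n):.0%} found)")
```

Under this model, a low per-user detection probability, as might be expected for a large and complex site, pushes the required number of users well beyond a handful. The model treats all problems as equally detectable, so it cannot guarantee that every serious problem has been found.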

There have been several reports of different teams identifying different usability issues for the same system (Molich et al., 2004; Molich and Dumas, 2006). Optimizing the evaluation procedures to obtain maximum value and consistency is still a research issue.

There may also be a temptation to apply the same evaluation procedure to every project, although the most effective approach will depend on a wide range of issues, including the availability and diversity of users, the range of tasks, and the potential risks of poor usability (see Chapter 4 and the first section of this chapter). An experienced usability practitioner will tailor the evaluation to the needs of the situation. Appropriate tools could be developed to support this process and would be of particular assistance to the less experienced practitioner.