5

Earthquake Physics and Fault-System Science

Earthquake research focuses on two primary problems. Basic earthquake science seeks to understand how earthquake complexity arises from the brittle response of the lithosphere to forces generated within the Earth’s interior. Applied earthquake science seeks to predict seismic hazards by forecasting earthquakes and their site-specific effects. Research on the first problem began with attempts to place earthquake occurrence in a global framework, and it contributed to the discovery of plate tectonics; research on the second was driven by the needs of earthquake engineering, and it led to the development of seismic hazard analysis. The historical separation between these two problems, reviewed in Chapter 2, has been narrowed by an increasing emphasis on dynamical explanations of earthquake phenomena. In this context, the term dynamics implies a consideration of the forces (stresses) within the Earth that act to cause fault ruptures and ground displacements during earthquakes. The stress fields responsible for deep-seated earthquake sources cannot be measured directly, but they can be inferred from models of earthquake systems that obey the laws of physics and conform to the relationships between stress and deformation (rheology) observed in the laboratory.

This chapter describes how this physics-based approach has transformed the field into an interdisciplinary, system-level science, one in which dynamical system models become the means to explain and integrate the discipline-based observations discussed in Chapter 4. The chapter begins with an essay on the central problems of dynamics and prediction, which is followed by five sections on areas of intense interdisciplinary research: fault systems, fault-zone processes, rupture dynamics, wave propagation, and seismic hazard analysis. Each of these sections summarizes the current understanding and articulates major goals and key questions for future research.

5.1 EARTHQUAKE DYNAMICS

For present purposes, the term “dynamical system” can be understood to mean any set of coupled objects that obeys Newton’s laws of motion—rocks or tectonic plates, for example (1). If one can specify the positions and velocities of each of these objects at any given time and also know exactly what forces act on them, then the state of the system can be determined at a future time, at least in principle. With the advent of large computers, the numerical simulation of system behavior has become an effective method for predicting the behavior of many natural systems, especially in the Earth’s fluid envelopes (e.g., weather, ocean currents, and long-term climate change) (2). However, many difficulties face the application of dynamical systems theory to the analysis of earthquake behavior in the solid Earth. Forces must be represented as tensor-valued stresses (3), and the response of rocks to imposed stresses can be highly nonlinear. The dynamics of the continental lithosphere involves not only the sudden fault slips that cause earthquakes, but also the folding of sedimentary layers near the surface and the ductile motions of the hotter rocks in the lower crust and upper mantle. Moreover, because earthquake source regions are inaccessible and opaque, the state of the lithosphere at seismogenic depths simply cannot be observed by any direct means, despite the conceptual and technological breakthroughs described in Chapter 4.

From a geologic perspective, it is entirely plausible that earthquake behavior should be contingent on a myriad of mechanical details, most unobservable, that might arise in different tectonic environments. Yet earthquakes around the world share common scaling relations, such as those noted by Gutenberg and Richter (Equation 2.5) and Omori (Equation 2.8). The intriguing similarities among the diverse regimes of active faulting make earthquake science an interesting testing ground for concepts emerging from the physics of complex dynamical systems. One consequence of recent interactions between these fields is that theoretical physicists have adopted a family of idealized models of earthquake faults as one of their favorite paradigms for a broad class of nonequilibrium phenomena (4). At the same time, earthquake scientists have become aware that earthquake faults may be intrinsically chaotic, geometrically fractal, and perhaps even self-organizing in some sense. As a result, an entirely new subdiscipline has emerged that is focused around the development and analysis of large-scale numerical simulations of deformation dynamics. Combined with insightful physical reasoning and intriguing new laboratory and field data, these investigations promise a better understanding of seismic complexity and predictability.

Complexity and the Search for Universality

Earthquakes are clearly complex in both the commonsense and the technical meanings of the word. At the largest scales, complexity is manifested by features such as the aperiodic intervals between ruptures, the power-law distribution of event frequency across a wide range of magnitudes, the variable patterns of slip for earthquakes occurring at different times on a single fault, and the richness of aftershock sequences. Individual events are also complex in the disordered propagation of their rupture fronts and the heterogeneous distributions of residual stress that they leave in their wake. At the smallest scales, earthquake initiation appears to be complex, with a slowly evolving nucleation zone preceding a rapid dynamic breakout that sometimes cascades into a big rupture. Among the many open issues in this field are the questions of whether these different kinds of complexity might be related to one another and, if so, how.

The most ambitious and optimistic reason for considering the ideas of dynamical systems theory is the hope that one might discover universal features of earthquake-like phenomena. Such features would, of course, be extremely interesting from a fundamental scientific point of view. They might also have great practical value, for example, as a basis for interpreting seismic records or for making long-term hazard assessments. Two thought-provoking, complementary concepts that look as if they might bring some element of universality to earthquake science are fractality and self-organized criticality. The first describes the geometry of fault systems; the second is an intrinsically dynamic hypothesis that pertains to the complex motions of these systems. Although each has provoked its own point of view among earthquake scientists—that seismic complexity is, on the one hand, primarily geometric in origin or, on the other hand, primarily dynamic—it seems likely that both concepts contain some elements of the truth and that neither is a complete description of the behavior of the Earth.

There is substantial evidence that fault geometry is fractal, at least in some cases and over some ranges of length scales. Fractality is a special kind of geometric complexity that is characterized by scale invariance (5). That is, images of the same system made with different magnifications are visually similar to one another; there is no intrinsic length scale such as a correlation length or a feature of recognizable size that would enable an observer to determine the magnification simply by looking at the image.

One result of such a property in the case of fault zones is that there would be a broad, power-law distribution of the lengths of the constituent fault segments (6). If, in the simplest conceivable scenario, the seismic moment of the characteristic earthquake on each segment were proportional to its length, and each segment slipped at random, then the moment distribution would also be a power law. This picture is too simplistic to be a plausible explanation of the Gutenberg-Richter relation, but it may contain some element of the truth.
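The simplest scenario described above can be checked numerically. What follows is a minimal sketch rather than a model from the literature: it assumes that segment lengths follow a pure power law with a hypothetical exponent of 1.5, that moment is strictly proportional to length through an arbitrary constant, and that a standard maximum-likelihood estimator is adequate for recovering the exponent. All function names and parameter values are illustrative.

```python
import math
import random


def sample_power_law(n, exponent, x_min, rng):
    """Draw n values with P(X > x) = (x / x_min) ** -exponent (inverse transform)."""
    # 1 - rng.random() lies in (0, 1], which avoids raising zero to a negative power.
    return [x_min * (1.0 - rng.random()) ** (-1.0 / exponent) for _ in range(n)]


def estimate_exponent(values, x_min):
    """Maximum-likelihood estimate of the power-law tail exponent."""
    return len(values) / sum(math.log(v / x_min) for v in values)


# Assumed, illustrative values (not taken from the text).
LENGTH_EXPONENT = 1.5      # hypothetical exponent of the segment-length distribution
MIN_LENGTH_KM = 1.0        # shortest segment considered
MOMENT_PER_KM = 1.0e16     # arbitrary proportionality constant, N*m per km

rng = random.Random(0)
lengths = sample_power_law(20000, LENGTH_EXPONENT, MIN_LENGTH_KM, rng)
moments = [MOMENT_PER_KM * length for length in lengths]

length_fit = estimate_exponent(lengths, MIN_LENGTH_KM)
moment_fit = estimate_exponent(moments, MOMENT_PER_KM * MIN_LENGTH_KM)
print(f"length exponent ~ {length_fit:.2f}, moment exponent ~ {moment_fit:.2f}")
```

Under these assumptions the moment distribution inherits the exponent of the length distribution exactly, which is all the simple argument claims. Any more realistic relation between segment length and moment would change the inherited exponent.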

Self-organized criticality refers to the conjecture that a large class of physical systems, when driven persistently away from mechanical equilibrium, will operate naturally near some threshold of instability, and will therefore exhibit something like thermodynamic critical fluctuations (7). Earthquake faults, or arrays of coupled faults, seem to be natural candidates for this kind of behavior; such systems are constantly being driven by tectonic forces toward slipping thresholds (8). If the thermodynamic analogy were valid, then the fluctuations—the slipping events—would be self-similar and scale invariant, and their sizes would obey power-law distributions. More important, systems with this self-organizing property would always be at or near their critical points. Critical behavior, with strong sensitivity to small perturbations and intrinsic unpredictability, would be a universal characteristic of such systems.

Elementary Models of Earthquake Dynamics

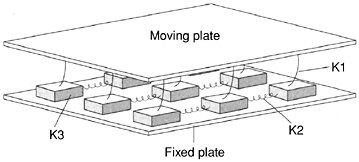

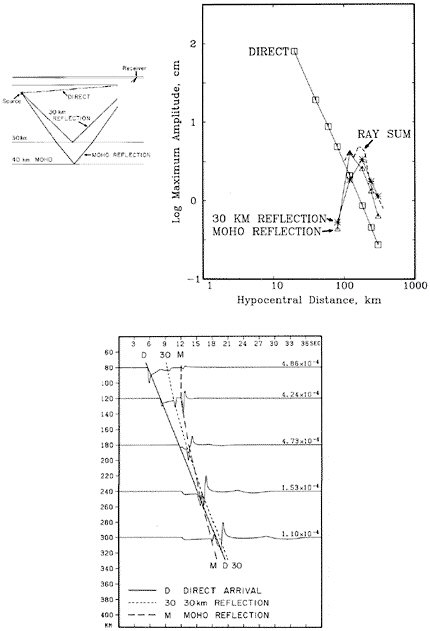

The ideas of fractality and dynamic self-organization have inspired a wide range of theoretical models of seismic systems. These models are almost invariably numerical: that is, they are studied primarily by means of large-scale computation. One class is cellular automata in which highly simplified rules for the behavior of large numbers of coupled components attempt to capture the essential features of complex seismic systems (9). Almost all cellular automata are related in some ways to the original one-dimensional slider-block model of Burridge and Knopoff (10), illustrated in Figure 5.1. Perhaps the most important result to emerge from such studies so far is the discovery that some of the simplest of these models, even the completely uniform Burridge-Knopoff model with a plausible, velocity-weakening dynamic friction law, are deterministically chaotic (11).
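To make the idea of a cellular automaton concrete, the sketch below implements a small stress-transfer automaton in the spirit of the Olami-Feder-Christensen model, a cellular simplification of the slider-block picture. It is not the Burridge-Knopoff model itself and includes no inertia or friction law. The grid size, the transfer fraction `alpha`, and the function name are assumptions chosen only for illustration.

```python
import random

THRESHOLD = 1.0   # failure stress of every cell (uniform fault, no built-in heterogeneity)
EPS = 1e-12       # tolerance guarding against floating-point round-off at the threshold


def run_automaton(size=16, alpha=0.2, n_events=500, seed=1):
    """Return (event_sizes, final_stress) for a small stress-transfer automaton."""
    rng = random.Random(seed)
    stress = [[rng.random() for _ in range(size)] for _ in range(size)]
    event_sizes = []
    for _ in range(n_events):
        # Slow uniform loading: raise every cell until the most stressed one fails.
        gap = THRESHOLD - max(max(row) for row in stress)
        for row in stress:
            for j in range(size):
                row[j] += gap
        stack = [(i, j) for i in range(size) for j in range(size)
                 if stress[i][j] >= THRESHOLD - EPS]
        failures = 0
        while stack:
            i, j = stack.pop()
            released = stress[i][j]
            if released < THRESHOLD - EPS:
                continue  # already relaxed by an earlier failure in this event
            stress[i][j] = 0.0
            failures += 1
            # Pass a fraction alpha of the released stress to each nearest neighbor;
            # stress sent past the grid edge is lost (open boundaries).
            for di, dj in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                ni, nj = i + di, j + dj
                if 0 <= ni < size and 0 <= nj < size:
                    stress[ni][nj] += alpha * released
                    if stress[ni][nj] >= THRESHOLD - EPS:
                        stack.append((ni, nj))
        event_sizes.append(failures)
    return event_sizes, stress


sizes, final_stress = run_automaton()
print("events:", len(sizes), "largest:", max(sizes), "mean:", sum(sizes) / len(sizes))
```

Every cell obeys the same rule and the loading is perfectly uniform, yet the event sizes vary widely from one event to the next. A histogram of `sizes` from a larger grid and a longer run is the usual way to look for power-law behavior in such models.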

FIGURE 5.1 Two-dimensional version of the Burridge-Knopoff spring-slider model. Leaf springs (K1) connect a moving plate to an array of smaller sliding blocks. These blocks are in turn connected to their nearest neighbors via coil springs (K2, K3). Sliding blocks also have a frictional contact with a fixed plate. Simulated earthquakes from models of this type display a wide variety of complexity. SOURCE: P. Bak, How Nature Works: The Science of Self-Organized Criticality, Springer-Verlag, New York, 226 pp., 1996. Copyright permission granted by Springer-Verlag.

A chaotic system, by definition, is one in which the accuracy needed to determine its motion over some interval of time grows rapidly, in fact exponentially, with the length of the interval. Two identical systems that are set in motion with almost but not quite the same initial conditions may move in nearly the same way for a while. If these systems are chaotic, however, their motions eventually will differ from each other and, after a sufficiently long time, will appear to be entirely uncorrelated. The correlation time depends sensitively on the difference in the initial conditions. In the context of predictability, this means that any uncertainty in one’s knowledge of the present state of a deterministically chaotic system produces a theoretical limit on how far into the future one can determine its behavior reliably, a topic explored further below.
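The link between initial uncertainty and prediction horizon can be illustrated with a toy system. The sketch below uses the logistic map, a standard textbook example of deterministic chaos, as a stand-in; it is not an earthquake model, and the starting value, tolerance, and function names are arbitrary choices made for illustration.

```python
def logistic(x):
    """One step of the logistic map x -> 4 x (1 - x), a standard chaotic toy system."""
    return 4.0 * x * (1.0 - x)


def prediction_horizon(x0, initial_error, tolerance=0.1, max_steps=1000):
    """Count steps until two nearby trajectories differ by more than tolerance."""
    a, b = x0, x0 + initial_error
    for step in range(max_steps):
        if abs(a - b) > tolerance:
            return step
        a, b = logistic(a), logistic(b)
    return max_steps


for error in (1e-3, 1e-6, 1e-9, 1e-12):
    print(f"initial error {error:.0e}: horizon = {prediction_horizon(0.2, error)} steps")
```

Because the separation between trajectories grows roughly exponentially, each thousandfold improvement in the initial measurement extends the horizon by only a modest, roughly constant number of steps. That is the sense in which uncertainty about the present state sets a theoretical limit on prediction.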

One theoretical issue that has attracted a lot of attention has come to be known as the question of smooth versus heterogeneous fault models. This issue arose initially as a result of the unexpected success of the uniform Burridge-Knopoff slider-block models in producing very rough but interesting caricatures of complex earthquake-like behavior, which fueled speculation that some of the slip complexity of natural earthquakes might be generated by the nonlinear dynamics of stressing and rupture on essentially smooth and uniform faults. The more conventional and perhaps obvious assumption is that the heterogeneity of fault zones—their geometric disorder and strong variations of lithological properties—plays the dominant role. It appears that earthquake faults, when modeled in any detail, have relevant length and time scales that invalidate simple scaling assumptions. For example, the tectonic loading speed (meters per century) combined with known friction thresholds and elastic moduli of rocks suggests natural characteristic intervals (hundreds of years) between large slipping events. Models that incorporate these features produce event distributions in which the large events fail to be self-similar (12).
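The characteristic interval mentioned above follows from simple arithmetic. The sketch below is an order-of-magnitude estimate only: it assumes that the slip in a large event scales as stress drop times a controlling length divided by the shear modulus (ignoring geometric factors of order one), and every numerical value is an assumed, round figure rather than a measurement.

```python
def characteristic_interval_years(stress_drop_pa, length_scale_m,
                                  shear_modulus_pa, loading_rate_m_per_yr):
    """Rough time for tectonic loading to restore the slip released in one large event."""
    # Order-of-magnitude elastic estimate: slip ~ stress drop * length scale / modulus.
    slip_m = stress_drop_pa * length_scale_m / shear_modulus_pa
    return slip_m / loading_rate_m_per_yr


interval = characteristic_interval_years(
    stress_drop_pa=3.0e6,         # assumed 3 MPa stress drop
    length_scale_m=15.0e3,        # assumed 15 km controlling dimension
    shear_modulus_pa=30.0e9,      # assumed 30 GPa crustal shear modulus
    loading_rate_m_per_yr=0.01,   # 1 m per century
)
print(f"characteristic interval ~ {interval:.0f} years")
```

With these assumed values the slip per event is 1.5 m and the interval is about 150 years, consistent with the "hundreds of years" quoted in the text. The point is that the loading rate and material constants together define a time scale that a purely scale-free model does not contain.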

Another example is the thickness of the seismogenic layer, which is less than the rupture scale for larger earthquakes. It, too, seems likely to produce scaling violations both in dynamic behavior and in the geometry of fault systems (see Section 5.2).

The existence of relevant length and time scales does not, per se, invalidate dynamical scaling theories; it may merely limit their ranges of validity. In some smooth-fault models, for example, it appears that the small, localized seismic events are self-similar over broad ranges of sizes; however, the large, delocalized events look quite different and are substantially more frequent than would be predicted by extrapolating the scaling distribution for the small events (13), as in the “characteristic earthquake” model discussed in Section 2.6. The picture may change appreciably if one considers large arrays of coupled faults and, especially, if one includes the mechanism for creation of new faults as a part of the dynamical system. It is possible that this global system, in some as yet poorly understood average sense, may come closer to a pure form of self-organized criticality.

Chaos and Predictability

The theoretical issue of earthquake predictability (as distinct from the practical issue of how to predict specific earthquakes) remains a central, unresolved issue. The wide range of event sizes described by the Gutenberg-Richter law, the obvious irregularities in intervals between large events, the fact that chaotic behavior occurs commonly in very simple earthquake-like models, and many other clues, all argue in favor of chaos and thus for an intrinsic limit to predictability. The interesting question is what bearing this theoretical limit might have on the kinds of earthquake prediction that are discussed elsewhere in this report. If one could measure all the stresses and strains in the neighborhood of a fault with great accuracy, and if one knew with confidence the physical laws that govern the motion of such systems, then the intrinsic time limit for predictability might be some small multiple of the average interval between characteristic large events on the fault. Most of the seismic energy is released in the large events; thus, it seems reasonable to suppose that the system suffers most of its memory loss during those events as well. If this supposition were correct, earthquake prediction on a time scale of months or years—intermediate-term prediction of the sort described in Section 2.6—would, in principle, be possible.

The difficulty, of course, is that one cannot measure the state of a fault and its surroundings with great accuracy, and one still knows very little about the underlying physical laws. If these gaps in knowledge could be filled, then predicting earthquakes a few years into the future might be no more difficult than predicting the weather a few hours in advance. However, the geological information needed for earthquake prediction is far more complex than the atmospheric information required for weather prediction, and almost all of it is hidden far beneath the surface of the Earth. Thus, the practical limit for predictability may have little to do with the theory of deterministic chaos, but may be fixed simply by the sheer mass of information that is unavailable.

Progress Toward Realism

Two general goals of research in this field are to understand (1) how rheological properties of the fault-zone material interact with rupture propagation and fault-zone heterogeneity to control earthquake history and event complexity, and (2) to what extent scientists can use this knowledge to predict, if not individual earthquakes, then at least the probabilities of seismic hazards and the engineering consequences of likely seismic events. Finding the answers is an ambitious and difficult task, but there are reasons for optimism. The speeds and capacities of computers continue to grow exponentially; they are now at a point where numerical simulations can be carried out on scales that were hardly imagined just a decade ago. At the same time, the sensitivity and precision of observational techniques are providing new ways to test those simulations.

There exists, at present, a substantial theoretical and computational effort in the United States and elsewhere devoted to developing increasingly realistic models of earthquake faults. Given a situation in which such a wide variety of physical ingredients of a problem remain unconstrained by experiment or direct observation, numerical experiments to show which of these ingredients are relevant to the phenomena may be crucial. Consider, for example, the assumptions about friction laws that are at the core of every fault model. For slow slip, the rate- and state-dependent law discussed in Section 4.4 may be reliable, at least in a qualitative sense. On the other hand, for fast slip of the kind that occurs in large events, there is little direct information. It seems likely that dynamic friction in those cases is determined by the behavior of internal degrees of freedom such as fault gouge, pore fluids, and the like. Laboratory experiments on multicomponent lubricated interfaces may provide some insight, but the solution to this problem may have to rely on comparisons between real and simulated earthquakes. There are suggestions that a friction law with enhanced velocity-weakening behavior (i.e., stronger than the logarithmic weakening in the rate and state laws) is needed to produce slip complexity and perhaps also to produce propagating slip pulses in big events (14). This conjecture needs to be tested.

Friction is not the only constitutive property that may be relevant. The laws governing deformation and fracture may play important roles, especially if the latter processes are effective in arresting large events and/or creating new fault surfaces. Other uncertainties in this category include the geometric structure of faults, the ways in which constitutive properties vary as functions of depth or position along a fault, the statistical distribution of heterogeneities on fault surfaces, and the parameters that govern the interactions between neighboring faults during seismic events.

An equally serious issue is whether small-scale physical phenomena are relevant to large-scale behavior. A truly complete description of an earthquake would involve length and time scales ranging from the microscopic ones at which the dynamics of fracture and friction are determined to the hundreds of kilometers over which large events occur. Numerical simulations, especially three-dimensional ones, would be entirely infeasible if they were required to resolve such a huge range of scales. There are, however, examples in other scientific areas where this is precisely what occurs. In dendritic solidification, for example, it is known that a length scale associated with surface tension—a length usually on the order of ångströms—controls the shapes and speeds of macroscopic pattern formation (15). Any direct numerical simulation that fails to resolve this microscopic length scale produces qualitatively incorrect results. There are indications that similar effects occur in some hydrodynamic problems, perhaps even in turbulence (16).

At present, it is not known whether any such sensitivities occur in earthquake problems, but there are possibilities. For example, it remains an open question whether simulations of earthquakes must resolve the details of the initial fracture and/or the nucleation process. It is possible that many features of this small-scale behavior are imprinted in important ways on the subsequent large-scale events, but it is also possible that only one or two parameters pertaining to nucleation—perhaps the location and initial stress drop (plus the surrounding stress and strain fields, of course)—have to be specified in order to predict accurately what happens next. Similarly, if the solidification analogy is a guide, then the small-scale, high-frequency behavior of the constitutive laws might be relevant to pulse propagation, interactions between rupture fronts and heterogeneities, and mechanisms of rupture arrest.

In order to study large systems on finite computers, investigators frequently study two-dimensional models, often accounting for deformations in the crustal plane perpendicular to the fault (in models of transverse faults) and omitting or drastically oversimplifying variations in the fault plane (i.e., motions that are functions of depth beneath the surface). How relevant is the third dimension? Some investigators have argued that it must be crucial because, without a coupling between the top and bottom of the fault, there is no restoring force to limit indefinitely large slip or, equivalently, to couple kinetic energy of slip back into stored elastic energy. It is hard to see how the dynamics of large events, especially rupture arrest and pulse propagation, can be studied sensibly without full three-dimensional analyses.

The issues of how to make progress toward realism are theoretical as well as computational. There is an emerging realization among theorists working on earthquake dynamics, and in solid mechanics more generally, that the problems with which they are dealing are far more difficult mathematically than they had originally supposed. One of the reasons that small-scale features can control large-scale behavior, as mentioned above, is that these features enter the mathematical statement of the problem as singular perturbations. For example, the surface tension in the solidification problem and the viscosity in certain shock-front problems enter the equations of motion as coefficients of the highest derivative of the dependent variable. As such, they completely change the answer to questions as basic as whether or not physically acceptable solutions exist and how many parameters or boundary conditions are needed to determine them. A related difficulty that is emerging, especially in problems involving elasticity, is that the equations of motion are often expressed most accurately as singular integral equations. Except for a few famous cases due largely to Muskhelishvili (17), such equations are not analytically solvable. There are not even good methods for determining the existence of solutions, nor are there reliable numerical algorithms for finding solutions when they do exist. In general, the ability to resolve the uncertainties regarding connections between model ingredients and physical phenomena will depend on advances in both mathematics and computer science. These problems are solvable, but they are indeed difficult.

5.2 FAULT SYSTEMS

Most theories of earthquake dynamics presume that essentially all major earthquakes occur on thin, preexisting zones of weakness, so that the behavior of the biggest events derives from the slip dynamics of a fault network. There are strongly different conceptions of fault systems, all of which may have merit for some purposes (18). Faults can be modeled as smooth Euclidean surfaces of displacement discontinuity in an otherwise continuous medium; fault systems can be represented as fractal arrays of surfaces; fault segments can be regarded as merely the deforming borders between blocks of a large-scale granular material transmitting stress in a force-chain mode. Representing the crust as a fault system is especially useful on the interseismic time scales relevant to fault interactions, seismicity distributions, and the long-term aspects of the postseismic response.

Fault-system dynamics involves highly nonlinear interactions among a number of mechanical, thermal, and chemical processes (fault friction and rupture, poroelasticity and fluid flow, viscous coupling, et cetera), and sorting out how these different processes govern the cycle of stress accumulation, transfer, and release is a major research goal. Moreover, progress on the problem of seismicity as a cooperative behavior within a network of active faults has the potential to deliver huge practical benefits in the form of improved earthquake forecasting. The latter consideration sets a direction for the long-term research program in earthquake science.

Architecture of Fault Systems

Thermal convection and chemical differentiation are driving mass motions throughout the planetary interior, but the slip instabilities that cause earthquakes appear to be confined to the relatively cold, brittle boundary layers that constitute the Earth’s lithosphere. With sufficient knowledge of the rheologic properties of the lithosphere and the necessary computational resources, it should be possible to set up simulations of mantle convection that reproduce plate tectonics from first principles, including the localization of deformation into plate boundary zones. However, the nonlinearity of the rheology and its sensitivity to pressure, temperature, and composition (especially the minor but critical constituent of water) make this a difficult problem (19). Tough computational issues are also posed by the wide range of spatial scales that must be represented in numerical models. Strain localization is most intense on plate boundaries that involve the relatively thin oceanic crust, although there are exceptions. One is a region of diffuse though strong seismicity (up to moment magnitude [M] 7.8) in the central Indian Ocean that may represent an incipient plate boundary (20). The study of these juvenile features may shed light on the localization problem.

In continents, earthquakes are typically distributed across broad zones in which active faults form geometrically and mechanically complicated networks that accommodate the large-scale plate motions. This diffuse nature is clearly related to the greater thickness and quartz-rich composition of the continental crust, as described in Section 2.4. The structure of continental fault zones is thought to be complicated by variations in frictional behavior with depth, changes in wear mechanisms, and a brittle-ductile transition (Figure 4.30), although the details remain highly uncertain.

Interesting issues also arise from attempts to understand how the complexities are related to the long geological history of the continents. In the southwestern United States, for example, the fault systems that produce high earthquake hazards have developed over tens of millions of years by tectonic interactions among the heterogeneous ensemble of accreted terranes that constitute the North American continental lithosphere and the oceanic lithosphere of the Farallon and Pacific plates. These interactions have created a zone of deformation a thousand kilometers wide that extends from the continental coastline to the Rocky Mountains. The “master fault” of this plate-boundary zone is the strike-slip San Andreas system, but other types of faults participate in the deformation, from extension in the Basin and Range to contraction in the Transverse Ranges. Likewise, the great thrust faults that mark the subduction zones of the northwestern United States and Alaska are accompanied by secondary faulting distributed for considerable distances landward of the subduction boundary. Within the continental interior far from the present-day plate boundaries, deformation is localized on reactivated, older faults, and some of these structures are capable of generating large earthquakes (see Section 3.2).

The geometric complexity of fault systems is fractal in nature, with approximately self-similar roughness, segmentation, and branching over length scales ranging from meters to hundreds of kilometers (Figure 3.2). Fault systems also have mechanical heterogeneities due to lithologic contrasts, uneven damage, and possibly pressurized compartments within fault zones (21). The understanding of fault system architecture and earthquake generation in such systems is at a rudimentary stage of development.

Fault Kinematics and Earthquake Recurrence

The subject of fault kinematics pertains to descriptions of earthquake occurrence and slip of individual faults at different time scales, and the partitioning of slip among faults to accommodate regional deformation. An important goal of this characterization is to address the fundamental question of how slow and smoothly distributed regional deformations across fault systems, as seen in geodetic observations, are eventually transformed, principally at the time of earthquakes, into localized slip on particular faults. To build a comprehensive picture of this process requires synthesis of detailed geologic, geophysical, and seismic observations. At present, some regions, particularly portions of California and Japan, have sufficient information to describe the recent history of large earthquakes, to make estimates of the long-term average of slip rates of the principal faults, and to map the surface strain field across fault systems. Though comprehensive descriptions of fault-system kinematics are not yet possible in any region, some generalizations have emerged on fault-system behavior at different time scales.

Across periods of perhaps a million years, fault systems evolve as slip brings different geologic formations into juxtaposition, new faults become activated, and previously existing faults go dormant. Processes on these time scales are undoubtedly important for understanding the origins and evolution of fault-system architecture. However, for estimations of earthquake probabilities and simulations of seismic activity on shorter time scales, an assumption of fixed fault-system geometry appears to be a reasonable approximation.

On time scales of a thousand years and less, there is clear evidence that earthquake activity is not stationary in time or space. That is, some regions show episodes of high earthquake activity followed by long periods of relative inactivity. Perhaps the best known example of episodic earthquake activity on a regional scale is from the North Anatolian fault in Turkey (Figure 3.21). Similarly in China, which has a long historical record of major earthquakes, it is evident that large regions have been episodically activated for many decades followed by long interludes of low earthquake activity (22). In the United States, geologic studies in Nevada, the eastern California shear zone, and elsewhere have found evidence for periods of high seismic activity across broad regions followed by long intervals with little or no geologic evidence of faulting activity (23).

Questions relating to the repeatability and recurrence intervals of large earthquakes on shorter time scales are of particular importance for the evaluation of earthquake probabilities used in seismic hazard analysis. Current approaches to estimating earthquake probabilities assume either that earthquakes occur randomly in time, but at some fixed rate, or that major earthquakes have sufficient periodicity to permit estimates of probability to be made based on elapsed time from the previous earthquake on a fault segment. Few large faults have ruptured more than once during the instrumental or historical period, and only in rare cases have the ruptures been documented well enough to enable unambiguous comparisons of the sequential ruptures. Hence, discussions of the periodicity (or aperiodicity) of large earthquakes, and the degree to which earthquake source parameters vary through several slip events, are dominated by conjecture. One approach to evaluating repeatability and periodicity of earthquakes employs seismic data from smaller earthquakes. Along the creeping portion of the San Andreas fault in central California, M 4 to M 5 earthquakes have been frequent enough to enable studies of their similarity. Waveforms from these moderate events can be sorted into nearly identical groups, establishing the existence of small, active fault patches, each generating nearly identical characteristic earthquakes with well-defined periodicities (24). These characteristic patches appear to be driven by aseismic slip of the surrounding regions of the fault plane (25).

Another approach to characterizing the repeatability and degree of periodicity of large earthquakes is based on paleoseismic investigations, which seek to reconstruct fault slip and earthquake histories over periods of thousands of years. Available paleoseismic data suggest that major earthquakes often involve distinct fault segments that tend to slip persistently in a similar manner from earthquake to earthquake. Some examples include portions of the Wasatch fault in Utah, the Superstition Hills fault in California, and the Lost River Range fault, a normal fault in Idaho (26). However, in other cases, more varied behavior among the segments appears to be the norm. For example, both the Imperial fault in southern California and the North Anatolian fault in Turkey have failed in a different manner in historic time (27). In some cases, paleoseismic data support the concept of periodicity, while in other situations, earthquake occurrence appears to have been aperiodic. These observations, together with episodic regional activation at long time scales, imply that simple characterizations of earthquake repeatability and periodicity may not be possible.

Seismicity and Scaling

Earthquake scaling laws, and the circumstances under which they break down, furnish insights on fault interactions that carry important ramifications for seismic hazard analysis and earthquake prediction. For instance, the seismicity of individual faults does not follow the Gutenberg-Richter relation (28), indicating that the frequency-magnitude power law is a property of the fault system, perhaps related to the fractal distributions of fault sizes. The Gutenberg-Richter relation also appears to break down for large earthquakes, where the earthquake rupture width is constrained by the depth extent of the seismogenic zone (29). The scaling laws for earthquake parameters at larger magnitudes also seem to be bounded by the thickness of the seismogenic zone. Although this topic has created a great deal of controversy, recent results suggest that the scaling of slip with rupture length in earthquakes is consistent with scale-independent rupture physics (30).
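As a rough illustration of the frequency-magnitude power law discussed above, the following sketch estimates the Gutenberg-Richter b value of a catalog with the standard maximum-likelihood (Aki) estimator. The synthetic catalog, the completeness magnitude `m_c`, and the function names are assumptions introduced here for illustration; they are not taken from this chapter.

```python
import math
import random


def synthetic_magnitudes(n, b=1.0, m_c=2.0, seed=0):
    """Draw n magnitudes >= m_c from a Gutenberg-Richter distribution.

    log10 N(>=M) = a - b*M implies that (M - m_c) is exponentially
    distributed with rate b*ln(10).
    """
    rng = random.Random(seed)
    beta = b * math.log(10.0)
    return [m_c + rng.expovariate(beta) for _ in range(n)]


def b_value_mle(magnitudes, m_c):
    """Maximum-likelihood b value for continuous magnitudes >= m_c."""
    above = [m for m in magnitudes if m >= m_c]
    mean_excess = sum(above) / len(above) - m_c
    return math.log10(math.e) / mean_excess


if __name__ == "__main__":
    catalog = synthetic_magnitudes(20000, b=1.0, m_c=2.0)
    print(f"estimated b = {b_value_mle(catalog, 2.0):.3f}")
```

Applied to real catalogs, an estimate of this kind is only meaningful above the magnitude of completeness, and binned magnitudes would need a half-bin correction that this sketch omits.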

Uncertainty also exists about the breakdown of self-similarity and the Gutenberg-Richter relation at small magnitudes. Theoretical studies, which employ laboratory-derived fault friction laws, indicate there should be some minimum fault length for earthquake fault slip as defined by the nucleation zone for earthquake initiation (see Section 5.3). This dimension is of fundamental importance for two reasons. First, it sets a scale length that must be respected for realistic simulations of the earthquake initiation and rupture propagation processes. Second, it defines the dimensions of the region of precursory strains related to the earthquake nucleation process. Small scaling lengths impose severe restrictions on numerical calculations and could also mean that precursory phenomena related to earthquake nucleation may be difficult or impossible to detect.

Stress Interactions and Short-Term Clustering

Although major earthquakes generally tend to be associated with large faults easily recognized at the surface, instrumentally recorded seismicity indicates that smaller earthquakes become more diffusely distributed as their size decreases. The smallest earthquakes often arise on faults with no known surface expression. Stress-mediated interactions among these fractal fault systems can be explored by using the scaling behavior of the seismicity to monitor system organization as a function of time. This type of regional seismicity analysis offers the most promising approach to intermediate-term prediction.

A widely studied type of fault interaction arises from the permanent change of the stress field following an earthquake. According to the Coulomb stress condition for frictional failure (Equation 2.1), an increase in the magnitude of the shear stress acting across a fault should push it closer to failure, while an increase in normal stress should increase the effective frictional strength, thus retarding failure. An important recent discovery is that regional seismicity appears to be correlated with the relatively small Coulomb stress increments calculated from static dislocation models of large earthquakes (31). This interpretation of seismicity has been largely successful in explaining the patterns of aftershocks as well as regions of reduced seismicity (“stress shadows”) following large events along the San Andreas fault system (32), the 1999 Izmit earthquake in Turkey (33), and various earthquakes in Japan, Italy, and elsewhere (34).
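The Coulomb argument above reduces to simple arithmetic once the stress changes have been resolved onto a receiver fault. The sketch below is a minimal illustration, assuming that normal stress is counted positive when clamping increases and that a single effective friction coefficient applies; the function name and the value 0.4 are hypothetical choices, not values from this chapter.

```python
def coulomb_stress_change(d_shear, d_normal, friction=0.4):
    """Change in Coulomb failure stress on a receiver fault.

    d_shear  : change in shear stress resolved in the slip direction
    d_normal : change in normal stress, positive when clamping increases
    friction : assumed effective friction coefficient (illustrative value)

    Inputs and output share the same units (e.g., megapascals).
    A positive result moves the fault toward failure.
    """
    return d_shear - friction * d_normal


if __name__ == "__main__":
    # Shear load increases and the fault is unclamped: promotes failure.
    print(coulomb_stress_change(0.10, -0.05))
    # Shear load decreases and the fault is clamped: a "stress shadow".
    print(coulomb_stress_change(-0.05, 0.10))
```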

The Coulomb stress calculations usually assume purely elastic interactions at the time of the mainshock. This is a reasonable approximation in the outer layers of the brittle crust, but it does not describe known postseismic processes, which include ductile flow below the seismogenic zone, fault creep (earthquake afterslip), and poroelastic effects (due to fluid flow) that all result in extended intervals of stressing in the region of a large earthquake (Section 4.2). The role these postseismic effects have in controlling, or altering, aftershock sequences is presently not well understood, but the stress changes due to these processes are usually rather small compared to the immediate stress change caused by the mainshock.

Aftershocks are thought to be primarily a response of the surrounding fault system to stress changes caused by the mainshock fault slip. That is, the Coulomb stress changes drive the aftershock fault planes to failure.

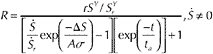

Aftershocks are an extreme example of short-term earthquake clustering that appears to be quite distinct from the long-term regional clustering of large earthquakes discussed above. Aftershocks can temporarily increase the local seismicity rates to more than 10,000 times the pre-mainshock level. Although Coulomb stress interactions provide an explanation for many aftershock patterns, those models alone do not account for either the rates of seismicity that occur in response to the stress changes or the subsequent decay of rates inversely proportional to time, as expressed in Omori’s aftershock decay law (Equation 2.8). The most fully developed explanation for these and other properties of aftershocks is based on the rate- and state-dependent fault frictional properties observed in laboratory experiments (see Section 4.4). These frictional properties require that the initiation of earthquake slip (earthquake nucleation) be a delayed instability process in which the time of an earthquake is nonlinearly dependent on stress changes (35). This approach has resulted in a state-dependent model for earthquake rates that provides quantitative explanations for observed aftershock rates in response to a stress change, the Omori decay law, and various other features of aftershocks (Box 5.1).

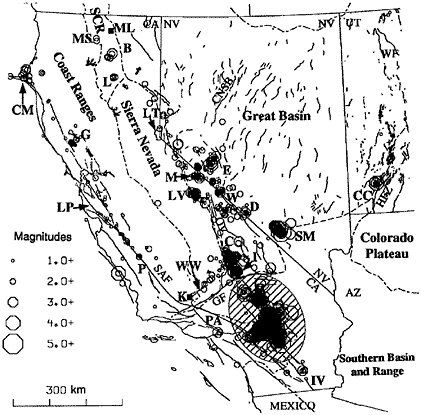

Aftershocks can also be generated by dynamic stresses during the passage of seismic waves. At large epicentral distances, these transients are much greater than the static Coulomb stresses, although they act only over short intervals. Short-term dynamic loading was responsible for triggering seismicity across the western United States after the 1992 Landers, California earthquake (36). Immediately following the Landers earthquake, bursts of seismicity were observed at locations more than 1000 kilometers from the mainshock (Figure 5.2). The mechanisms for aftershock triggering by seismic waves are poorly understood but may involve fluid-rock interactions or triggering of local deformations that produce permanent stress changes after the waves have passed through a region.

BOX 5.1 State-Dependent Seismicity

A physically based method for quantitative modeling of the relationships between stress changes and earthquake rates is provided by the rate- and state-dependent representation of fault friction. This approach treats seismicity as a sequence of earthquake nucleation events and specifically includes the time and stress dependence of the earthquake nucleation process as required by rate- and state-dependent friction. The result is a general state-dependent formulation for earthquake rates:¹

$$R = \frac{r}{\gamma \dot{S}_r}, \qquad d\gamma = \frac{1}{A\sigma}\left[dt - \gamma\, dS\right] \qquad (1)$$

where $R$ is earthquake rate (in some magnitude interval), $\gamma$ is a state variable, $t$ is time, and $S$ is Coulomb stress. The normalizing constant $r$ is defined as the steady-state earthquake rate at the reference stressing rate $\dot{S}_r$, and $A$ is a constitutive parameter with values in the range 0.005 to 0.015. For this model, the Coulomb stress function is defined as

$$S = \tau - (\mu - \alpha)\sigma \qquad (2)$$

where $\tau$ and $\sigma$ are shear and effective normal stress, respectively, acting across the fault planes that generate earthquakes; $\mu$ is the coefficient of fault friction; and $\alpha$ is a constant with values in the range $0 < \alpha < \mu$. In equation (1) the term $A\sigma$ is treated as constant (i.e., the changes in stress are negligible relative to total normal stress). A property of seismicity predicted by this model is that stress perturbations drive seismicity rates away from a steady-state condition set by the Coulomb stressing rate $\dot{S}$, and seismicity seeks to return to steady state over the characteristic time $t_a = A\sigma/\dot{S}$. The effects of a nearby earthquake on seismicity rates are given by the solution of (1) for a stress step $\Delta S$,

$$R = \frac{r}{\left[\exp\left(-\Delta S / A\sigma\right) - 1\right]\exp\left(-t/t_a\right) + 1} \qquad (3)$$

where $t = 0$ at the time of the step. At $t > t_a$, earthquake rates approach a constant background rate, and at $t < t_a$ this solution acquires the form of Omori’s aftershock decay law (Equation 2.8):

$$R = \frac{a}{(c + t)^p} \qquad (4)$$

with $p = 1$, $a = r t_a$, and $c = t_a \exp(-\Delta S / A\sigma)$. Model predictions of aftershock rates, time-dependent expansion of the aftershock zone, proportionality of aftershock duration $t_a$ to the inverse of stressing rates, and spatially averaged aftershock decay by $t^{-0.8}$ all appear to be consistent with the data.² Variations of this approach have been used to model foreshocks³ and the statistics of earthquake pairs. Recently the state-dependent seismicity formulation has been used to invert earthquake rate for the changes in stress that drive earthquakes at Kilauea, Hawaii.⁴

FIGURE 5.2 Map of the western United States showing areas of increased seismicity dynamically triggered by seismic waves from the 1992 Landers earthquake. SOURCE: D.P. Hill, P.A. Reasenberg, A. Michael, W. Arabaz, G.C. Beroza, J.N. Brune, D. Brumbaugh, S. Davis, D. DePolo, W.L. Ellsworth, J. Gomberg, S. Harmsen, L. House, S.M. Jackson, M. Johnston, L. Jones, R. Keller, S. Malone, S. Nava, J.C. Pechmann, A. Sanford, R.W. Simpson, R.S. Smith, M. Stark, M. Stickney, S. Walter, and J. Zollweg, Seismicity remotely triggered by the magnitude 7.3 Landers, California, earthquake, Science, 260, 1617-1623, 1993. Copyright 1993 American Association for the Advancement of Science.
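The stress-step behavior summarized in Box 5.1 can be explored numerically. The sketch below assumes the widely used closed-form solution for the rate after a stress step, $R = r / \{[\exp(-\Delta S/A\sigma) - 1]\exp(-t/t_a) + 1\}$, with the stressing rate unchanged by the step and equal to the reference rate. The parameter values (an $A\sigma$ of 0.1 megapascal, a stressing rate of 0.01 megapascal per year, a 0.5-megapascal step) and the function names are illustrative assumptions, not values from the box.

```python
import math


def rate_after_stress_step(t, d_s, a_sigma, stressing_rate, r=1.0):
    """Seismicity rate at time t after a Coulomb stress step d_s.

    a_sigma        : product A*sigma (same stress units as d_s)
    stressing_rate : constant Coulomb stressing rate (stress per unit time)
    r              : steady-state background rate
    """
    t_a = a_sigma / stressing_rate          # characteristic relaxation time
    e = math.exp(-d_s / a_sigma)
    return r / ((e - 1.0) * math.exp(-t / t_a) + 1.0)


def omori_approximation(t, d_s, a_sigma, stressing_rate, r=1.0):
    """Omori-type form a / (c + t) that the step solution approaches for t << t_a."""
    t_a = a_sigma / stressing_rate
    a = r * t_a
    c = t_a * math.exp(-d_s / a_sigma)
    return a / (c + t)


if __name__ == "__main__":
    a_sigma, rate, step = 0.1, 0.01, 0.5    # MPa, MPa/yr, MPa (assumed values)
    for t in (0.0, 0.1, 1.0, 10.0, 100.0):  # years after the step
        exact = rate_after_stress_step(t, step, a_sigma, rate)
        omori = omori_approximation(t, step, a_sigma, rate)
        print(f"t = {t:6.1f} yr  R/r = {exact:8.2f}  Omori form = {omori:8.2f}")
```

With these assumed values the characteristic time is 10 years; the two expressions agree closely well before that time and diverge afterward, when the full solution levels off at the background rate while the Omori form keeps decaying.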

Foreshocks

Foreshocks are generally thought to arise by one of two mechanisms. The first proposes that a mainshock following a foreshock has an identical origin to that of aftershocks. In this case, earthquake frequency-magnitude statistics predict that occasionally an aftershock will be larger than the prior event, which by definition makes the prior event a foreshock (37). The other proposed mechanism for foreshocks is that premonitory processes, perhaps the fault creep related to mainshock nucleation, result in stress changes that drive the foreshock process in surrounding areas. Models based on state-dependent earthquake rates indicate that both mechanisms are in general agreement with time and distance statistics of foreshock-mainshock pairs (38).

Short-term clustering, as manifest in foreshock-mainshock pairs and aftershocks, attests to large but transient changes in the probabilities of additional earthquakes that occur whenever an earthquake takes place. The concepts of stress interaction and state-dependent seismicity permit physically based calculations of earthquake probability following large earthquakes (39). This approach has been used to evaluate the changes in earthquake probability that arose as a consequence of stress interactions along the Anatolian fault in Turkey (40) and following the M 6.9 earthquake that struck Kobe, Japan, in 1995 (41).

Accelerating Seismicity and Intermediate-Term Prediction

A central issue for earthquake prediction is the degree to which the seismicity clustering can be used to monitor the stress changes leading to large earthquakes. Various studies have shown that large earthquakes tend to be preceded by clusters of intermediate-sized events (42). This increase in seismicity can be fit to a time-to-failure equation in the form of a power law, which is commonly used by engineers to describe progressive failures that result from the accumulation of structural damage (43). The power-law time-to-failure equation is also expected if large earthquakes represent critical points for regional seismicity (44).
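To make the time-to-failure idea concrete, the sketch below fits a power law of the commonly used form $\Omega(t) = A + B\,(t_f - t)^m$ to a cumulative seismicity measure. This functional form, the noise-free synthetic data, the grid-search strategy, and all names are assumptions made for illustration; the chapter does not specify them, and real catalogs are far noisier.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x; returns (a, b, sum of squared errors)."""
    n = len(xs)
    mx = sum(xs) / n
    my = sum(ys) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = my - b * mx
    sse = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    return a, b, sse


def fit_time_to_failure(times, values, tf_grid, m_grid):
    """Grid search over failure time t_f and exponent m.

    For each (t_f, m) pair the model is linear in A and B, so those two
    coefficients are found by least squares. Returns (sse, t_f, m, A, B)
    for the best-fitting pair.
    """
    best = None
    for t_f in tf_grid:
        if t_f <= max(times):
            continue  # failure time must lie after the last observation
        for m in m_grid:
            xs = [(t_f - t) ** m for t in times]
            a, b, sse = fit_line(xs, values)
            if best is None or sse < best[0]:
                best = (sse, t_f, m, a, b)
    return best


if __name__ == "__main__":
    # Synthetic accelerating sequence with t_f = 10, m = 0.3, A = 5, B = -1.
    times = [0.5 * i for i in range(20)]
    values = [5.0 - (10.0 - t) ** 0.3 for t in times]
    tf_grid = [i / 10 for i in range(96, 121)]
    m_grid = [k / 10 for k in range(1, 10)]
    sse, t_f, m, a, b = fit_time_to_failure(times, values, tf_grid, m_grid)
    print(f"t_f = {t_f}, m = {m}, A = {a:.3f}, B = {b:.3f}")
```

In practice the failure time and exponent trade off strongly against each other, which is one reason such fits need rigorous prospective testing before they can be treated as predictions.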

As described in Section 5.1, regional seismicity has many of the characteristics of a self-organized critical system, including power-law (Gutenberg-Richter) frequency-size statistics and fractal spatial distributions of hypocenters. However, the near-critical behavior of fault systems is the subject of some debate. If the crust continuously maintains itself in a critical state, as originally proposed by Bak and Tang, then all small earthquakes will have the same probability of growing into a big event. This hypothesis has been used as the physical basis for assertions that earthquake prediction is inherently impossible (45). Alternatively, the crust could repeatedly approach and retreat from a critical state. The working hypotheses for this latter view are (1) large regional earthquakes become more probable when the stress field becomes correlated over increasingly larger distances, (2) this approach to a critical state is reflected in an acceleration of regional seismicity, and (3) a system-spanning event destroys criticality on its network, creating a period of relative quiescence after which the process repeats by rebuilding correlation lengths toward criticality and the next large event (46). It is the decay of the post-event stress shadows by continuing tectonic deformation that introduces predictability into the system.

The seismic cycle implied by these hypotheses agrees with some important aspects of the data on seismic stress shadows and accelerating seismicity (47). Many issues remain to be resolved, however. Quantitative testing will require precisely formulated numerical models adapted to specific fault networks (i.e., computer simulations with realistic representations of fault and block geometries, rheologies, and tectonic loadings). Such “system-level” models are in the early stages of development. The long-term clustering statistics generated by the models must be understood in terms of the underlying dynamics (48), and these behaviors will have to be evaluated against the extended earthquake records now being provided by paleoseismology (see Section 4.3). The key step is to deploy the models in regulated prediction environments to rigorously test their predictive skill.

Key Questions

- What are the limits of earthquake predictability, and how are they set by fault-system dynamics?

- Which aspects of the seismicity are scale invariant, and which are scale dependent? How do these scaling properties relate to the underlying dynamics of the fault system? Under what circumstances is it valid to extrapolate results based on low-magnitude seismicity to large-earthquake behavior?

- Are there patterns in the regional seismicity that are related to the past or future occurrence of large earthquakes? For example, are major ruptures preceded by enhanced activity on secondary faults, temporal changes in b values, or local quiescence? Can the seismicity cycles associated with large earthquakes be described in terms of repeated approaches to, and retreats from, a regional critical point of the fault system?

- On what scales, if any, is the seismic response to tectonic loading stationary? What are the statistics that describe seismic clustering in time and space, and what underlying dynamics (e.g., mode-switching) control this episodic behavior? Is clustering observed in some fault systems due to repeated ruptures on an individual fault segment or to rupture overlap from multiple segments? Is clustering on an individual fault related to regional clustering encompassing many faults?

- What systematic differences in fault strength and behavior are attributable to the age and maturity of the fault zone, lithology of the wall rock, sense of slip, heat flow, and variation of physical properties with depth? Are mature faults such as the San Andreas weak? If so, why?

- To what extent do fault-zone complexities, such as bends, stepovers, changes in strength, and other “quenched heterogeneities,” control seismicity? How applicable are the characteristic earthquake and slip-patch models in describing the frequency of large events? How important are dynamic cascades in determining this frequency? Do these cascades depend on the state of stress, as well as the configuration of fault segments?

- How does the fault system respond to the abrupt stress changes caused by earthquakes? To what extent do the stress changes from a large earthquake change nearby seismicity rates and advance or retard large earthquakes on adjacent faults? How does stress transfer vary with time (49)?

- What controls the amplitude and time constants of the postseismic response, including aftershock sequences and transient aseismic deformations? In particular, how important are the induction of self-driven accelerating creep, fault-healing effects, poroelastic effects (which involve the hydrostatic response of porous rocks to stress changes), and coupling of the seismogenic layer to viscoelastic flow at depth?

- What special processes occur at borders or transition regions between creeping zones, whether localized on faults or distributed, and fault zones that are locked between seismic events? Do lineations of microseismicity provide evidence for processes along such borders?

- What part of aseismic deformation on and near faults occurs as episodes of slip or strain versus steady creep?

5.3 FAULT-ZONE PROCESSES

The move toward physics-based modeling of earthquakes dictates that research be focused on relating small-scale processes within fault zones to the large-scale dynamics of earthquakes and fault systems. Earthquakes have many scale-invariant and self-similar features, yet numerical simulations must assume some smallest length scale in a grid or mesh, as well as a shortest time step, in order to discretize the computational problem. The issue then becomes how to refine the discretization adequately so that principal phenomena are represented qualitatively, if not at the quantitatively correct small size scale. There is also the question of whether it is possible to capture the wealth of processes that occur on sub-grid scales through judicious parameterizations. For example, rate- and state-dependent friction laws suggest that processes at a scale smaller than the coherent slip patch size can be swept into the macroscopic constitutive description. This characteristic dimension appears to be very small, however, on the order of 0.1 to 10 meters (see Section 5.4). Numerical resolution of processes at that size scale is well beyond the capability of current three-dimensional earthquake simulations (50).

Damage Mechanics

The question of how well earthquakes can be approximated as propagating dislocations on idealized friction-bound fault planes is also tied to the degree of rheological breakdown and damage in regions of significant lateral extent away from the rupture surface. Such damage zones can be investigated on large scales by seismological field experiments using fault-zone trapped waves (51) as well as by gravity and electromagnetic methods (52). On smaller scales, processes of rock failure can be studied in the laboratory and their effects observed by field work on exhumed faults.

Recent years have seen a strong focus on the possibility that fractal and granular aspects might be major parts of the observed complexity of fault systems and of fault-zone response. Nevertheless, over the same time, close geological investigations of exhumed fault zones (53) have strengthened the viewpoint that much of the observed complexity of damage zone and secondary fault structures bordering large-slip faults could indeed be a relatively inactive relic of evolution and that, with ongoing slip accumulation, faults become more like Euclidean surfaces (54). For example, studies at the Punchbowl and North Branch San Gabriel faults (55) show abundant complexity of structure, with damaged and faulted rock that extends on the order of 100 meters from the fault core. Yet a severely granulated ultracataclastic core on the order of only 100 millimeters wide seems to have accumulated all significant slip, summing to several kilometers of motion. Also, a principal fracture surface that may be only a few millimeters wide seems to have hosted large amounts of slip, presumably corresponding to the last several earthquakes, whereas there is little evidence of significant slip accumulation on secondary faults in the damaged border zone.

This does not at all imply that the damaged zone is irrelevant to fault dynamics. First, it is a storage site for pore fluids. Second, it provides a heterogeneity of elastic properties that may allow slip on the main fault, if not well centered within the damaged zone, to induce changes in normal stress, with consequences for frictional instability (56). Third, as a zone of low strength, it may react inelastically to the high stresses associated with a propagating rupture front. Stresses acting off the main fault plane become much larger than those along it as the rupture approaches what, in elastic-brittle dynamic crack theory, would be its limiting speed (57). It is likely that faulted rock within that border region acts as a macroscale plastic zone when rupture speed approaches the limit speed, so that much of the inferred fracture energy of earthquake faulting may emanate from energy dissipation in the damage zone rather than exclusively from the main fault surface itself (as often assumed in relating seismic observations to parameters of slip-weakening rupture description). Also, the high off-fault stresses may activate rupture along fortuitously oriented branch fault structures that intersect the main fault. Such a process is a possible source of spontaneous arrest of rupture and of intermittence of rupture propagation speed (enriching the radiated seismic spectrum at high frequencies), and it can be correlated to natural examples of macroscopic branching of the rupture path (58).

Friction of Fault Materials

Experimentally determined constitutive laws, such as those presented in Box 4.4, have been validated for slip rates between about $10^{-10}$ and $10^{-3}$ meters per second. As such, they cover the range from plate rates to rates at which incipient dynamic instabilities are well under way, so they probably provide an appropriate description of frictional processes during earthquake nucleation and postseismic response. In the common form of these laws, the logarithmic dependence of stress on sudden changes in sliding velocity, introduced empirically, is now generally assumed to descend from an Arrhenius activated rate process governing creep at asperity contacts (59). That is, the slip rate $V$ for each active mechanism at the contacting asperities is proportional to $e^{-Q/RT}$, where the activation energy $Q$ is diminished linearly by stress over the narrow range sampled in experiments. This leads at once to the instantaneous $\ln V$ dependence of friction coefficient in the range for which forward-activated jumps are vastly more frequent than backward ones. Considering the backward jumps regularizes the $\ln V$ dependence at $V = 0$ (60). Experiments on optically transparent materials, including quartz, have linked the state evolution slip distance $D_c$ to the sliding necessary to wipe out the original contact population and replace it with a new one (61). These experiments also showed time-dependent growth of contact junctions, which is a mechanism by which strength depends on the maturity of the contact population (measured by the state variable). Further, models have proposed thermally activated creep as a mechanism for contact growth that delivers a steady-state friction coefficient proportional to $\ln V$ (62), which is often observed, at least over limited ranges, in experiments.
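The activated-rate argument in this paragraph can be written out as a short derivation. The symbols $V_0$ (a reference rate prefactor), $\Omega$ (an activation volume), and $\tau_c$ (the shear stress carried at asperity contacts) are notation introduced here for illustration only; the source does not define them.

```latex
% Forward jumps only: activation energy Q lowered linearly by contact stress
V = V_0 \exp\!\left(-\frac{Q - \tau_c \Omega}{RT}\right)
\quad\Longrightarrow\quad
\tau_c = \frac{Q}{\Omega} + \frac{RT}{\Omega}\,\ln\frac{V}{V_0}

% Forward minus backward jumps: the logarithm is regularized at V = 0
V = V_0\, e^{-Q/RT}\left[e^{\tau_c \Omega / RT} - e^{-\tau_c \Omega / RT}\right]
  = 2 V_0\, e^{-Q/RT} \sinh\!\left(\frac{\tau_c \Omega}{RT}\right)
\quad\Longrightarrow\quad
\tau_c = \frac{RT}{\Omega}\,\operatorname{arcsinh}\!\left(\frac{V\, e^{Q/RT}}{2 V_0}\right)
```

Because $\operatorname{arcsinh}(x) \approx \ln(2x)$ for large $x$, the second expression reduces to the first at ordinary slip rates, while it goes smoothly to zero stress as $V \to 0$ instead of diverging logarithmically.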

The above description outlines the simplest physical understanding of the empirically derived friction laws. To confidently extend these relations to situations not directly studied in the laboratory, it will be important to put them on a firmer basis, in a way that deals more completely with contact statistics and the actual granular structure of fault-zone cores and that recognizes the possibility of multiple deformation mechanisms with different dependencies on temperature, stress, and the chemical environment. A simple version is to assume that deformation in the fault zone can include both slip on frictional surfaces and more distributed creep deformation, with both processes taking place under the same stress (63). Based on earlier hydrothermal studies of granite and quartz gouge (64), F. Chester suggests that response can be modeled by three mechanisms: solution transfer, cataclastic flow, and localized slip (65). Each is assumed to follow a rate- and state-dependent law, but with additional terms to represent effects of changing temperature. Studies of this kind, firmly rooted in materials physics, are needed to extrapolate laboratory data confidently over a range of hydrothermal conditions to very long times at temperature on natural faults, to infer in situ stress conditions and the conditions of local stress and slip rate necessary to nucleate a frictional instability.

Earthquake Mechanics in Real Fault Zones

It may be conjectured that different physical mechanisms prevail at contacts during the most violent seismic instabilities, when average slip rates reach 1 meter per second and maximum slip rates near the rupture front might be as great as $10^2$ meters per second. In that range, the dynamics of rapid stress fluctuations from sliding on a rough surface, openings of the rupture surfaces, microcracking, and fluidization of finely comminuted fault materials may result in a different velocity dependence, possibly with a dramatic weakening. Most significantly, very high temperatures will be generated in the rapid, large slips of large earthquakes. These are expected to lead to thermal weakening, but there is presently very limited laboratory study of the process (66). When two surfaces slide rapidly, compared to heat diffusion times at the scale of the asperity contacts, a first thermal weakening is due to flash heating and thermal softening of the contacts (67). With poor conductors such as rocks, continued shear, especially along narrow surfaces as inferred for the Punchbowl fault (68), would necessarily lead to local melting. The amount of melt generated in actual faulting events is not well constrained. Pseudotachylytes (amorphous rocks, rapidly cooled from the melt) are sometimes seen as fillings of faults and of veins that run off them and at dilatational jogs (69). An open question is how much of the finest-grained gouge is also the result of rapid cooling of a melt that has been squeezed into narrow pore spaces, where it solidified and thermally cracked into small fragments upon cooling.

Although there are presently few experimental constraints on response in the high-slip-rate range, experiments and coordinated theory for this range are essential to understanding the overall stress levels at which faults operate, the heat outflow from faults (and whether its lowness is paradoxical or not), and the mode of rupture along them. For the latter, it is now understood (70) that strong velocity weakening together with low shear stress levels over the region through which a rupture propagates promote self-healing of the rupture behind the front, a phenomenon found in numerical simulations (71) and observed in real events (72). Yet whether it is velocity weakening or some other process or fault-zone property that controls the observed mode of rupture remains to be clarified. Good experiments and observations are a must, because velocity-weakening constitutive response is not the only route to short slip duration. Short slip durations can also be induced by strong fault heterogeneity (73) and can arise as a consequence of even fairly modest dissimilarity of elastic properties between the two elastic blocks bordering a fault zone (74).

Provided that typical laboratory friction coefficients for rocks (0.5 to 0.7) apply and that pore pressure is hydrostatic, the shear strength that must be overcome to initiate slip at, say, 10-kilometer depth is estimated to be about 100 megapascals. This is much larger than seismic stress drops, typically on the order of 1 to 10 megapascals. Thus, one option is that faults slide during large earthquake slips at stresses on the order of 100 megapascals. This is, however, in conflict with the well-known lack of a sharply peaked heat outflow over the San Andreas fault (see Section 2.5). It is also difficult to reconcile with observations (75) that the principal compression direction in the adjoining crust is steeply inclined (60 to 80 degrees) to the San Andreas fault. The possible ways around this problem are the subject of much discussion. It has been argued (76) that the heat flow data are unreliable, being influenced by shallow topographically driven groundwater flows, and that the inferred stress directions are a misinterpreted signal of tectonics in the bordering regions. However, many workers have not been as ready to dismiss these considerations and have sought other modes of explanation. Pore pressure that is greatly elevated over hydrostatic, and nearly lithostatic, at seismogenic depth has been invoked. Also, the possibility has been raised that fault-zone material within well-slipped faults has anomalously low friction, due either to its mineralogical or its morphological evolution (e.g., possibly stabilizing hydrophilic phases with low friction comparable to that of montmorillonite clay (77)) or to the inclusion of weak lithologies, possibly serpentine, in the fault. In contrast to these propositions for zones of active tectonics such as the San Andreas, faults intersected by the few deep drill holes in stable continental crust seem to be at hydrostatic pore pressure and to carry maximum shear stresses consistent with friction coefficients in the range 0.5 to 0.7 (78). Thus, it is important to better constrain these possibilities by drilling, such as that planned in the San Andreas Fault Observatory at Depth (SAFOD) component of the EarthScope Program, as well as by examinations of exhumed faults, to establish if and why major plate-bounding faults are different in composition or fluid pressurization.
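The estimate of about 100 megapascals at 10-kilometer depth can be checked with a back-of-the-envelope calculation. The sketch below assumes that the effective normal stress on the fault equals the overburden stress minus pore pressure, and it uses a rock density of 2700 kilograms per cubic meter and a water density of 1000 kilograms per cubic meter; these simplifications, the pore-fluid factor parameterization, and the function name are illustrative assumptions rather than the chapter's own method.

```python
G = 9.8  # gravitational acceleration, m/s^2


def frictional_strength_mpa(depth_m, friction, rock_density=2700.0,
                            pore_fluid_factor=None, fluid_density=1000.0):
    """Frictional shear strength (MPa) at a given depth.

    pore_fluid_factor is the ratio of pore pressure to overburden stress.
    When it is None, hydrostatic pore pressure is assumed, i.e. the ratio
    fluid_density / rock_density (about 0.37 for the defaults).
    """
    if pore_fluid_factor is None:
        pore_fluid_factor = fluid_density / rock_density
    overburden_pa = rock_density * G * depth_m
    effective_normal_pa = overburden_pa * (1.0 - pore_fluid_factor)
    return friction * effective_normal_pa / 1.0e6


if __name__ == "__main__":
    for mu in (0.5, 0.6, 0.7):
        print(f"mu = {mu}: hydrostatic strength = "
              f"{frictional_strength_mpa(10_000.0, mu):.0f} MPa")
    # Near-lithostatic pore pressure sharply lowers the strength.
    print(f"mu = 0.6, pore-fluid factor 0.9: "
          f"{frictional_strength_mpa(10_000.0, 0.6, pore_fluid_factor=0.9):.0f} MPa")
```

Under these assumptions the hydrostatic case gives strengths of order 100 megapascals for friction coefficients of 0.5 to 0.7, whereas a pore-fluid factor near 0.9 brings the strength down toward the range of observed stress drops, which is why elevated pore pressure is one of the proposed explanations.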

Yet another possibility is that dynamic weakening may be responsible for the low-stress observations along the San Andreas fault. Sources could include severe thermal weakening, including melt formation, in rapid, large slips, as above, or the formation of gouge structures that accommodate slip by rolling with little frictional dissipation (79). In the case of sliding between elastically dissimilar materials, there is coupling between spatially inhomogeneous sliding and alteration of normal (clamping) stress. Mathematical solutions have been constructed that allow a pulse of slip to occur in a region of locally diminished clamping stress and hence diminished frictional dissipation (80). Experiments on foam rubber blocks (81) show a similar effect, even leading to surface separation. Analogous effects have not been found in laboratory rock experiments in the large sawcut apparatus at the U.S. Geological Survey (USGS)-Menlo Park, and the mechanism in the foam rubber remains obscure (nonlinearities in the surrounding continuum-like field could contribute); however, something similar to this could be found for natural faults, possibly as a result of the interaction of the fault core with the damaged zone adjoining it.

These considerations highlight the importance of determining the composition, structure, and physical state of fault-zone materials; of determining their rheology, especially in rapidly imposed large slips; and of understanding the dynamical processes within the core and their interaction with the heterogeneity and possible localized failure processes in the damaged border zones. At larger scales, there is a need for better characterization of fault junctions and of the structure and mechanical properties of fault-jog materials, over or through which rupture jumps in transferring slip from one fault segment to another.

Key Questions

- Which small-scale processes (pore-water pressurization and flow, thermal effects and melt generation, geochemical alteration of minerals, solution transport effects, contact creep, microcracking and rock damage, gouge comminution and wear, gouge rolling) are important in describing the earthquake cycle of nucleation, dynamic rupture, and postseismic healing?

- What fault-zone properties determine velocity-weakening versus velocity-strengthening behavior? How do these properties vary with temperature, pressure, and composition?

- What rheologies govern the shallow deformation of fault zones? When does fault creep occur near the surface? Do lightly consolidated sediments allow distributed inelastic deformation?

- How does fault strength drop as slip increases immediately prior to and just after the initiation of dynamic fault rupture? Are dilatancy and fluid-flow effects important during nucleation?

- What is the nature of near-fault damage and how can its effect on fault-zone rheology be parameterized? Can damage during large earthquake ruptures explain the discrepancy between the small values of the critical slip distance found in the laboratory (less than 100 microns) and the large values inferred from the fracture energies of earthquakes and assumptions about the drop from peak strength for slip initiation to dynamic friction strength (5 to 50 millimeters if the strength drop is 100 megapascals, but an order of magnitude higher for 10 megapascals)?

- Are the broad damage zones observed for some faults relics of the evolution of a through-going fault system on what was a misoriented array of poorly connected fault segments that were reactivated or originated as joints? Do the damage zones result from misfit stresses generated by the sliding of surfaces with larger-scale fractal irregularities? Are they just passive relics or do they also play a significant role in the dynamics of individual events?

- How does fault-zone rheology depend on microscale roughness, mesoscale offsets and bends, variations in the thickness and rheology of the gouge zone, and variations in porosity and fluid pressures? How can the effects of these or other physical heterogeneities on fault friction be parameterized in phenomenological laws based on rate and state variables?

- How does fault strength vary as the slip velocities increase to values as great as 1 meter per second or more? How much is frictional weakening enhanced during high-speed slip by thermal softening at asperity contacts and by local melting?

- How do faults heal? Is the dependence of large-scale fault healing on time logarithmic, as observed over much shorter times in the laboratory? What small-scale processes govern the healing rate, and how do they depend on temperature, stress, mineralogy, and pore-fluid chemistry?

- How does rupture on a major fault interact with faults in the bordering regions? Is this interaction a source of intermittent rupture propagation and resulting enriched high-frequency radiated energy or of the spontaneous arrest of ruptures? Are the high seismically inferred fracture energies (on the order of 100 times laboratory values for initially intact rock under high confining stress) actually due to induction of extensive frictional inelasticity in that border zone? Is fracture energy misinterpreted as being due to slip weakening on a single major fault versus a network of dynamically stressed secondary faults?

- When does the rupture path follow a fault that branches off from the major failure surface? What is the role of pre-stress magnitudes and orientations and of the dynamically altered stress distribution near the rupture front? How do ruptures surmount stepovers? Are elastic descriptions adequate for the stepped-over material, or is there an essential role for damaged rock and smaller fault structures within the stepover region?
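The critical-slip-distance question above (5 to 50 millimeters for a 100-megapascal strength drop, ten times larger for 10 megapascals) follows from a simple scaling that can be sketched numerically. The sketch assumes a linear slip-weakening law, in which fracture energy equals one-half the strength drop times the critical slip distance; that law and the fracture-energy range used here (0.25 to 2.5 megajoules per square meter, back-computed so that the 100-megapascal case gives 5 to 50 millimeters) are illustrative assumptions, not values stated in the text.

```python
# Sketch: critical slip distance implied by a given fracture energy,
# assuming linear slip weakening: G_c = 0.5 * strength_drop * D_c.

def critical_slip_distance_m(fracture_energy_j_m2, strength_drop_pa):
    """Invert G_c = 0.5 * strength_drop * D_c for D_c (meters)."""
    return 2.0 * fracture_energy_j_m2 / strength_drop_pa


# Illustrative fracture-energy range (J/m^2); an assumption chosen so that
# a 100 MPa strength drop reproduces the 5 to 50 mm range quoted in the text.
FRACTURE_ENERGIES_J_M2 = (0.25e6, 2.5e6)


def slip_distance_range_mm(strength_drop_mpa):
    """Return (low, high) critical slip distances in millimeters."""
    return tuple(
        1e3 * critical_slip_distance_m(gc, strength_drop_mpa * 1e6)
        for gc in FRACTURE_ENERGIES_J_M2
    )


for drop_mpa in (100.0, 10.0):
    low, high = slip_distance_range_mm(drop_mpa)
    print(f"strength drop {drop_mpa:.0f} MPa: D_c from {low:.0f} to {high:.0f} mm")
```

Because the inferred slip distance is inversely proportional to the assumed strength drop, either range is two to four orders of magnitude above laboratory values of less than 100 microns.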

5.4 RUPTURE DYNAMICS

Earthquake rupture entails nonlinear and geometrically complex processes near the fault surface, generating stress waves that evolve into linear (anelastic) waves at some distance from the fault. Better knowledge of the physics of rupture propagation and frictional sliding on faults is therefore critical to understanding and predicting earthquake ground motion. Research on rupture processes may also contribute to improvements in earthquake forecasting because of the dynamical connection between the evolution of the stress field on interseismic time scales and the stress heterogeneities created and destroyed during earthquakes.

Rupture Initiation

The process leading to the localized initiation of unstable stick-slip in laboratory (82) and theoretical (83) models of the earthquake process is referred to as earthquake nucleation. In frictional fault models, stick-slip instabilities can begin only in regions where the progression of slip causes the fault friction to decrease. For the rate-state model, this situation corresponds to velocity weakening—when the steady-state friction µss decreases with velocity V:

$$\frac{d\mu_{ss}}{d(\ln V)} = a - b < 0 \qquad (5.1)$$

The dimensionless rate dependence a – b can vary with rock composition, temperature, and pressure. Equation 5.1 defines the condition at which earthquake nucleation can occur (Figure 4.30). However, a correspondence between the depth range at which earthquakes occur and the region where a – b is negative has not been confirmed by independent observations of velocity weakening, and there is no micromechanical theory that can be used to extrapolate laboratory data to crustal conditions. Nevertheless, the available lab information on the effect of temperature on the constitutive parameters, combined with inferred geotherms, suggests a reasonable degree of agreement between the depth at which a – b is expected to become positive and the depth at which earthquakes stop.
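The velocity-weakening condition can be made concrete with the logarithmic steady-state friction law that is standard in rate-state models, µss = µ0 + (a − b) ln(V/V0). The sketch below is illustrative only: the reference friction µ0 = 0.6 and reference velocity V0 = 1 micron per second are assumed values, and a = 0.004, b = 0.006 are borrowed from the parameters listed in the caption of Figure 5.3.

```python
import math


def steady_state_friction(velocity, a, b, mu0=0.6, v0=1e-6):
    """Steady-state rate-state friction: mu0 + (a - b) * ln(V / V0).

    mu0 and v0 are assumed reference values, not taken from the text.
    """
    return mu0 + (a - b) * math.log(velocity / v0)


def is_velocity_weakening(a, b):
    """True when steady-state friction decreases with slip velocity."""
    return (a - b) < 0.0


A, B = 0.004, 0.006  # values from the Figure 5.3 caption

slow = steady_state_friction(1e-6, A, B)
fast = steady_state_friction(1e-5, A, B)  # tenfold faster slip

print(f"velocity weakening: {is_velocity_weakening(A, B)}")
print(f"friction change per decade of velocity: {fast - slow:+.4f}")
```

With these parameters each tenfold increase in slip velocity lowers the steady-state friction by (b − a) ln 10, a small change compared with µ0 but enough to permit instability.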

As a fault is loaded, stress will fluctuate about the quasi-steady value τss = µssσn. Where stress is slightly higher than τss, the slip rate increases slightly, and occasionally a fluctuation will occur over a large enough area to initiate an instability. The criterion for instability is that the patch size be larger than a critical value Lc:

(5.2)

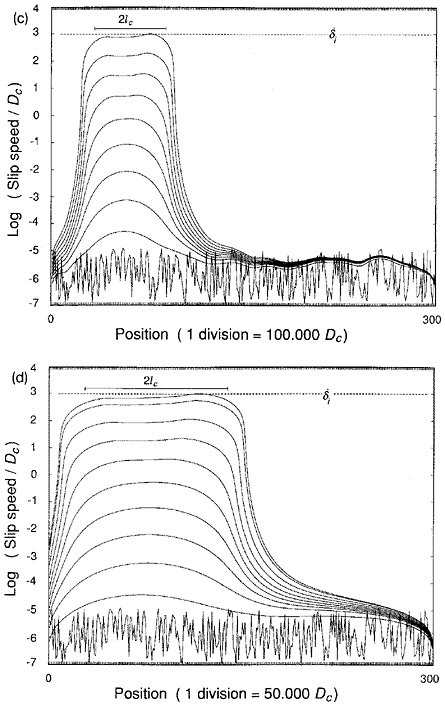

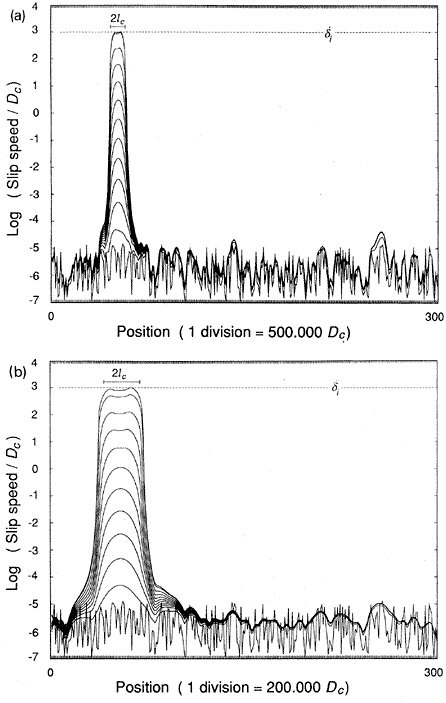

where G is the shear modulus. As nucleation begins, slip concentrates within a region of characteristic dimension Lc, and slip rate increases inversely with the time to instability (Figure 5.3). To what extent this type of behavior occurs in the Earth and what the size of Lc might be are two of the key questions in the science of earthquakes.
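To give a feel for the size of Lc, the sketch below evaluates a commonly used rate-state scaling for the critical patch size, Lc ≈ η G Dc / ((b − a) σn), where Dc is the critical slip distance, σn the normal stress, and η a geometry-dependent factor of order one. This form, the choice η = 1, and the numerical inputs (shear modulus 30 gigapascals, normal stress 100 megapascals, and the Dc values) are assumptions made for illustration; a and b are taken from the caption of Figure 5.3.

```python
def critical_patch_size_m(shear_modulus_pa, dc_m, a, b, normal_stress_pa,
                          geometry_factor=1.0):
    """Assumed scaling: L_c = eta * G * D_c / ((b - a) * sigma_n)."""
    if b <= a:
        raise ValueError("velocity strengthening (a >= b): no instability")
    return geometry_factor * shear_modulus_pa * dc_m / ((b - a) * normal_stress_pa)


SHEAR_MODULUS = 30e9   # Pa, assumed crustal value
NORMAL_STRESS = 100e6  # Pa, assumed
A, B = 0.004, 0.006    # from the Figure 5.3 caption

# Laboratory-scale critical slip distances (10 and 100 microns) versus
# a seismologically inferred one (5 mm); all three are illustrative inputs.
for label, dc in (("lab, 10 microns", 10e-6),
                  ("lab, 100 microns", 100e-6),
                  ("inferred, 5 mm", 5e-3)):
    size = critical_patch_size_m(SHEAR_MODULUS, dc, A, B, NORMAL_STRESS)
    print(f"D_c = {label}: L_c is about {size:.1f} m")
```

Under these assumptions laboratory values of Dc give patch sizes of meters to about ten meters, whereas millimeter-scale values give hundreds of meters, which is why the size of Dc on natural faults matters so much for the detectability of nucleation.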

Earthquake nucleation is difficult to observe on faults in the Earth for two reasons. First, it is predicted to occur only over a spatially limited nucleation zone. If this zone is small, it will be difficult to detect. Second, nucleation may be a largely aseismic process such that it will not generate seismic waves. There are, however, observations that constrain possible models of earthquake nucleation, and these can be grouped into two classes: those that suggest the nucleation zone is small and those that suggest the nucleation zone is large.

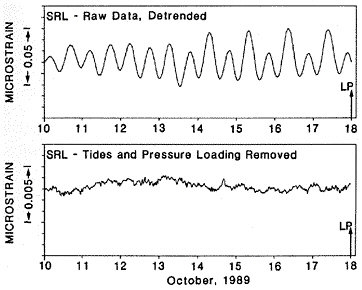

Several types of observations point to a small nucleation zone (Lc less than 100 meters). Borehole strainmeter data provide the most sensitive measurements of small strain signals in the near field. These data show no evidence of strain precursors at levels that correspond to about 1 percent of the mainshock seismic moment (84). This suggests that the nucleation zone and the amount of slip within it must be small (Figure 5.4). A second line of evidence comes from rupture dimensions of the smallest earthquakes, which place an upper bound on the size of the nucleation zone since slip over an area less than Lc must be stable. Microearthquakes on the San Andreas fault recorded on the downhole instruments of the deep Cajon Pass borehole have source dimensions of about 10 meters (85). This places an upper bound on the size of the nucleation zone, at least locally, though fault roughness, gouge thickness, and apparent normal stress all affect Lc and will vary spatially. If the laboratory parameters for smooth faults applied to faults in nature, the minimum earthquake size would be on the order of 1 to 10 meters. Direct evidence for a lower-magnitude cutoff at the upper end of this range (near M 0) comes from the microseismicity observed by sensitive networks in the deep gold mines of South Africa (86).
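Two of the numbers in this argument can be checked with standard seismological relations. The sketch below uses the Hanks-Kanamori moment-magnitude relation and the circular-crack (Eshelby) relation between moment, stress drop, and source radius. The inputs are assumptions: a moment magnitude of 6.9 for the Loma Prieta mainshock (the commonly cited value), a 5-meter radius for a source "about 10 meters" across, and a 3-megapascal stress drop taken from within the typical 1 to 10 megapascal range.

```python
import math


def moment_from_magnitude(mw):
    """Hanks-Kanamori relation: M0 [N m] = 10 ** (1.5 * Mw + 9.1)."""
    return 10.0 ** (1.5 * mw + 9.1)


def magnitude_from_moment(m0):
    """Inverse of the Hanks-Kanamori relation (M0 in N m)."""
    return (math.log10(m0) - 9.1) / 1.5


def circular_crack_moment(radius_m, stress_drop_pa):
    """Circular crack: M0 = (16 / 7) * stress_drop * radius ** 3."""
    return 16.0 / 7.0 * stress_drop_pa * radius_m ** 3


# Largest undetected aseismic precursor (M 5.3) relative to an assumed
# Mw 6.9 mainshock.
precursor_fraction = moment_from_magnitude(5.3) / moment_from_magnitude(6.9)

# Magnitude of a source with an assumed 5 m radius and 3 MPa stress drop.
micro_mw = magnitude_from_moment(circular_crack_moment(5.0, 3e6))

print(f"precursor moment / mainshock moment: {precursor_fraction:.2%}")
print(f"magnitude of a 10 m wide source: {micro_mw:.1f}")
```

Under these assumptions the precursor bound is well below 1 percent of the mainshock moment, and a source about 10 meters across corresponds to a magnitude close to zero.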

FIGURE 5.3 Numerical simulation of earthquake nucleation on a fault governed by rate-state friction with A = 0.004, B = 0.006, θ = 10⁹ s, G = 10⁴σ, τ̇ = 0, and initial shear stress from (µ′0 + 0.06)σ to (µ′0 + 0.08)σ. The fault is discretized with 300 elements and initialized with random stress fluctuations. Lines show the slip speeds at successive times in the calculation; the time intervals are decreased by one order of magnitude for every order-of-magnitude increase in slip velocity. SOURCE: J.H. Dieterich, Earthquake nucleation on faults with rate- and state-dependent strength, Tectonophysics, 211, 115-134, 1992. With permission from Elsevier Science.

FIGURE 5.4 Preseismic strain observations of the 1989 Loma Prieta earthquake as recorded at San Juan Bautista. Upper panel shows a week of dilational strains with the oscillatory solid Earth tides. The lower plot presents the same record with Earth tides and atmospheric loading removed. The absence of a preseismic signal constrains the moment magnitude of an aseismic precursor to be no more than M 5.3, or less than 1 percent of the mainshock seismic moment. SOURCE: M.J.S. Johnston, A.T. Linde, and M.T. Gladwin, Near-field high-resolution strain measurements prior to the October 18, 1989, Loma Prieta Ms 7.1 earthquake, Geophys. Res. Lett., 17, 1777-1780, 1990. Copyright 1990 American Geophysical Union. Reproduced by permission of American Geophysical Union.

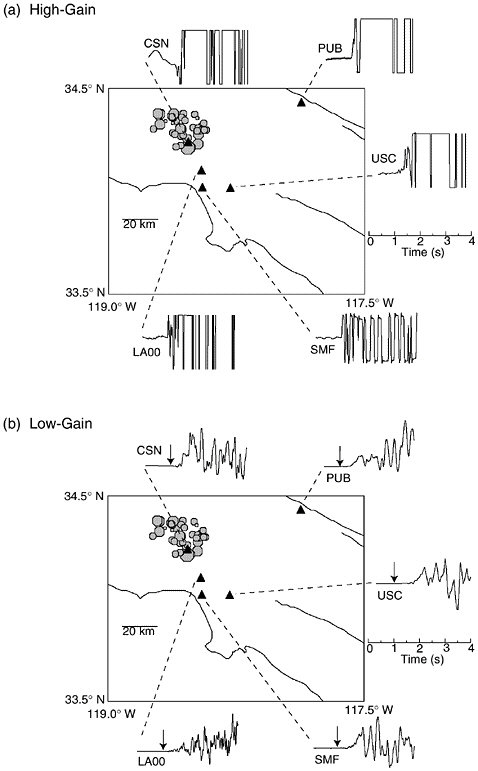

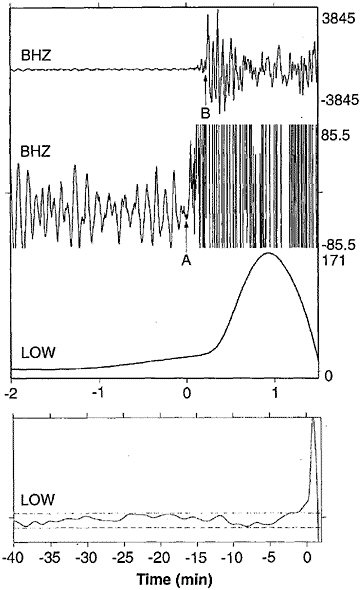

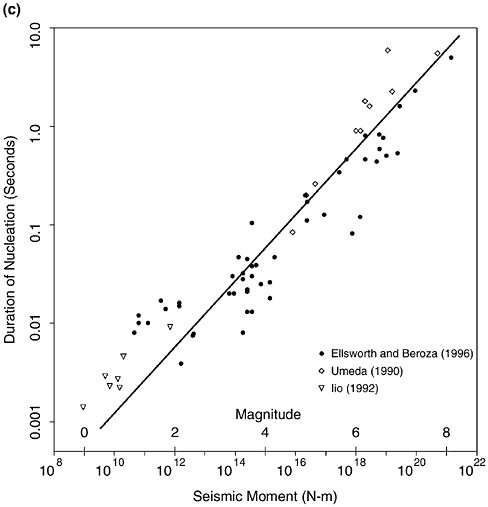

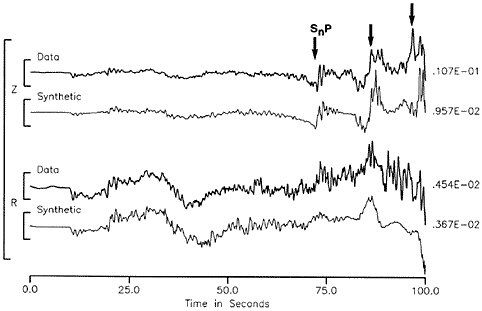

Several lines of evidence argue for a large nucleation zone (Lc greater than 100 meters). The low-frequency spectra for some earthquakes show a slow component that may precede the first detectable high-frequency waves by tens of seconds (87). For these events, there may be a gradual transition from aseismic nucleation to unstable rupture (88) (Figure 5.5). The character of the onset of microearthquakes suggests that very small events also begin with a slow onset that scales in duration with the overall source duration (89). The first arriving seismic waves of moderate to large events in the near field often show an initial phase of irregular growth (90). The duration of this phase shows a similar scaling with earthquake size as reported for the slow initial phase (Figure 5.6). If this phase represents the tail end of a process that is otherwise aseismic, then the dimensions of the nucleation zone are substantial.

FIGURE 5.5 Vertical component P-wave seismograms from the 1994 Romanche Transform earthquake at low-noise GEOSCOPE station TAM. Top two panels show broadband trace at two magnifications and third panel shows a detided, low-pass filtered version of the same data revealing the precursory ramp beginning at least 1 minute before the high-frequency origin time. Lowermost panel shows filtered, detided data at a longer time scale indicating that the signal emerges from the background noise level (dashed lines). SOURCE: J. McGuire, P. Ihmle, and T. Jordan, Time-domain observations of a slow precursor to the 1994 Romanche Transform earthquake, Science, 274, 82-85, 1996. Copyright 1996 American Association for the Advancement of Science.

FIGURE 5.6 Left two panels show map view of the Northridge mainshock and aftershocks as filled octagons, with seismic stations as triangles. (a) Vertical component velocity seismograms of the initial P waves of the Northridge mainshock. SMF is a high-gain record that quickly clips, while the other traces are artificially clipped to simulate limited dynamic range recording and to show that the onset of the first P wave at this scale is abrupt. (b) Low-gain recordings of the initial P waves of the Northridge mainshock at the same sites. In each case the arrow indicates the first arriving waves shown in the upper panel. The onset, though abrupt, remains weak until about 0.5 second into the mainshock. Similar behavior is observed before other earthquakes. (c) The duration of the weak initial onset, or seismic nucleation phase, for earthquakes from several studies indicates that the seismic nucleation phase (vertical axis) scales with the seismic moment (horizontal axis) of the mainshock. SOURCE: G.C. Beroza and W.L. Ellsworth, Properties of the seismic nucleation phase, Tectonophysics, 261, 209-227, 1996. With permission from Elsevier Science.

Foreshocks provide the clearest evidence of a preparation process before at least some earthquakes. Approximately 40 percent of earthquakes are preceded by at least one observable foreshock (91). Foreshock sequences are more common and are more protracted for earthquakes initiating at shallow depths, which is consistent with an expected decrease in frictional stability with decreasing normal stress (92). Foreshock frequency is observed to increase as t⁻¹, where t is the time before the mainshock (93). In at least some cases, foreshock sequences were unlikely to have triggered the mainshock (94). Instead, some other process, such as aseismic nucleation, may have driven both the foreshocks and the mainshocks to failure.
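One consequence of a foreshock rate that grows as the inverse of the time remaining before the mainshock can be sketched in a few lines: integrating K/t between two times gives K ln(t_far/t_near), so every decade of time before the mainshock is expected to hold the same number of foreshocks. The productivity constant K below is a hypothetical value used only for illustration.

```python
import math


def foreshock_rate(time_before_mainshock, k=1.0):
    """Inverse-time rate law: events per unit time at time t before the mainshock."""
    return k / time_before_mainshock


def expected_count(t_near, t_far, k=1.0):
    """Integral of k / t from t_near to t_far (times before the mainshock)."""
    return k * math.log(t_far / t_near)


K = 2.0  # hypothetical productivity constant

# Expected foreshocks in successive decades of time before the mainshock
# (for example, 1 to 10 hours, 10 to 100 hours, 100 to 1000 hours).
decades = [(1.0, 10.0), (10.0, 100.0), (100.0, 1000.0)]
counts = [expected_count(near, far, K) for near, far in decades]

for (near, far), count in zip(decades, counts):
    print(f"{near:6.0f} to {far:6.0f} time units before mainshock: {count:.2f} events")
```

Equal counts per decade mean that the activity concentrates sharply in absolute time as the mainshock approaches, even though each logarithmic interval contributes equally.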