2

The Current State: Opportunities for Applying Land Remote Sensing to Human Welfare

Remotely sensed land use and land cover data, in combination with other types of data, could profoundly influence decisions on human welfare, such as those related to subsistence and precision agricultural techniques, animal and human health, poverty mapping, and related environmental variables such as biodiversity.1 Satellite data have the potential to reveal human, agricultural, and environmental interactions on a range of scales. The data could influence decisions addressing many health, agricultural, and environmental change problems threatening the sustainability of some of the planet’s most disadvantaged communities. This section summarizes presentations made at the workshop, including an overview of the use of land remote sensing for human welfare applications, and case studies in the food security and human health domains.

SOURCES OF REMOTELY SENSED DATA

Space-based remotely sensed data are obtained via several satellite platforms. Data obtained through satellite sources (listed in Box 2-1) provide a means of linking issues of the environment (such as resources and infrastructure), disease (such as malaria or cholera), and poverty (such as nutrition). Medium-resolution Landsat and SPOT data have traditionally

|

BOX 2-1 Examples of Remote Sensing Platforms Meteosat is a European, geostationary, earth observation satellite positioned to cover Europe and Africa. It uses both visible and infrared wavelengths to display weather-oriented imaging of the planet. Meteosat is one of several geostationary satellites positioned around the equator to cover the Earth’s surface. Meteosat is operated by the European Organization for the Exploitation of Meteorological Satellites (EUMETSAT), formerly an entity of the European Space Agency. Advanced Very High Resolution Radiometer (AVHRR) is a radiation detection imager used by the U.S. National Oceanic and Atmospheric Administration (NOAA) to remotely determine cloud cover and the temperature of land, water, and sea surfaces with global daily coverage at approximately 1 km spatial resolution. The polar-orbiting series of satellites carrying AVHRR provides regional and global data useful for tracking forest fires and hurricanes. The data have also been used to determine land cover based on phenological variations in vegetation. Systeme Pour l’Observation de la Terre (SPOT) is a remote sensing system integrating a series of optical remote sensing satellites to provide Earth imagery at resolutions from 2.5 to 20 m for commercial use in various fields including land use planning, agriculture, forestry, geology, and water resources. The SPOT-VEGETATION sensor provides daily coverage at 1 km resolution. SPOT satellites are operated by the French Space Agency, Centre National d’Etudes Spatiales. Medium Resolution Imaging Spectrometer (MERIS) is an imaging spectrometer satellite that primarily measures sea color in oceans and coastal areas via solar radiation reflected by the Earth. Ocean color data provide information about the ocean carbon cycle and thermal regimes of the upper ocean. This information is useful for scientific investigations as well as management of fisheries and management of coastal zones. MERIS is funded by the European Space Agency. |

been used to examine detailed environments from space. Satellite data collected by the U.S. National Oceanic and Atmospheric Administration (NOAA) and from the National Aeronautics and Space Administration (NASA) Earth Observation System provide daily imagery of coarser spatial resolution, which is nevertheless useful for understanding seasonal events associated with agriculture and the transmission of many diseases. Such data reveal large- or small-scale patches of environmental similarity (spatial clusters of similar climate, soil, or topography are labelled “eco-

|

Moderate Resolution Imaging Spectroradiometer (MODIS) is a sensor used by the National Aeronautics and Space Administration (NASA) to detect electromagnetic energy at 250 to 1,000 m spatial resolution. The images provide a complete electromagnetic picture of the globe every two days, allowing rapid biological and meteorological changes to be captured. MODIS is part of the Earth Observation System, a multinational and multidisciplinary mission managed by NASA to study earth-sea-atmosphere interactions in order to understand their singular and cumulative effects on global climate change and the environment. Landsat satellites capture high-quality, moderate-resolution images of the Earth’s land mass, coastal boundaries, and coral reefs every 16 days at approximately 30 m spatial resolution. The space-based land remote sensing data are acquired to support observation of changes on the Earth’s land surface and surrounding environment. The Landsat program is jointly managed by NASA and the U.S. Geological Survey. IKONOS is a commercial earth imaging satellite collecting high-resolution data. This satellite orbits the Earth every 98 minutes and provides images with 1 and 4 m resolution. QuickBird is a high-resolution commercial satellite owned and operated by DigitalGlobe. With a Ball Global Imaging System 2000 sensor, QuickBird collects imagery at a pixel resolution of 0.61 m. This satellite is an excellent source of environmental data (useful for analyses of changes in land usage, agriculture, and forest climates) and of social data, such as house size, lot size, quality of roofing, and presence of cars, all of which reveal information about the vulnerability of the occupants to risk. SOURCES: ESA, 2006; EUMETSAT, 2006; NASA, 2006; NCAR, 2006; NOAA, 2006; Satellite Imaging Corporation, 2006; Space Imaging, 2006; Spot Image, 2006; USGS, 2006. |

zones”) and thus provide a unique view of the environmental context of human activities over broad regions.

MONITORING FOR FOOD PRODUCTION AND SECURITY

Workshop participants considered the current and future opportunities for applying remote sensing in the context of improving food security worldwide. The workshop considered food security as sufficient agricultural productivity and access to food. Two extremes of technological intensity in agricultural systems were considered. The first extreme is the use of precision agriculture to maximize crop yields. Precision agriculture, or variable rate technology (VRT), combines the tools of global positioning system (GPS) technologies, remote sensing tools, geographic information systems (GIS) data, communications, and spatial statistics to identify variations within an agricultural field that can be considered individual management units. The second extreme is the avoidance or mitigation of food crises and famine in areas reliant on subsistence agriculture. In developing countries, remote sensing applications are useful for predicting and minimizing breakdowns in food production that often cause food crises and famine. Because it takes a considerable amount of time for remote sensing technologies to evolve into applications useful for human welfare, workshop participants also considered the history of the use of remotely sensed data as applied to agriculture.
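The idea of treating within-field variation as separate management units can be illustrated with a minimal sketch. Everything specific here is an assumption made for illustration: the per-cell vigor values, the two cutoffs, the three zone names, and the application rates are hypothetical and do not come from the workshop or from any agronomic recommendation.

```python
def assign_management_zones(cell_values, low_cutoff, high_cutoff):
    """Label each field cell as 'low', 'medium', or 'high' using two cutoffs."""
    zones = []
    for value in cell_values:
        if value < low_cutoff:
            zones.append("low")
        elif value < high_cutoff:
            zones.append("medium")
        else:
            zones.append("high")
    return zones


# Hypothetical application rates (kg per hectare) for each management zone.
RATE_BY_ZONE = {"low": 120, "medium": 90, "high": 60}

# Hypothetical remotely sensed vigor values for six cells of one field.
field_vigor = [0.25, 0.40, 0.62, 0.71, 0.33, 0.55]

zones = assign_management_zones(field_vigor, low_cutoff=0.35, high_cutoff=0.60)
prescription = [RATE_BY_ZONE[zone] for zone in zones]
```

In practice the cutoffs and rates would come from soil maps, yield records, and local agronomic advice rather than fixed constants.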

The History of Remotely Sensed Data in Agriculture

Data from the Landsat series of sensors have been the cornerstone of remote sensing applications for monitoring agricultural yields since the 1970s.2 A series of large application programs were initiated in preparation for Landsat. The U.S. Landsat-based agricultural remote sensing programs were driven by crop identification and area estimation programs such as the Corn Blight Watch Experiment (MacDonald et al., 1972), the Large Area Crop Inventory Experiment (MacDonald and Hall, 1980), and the Agriculture and Resources Inventory Surveys Through Aerospace Remote Sensing (AgRISTARS).

The Corn Blight Watch Experiment was developed in response to the southern corn leaf blight (SCLB) fungus, first noted in cornfields in the southern and midwestern United States in 1970. A highly virulent strain of SCLB caused extensive and widespread damage to corn crops in those states and destroyed approximately 15 percent of the U.S. corn crop (Zadoks and Schein, 1979). This provided an opportunity for multiple agencies (e.g., NASA and the U.S. Department of Defense) to evaluate remote sensing technologies using the high-altitude Air Force RB57 aircraft equipped with cameras to collect data on selected flight-lines over an eight-state area in the Midwest (Illinois, Indiana, Iowa, Minnesota, Missouri, Nebraska, Ohio, and Wisconsin). From a practical standpoint, the experiment failed to detect the SCLB until the corn crop was severely damaged. However, it did demonstrate the importance of temporal coverage for crop identification and the performance of timely analyses of vegetative growth. More importantly, the results illustrated the feasibility of using aircraft multi-spectral photographic and scanner data for crop identification and condition assessment, and the potential of remote sensing techniques in detecting crop disease (Bauer et al., 1971).

After the launch of the Landsat mission, the Large Area Crop Inventory Experiment (LACIE) of the 1970s was initiated by NASA, the National Weather Service, and the U.S. Department of Agriculture (USDA) to create and test a wheat crop forecasting system. The crop monitoring system was designed to provide information on the area planted with a particular crop and on crop health, based on temporal remotely sensed data and ancillary data such as weather data and USDA reports. While the program called attention to the possibilities for developing various useful applications of remote sensing data, the project did not anticipate problems of differentiating crops from satellite imagery (Mitchell, 1976). Like the Corn Blight Watch Experiment, LACIE never matched the ambitious goals set by its sponsors (Mack, 1990); however, it similarly brought to light the capabilities of remote sensing technologies. It could be argued that, with the advances made since then, both LACIE and the Corn Blight Watch Experiment would succeed if conducted today.

The AgRISTARS program of the 1980s expanded on the LACIE partnerships to examine wheat, corn, and rice on a global basis (NASA, 1981). The AgRISTARS program was successful in demonstrating the value of timely data and limited ground reference information for identifying crops and predicting yield. The USDA Foreign Agriculture Service still uses the approaches developed from AgRISTARS.

The Corn Blight Watch Experiment, LACIE, AgRISTARS, and Famine Early Warning System Network (FEWS NET) programs recognized the utility of multi-temporal remotely sensed data for consistent and accurate crop identification, and firmly established the feasibility of using multi-spectral scanner data and digital analysis techniques for crop identification and area estimation. The Landsat System whetted the appetite for satellite data for many uses, especially in the research community. However, plans for operational use of the Landsat platform were weakened because of the inability to sustain operations with only one operating satellite, changes in management, and other issues.

Applications of Remote Sensing for Agricultural Monitoring and Support

The use of remotely sensed data for agricultural applications in the industrialized world, including precision farming, was presented by Chris J. Johannsen, professor emeritus of agronomy at Purdue University, and is summarized in Box 2-2. Dr. Johannsen showed workshop participants how farmers have used remote sensing data to more effectively manage their farms, with specific mention of the use of Landsat data for the Corn Blight Watch Program (CBWP) and the AgRISTARS program focused on global wheat, corn, and rice production.

The presentation provided the context for subsequent discussion focused on opportunities for more contemporary sensor technologies for agricultural monitoring. In particular, the availability of high-resolution imagery (e.g., less than 5 m spatial resolution) from commercial vendors has provided a better match to the scale of data that individual farmers need for farm-level monitoring. IKONOS and QuickBird data provide the opportunity for high-resolution crop monitoring, but the high cost of data limits the extent to which these technologies are actually used. While the application of high-resolution technology for frequent crop monitoring may not be feasible for agriculturalists in less developed countries because of these cost issues, the ability of remotely sensed data to provide general data on soils could offer immediate benefits. Workshop participants voiced some concern regarding the current number of sensors in orbit applicable to crop monitoring and the uncertainty of funding for future remote sensing missions with an emphasis on agricultural uses.

Monitoring of Subsistence Agriculture

Food production and income generating activities are far more complex among systems labelled subsistence agriculture than that term implies. Farming in such systems invariably involves consumption production (food for the household) but may also involve small-scale commercial production. In addition, such households increasingly rely on off-farm employment, remittances, and other non-food-producing activities. Typically, the more subsistence oriented the farming is, the more that system is constrained by the biophysical and socioeconomic environments in which it exists.

Remotely sensed data, in combination with other types of data, can reveal valuable information about environmental conditions that can subsequently impact the livelihoods of farmers. Environmental constraints on subsistence agriculture are revealed by comparing spatial distributions of agricultural indicators—such as the local abundance of humans, crops,

|

BOX 2-2 Land Remote Sensing Applications for Agricultural Support Chris J. Johannsen, Purdue University Precision agriculture enables farmers to predict and maximize crop yields and determine the extent of damage from storms or other events. The basic premise of precision farming is that identified variations within an agricultural field can be considered individual management units. VRT can be applied to the specific management units within a field by adjusting tillage according to soil type, adjusting seed variety and rates, and adjusting the rates of fertilizer or pesticide application. Adoption of GPS-based auto steer guidance systems can increase the accuracy of seeding and pesticide applications. Remotely sensed data can be used to determine vegetation activity and the locations of drought-prone areas, areas where growth tends to be favorable, areas to keep fallow, and areas prone to weed or insect infestation. Thirty percent of farmers who are equipped with yield monitors are effectively recording and using yield data, while the other seventy percent observe differences in yield but do not keep records (Lowenberg-DeBoer and Erickson, 2001). Remotely sensed data can potentially play an important part in an early warning system (EWS) established to identify and notify farmers of agricultural pollutants or other problems that put their crops at risk. The U.S. Environmental Protection Agency (EPA) is implementing a three-phase program to test and evaluate early warning systems for drinking water infrastructures (EPA, 2005). The EWS setup for water quality assessment may include automated monitoring for contamination, with data transmitters communicating data via direct wire, phone line, radio, or satellite to data acquisition systems. Such systems would be able to validate, store, and analyze data by means of flow predictive models and GIS. 
When an appropriate alarm is triggered, the information is transmitted to decision makers who can then notify and orchestrate the appropriate response to the event. There are barriers to practical application of EWS: EWS cannot be established everywhere to monitor all potential contaminants at all times. Most EWS are currently deployed in source water to detect a contaminant, where contamination has already been found. When analyzing data, most hydrological models assume uniform conditions for large areas of land. The greatest barrier to automated EWS, however, is the cost of transmitting data on a real-time basis. Potential applications of remotely sensed data depend on the types and values of crops, geographic features such as soils of the area being farmed, farmer’s use of fertilizer, irrigation and other types of management, the remote sensing expertise available to the farmer, and the timeliness of remotely sensed data. Cost is a large barrier to the application of precision farming. Most applications occur when appropriate data are available at the right time, at an affordable cost, and when there is access to expertise to use the data. Progress is steadily being made with improvements in data quality, user-friendliness of software, and access and affordability of remotely sensed data. To expand the application of precision farming, systems have to become more automated, data and software formats must become more uniform to allow data sharing, and continued sources of application funding will also be required. In addition, more providers of remotely sensed data are required, and a greater emphasis on data calibration within all parts of the VRT system is needed. |

and cattle—with spatial distributions of ecozones, which are defined by similar seasonal patterns of vegetation, soil, or climate. Correspondence analysis commonly shows exceptionally good correlation between agricultural indicators and ecozones. Human farming systems are vulnerable to climate variability and other forces in much the same way as habitats and other ecological features are. By identifying particularly vulnerable ecozones, informed development policies aimed at reducing food insecurity may be formulated.
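How closely an agricultural indicator follows ecozone boundaries can be quantified in several ways. The sketch below does not implement correspondence analysis, the method named in the text; it substitutes a simpler statistic, the correlation ratio (eta squared), which measures the share of an indicator's variance explained by ecozone membership. The ecozone labels and cattle densities are made-up values for illustration only.

```python
from collections import defaultdict


def correlation_ratio(zones, values):
    """Share of the variance in `values` explained by zone membership (0 to 1)."""
    grand_mean = sum(values) / len(values)
    by_zone = defaultdict(list)
    for zone, value in zip(zones, values):
        by_zone[zone].append(value)

    # Variation of the zone means around the grand mean, weighted by zone size.
    ss_between = sum(
        len(group) * (sum(group) / len(group) - grand_mean) ** 2
        for group in by_zone.values()
    )
    ss_total = sum((value - grand_mean) ** 2 for value in values)
    return ss_between / ss_total if ss_total else 0.0


# Hypothetical survey cells: ecozone label and cattle density (head per square km).
ecozones = ["arid", "arid", "arid", "savanna", "savanna", "savanna",
            "humid", "humid", "humid"]
cattle = [2.0, 3.0, 4.0, 20.0, 22.0, 24.0, 8.0, 9.0, 10.0]

eta_squared = correlation_ratio(ecozones, cattle)
```

A value near 1 means the indicator varies mostly between ecozones rather than within them; a value near 0 means the ecozone map says little about the indicator.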

The Famine Early Warning System (FEWS)

Remote sensing technologies can be used, along with other data collection methods, to monitor agriculture in developing countries with populations dependent on subsistence agriculture; these applications focus on the need to avoid food crises. Monitoring circumstances that can lead to an extreme breakdown in food production is a primary concern. Workshop participants considered the use of remote sensing technologies to monitor food security in Africa via the Famine Early Warning System Network (FEWS NET) (see Box 2-3 for a history of FEWS and FEWS NET).

Signs of imminent famine can be identified by combining remotely sensed data, precipitation data, and surface water data to characterize and model hazards threatening vulnerable livelihoods. Conventional ground-based networks of hydrometeorological data are sparse and unable to provide data quickly enough to be effective in predicting famine (Washington et al., 2004). Remotely sensed data, combined with numerical modeling and GIS, are important tools for FEWS NET (see Box 2-4 for current FEWS NET programs).

Monitoring rain-fed agriculture and rangeland conditions is a key input to food security assessment in sub-Saharan Africa. Satellite remote sensing has played a vital role since the 1980s by complementing sparse conventional surface climate monitoring networks. Estimates of vegetation vigor, rainfall distribution, and surface water supplies are forthcoming from current systems. Future remote sensing missions, planned and proposed, promise to provide increasingly higher-quality coverage in terms of spatial resolution, frequency of acquisition, and sensor technology. Full implementation of these missions will be vital to famine early warning in Africa because surface climate monitoring networks, unfortunately, continue to weaken and will not likely improve any time soon.
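A vegetation vigor estimate of the kind mentioned above is often derived from a vegetation index. As a minimal sketch, the code below uses the Normalized Difference Vegetation Index (NDVI), a widely used index computed from near-infrared and red reflectance; the text does not say which index or thresholds the monitoring systems use, so the reflectance values, the baseline years, and the drought cutoff are all illustrative assumptions.

```python
def ndvi(nir, red):
    """Normalized Difference Vegetation Index from two band reflectances."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; treat as no vegetation
    return (nir - red) / total


def ndvi_anomaly(current, baseline_values):
    """Current NDVI minus the mean NDVI of the same period in earlier years."""
    baseline_mean = sum(baseline_values) / len(baseline_values)
    return current - baseline_mean


# Hypothetical reflectances (0 to 1) for one pixel, same 10-day period each year.
history = [ndvi(0.45, 0.10), ndvi(0.50, 0.10), ndvi(0.48, 0.12)]
this_year = ndvi(0.30, 0.15)

anomaly = ndvi_anomaly(this_year, history)
DROUGHT_THRESHOLD = -0.1  # illustrative cutoff, not an operational value
possible_drought = anomaly < DROUGHT_THRESHOLD
```

Comparing each period with its own multi-year baseline, rather than with a fixed value, keeps normal seasonal browning from being flagged as drought.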

While the environmental conditions monitored by remote sensing are critical, experience with FEWS reveals that these data are most useful in combination with socioeconomic data on the livelihoods of local populations. The combination of environmental data and knowledge of

|

BOX 2-3 FEWS and FEWS NET In response to the 1985 Ethiopian famine that resulted in more than 1 million deaths and an outpouring of worldwide aid, most of which arrived too late, the U.S. Agency for International Development (USAID) established the Famine Early Warning System in an effort to prevent future food security disasters. Beginning in 1986, FEWS provided timely and accurate information about drought-induced famine conditions in Africa so that decision makers could address food shortages and other causes of food insecurity. While the FEWS mission to improve food security in 17 drought-prone African countries remained the same, the need arose to establish more effective, sustainable, and African-led food security and response planning networks that reduce the vulnerability of groups to both famine and flood situations. In July 2000, the U.S. government built upon earlier FEWS operations to create a partnership-based Famine Early Warning System Network. The U.S. Geological Survey (USGS) Earth Resources Observation and Science (EROS) Data Center (USGS/EDC), in cooperation with USAID, NASA, NOAA, and Chemonics International Incorporated, works to provide the data, information, and analyses needed to support FEWS NET activity. NASA and NOAA collect and process satellite data that provide the spatial coverage and temporal frequency necessary for monitoring both vegetation condition and rainfall occurrence throughout the entire African continent. Chemonics maintains staff in 17 African countries to provide ground-based input into FEWS NET activity. Chemonics is ultimately responsible for providing warning of potential problems. FEWS-Chemonics publishes a monthly bulletin distributed to decision makers around the world. The USGS/EROS Data Center provides technical support services to FEWS in the use of remote sensing and GIS technologies, and provides long-term data archive and distribution services. 
The African Data Dissemination Service provides Internet access to the data collected for FEWS NET activity. SOURCE: FEWS NET, 2006. |

livelihoods and coping capabilities determines vulnerability to local food shortages.

Box 2-4 summarizes a presentation made by James Verdin of the U.S. Geological Survey on how FEWS NET uses and treats remotely sensed data in its efforts to predict incidents of food insecurity. Dr. Verdin concluded that future missions using remote sensing platforms to track surface and environmental variables would greatly benefit the ability to monitor food security in Africa and much of the planet.

Workshop participants noted the success of FEWS NET in applying remote sensing data and, more recently, integrating these data with

|

BOX 2-4 Famine Early Warning System Network: Use of Remote Sensing to Monitor Food Security in Africa James Verdin, U.S. Geological Survey The Famine Early Warning System Network interprets vulnerability and food security hazards to populations by quantifying which populations face food insecurity and for how long. Remotely sensed data are used to fill in the gaps in ground-based observations, as the routine monitoring of rainfall, vegetation, crops, and market prices is made more meaningful by assembling information on how households access food and income. Remotely sensed data are used to develop vegetation index imagery to identify changes in seasonal landscape that could indicate drought (Hutchinson, 1991). Below are some of the tools used by FEWS NET to measure and analyze conditions in Africa:

|

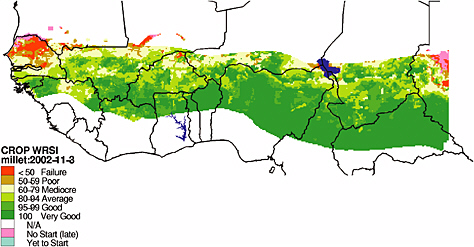

FIGURE: Map of the Water Requirement Satisfaction Index (WRSI) for the Sahelian countries of West Africa, 2002. Intervals of WRSI correspond to levels of crop performance. Growing conditions for millet that year were especially poor for northern Senegal and southern Mauritania. SOURCE: FEWS NET, 2004. Image provided by Gabriel Senay, USGS. Used with permission.

|

livelihood analysis. Participants did not have information on the actual effectiveness of the information on decision making and the degree to which this information has reduced food insecurity. Such information would be needed for a full evaluation of this remote sensing application.

Remote sensing platforms supply much of the data used in famine early warning. However, remotely sensed data are useful for predicting food shortages and vulnerabilities only when combined with other environmental, political, economic, and social data. Richard Choularton, contingency and response planning advisor at FEWS NET, presented the use of remotely sensed data to provide actionable information to decision makers trying to prevent or respond to famines and food crises. FEWS NET focuses on how populations in different areas access food and income and how they spend their income. FEWS NET combines socioeconomic data with remotely sensed data and other monitoring tools to estimate the likely impact of hazards such as drought, floods, or locusts on populations. Box 2-5 summarizes Richard Choularton’s presentation to the workshop participants.

MONITORING FOR HUMAN HEALTH

Human health in the broad sense results from a combination of multiple factors including food production, availability, and distribution; environmental health hazards; contagious and infectious diseases; chronic health issues; and health care delivery. All of these factors have traditionally been considered separately by a variety of organizations. Workshop participants viewed the potential for land remote sensing to help integrate these many factors.

Applications for Human Health

Infectious diseases affecting humans and animals in the tropics have spatially and seasonally varying impacts that can be studied using satellite data. There are two ways to use remotely sensed data in human health studies: process-based models and pattern-based models. Both are useful and important. Process-based, or mechanistic, models simulate the underlying biological processes of disease transmission and spread, and are applied to predict the outcome of different land use, environmental, or policy scenarios. For most diseases, however, the current understanding of the processes is insufficient; thus, statistical, or pattern-based, approaches are used to build confidence in the understanding of how the processes work. Statistical approaches reveal associations among different variables but do not determine the cause-and-effect relationships required to build process-based models. Nevertheless, a careful application of pattern-based approaches can suggest fundamentally important links between environmental drivers (including climate) and demographic processes (birth, death, infection, recovery). These links can then begin to inform a process-based modelling approach. The pattern-based technique is extremely flexible and achieves respectable levels of accuracy with remarkable consistency. Satellite data underpin the pattern-based approach to infectious disease modelling by providing environmental data over large areas at multiple spatial and temporal scales. Without the large spatial or temporal extents provided by remotely sensed data, the process-based modelling approach would be extremely difficult.
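A pattern-based model in the sense described above can be as simple as a statistical fit between a satellite-derived variable and recorded disease presence. The sketch below fits a one-variable logistic regression by plain gradient descent; the choice of logistic regression, the vegetation index covariate, and the site data are assumptions made for illustration, and the fitted association says nothing about cause and effect.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(xs, ys, learning_rate=0.5, steps=2000):
    """Fit P(disease present) = sigmoid(w * x + b) by gradient descent."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            error = sigmoid(w * x + b) - y  # predicted probability minus outcome
            grad_w += error * x
            grad_b += error
        w -= learning_rate * grad_w / n
        b -= learning_rate * grad_b / n
    return w, b


# Hypothetical sites: satellite-derived vegetation index and disease record (1 = present).
vegetation_index = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
disease_present = [0, 0, 0, 1, 0, 1, 1, 1]

w, b = fit_logistic(vegetation_index, disease_present)
risk_low = sigmoid(w * 0.15 + b)   # estimated risk at a sparsely vegetated site
risk_high = sigmoid(w * 0.75 + b)  # estimated risk at a densely vegetated site
```

Applied pixel by pixel to satellite imagery, a fitted model of this kind yields a risk map, which is where the large spatial coverage of remotely sensed data becomes useful.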

Disease spread, however, is made more complicated by ever-expanding regional and global transportation infrastructures. Remotely sensed data, especially in combination with other GIS data, can be used to determine the relationships between environmental conditions across the world’s air- and seaport network and the dramatic emergence and re-emergence of disease fostered by the transport of pathogens. By analyzing these relationships and understanding past trends, we can better predict and anticipate future disease patterns and movements.

Case Studies

Information derived from remotely sensed data, combined with traditional means of ground-based data collection, can be useful in identifying statistical patterns of disease origin and spread. Two case studies—hantavirus and cholera—were presented at the workshop.

Hantavirus

Terry Yates presented a case study describing how a 1993 outbreak of a novel and potentially deadly hantavirus in the American Southwest was linked to a species of deer mouse, and how the spread of the disease was exacerbated by climatic variability from El Niño conditions (see Box 2-6). This case study shows how remote sensing data can help monitor disease incidents and disease progression.

Remotely sensed data were used to relate known instances of infection to local rainfall, vegetation, topography, and deer mouse habitat and population. With this information, potential future outbreaks of the disease can be predicted, and measures can then be taken to educate affected populations on steps to avoid contact with the carrier.
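One simple way to explore a delayed relationship between an environmental driver and a later response is a lagged correlation. The sketch below correlates a rainfall series with a rodent density series at several lags and reports the lag with the strongest correlation. Both series are synthetic and were constructed so that rodent density follows rainfall by two time steps; they are not data from the case study.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def lagged_correlations(driver, response, max_lag):
    """Correlate driver[t] with response[t + lag] for each lag from 0 to max_lag."""
    result = {}
    for lag in range(max_lag + 1):
        paired_driver = driver[: len(driver) - lag]
        paired_response = response[lag:]
        result[lag] = pearson(paired_driver, paired_response)
    return result


# Synthetic seasonal series: rodent density tracks rainfall two seasons earlier.
rainfall = [1.0, 1.2, 3.5, 1.1, 0.9, 1.0, 3.0, 1.3, 1.0, 0.8]
rodents = [5.0, 5.0, 5.0, 6.0, 17.5, 5.5, 4.5, 5.0, 15.0, 6.5]

correlations = lagged_correlations(rainfall, rodents, max_lag=4)
best_lag = max(correlations, key=correlations.get)
```

With real monitoring data the peak would be far less sharp, and a strong lagged correlation would still need ecological evidence before being read as a causal chain.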

|

BOX 2-6 Opportunities and Challenges in Using Land Remote Sensing: A Case Study in Forecasting the Spread and Risk of Infectious Disease Terry L. Yates, University of New Mexico Hantaviruses are a group of negative-stranded RNA viruses, some of which are known to be highly pathogenic for humans. Diseases caused by hantaviruses were thought to be largely restricted to Europe and Asia until 1993 when an outbreak of hantavirus pulmonary syndrome (HPS) caused by a previously unknown hantavirus, Sin Nombre virus (SNV), occurred in the southwestern United States. Initially, there was a fatal outcome in more than 50 percent of human cases of the new virus. The deer mouse, Peromyscus maniculatus, was found to be the virus’s primary reservoir (Nichol et al., 1993). Since the discovery of SNV, some 27 additional hantaviruses have been described in the New World (Schmaljohn and Hjelle, 1997; Peters et al., 1999). While the cause of the outbreak in 1993 may be speculative, more than 10 years of ecological monitoring in the American Southwest and the results of retrospective serosurveys for SNV using archived rodent samples suggest a climate-driven trophic cascade model for SNV outbreaks in North America. It appears that increased late winter and spring precipitation in the southwestern United States driven by the El Niño-Southern Oscillation was responsible for an increase in plant primary productivity, which in turn resulted in increased rodent population densities. A direct but delayed correlation exists between increases in deer mouse population densities, increases in density of infected rodents, and increased incidence of HPS. Furthermore, retrospective data show that SNV and other New World hantaviruses have been present, essentially in their current form, in the Western Hemisphere for at least decades and probably have been coevolving with their rodent hosts in the New World for approximately 20 million years. 
An understanding of the relationship between climate change, ecology, and hantaviruses may enable development of improved predictive models for the prevention of human infection and improve the understanding of biocomplexity on a rapidly changing planet. A complex trophic cascade triggered by climate fluctuation, in which impacts on one trophic level permeate through other levels, can serve as a model for predicting HPS risk to humans. In addition, data from studies in North and South America suggest that certain human land use patterns that result in a reduction of biological diversity favor reservoir species for hantavirus and significantly increase human risk for HPS. These data make it clear that understanding the ecology of infectious diseases will require a long-term, multidisciplinary effort that is essential to public health efforts of the future. Although on a broad regional scale there is an increased risk to humans from the trophic cascade triggered by increased precipitation input into the environment, the actual risk to humans is highly localized and depends on a complex series of variables. Other factors, such as landscape heterogeneity, microclimatic differences, rodent disease, local food abundance, and competition, may be involved as well, and such complexity will have to be taken into account before a predictive model of HPS risk can be developed on a fine-grained scale. Understanding the biological complexity of natural and human-dominated ecosystems will be required before ecological and evolutionary forecasting can be employed on the scale needed to safeguard the public health against hantaviral and other zoonotic disease outbreaks. Large-scale, long-term, multidisciplinary studies also will be needed to determine if foreign or genetically modified pathogens are being introduced into our ecosystems. Near-real-time forecasting of risks of these types of diseases will be possible only if remote sensing and other types of sensing are used on a continental or global scale. |

Cholera

Rita Colwell presented a case study using remotely sensed data to correlate water surface temperature and population densities with known cases of cholera infection (see Box 2-7). The case study illustrates the linkages between human populations and biological systems in coastal areas. Remotely sensed data have been a major factor in understanding these linkages.

By using remotely sensed data along with socioeconomic and other ancillary data, researchers can identify populations at risk for cholera infection, and educational outreach can be targeted to those populations. In the case study presented to workshop participants, women in Bangladesh were taught to filter their drinking water by pouring it through old sari cloth folded eight to ten times. Such folding created a 20-micron mesh filter (as determined by electron microscopy), reducing the number of zooplankton in the drinking water and potentially preventing the ingestion of infectious levels of cholera bacteria. This case study is a prime example of how integrating remotely sensed data with socioeconomic and other multidisciplinary data can yield new applications of existing remote sensing technologies.

|

BOX 2-7 Ecology and Epidemiology of Cholera: A Paradigm for Waterborne Diarrheal Diseases Rita Colwell, University of Maryland College Park and Johns Hopkins University Diarrheal diseases are among the leading global causes of death by infectious disease, third only to acute respiratory infections and AIDS, and are particularly severe among children under 5 (WHO, 1999). Cholera is a diarrheal disease caused by the bacterium Vibrio cholerae, which infects the intestine and is transmitted through ingestion of contaminated water or food. Pathogens such as V. cholerae can exist in a viable yet inactive state, like many other gram-negative bacteria that also enter dormancy when faced with adversity. Direct fluorescent and molecular genetic assays of water samples collected from the Chesapeake Bay and off the coast of Maryland and Delaware demonstrated that vibrios are present year-round, yet their levels were hard to determine with traditional methods of culturing. Similar results were obtained in the Bay of Bengal and the rivers and ponds of Bangladesh. With remote sensing, however, data can be gathered to supplement existing information that would be useful across multiple disciplines. For instance, it is known that the zooplankton and phytoplankton populations are highly correlated, since zooplankton consume phytoplankton. Phytoplankton blooms are strongly correlated with seasonal above-average temperatures at the surface of the sea. Sea surface temperature (SST) can be monitored with remote sensing instruments, and these SST measurements can be used to estimate phytoplankton and zooplankton blooms. Ocean temperature and height patterns were found to be linked to cholera outbreak patterns in Bangladesh, India, and South America. During the El Niño years, the associated warm water patterns were correlated with new cholera outbreaks in 1991-1992 along the Peruvian coast of South America.
Using remote sensing, research has shown that copepod and Vibrio populations are coupled to salinity, temperature, and sea height and hence to both seasonal and interannual climatic patterns in a complex, nonlinear manner. Simply stated, there is a positive correlation between seasonal increases in sea surface temperature and sea surface height and subsequent outbreaks of cholera that occur in the late spring and fall months in Bangladesh. Thus, remote sensing has the potential to contribute to a global warning system for increased plankton production and associated cholera outbreaks. |
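
The delayed relationship described in Box 2-7, in which increases in sea surface temperature precede cholera outbreaks, is the kind of pattern a lagged correlation can expose. The sketch below is illustrative only and is not the method used in the research presented at the workshop. The monthly values are synthetic, the case counts are constructed to follow the temperature series by two months, and the function names `pearson` and `lagged_correlation` are hypothetical. A simple linear correlation also ignores the nonlinear coupling noted in the box.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)


def lagged_correlation(driver, response, max_lag):
    """Correlate driver[t] with response[t + lag] for lag = 0..max_lag."""
    result = {}
    for lag in range(max_lag + 1):
        # Drop the last `lag` driver values and the first `lag` responses
        # so that each driver value is paired with the response `lag` steps later.
        paired_driver = driver[:len(driver) - lag]
        paired_response = response[lag:]
        result[lag] = pearson(paired_driver, paired_response)
    return result


# Synthetic monthly SST anomalies, invented for illustration (not observations).
sst_anomaly = [0.1, 0.4, 0.9, 1.2, 0.8, 0.3, -0.2, -0.5, -0.3, 0.2, 0.7, 1.0]

# Hypothetical case counts, built to echo the SST series two months later.
cases = [5, 4] + [round(10 + 20 * v) for v in sst_anomaly[:-2]]

correlations = lagged_correlation(sst_anomaly, cases, max_lag=4)
best_lag = max(correlations, key=correlations.get)

for lag, r in correlations.items():
    print(f"lag {lag} months: r = {r:.2f}")
print("strongest correlation at lag:", best_lag)
```

Because the synthetic case counts were built from the temperature series shifted by two months, the strongest correlation falls at a lag of two. With real satellite and surveillance records, the lag would have to be estimated from the data and checked against confounding seasonal cycles.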