4 Observing the Active Earth: Current Technologies and the Role of the Disciplines

Not long ago, seismologists worked in rooms filled with drum recorders and big tables for hand-measuring seismograms. They now use digital monitoring systems that integrate high-performance seismometers, real-time communications, and automatic processing to produce high-quality information on seismic activity in near real time. Geodesists have replaced the theodolite and spirit level with space-based positioning and deformation imaging that can map crustal movements precisely and continuously, and they can hunt for slow, silent earthquakes with arrays of sensitive, stable strainmeters. Geologists have learned to decipher the subtle features of the rock record that mark prehistoric earthquakes, and they can date these events precisely enough to reconstruct the space-time behavior of entire fault systems. Laboratory and field scientists who study the microscopic processes of rock deformation are now formulating and calibrating the scaling laws that relate their reductionistic approach to the nonlinear dynamics of macroscopic faulting in the real Earth.

In each of these four domains (seismology, geodesy, geology, and rock mechanics), key technological innovations and conceptual breakthroughs were made within the last decade. The Global Seismic Network (GSN), initiated with the founding of the National Science Foundation (NSF)-sponsored Incorporated Research Institutions for Seismology (IRIS) in 1984, is reaching its design goal of 128 broadband, high-dynamic-range stations (as of December 2001, 126 stations had been installed and 122 were operational). The first continuously recording network of Global Positioning System (GPS) stations for measuring tectonic deformation was installed in Japan in 1988 by the National Research Institute for Earth Science and Disaster Prevention (1), and the first image of earthquake faulting using interferometric synthetic aperture radar (InSAR) was constructed in 1992. Paleoseismologists produced a preliminary 1000-year history of major ruptures on the San Andreas fault in 1995 and discovered a prehistoric moment magnitude (M) 9 earthquake in the Cascadia subduction zone in 1996. The first three-dimensional simulations of dynamic fault ruptures using laboratory-derived, rate- and state-dependent friction equations were run in 1996.

The unprecedented flow of new information opened by these advances is stimulating research on many fronts, from fault-system dynamics and earthquake forecasting to wavefield modeling and the prediction of strong ground motions. This chapter summarizes the state of the art in the main observational disciplines; it focuses on new technologies for observing the active Earth, and it highlights through a few examples the richness of the data sets now becoming available for basic and applied research.

4.1 SEISMOLOGY

Seismology lies at the core of earthquake science because its main concern is the measurement and physical description of ground shaking. The central problem of seismology is the prediction of ground motions from knowledge of seismic-wave generation by faulting (the earthquake source) and the elastic medium through which the waves propagate (Earth structure). To carry out this calculation (the forward problem), information must be extracted from seismograms to solve two coupled inverse problems: imaging the earthquake source, as represented by its space-time history of faulting, and imaging Earth structure, as represented by three-dimensional models of seismic-wave speeds and attenuation parameters. Because seismic signals can be recorded over such a broad range of frequencies, up to seven decades (2), they can be used to observe earthquake processes on time scales from milliseconds to almost an hour, and they provide information about elastic structure at dimensions ranging from centimeters to the size of the Earth itself.

Seismometry

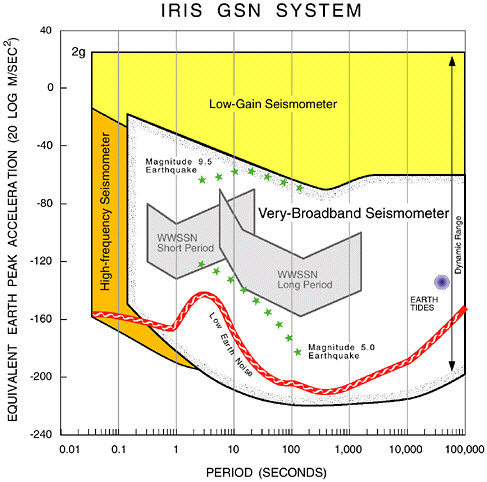

Seismic waves span a wide range of amplitudes as well as frequencies. The ground motions in the vicinity of a large earthquake can have velocities greater than 1 meter per second and accelerations exceeding the pull of gravity (1g = 9.8 m/s²). The lower limit of seismic detection is typically eight orders of magnitude smaller, set by the level of the ambient ground noise (3). No single sensor has yet been developed that can faithfully record the violent displacements close to an earthquake and still be capable of detecting small events at the background noise level. For this reason, instruments historically have been divided into weak-motion and strong-motion seismometers. The former have been the principal sensors for studies of Earth structure and remote earthquakes by seismologists (4), while the latter have provided the principal seismological data to earthquake engineers. Technology is closing this gap. Modern force-feedback systems (5) can faithfully record ground motions from the lowest ambient noise at quiet sites to the largest earthquakes at teleseismic distances and achieve a bandwidth that extends from free oscillations with periods of tens of minutes to body waves with periods of tenths of seconds (Figure 4.1).
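The amplitude range quoted above can be restated in decibels, the unit used later in this chapter for sensor dynamic range. The short Python sketch below is illustrative only: it assumes the usual amplitude convention of 20 log10 of the ratio, and the function names are hypothetical rather than taken from any seismological package.

```python
import math


def amplitude_ratio_to_db(ratio: float) -> float:
    """Convert an amplitude ratio to decibels (assumed 20*log10 convention)."""
    if ratio <= 0:
        raise ValueError("ratio must be positive")
    return 20.0 * math.log10(ratio)


def db_to_amplitude_ratio(db: float) -> float:
    """Inverse conversion: decibels back to an amplitude ratio."""
    return 10.0 ** (db / 20.0)


# Eight orders of magnitude between the strongest and weakest motions.
full_span_db = amplitude_ratio_to_db(1e8)

# Amplitude ratio covered by a sensor with 140 dB of dynamic range.
sensor_ratio = db_to_amplitude_ratio(140.0)
```

Under this convention, eight orders of magnitude in amplitude corresponds to about 160 decibels, whereas a 140-decibel sensor spans about seven orders of magnitude, which is one way to see why no single instrument has covered the full range.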

Seismic Monitoring Systems

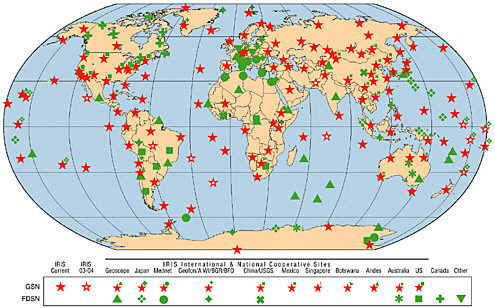

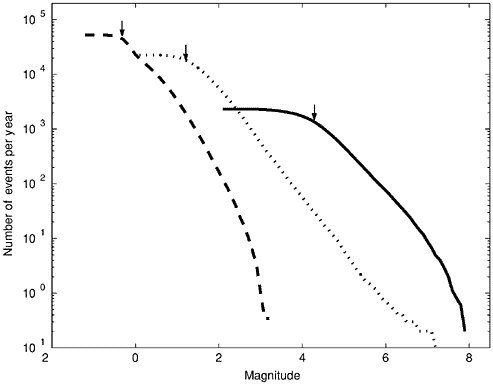

Seismic monitoring systems comprise three basic elements: a network of seismometers that convert ground vibrations to electrical signals, communication devices that record and transmit the signals from the stations to a central facility, and analysis procedures that combine the signals from many stations to identify an event and estimate its location, size, and other characteristics. Monitoring systems are multiple-use facilities; they furnish information about earthquakes and nuclear explosions to operational agencies in near real time, and they also function as the basic data-gathering mechanisms for long-term research and education. With current technology, seismic networks of different types and spatial scales must be deployed to register the Earth’s seismicity over its complete geographic and magnitude range (Table 4.1, Figure 4.2). Since this coverage is typically overlapping, monitoring systems can be effectively organized into nested structures.
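Curves such as those in Figure 4.2 are cumulative counts: the number of events per year at or above each magnitude. The following Python sketch shows one simple way such a curve could be tabulated from a catalog. The function name and the toy catalog are hypothetical and are not drawn from any of the networks discussed here.

```python
from typing import Iterable, List, Sequence, Tuple


def cumulative_annual_counts(
    magnitudes: Iterable[float],
    duration_years: float,
    thresholds: Sequence[float],
) -> List[Tuple[float, float]]:
    """Return (threshold, events per year with magnitude >= threshold) pairs."""
    if duration_years <= 0:
        raise ValueError("duration_years must be positive")
    mags = list(magnitudes)
    return [
        (m0, sum(1 for m in mags if m >= m0) / duration_years)
        for m0 in thresholds
    ]


# Hypothetical toy catalog (not real data): ten events recorded over two years.
toy_catalog = [1.2, 1.5, 2.0, 2.1, 2.8, 3.0, 3.4, 4.1, 4.6, 5.2]
rates = cumulative_annual_counts(toy_catalog, 2.0, [2.0, 3.0, 4.0, 5.0])
```

On a plot of such rates against magnitude, the detection threshold of a network appears as the magnitude below which the curve stops rising as expected, because smaller events are no longer reliably recorded.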

TABLE 4.1 Scales of Seismic Monitoring

| Type | Typical Network Size | Typical Station Spacing | Detection Thresholdᵃ |
|---|---|---|---|
| Global | Global | 1000 km | 4.5 |
| Regional | 500 km | 25-50 km | 2.0 |
| Local | 10 km | <1000 m | –1.0 |

ᵃ Magnitude of smallest event with a high probability of detection; see examples in Figure 4.2.

FIGURE 4.1 Plot of acceleration amplitude versus frequency, showing effective bandwidth and dynamic range for current broadband, high-frequency, and low-gain (strong-motion) digital seismometers, as well as for the older short-period and long-period analog seismometers of the World Wide Standardized Seismographic Network. Broadband instruments are capable of faithfully recording ground motions ranging from ambient noise at quiet sites (line labeled low Earth noise) to the peak accelerations generated by an M 9.5 earthquake at an epicentral distance of 3000 kilometers (upper line of stars). Low-gain seismometers are needed to record ground accelerations in the damage zones of large earthquakes, which can exceed 1g, and high-frequency seismometers are needed for periods less than about 0.1 second. SOURCE: R. Butler, IRIS.

FIGURE 4.2 Number of earthquakes per year greater than a specified magnitude recorded by three networks of the types described in Table 4.1. Solid line shows the seismicity of the entire Earth from the global network of seismic stations cataloged by the International Seismological Centre during the decade 1990-1999. Dotted line is for events in southern California during the same interval recorded by the Southern California Seismic Network. Dashed line is for mining-induced seismicity recorded during 1997-1999 by a local network in the Elandrands gold mine, South Africa. Arrows show the approximate detection thresholds for the three networks, below which the sampling of seismicity is incomplete. All magnitudes are moment magnitudes. SOURCE: M. Boettcher, E. Richardson, and T.H. Jordan, University of Southern California.

Global Seismic Networks. State-of-the-art seismic stations for global seismic networks comprise three-component sensors with high dynamic range (up to 140 decibels) and broadband response (0.0001–10 hertz). Since 1984, more than 300 such stations have been installed at permanent locations worldwide, as elements of global and regional networks (Figure 4.3). Close to half of these stations are part of the GSN, which has been constructed and operated under a cooperative agreement between the U.S. Geological Survey (USGS) and IRIS (6). The GSN is coordinated with other international networks through the Federation of Digital Seismographic Networks (FDSN), and the data are archived and made available on-line by the IRIS Data Management Center (DMC) in Seattle, Washington (7). Some stations still record on local magnetic or optical media that are shipped periodically to the DMC, but direct telemetry is being deployed as communication with remote sites becomes cheaper. At many locations with telephone access, the data can be retrieved via telephone dial-up or Internet connection (108 stations in 2001). The five-year goal is to have all stations on-line all the time. Achieving this goal, especially at remote sites, will depend in part on the cost of satellite communications.

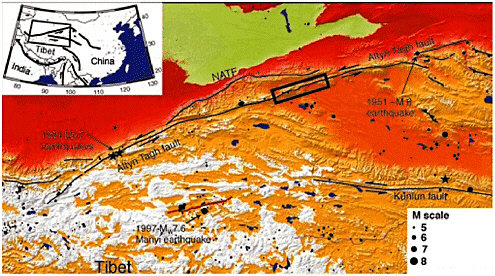

The GSN data acquired over the last 15 years have facilitated many advances in the study of global Earth structure and earthquake sources. Seismic tomography has provided dramatic images of subducting slabs, plume-like upwellings, and other features of the mantle convective flow responsible for plate-tectonic motions (Figure 4.4). The GSN data have also improved the plate-tectonic framework for understanding earthquake hazards through better earthquake locations and centroid moment tensor (CMT) solutions (Figure 4.5). Seismologists have used the broadband waveforms to elucidate the details of rupture processes during large earthquakes from a variety of tectonic settings, shedding new light on the geologic and dynamic factors that govern the configuration of seismogenic zones and how earthquakes start and stop.

These successes have in no way diminished the need for continued monitoring. Discoveries based on data now being collected by the GSN will undoubtedly continue into the indefinite future. On the rapidly slipping plate boundaries, large earthquakes recur at intervals ranging from decades to centuries, while the recurrence times for significant intraplate events can extend to many millennia. With each passing year, GSN data will thus add new information to the evolving pattern of global seismicity by the direct observation of large, rare events and the delineation of low-level seismicity that may mark the eventual occurrence of such events. The densification of seismic sources through time will also improve tomographic mapping of features in the crust and mantle that control seismicity and may be indicative of the forces causing lithospheric faulting.

Global seismological monitoring could be further enhanced by increasing the spatial resolution on land with permanent and temporary deployments of seismometers, expanding the coverage of global networks to the ocean floor, and upgrading the present networks as new technologies become available. However, sustained funding of the global networks will present a continuing challenge. In terms of annualized expenditures, the operation and maintenance of the GSN is projected to be comparable to its initial capitalization. Under current arrangements, the USGS shares a portion of the costs of GSN operations with the NSF. Stable support of the GSN from a federal agency that embraces the mission of global seismic monitoring is essential to the long-term health of earthquake science.

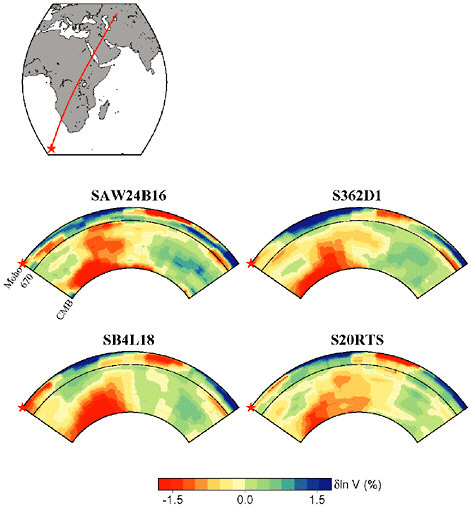

FIGURE 4.4 Depth cross section showing the African plume as seen in four recent global tomographic models of S velocity: SAW23B16 (Megnin and Romanowicz, 2000), SB4L18 (Masters et al., 1999), S362D1 (Gu et al., 2001), and S20RTS (Ritsema et al., 1999). Although the models differ in detail, they are in agreement on the broad characteristics of this major upwelling. SOURCE: Y.J. Gu, A.M. Dziewonski, W.-J. Su, and G. Ekström, Models of the mantle shear velocity and discontinuities in the pattern of lateral heterogeneities, J. Geophys. Res., 106, 11,169-11,199, 2001; G. Masters, H. Bolton, and G. Laske, Joint seismic tomography for P and S velocities: How pervasive are chemical anomalies in the mantle? Eos, Trans. Am. Geophys. Union, 80, S14, 1999; C. Megnin and B. Romanowicz, The 3D shear velocity structure of the mantle from the inversion of body, surface and higher mode waveforms, Geophys. J. Int.,143, 709-728, 2000; J. Ritsema, H. van Heijst, and J. Woodhouse, Complex shear wave velocity structure imaged beneath Africa and Iceland, Science, 286, 1925-1928, 1999. SOURCE: B. Romanowicz and Y. Gung, University of California, Berkeley.

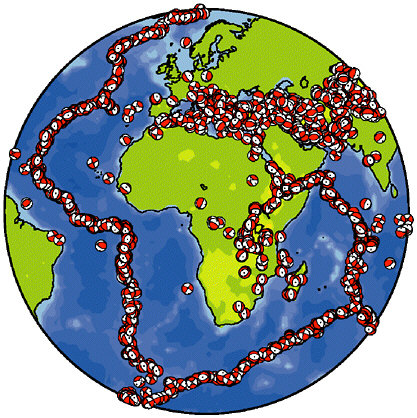

FIGURE 4.5 25 years of CMT solutions (1976-2000) for the region surrounding Africa. The availability of broadband data has made it possible to describe global seismicity in terms not only of location and magnitude, but also of fault mechanism, thereby greatly enhancing our view of active tectonics. SOURCE: Harvard CMT group.

Regional Seismic Networks. Owing to their sparse station coverage, global networks do a poor job of detecting and locating events with magnitudes less than about 4.5 (Figure 4.2), and their sampling is too crude for investigating how waves are produced by fault ruptures, especially the near-fault radiation that generates the complex patterns of strong ground motions observed in large earthquakes. To deal with these problems, seismologists have densified station arrays in areas of high (or otherwise interesting) seismicity. Regional networks are collections of seismographic stations distributed over tens to hundreds of kilometers, usually as permanent facilities. The information supplied by regional networks serves three overlapping but distinct communities: (1) scientists and engineers engaged in basic and applied research; (2) engineers, public officials, and other decision makers charged with the management of earthquake risk and emergency response; and (3) public safety officials, news media, and the general public. As information technology has transformed the regional networks into integrated monitoring systems, they have become centers for educating the general public about earthquake hazards, as well as key facilities for training graduate students in seismology (8).

The short-period, high-gain instruments historically used in regional networks (9) brought seismicity patterns into much clearer focus (Figure 4.6), but the dynamic range of these instruments was too low to furnish useful recordings of large regional events. In the last decade, deployments of broadband, high-dynamic-range seismometers have begun to transform the regional networks into much more powerful tools for investigating the basic physics of the earthquake source, the detailed structure of the Earth’s crust and deep interior, and the patterns of potentially destructive ground motions. With these data, seismologists can now map the patterns of slip during earthquakes using seismic tomography, just as they map Earth structure. Images of fault ruptures during the more recent earthquakes in the Los Angeles, San Francisco, and Seattle regions have all been captured by high-performance networks (Figure 4.7).

Long-term funding has been a persistent problem for regional network operators, and new investments in equipment are badly needed (10). In particular, the implementation of new broadband technologies in regional monitoring has been lagging in the United States, especially when compared to the investments made by other high-risk countries such as Japan (Box 4.1) and Taiwan. Two exceptions are the Berkeley Digital Seismic Network in northern California and Caltech’s TERRAscope Network in southern California. Both are equipped with a combination of three-component broadband seismometers and three-component strong-motion accelerometers; they have digital station processors and feed continuous data streams via real-time telemetry to central processing sites. Although these networks have developed independently, a major effort is under way, with some support from the State of California, to modernize the earthquake monitoring infrastructure throughout the region by integrating the regional networks into a California Integrated Seismic Network.

Local Networks Networks have been deployed with seismometers distributed over a few tens of kilometers or less for specialized purposes such as seismic monitoring of critical facilities (e.g., dams and nuclear power plants) or localized source zones (e.g., volcanoes or geothermal reservoirs). Local networks are important instruments for the study of natural earthquake laboratories such as deep mines. Digital arrays of very

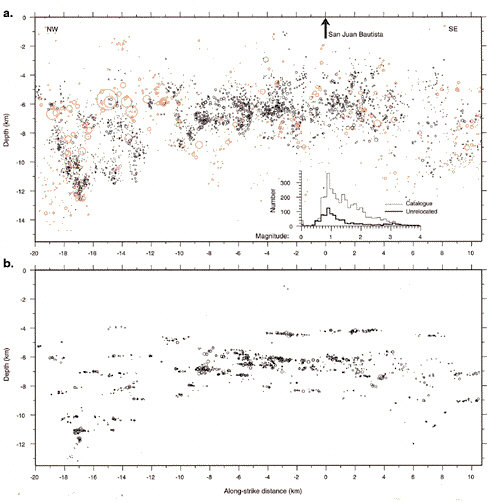

FIGURE 4.6 Vertical along-strike cross sections of earthquakes located along a 30-kilometer section of the San Andreas fault. Circles are an estimate of event size. (a) Locations of earthquakes between May 1984 and December 1997 reported in the Northern California Seismic Network catalog. (b) Relocated earthquakes show a linear, nearly horizontal pattern of seismicity. SOURCE: A.M. Rubin, D. Gillard, and J.-L. Got, Streaks of microearthquakes along creeping faults, Nature, 400, 635-641, 1999. Reprinted by permission from Nature copyright 1999 Macmillan Publishers Ltd.

high frequency sensors have been deployed in deep mines in Canada, Poland, and South Africa to monitor mine tremors and rock bursts induced by mining activities (11), and they have furnished unique, close-in observations of earthquakes as large as M 5 and at depths as great as 4 kilometers. Recent research has shown that in the deep gold mines of South Africa, mine tremors caused by friction-controlled slip on faults

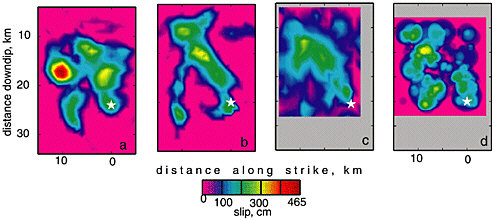

FIGURE 4.7 Comparison of fault slip maps for the 1994 Northridge earthquake derived from different methods. (a) Inversion of regional distance broadband data (Dreger, 1997). (b) Inversion of seismic moment rate functions derived from empirical Green’s function deconvolution (Dreger, 1994). (c) Inversion of local strong motion, teleseismic waveform data, and geodetic and leveling data (Wald et al., 1996). (d) Inversion of strong motion data alone (Zeng and Anderson, 1996). Each map has the same scaling, and the white star identifies the hypocenter. SOURCE: D. Dreger, Empirical Green’s Function study of the January 17, 1994 Northridge, California earthquake, Geophys. Res. Lett., 21, 2633-2636, 1994; D. Dreger, The large aftershocks of the Northridge earthquake and their relationship to mainshock slip and fault zone complexity, Bull. Seis. Soc. Am., 87, 1259-1266, 1997; D.J. Wald, T.H. Heaton, and K.W. Hudnut, The slip history of the 1994 Northridge, California, earthquake determined from strong-motion, teleseismic, GPS, and leveling data, Bull. Seis. Soc. Am., 86, S49-S70, 1996; Y. Zeng and J.G. Anderson, A composite source modeling of the 1994 Northridge earthquake using Genetic Algorithm, Bull. Seis. Soc. Am., 86, 71-83, 1996. SOURCE: D. Dreger, University of California, Berkeley.

|

BOX 4.1 Seismic Infrastructure in Japan Japan has been at the forefront of seismic monitoring since instrumental observations of earthquakes began in the 1870s. The Japan Meteorological Agency (JMA) operates a national network that provides essential data for the study of earthquake sources and seismotectonics throughout the Japanese islands.1 A number of local networks are operated by universities and other institutions, such as the National Research Institute for Earth Science and Disaster Prevention of the Science and Technology Agency, primarily for research on microseismicity and earthquake prediction. The Earthquake Prediction Data Center of the Earthquake Research Institute, University of Tokyo, receives hypocenter and arrival-time data from member universities and compiles them into two databases, one for real-time analysis and a revised one for archival purposes. More than 700 stations are currently operational, making the detection and location of all earthquakes of M > 2 possible almost everywhere in the country. A distinctive feature of earthquake monitoring in Japan has been the systematic collection of observations on the intensity of seismic shaking, a tradition that dates back to 1884. For many years, intensities were estimated by the observers on duty at meteorological stations, but this procedure had several problems: the observations were too subjective and inconsistently reported, they often disagreed with ground motions reported by the public, and they were not suitable for rapid dissemination. The inadequacies of this system were made clear during the 1995 Hyogo-ken Nanbu earthquake (see Box 2.4). After that disaster, the intensity scale used in Japan was revised and redefined on the basis of instrumental measurements,2 and suitable strong-motion instruments were deployed at 600 sites with approximately 20-kilometer spacing. 
The high density of this new national system provides adequate sampling of the rapid geographic variations in the ground motions typically observed for large earthquakes. Immediately after an event the instruments automatically send out parametric data to a central computer, which combines them and rapidly produces intensity maps of the seismic shaking. A number of counties, cities, and private organizations are also deploying arrays of digital strong-motion instruments; at last count, there were more than 1000 such instruments linked to central sites by real-time telemetry. Over the last several years, a Japanese initiative has focused on the deployment of a dense network of state-of-the-art broadband, high-dynamic-range instruments for the purpose of research on earthquake source processes and global Earth structure. Begun as an unofficial collaboration among several university groups, the Ocean Hemisphere Project (OHP) initiative was officially inaugurated in 1997. The OHP includes provisions for seismic, gravity, and geomagnetism observations. Its goal is to deploy ocean-bottom stations as well as land-based instruments not only in Japan but, in cooperation with neighboring countries, throughout the western Pacific region. |

have a lower cutoff near M 0, consistent with the minimum nucleation size of earthquakes implied by laboratory data (12).

The USGS and other institutions maintain special arrays of surface and borehole instrumentation on the San Andreas fault at Parkfield, California, as part of a long-term, multidisciplinary program for the study of earthquake processes at the transition between the creeping and locked sections of the fault (13) (Figure 2.15). These arrays have furnished insight into seismogenic processes at scales much smaller than typical seismological investigations (Figure 4.6). For example, results from microearthquake and controlled-source Vibroseis studies using data from the High-Resolution Seismic Network provide a picture of a fault zone that is highly heterogeneous in seismic velocity structure (14), in the distribution and spatial clustering of microearthquakes (15) (Figure 4.6), and in the generation of fault-zone trapped seismic waves. These studies reveal structural detail at depth that is highly correlated with the transition from creeping to locked behavior inferred from surface observation, and they indicate temporal changes in propagation, seismicity, and slip rate at depth that correlate with deformation and water-level changes observed at and near the surface (16). On a finer scale, precise relative relocations of the microseismicity using waveform correlation techniques are revealing constellations of earthquakes and the detailed distribution of fault slip at depth (17). They have also yielded a surprising and strikingly detailed picture of the strength, strength distribution, and evolution of the deep San Andreas fault; the scaling of the earthquake source (18); and the strain accumulation on the Parkfield locked zone at depth. The discovery of numerous characteristically repeating microearthquake sequences at Parkfield has contributed significantly to the development of earthquake recurrence models currently being used to estimate earthquake hazard in California (19).
Owing to the enhanced understanding of earthquake processes achieved through these observations, the Parkfield natural laboratory has been chosen as the site for the San Andreas Fault Observatory at Depth (SAFOD), a component of the EarthScope initiative that will use deep drilling to conduct in situ investigations of the San Andreas fault zone at seismogenic depths of 3 to 4 kilometers.
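The waveform correlation techniques mentioned above rest on measuring small differences in arrival time between similar seismograms. The Python sketch below illustrates only that first step, estimating a delay from the peak of a cross-correlation. It is a minimal illustration under stated assumptions (equal sampling interval, whole-sample precision, a hypothetical function name) and is not the procedure used in the studies cited.

```python
import numpy as np


def delay_by_cross_correlation(reference, shifted, dt):
    """Estimate how much later `shifted` arrives than `reference`, in seconds.

    Both traces are assumed to share the sampling interval `dt`. The delay is
    resolved only to the nearest sample; practical relocation work typically
    interpolates around the correlation peak for sub-sample precision.
    """
    a = np.asarray(reference, dtype=float)
    b = np.asarray(shifted, dtype=float)
    a = a - a.mean()  # remove constant offsets before correlating
    b = b - b.mean()
    cc = np.correlate(b, a, mode="full")
    # In "full" mode, index len(a) - 1 corresponds to zero lag.
    lag_samples = int(np.argmax(cc)) - (len(a) - 1)
    return lag_samples * dt


# Synthetic check: a short wavelet and a copy delayed by 7 samples.
dt = 0.01
t = np.arange(200) * dt
wavelet = np.exp(-((t - 1.0) / 0.05) ** 2) * np.sin(2 * np.pi * 10 * (t - 1.0))
delayed = np.roll(wavelet, 7)
estimated_delay = delay_by_cross_correlation(wavelet, delayed, dt)
```

Delays measured in this way at many stations for many pairs of nearby events are the raw material for relative relocation; the inversion of those delays for event positions is a separate step not shown here.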

U.S. National Seismic Network. A notable advance in earthquake monitoring has been the construction of a new U.S. National Seismic Network (USNSN), managed by the USGS National Earthquake Information Center in Golden, Colorado. A central objective is to transform the regional networks into highly automated seismic information systems, capable of broadcasting refined information about seismic ruptures and shaking in near real time to a wide audience concerned with emergency response to earthquake disasters. The idea for the USNSN dates back nearly 30 years (20); the concept was to complement the relatively dense coverage provided in selected areas by the regional seismic networks with a well-distributed but sparse permanent network of three-component, broadband stations. The USNSN currently maintains 32 complete broadband stations and some equipment at 96 cooperative broadband stations in North America (7 in Canada) from which it acquires real-time data. It also acquires real-time data from 82 short-period stations, 30 foreign broadband stations, and another 62 stations worldwide. Through participation of the Advanced National Seismic System (ANSS) and the planned EarthScope program, the USNSN will be expanded to 100 permanent broadband stations in North America and will serve as the “backbone” for both programs. Ten of the new stations will be built to GSN standards and, thus, be capable of high-quality recording at the low frequency of the Earth’s free oscillations.

Strong-Motion Seismology

Accurate recordings of strong motions near earthquake sources are crucial to both earthquake engineering and science, because they provide the forcing functions for structural design and testing, as well as valuable information on earthquake source processes. The motions are registered by triggered, three-component, low-gain accelerographs located at free-field sites and housed in important structures, such as dams, bridges, and high-rise buildings. Accelerographs are capable of recording 2g accelerations in the frequency band from 0.1 to 10 hertz. The attenuation relations derived from the free-field data are key components of seismic hazard analysis and mapping, while the housed recordings furnish ground truth for structural performance during earthquakes.
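Two summary measures commonly extracted from an accelerograph record are the peak ground acceleration and the peak ground velocity, the quantities mapped in Figure 4.8. The Python sketch below shows how they might be computed from a digitized acceleration trace. It assumes zero initial velocity and omits the baseline correction and filtering applied in practice; the function name is hypothetical.

```python
import numpy as np

G = 9.8  # m/s^2, the value of 1g used in this chapter


def peak_ground_motion(acceleration, dt):
    """Return (PGA in m/s^2, PGV in m/s) for a uniformly sampled trace.

    Velocity is obtained by trapezoidal integration of the acceleration,
    assuming the ground is at rest at the first sample.
    """
    acc = np.asarray(acceleration, dtype=float)
    increments = 0.5 * (acc[1:] + acc[:-1]) * dt
    vel = np.concatenate(([0.0], np.cumsum(increments)))
    return float(np.max(np.abs(acc))), float(np.max(np.abs(vel)))


# Synthetic check: a 1-hertz sinusoid with 2 m/s^2 amplitude.
dt = 0.001
t = np.arange(0.0, 2.0, dt)
acc = 2.0 * np.sin(2 * np.pi * 1.0 * t)
pga, pgv = peak_ground_motion(acc, dt)
pga_in_g = pga / G
```

For this synthetic input the velocity is A/(2πf)(1 − cos 2πft), so the peak velocity is A/(πf), which gives a simple analytic check on the integration.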

The USGS oversees a national network of about 900 strong-motion accelerographs through the National Strong-Motion Program (NSMP). The NSMP coordinates data collection by a variety of federal, state, and local agencies, companies, and academic institutions (21). The California Geological Survey (CGS) operates the California Strong-Motion Instrument Program with basic funding provided by state tax on permits for new construction; it comprises 910 analog and digital accelerographs in California, 255 of which are in extensively instrumented structures. Strong-motion databases are maintained by both the USGS and the CGS, as well as by the Southern California Earthquake Center (SCEC) and the Pacific Earthquake Engineering Research (PEER) Center (22). Coordination of the various organizations that collect, process, and distribute strong-motion data has been a long-standing issue (23), but the situation has benefited substantially from on-line access now offered by all data

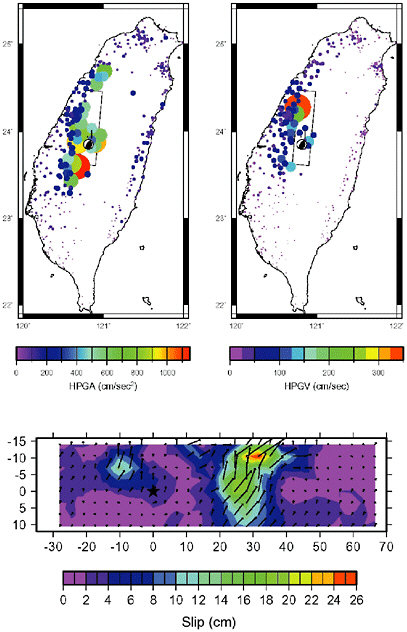

centers and the virtual strong-motion database (in fact, a meta-database) recently set up by the Consortium of Organizations for Strong-Motion Observation Systems. However, as in broadband regional seismology, the U.S. effort falls short of the Japanese, who have created a database system called Kyoshin Net (K-Net), managed by the National Research Institute for Earth Science and Disaster Prevention, to archive and distribute data from the dense array (25-kilometer spacing) of 1000 digital strong-motion stations deployed throughout Japan (24). In Taiwan, a strong-motion network of 614 stations provided unprecedented strong ground-motion data during the 1999 Chi-Chi earthquake.

The 1999 Izmit, Turkey, and Chi-Chi, Taiwan, earthquakes (M 7.4 and 7.6, respectively) have substantially increased the number of strong-motion records for large earthquakes, allowing detailed mapping of the ruptures in time and space (Figure 4.8). Yet, despite more than 70 years of strong-motion seismology, the data coverage remains poor. There are few strong-motion recordings for subduction-zone earthquakes with magnitude greater than 8 and none for magnitude greater than 9. Intraslab earthquakes of M 7 and larger are also poorly sampled, yet they pose a substantial hazard to major cities around the world, as evidenced in the 2001 El Salvador earthquake (M 7.6) and the 1949 (M 7.1), 1965 (M 6.5), and 2001 (M 6.8) events beneath the Seattle-Tacoma metropolitan area. Likewise, there are no close-in recordings (closer than 50 kilometers) of intraplate earthquakes in the central and eastern United States for magnitudes greater than about 5.2 and few worldwide for interplate earthquakes with magnitudes greater than about 7.3. The improved national monitoring structure planned in the framework of the ANSS is clearly needed to remedy this situation (see Chapter 6).

Portable-Array Studies

Portable arrays of seismometers augment the data from permanent monitoring networks by increasing the recording of seismicity in reconnaissance studies and during periods of anomalous activity, including aftershock sequences and swarms. They are also used to image the architecture of fault systems and other aspects of crustal structure, such as sedimentary basins, that affect the amplitude and duration of strong motions. Until recently, this mode of operation was limited to short-period seismometers with low dynamic range, but large pools of broadband instruments are now efficiently organized within the IRIS Program of Array Seismic Studies of the Continental Lithosphere (PASSCAL) (25) and the USGS (26). Subsets are available for deployment after a major earthquake in a coordinated effort called the Rapid Array Mobilization Program (RAMP). These deployments have been used to determine the source parameters of aftershocks and their relationships to the main shocks, which are important data for studies of rupture propagation, postseismic relaxation, and stress transfer. Recordings of aftershocks have also begun to elucidate the causes of anomalous ground shaking and damage concentration, including basin resonance, basin-edge effects, and Moho reflection (see Section 3.1). Various forms of telemetry are making it possible to monitor state of health and to retrieve ground-motion data in near real time, allowing portable arrays to be integrated with permanent seismic monitoring systems for a wide range of seismic applications.

FIGURE 4.8 Peak ground acceleration (top left) and peak ground velocity (top right) of the 1999 Chi-Chi earthquake. The station coverage of the Central Weather Bureau Seismic Network is still poor in relatively inaccessible central mountainous areas. Bottom: Depth cross section showing the slip distribution derived from this data set using a finite-fault inversion methodology. SOURCE: W.-C. Chi, D. Dreger, and A. Kaverina, Finite source modeling of the 1999 Taiwan (Chi-Chi) earthquake derived from a dense strong motion network, Bull. Seis. Soc. Am., 91, 1144-1157, 2001. Copyright Seismological Society of America.

Imaging the Earth

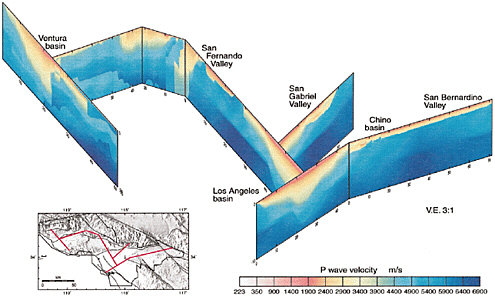

Investigations of Earth structure have always figured prominently in the study of earthquakes because they frame the interpretation of seismograms in terms of source processes. Indeed, the problems of discovering the space-time structure of faulting and the three-dimensional variations in the Earth’s elastic properties are strongly coupled and must be worked out together, either through joint inversion of the seismograms or iteratively through successive approximations. The primary seismological parameters needed to specify Earth structure are the local speeds of the two basic types of seismic waves, compressional (vp) and shear (vs), their associated attenuation factors, and the mass density (27). The variations in Earth structure that can be resolved are limited by the size and spacing of the seismic array and the distribution of seismic sources used to illuminate the array. Global networks can therefore determine worldwide structure at relatively low spatial resolution (Figure 4.4), whereas regional and local networks give finer details but only within more limited volumes of the Earth (Figure 4.9).
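As a rough illustration of why the two wave speeds and the density together specify the elastic structure, the sketch below converts vp, vs, and density into elastic moduli using the standard relations for an isotropic elastic solid. The function name and the sample crustal values are illustrative assumptions and are not taken from this report; attenuation is ignored.

```python
def elastic_moduli(vp, vs, density):
    """Return (shear modulus, bulk modulus, Poisson's ratio) for an
    isotropic elastic solid.

    vp, vs  : compressional and shear wave speeds in m/s
    density : mass density in kg/m^3
    Moduli are returned in pascals.
    """
    shear = density * vs ** 2
    bulk = density * (vp ** 2 - (4.0 / 3.0) * vs ** 2)
    poisson = (vp ** 2 - 2.0 * vs ** 2) / (2.0 * (vp ** 2 - vs ** 2))
    return shear, bulk, poisson


# Hypothetical upper-crustal values chosen only for illustration.
shear, bulk, poisson = elastic_moduli(vp=6000.0, vs=3464.0, density=2700.0)
print(f"shear modulus = {shear:.3e} Pa")
print(f"bulk modulus  = {bulk:.3e} Pa")
print(f"Poisson ratio = {poisson:.3f}")
```

Running the relations in reverse is what a tomographic model such as the one in Figure 4.9 implicitly does: a map of wave speeds, combined with density, is a map of elastic properties.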

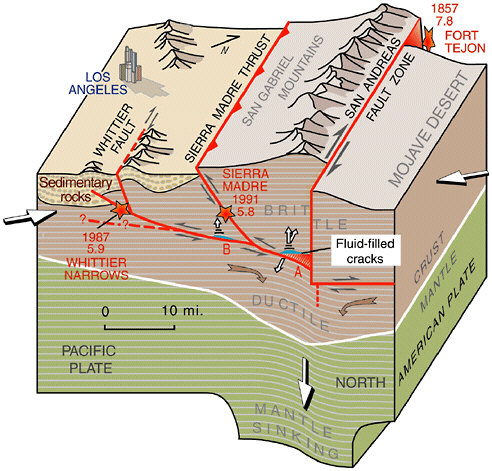

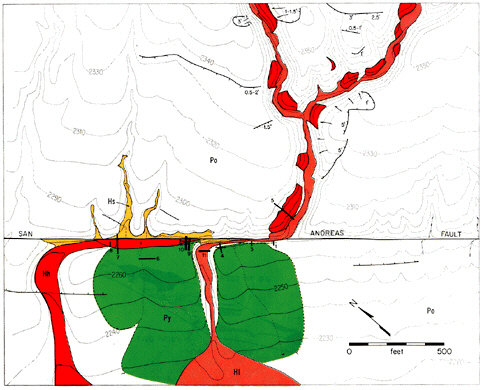

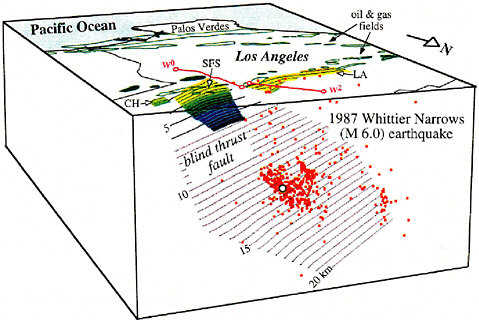

Portable arrays are useful in enhancing the structural resolution at spatial scales below the station spacing of permanent arrays. They can be deployed in two basic modes of observation: (1) to record artificial sources—explosions, mobile ground-shaking devices such as Vibroseis, or marine air guns—by high-frequency sensors (active-source experiments) and (2) to record signals from natural events, either regional or teleseismic earthquakes (passive experiments). PASSCAL experiments use both modes. Shallow structure (in the upper 2000 meters) can be imaged with highly portable, multichannel systems that record waves reflected from subsurface discontinuities, using hammer blows or small charges as sources. For example, in the Los Angeles Region Seismic Experiment (LARSE), researchers used air guns and explosions to construct images of the subsurface structure that may lead to a better understanding of earthquake hazards in southern California (Figure 4.10). These systems have proved very effective in delineating fault planes within sedimentary ba-

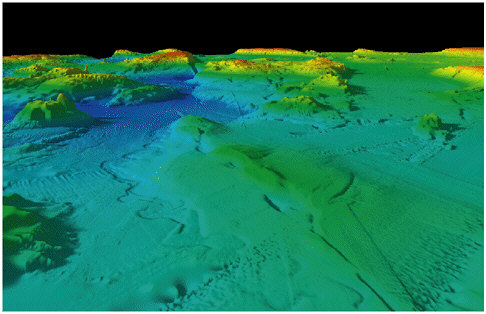

FIGURE 4.9 Fence diagram showing three-dimensional variation of shear-wave speeds in the Los Angeles region determined from seismic tomography. Cross sections are located as red lines in the lower left panel. Low speeds indicated by warm colors show deep sedimentary basins that trap seismic waves, thus amplifying and extending the shaking in regional earthquakes. SOURCE: H. Magistrale, S. Day, R.W. Clayton, and R. Graves, The SCEC southern California reference three-dimensional seismic velocity model version 2, Bull. Seis. Soc. Am., 90, S65-S76, 2000. Copyright Seismological Society of America.

FIGURE 4.10 An interpretation of the geologic structure along Line 1 of the Los Angeles Region Seismic Experiment line, showing the interaction of strike-slip and thrust faults. “Blind” thrust faults, such as the one responsible for the 1987 Whittier Narrows earthquake, do not break the surface, but can be imaged by seismic methods. Relative movement on faults is shown by pairs of small arrows. Large white arrows show the olique direction in which the Pacific Plate (left) and the North American Plate (right) are converging. Red stars with dates and numbers (magnitudes) are earthquakes. SOURCE: U.S. Geological Survey, Fact Sheet 110-99, <http://geopubs.wr.usgs.gov/fact-sheet/fs110-99/>.

sins. Deep structure (down to the base of the crust at 30- to 40-kilometer depth) can be imaged using larger multichannel systems in conjunction with explosion or large Vibroseis sources.

Seismicity Catalogs

The basic product of seismic monitoring is the seismicity catalog, a sequential listing of all earthquakes, explosions, and other localized seismic disturbances, natural or man-made. In modern monitoring systems, the detection, association, and inversion of seismic arrivals are done automatically from continuous digital data streams, although seismic analysts are still employed to review, evaluate, and often modify the results. The output may include the event’s origin time, hypocentral location (latitude, longitude, and depth), magnitude, and other source parameters, such as seismic moment and focal mechanism (usually in the form of a moment tensor) and a measure of rupture duration. Improving the completeness and accuracy of these seismicity catalogs is a major objective of seismic hazard analysis, which often depends on small earthquakes to identify the potential for damaging fault ruptures, and of earthquake physics, which relies on catalogs as the basic space-time record of fault-system behavior.
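The catalog contents listed above can be pictured as a simple record type. The following is a minimal sketch, not the format of any actual agency catalog: the class name, field names, and sample events are hypothetical. The moment-to-magnitude conversion uses the widely adopted relation Mw = (2/3)(log10 M0 − 9.1) with M0 in newton-meters, which is an assumption about how M is defined here.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class CatalogEntry:
    """One event in a hypothetical seismicity catalog."""
    origin_time: str                            # e.g. an ISO 8601 timestamp
    latitude_deg: float
    longitude_deg: float
    depth_km: float
    magnitude: float
    seismic_moment_nm: Optional[float] = None   # scalar moment, N*m


def moment_magnitude(seismic_moment_nm: float) -> float:
    """Convert scalar seismic moment (N*m) to moment magnitude."""
    return (2.0 / 3.0) * (math.log10(seismic_moment_nm) - 9.1)


def events_at_or_above(catalog: List[CatalogEntry],
                       threshold: float) -> List[CatalogEntry]:
    """Keep events at or above a magnitude threshold, for example the
    level above which a catalog is believed to be complete."""
    return [event for event in catalog if event.magnitude >= threshold]


# Made-up events, for illustration only.
catalog = [
    CatalogEntry("2000-01-01T00:00:00Z", 34.0, -118.0, 10.0, 2.1),
    CatalogEntry("2000-01-02T12:30:00Z", 35.5, -120.5, 8.0, 4.6,
                 seismic_moment_nm=1.0e16),
]
print(len(events_at_or_above(catalog, 3.0)))
print(round(moment_magnitude(1.0e16), 2))
```

Filtering by a completeness threshold before any statistical analysis is one reason the completeness of a catalog matters as much as its accuracy.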

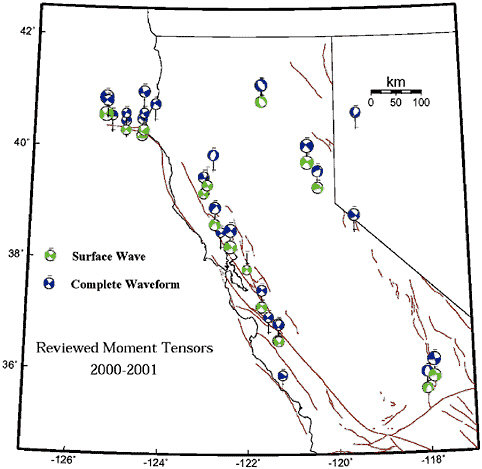

The USGS National Earthquake Information Service (NEIS) operates an Earthquake Early Alerting Service to determine as rapidly as possible the location and magnitude of significant earthquakes in the United States (M ≥ 4.5) and around the world (M ≥ 6.5, or known to be damaging) (28). The International Seismological Centre (ISC), based at Thatcham in Berkshire, United Kingdom, is a nongovernmental organization charged with producing a standard global catalog (29); it provides the most comprehensive compilations of short-period arrival times and amplitudes from the largest, most globally distributed set of seismic stations (approximately 3000), including earthquake reports from a number of regional seismic monitoring agencies. It currently processes about 5000 events per month worldwide. The International Monitoring System (IMS) currently operates 36 primary stations and arrays and collects data from 38 auxiliary stations. It produces an earthquake bulletin, the Reviewed Event Bulletin, within seven days, aiming at completeness down to M 3.5 (see Box 4.2). Specialized catalog services are rendered by university observatories and laboratories. Harvard University produces a global catalog of centroid locations, centroid times, and moment tensors for most large earthquakes (M ≥ 5.5), primarily from the broadband data provided by FDSN stations (30). Though operated on a very modest budget through a private university, this centroid-moment tensor service has proven to be immensely useful in earthquake research, because it has generated the longest catalog of standardized source parameters (seismic moment, source mechanism, and centroid location) for seismicity studies worldwide.

|

BOX 4.2 Nuclear Monitoring Since the first underground nuclear tests in the late 1950s, underground test monitoring and test ban treaty verification have motivated the development of better seismic networks (see Section 2.3). With the breakup of the former Soviet Union and the increased number of emerging nuclear nations, the emphasis has shifted from a bilateral superpower test ban treaty to a global comprehensive test ban treaty (CTBT) and the Nuclear Nonproliferation Treaty. In the current plans, seismic networks represent one of four main technologies for monitoring the CTBT (along with infrasonic, hydroacoustic, and radionuclide techniques). The seismic component of the IMS will utilize 170 stations and reduce the global detection threshold to around mb 4.0. The primary stations (alpha stations) are mostly dense arrays of high-quality, short-period sensors, located at carefully selected sites around the globe, with equipment for continuous telemetry to the International Data Center (IDC) for the primary purpose of detecting seismic events on a global scale. Auxiliary stations (beta stations) are meant to support rapid, on-demand, automatic retrieval of data for use in improving the location of events detected by the primary network. Most of the beta stations will be drawn from established three-component, broadband stations of the FDSN, ensuring a strong partnership between the CTBT monitoring community and earthquake scientists. Approximately 1000 separate channels of seismic data will be transmitted via satellite in real time to the IDC in Vienna, Austria, where they will be analyzed automatically to determine routine source parameters such as location, depth, origin time, and magnitude. 
Although the ultimate capabilities of the monitoring system will not be known until the network is fully deployed and operational, the experience with recent nuclear tests in India and Pakistan suggests that the IDC and IMS will provide an unprecedented system for real-time global seismic monitoring with low detection thresholds.1 Monitoring CTBT compliance will be more challenging than past arms control treaties, because it will require high-confidence identification of any nuclear explosion, however small, carried out in remote regions of the world. The CTBT has motivated a broad program of research, focused on regional monitoring of small seismic events.2 The results of this research are needed for two treaty monitoring goals. First, there is a need to locate all of the detected seismic events within 1000 square kilometers, because this is the largest region that can be inspected to assess a possible treaty violation. Achieving this goal will require detailed seismic calibration information (travel times, phase arrivals) for each of the IMS stations. Second, algorithms must screen out the large number of natural events that will be detected, based on location, depth, and other source characteristics. To advance these capabilities, the Department of Defense (DOD) currently supports one of the largest basic research programs in seismology in the federal government ($12 million in FY 2000). To increase the involvement of earthquake researchers, DOD plans to make all of the IMS data available for open research and hazard monitoring operations. |

Currently, the properties of more than 30,000 earthquakes are recorded, studied, and cataloged on an annual basis by these and other monitoring organizations. A few regional monitoring systems routinely catalog all seismicity above M 2. Broadband regional networks routinely produce moment tensor solutions for regional earthquakes greater than M 4 (e.g., Figure 4.11). In many regions, however, sensor arrays are too sparse to record events below about M 3. Further work is needed to upgrade regional networks to broadband instrumentation and digital telemetry, a task taken on by the ANSS program, and to extend cataloging procedures to include additional source parameters such as characteristic dimensions (31).

Volcano Seismology

Earthquake seismology plays an important role in the study of volcanoes and the prediction of volcanic eruptions (Box 4.3). Seismicity within a volcano is caused by rockfalls and avalanches, tectonic faulting, rock fracture during magma transport, and low-frequency tremors associated with the flow of melt below a volcano. Before a major eruption, earthquakes typically occur in swarms, where the rates of seismicity may be elevated by two to three orders of magnitude above background levels. Monitoring of this activity by seismic networks yields information about the shape, size, and physical state of magma reservoirs (32). A complete understanding of the volcanic system will require a synthesis of seismological observations into a coherent model of eruption mechanics, constrained by fluid dynamics and elastodynamics of magma flow in a porous, brittle medium. Recent advances in portable instrumentation and theory for analyzing the data are stimulating important advances in this field. For example, results obtained at Redoubt volcano using nonlinear, travel-time tomography show that imaging the three-dimensional structure of a volcano is feasible down to a scale of a few hundred meters (33).

Beyond first-order mapping of the fluid-pathway geometry using broadband data there are many questions about the dynamics of magma transport that can be investigated using short-period seismic data. For example, two basic families of volcanic processes generate signals in the 0.1- to 1-second seismic band. The first involves volumetric sources in which the fluid plays an active role in the generation of elastic waves, and the second consists of shear or tensile sources caused by brittle rock failure. In volumetric sources, elastic radiation is generated by multiphase fluid flow through cracks and conduits; long-period events, volcanic

FIGURE 4.11 Moment tensor solutions for northern California obtained from the Berkeley Digital Seismic Network, illustrating advanced processing for regional earthquakes. These solutions are obtained automatically and in quasi-real time using broadband data for a subset of events of M 4 and larger. Robustness of the solution is assessed by comparing the results of two independent inversions— one in the time domain, the other in the frequency domain. SOURCE: B. Romanowicz, D. Dreger, and H. Tkalcic, University of California, Berkeley.

tremor, and seismic signals related to mechanisms of degassing in open vents are manifestations of such processes. The second family includes volcano-tectonic earthquakes, in which magmatic processes provide the source of energy for rock failure. These sources occur in the brittle rock around a magma reservoir and conduit and are associated primarily with the structural response of the volcanic edifice to the intrusion and/or

|

BOX 4.3 Prediction of the Mt. Pinatubo Eruption Mt. Pinatubo in the Philippines is one of a chain of composite volcanoes known as the Luzon volcanic arc, which are being formed by the rise of magma from an eastward-dipping subduction zone along the Manila Trench. On the afternoon of April 2, 1991, villagers were surprised by a series of small explosions from a line of vents near the north flank of the summit dome. Within a few days, scientists from the Philippine Institute of Volcanology and Seismology (PHIVOLCS) installed several portable seismographs near the northwest foot of Mt. Pinatubo and began recording small earthquakes at a rate of about 40 to 140 per day. In late April, PHIVOLCS was joined by a group from the USGS, and the joint team installed a network of seven seismometers, telemetered to Clark Air Base, a major U.S. Air Force facility located just east of the volcano. Numerous small earthquakes (M lower than 2.5) continued through May, clustered in a zone 2 to 6 kilometers deep and caused by fracturing of brittle rock by rising magma. Beginning on June 1, a second cluster of earthquakes developed in the upper 5 kilometers near the fuming summit vents. A small explosion early on June 3 initiated an episode of increasing volcanic unrest characterized by intermittent minor emission of ash, increasing seismicity beneath the vents, and episodes of harmonic tremor (a prolonged rhythmic seismic signal believed to be related to sustained subsurface movement of magma or volatile material). PHIVOLCS issued a level-3 alert on June 5, indicating the possibility of a major pyroclastic eruption within two weeks. A tiltmeter high on Mt. Pinatubo began to show a gradually increasing outward tilt early on June 6. Seismicity and the outward tilt continued to increase until late afternoon on June 7, when an explosion generated a column of steam and ash 7 to 8 kilometers high. After the explosion, seismicity decreased and the increase in outward tilt stopped. 
PHIVOLCS promptly announced an increase to level-4 alert (eruption possible within 24 hours) and recommended additional evacuations from the volcano’s flanks. The period from June 8 through early June 12 was marked by continuing, weak ash emission and episodic harmonic tremor. On June 9, PHIVOLCS raised the alert level to 5 (eruption in progress). The radius of evacuation was extended to 20 kilometers, and the number of evacuees increased to about 25,000. The first major explosive eruption began at 0851 hours on June 12, generating a column of ash and steam that rose to 19 kilometers. Although a burst of seismic tremor had occurred several hours earlier, no specific seismic precursor immediately preceded this event; a high-amplitude seismic signal and the rise of the eruptive column seemed to begin simultaneously. Seismic records indicated that this event lasted about 35 minutes. This was the first of a series of brief explosive eruptions that occurred with increasing frequency from June 12 through 15. The climactic eruption began at 1430 hours on June 15—the world’s largest in more than half a century. The successful forecast of the Mt. Pinatubo eruption enabled Philippine civil leaders to organize massive evacuations that saved thousands of lives and greatly reduced the destruction at Clark Air Base (military aircraft worth $200 million to $275 million were also removed).1 Nevertheless, the coincidence of the climactic eruption with a typhoon led to more than 300 deaths and extensive property damage, caused primarily by the extraordinarily broad distribution of heavy, water-saturated tephra-fall deposits. |

withdrawal of fluids. Volcano-tectonic earthquakes act as stress gauges that map stress concentrations in the volcanic structure. Dense distributions of earthquake hypocenters therefore provide a signature of magma migration through volcanoes. However, gaining a better understanding of the dynamics of magma transport will require more information about the source processes for the long-period events (34).

4.2 TECTONIC GEODESY

The elastic strain energy unleashed in earthquakes accumulates in the Earth’s crust through the imperceptibly slow motions of plate tectonics. The strain rates in tectonically active areas such as the western United States are only a few parts in 10 million per year (35). The tools of geodesy can be used to measure these small tectonic deformations on global to local scales, furnishing data that have proven essential for estimating the long-term slip rates and seismogenic potential of lithospheric faults. In addition, geodesy provides the means to detect transient (time-localized) strains having durations from minutes to years that do not generate elastic waves and are therefore invisible to seismic monitoring. These transients comprise fault creep and stress relaxation following large earthquakes (postseismic transients), as well as the slow, localized strains that are predicted by laboratory experiments to precede dynamic faulting (deformation precursors). They also include an observed but poorly understood class of isolated events known as “silent earthquakes,” which may be responsible for aseismic slip on some faults and may play a role in concentrating stress before some large earthquakes.

Tectonic geodesy includes a wide array of techniques with complementary strengths and sensitivities. Geodetic measurements vary in scale from systems that allow the recovery of three-dimensional position anywhere on the planet’s surface, such as GPS, to systems that are extremely localized and sensitive, such as borehole strainmeters.

Traditional Geodetic Techniques

Many of the measurement technologies used in tectonic geodesy grew out of the needs of precise surveying. Both activities share a requirement for extremely precise measurements, and the practice of geodesy has a strong tradition of characterizing and minimizing measurement errors.

- Triangulation. This surveying technique, invented by ancient agricultural societies, can measure angles between distant points with a precision of approximately 2 arc-seconds, corresponding to a shear strain of about 5 parts per million. Triangulation requires a clear line of sight from an observing station to two or more target monuments, typically a few tens of kilometers distant (usually situated on high ground). Triangulation played an important role in estimating the strains and displacements associated with the 1906 San Francisco earthquake, providing much of the observational foundation for Reid’s elastic rebound model. Triangulation is expensive, however, because observing and target sites must be occupied simultaneously by experienced personnel. Consequently, the method has been largely abandoned by the tectonic geodesy community in favor of more accurate and flexible techniques, primarily GPS (described below).

- Trilateration. In the 1970s, the ability to measure long distances with laser reflectors improved the utility of tectonic geodesy. Trilateration provided the means for repeated strain measurements over baselines of tens of kilometers with sufficient precision (about 300 parts per billion) to monitor the strain accumulation between large earthquakes (36). It allowed the USGS to confirm that slip rates observed over decades across major faults in California are quite similar to geological estimates, which are averaged over thousands to millions of years. Like triangulation, this method has been superseded by GPS.

- Spirit Leveling. Vertical displacements measured by spirit leveling have been used to characterize the vertical component of the deformation field associated with earthquakes (37). Reports of postseismic and even precursory deformation measured with leveling have been published, but the limited accuracy and the possibility of systematic error have made these reports controversial (38). Leveling surveys are very labor intensive and costly, although they remain the most precise way to measure relative elevations over distances of less than about 25 kilometers. Over larger distances, GPS provides a more accurate, and much more economical, alternative to leveling (39) and offers the tremendous advantage of continuous temporal sampling.
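The precision figures quoted for triangulation and trilateration can be checked with a short calculation. This is a sketch under stated assumptions: it treats the quoted shear strain as tensor shear strain (half the angular error in radians), and the 30-kilometer baseline is a hypothetical value chosen from the "tens of kilometers" range mentioned above. The function names are illustrative.

```python
import math

# One arc-second expressed in radians.
ARCSEC_TO_RAD = math.pi / (180.0 * 3600.0)


def tensor_shear_strain_from_angle(angle_error_arcsec):
    """Shear strain equivalent to a given angular measurement error.

    Assumption: tensor shear strain, i.e. half the change in angle
    (engineering shear strain would be the full angle in radians).
    """
    return 0.5 * angle_error_arcsec * ARCSEC_TO_RAD


def length_error_mm(fractional_precision, baseline_km):
    """Length uncertainty in millimeters for a fractional precision
    applied to a baseline given in kilometers."""
    return fractional_precision * baseline_km * 1.0e6


# Triangulation: about 2 arc-seconds of angular precision.
triangulation_strain = tensor_shear_strain_from_angle(2.0)
print(f"triangulation: {triangulation_strain * 1e6:.2f} parts per million")

# Trilateration: about 300 parts per billion over a hypothetical 30-km line.
trilateration_error_mm = length_error_mm(300e-9, 30.0)
print(f"trilateration: {trilateration_error_mm:.1f} mm over 30 km")
```

Under these assumptions the angular precision maps to roughly 5 parts per million, consistent with the figure quoted for triangulation, while trilateration resolves length changes of under a centimeter on a 30-kilometer line.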

Space Geodetic Systems

The space program has contributed much of the new technology developed for tectonic geodesy since the 1970s. Ultraprecise methods of space-based geodesy were pioneered in Very Long Baseline Interferometry using astronomical sources, but they reached their current state of capability by taking advantage of dedicated satellite platforms.

- Very Long Baseline Interferometry (VLBI) and Satellite Laser Ranging (SLR). The pioneering geodetic techniques of VLBI and SLR, both capable of monitoring plate motions at a global scale, were developed under the National Aeronautics and Space Administration (NASA) Crustal Dynamics Program. VLBI uses simultaneous observations of high-frequency radio waves from extragalactic quasars to measure the baselines connecting a set of radio telescopes. Precision approaches one part per billion (millimeter changes over 1000-kilometer baselines). SLR uses laser pulses reflected from special satellites (e.g., Laser Geodynamics Satellite and Starlette) to locate optical telescopes on the ground. SLR is less precise than VLBI because of lower signal-to-noise levels and the need to solve for the motion of the satellite and ground stations. VLBI confirmed Wegener’s concept of continental drift and provided a rough approximation of how the Pacific-North American plate motion is distributed across western North America (40). SLR also contributed data to the study of plate boundary deformation zones, particularly in the Mediterranean region. VLBI and SLR are both very cumbersome and expensive because they require sensitive instrumentation to detect very weak signals. Consequently, they have been replaced in nearly all tectonic applications with GPS measurements.

- Global Positioning System. Tectonic geodesy was rapidly transformed by the deployment of the first GPS constellation in the mid-1980s. These satellites emit strong, precisely timed radio signals easily detectable by 100-millimeter antennae and can be used to locate points anywhere on the Earth’s surface. GPS instrumentation, which underlies most modern autonomous satellite navigation systems and a host of military and commercial applications, has become quite affordable since its initial development, so GPS surveying can be done by individual investigators (41). Because of its low cost and portability, GPS has quickly replaced other geodetic techniques for most tectonic studies, including dense sets of deformation measurements spanning plate boundaries (42).

GPS locations are measured relative to the orbiting satellites, whose positions are in turn estimated relative to tracking stations on the ground. Geodetic accuracy thus depends on knowing the location of the tracking stations, which are moving relative to each other because of tectonic motions. At present, the tracking station coordinates are best determined by making frequent GPS measurements at sites tied into a standard (absolute) reference frame by VLBI or SLR observations (43).

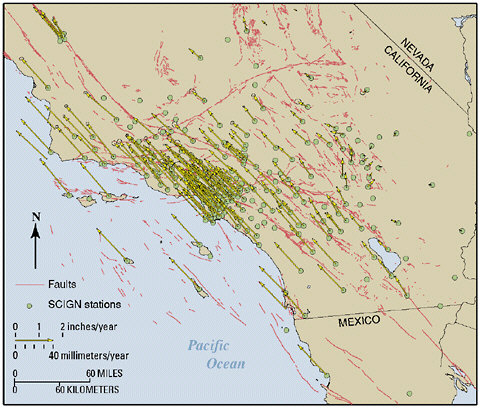

GPS measurements can be performed in either campaign or permanent modes. The campaign mode involves temporary occupation of geodetic benchmarks in much the same way as for earlier triangulation and trilateration surveys. Several factors (the decreasing cost of receivers, the high labor costs of campaign measurements, and the loss of precision caused by antenna setup) have motivated the installation of permanent GPS stations in configurations similar to seismic monitoring networks. Automated arrays of continuously sampled GPS receivers can measure deformation in real time as often as once per minute (44). Japan has the largest fixed GPS network with more than 1000 stations. In comparison, the Bay Area Regional Deformation network in northern California includes about 35 stations and the Southern California Integrated GPS Network (SCIGN) now comprises 250 stations (Figure 4.12). The arrays in Japan and southern California have tested many design elements and demonstrated what features of time-dependent strain are most significant. Permanent GPS arrays will continue to grow as receiver and data-transmission costs drop further.

Plans call for the permanent GPS networks in the western United States to be expanded and consolidated to form a major component of the Plate Boundary Observatory (PBO), proposed as part of the EarthScope program (see Chapter 6). The GPS component of the PBO would comprise more than 1000 continuously recording GPS receivers, with several hun-

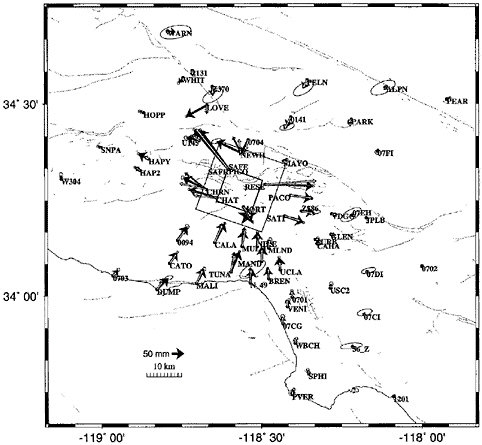

FIGURE 4.12 Velocities relative to the North American Plate of geodetic movements from 15 years of GPS measurement in Southern California, showing ongoing deformation within a complex system of faults. SOURCE: Southern California Earthquake Center and U.S. Geological Survey.

dred established as a geodetic backbone for the study of plate boundary tectonics. The remaining GPS stations would be deployed in denser clusters to provide detailed data on active faults and volcanic systems within the most active zones.

The broad coverage and high precision of GPS geodesy permit individual faults to be studied as components in strongly interacting systems rather than as isolated elements. A disadvantage of GPS is that motion can be measured only at points on the ground where receivers are located. Although the cost of GPS receivers is decreasing steadily, it is impossible to measure the deformation field densely enough to answer some key questions of earthquake science.

-

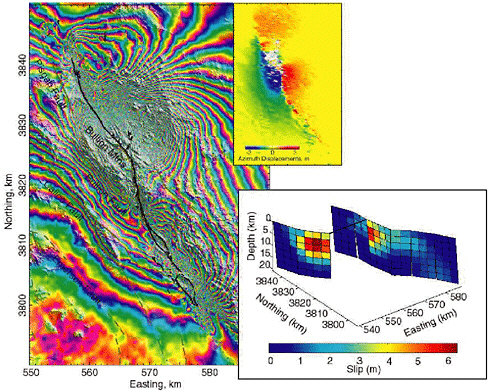

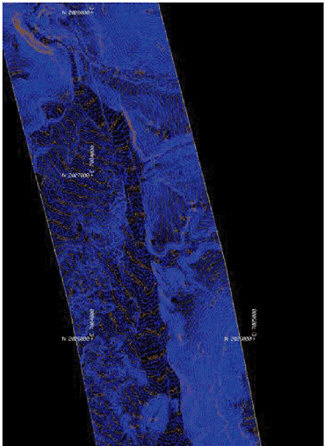

Interferometric Synthetic Aperture Radar. The most recent innovation in tectonic geodesy is InSAR, which has imaged earthquake deformations at a level of detail unanticipated only 10 years ago (45). InSAR measures deformation by comparing reflected radar waves recorded on successive passes of a satellite from nearly identical positions. The simplest InSAR measurements are sensitive to just one component of displacement (toward the satellite), but stereoscopic measurements (pairs of images from multiple locations) allow measurement of vector displacements (Figure 4.13). InSAR is subject to errors from changes in reflective properties such as those caused by seasonal vegetation changes and snowfall, and it lacks the temporal resolution of GPS. However, the ability to map essentially continuous displacements over large swaths of active plate boundaries offers an enormous advantage.

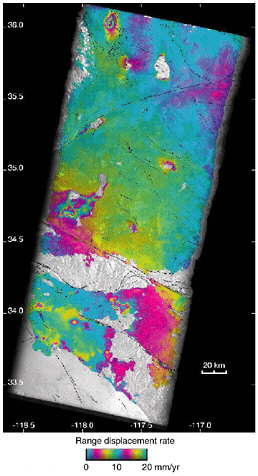

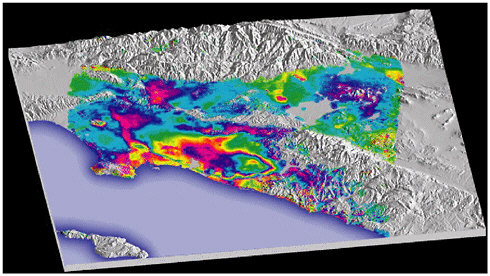

Because they can map centimeter-level deformations with a spatial resolution on the order of 100 meters, InSAR systems are useful for determining co-seismic and interseismic slip on faults. This is particularly important in remote areas lacking GPS stations. InSAR has shown an ability to measure co-seismic slip on subsidiary faults, variations in slip distribution along strike, and slip on previously unknown faults. With its continuous coverage, InSAR systems can map surface displacements before, during, and after earthquakes or volcanic eruptions, providing time-dependent data on the mechanics of fault loading, earthquake rupture, and earthquake interaction, and they can image strain accumulation across broad tectonic zones (Figure 4.14), as well as regional subsidence induced by petroleum production and groundwater withdrawal. For example, InSAR data have been used to disentangle the latter types of motion from tectonic strains observed by GPS networks in the Los Angeles basin (Figure 4.15). Finally, InSAR has been used to detect postseismic poroelastic effects induced by fault movements (46). An important component of the proposed EarthScope project is a dedicated InSAR satellite, the Earth Change and Hazard Observatory (ECHO) (47).
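The way an interferogram encodes displacement toward the satellite can be sketched with the standard two-way phase relation, in which a phase change of 2π corresponds to half a radar wavelength of line-of-sight motion. The wavelength below (about 5.66 centimeters, typical of C-band radars such as those on the European Remote Sensing satellites) is an assumption for illustration, the function names are hypothetical, and sign conventions, which vary between processing packages, are ignored so that only magnitudes are returned.

```python
import math

# Assumed C-band radar wavelength in meters (illustrative value).
ASSUMED_WAVELENGTH_M = 0.0566


def los_displacement_m(phase_change_rad, wavelength_m=ASSUMED_WAVELENGTH_M):
    """Magnitude of line-of-sight displacement for a given change in
    interferometric phase. The factor 4*pi (rather than 2*pi) reflects
    the two-way travel path of the radar signal."""
    return wavelength_m * abs(phase_change_rad) / (4.0 * math.pi)


def fringes_to_displacement_m(n_fringes, wavelength_m=ASSUMED_WAVELENGTH_M):
    """Line-of-sight displacement represented by a number of full
    color cycles (fringes) in an unscaled interferogram."""
    return los_displacement_m(2.0 * math.pi * n_fringes, wavelength_m)


one_fringe_mm = fringes_to_displacement_m(1) * 1000.0
print(f"one fringe = {one_fringe_mm:.1f} mm of line-of-sight motion")
```

Because each fringe represents only a few centimeters of motion at this wavelength, counting fringes across a scene gives the centimeter-level sensitivity described above. Published figures often rescale the color cycle, so the value per fringe in a given figure may differ from this raw conversion.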

FIGURE 4.13 InSAR-derived interferogram (left) and azimuth offset field (upper right) show the surface deformation from the October 20, 1999, M 7.1 Hector Mine earthquake as determined by M. Simons et al. (2002). Colored fringes show radar phase introduced by earthquake-induced changes in distance from each point on the ground to the orbiting radar. A solution for fault slip at depth inferred from the radar data (lower right) as determined by Jonsson et al. (2002) indicates a maximum slip of 6 meters at 10-kilometer depth just northwest of the hypocenter. The fault has two branches in its northern part: the westernmost branch, which has greater slip than the eastern branch, has been offset in the figure for clarity. SOURCE: M. Simons, Y. Fialko, and L. Rivera, Coseismic deformation from the 1999 Mw 7.1 Hector Mine, California, earthquake as inferred from InSAR and GPS observations, Bull. Seis. Soc. Am., 92, 1390-1402, 2002; S. Jonsson, H. Zebker, P. Segall, and F. Amelung, Fault slip distribution of the Mw 7.1 Hector Mine, California, earthquake estimated from satellite radar and GPS measurements, Bull. Seis. Soc. Am., 92, 1377-1389, 2002. Copyright Seismological Society of America.

FIGURE 4.14 InSAR-observed deformation along the San Andreas fault from European Remote Sensing satellite radar. Many interferograms were combined to detect the elastic strain built up along the fault during the interval from 1992 to 2000. One color cycle shows 10 millimeters per year of ground displacement. Other local deformation signatures due to groundwater and oil withdrawal in urban areas are clearly visible. InSAR also reveals unexpected transient strain accumulation along the Blackwater-Little Lake fault system within the eastern California shear zone in a 120-kilometer-long, 20-kilometer-wide zone of concentrated shear between the southern end of the 1872 Owens Valley earthquake surface break and the northern end of the 1992 Landers earthquake surface break. The shear zone is continuous through the Garlock fault, which does not show any evidence of left-lateral slip during the same period. A dislocation model of the observed shear indicates right-lateral slip at 7 ± 3 millimeters per year on a vertical fault below 5 kilometers depth, a rate that is two to three times greater than the geologic rates estimated on northwest-trending faults in the eastern Mojave area. SOURCE: G. Peltzer, F. Crampé, S. Hensley, and P. Rosen, Transient strain accumulation and fault interaction in the eastern California shear zone, Geology, 29, 975-978, 2001.

FIGURE 4.15 Perspective view of the Los Angeles region with superimposed InSAR measurements of ground motions between May and September 1999, indicating seasonal and secular variations due to groundwater withdrawal and recharge. Large regions of metropolitan Los Angeles are rising and falling by up to 11 centimeters annually, and a large portion of the city of Santa Ana is sinking at a rate of 12 millimeters per year. The repeated color banding within the large oval shows approximately 5 centimeters of subsidence. The straight line located just inside the coastline is the Newport-Inglewood fault, which controls the extent of subsidence. The small isolated bull’s-eye feature north of Palos Verdes and east of downtown Los Angeles is from pumping activity in the Inglewood oil field. The motion caused by the withdrawal of water, oil, and gas from the basin contaminates GPS measurements of the deformation field. After corrections for these effects using the InSAR data, the contraction across the Los Angeles Basin is estimated to be approximately 4.5 millimeters per year. This deformation is thought to be accommodated primarily on blind thrust faults, such as those that ruptured in the 1987 Whittier Narrows and 1994 Northridge earthquakes. SOURCE: G.W. Bawden, W. Thatcher, R.S. Stein, K.W. Hudnut, and G. Peltzer, Tectonic contraction across Los Angeles after removal of groundwater pumping effects, Nature, 412, 812-815, 2001. Reprinted by permission from Nature. Copyright 2001 Macmillan Publishers Ltd.

Strain Measurements

A separate facet of geodesy is the measurement of strain over small spatial scales using self-contained instruments called strainmeters. The discovery in 1960 of aseismic slip or “creep” on a segment of the San Andreas fault in central California (48) led to methods for extremely localized measurements of fault displacement using invar tapes, wire creepmeters, alignment arrays, short-baseline triangulation, and laser length surveys (49). Deployment of these instruments revealed both steady and episodic creep occurring at shallow depths (<4 kilometers) on some faults, often near the time of earthquakes (50). Aseismic creep at greater (seismogenic) depths, such as observed in central California, appears to be rare.

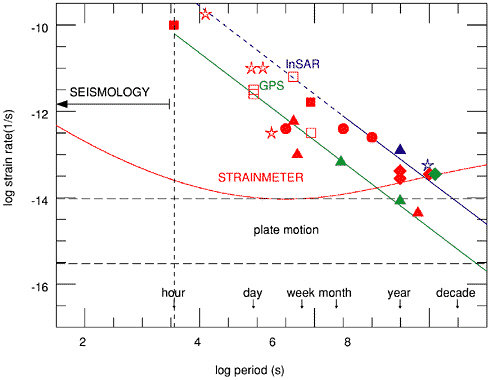

High-resolution laser strainmeters, borehole strainmeters, and tiltmeters are used to measure deformation very precisely in a small region. Their sensitivity approaches 10⁻¹², but long-term stability is a problem because they have small footprints susceptible to very localized, nontectonic deformations, such as ground swelling in rainstorms. The most stable instruments are the laser strainmeters and water-tube tiltmeters at Piñon Flat Observatory in California, which derive their stability from their length (>500 meters) and the “optical anchors” used to couple the end monuments to rock at about 25-meter depth (51). Borehole instruments suffer drift over several months, but they are very precise over shorter times and have widespread application for measuring transient deformation, including slow and silent earthquakes (52).

Under the best conditions, these instruments are as much as two to three orders of magnitude more sensitive than GPS at short periods. At longer periods, the relative advantage declines significantly, although long-baseline instruments may retain advantages even for time scales of years. Because they measure strain, which decays as the cube of distance from a dislocation, they must be positioned in reasonably close proximity to the source. Small numbers of borehole strainmeters have been operating for years in a few select locations. The proposed Plate Boundary Observatory would deploy several hundred borehole and perhaps several long-baseline strainmeters at strategically chosen sites along the San Andreas fault system, as well as at several volcanic systems (53).
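The cube-law decay mentioned above has a practical consequence that a short calculation makes concrete. The following is a minimal sketch, assuming the order-of-magnitude far-field scaling strain ≈ M0 / (μ r³) with the geometry-dependent prefactor set to one; the function names and the default rigidity of 3 × 10¹⁰ pascals are illustrative assumptions, not values taken from this chapter.

```python
def strain_at_distance(moment_nm, distance_m, shear_modulus_pa=3.0e10):
    """Order-of-magnitude strain from a point dislocation.

    Assumes strain ~ M0 / (mu * r**3); the prefactor of order one that
    depends on source geometry and azimuth is deliberately omitted.
    """
    return moment_nm / (shear_modulus_pa * distance_m ** 3)


def detection_range_m(moment_nm, strain_threshold, shear_modulus_pa=3.0e10):
    """Distance at which the strain above falls to the instrument threshold."""
    return (moment_nm / (shear_modulus_pa * strain_threshold)) ** (1.0 / 3.0)


if __name__ == "__main__":
    m0 = 10.0 ** (1.5 * 5.0 + 9.1)  # moment of a hypothetical M 5 slip event, N*m
    for threshold in (1e-9, 1e-12):
        r_km = detection_range_m(m0, threshold) / 1e3
        print(f"threshold {threshold:.0e}: range of roughly {r_km:.0f} km")
```

Under this scaling, a thousandfold gain in sensitivity extends the detection range by only a factor of ten, and doubling the distance to the source cuts the signal by a factor of eight, which is why instrument siting matters as much as instrument quality.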

Geodetic Observations of Earthquake Processes

The increasing precision and density of geodetic measurements are furnishing new constraints on how complex fault systems are loaded, how earthquakes interact, the nature of aseismic deformation transients, and how the rheological structure of the Earth’s crust controls the earthquake process.

Plate Tectonics and Fault Motions. Geodetic studies have shown that plate-tectonic models, based on data that average over thousands to millions of years, can accurately predict the short-term motions across plate boundary zones a few hundred kilometers wide. Denser measurements from geodetic networks place strong constraints on the slip rates on faults within these zones and are especially valuable for faults that are poorly exposed or otherwise not amenable to geological study. If proper accounting is made for fault interactions and postseismic deformations, geodetic slip rates for faults bounding small tectonic blocks with lateral dimensions of 20-50 kilometers generally agree with those estimated by geologic methods for much longer time intervals, although clear discrepancies have been documented (Figure 4.14).

FIGURE 4.16 Horizontal displacement (heavy black vectors and the corresponding one-sigma error ellipses) associated with the Northridge earthquake measured by the GPS network in southern California. SOURCE: K.W. Hudnut, Z. Shen, M. Murray, S. McClusky, R. King, T. Herring, B. Hager, Y. Feng, A. Donnellan, and Y. Bock, Coseismic displacements of the 1994 Northridge, Calif., earthquake, Bull. Seis. Soc. Am., 86, S19-S36, 1996. Copyright Seismological Society of America.

By integrating the slip rate over the areas of faults, one can estimate the rate at which seismic moment is accumulating. If it can be assumed that all of this moment is released in earthquakes (no aseismic slip) and if the relative distribution of earthquake size is known, then the long-term moment rate sets the multiplicative constant needed to infer long-term earthquake frequency (54). Because aseismic creep at seismogenic depths seems to account for very little of the moment budget in most continental settings, unknown fault geometry and uncertainties in the earthquake size distribution are often the limiting factors in estimating earthquake frequency (55).
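The moment-budget argument can be sketched numerically. The example below is an illustration under stated assumptions rather than the method of the cited studies: it assumes a truncated Gutenberg-Richter size distribution between hypothetical magnitudes `m_min` and `m_max`, the standard moment-magnitude relation M0 = 10^(1.5 M + 9.1) newton-meters, and a b-value different from 1.5. All function names and the fault dimensions in the usage lines are hypothetical.

```python
import math


def moment_from_magnitude(mw):
    """Seismic moment (N*m) from moment magnitude, standard definition."""
    return 10.0 ** (1.5 * mw + 9.1)


def moment_rate(shear_modulus_pa, fault_area_m2, slip_rate_m_per_yr):
    """Moment accumulation rate (N*m/yr): rigidity x fault area x slip rate."""
    return shear_modulus_pa * fault_area_m2 * slip_rate_m_per_yr


def gr_scale_constant(mdot, b, m_min, m_max):
    """Multiplicative constant a of the assumed magnitude density
    n(m) = a * b * ln(10) * 10**(-b*m) on [m_min, m_max], chosen so that
    the summed moment release per year equals mdot. Requires b != 1.5.
    """
    c = 1.5 - b
    span = 10.0 ** (c * m_max) - 10.0 ** (c * m_min)
    return mdot * c / (b * 10.0 ** 9.1 * span)


def annual_rate_at_or_above(m, a, b, m_max):
    """Events per year with magnitude between m and m_max."""
    return a * (10.0 ** (-b * m) - 10.0 ** (-b * m_max))


if __name__ == "__main__":
    # Hypothetical fault: 100 km long, locked to 15 km, slipping 34 mm/yr.
    mdot = moment_rate(3.0e10, 100e3 * 15e3, 0.034)
    a = gr_scale_constant(mdot, b=1.0, m_min=5.0, m_max=8.0)
    rate = annual_rate_at_or_above(7.0, a, 1.0, 8.0)
    print(f"moment rate {mdot:.3e} N*m/yr; M>=7 about every {1.0 / rate:.0f} yr")
```

The result is sensitive to `m_max` and the b-value, which mirrors the point made above that uncertainty in the size distribution, rather than in the moment rate itself, often limits the frequency estimate.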

Strain rates depend on the slip distribution and geometry of faults, so spatial variations in measured strain rate reveal significant information about the faults. For example, spatial variations of strain rate along the San Andreas fault near Parkfield, California, show details of the slip rate on the fault plane. The San Andreas is primarily locked to a depth of about 15 kilometers to the southeast of that location and is creeping to the northwest. The slip distribution is important for understanding the physical mechanism of the transition and the stress accumulation leading to future earthquakes (56).

The crust on either side of the creeping section of the San Andreas fault accumulates very little strain, and the geodetic displacement rate (35 millimeters per year) is virtually identical to the geologic slip rate on the San Andreas (34 millimeters per year). Elsewhere on the San Andreas and on other strike-slip faults, secular deformation is continuous across each fault. The deformation patterns can be matched with a model in which each fault is locked by friction to a depth of 10 to 20 kilometers and slips freely below that depth in a viscoelastic lower crust.
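The locked-fault picture can be illustrated with the classic elastic screw-dislocation approximation for a long vertical strike-slip fault, in which the fault-parallel surface velocity is v(x) = (s/π) arctan(x/D) for deep slip rate s and locking depth D. This is a minimal sketch that ignores the viscoelastic behavior of the lower crust mentioned above; the function names and the parameter values in the usage lines are illustrative assumptions.

```python
import math


def interseismic_velocity(x_km, slip_rate_mm_yr, locking_depth_km):
    """Fault-parallel surface velocity (mm/yr) at distance x from the fault
    trace, for a fault locked above locking_depth_km and slipping freely
    below it (elastic half-space, infinitely long vertical fault).
    """
    return (slip_rate_mm_yr / math.pi) * math.atan(x_km / locking_depth_km)


def velocity_gradient(x_km, slip_rate_mm_yr, locking_depth_km):
    """Derivative dv/dx in (mm/yr)/km; multiply by 1e-6 for units of 1/yr."""
    d = locking_depth_km
    return (slip_rate_mm_yr / math.pi) * d / (x_km ** 2 + d ** 2)


if __name__ == "__main__":
    s, d = 35.0, 15.0  # hypothetical slip rate (mm/yr) and locking depth (km)
    for x in (-100.0, -15.0, 0.0, 15.0, 100.0):
        v = interseismic_velocity(x, s, d)
        g = velocity_gradient(x, s, d)
        print(f"x = {x:7.1f} km  v = {v:7.2f} mm/yr  dv/dx = {g:.3f} (mm/yr)/km")
```

In this model the velocity varies smoothly across the fault, with half of the far-field relative motion taken up within one locking depth on either side, so a deeper locking depth spreads the same motion over a wider zone. As D approaches zero the profile approaches a step at the fault, which corresponds to the creeping-section case of little strain in the adjacent crust.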

Earthquake Rupture and Subseismic Strain Events. GPS data provide independent estimates of earthquake-induced fault displacement (57), as illustrated in Figure 4.16. Geodetically derived images of slip variations for the recent Loma Prieta and Landers earthquakes rival the spatial resolution achieved from models based on seismic data (58). In the case of the 1989 Loma Prieta earthquake, a geodetically derived slip model (59) supported seismic models that found a variation of slip direction with distance along the fault (60). Models of the 1992 Landers, California, earthquake based on GPS data confirm the strong spatial variability and location of slip derived from modeling the strong motion data (61).

The 1992 Landers, 1999 Izmit (Turkey), and 1999 Hector Mine earthquakes all caused substantial deformation not detectable with existing GPS arrays. InSAR images from the Landers earthquake show co-seismic slip on the Garlock and other faults, which were not otherwise known to have slipped in the main shock (62). Many subsidiary faults, some previously unmapped, slipped more than 10 millimeters during the 1999 Hector Mine earthquake.

Geodetic data for the 1989 Loma Prieta and 1994 Northridge earthquakes reveal co-seismic deformation that cannot be explained by slip on the seismically determined fault plane. This deformation may have been caused by vertical variations in elastic moduli or by secondary deformation in the hanging wall of these faults (63).

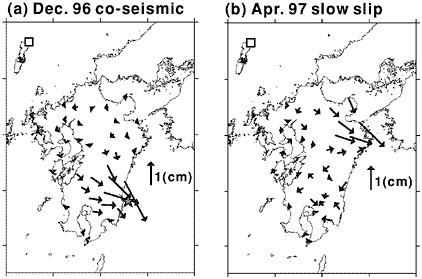

One of the most significant discoveries in tectonic geodesy has been the detection of episodic strain transients that have earthquake-like spatial patterns but occur too slowly (over days to months) to excite seismic waves. These subseismic events, or “silent earthquakes,” have been observed in volcanic areas, such as the M 7 Izu-Oshima earthquake in 1978 (64), and on shallow creeping faults such as the San Andreas between Parkfield and San Juan Bautista (Figure 4.17). Continuously recording stations of the Japanese GPS network detected a much larger event (M 6.5) with a duration of about a year on the subduction interface under the Bungo Channel, between the islands of Kyushu and Shikoku (Figure 4.18). In 1999, a Canadian team used GPS networks in southwest British Columbia and northwest Washington to detect a 15-day, M 6.7 silent slip event at depths of 30-40 kilometers on the Cascadia subduction interface (65). Further analysis of a full decade of continuously recorded data has uncovered a quasi-periodic series of similar events with an average recurrence interval of about 14 months (66). It is not yet known whether such sequences are characteristic of subduction zones, nor is it understood how these subseismic transients relate in time and space to great earthquakes that occur every several hundred years along shallower segments of the subduction interface. These research problems have clear connections to the fundamental issues of earthquake predictability.

PostSeismic Deformation and Long-Term Transients The stress changes from large earthquakes cause several types of secondary deformation that can be detected by surface geodesy. These include additional slip on the fault surface (afterslip); viscous relaxation in the hotter, more ductile lower crust; and pressure-driven fluid flow. Postseismic strain transients due to one or more of these mechanisms have been documented for a number of earthquakes, such as 1906 San Francisco (67), 1957 Kern County (68), 1985 Central Chile (69), and 1989 Loma Prieta (70). The 1992 Landers earthquake, where the cumulative postseismic deformation may have equaled 10 to 20 percent of the M 7.3 mainshock, was the first to be observed by a full suite of modern geodetic methods: GPS (71), laser strainmeters (72), and InSAR (73). The strain transients observed by these techniques had different durations—about 6, 50, and 1000 days, respectively—either because of different instrumental sensitivities or because separate inelastic processes were operating (Figure 4.19). More recent

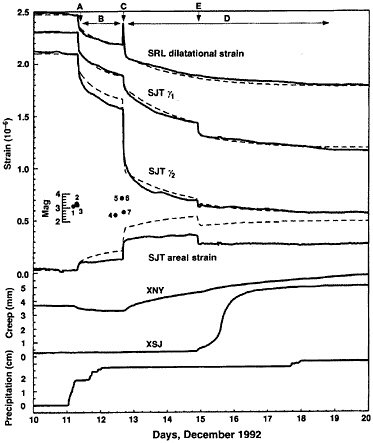

FIGURE 4.17 Borehole strain and creepmeter data from San Juan Bautista for 10 days in December 1992, showing several slow earthquakes. The observations suggests rupture speeds of 0.1 to 0.5 meter per second for these events, compared to 1 to 5 kilometers per second for ordinary earthquakes. SOURCE: A.T. Linde, M.T. Gladwin, M.J.S. Johnston, R.L. Gwyther, and R.G. Bilham, A slow earthquake sequence on the San Andreas fault, Nature, 383, 65-68, 1996. Reprinted by permission from Nature. Copyright 1996 Macmillan Publishers Ltd.

earthquakes, including the 1999 Izmit and 1999 Hector Mine events, have added data sets of similar diversity that are under active analysis.

Viscoelastic diffusion following large earthquakes in California and Japan generates large strain changes, even decades later. Several authors have argued that the resulting stresses might propagate slowly, contributing to deformation and possibly earthquake triggering at very great distances from a large earthquake (74).

FIGURE 4.18 Observations of a slow earthquake under the Bungo Channel between Kyushu and Shikoku, recorded by the continuously operating Japanese GPS network. The GPS-derived vector motions indicated by arrows are from (a) an ordinary earthquake on the subduction interface and (b) a subsequent silent earthquake that did not radiate seismic waves. The patterns of deformation are quite similar (though displaced), indicating that the silent earthquake represents slip on a nearby patch of the subduction interface. The moment magnitude of the silent earthquake was estimated to be M 6.8. SOURCE: Modified from Y. Yagi, M. Kikuchi, and T. Sagiya, Co-seismic slip, post-seismic slip, and aftershocks associated with two large earthquakes in 1996 in Hyuga-nada, Japan, Earth Planets Space, 53, 793–803, 2001.

Tectonic Deformation and Future Earthquakes. Geodetic data have confirmed Reid’s hypothesis that strain accumulates in the region surrounding a fault before a major earthquake, but not his conjecture that major earthquakes can be predicted from the time required to recover the strain released in the previous event. Although the latter has not yet been fully tested owing to measurement limitations and the lack of long-term observations, Reid’s notion of an earthquake “cycle” appears to be at odds with the observed complexity of the earthquake process and the inherent irregularity of stick-slip behavior.

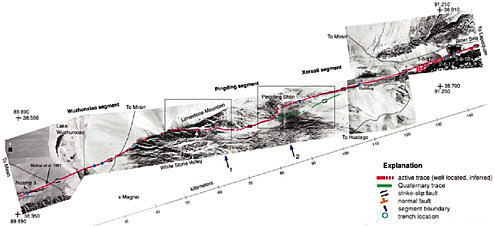

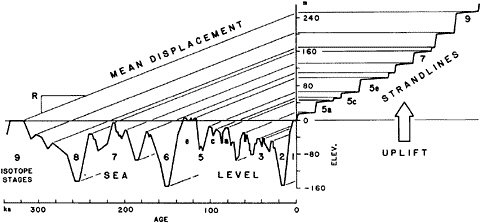

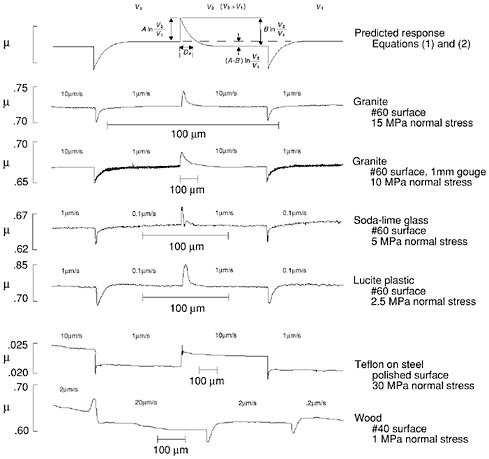

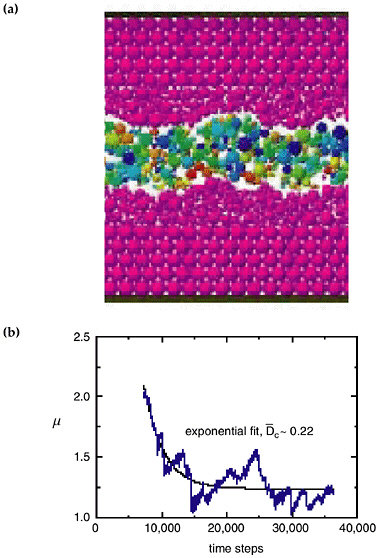

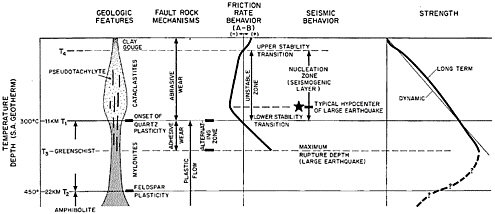

Fault-friction models derived from laboratory data predict that observable aseismic slip in the nucleation zone of an earthquake might precede the seismic phase of fault rupture on time scales of minutes to days. Hope that such signals could be used to predict large earthquakes motivated many geodetic searches for aseismic strain precursors, primarily